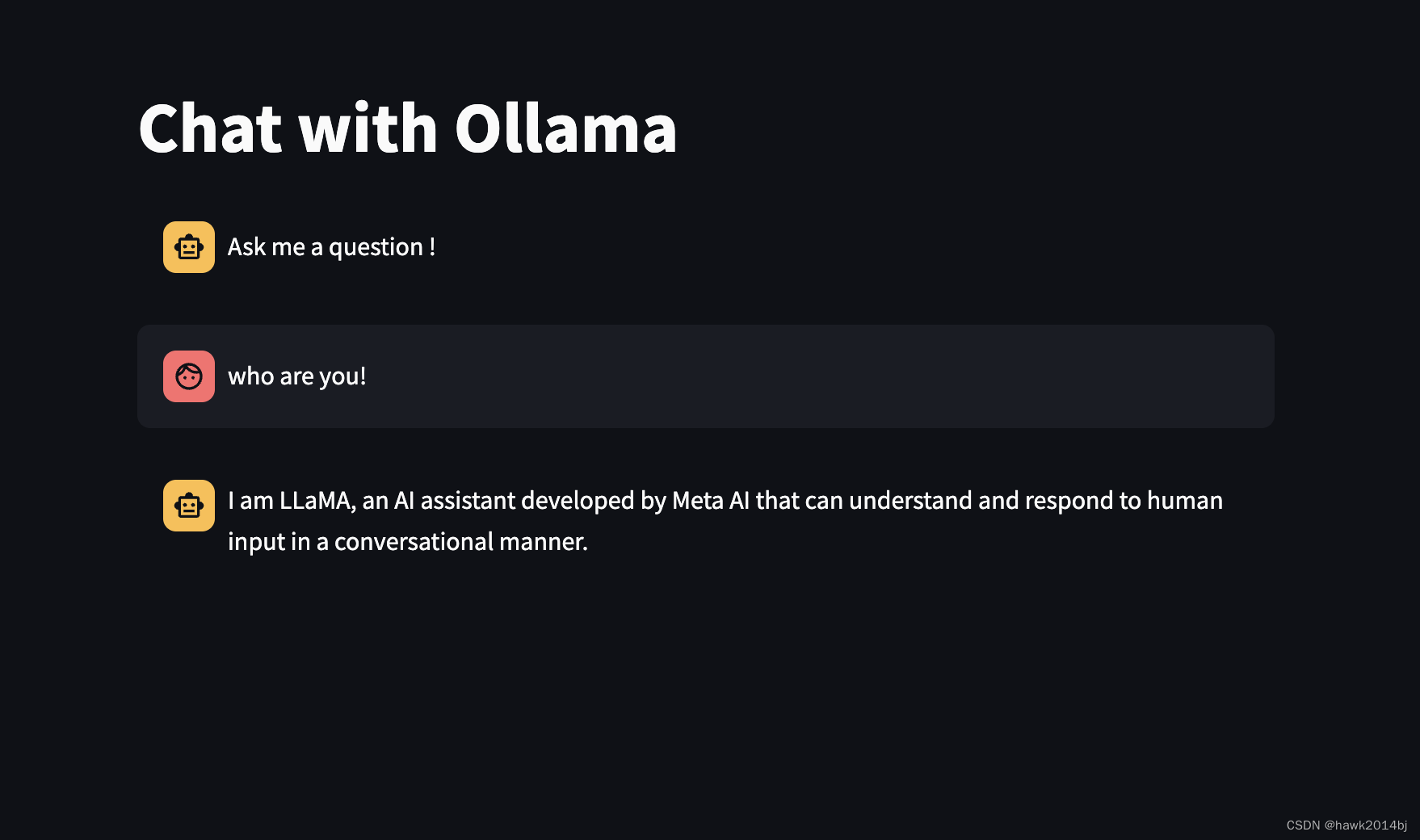

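Quickly Building a Chat Interface for a Large Language Model

A simple chat interface makes it easy to try out the model we have chosen; testing from the command line is not very intuitive.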

This article builds the interface with Streamlit + LangChain + Ollama, in fewer than 30 lines of Python.

Create the Streamlit app.py

Change the Ollama IP address and port in the code to match your own Ollama server.

# app.py
import streamlit as st
from langchain_community.llms import Ollama

# Point base_url at your own Ollama server; the model must already exist there.
llm = Ollama(model="testllama3", base_url="http://10.91.3.116:11434", verbose=True)


def sendPrompt(prompt):
    """Send the prompt to the model and return the complete text reply."""
    return llm.invoke(prompt)


st.title("Chat with Ollama")

# Seed the chat history once per browser session.
if "messages" not in st.session_state:
    st.session_state.messages = [
        {"role": "assistant", "content": "Ask me a question!"}
    ]

# Record the user's new question, if one was submitted on this rerun.
if prompt := st.chat_input("Your question"):
    st.session_state.messages.append({"role": "user", "content": prompt})

# Streamlit reruns the script on every interaction, so redraw the whole history.
for message in st.session_state.messages:
    with st.chat_message(message["role"]):
        st.write(message["content"])

# If the last message came from the user, generate a reply and store it.
if st.session_state.messages[-1]["role"] != "assistant":
    with st.chat_message("assistant"):
        with st.spinner("Thinking..."):
            response = sendPrompt(prompt)
            st.write(response)
            message = {"role": "assistant", "content": response}
            st.session_state.messages.append(message)
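Note that the app above passes only the latest question to the model, so earlier turns in the conversation are not visible to it. One possible way to give the model some context is to fold the recent history into a single text prompt. The following is a minimal sketch, not part of the original app: `build_prompt` and `max_turns` are hypothetical names, and the "User:" / "Assistant:" labels are an assumed plain-text format that may need adjusting for a particular model.

```python
def build_prompt(messages, max_turns=6):
    """Fold recent chat history into one text prompt.

    `messages` has the same shape as st.session_state.messages:
    a list of {"role": "user" | "assistant", "content": str}.
    `build_prompt` and `max_turns` are hypothetical names for this sketch.
    """
    # Keep only the most recent messages so the prompt stays short.
    recent = messages[-max_turns:] if max_turns > 0 else []
    lines = []
    for message in recent:
        speaker = "User" if message["role"] == "user" else "Assistant"
        lines.append(f"{speaker}: {message['content']}")
    # End with an open "Assistant:" line to cue the model to answer next.
    lines.append("Assistant:")
    return "\n".join(lines)
```

In app.py this could be used as `response = sendPrompt(build_prompt(st.session_state.messages))`, since the user's question has already been appended to the history at that point.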

Install dependencies

pip install streamlit langchain langchain-community

Run

streamlit run app.py
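If the page loads but no reply arrives, it can help to confirm that the Ollama server is reachable and that the model exists on it. The sketch below uses only the Python standard library and Ollama's `/api/tags` endpoint, which lists the models available on the server. The helper names (`tags_url`, `model_names`, `list_models`) are hypothetical and chosen for this example.

```python
import json
from urllib.request import urlopen


def tags_url(base_url):
    """Return the URL of Ollama's model-listing endpoint for a base URL."""
    return base_url.rstrip("/") + "/api/tags"


def model_names(payload):
    """Extract the model names from a parsed /api/tags response."""
    return [model["name"] for model in payload.get("models", [])]


def list_models(base_url, timeout=5):
    """Ask the server for its models; raises an OSError subclass if unreachable."""
    with urlopen(tags_url(base_url), timeout=timeout) as reply:
        return model_names(json.load(reply))
```

Calling `list_models("http://10.91.3.116:11434")` should return a list of names; these usually carry a tag suffix, so `testllama3` would likely appear as `testllama3:latest`.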

Dockerfile

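You can also package the app as a container image for convenient reuse later. The Dockerfile expects a requirements.txt next to app.py that lists the same packages as the pip command above.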

FROM python:latest

# Create app directory
WORKDIR /app

# Copy the files
COPY requirements.txt ./
COPY app.py ./

# Install the dependencies
RUN pip install -i https://pypi.tuna.tsinghua.edu.cn/simple -r requirements.txt

EXPOSE 8501

ENTRYPOINT ["streamlit", "run", "app.py", "--server.port=8501", "--server.address=0.0.0.0"]
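Because app.py hard-codes the Ollama address, the image would need rebuilding whenever the server moves. One option is to read the settings from environment variables and fall back to the values used in this article. This is only a sketch: `ollama_settings`, `OLLAMA_BASE_URL`, and `OLLAMA_MODEL` are hypothetical names that the app itself would read; they are not settings recognized by LangChain or Ollama.

```python
import os


def ollama_settings(env=os.environ):
    """Read connection settings from the environment.

    OLLAMA_BASE_URL and OLLAMA_MODEL are hypothetical variable names for
    this sketch; the defaults are the values used in app.py.
    """
    return {
        "base_url": env.get("OLLAMA_BASE_URL", "http://10.91.3.116:11434"),
        "model": env.get("OLLAMA_MODEL", "testllama3"),
    }
```

In app.py the client could then be created with `llm = Ollama(**ollama_settings(), verbose=True)`, and the address supplied when starting the container through Docker's `-e` option.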
