妈耶今天考研出分,妈耶今天还有组会。

先浅读一篇论文吧

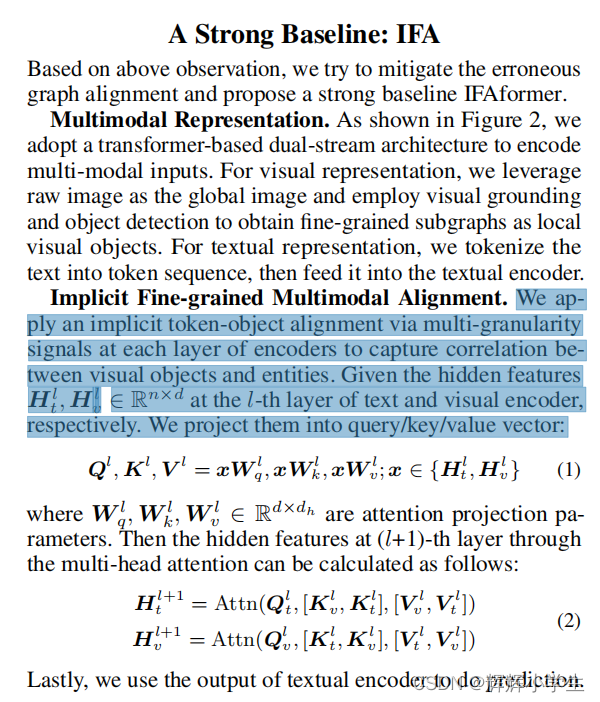

On Analyzing the Role of Image for Visual-enhanced Relation Extraction :

把图文对打乱,发现性能没降低,离谱!

就这么多吧

做了下午组会的ppt

学一下然后准备开会吧:

BERT task2:

import pandas as pd

import numpy as np

import re

import math

import random

from tqdm import tqdm

def read_data(file_path):

all_data = pd.read_csv(file_path)

all_texts = all_data["content"].tolist()

return all_texts

def split_text(text):

#pattern = r"。|?"

pattern = r"[,。?!;:、]"

sp_text = re.split(pattern, text)

new_text = resplit_text(sp_text)

return new_text

def resplit_text(text_list):

result = []

sentence = ""

for text in text_list:

if sentence == "":

if len(text)<3:

continue

if random.random() < 0.1:

result.append(text + "。")

continue

if len(sentence) < 30 or random.random() < 0.1:

sentence += text + ","

else:

result.append(sentence[:-1]+"。")

sentence = text

return result

def build_neg_pos_text(text_list):

all_text1, all_text2 = [], []

all_label = []

for text_id, text in enumerate(text_list):

if text_id == (len(text_list)-1):

break

all_text1.append(text)

all_text2.append(text_list[text_id+1])

all_label.append(1)

c_id = [i for i in range(len(text_list)) if i != text_id and i != (text_id+1)]

other_id = random.choice(c_id)

all_text1.append(text)

all_text2.append(text_list[other_id])

all_label.append(0)

return all_text1, all_text2, all_label

def build_task2_dataset(text_list):

all_text1 = []

all_text2 = []

all_label = []

for text in tqdm(text_list):

sp_text = split_text(text)

if len(sp_text) <= 2:

continue

t1, t2, l = build_neg_pos_text(sp_text)

all_text1.extend(t1)

all_text2.extend(t2)

all_label.extend(l)

pd.DataFrame({"text1": all_text1, "text2": all_text2, "label": all_label}).to_csv("..//self_bert//data//my_task2.csv", index=False)

def build_word_2_index(all_text):

word_2_index = {"{[PAD]": 0, "[CLS]": 1, "[SEP]": 2, "[MASK]": 3, "[UNK]": 4}

for text in all_text:

for w in text:

if w not in word_2_index:

word_2_index[w] = len(word_2_index)

index_2_word = list(word_2_index)

return word_2_index, index_2_word

if __name__ == "__main__":

all_texts = read_data("..//self_bert//data//test_data.csv")

#build_task2_dataset(all_texts)

word_2_index, index_2_word = build_word_2_index(all_texts)

#with open("..//self_bert//data//index_2_word.txt", "w", encoding="utf-8") as f:

#f.write("\n".join(index_2_word))

鱼书:

回家学一下深度学习花书:

4136

4136

被折叠的 条评论

为什么被折叠?

被折叠的 条评论

为什么被折叠?