First, prepare two things:

| BSTLD dataset | Download link (open Xunlei and paste the following to start the download): 6b77e9bb941a2da928f0e1221a9c4ffbbfdaf936 |

| Official BSTLD GitHub tools | URL: |

1. Dataset processing

This article uses only the image data in dataset_train from the BSTLD dataset.

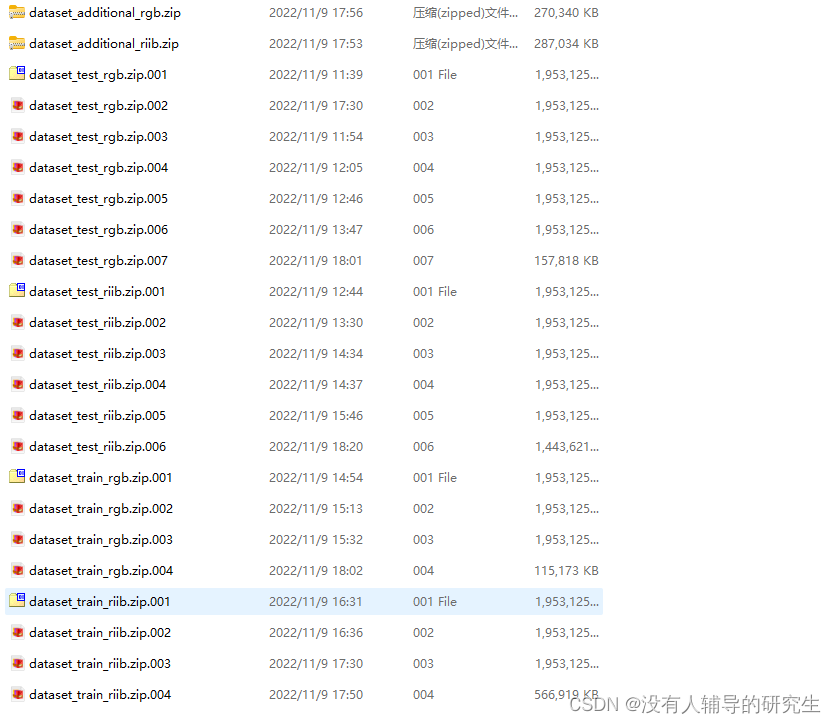

下载完的数据集应该是如下图1所示:

图1 BSTLD数据集

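Note: my project uses YOLOv5 to train a traffic-light detector, so only the RGB images are needed.

First, extract the dataset:

Open dataset_train_rgb.zip.001. Opening this one archive is enough; extract the data from it.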
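If no archive tool that understands multi-part archives is at hand, the parts can also be joined by script. The following is a minimal sketch, assuming the numbered parts (.001, .002, ...) are plain byte-wise splits of a single zip file; the function names join_split_archive and extract_archive are made up for this illustration and are not part of the official tools.

```python
import glob
import os
import shutil
import zipfile


def join_split_archive(first_part, joined_path):
    """Concatenate the numbered parts (.001, .002, ...) into one zip file.

    Assumption: the parts are raw byte-wise splits of a single zip archive.
    """
    if not first_part.endswith(".001"):
        raise ValueError("expected a path ending in .001")
    prefix = first_part[: -len(".001")]
    # Sorting the three-digit suffixes keeps the parts in numeric order.
    parts = sorted(glob.glob(glob.escape(prefix) + ".[0-9][0-9][0-9]"))
    if not parts:
        raise FileNotFoundError("no parts found for " + first_part)
    with open(joined_path, "wb") as joined:
        for part in parts:
            with open(part, "rb") as chunk:
                shutil.copyfileobj(chunk, joined)
    return parts


def extract_archive(joined_path, target_dir):
    """Extract the joined zip file into target_dir."""
    with zipfile.ZipFile(joined_path) as archive:
        archive.extractall(target_dir)


# Example (paths are placeholders):
# join_split_archive("dataset_train_rgb.zip.001", "dataset_train_rgb.zip")
# extract_archive("dataset_train_rgb.zip", ".")
```

Joining needs roughly as much free disk space again as the parts themselves, so the archive-tool route described above is usually the simpler one.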

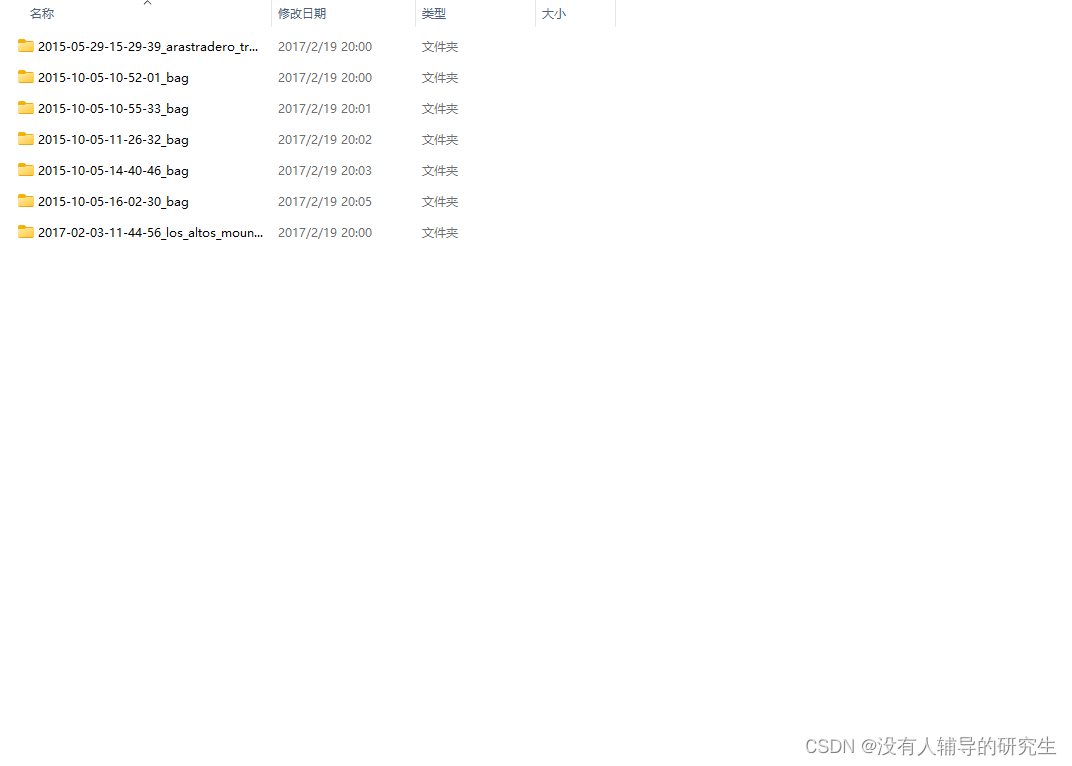

得到下面图2所示

图2 BSTLD train数据集

2. Converting the YAML file with the official tools

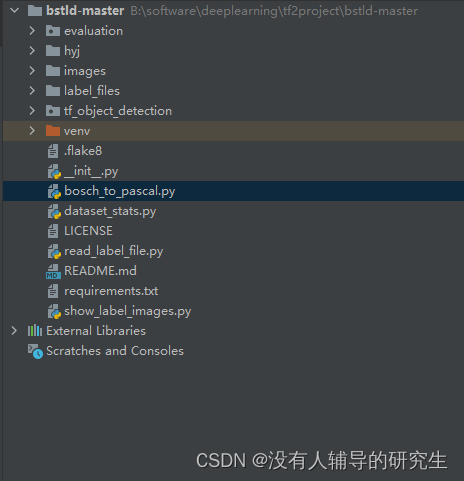

从github上下载的程序打开后如下图3所示:

图3 BSTLD 官方工具代码

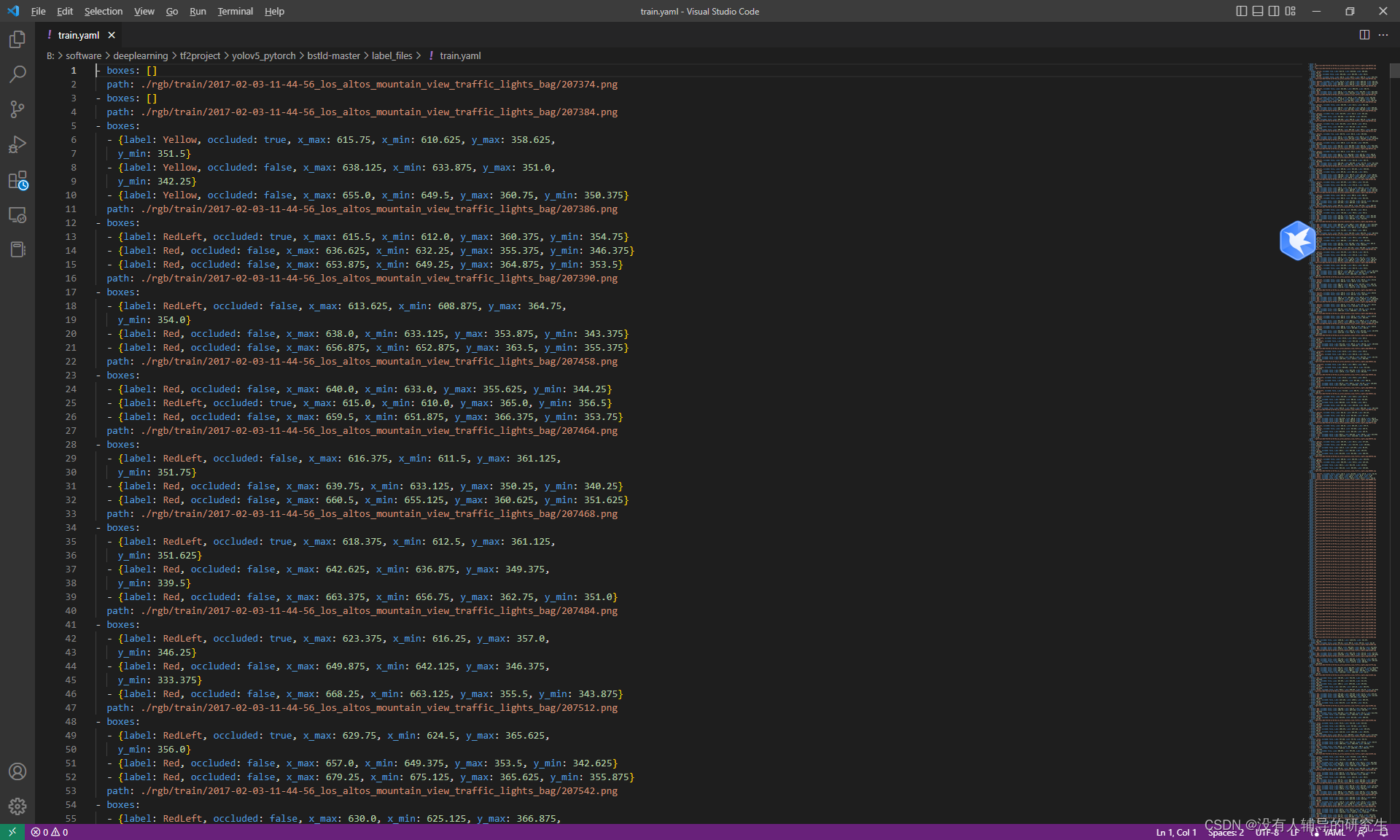

首先,我们先处理yaml文件。官方提供的yaml文件是所有训练图片位置信息和状态信息的一个集合。使用记事本打开会看到下面内容图4中所展示。(注释:官方给的train.yaml在目录label_files文件夹中)

图4 BSTLD train.yaml文件

Our task now is to split this aggregated information into one label file per image. The steps are as follows:

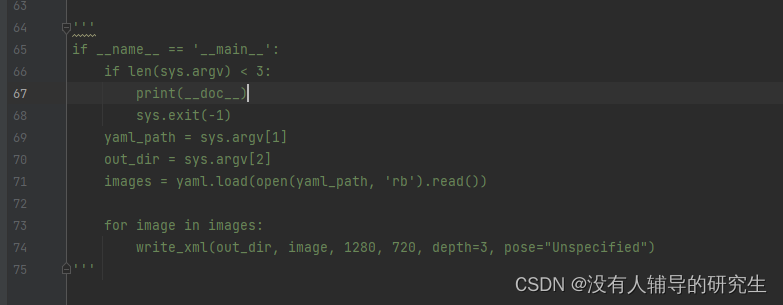

打开官方提供的程序bosch_to_pascal.py文件会看到如图5所示的程序(注释:这里我直接注释了因为不知道咋用)

图5 注释

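I then added the following lines myself:

    out_dir = 'hyj'  # name of a new, empty folder in the project directory; any name works
    images = yaml.safe_load(open('label_files/train.yaml', 'rb').read())  # read the label file
    for image in images:
        write_xml(out_dir, image, 1280, 720, depth=3, pose="Unspecified")  # write one XML file per image

The complete program is as follows: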

    #!/usr/bin/env python
    """
    This script Converts Yaml annotations to Pascal .xml Files
    of the Bosch Small Traffic Lights Dataset.
    Example usage:
        python bosch_to_pascal.py input_yaml out_folder
    """

    import os
    import sys
    import yaml
    from lxml import etree
    # xml.etree.cElementTree was removed in Python 3.9; ElementTree is the replacement.
    import xml.etree.ElementTree as ET


    def write_xml(savedir, image, imgWidth, imgHeight,
                  depth=3, pose="Unspecified"):
        """Write one Pascal VOC .xml file for a single image entry of the YAML file."""
        boxes = image['boxes']
        impath = image['path']
        imagename = impath.split('/')[-1]
        # Last component of the output directory, independent of the path separator.
        currentfolder = os.path.basename(os.path.normpath(savedir))

        annotation = ET.Element("annotation")
        ET.SubElement(annotation, 'folder').text = str(currentfolder)
        ET.SubElement(annotation, 'filename').text = str(imagename)
        # Drop the extension; the .xml file is named after the image.
        imagename = os.path.splitext(imagename)[0]

        size = ET.SubElement(annotation, 'size')
        ET.SubElement(size, 'width').text = str(imgWidth)
        ET.SubElement(size, 'height').text = str(imgHeight)
        ET.SubElement(size, 'depth').text = str(depth)
        ET.SubElement(annotation, 'segmented').text = '0'

        # One <object> element per labeled traffic light.
        for box in boxes:
            obj = ET.SubElement(annotation, 'object')
            ET.SubElement(obj, 'name').text = str(box['label'])
            ET.SubElement(obj, 'pose').text = str(pose)
            ET.SubElement(obj, 'occluded').text = str(box['occluded'])
            ET.SubElement(obj, 'difficult').text = '0'
            bbox = ET.SubElement(obj, 'bndbox')
            ET.SubElement(bbox, 'xmin').text = str(box['x_min'])
            ET.SubElement(bbox, 'ymin').text = str(box['y_min'])
            ET.SubElement(bbox, 'xmax').text = str(box['x_max'])
            ET.SubElement(bbox, 'ymax').text = str(box['y_max'])

        # Round-trip through lxml only to get pretty-printed output.
        xml_str = ET.tostring(annotation)
        root = etree.fromstring(xml_str)
        xml_str = etree.tostring(root, pretty_print=True)

        save_path = os.path.join(savedir, imagename + ".xml")
        with open(save_path, 'wb') as temp_xml:
            temp_xml.write(xml_str)


    out_dir = 'hyj'
    os.makedirs(out_dir, exist_ok=True)  # harmless if the folder already exists
    with open('label_files/train.yaml', 'rb') as label_file:
        images = yaml.safe_load(label_file)
    for image in images:
        write_xml(out_dir, image, 1280, 720, depth=3, pose="Unspecified")

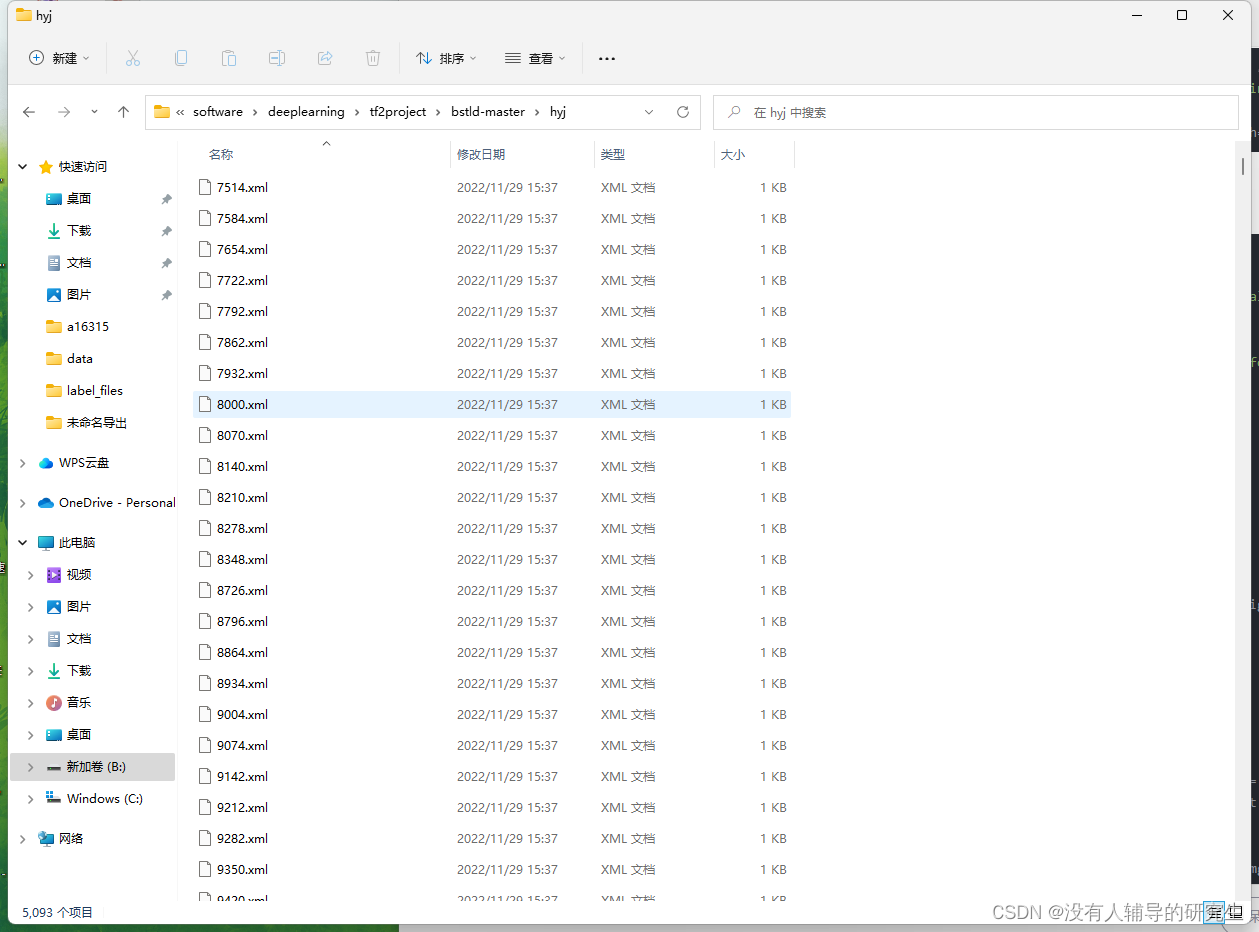

运行以后 在自己建立的hyj文件夹里就会有生产的xml后缀的标签文件 如下图6所示:

图6 标签文件
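Since the goal stated earlier is YOLOv5 training, these Pascal VOC .xml files still need to be turned into YOLO-style text labels at some point. The following is a minimal sketch of that conversion for a single file, not part of the official tools. It assumes the YOLO label layout "class x_center y_center width height" with all four values normalized to the image size; CLASS_NAMES and voc_to_yolo_lines are hypothetical names, and the class list should be replaced by the labels actually present in your data.

```python
import xml.etree.ElementTree as ET

# Hypothetical class list: replace it with the labels present in your label file.
CLASS_NAMES = ["Red", "Yellow", "Green", "off"]


def voc_to_yolo_lines(xml_text, class_names=CLASS_NAMES):
    """Convert one Pascal VOC annotation (as text) into a list of YOLO label lines."""
    root = ET.fromstring(xml_text)
    img_w = float(root.findtext("size/width"))
    img_h = float(root.findtext("size/height"))
    lines = []
    for obj in root.iter("object"):
        name = obj.findtext("name")
        if name not in class_names:
            continue  # skip labels that are not in the chosen class list
        x_min = float(obj.findtext("bndbox/xmin"))
        y_min = float(obj.findtext("bndbox/ymin"))
        x_max = float(obj.findtext("bndbox/xmax"))
        y_max = float(obj.findtext("bndbox/ymax"))
        # Box center and size, normalized to the range 0..1.
        x_center = (x_min + x_max) / 2 / img_w
        y_center = (y_min + y_max) / 2 / img_h
        width = (x_max - x_min) / img_w
        height = (y_max - y_min) / img_h
        lines.append("%d %.6f %.6f %.6f %.6f" % (
            class_names.index(name), x_center, y_center, width, height))
    return lines
```

Each returned line would go into a .txt file named after the image; boxes whose label is missing from the class list are silently skipped, which may or may not be what you want.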

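dataset_stats.py counts which classes appear in the label file. Usage:

    k = quick_stats('label_files/train.yaml')

As with the script above, comment out the final if block, add this line, and run the file to see the classes in the dataset and how many boxes each one has.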
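To make clear what such a count involves, here is a minimal sketch of tallying labels over the list of image entries. It is not the official quick_stats implementation; count_labels is a hypothetical helper, and sample_images is a tiny in-memory stand-in (with made-up paths) for the list that yaml.safe_load returns for train.yaml.

```python
from collections import Counter


def count_labels(images):
    """Count how often each box label occurs across all image entries."""
    counts = Counter()
    for image in images:
        for box in image.get("boxes", []):
            counts[box["label"]] += 1
    return counts


# Made-up sample with the same shape as the entries in train.yaml.
sample_images = [
    {"path": "./rgb/train/a.png", "boxes": [{"label": "Green"}, {"label": "Green"}]},
    {"path": "./rgb/train/b.png", "boxes": [{"label": "Red"}]},
    {"path": "./rgb/train/c.png", "boxes": []},
]

for label, count in sorted(count_labels(sample_images).items()):
    print(label, count)
```

The resulting label names are also what a detector's class list has to cover.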

There are two more files, read_label_file.py and show_label_images.py, which are used to display the labeled images. They are used as follows:

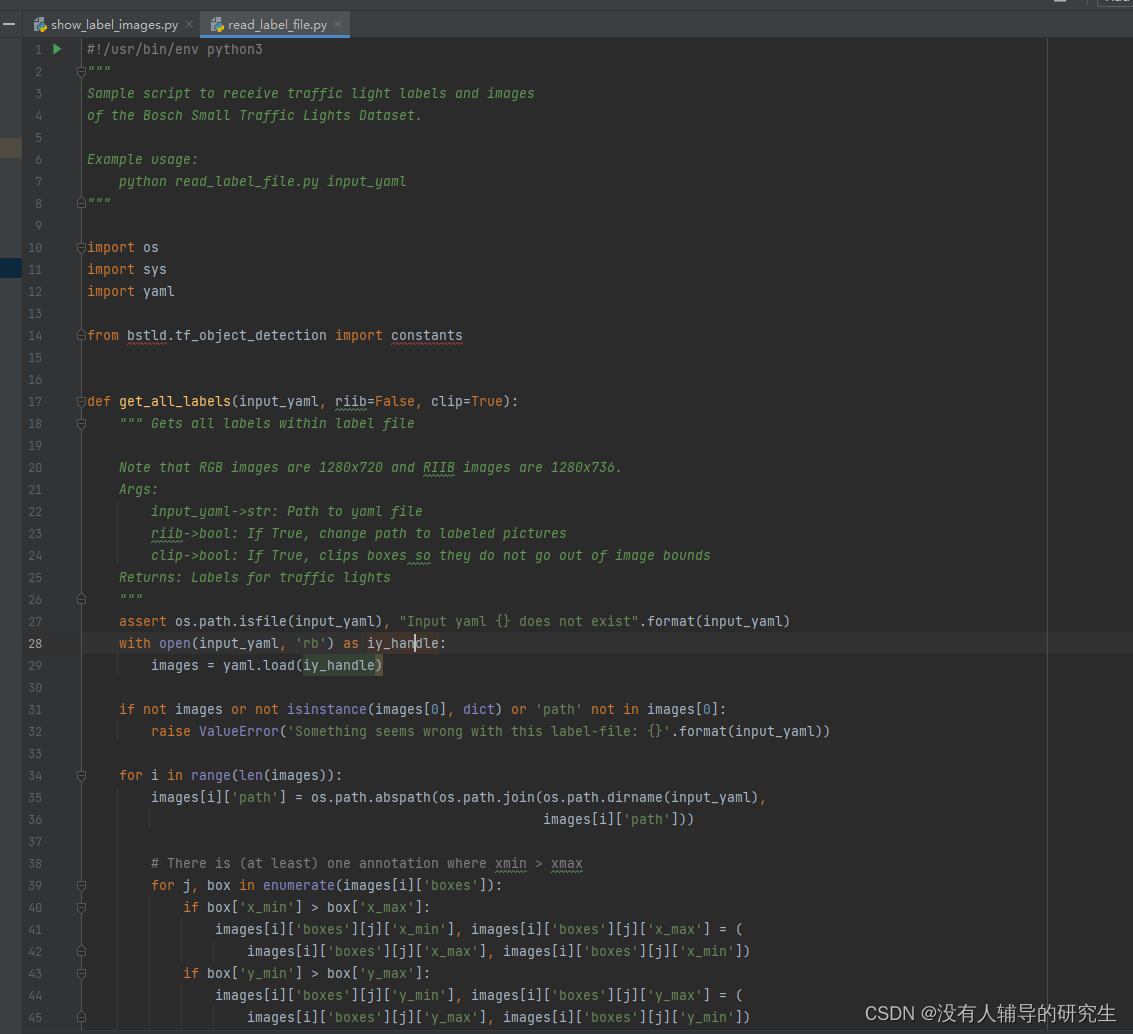

如果打开read_label_file.py文件发生报错,如下图7所示:

图7 报错

只需要删除前面的bstld.就好变成

from tf_object_detection import constantsread_label_file.py中还有28行改成这样才不会报错

with open(input_yaml, 'rb') as iy_handle:

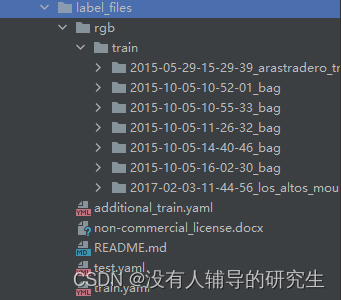

images = yaml.safe_load(iy_handle)接着如图8所示,把解压出来的图2中文件放到一下根目录中即可:(注释:rgb和train不可缺少!)

图8 根目录内容
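A quick way to confirm that the folders ended up in the right place is to check that every image path in the label file resolves to a file. The following is a minimal sketch, assuming the 'path' values in the label file are relative to the root directory, as the layout in Figure 8 suggests; find_missing_images is a hypothetical helper, not part of the official tools.

```python
import os


def find_missing_images(images, root_dir="."):
    """Return the 'path' values whose image file does not exist under root_dir."""
    missing = []
    for image in images:
        # Assumption: paths in the label file are relative to root_dir.
        full_path = os.path.normpath(os.path.join(root_dir, image["path"]))
        if not os.path.isfile(full_path):
            missing.append(image["path"])
    return missing


# Example, with images loaded from the label file beforehand:
# missing = find_missing_images(images, root_dir=".")
# print(len(missing), "images not found")
```

An empty result means every labeled image was found; a long list usually points to a misplaced rgb or train folder.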

Open show_label_images.py; with the following code it runs directly:

    #!/usr/bin/env python
    """
    Quick sample script that displays the traffic light labels within
    the given images.
    If given an output folder, it draws them to file.

    Example usage:
        python write_label_images input.yaml [output_folder]
    """

    import sys
    import os
    import cv2
    from read_label_file import get_all_labels


    def ir(some_value):
        """Int-round function for short array indexing """
        return int(round(some_value))


    def show_label_images(input_yaml, output_folder=None):
        """
        Shows and draws pictures with labeled traffic lights.
        Can save pictures.

        :param input_yaml: Path to yaml file
        :param output_folder: If None, do not save picture. Else enter path to folder
        """
        images = get_all_labels(input_yaml)

        if output_folder is not None:
            if not os.path.exists(output_folder):
                os.makedirs(output_folder)

        for i, image_dict in enumerate(images):
            image = cv2.imread(image_dict['path'])
            if image is None:
                raise IOError('Could not open image path', image_dict['path'])

            for box in image_dict['boxes']:
                cv2.rectangle(image,
                              (ir(box['x_min']), ir(box['y_min'])),
                              (ir(box['x_max']), ir(box['y_max'])),
                              (0, 255, 0))

            cv2.imshow('labeled_image', image)
            cv2.waitKey(10)

            if output_folder is not None:
                cv2.imwrite(os.path.join(output_folder, str(i).zfill(10) + '_'
                            + os.path.basename(image_dict['path'])), image)


    k = 'label_files/train.yaml'
    h = show_label_images(k)
