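0. Preface

The first time I watched Andrew Ng's machine learning lectures, I got stuck at Section 9.2. An answer posted in the comments (by Arch725) helped, but it had a few omissions and lacked some groundwork, so I have revised and extended it here.

The derivation of backpropagation in this article is based on the cross-entropy cost function, not the quadratic cost function. Different cost functions have different derivatives, so the results differ slightly, but the underlying idea is the same.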

Cross-entropy cost function:

$$J(\Theta) = -\frac{1}{m}\sum_{i=1}^{m}\left(y^{(i)}\log(h_\theta(x^{(i)})) + (1-y^{(i)})\log(1-h_\theta(x^{(i)}))\right)$$

Quadratic cost function:

$$J(\Theta) = \frac{1}{2m}\sum_{i=1}^{m}(h_\theta(x^{(i)})-y^{(i)})^{2}$$
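To make the two cost functions concrete, here is a minimal sketch that evaluates both on a handful of made-up predictions. The function names and the sample values are assumptions chosen for illustration; they do not come from the course.

```python
import math

def cross_entropy_cost(predictions, labels):
    """J = -(1/m) * sum(y*log(h) + (1-y)*log(1-h)) over m samples."""
    m = len(labels)
    total = 0.0
    for h, y in zip(predictions, labels):
        total += y * math.log(h) + (1 - y) * math.log(1 - h)
    return -total / m

def quadratic_cost(predictions, labels):
    """J = (1/(2m)) * sum((h - y)^2) over m samples."""
    m = len(labels)
    return sum((h - y) ** 2 for h, y in zip(predictions, labels)) / (2 * m)

# Hypothetical model outputs h_theta(x^(i)) and true labels y^(i).
predictions = [0.9, 0.2, 0.7]
labels = [1, 0, 1]

ce = cross_entropy_cost(predictions, labels)
qc = quadratic_cost(predictions, labels)
print(ce, qc)
```

Both costs are small when the predictions are close to the labels, but they penalize mistakes differently, which is why their derivatives differ.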

This article is based on Arch725's GitHub write-up. If you found it helpful, please give that repository a star.

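1. Intended audience

This article is intended for readers who:

- are following Andrew Ng's machine learning course, have finished Section 9.1 and everything before it, and are completely lost in Section 9.2

- know what a partial derivative is and know the rules for computing one

This article aims to make Section 9.2 fully clear. Beyond the material up to Section 9.1, no additional machine learning knowledge is required.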

2. Groundwork

2.1 The chain rule for composite functions

Only the formula is given here as a reminder. If you have not studied it yet, please review partial derivatives first.

(Chain rule) Let $u=f(x,y)$, $x=\varphi(s,t)$, $y=\phi(s,t)$. If $f$ is differentiable at the point $(x,y)$, and the partial derivatives of $x$ and $y$ with respect to $s$ and $t$ exist at the point $(s,t)$, then

$$\frac{\partial u}{\partial s} = \frac{\partial u}{\partial x} * \frac{\partial x}{\partial s} + \frac{\partial u}{\partial y} * \frac{\partial y}{\partial s}$$

$$\frac{\partial u}{\partial t} = \frac{\partial u}{\partial x} * \frac{\partial x}{\partial t} + \frac{\partial u}{\partial y} * \frac{\partial y}{\partial t}$$
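The chain rule can be checked numerically. The sketch below picks an arbitrary example, $u = x^2 y$ with $x = st$ and $y = s + t$ (these functions are assumptions for illustration only), and compares the chain-rule result with a central finite difference.

```python
def f(x, y):
    return x * x * y

def x_of(s, t):   # plays the role of x = varphi(s, t)
    return s * t

def y_of(s, t):   # plays the role of y = phi(s, t)
    return s + t

def u(s, t):
    return f(x_of(s, t), y_of(s, t))

s, t = 1.5, -0.5
x, y = x_of(s, t), y_of(s, t)

# Chain rule: du/ds = (du/dx)(dx/ds) + (du/dy)(dy/ds), and likewise for t.
du_ds_chain = (2 * x * y) * t + (x * x) * 1.0
du_dt_chain = (2 * x * y) * s + (x * x) * 1.0

# Central finite differences as an independent check.
eps = 1e-6
du_ds_num = (u(s + eps, t) - u(s - eps, t)) / (2 * eps)
du_dt_num = (u(s, t + eps) - u(s, t - eps)) / (2 * eps)

print(du_ds_chain, du_ds_num)
print(du_dt_chain, du_dt_num)
```

The same "sum over every path from the variable to the output" pattern is what the derivation in Section 3 applies to a neural network.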

2.2 Neural network notation

Let the network have $L$ layers, where layer $l$ has $s_l$ neurons and the output of the $i$-th neuron in that layer is $a_i^{(l)}$. The outputs of the layer are computed as:

$$a^{(l)} = \begin{bmatrix} a_1^{(l)} \\ a_2^{(l)} \\ \vdots \\ a_{s_l}^{(l)} \end{bmatrix} = sigmoid(z^{(l)}) = \frac{1}{1+e^{-z^{(l)}}} \tag{1}$$

Here $sigmoid(z^{(l)})$ is the activation function, applied elementwise, and $z^{(l)}$ is a linear combination of the previous layer's outputs $a^{(l-1)}$, where $\Theta^{(l-1)}$ is the parameter matrix and $k^{(l-1)}$ is a constant vector:

$$z^{(l)} = \Theta^{(l-1)} a^{(l-1)} + k^{(l-1)} \tag{2}$$

$$\Theta^{(l-1)}_{(s_{l} \times s_{l-1})} = \begin{bmatrix} \theta_{11}^{(l-1)} & \theta_{12}^{(l-1)} & \cdots & \theta_{1s_{l-1}}^{(l-1)}\\ \theta_{21}^{(l-1)} & \theta_{22}^{(l-1)} & \cdots & \theta_{2s_{l-1}}^{(l-1)}\\ \vdots & \vdots & \ddots & \vdots \\ \theta_{s_{l}1}^{(l-1)} & \theta_{s_{l}2}^{(l-1)} & \cdots & \theta_{s_{l}s_{l-1}}^{(l-1)} \end{bmatrix} \tag{3}$$

The job of the parameter (weight) matrix $\Theta^{(l-1)}$ is to linearly combine the $s_{l-1}$ outputs of layer $l-1$ into $s_l$ values. The entry $\theta_{ij}^{(l-1)}$ is the weight of $a_j^{(l-1)}$ in $a_i^{(l)}$ (that's right: $i$ is the index of the destination and $j$ is the index of the source). Written out for a single element:

$$z_i^{(l)} = \theta_{i1}^{(l-1)} * a_1^{(l-1)} + \theta_{i2}^{(l-1)} * a_2^{(l-1)} + \cdots + \theta_{is_{l-1}}^{(l-1)} * a_{s_{l-1}}^{(l-1)} + k_i^{(l-1)} = \left(\sum_{j=1}^{s_{l-1}} \theta_{ij}^{(l-1)} * a_j^{(l-1)}\right) + k_i^{(l-1)} \tag{4}$$
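Equations (1), (2) and (4) together describe one step of forward propagation. A minimal sketch in plain Python follows; the function name `layer_forward` and the sample weights are assumptions made up for this illustration.

```python
import math

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def layer_forward(theta, k, a_prev):
    """Compute z^(l) = Theta^(l-1) a^(l-1) + k^(l-1), then a^(l) = sigmoid(z^(l))."""
    # Row i of theta holds the weights theta_{i1}, ..., theta_{i s_{l-1}} from (4).
    z = [sum(w * a for w, a in zip(row, a_prev)) + b for row, b in zip(theta, k)]
    a = [sigmoid(v) for v in z]
    return z, a

# Hypothetical layer mapping 2 inputs to 3 outputs, so theta is 3 x 2.
theta = [[0.1, -0.2], [0.4, 0.3], [-0.5, 0.6]]
k = [0.0, 0.1, -0.1]
a_prev = [1.0, 2.0]

z, a = layer_forward(theta, k, a_prev)
print(z)
print(a)
```

Note how the row index of `theta` corresponds to the destination neuron and the column index to the source neuron, matching the convention for $\theta_{ij}$.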

In addition, logistic regression defines a per-sample loss function. It is written here without the leading minus sign, and the rest of this derivation follows that convention:

$$cost(a) = \begin{cases} \log(a) & y=1 \\ \log(1-a) & y=0 \end{cases} = y\log(a) + (1-y)\log(1-a), \quad y \in \{0, 1\} \tag{5}$$

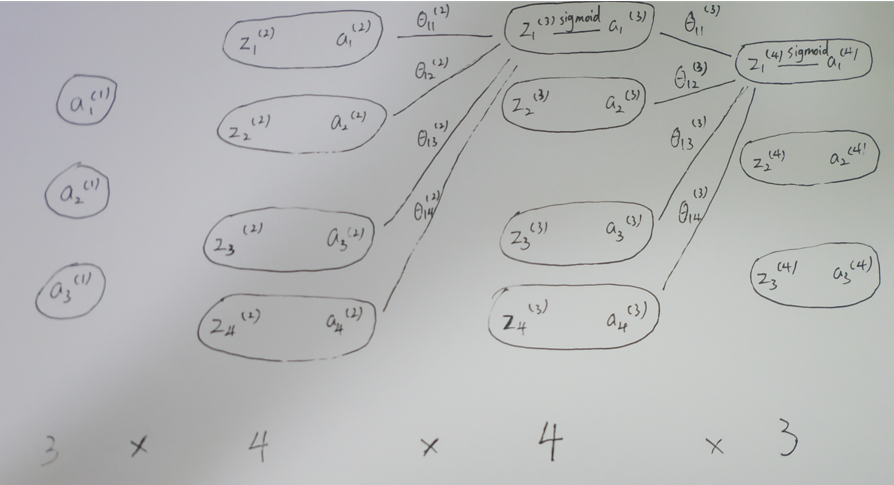

If the notation is hard to keep in mind, refer to the example network diagram.

3. The derivation

With this in place, the plan is to compute the loss function $J(\Theta)$ of the neural network and then use gradient descent to find a minimum of $J(\Theta)$.

Take a $3 \times 4 \times 4 \times 3$ neural network as an example, as shown in the network diagram.

For a single training example, the loss function of this network is the sum of (5) over the three output neurons:

$$J(\Theta) = y_1 \log(a_1^{(4)}) + (1-y_1) \log(1-a_1^{(4)}) \\ + y_2 \log(a_2^{(4)})+ (1-y_2) \log(1-a_2^{(4)}) \\ + y_3 \log(a_3^{(4)}) + (1-y_3) \log(1-a_3^{(4)}) \tag{6}$$

The essence of backpropagation is that it gives us a fast way to compute the derivative of $J(\Theta)$ with respect to every component of $\Theta$ (this is what Sections 9.2 and 9.3 are doing). You were probably as confused as I was on reaching the "error" formula, so let's start from the fundamental goal, computing partial derivatives, and set the notion of "error" aside for now. It will emerge naturally in the course of the differentiation.

We first differentiate with respect to the parameters of the last layer, $\Theta^{(3)}$. Note that $\Theta^{(3)}$ is a $3 \times 4$ matrix ($\Theta^{(3)} a^{(3)}_{(4 \times 1)} = z^{(4)}_{(3 \times 1)}$, ignoring the constant term), so $\frac{\partial J}{\partial \Theta^{(3)}}$ is also a $3 \times 4$ matrix, and we need the derivative with respect to every component of $\Theta^{(3)}$. Let's begin with $\theta^{(3)}_{12}$:

$$\frac{\partial J}{\partial \theta^{(3)}_{12}} = \underbrace{\frac{\partial J}{\partial a^{(4)}_{1}}}_{(7.1)} * \underbrace{\frac{\partial a^{(4)}_{1}}{\partial z^{(4)}_{1}}}_{(7.2)} * \underbrace{\frac{\partial z^{(4)}_{1}}{\partial \theta_{12}^{(3)}}}_{(7.3)} \tag{7}$$

From (6), (1) and (4) respectively:

$$(7.1) = \frac{\partial J}{\partial a^{(4)}_{1}} = \frac{y_1}{a_1^{(4)}} - \frac{1-y_1}{1-a_1^{(4)}} \tag{8}$$

$$(7.2) = \frac{\partial a^{(4)}_{1}}{\partial z^{(4)}_{1}} = \frac{e^{-z_1^{(4)}}}{(1 + e^{-z_1^{(4)}})^2} = \frac{1}{1 + e^{-z_1^{(4)}}} * \left(1 - \frac{1}{1 + e^{-z_1^{(4)}}}\right) = a^{(4)}_{1} (1-a^{(4)}_{1}) \tag{9}$$
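The sigmoid derivative identity in (9), namely that the derivative equals $a(1-a)$, is easy to confirm numerically. The sketch below compares it against a central finite difference at a few arbitrarily chosen points; the helper name `sigmoid_grad` is an assumption for illustration.

```python
import math

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def sigmoid_grad(z):
    """Derivative of sigmoid expressed through its own output: a * (1 - a)."""
    a = sigmoid(z)
    return a * (1.0 - a)

eps = 1e-6
max_err = 0.0
for z in [-3.0, -0.5, 0.0, 0.7, 2.5]:
    numeric = (sigmoid(z + eps) - sigmoid(z - eps)) / (2 * eps)
    max_err = max(max_err, abs(numeric - sigmoid_grad(z)))

print(max_err)
```

Because the derivative only needs the activation $a$, a backward pass can reuse the values already computed during forward propagation.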

$$(7.3) = \frac{\partial z^{(4)}_{1}}{\partial \theta_{12}^{(3)}} = a_{2}^{(3)} \tag{10}$$

Substituting into (7):

$$\frac{\partial J}{\partial \theta^{(3)}_{12}} = \left(y_1(1-a_1^{(4)})-(1-y_1)a_1^{(4)}\right)a_2^{(3)} = (y_1-a_1^{(4)})a_2^{(3)} \tag{11}$$
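As a sanity check on (11), the following sketch builds only the last layer with made-up values for $a^{(3)}$, $\Theta^{(3)}$, $k^{(3)}$ and $y$ (all assumptions for illustration), then compares $(y_1-a_1^{(4)})a_2^{(3)}$ against a finite-difference estimate. It uses the loss exactly as written in (6), without a leading minus sign.

```python
import math

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def output_and_loss(theta3, k3, a3, y):
    """Return a^(4) and J from (6) for the last layer of the network."""
    z4 = [sum(w * a for w, a in zip(row, a3)) + b for row, b in zip(theta3, k3)]
    a4 = [sigmoid(v) for v in z4]
    J = sum(yi * math.log(ai) + (1 - yi) * math.log(1 - ai) for yi, ai in zip(y, a4))
    return a4, J

# Hypothetical values: 4 activations in layer 3, 3 outputs in layer 4.
a3 = [0.2, 0.7, 0.5, 0.9]
theta3 = [[0.1, -0.3, 0.2, 0.4], [-0.2, 0.5, 0.1, -0.1], [0.3, 0.2, -0.4, 0.6]]
k3 = [0.05, -0.05, 0.0]
y = [1, 0, 0]

a4, _ = output_and_loss(theta3, k3, a3, y)
analytic = (y[0] - a4[0]) * a3[1]  # equation (11); theta_12 is theta3[0][1] in 0-based indexing

eps = 1e-6
theta3[0][1] += eps
_, J_plus = output_and_loss(theta3, k3, a3, y)
theta3[0][1] -= 2 * eps
_, J_minus = output_and_loss(theta3, k3, a3, y)
theta3[0][1] += eps  # restore the original weight
numeric = (J_plus - J_minus) / (2 * eps)

print(analytic, numeric)
```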

The derivatives of $J(\Theta)$ with respect to the other components of $\Theta^{(3)}$ follow in the same way. Writing $\frac{\partial J}{\partial \Theta^{(3)}}$ in matrix form:

$$\frac{\partial J}{\partial \Theta^{(3)}} = \begin{bmatrix} (y_1-a_1^{(4)})a_1^{(3)} & (y_1-a_1^{(4)})a_2^{(3)} & (y_1-a_1^{(4)})a_3^{(3)} & (y_1-a_1^{(4)})a_4^{(3)} \\ (y_2-a_2^{(4)})a_1^{(3)} & (y_2-a_2^{(4)})a_2^{(3)} & (y_2-a_2^{(4)})a_3^{(3)} & (y_2-a_2^{(4)})a_4^{(3)} \\ (y_3-a_3^{(4)})a_1^{(3)} & (y_3-a_3^{(4)})a_2^{(3)} & (y_3-a_3^{(4)})a_3^{(3)} & (y_3-a_3^{(4)})a_4^{(3)} \end{bmatrix} \tag{12}$$

If we define an "error" vector $\delta^{(4)} = \begin{bmatrix}(y_1-a_1^{(4)}) & (y_2-a_2^{(4)}) & (y_3-a_3^{(4)})\end{bmatrix}^T$, which measures the difference between the outputs of the last layer and the true values, then (12) can be written as a product of two matrices:

$$\frac{\partial J}{\partial \Theta^{(3)}} = \delta^{(4)} \begin{bmatrix} a_1^{(3)} & a_2^{(3)} & a_3^{(3)} & a_4^{(3)} \end{bmatrix} = \delta^{(4)}_{(3 \times 1)} (a^{(3)})^T_{(1 \times 4)} \tag{13}$$
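Equation (13) says the whole gradient matrix is an outer product of a column vector and a row vector. A small sketch with made-up numbers (the values and the helper name `outer` are assumptions for illustration):

```python
def outer(col, row):
    """Outer product: entry (i, j) is col[i] * row[j]."""
    return [[c * r for r in row] for c in col]

# Hypothetical labels, output activations and layer-3 activations.
y = [1, 0, 0]
a4 = [0.6, 0.3, 0.2]
a3 = [0.2, 0.7, 0.5, 0.9]

delta4 = [yi - ai for yi, ai in zip(y, a4)]   # "error" vector delta^(4)
grad_theta3 = outer(delta4, a3)               # 3 x 4 matrix, same shape as Theta^(3)

for row in grad_theta3:
    print(row)
```

Entry `grad_theta3[0][1]` is exactly the quantity from (11), and every other entry follows the same pattern with different indices.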

Let's keep this formula in mind and move on to the next task: computing $\frac{\partial J}{\partial \Theta^{(2)}}$. As with $\frac{\partial J}{\partial \Theta^{(3)}}$, we first compute $\frac{\partial J}{\partial \theta^{(2)}_{12}}$:

$$\frac{\partial J}{\partial \theta^{(2)}_{12}} = \underbrace{\frac{\partial J}{\partial a^{(3)}_{1}}}_{(14.1)} * \underbrace{\frac{\partial a^{(3)}_{1}}{\partial z^{(3)}_{1}}}_{(14.2)} * \underbrace{\frac{\partial z^{(3)}_{1}}{\partial \theta_{12}^{(2)}}}_{(14.3)} \tag{14}$$

To differentiate $J(\Theta)$ with respect to $\theta^{(2)}_{12}$, the rules of partial differentiation require applying the chain rule through every term of $J(\Theta)$ that depends on $\theta^{(2)}_{12}$. From the equation above, $\theta^{(2)}_{12}$ influences $J(\Theta)$ only through $a_1^{(3)}$, so we only need to work out which terms of $J(\Theta)$ depend on $a_1^{(3)}$.

$$z_1^{(4)} = \theta_{11}^{(3)}*a_1^{(3)} + \theta_{12}^{(3)}*a_2^{(3)} + \theta_{13}^{(3)}*a_3^{(3)} +\theta_{14}^{(3)}*a_4^{(3)} + k_1^{(3)}$$

$$z_2^{(4)} = \theta_{21}^{(3)}*a_1^{(3)} + \theta_{22}^{(3)}*a_2^{(3)} + \theta_{23}^{(3)}*a_3^{(3)} +\theta_{24}^{(3)}*a_4^{(3)} + k_2^{(3)}$$

$$z_3^{(4)} = \theta_{31}^{(3)}*a_1^{(3)} + \theta_{32}^{(3)}*a_2^{(3)} + \theta_{33}^{(3)}*a_3^{(3)} +\theta_{34}^{(3)}*a_4^{(3)} + k_3^{(3)}$$

The three equations above are the sums that produce $z^{(4)}$ in the last layer. Each of $z_1^{(4)}, z_2^{(4)}, z_3^{(4)}$ contains $a_1^{(3)}$, so (14.1) can be expanded further:

$$(14.1) = \frac{\partial J}{\partial a_1^{(3)}} = \underbrace{\frac{\partial J}{\partial a^{(4)}_{1}} * \frac{\partial a^{(4)}_{1}}{\partial z^{(4)}_{1}} * \frac{\partial z^{(4)}_{1}}{\partial a^{(3)}_{1}}}_{(15.1)} + \underbrace{\frac{\partial J}{\partial a^{(4)}_{2}} * \frac{\partial a^{(4)}_{2}}{\partial z^{(4)}_{2}} * \frac{\partial z^{(4)}_{2}}{\partial a^{(3)}_{1}}}_{(15.2)} + \underbrace{\frac{\partial J}{\partial a^{(4)}_{3}} * \frac{\partial a^{(4)}_{3}}{\partial z^{(4)}_{3}} * \frac{\partial z^{(4)}_{3}}{\partial a^{(3)}_{1}}}_{(15.3)} \tag{15}$$

Note that (15.1), (15.2) and (15.3) all have the same structure as (7), so they simplify to:

$$(15.1) = \frac{\partial J}{\partial a^{(4)}_{1}} * \frac{\partial a^{(4)}_{1}}{\partial z^{(4)}_{1}} * \frac{\partial z^{(4)}_{1}}{\partial a^{(3)}_{1}} = (y_1-a_1^{(4)})\theta_{11}^{(3)} \tag{16}$$

$$(15.2) = \frac{\partial J}{\partial a^{(4)}_{2}} * \frac{\partial a^{(4)}_{2}}{\partial z^{(4)}_{2}} * \frac{\partial z^{(4)}_{2}}{\partial a^{(3)}_{1}} = (y_2-a_2^{(4)})\theta_{21}^{(3)} \tag{17}$$

$$(15.3) = \frac{\partial J}{\partial a^{(4)}_{3}} * \frac{\partial a^{(4)}_{3}}{\partial z^{(4)}_{3}} * \frac{\partial z^{(4)}_{3}}{\partial a^{(3)}_{1}} = (y_3-a_3^{(4)})\theta_{31}^{(3)} \tag{18}$$

Substituting (16), (17) and (18) into (15):

$$(14.1) = \frac{\partial J}{\partial a^{(3)}_{1}} = (y_1-a_1^{(4)})\theta_{11}^{(3)} + (y_2-a_2^{(4)})\theta_{21}^{(3)} + (y_3-a_3^{(4)})\theta_{31}^{(3)} \tag{19}$$

Similarly to (9) and (10), we obtain (14.2) and (14.3):

$$(14.2) = \frac{\partial a^{(3)}_{1}}{\partial z^{(3)}_{1}} = a^{(3)}_{1} (1-a^{(3)}_{1}) \tag{20}$$

$$(14.3) = \frac{\partial z^{(3)}_{1}}{\partial \theta^{(2)}_{12}} = a_{2}^{(2)} \tag{21}$$

Substituting (19), (20) and (21) into (14) gives $\frac{\partial J}{\partial \theta^{(2)}_{12}}$:

$$\frac{\partial J}{\partial \theta^{(2)}_{12}} = \left[(y_1-a_1^{(4)})\theta_{11}^{(3)} + (y_2-a_2^{(4)})\theta_{21}^{(3)} + (y_3-a_3^{(4)})\theta_{31}^{(3)}\right] a^{(3)}_{1} (1-a^{(3)}_{1})\, a_{2}^{(2)} \tag{22}$$
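Equation (22) can be verified on the full $3 \times 4 \times 4 \times 3$ network. The sketch below fills the network with pseudo-random weights (the seed, weights, input `x` and label `y` are all assumptions for illustration), evaluates (22) directly, and compares it with a finite-difference estimate of the same derivative. The loss follows (6), without a leading minus sign.

```python
import math
import random

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def forward(thetas, ks, x):
    """Return the activations [a1, a2, a3, a4] of the network."""
    activations = [x]
    for theta, k in zip(thetas, ks):
        a_prev = activations[-1]
        z = [sum(w * a for w, a in zip(row, a_prev)) + b for row, b in zip(theta, k)]
        activations.append([sigmoid(v) for v in z])
    return activations

def loss(a_out, y):
    return sum(yi * math.log(ai) + (1 - yi) * math.log(1 - ai) for yi, ai in zip(y, a_out))

random.seed(0)
sizes = [3, 4, 4, 3]
thetas = [[[random.uniform(-1, 1) for _ in range(sizes[l])]
           for _ in range(sizes[l + 1])] for l in range(3)]
ks = [[random.uniform(-1, 1) for _ in range(sizes[l + 1])] for l in range(3)]
x, y = [0.5, -1.2, 0.8], [0, 1, 0]

a1, a2, a3, a4 = forward(thetas, ks, x)
theta3 = thetas[2]

# Equation (22): theta^(2)_{12} is thetas[1][0][1] in 0-based indexing.
back = sum((y[i] - a4[i]) * theta3[i][0] for i in range(3))
analytic = back * a3[0] * (1 - a3[0]) * a2[1]

eps = 1e-6
thetas[1][0][1] += eps
J_plus = loss(forward(thetas, ks, x)[-1], y)
thetas[1][0][1] -= 2 * eps
J_minus = loss(forward(thetas, ks, x)[-1], y)
thetas[1][0][1] += eps  # restore the original weight
numeric = (J_plus - J_minus) / (2 * eps)

print(analytic, numeric)
```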

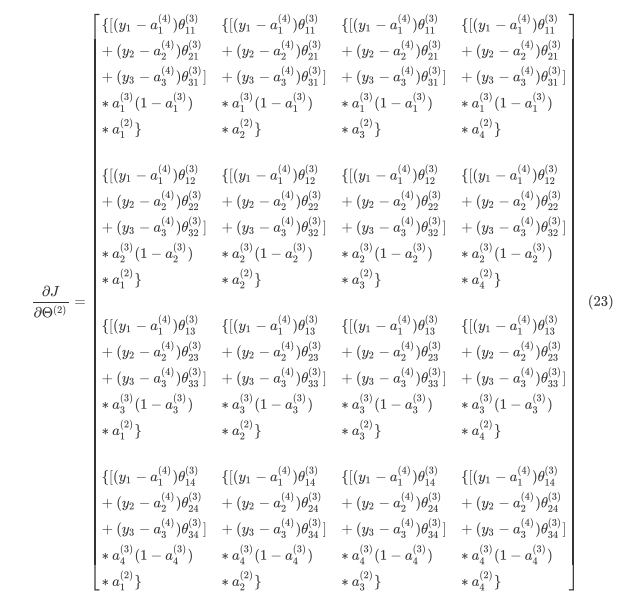

The derivatives of $J(\Theta)$ with respect to the other components of $\Theta^{(2)}$ follow in the same way. Writing $\frac{\partial J}{\partial \Theta^{(2)}}$ in matrix form, generalizing the indices in (22), the entry in row $i$ and column $j$ is:

$$\left(\frac{\partial J}{\partial \Theta^{(2)}}\right)_{ij} = \left[\sum_{n=1}^{3}(y_n-a_n^{(4)})\theta_{ni}^{(3)}\right] a^{(3)}_{i} (1-a^{(3)}_{i})\, a_{j}^{(2)}, \quad i, j = 1, \dots, 4 \tag{23}$$

(23) only looks complicated; it is really just an ordinary $4 \times 4$ matrix. Following (13), let's first factor out ${a^{(2)}}^T$:

$$\frac{\partial J}{\partial \Theta^{(2)}}_{4 \times 4} = \underbrace{\begin{bmatrix} \left[(y_1-a_1^{(4)})\theta_{11}^{(3)} + (y_2-a_2^{(4)})\theta_{21}^{(3)} + (y_3-a_3^{(4)})\theta_{31}^{(3)}\right] a^{(3)}_{1}(1-a^{(3)}_{1}) \\ \left[(y_1-a_1^{(4)})\theta_{12}^{(3)} + (y_2-a_2^{(4)})\theta_{22}^{(3)} + (y_3-a_3^{(4)})\theta_{32}^{(3)}\right] a^{(3)}_{2}(1-a^{(3)}_{2}) \\ \left[(y_1-a_1^{(4)})\theta_{13}^{(3)} + (y_2-a_2^{(4)})\theta_{23}^{(3)} + (y_3-a_3^{(4)})\theta_{33}^{(3)}\right] a^{(3)}_{3}(1-a^{(3)}_{3}) \\ \left[(y_1-a_1^{(4)})\theta_{14}^{(3)} + (y_2-a_2^{(4)})\theta_{24}^{(3)} + (y_3-a_3^{(4)})\theta_{34}^{(3)}\right] a^{(3)}_{4}(1-a^{(3)}_{4}) \end{bmatrix}_{4 \times 1}}_{(24.1)} \underbrace{\begin{bmatrix} a_{1}^{(2)} & a_{2}^{(2)} & a_{3}^{(2)} & a_{4}^{(2)} \end{bmatrix}_{1 \times 4}}_{(24.2)} \tag{24}$$

Here (24.2) is the familiar ${a^{(2)}}^T$. Let's provisionally call (24.1) $\delta^{(3)}$ (setting aside its actual meaning for now) and introduce the Hadamard product, i.e. elementwise multiplication (the $.*$ symbol in the Section 9.2 video), to split $\delta^{(3)}$ further:

$$\begin{aligned} \delta^{(3)} &= \begin{bmatrix} (y_1-a_1^{(4)})\theta_{11}^{(3)} + (y_2-a_2^{(4)})\theta_{21}^{(3)} + (y_3-a_3^{(4)})\theta_{31}^{(3)} \\ (y_1-a_1^{(4)})\theta_{12}^{(3)} + (y_2-a_2^{(4)})\theta_{22}^{(3)} + (y_3-a_3^{(4)})\theta_{32}^{(3)} \\ (y_1-a_1^{(4)})\theta_{13}^{(3)} + (y_2-a_2^{(4)})\theta_{23}^{(3)} + (y_3-a_3^{(4)})\theta_{33}^{(3)} \\ (y_1-a_1^{(4)})\theta_{14}^{(3)} + (y_2-a_2^{(4)})\theta_{24}^{(3)} + (y_3-a_3^{(4)})\theta_{34}^{(3)} \end{bmatrix} \circ \begin{bmatrix} a^{(3)}_{1} (1-a^{(3)}_{1}) \\ a^{(3)}_{2} (1-a^{(3)}_{2}) \\ a^{(3)}_{3} (1-a^{(3)}_{3}) \\ a^{(3)}_{4} (1-a^{(3)}_{4}) \end{bmatrix} \\ &= \begin{bmatrix} \theta_{11}^{(3)} & \theta_{21}^{(3)} & \theta_{31}^{(3)} \\ \theta_{12}^{(3)} & \theta_{22}^{(3)} & \theta_{32}^{(3)} \\ \theta_{13}^{(3)} & \theta_{23}^{(3)} & \theta_{33}^{(3)} \\ \theta_{14}^{(3)} & \theta_{24}^{(3)} & \theta_{34}^{(3)} \end{bmatrix} \begin{bmatrix} y_1-a_1^{(4)} \\ y_2-a_2^{(4)} \\ y_3-a_3^{(4)} \end{bmatrix} \circ \begin{bmatrix} a^{(3)}_{1} (1-a^{(3)}_{1}) \\ a^{(3)}_{2} (1-a^{(3)}_{2}) \\ a^{(3)}_{3} (1-a^{(3)}_{3}) \\ a^{(3)}_{4} (1-a^{(3)}_{4}) \end{bmatrix} \\ &= {\Theta^{(3)}}^T \delta^{(4)} \circ \frac{\partial a^{(3)}}{\partial z^{(3)}} \end{aligned} \tag{25}$$

Combining (24) and (25) gives:

$$\frac{\partial J}{\partial \Theta^{(2)}} = \delta^{(3)} {a^{(2)}}^T \tag{26}$$

where

$$\delta^{(3)} = {\Theta^{(3)}}^T \delta^{(4)} \circ \frac{\partial a^{(3)}}{\partial z^{(3)}} \tag{27}$$
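The recursion in (27) is a transposed matrix-vector product followed by an elementwise multiplication. A minimal sketch, with simple made-up numbers so the result can be checked by hand (the function name `backprop_delta` and all values are assumptions for illustration):

```python
def backprop_delta(theta, delta_next, a):
    """delta^(l) = (Theta^(l))^T delta^(l+1), multiplied elementwise by a^(l) * (1 - a^(l))."""
    # Entry j of Theta^T delta sums over the destination index i: column j of theta.
    back = [sum(theta[i][j] * delta_next[i] for i in range(len(delta_next)))
            for j in range(len(a))]
    return [b * aj * (1 - aj) for b, aj in zip(back, a)]

# Hypothetical 3 x 4 weight matrix Theta^(3), error delta^(4) and activations a^(3).
theta3 = [[1.0, 0.0, 2.0, 0.0],
          [0.0, 1.0, 0.0, 0.0],
          [0.0, 0.0, 1.0, 3.0]]
delta4 = [0.5, -0.2, 0.1]
a3 = [0.5, 0.5, 0.5, 0.5]

delta3 = backprop_delta(theta3, delta4, a3)
print(delta3)
```

Here the transposed product is `[0.5, -0.2, 1.1, 0.3]`, and each entry is then scaled by $0.5 \times (1 - 0.5) = 0.25$.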

At this point the work is essentially done. Let's put (26) and (27) side by side with (13):

$$\frac{\partial J}{\partial \Theta^{(3)}} = \delta^{(4)} {a^{(3)}}^T$$

$$\frac{\partial J}{\partial \Theta^{(2)}} = \delta^{(3)} {a^{(2)}}^T$$

$$\delta^{(3)} = {\Theta^{(3)}}^T \delta^{(4)} \circ \frac{\partial a^{(3)}}{\partial z^{(3)}} \tag{28}$$

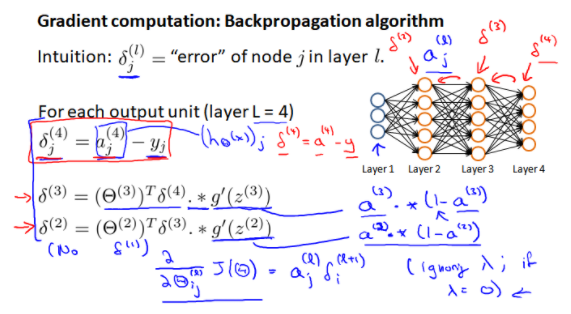

By induction, this generalizes to a neural network with $L$ layers:

$$\frac{\partial J}{\partial \Theta^{(l)}} = \delta^{(l+1)} {a^{(l)}}^T$$

$$\delta^{(l)} = \begin{cases} 0 & l=0 \\ {\Theta^{(l)}}^T \delta^{(l+1)} \circ \frac{\partial a^{(l)}}{\partial z^{(l)}} & 1\leqslant l \leqslant L-1 \\ y-a^{(l)} & l=L \end{cases} \tag{29}$$

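where $\frac{\partial a^{(l)}}{\partial z^{(l)}}$ is the derivative of $sigmoid(x)$, which by (9) equals $a^{(l)} \circ (1-a^{(l)})$.

Using (29), we can iterate backward through the layers and compute the derivative of $J(\Theta)$ with respect to every component of $\Theta$ smoothly, easily and fairly quickly.

Comparing with the result in Andrew Ng's course shows the same structure. The only difference is the sign: the course keeps the leading minus sign in the cost function, so its output-layer error is $a^{(L)} - y$ rather than $y - a^{(L)}$.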
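To tie everything together, here is a minimal sketch of the full recursion for a network of arbitrary depth, checked against finite differences on every weight. It follows this article's conventions (loss as in (6), output error $y - a^{(L)}$); the function names, the random seed, and the sample input and label are assumptions for illustration, and only the gradients with respect to $\Theta$ are computed, not those of the constant vectors $k$.

```python
import math
import random

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def forward(thetas, ks, x):
    acts = [x]
    for theta, k in zip(thetas, ks):
        z = [sum(w * a for w, a in zip(row, acts[-1])) + b for row, b in zip(theta, k)]
        acts.append([sigmoid(v) for v in z])
    return acts

def loss(a_out, y):
    return sum(yi * math.log(ai) + (1 - yi) * math.log(1 - ai) for yi, ai in zip(y, a_out))

def backprop(thetas, ks, x, y):
    """Return dJ/dTheta for every layer using the backward recursion."""
    acts = forward(thetas, ks, x)
    delta = [yi - ai for yi, ai in zip(y, acts[-1])]        # error at the output layer
    grads = [None] * len(thetas)
    for l in range(len(thetas) - 1, -1, -1):
        a = acts[l]
        grads[l] = [[d * aj for aj in a] for d in delta]    # outer product delta * a^T
        if l > 0:                                           # propagate the error one layer back
            back = [sum(thetas[l][i][j] * delta[i] for i in range(len(delta)))
                    for j in range(len(a))]
            delta = [b * aj * (1 - aj) for b, aj in zip(back, a)]
    return grads

random.seed(1)
sizes = [3, 4, 4, 3]
thetas = [[[random.uniform(-1, 1) for _ in range(sizes[l])]
           for _ in range(sizes[l + 1])] for l in range(len(sizes) - 1)]
ks = [[random.uniform(-1, 1) for _ in range(n)] for n in sizes[1:]]
x, y = [0.5, -1.2, 0.8], [0, 1, 0]

grads = backprop(thetas, ks, x, y)

# Gradient check: perturb each weight and compare with the backprop result.
eps, max_err = 1e-6, 0.0
for l, theta in enumerate(thetas):
    for i, row in enumerate(theta):
        for j in range(len(row)):
            original = row[j]
            row[j] = original + eps
            J_plus = loss(forward(thetas, ks, x)[-1], y)
            row[j] = original - eps
            J_minus = loss(forward(thetas, ks, x)[-1], y)
            row[j] = original
            numeric = (J_plus - J_minus) / (2 * eps)
            max_err = max(max_err, abs(numeric - grads[l][i][j]))

print(max_err)
```

The finite-difference loop needs two forward passes per weight, while `backprop` obtains all the derivatives from one forward pass and one backward pass, which is the speed-up the derivation is after.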
