本文主要解析caffe源码文件/src/caffe/layers/Data_layer.cpp和Base_Data_layer.cpp,这两个文件主要实现caffe数据层的定义。

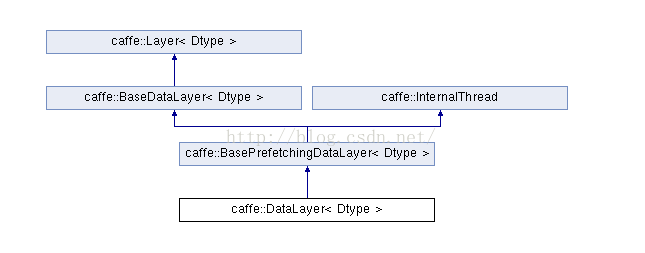

data_layer应该是网络的最底层,主要是将数据送给blob进入到net中。能过代码可以看到Data_Layer类与Layer类之间存在着如下的继承关系:::

所以要看懂Data_Layer类构造,要先了解Layer类的构造:http://blog.csdn.net/lanxuecc/article/details/53023211

其次了解Base_data_layer.cpp中的BaseDataLayer类与BasePrefetchingDataLayer类,InternalThread类是Caffe中的多线程接口虚类。

Base_data_layer.hpp::::::::

#ifndef CAFFE_DATA_LAYERS_HPP_

#define CAFFE_DATA_LAYERS_HPP_

#include <vector>

#include "caffe/blob.hpp"

#include "caffe/data_transformer.hpp" //data_transformer文件中实现了常用的数据预处理操作,如尺度变换,减均值,镜像变换等

#include "caffe/internal_thread.hpp" //处理多线程的代码文件

#include "caffe/layer.hpp"

#include "caffe/proto/caffe.pb.h"

#include "caffe/util/blocking_queue.hpp" //线程队列的相关文件

namespace caffe {

/**

* @brief Provides base for data layers that feed blobs to the Net.

*

* TODO(dox): thorough documentation for Forward and proto params.

*/

/*Layer的子类,data_layer的基类负责将Blobs数据送入网络*/

template <typename Dtype>

class BaseDataLayer : public Layer<Dtype> {

public:

explicit BaseDataLayer(const LayerParameter& param); //构造函数,传入的参数就是solover.prototxt文件中定义的每层的参数

// LayerSetUp: implements common data layer setup functionality, and calls

// DataLayerSetUp to do special data layer setup for individual layer types.

// This method may not be overridden except by the BasePrefetchingDataLayer.

// 该虚函数实现了一般data_layer的功能,能够调用DataLayerSetUp来完成具体的data_layer的设置

// 只能被BasePrefetchingDataLayer类来重载

virtual void LayerSetUp(const vector<Blob<Dtype>*>& bottom,

const vector<Blob<Dtype>*>& top);

// Data layers should be shared by multiple solvers in parallel

// 数据层可以被其他的solver共享

virtual inline bool ShareInParallel() const { return true; }

// 层数据设置,具体要求的data_layer要重载这个函数来具体实现

virtual void DataLayerSetUp(const vector<Blob<Dtype>*>& bottom,

const vector<Blob<Dtype>*>& top) {}

// Data layers have no bottoms, so reshaping is trivial.

virtual void Reshape(const vector<Blob<Dtype>*>& bottom,

const vector<Blob<Dtype>*>& top) {}

//虚函数由子类具体实现具体的cpu与gpu的后向传播

virtual void Backward_cpu(const vector<Blob<Dtype>*>& top,

const vector<bool>& propagate_down, const vector<Blob<Dtype>*>& bottom) {}

virtual void Backward_gpu(const vector<Blob<Dtype>*>& top,

const vector<bool>& propagate_down, const vector<Blob<Dtype>*>& bottom) {}

protected:

// 在caffe.proto中定义的参数类

TransformationParameter transform_param_;

//DataTransformer类的智能指针,DataTransformer类主要负责对数据进行预处理

shared_ptr<DataTransformer<Dtype> > data_transformer_;

//是否有labels

bool output_labels_;

};

//两个blob类的对象,数据与标签

template <typename Dtype>

class Batch {

public:

Blob<Dtype> data_, label_;

};

/*派生自类BaseDataLayer和类InternalThread*/

template <typename Dtype>

class BasePrefetchingDataLayer :

public BaseDataLayer<Dtype>, public InternalThread {

public:

//构造函数

explicit BasePrefetchingDataLayer(const LayerParameter& param);

// LayerSetUp: implements common data layer setup functionality, and calls

// DataLayerSetUp to do special data layer setup for individual layer types.

// This method may not be overridden.

// 该虚函数实现了一般data_layer的功能,能够调用DataLayerSetUp来完成具体的data_layer的设置

// 该函数不能被重载

void LayerSetUp(const vector<Blob<Dtype>*>& bottom,

const vector<Blob<Dtype>*>& top);

//具体的data_layer具体的实现这两个函数

virtual void Forward_cpu(const vector<Blob<Dtype>*>& bottom,

const vector<Blob<Dtype>*>& top);

virtual void Forward_gpu(const vector<Blob<Dtype>*>& bottom,

const vector<Blob<Dtype>*>& top);

// Prefetches batches (asynchronously if to GPU memory)

static const int PREFETCH_COUNT = 3;

protected:

//通过这个函数执行线程函数

virtual void InternalThreadEntry();

//加载batch

virtual void load_batch(Batch<Dtype>* batch) = 0;

/*batch数组*/

Batch<Dtype> prefetch_[PREFETCH_COUNT];

/*两个阻塞队列*/

BlockingQueue<Batch<Dtype>*> prefetch_free_; /*从prefetch_free_队列取数据结构,填充数据结构放到prefetch_full_队列*/

BlockingQueue<Batch<Dtype>*> prefetch_full_; /*从prefetch_full_队列取数据,使用数据,清空数据结构,放到prefetch_free_队列*/

/*转换过的blob数据,中间变量用来辅助图像变换*/

Blob<Dtype> transformed_data_;

};

} // namespace caffe

#endif // CAFFE_DATA_LAYERS_HPP_

Base_data_layer.cpp::::::::

#include <boost/thread.hpp>

#include <vector>

#include "caffe/blob.hpp"

#include "caffe/data_transformer.hpp"

#include "caffe/internal_thread.hpp"

#include "caffe/layer.hpp"

#include "caffe/layers/base_data_layer.hpp"

#include "caffe/proto/caffe.pb.h"

#include "caffe/util/blocking_queue.hpp"

namespace caffe {

// 构造函数初始化,先用param初始化父类Layer

// 再用param.transform_param()初始化transform_param_s

template <typename Dtype>

BaseDataLayer<Dtype>::BaseDataLayer(const LayerParameter& param)

: Layer<Dtype>(param),

transform_param_(param.transform_param()) {

}

template <typename Dtype>

void BaseDataLayer<Dtype>::LayerSetUp(const vector<Blob<Dtype>*>& bottom,

const vector<Blob<Dtype>*>& top) {

if (top.size() == 1) { //获得是否有label

output_labels_ = false;

} else {

output_labels_ = true;

}

/*创建DataTransformer类的智能指针,用来预处理数据*/

data_transformer_.reset(

new DataTransformer<Dtype>(transform_param_, this->phase_));

data_transformer_->InitRand(); //生成随机数据种子

// The subclasses should setup the size of bottom and top

DataLayerSetUp(bottom, top); //层数据设置

}

// BasePrefetchingDataLayer构造函数

template <typename Dtype>

BasePrefetchingDataLayer<Dtype>::BasePrefetchingDataLayer(

const LayerParameter& param)

: BaseDataLayer<Dtype>(param),

prefetch_free_(), prefetch_full_() {

for (int i = 0; i < PREFETCH_COUNT; ++i) {

prefetch_free_.push(&prefetch_[i]);

}

}

template <typename Dtype>

void BasePrefetchingDataLayer<Dtype>::LayerSetUp(

const vector<Blob<Dtype>*>& bottom, const vector<Blob<Dtype>*>& top) {

BaseDataLayer<Dtype>::LayerSetUp(bottom, top);// 先调用父类BaseDataLayer的LayerSetUp

// Before starting the prefetch thread, we make cpu_data and gpu_data

// calls so that the prefetch thread does not accidentally make simultaneous

// cudaMalloc calls when the main thread is running. In some GPUs this

// seems to cause failures if we do not so.

// 在开启prefetch线程之前,调用cpu_data和gpu_data,

// 这样主线程正在运行时,prefetch线程避免同时调用cudaMalloc,

// 这样做避免了某些gpu上出现错误

for (int i = 0; i < PREFETCH_COUNT; ++i) {

prefetch_[i].data_.mutable_cpu_data(); /*依次给队列中每个batch的数据blob分配cpu内存*/

if (this->output_labels_) {

prefetch_[i].label_.mutable_cpu_data(); /*依次分配每个每个batch的标签blob分配cpu内存*/

}

}

#ifndef CPU_ONLY

if (Caffe::mode() == Caffe::GPU) {

for (int i = 0; i < PREFETCH_COUNT; ++i) {

prefetch_[i].data_.mutable_gpu_data();/*依次给队列中每个batch的数据blob分配gpu内存*/

if (this->output_labels_) {

prefetch_[i].label_.mutable_gpu_data();/*依次分配每个每个batch的标签blob分配gpu内存*/

}

}

}

#endif

DLOG(INFO) << "Initializing prefetch";

this->data_transformer_->InitRand();//生成随机数据种子

StartInternalThread();//启动内部读取数据线程

DLOG(INFO) << "Prefetch initialized.";

}

// 如果有空闲线程,让该线程去取数据

template <typename Dtype>

void BasePrefetchingDataLayer<Dtype>::InternalThreadEntry() {

#ifndef CPU_ONLY

cudaStream_t stream;

if (Caffe::mode() == Caffe::GPU) {

CUDA_CHECK(cudaStreamCreateWithFlags(&stream, cudaStreamNonBlocking));

}

#endif

try {

while (!must_stop()) {

Batch<Dtype>* batch = prefetch_free_.pop();//从free_队列去数据结构

load_batch(batch);//取数据,填充数据结构。在其派生类实现的

#ifndef CPU_ONLY

if (Caffe::mode() == Caffe::GPU) {

batch->data_.data().get()->async_gpu_push(stream);//异步,把数据同步到GPU,使用Syncedmem->async_gpu_push

CUDA_CHECK(cudaStreamSynchronize(stream));

}

#endif

prefetch_full_.push(batch);//把数据放到full_队列

}

} catch (boost::thread_interrupted&) {

// Interrupted exception is expected on shutdown

}

#ifndef CPU_ONLY

if (Caffe::mode() == Caffe::GPU) {

CUDA_CHECK(cudaStreamDestroy(stream));

}

#endif

}

// 将预处理过的batch,送到top

// 数据层的forward函数不进行计算,不使用bottom,只是准备数据,填充到top

template <typename Dtype>

void BasePrefetchingDataLayer<Dtype>::Forward_cpu(

const vector<Blob<Dtype>*>& bottom, const vector<Blob<Dtype>*>& top) {

Batch<Dtype>* batch = prefetch_full_.pop("Data layer prefetch queue empty");//从full队列取数据

// Reshape to loaded data.

top[0]->ReshapeLike(batch->data_);//调整top数据形状大小,一次读取一个batch大小的数据

// Copy the data

caffe_copy(batch->data_.count(), batch->data_.cpu_data(),

top[0]->mutable_cpu_data());// Copy the data。把数据拷贝到top中

DLOG(INFO) << "Prefetch copied";

if (this->output_labels_) {//如果有标签,也要把标签拷贝到top中

// Reshape to loaded labels.

top[1]->ReshapeLike(batch->label_);//调整top标签形状大小

// Copy the labels.

caffe_copy(batch->label_.count(), batch->label_.cpu_data(),

top[1]->mutable_cpu_data()); //拷贝标签到top中

}

prefetch_free_.push(batch);//用过的数据结构,放回free队列

}

#ifdef CPU_ONLY

STUB_GPU_FORWARD(BasePrefetchingDataLayer, Forward);

#endif

INSTANTIATE_CLASS(BaseDataLayer);

INSTANTIATE_CLASS(BasePrefetchingDataLayer);

} // namespace caffe

再来看看Data_layer.cpp中定义的Data_layer类。

Data_layer.hpp

#include "caffe/data_reader.hpp"

#include "caffe/data_transformer.hpp"

#include "caffe/internal_thread.hpp"

#include "caffe/layer.hpp"

#include "caffe/layers/base_data_layer.hpp"

#include "caffe/proto/caffe.pb.h"

#include "caffe/util/db.hpp"

namespace caffe {

/*datalayer继承了类BasePrefetchingDataLayer*/

template <typename Dtype>

class DataLayer : public BasePrefetchingDataLayer<Dtype> {

public:

//构造函数

explicit DataLayer(const LayerParameter& param); /*传入protobuf的网络的层的参数*/

//析构函数

virtual ~DataLayer();

//层设置函数

virtual void DataLayerSetUp(const vector<Blob<Dtype>*>& bottom,

const vector<Blob<Dtype>*>& top);

// DataLayer uses DataReader instead for sharing for parallelism

// 是否在并行时共享该层

virtual inline bool ShareInParallel() const { return false; }

//返回该层类型

virtual inline const char* type() const { return "Data"; }

//返回输入的blobs数量,因为数据层最底层,所以为0

virtual inline int ExactNumBottomBlobs() const { return 0; }

//返回最小的输出blobs数量

virtual inline int MinTopBlobs() const { return 1; }

//返回最大的输出blobs数量

virtual inline int MaxTopBlobs() const { return 2; }

protected:

virtual void load_batch(Batch<Dtype>* batch); //加载数据

DataReader reader_; /*其作用是添加读取数据任务至,一个专门读取数据库(examples/mnist/mnist_train_lmdb)的线程(若还不存在该线程,则创建该线程)*/

};

} // namespace caffe

#endif // CAFFE_DATA_LAYER_HPP_

Data_layer.cpp

#ifdef USE_OPENCV

#include <opencv2/core/core.hpp>

#endif // USE_OPENCV

#include <stdint.h>

#include <vector>

#include "caffe/data_transformer.hpp"

#include "caffe/layers/data_layer.hpp"

#include "caffe/util/benchmark.hpp"

namespace caffe {

template <typename Dtype>

DataLayer<Dtype>::DataLayer(const LayerParameter& param)

: BasePrefetchingDataLayer<Dtype>(param), /*调用基类构造函数BasePrefetchingDataLayer()之后,对 DataReader reader_ 进行赋值*/

reader_(param) {

}

template <typename Dtype>

DataLayer<Dtype>::~DataLayer() {

this->StopInternalThread(); //终止线程

}

/*Data_layer用该函数来完成具体的层设置*/

template <typename Dtype>

void DataLayer<Dtype>::DataLayerSetUp(const vector<Blob<Dtype>*>& bottom,

const vector<Blob<Dtype>*>& top) { /*这里batch_size就是solver.prototxt中传入的*/

const int batch_size = this->layer_param_.data_param().batch_size(); /*layer_param_在父类layer中定义*/

// Read a data point, and use it to initialize the top blob.

// 获取读的数据指针,然后用它初始化top blob

// Datum是在caffe.prototxt中定义的,DataReader用LayerParameter初始化后(内含有DataParameter),

// 可以获取要读的数据信息,并返回Datum,后面在根据Datum来reshape

Datum& datum = *(reader_.full().peek());

// Use data_transformer to infer the expected blob shape from datum.

// 从datum中判断top blob的形状

vector<int> top_shape = this->data_transformer_->InferBlobShape(datum);

//转换成top_blob需要的形状

this->transformed_data_.Reshape(top_shape);

// Reshape top[0] and prefetch_data according to the batch_size.

top_shape[0] = batch_size;

top[0]->Reshape(top_shape); /*reshape top[0]中数据*/

// reshape每个线程的prefetch 数据,并且分配内存

for (int i = 0; i < this->PREFETCH_COUNT; ++i) {

this->prefetch_[i].data_.Reshape(top_shape);

}

LOG(INFO) << "output data size: " << top[0]->num() << ","

<< top[0]->channels() << "," << top[0]->height() << ","

<< top[0]->width();

// label

// 如果有标签,每个线程的标签也要reshape,并且分配内存

if (this->output_labels_) {

vector<int> label_shape(1, batch_size);

top[1]->Reshape(label_shape);

for (int i = 0; i < this->PREFETCH_COUNT; ++i) {

this->prefetch_[i].label_.Reshape(label_shape);

}

}

}

// This function is called on prefetch thread

// 这个函数被prefetch线程所调用

template<typename Dtype>

void DataLayer<Dtype>::load_batch(Batch<Dtype>* batch) {

CPUTimer batch_timer;

batch_timer.Start();

double read_time = 0;

double trans_time = 0;

CPUTimer timer;

CHECK(batch->data_.count());

CHECK(this->transformed_data_.count());

// 读取一个dataum,用来初始化top blob维度

// Reshape according to the first datum of each batch

// on single input batches allows for inputs of varying dimension.

const int batch_size = this->layer_param_.data_param().batch_size();

Datum& datum = *(reader_.full().peek());

// Use data_transformer to infer the expected blob shape from datum.

vector<int> top_shape = this->data_transformer_->InferBlobShape(datum);

this->transformed_data_.Reshape(top_shape);

// Reshape batch according to the batch_size.

top_shape[0] = batch_size;

batch->data_.Reshape(top_shape); /*同时分配内存*/

Dtype* top_data = batch->data_.mutable_cpu_data();

Dtype* top_label = NULL; // suppress warnings about uninitialized variables

if (this->output_labels_) {

top_label = batch->label_.mutable_cpu_data();

}

//循环加载batch

for (int item_id = 0; item_id < batch_size; ++item_id) {

timer.Start();

// get a datum

// 读取数据datum

Datum& datum = *(reader_.full().pop("Waiting for data"));

// 统计读取时间

read_time += timer.MicroSeconds();

timer.Start();

// 计算指针offset

// Apply data transformations (mirror, scale, crop...)

int offset = batch->data_.offset(item_id);

this->transformed_data_.set_cpu_data(top_data + offset);

// 将datum数据拷贝到batch中

this->data_transformer_->Transform(datum, &(this->transformed_data_));

// Copy label.

// 拷贝标签

if (this->output_labels_) {

top_label[item_id] = datum.label();

}

// 统计拷贝时间

trans_time += timer.MicroSeconds();

reader_.free().push(const_cast<Datum*>(&datum));

}

timer.Stop();

// 统计加载batch总时间

batch_timer.Stop();

// 输出时间开销

DLOG(INFO) << "Prefetch batch: " << batch_timer.MilliSeconds() << " ms.";

DLOG(INFO) << " Read time: " << read_time / 1000 << " ms.";

DLOG(INFO) << "Transform time: " << trans_time / 1000 << " ms.";

}

INSTANTIATE_CLASS(DataLayer);

REGISTER_LAYER_CLASS(Data);

} // namespace caffe

感谢::::http://blog.csdn.net/iamzhangzhuping/article/details/50582503

4万+

4万+

被折叠的 条评论

为什么被折叠?

被折叠的 条评论

为什么被折叠?