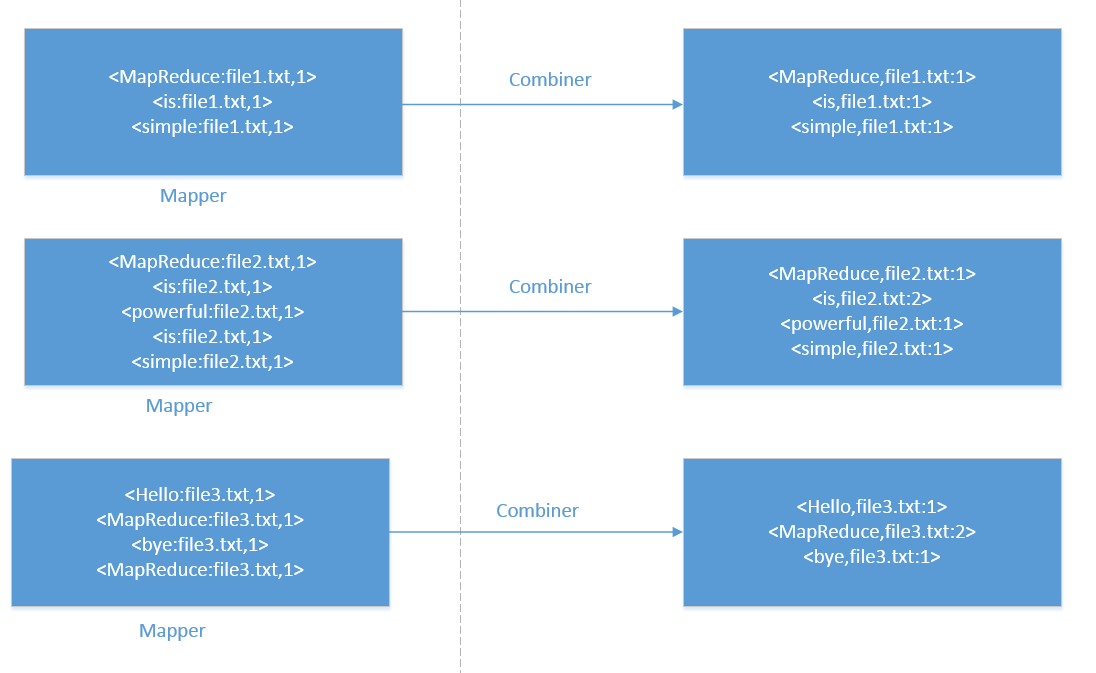

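1. A Combiner merges the multiple (key, value) pairs that a single map task produces for the same key into one new (key, value) pair, which then becomes the input to reduce.

2. A combine function sits between the map function and the reduce function. Its purpose is to shrink the intermediate map output, so the reducers copy less data from the map side and the network carries less traffic.

3. A Combiner cannot be used in every case. It suits aggregations such as sums, where partial results can be merged again without changing the final answer. It cannot be applied directly to an average, because the average of per-map averages is generally not the overall average.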
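To make the averaging caveat concrete, here is a minimal plain-Java sketch (no Hadoop dependency; the class and method names are hypothetical and only for illustration). It compares averaging the per-map averages with carrying (sum, count) pairs and dividing once at the end, which is one common way to make an average combinable.

```java
import java.util.Arrays;
import java.util.List;

class AverageCombinerSketch {
    // Plain arithmetic mean of one list of values.
    static double mean(List<Double> values) {
        double sum = 0;
        for (double v : values) {
            sum += v;
        }
        return sum / values.size();
    }

    // Wrong approach: every "map task" pre-averages its own values,
    // then the "reducer" averages those averages.
    static double meanOfMeans(List<List<Double>> mapOutputs) {
        double sum = 0;
        for (List<Double> part : mapOutputs) {
            sum += mean(part);
        }
        return sum / mapOutputs.size();
    }

    // Safe approach: every "map task" contributes a (sum, count) pair;
    // the "reducer" adds them up and divides exactly once.
    static double meanFromPartialSums(List<List<Double>> mapOutputs) {
        double sum = 0;
        long count = 0;
        for (List<Double> part : mapOutputs) {
            for (double v : part) {
                sum += v;
            }
            count += part.size();
        }
        return sum / count;
    }

    public static void main(String[] args) {
        // Two simulated map tasks with unequal numbers of values.
        List<List<Double>> mapOutputs = Arrays.asList(
                Arrays.asList(1.0, 2.0, 3.0),
                Arrays.asList(10.0));
        System.out.println(meanOfMeans(mapOutputs));         // 6.0 (incorrect)
        System.out.println(meanFromPartialSums(mapOutputs)); // 4.0 (correct: 16 / 4)
    }
}
```

The two results differ whenever the map tasks hold different numbers of values, which is why a sum-style Combiner cannot simply be reused for an average.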

The Combiner component performs local processing on the output of each individual map task.
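The local, per-map nature of the Combiner can be sketched in plain Java without Hadoop. The class below is a hypothetical simulation, not Hadoop code: each map task's words are combined on their own, and only the combined records reach the simulated reducer.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;
import java.util.Map;
import java.util.TreeMap;

class LocalCombineSketch {
    // Simulated map task: each returned word stands for one (word, 1) record.
    static List<String> mapWords(String line) {
        List<String> words = new ArrayList<>();
        for (String w : line.trim().split("\\s+")) {
            if (!w.isEmpty()) {
                words.add(w);
            }
        }
        return words;
    }

    // Simulated Combiner: sums the 1s per key within ONE map task's output.
    static Map<String, Integer> combine(List<String> words) {
        Map<String, Integer> counts = new TreeMap<>();
        for (String w : words) {
            counts.merge(w, 1, Integer::sum);
        }
        return counts;
    }

    // Simulated reducer: sums the partial counts coming from all map tasks.
    static Map<String, Integer> reduce(List<Map<String, Integer>> partials) {
        Map<String, Integer> totals = new TreeMap<>();
        for (Map<String, Integer> partial : partials) {
            for (Map.Entry<String, Integer> e : partial.entrySet()) {
                totals.merge(e.getKey(), e.getValue(), Integer::sum);
            }
        }
        return totals;
    }

    public static void main(String[] args) {
        Map<String, Integer> m1 = combine(mapWords("a b a a")); // 4 records become 2
        Map<String, Integer> m2 = combine(mapWords("b b c"));   // 3 records become 2
        System.out.println(m1);                             // {a=3, b=1}
        System.out.println(m2);                             // {b=2, c=1}
        System.out.println(reduce(Arrays.asList(m1, m2)));  // {a=3, b=3, c=1}
    }
}
```

In this toy run the seven map records shrink to four before they would be shuffled, while the final counts stay the same.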

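When the Combiner runs:

1. When the job has a Combiner set and the number of spills (map output is written to disk each time the buffer reaches the spill threshold) reaches min.num.spills.for.combine (default 3; named mapreduce.map.combine.minspills in Hadoop 2 and later), the Combiner runs as part of the Merge step, before the merged file is written (the Merge combines many small spill files into one larger file).

2. In some cases, however, the Merge starts while the number of spill files is still below that threshold; the Combiner may then run after the Merge.

3. The Combiner may also not run at all, depending on the load on the cluster at the time. Hadoop does not guarantee how many times the Combiner is called for a given map output (zero, one, or several times), so a job must produce the same result in every case.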
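Because the Combiner may run zero, one, or several times, it has to be safe to apply repeatedly. The following plain-Java sketch (hypothetical names, no Hadoop dependency) applies a sum-style combine pass a varying number of times and checks that the final reduce result does not change.

```java
import java.util.AbstractMap.SimpleEntry;
import java.util.ArrayList;
import java.util.List;
import java.util.Map;
import java.util.TreeMap;

class RepeatedCombineSketch {
    // Builds simulated map output: one (word, 1) record per token.
    static List<Map.Entry<String, Integer>> ones(String line) {
        List<Map.Entry<String, Integer>> records = new ArrayList<>();
        for (String w : line.trim().split("\\s+")) {
            if (!w.isEmpty()) {
                records.add(new SimpleEntry<>(w, 1));
            }
        }
        return records;
    }

    // One combine pass: sums values per key. Input and output are both lists
    // of (key, count) records, so the pass can be applied any number of times.
    static List<Map.Entry<String, Integer>> combine(List<Map.Entry<String, Integer>> records) {
        Map<String, Integer> sums = new TreeMap<>();
        for (Map.Entry<String, Integer> r : records) {
            sums.merge(r.getKey(), r.getValue(), Integer::sum);
        }
        return new ArrayList<>(sums.entrySet());
    }

    // Applies the combine pass `passes` times (0 means the Combiner never ran),
    // then performs the final reduce, which here is the same per-key sum.
    static String reduceAfter(int passes, List<Map.Entry<String, Integer>> mapOutput) {
        List<Map.Entry<String, Integer>> records = mapOutput;
        for (int i = 0; i < passes; i++) {
            records = combine(records);
        }
        return combine(records).toString();
    }

    public static void main(String[] args) {
        List<Map.Entry<String, Integer>> mapOutput = ones("a b a a b");
        System.out.println(reduceAfter(0, mapOutput)); // [a=3, b=2]
        System.out.println(reduceAfter(1, mapOutput)); // [a=3, b=2]
        System.out.println(reduceAfter(3, mapOutput)); // [a=3, b=2]
    }
}
```

A combine function that changed the record shape, or whose result depended on how often it was applied, would fail this kind of check and should not be registered as a Combiner.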

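Case where the Combiner does not run: with the number of reduce tasks set to 0 the job is map-only, so the map output is written straight to the output files and the Combiner is never invoked, even though one is set.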

Define MyMapper.java

package com.mr;

import java.io.IOException;

import org.apache.hadoop.io.IntWritable;
import org.apache.hadoop.io.LongWritable;
import org.apache.hadoop.io.Text;
import org.apache.hadoop.mapreduce.Mapper;

// Emits (word, 1) for every whitespace-separated token of the input line.
public class MyMapper extends Mapper<LongWritable, Text, Text, IntWritable> {

    private final IntWritable one = new IntWritable(1);
    // Reused for every record to avoid allocating a new Text per word.
    private final Text word = new Text();

    @Override
    protected void map(LongWritable key, Text value, Context context)
            throws IOException, InterruptedException {
        for (String str : value.toString().split("\\s+")) {
            if (str.isEmpty()) {
                continue; // a blank line or leading whitespace yields an empty token
            }
            word.set(str);
            context.write(word, one);
        }
    }
}

Define MyCombiner.java

package com.mr;

import java.io.IOException;

import org.apache.hadoop.io.IntWritable;
import org.apache.hadoop.io.Text;
import org.apache.hadoop.mapreduce.Reducer;

// Sums the counts for one key. Input and output types are identical,
// which is what allows this Reducer to be used as a Combiner.
public class MyCombiner extends Reducer<Text, IntWritable, Text, IntWritable> {

    @Override
    protected void reduce(Text key, Iterable<IntWritable> values, Context context)
            throws IOException, InterruptedException {
        int sum = 0;
        for (IntWritable val : values) {
            sum += val.get();
        }
        context.write(key, new IntWritable(sum));
    }
}

Define TestCombiner.java

package com.mr;

import org.apache.hadoop.conf.Configuration;
import org.apache.hadoop.fs.Path;
import org.apache.hadoop.io.IntWritable;
import org.apache.hadoop.io.Text;
import org.apache.hadoop.mapreduce.Job;
import org.apache.hadoop.mapreduce.lib.input.FileInputFormat;
import org.apache.hadoop.mapreduce.lib.output.FileOutputFormat;
import org.apache.hadoop.util.GenericOptionsParser;

public class TestCombiner {

    public static void main(String[] args) throws Exception {
        Configuration conf = new Configuration();
        String[] otherArgs = new GenericOptionsParser(conf, args).getRemainingArgs();
        if (otherArgs.length != 2) {
            System.err.println("Usage: wordcount <in> <out>");
            System.exit(2);
        }

        // This constructor is deprecated since Hadoop 2;
        // Job.getInstance(conf, "word count") is the replacement there.
        Job job = new Job(conf, "word count");
        job.setJarByClass(TestCombiner.class);
        job.setMapperClass(MyMapper.class);
        job.setCombinerClass(MyCombiner.class); // set, but never executed in this job
        // job.setReducerClass(IntSumReducer.class);
        job.setNumReduceTasks(0); // map-only job: no reduce phase
        job.setOutputKeyClass(Text.class);
        job.setOutputValueClass(IntWritable.class);

        FileInputFormat.addInputPath(job, new Path(otherArgs[0]));
        FileOutputFormat.setOutputPath(job, new Path(otherArgs[1]));
        System.exit(job.waitForCompletion(true) ? 0 : 1);
    }
}
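What the driver above does to the output can be pictured with a small plain-Java simulation. It rests on the assumption, stated here explicitly, that a job with zero reduce tasks writes each map record directly to the output without a sort, spill, or combine step. The class and method names are hypothetical, and the tab separator mirrors the default text output layout.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.Map;
import java.util.TreeMap;

class MapOnlySketch {
    // Simulated output records of one map task for a single input line.
    static List<String> mapTaskOutput(String line, int numReduceTasks) {
        List<String> records = new ArrayList<>();
        String[] tokens = line.trim().split("\\s+");
        if (numReduceTasks == 0) {
            // Map-only job: every (word, 1) record is written as-is,
            // so a configured Combiner has nothing to hook into.
            for (String w : tokens) {
                if (!w.isEmpty()) {
                    records.add(w + "\t1");
                }
            }
            return records;
        }
        // With a reduce phase, map output passes through sort and spill,
        // where a sum-style Combiner can merge records with the same key.
        Map<String, Integer> sums = new TreeMap<>();
        for (String w : tokens) {
            if (!w.isEmpty()) {
                sums.merge(w, 1, Integer::sum);
            }
        }
        for (Map.Entry<String, Integer> e : sums.entrySet()) {
            records.add(e.getKey() + "\t" + e.getValue());
        }
        return records;
    }

    public static void main(String[] args) {
        // Zero reducers: three uncombined records, "a" appears twice with count 1.
        System.out.println(mapTaskOutput("a b a", 0).size()); // 3
        // At least one reducer: the two "a" records are merged into one.
        System.out.println(mapTaskOutput("a b a", 1).size()); // 2
    }
}
```

In a real run, a practical sign of the map-only case is that the output files contain the same word several times, each with a count of 1.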

Case where the Combiner runs:

The TestCombiner.java code is as follows:

package com.mr;

import org.apache.hadoop.conf.Configuration;
import org.apache.hadoop.fs.Path;
import org.apache.hadoop.io.IntWritable;
import org.apache.hadoop.io.Text;
import org.apache.hadoop.mapreduce.Job;
import org.apache.hadoop.mapreduce.lib.input.FileInputFormat;
import org.apache.hadoop.mapreduce.lib.output.FileOutputFormat;
import org.apache.hadoop.mapreduce.lib.reduce.IntSumReducer;
import org.apache.hadoop.util.GenericOptionsParser;

public class TestCombiner {

    public static void main(String[] args) throws Exception {
        Configuration conf = new Configuration();
        String[] otherArgs = new GenericOptionsParser(conf, args).getRemainingArgs();
        if (otherArgs.length != 2) {
            System.err.println("Usage: wordcount <in> <out>");
            System.exit(2);
        }

        // This constructor is deprecated since Hadoop 2;
        // Job.getInstance(conf, "word count") is the replacement there.
        Job job = new Job(conf, "word count");
        job.setJarByClass(TestCombiner.class);
        job.setMapperClass(MyMapper.class);
        job.setCombinerClass(MyCombiner.class); // the Combiner is executed in this job
        job.setReducerClass(IntSumReducer.class); // a reduce phase exists, so map output is sorted and spilled
        // job.setNumReduceTasks(0);
        job.setOutputKeyClass(Text.class);
        job.setOutputValueClass(IntWritable.class);

        FileInputFormat.addInputPath(job, new Path(otherArgs[0]));
        FileOutputFormat.setOutputPath(job, new Path(otherArgs[1]));
        System.exit(job.waitForCompletion(true) ? 0 : 1);
    }
}
