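A state machine consists of a set of states that fall into three categories: initial, intermediate, and final. It starts in the initial state, passes through a series of intermediate states, and exits once it reaches a final state. Each state accepts a specific set of events and transitions to another state according to the type of event it receives.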

State transition types in YARN

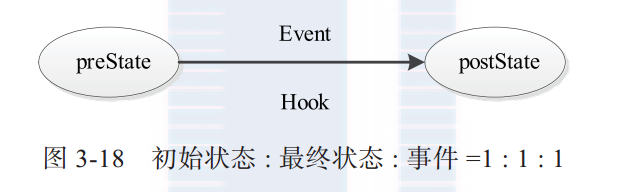

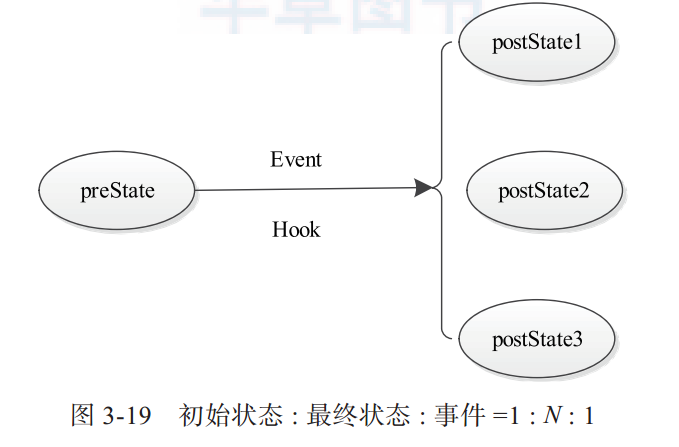

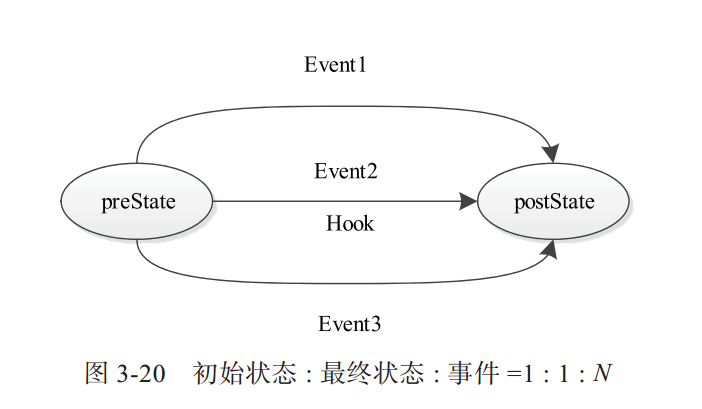

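In YARN, each state transition is described by a four-tuple: the state before the transition (preState), the state after the transition (postState), the event (event), and the callback function (hook). YARN defines three types of state transition: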

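1) One preState, one postState, one event type. In state preState, when the state machine receives the event Event, it runs the state transition function Hook and, once the function completes, changes the current state to postState.

2) One preState, several possible postStates, one event type. In state preState, when the state machine receives the event Event, it runs the state transition function Hook and changes the current state to the state that Hook returns.

3) One preState, one postState, several event types. In state preState, when the state machine receives any one of Event1, Event2, or Event3, it runs the state transition function Hook and, once the function completes, changes the current state to postState.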
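The three forms can be illustrated with a small, self-contained sketch. It is a toy written for illustration, not YARN's StateMachineFactory: the class MiniStateMachine, the enums Phase and Evt, and the use of Runnable and Supplier as hooks are all assumptions. Each addTransition overload corresponds to one of the three forms listed above.

```java
import java.util.ArrayList;
import java.util.EnumSet;
import java.util.HashMap;
import java.util.List;
import java.util.Map;
import java.util.Set;
import java.util.function.Supplier;

// Toy state machine (hypothetical, not Hadoop code) with one overload per transition form.
class MiniStateMachine<S extends Enum<S>, E extends Enum<E>> {
    // preState -> (event -> arc); an arc runs its hook and returns the next state
    private final Map<S, Map<E, Supplier<S>>> table = new HashMap<>();
    private S current;

    MiniStateMachine(S initial) { current = initial; }

    // Form 1: one preState, one postState, one event.
    MiniStateMachine<S, E> addTransition(S pre, S post, E event, Runnable hook) {
        table.computeIfAbsent(pre, k -> new HashMap<>())
             .put(event, () -> { hook.run(); return post; });
        return this;
    }

    // Form 2: one preState, several candidate postStates, one event; the hook picks one.
    MiniStateMachine<S, E> addTransition(S pre, Set<S> posts, E event, Supplier<S> hook) {
        table.computeIfAbsent(pre, k -> new HashMap<>()).put(event, () -> {
            S next = hook.get();
            if (!posts.contains(next)) {
                throw new IllegalStateException("Hook returned undeclared state " + next);
            }
            return next;
        });
        return this;
    }

    // Form 3: one preState, one postState, several events sharing one hook.
    MiniStateMachine<S, E> addTransition(S pre, S post, Set<E> events, Runnable hook) {
        for (E event : events) {
            addTransition(pre, post, event, hook);
        }
        return this;
    }

    // Looks up the arc for (current state, event), runs it, and stores the new state.
    S doTransition(E event) {
        Map<E, Supplier<S>> arcs = table.get(current);
        Supplier<S> arc = (arcs == null) ? null : arcs.get(event);
        if (arc == null) {
            throw new IllegalStateException("Invalid event " + event + " at " + current);
        }
        current = arc.get();
        return current;
    }

    S getCurrentState() { return current; }
}

class TransitionFormsDemo {
    enum Phase { NEW, RUNNING, SUCCEEDED, KILLED }
    enum Evt { START, FINISH, KILL, TIMEOUT }

    static MiniStateMachine<Phase, Evt> build(List<String> log) {
        return new MiniStateMachine<Phase, Evt>(Phase.NEW)
            .addTransition(Phase.NEW, Phase.RUNNING, Evt.START,
                () -> log.add("start hook"))
            .addTransition(Phase.RUNNING, EnumSet.of(Phase.SUCCEEDED, Phase.KILLED), Evt.FINISH,
                () -> Phase.SUCCEEDED)
            .addTransition(Phase.RUNNING, Phase.KILLED, EnumSet.of(Evt.KILL, Evt.TIMEOUT),
                () -> log.add("kill hook"));
    }

    public static void main(String[] args) {
        MiniStateMachine<Phase, Evt> machine = build(new ArrayList<>());
        machine.doTransition(Evt.START);
        System.out.println(machine.doTransition(Evt.TIMEOUT));
    }
}
```

In this sketch an event that has no arc from the current state raises IllegalStateException, which plays the same role as the invalid-transition exception caught in the JobStateMachine example later in this article.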

Using the state machine

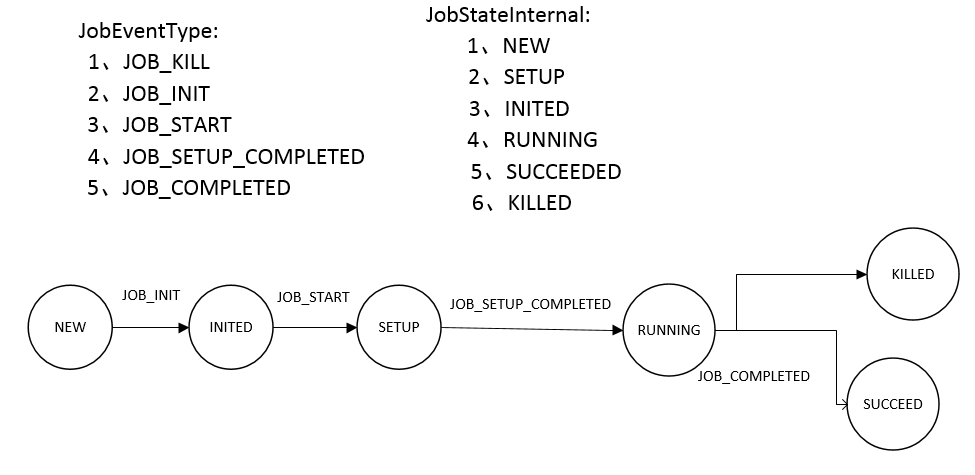

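The following example shows how to use the state machine. It creates a job state machine, JobStateMachine, which tracks the state changes inside a job. The state machine is also an event handler: when it receives an event, it triggers the corresponding state transition.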

Defining the Job event

package com.statemachine;

import org.apache.hadoop.yarn.event.AbstractEvent;

public class JobEvent extends AbstractEvent<JobEventType> {

private String jobID ;

public JobEvent(String jobID , JobEventType type){

super(type) ;

this.jobID = jobID ;

}

public String getJobID(){

return this.jobID ;

}

}

The Job event types are defined as follows:

package com.statemachine;

public enum JobEventType {

JOB_KILL ,

JOB_INIT ,

JOB_START ,

JOB_SETUP_COMPLETED ,

JOB_COMPLETED ,

}

Defining the central asynchronous dispatcher

package com.statemachine;

import org.apache.hadoop.conf.Configuration;

import org.apache.hadoop.service.CompositeService;

import org.apache.hadoop.service.Service;

import org.apache.hadoop.yarn.event.AsyncDispatcher;

import org.apache.hadoop.yarn.event.Dispatcher;

import org.apache.hadoop.yarn.event.EventHandler;

@SuppressWarnings("unchecked")

public class SimpleMRAppMaster extends CompositeService {

private Dispatcher dispatcher ; // central asynchronous dispatcher

private String jobID ;

public SimpleMRAppMaster(String name , String jobID){

super(name) ;

this.jobID = jobID ;

}

public void serviceInit(final Configuration conf) throws Exception{

dispatcher = new AsyncDispatcher() ; // create the central asynchronous dispatcher

// register the state machine as the handler for JobEventType events

dispatcher.register(JobEventType.class, new JobStateMachine(jobID,dispatcher.getEventHandler())) ;

addService((Service)dispatcher) ;

super.serviceInit(conf) ;

}

public Dispatcher getDispatcher(){

return dispatcher ;

}

}

Defining the state machine and its event handler

package com.statemachine;

import java.util.EnumSet;

import java.util.concurrent.locks.Lock;

import java.util.concurrent.locks.ReadWriteLock;

import java.util.concurrent.locks.ReentrantReadWriteLock;

import org.apache.hadoop.yarn.event.EventHandler;

import org.apache.hadoop.yarn.state.InvalidStateTransitonException;

import org.apache.hadoop.yarn.state.MultipleArcTransition;

import org.apache.hadoop.yarn.state.SingleArcTransition;

import org.apache.hadoop.yarn.state.StateMachine;

import org.apache.hadoop.yarn.state.StateMachineFactory;

@SuppressWarnings({"rawtypes","unchecked"})

public class JobStateMachine implements EventHandler<JobEvent> {

private final String jobID ;

private EventHandler eventHandler ;

private final Lock writeLock ;

private final Lock readLock ;

private final StateMachine<JobStateInternal,JobEventType,JobEvent> stateMachine ;

public JobStateMachine(String jobID, EventHandler eventHandler){

this.jobID = jobID ;

ReadWriteLock readWriteLock = new ReentrantReadWriteLock() ;

this.readLock = readWriteLock.readLock() ;

this.writeLock = readWriteLock.writeLock() ;

this.eventHandler = eventHandler ;

stateMachine = stateMachineFactory.make(this) ;

}

public String getJobID(){

return this.jobID ;

}

// define the state machine

protected static final StateMachineFactory<JobStateMachine,JobStateInternal,JobEventType,JobEvent>

stateMachineFactory = new StateMachineFactory<JobStateMachine,JobStateInternal,JobEventType,JobEvent>(JobStateInternal.NEW)

.addTransition(JobStateInternal.NEW, JobStateInternal.INITED, JobEventType.JOB_INIT,new InitTransition())

.addTransition(JobStateInternal.INITED, JobStateInternal.SETUP, JobEventType.JOB_START,new StartTransition())

.addTransition(JobStateInternal.SETUP, JobStateInternal.RUNNING, JobEventType.JOB_SETUP_COMPLETED,new SetupCompletedTransition())

.addTransition(JobStateInternal.RUNNING, EnumSet.of(JobStateInternal.KILLED, JobStateInternal.SUCCEEDED), JobEventType.JOB_COMPLETED,new JobTasksCompletedTransition())

.installTopology() ;

protected StateMachine<JobStateInternal,JobEventType,JobEvent> getStateMachine(){

return this.stateMachine ;

}

public static class InitTransition implements SingleArcTransition<JobStateMachine,JobEvent>{

@Override

public void transition(JobStateMachine job, JobEvent event) {

System.out.println("Receiving event " + event);

job.eventHandler.handle(new JobEvent(job.getJobID(),JobEventType.JOB_START)) ;

}

}

public static class StartTransition implements SingleArcTransition<JobStateMachine,JobEvent>{

@Override

public void transition(JobStateMachine job, JobEvent event) {

System.out.println("Receiving event " + event);

job.eventHandler.handle(new JobEvent(job.getJobID(),JobEventType.JOB_SETUP_COMPLETED)) ;

}

}

public static class SetupCompletedTransition implements SingleArcTransition<JobStateMachine,JobEvent>{

@Override

public void transition(JobStateMachine job, JobEvent event) {

System.out.println("Receiving event " + event);

job.eventHandler.handle(new JobEvent(job.getJobID(),JobEventType.JOB_COMPLETED)) ;

}

}

public static class JobTasksCompletedTransition implements MultipleArcTransition<JobStateMachine, JobEvent, JobStateInternal>{

@Override

public JobStateInternal transition(JobStateMachine job, JobEvent event) {

System.out.println("Receiving event " + event);

return JobStateInternal.SUCCEEDED ;

}

}

@Override

public void handle(JobEvent event) {

writeLock.lock() ; // acquire the lock before entering try, so finally only unlocks a held lock

try{

JobStateInternal oldState = getInternalState() ;

try {

getStateMachine().doTransition(event.getType(), event) ;

} catch (InvalidStateTransitonException e) {

System.out.println("Can't handle this event at current state") ;

}

if(oldState != getInternalState()){

System.out.println("Job Transitioned from " + oldState + " to " + getInternalState()) ;

}

}finally{

writeLock.unlock() ;

}

}

public JobStateInternal getInternalState(){

readLock.lock() ;

try{

return getStateMachine().getCurrentState() ;

}finally{

readLock.unlock() ;

}

}

public enum JobStateInternal{ // internal job states

NEW ,

SETUP ,

INITED ,

RUNNING ,

SUCCEEDED ,

KILLED

}

}
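One detail of the class above is worth isolating: handle() holds the write lock while it calls getInternalState(), which takes the read lock. This works because ReentrantReadWriteLock allows the thread that holds the write lock to acquire the read lock as well. The minimal sketch below shows the same pattern without any Hadoop dependency; the class LockReentrancyDemo and its plain String state are hypothetical stand-ins for JobStateMachine.

```java
import java.util.concurrent.locks.ReentrantReadWriteLock;

// Hypothetical stand-in for JobStateMachine's locking; not part of the original example.
class LockReentrancyDemo {
    private final ReentrantReadWriteLock lock = new ReentrantReadWriteLock();
    private String state = "NEW";

    // Readers take the read lock, as getInternalState() does.
    String getState() {
        lock.readLock().lock();
        try {
            return state;
        } finally {
            lock.readLock().unlock();
        }
    }

    // The writer takes the write lock, as handle() does, and reads the state
    // through getState() while still holding it.
    String transitionTo(String next) {
        lock.writeLock().lock();
        try {
            String old = getState();   // read lock acquired under the write lock
            state = next;
            return old + " -> " + getState();
        } finally {
            lock.writeLock().unlock();
        }
    }

    public static void main(String[] args) {
        System.out.println(new LockReentrancyDemo().transitionTo("INITED"));
    }
}
```

The reverse direction does not hold: a thread that holds only the read lock cannot upgrade to the write lock, so the write lock has to be taken first, as handle() does.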

Test program

package com.statemachine;

import org.apache.hadoop.conf.Configuration;

import org.apache.hadoop.yarn.conf.YarnConfiguration;

public class SimpleMRAppMasterTest {

public static void main(String args[]) throws Exception{

String jobID = "job_20131215_12" ;

SimpleMRAppMaster appMaster = new SimpleMRAppMaster("Simple MRAppMaster" , jobID ) ;

YarnConfiguration conf = new YarnConfiguration(new Configuration()) ;

// init() invokes serviceInit() internally, so serviceInit() is not called directly here

appMaster.init(conf) ;

appMaster.start() ;

appMaster.getDispatcher().getEventHandler().handle(new JobEvent(jobID,JobEventType.JOB_INIT)) ;

}

}

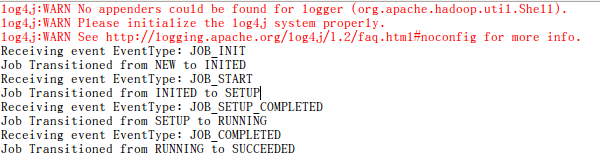

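The program output is as follows

Explanation of the program

The state machine's transitions are shown in the figure below

First, define the state machine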

// define the state machine

protected static final StateMachineFactory<JobStateMachine,JobStateInternal,JobEventType,JobEvent>

stateMachineFactory = new StateMachineFactory<JobStateMachine,JobStateInternal,JobEventType,JobEvent>(JobStateInternal.NEW)

.addTransition(JobStateInternal.NEW, JobStateInternal.INITED, JobEventType.JOB_INIT,new InitTransition())

.addTransition(JobStateInternal.INITED, JobStateInternal.SETUP, JobEventType.JOB_START,new StartTransition())

.addTransition(JobStateInternal.SETUP, JobStateInternal.RUNNING, JobEventType.JOB_SETUP_COMPLETED,new SetupCompletedTransition())

.addTransition(JobStateInternal.RUNNING, EnumSet.of(JobStateInternal.KILLED, JobStateInternal.SUCCEEDED), JobEventType.JOB_COMPLETED,new JobTasksCompletedTransition())

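.installTopology() ;

Next, register the state machine as an event handler. When it receives an event (of type JobEventType in this example), it triggers the corresponding state transition.

// register the state machine handler

dispatcher.register(JobEventType.class, new JobStateMachine(jobID,dispatcher.getEventHandler())) ;

1) First, a JobEventType.JOB_INIT event is put into the central asynchronous dispatcher.

appMaster.getDispatcher().getEventHandler().handle(new JobEvent(jobID,JobEventType.JOB_INIT)) ;

2) The central asynchronous dispatcher delivers the JobEventType.JOB_INIT event to the state machine handler JobStateMachine by calling its handle method, which in turn calls doTransition.

getStateMachine().doTransition(event.getType(), event) ;

3) doTransition calls back the hook associated with the JOB_INIT event, InitTransition.transition, which puts the next event, JobEventType.JOB_START, into the central asynchronous dispatcher. When the hook returns, the state machine moves to the next state, INITED.

job.eventHandler.handle(new JobEvent(job.getJobID(),JobEventType.JOB_START)) ;

4) Steps 2 and 3 repeat for each subsequent event until the state machine reaches its final state.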
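The event chain in the example can be imitated without Hadoop. The sketch below is a simplified stand-in, not AsyncDispatcher itself: EventChainDemo, its queue, and the trace list are hypothetical names, a single thread plays the role of the dispatcher, and checking whether an event is valid in the current state is left out. Each hook only enqueues the next event; the state changes after the hook returns, and the queued event is handled on the next loop iteration.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.concurrent.BlockingQueue;
import java.util.concurrent.LinkedBlockingQueue;

// Simplified stand-in for the dispatcher and state machine pair; not Hadoop code.
class EventChainDemo {
    enum State { NEW, INITED, SETUP, RUNNING, SUCCEEDED }
    enum Event { JOB_INIT, JOB_START, JOB_SETUP_COMPLETED, JOB_COMPLETED }

    static List<String> run() {
        BlockingQueue<Event> queue = new LinkedBlockingQueue<>();
        List<String> trace = new ArrayList<>();
        State[] state = { State.NEW };   // written only by the dispatcher thread

        Thread dispatcher = new Thread(() -> {
            try {
                while (state[0] != State.SUCCEEDED) {
                    Event event = queue.take();   // block until an event arrives
                    State old = state[0];
                    switch (event) {
                        case JOB_INIT:
                            queue.add(Event.JOB_START);             // hook enqueues the next event
                            state[0] = State.INITED;                // state changes after the hook
                            break;
                        case JOB_START:
                            queue.add(Event.JOB_SETUP_COMPLETED);
                            state[0] = State.SETUP;
                            break;
                        case JOB_SETUP_COMPLETED:
                            queue.add(Event.JOB_COMPLETED);
                            state[0] = State.RUNNING;
                            break;
                        case JOB_COMPLETED:
                            state[0] = State.SUCCEEDED;             // final state, loop ends
                            break;
                    }
                    trace.add(old + " -> " + state[0]);
                }
            } catch (InterruptedException e) {
                Thread.currentThread().interrupt();
            }
        });

        dispatcher.start();
        queue.add(Event.JOB_INIT);   // the only event submitted from outside
        try {
            dispatcher.join();       // wait until the final state is reached
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
            throw new IllegalStateException(e);
        }
        return trace;
    }

    public static void main(String[] args) {
        run().forEach(System.out::println);
    }
}
```

Only JOB_INIT is submitted from outside; every later event is produced by the hook of the transition before it, which mirrors how the test program drives JobStateMachine with a single call.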
