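Over the last two days a project required me to turn a deep learning model into production code: classification had to run inside an MFC application. So I spent those two days digging into the options again.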

I. Calling the model through OpenCV's dnn module

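I covered this part in detail in my previous post, more than a year ago. At the time OpenCV seemed to be getting more and more capable, but in practice it has its limits for this kind of development, which I come back to below. Since the 3.x series OpenCV has shipped a dnn module that supports some deep learning frameworks. As of OpenCV 4.0, released just a week ago, models from the following five sources are officially supported: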

- Caffe

- TensorFlow

- Torch

- Darknet

- Models in ONNX format

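OpenCV 4.0 also brings many new features. One of them is that the new version requires C++11 support, which probably means that the classic VS2010 and older Visual Studio versions are cut off from new OpenCV releases for good. Of course, someone may well publish a modified OpenCV that builds with more compilers in a few days.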

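Back to the topic. First you need to download and build the full OpenCV library; see my previous post for the steps. For calling a Caffe model, follow the official sample: https://docs.opencv.org/trunk/d5/de7/tutorial_dnn_googlenet.html Most blog posts on the subject (mine included) are adapted from that sample. There are plenty of tutorials for getting it to run, and it is easy to do.

Note, however, that OpenCV's support for Caffe models is limited. It only implements the common layers (convolution, pooling, softmax and so on) plus the special layers of a few well-known architectures (the SSD object detection network, for example). In other words, a model like mine, which contains a new layer that I defined for my own needs and compiled into Caffe, makes OpenCV report an error when it loads the model, saying that it cannot create my custom layer. Recent OpenCV versions do support many layer types; see the official list: https://github.com/opencv/opencv/wiki/Deep-Learning-in-OpenCV

In the OpenCV source, the layer implementations live mostly under \modules\dnn\src\layers. The design follows the way Caffe adds layers and uses the factory pattern. I tried to modify the OpenCV source to support my own layer, but in the end I gave up: the implementation details differ from Caffe's in places and it was beyond what I could manage. I could not find a single piece of related information online either, since most posts stop at calling simple models. I hope someone more capable looks into this. So the first approach does not work for this project for now, and we move straight on to the next one.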
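A quick way to find out in advance whether a model will hit this limitation is to list the layer types in its prototxt and compare them with what the dnn module supports. The sketch below does this with the standard library only. The function names `LayerTypes` and `UnsupportedTypes` are made up for this example, the `supported` set you pass in is your own responsibility (take it from the wiki page above, not from this sketch), and the regular expression assumes the `type: "Name"` syntax of a deploy prototxt. On a training prototxt it would also pick up filler types such as "xavier".

```cpp
#include <regex>
#include <set>
#include <string>

// Collect every distinct value of type: "Xxx" found in the prototxt text.
// Assumption: a deploy prototxt, where "type" only appears on layers.
std::set<std::string> LayerTypes(const std::string& prototxt) {
  static const std::regex type_re("type\\s*:\\s*\"([A-Za-z0-9_]+)\"");
  std::set<std::string> types;
  for (std::sregex_iterator it(prototxt.begin(), prototxt.end(), type_re), end;
       it != end; ++it) {
    types.insert((*it)[1].str());
  }
  return types;
}

// Return the layer types the model uses that are not in the supported set.
std::set<std::string> UnsupportedTypes(const std::set<std::string>& used,
                                       const std::set<std::string>& supported) {
  std::set<std::string> missing;
  for (const std::string& type : used) {
    if (supported.count(type) == 0) missing.insert(type);
  }
  return missing;
}
```

For a prototxt that contains a custom layer such as AttentionCrop, `UnsupportedTypes(LayerTypes(text), supported)` returns a set containing that name, which tells you before writing any OpenCV code that this route will fail.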

II. Calling the model through Caffe's own C++ interface

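I assume you have already built Caffe and can train your own models. You will hit plenty of nasty pitfalls when building Caffe; rest assured that everyone else hits them too. That is also why I strongly suggest that, with 2018 nearly over and 2019 around the corner, you stop using Caffe. This approach requires building Caffe yourself (I recommend building both Release and Debug, which makes debugging later easier), so it is clearly less convenient than OpenCV. There is no way around it, though, so on to the details: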

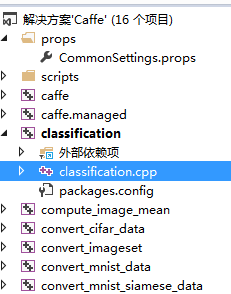

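Inside the Caffe project there is a classification.cpp, in which Caffe has already written the classification test code for you. You only need to change the paths and a few other things to run it. Many blog posts explain how, so almost everyone gets this far without trouble. What I want to focus on is pulling this .cpp out into a separate project of its own. Most of the pitfalls came from the fact that my model contains a layer I created myself, so there was nothing for it but to work through them one by one. First, the test code I started from: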

// WheatTest.cpp : defines the entry point of the console application.

#include "stdafx.h"
#include "Head.h"  // instantiates and registers the layers the model uses

#include <caffe/caffe.hpp>

#include <opencv2/core/core.hpp>
#include <opencv2/highgui/highgui.hpp>
#include <opencv2/imgproc/imgproc.hpp>

#include <algorithm>
#include <cstdlib>   // std::system
#include <fstream>   // std::ifstream
#include <iomanip>   // std::setprecision
#include <iostream>  // std::cout
#include <memory>
#include <string>
#include <utility>
#include <vector>

using namespace caffe;  // NOLINT(build/namespaces)
using std::string;

/* Pair (label, confidence) representing a prediction. */
typedef std::pair<string, float> Prediction;

class Classifier {
 public:
  Classifier(const string& model_file,
             const string& trained_file,
             const string& mean_file,
             const string& label_file);

  std::vector<Prediction> Classify(const cv::Mat& img, int N = 6);

 private:
  void SetMean(const string& mean_file);

  std::vector<float> Predict(const cv::Mat& img);

  void WrapInputLayer(std::vector<cv::Mat>* input_channels);

  void Preprocess(const cv::Mat& img,
                  std::vector<cv::Mat>* input_channels);

 private:
  shared_ptr<Net<float> > net_;
  cv::Size input_geometry_;
  int num_channels_;
  cv::Mat mean_;
  std::vector<string> labels_;
};

Classifier::Classifier(const string& model_file,
                       const string& trained_file,
                       const string& mean_file,
                       const string& label_file) {
  Caffe::set_mode(Caffe::CPU);

  /* Load the network. */
  net_.reset(new Net<float>(model_file, TEST));
  net_->CopyTrainedLayersFrom(trained_file);

  CHECK_EQ(net_->num_inputs(), 1) << "Network should have exactly one input.";
  CHECK_EQ(net_->num_outputs(), 1) << "Network should have exactly one output.";

  Blob<float>* input_layer = net_->input_blobs()[0];
  num_channels_ = input_layer->channels();
  CHECK(num_channels_ == 3 || num_channels_ == 1)
      << "Input layer should have 1 or 3 channels.";
  input_geometry_ = cv::Size(input_layer->width(), input_layer->height());

  /* Load the binaryproto mean file. */
  SetMean(mean_file);

  /* Load labels, one per line. */
  std::ifstream labels(label_file.c_str());
  CHECK(labels) << "Unable to open labels file " << label_file;
  string line;
  while (std::getline(labels, line))
    labels_.push_back(string(line));

  Blob<float>* output_layer = net_->output_blobs()[0];
  CHECK_EQ(labels_.size(), output_layer->channels())
      << "Number of labels is different from the output layer dimension.";
}

/* Order (score, index) pairs by descending score. */
static bool PairCompare(const std::pair<float, int>& lhs,
                        const std::pair<float, int>& rhs) {
  return lhs.first > rhs.first;
}

/* Return the indices of the top N values of vector v. */
static std::vector<int> Argmax(const std::vector<float>& v, int N) {
  std::vector<std::pair<float, int> > pairs;
  for (size_t i = 0; i < v.size(); ++i)
    pairs.push_back(std::make_pair(v[i], static_cast<int>(i)));
  std::partial_sort(pairs.begin(), pairs.begin() + N, pairs.end(), PairCompare);

  std::vector<int> result;
  for (int i = 0; i < N; ++i)
    result.push_back(pairs[i].second);
  return result;
}

/* Return the top N predictions. */
std::vector<Prediction> Classifier::Classify(const cv::Mat& img, int N) {
  std::vector<float> output = Predict(img);

  /* Never ask for more predictions than there are labels. */
  N = std::min<int>(labels_.size(), N);
  std::vector<int> maxN = Argmax(output, N);
  std::vector<Prediction> predictions;
  for (int i = 0; i < N; ++i) {
    int idx = maxN[i];
    predictions.push_back(std::make_pair(labels_[idx], output[idx]));
  }

  return predictions;
}

/* Load the mean file in binaryproto format. */
void Classifier::SetMean(const string& mean_file) {
  BlobProto blob_proto;
  ReadProtoFromBinaryFileOrDie(mean_file.c_str(), &blob_proto);

  /* Convert from BlobProto to Blob<float> */
  Blob<float> mean_blob;
  mean_blob.FromProto(blob_proto);
  CHECK_EQ(mean_blob.channels(), num_channels_)
      << "Number of channels of mean file doesn't match input layer.";

  /* The format of the mean file is planar 32-bit float BGR or grayscale. */
  std::vector<cv::Mat> channels;
  float* data = mean_blob.mutable_cpu_data();
  for (int i = 0; i < num_channels_; ++i) {
    /* Extract an individual channel. */
    cv::Mat channel(mean_blob.height(), mean_blob.width(), CV_32FC1, data);
    channels.push_back(channel);
    data += mean_blob.height() * mean_blob.width();
  }

  /* Merge the separate channels into a single image. */
  cv::Mat mean;
  cv::merge(channels, mean);

  /* Compute the global mean pixel value and create a mean image
   * filled with this value. */
  cv::Scalar channel_mean = cv::mean(mean);
  mean_ = cv::Mat(input_geometry_, mean.type(), channel_mean);
}

std::vector<float> Classifier::Predict(const cv::Mat& img) {
  Blob<float>* input_layer = net_->input_blobs()[0];
  input_layer->Reshape(1, num_channels_,
                       input_geometry_.height, input_geometry_.width);
  /* Forward dimension change to all layers. */
  net_->Reshape();

  std::vector<cv::Mat> input_channels;
  WrapInputLayer(&input_channels);

  Preprocess(img, &input_channels);

  net_->Forward();

  /* Copy the output layer to a std::vector */
  Blob<float>* output_layer = net_->output_blobs()[0];
  const float* begin = output_layer->cpu_data();
  const float* end = begin + output_layer->channels();
  return std::vector<float>(begin, end);
}

/* Wrap the input layer of the network in separate cv::Mat objects
 * (one per channel). This way we save one memcpy operation and we
 * don't need to rely on cudaMemcpy2D. The last preprocessing
 * operation will write the separate channels directly to the input
 * layer. */
void Classifier::WrapInputLayer(std::vector<cv::Mat>* input_channels) {
  Blob<float>* input_layer = net_->input_blobs()[0];

  int width = input_layer->width();
  int height = input_layer->height();
  float* input_data = input_layer->mutable_cpu_data();
  for (int i = 0; i < input_layer->channels(); ++i) {
    cv::Mat channel(height, width, CV_32FC1, input_data);
    input_channels->push_back(channel);
    input_data += width * height;
  }
}

void Classifier::Preprocess(const cv::Mat& img,
                            std::vector<cv::Mat>* input_channels) {
  /* Convert the input image to the input image format of the network. */
  cv::Mat sample;
  if (img.channels() == 3 && num_channels_ == 1)
    cv::cvtColor(img, sample, cv::COLOR_BGR2GRAY);
  else if (img.channels() == 4 && num_channels_ == 1)
    cv::cvtColor(img, sample, cv::COLOR_BGRA2GRAY);
  else if (img.channels() == 4 && num_channels_ == 3)
    cv::cvtColor(img, sample, cv::COLOR_BGRA2BGR);
  else if (img.channels() == 1 && num_channels_ == 3)
    cv::cvtColor(img, sample, cv::COLOR_GRAY2BGR);
  else
    sample = img;

  /* Resize to the network input size if necessary. */
  cv::Mat sample_resized;
  if (sample.size() != input_geometry_)
    cv::resize(sample, sample_resized, input_geometry_);
  else
    sample_resized = sample;

  /* Convert to 32-bit float. */
  cv::Mat sample_float;
  if (num_channels_ == 3)
    sample_resized.convertTo(sample_float, CV_32FC3);
  else
    sample_resized.convertTo(sample_float, CV_32FC1);

  /* Subtract the mean image. */
  cv::Mat sample_normalized;
  cv::subtract(sample_float, mean_, sample_normalized);

  /* This operation will write the separate BGR planes directly to the
   * input layer of the network because it is wrapped by the cv::Mat
   * objects in input_channels. */
  cv::split(sample_normalized, *input_channels);

  CHECK(reinterpret_cast<float*>(input_channels->at(0).data)
        == net_->input_blobs()[0]->cpu_data())
      << "Input channels are not wrapping the input layer of the network.";
}

int main(int argc, char** argv)
{
  ::google::InitGoogleLogging(argv[0]);

  /* Paths to the model files; adjust them to your own machine. */
  string model_file = "C:\\Users\\lill\\Desktop\\WheatTest\\model\\mynet.prototxt";
  string trained_file = "C:\\Users\\lill\\Desktop\\WheatTest\\model\\mynet.caffemodel";
  string mean_file = "C:\\Users\\lill\\Desktop\\WheatTest\\model\\trainimgmean.binaryproto";
  string label_file = "C:\\Users\\lill\\Desktop\\WheatTest\\model\\label.txt";
  Classifier classifier(model_file, trained_file, mean_file, label_file);

  string file = "C:\\Users\\lill\\Desktop\\WheatTest\\model\\1.bmp";

  std::cout << "---------- Prediction for " << file << " ----------" << std::endl;

  /* -1 loads the image unchanged (keeps its original channel count). */
  cv::Mat img = cv::imread(file, -1);
  CHECK(!img.empty()) << "Unable to decode image " << file;
  std::vector<Prediction> predictions = classifier.Classify(img);

  /* Print the top N predictions. */
  for (size_t i = 0; i < predictions.size(); ++i)
  {
    Prediction p = predictions[i];
    std::cout << std::fixed << std::setprecision(4) << p.second << " - \"" << p.first << "\"" << std::endl;
  }

  std::system("pause");
  return 0;
}

The code itself needs only a few changes. Because it depends on the compiled Caffe environment, you have to add include directories, library directories and .lib files to the project. For the exact settings see https://blog.csdn.net/hhh0209/article/details/79830988 Note that the Debug and Release settings differ in places, and so do the CPU and GPU settings.

Release mode:

Include directories:

D:\caffe-master\include

D:\caffe-master\include\caffe

D:\caffe-master\include\caffe\proto

D:\NugetPackages\boost.1.59.0.0\lib\native\include

D:\NugetPackages\gflags.2.1.2.1\build\native\include

D:\NugetPackages\glog.0.3.3.0\build\native\include

D:\NugetPackages\protobuf-v120.2.6.1\build\native\include

D:\NugetPackages\OpenBLAS.0.2.14.1\lib\native\include

D:\NugetPackages\OpenCV.2.4.10\build\native\include

D:\NugetPackages\LevelDB-vc120.1.2.0.0\build\native\include

D:\NugetPackages\lmdb-v120-clean.0.9.14.0\lib\native\include

C:\Program Files\NVIDIA GPU Computing Toolkit\CUDA\v8.0\include (GPU mode only)

Library directories:

D:\caffe-master\Build\x64\Release

D:\NugetPackages\boost_date_time-vc120.1.59.0.0\lib\native\address-model-64\lib

D:\NugetPackages\boost_filesystem-vc120.1.59.0.0\lib\native\address-model-64\lib

D:\NugetPackages\boost_thread-vc120.1.59.0.0\lib\native\address-model-64\lib

D:\NugetPackages\boost_system-vc120.1.59.0.0\lib\native\address-model-64\lib

D:\NugetPackages\boost_chrono-vc120.1.59.0.0\lib\native\address-model-64\lib

D:\NugetPackages\glog.0.3.3.0\build\native\lib\x64\v120\Release\dynamic

D:\NugetPackages\protobuf-v120.2.6.1\build\native\lib\x64\v120\Release

D:\NugetPackages\OpenBLAS.0.2.14.1\lib\native\lib\x64

D:\NugetPackages\hdf5-v120-complete.1.8.15.2\lib\native\lib\x64

D:\NugetPackages\OpenCV.2.4.10\build\native\lib\x64\v120\Release

D:\NugetPackages\gflags.2.1.2.1\build\native\x64\v120\dynamic\Lib

D:\NugetPackages\LevelDB-vc120.1.2.0.0\build\native\lib\x64\v120\Release

D:\NugetPackages\lmdb-v120-clean.0.9.14.0\lib\native\lib\x64

C:\Program Files\NVIDIA GPU Computing Toolkit\CUDA\v8.0\lib\x64 (GPU mode only)

Additional dependencies (this part is important; the list in the linked tutorial is wrong here):

caffe.lib

compute_image_mean.lib

convert_imageset.lib

convert_mnist_data.lib

libcaffe.lib

opencv_highgui2410.lib

opencv_imgproc2410.lib

opencv_objdetect2410.lib

opencv_core2410.lib

opencv_ml2410.lib

libboost_date_time-vc120-mt-s-1_59.lib

libboost_filesystem-vc120-mt-s-1_59.lib

libglog.lib

hdf5.lib

hdf5_cpp.lib

hdf5_f90cstub.lib

hdf5_fortran.lib

hdf5_hl.lib

hdf5_hl_cpp.lib

hdf5_hl_f90cstub.lib

hdf5_hl_fortran.lib

hdf5_tools.lib

szip.lib

zlib.lib

LevelDb.lib

lmdbD.lib

libprotobuf.lib

libopenblas.dll.a

gflags_nothreadsd.lib

gflagsd.lib

// GPU mode only, in addition to the above:

cublas.lib

cuda.lib

cublas_device.lib

cudnn.lib

cudadevrt.lib

cudart.lib

cudart_static.lib

cufft.lib

cufftw.lib

curand.lib

cusolver.lib

cusparse.lib

nppc.lib

nppi.lib

npps.lib

nvblas.lib

nvcuvid.lib

nvrtc.lib

Debug mode:

Include directories:

D:\caffe-master\include

D:\caffe-master\include\caffe

D:\caffe-master\include\caffe\proto

D:\NugetPackages\boost.1.59.0.0\lib\native\include

D:\NugetPackages\gflags.2.1.2.1\build\native\include

D:\NugetPackages\glog.0.3.3.0\build\native\include

D:\NugetPackages\OpenBLAS.0.2.14.1\lib\native\include

D:\NugetPackages\OpenCV.2.4.10\build\native\include

D:\NugetPackages\protobuf-v120.2.6.1\build\native\include

D:\NugetPackages\LevelDB-vc120.1.2.0.0\build\native\include

D:\NugetPackages\lmdb-v120-clean.0.9.14.0\lib\native\include

C:\Program Files\NVIDIA GPU Computing Toolkit\CUDA\v8.0\include (GPU mode only)

Library directories:

D:\caffe-master\Build\x64\Debug

D:\NugetPackages\boost_date_time-vc120.1.59.0.0\lib\native\address-model-64\lib

D:\NugetPackages\boost_filesystem-vc120.1.59.0.0\lib\native\address-model-64\lib

D:\NugetPackages\boost_system-vc120.1.59.0.0\lib\native\address-model-64\lib

D:\NugetPackages\boost_thread-vc120.1.59.0.0\lib\native\address-model-64\lib

D:\NugetPackages\boost_chrono-vc120.1.59.0.0\lib\native\address-model-64\lib

D:\NugetPackages\glog.0.3.3.0\build\native\lib\x64\v120\Debug\dynamic

D:\NugetPackages\protobuf-v120.2.6.1\build\native\lib\x64\v120\Debug

D:\NugetPackages\OpenBLAS.0.2.14.1\lib\native\lib\x64

D:\NugetPackages\hdf5-v120-complete.1.8.15.2\lib\native\lib\x64

D:\NugetPackages\OpenCV.2.4.10\build\native\lib\x64\v120\Debug

D:\NugetPackages\gflags.2.1.2.1\build\native\x64\v120\dynamic\Lib

D:\NugetPackages\LevelDB-vc120.1.2.0.0\build\native\lib\x64\v120\Debug

D:\NugetPackages\lmdb-v120-clean.0.9.14.0\lib\native\lib\x64

C:\Program Files\NVIDIA GPU Computing Toolkit\CUDA\v8.0\lib\x64 (GPU mode only)

Additional dependencies:

caffe.lib

compute_image_mean.lib

convert_imageset.lib

convert_mnist_data.lib

libcaffe.lib

opencv_highgui2410d.lib

opencv_imgproc2410d.lib

opencv_objdetect2410d.lib

opencv_core2410d.lib

opencv_ml2410d.lib

libboost_date_time-vc120-mt-gd-1_59.lib

libboost_filesystem-vc120-mt-gd-1_59.lib

libboost_system-vc120-mt-gd-1_59.lib

libglog.lib

hdf5.lib

hdf5_cpp.lib

hdf5_f90cstub.lib

hdf5_fortran.lib

hdf5_hl.lib

hdf5_hl_cpp.lib

hdf5_hl_f90cstub.lib

hdf5_hl_fortran.lib

hdf5_tools.lib

szip.lib

zlib.lib

LevelDb.lib

lmdbD.lib

libprotobuf.lib

libopenblas.dll.a

gflags_nothreadsd.lib

gflagsd.lib

// GPU mode only, in addition to the above:

cublas.lib

cuda.lib

cublas_device.lib

cudnn.lib

cudadevrt.lib

cudart.lib

cudart_static.lib

cufft.lib

cufftw.lib

curand.lib

cusolver.lib

cusparse.lib

nppc.lib

nppi.lib

npps.lib

nvblas.lib

nvcuvid.lib

nvrtc.lib
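Keeping two dependency lists in sync by hand is error-prone. One alternative on MSVC is to request libraries from the source code with `#pragma comment(lib, ...)` and let the `_DEBUG` macro pick the suffix. The following is only a minimal sketch, assuming the OpenCV 2.4.10 naming from the lists above (`2410` for Release, `2410d` for Debug). The `OPENCV_LIB` macro and the `LinkedOpenCvCoreLib` helper are names made up for this example, and the library directories still have to be set in the project properties. The pragma is MSVC-specific, so it is guarded and other compilers skip it.

```cpp
#include <string>

// OpenCV 2.4.10 import libraries end in "2410.lib" (Release) or
// "2410d.lib" (Debug). Adjacent string literals are concatenated,
// so OPENCV_LIB("core") becomes "opencv_core2410.lib" in Release.
#ifdef _DEBUG
#define OPENCV_LIB(module) "opencv_" module "2410d.lib"
#else
#define OPENCV_LIB(module) "opencv_" module "2410.lib"
#endif

#ifdef _MSC_VER
// Ask the MSVC linker for the library matching the current configuration.
#pragma comment(lib, OPENCV_LIB("core"))
#pragma comment(lib, OPENCV_LIB("imgproc"))
#pragma comment(lib, OPENCV_LIB("highgui"))
#endif

// Hypothetical helper: returns the name chosen above, e.g. for a log line.
inline std::string LinkedOpenCvCoreLib() {
  return OPENCV_LIB("core");
}
```

The same pattern could be applied to the Boost libraries, whose names differ between the two lists as well (`-mt-s-` versus `-mt-gd-`).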

The referenced article and links explain all of this clearly. I debugged in CPU mode with a Release build (note: x64). Below are the main pitfalls I ran into.

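1. After building Caffe, there is a device_alternate.hpp under caffe-master\include\caffe\util. It assumes GPU mode by default, so if, like me, you have no GPU and no CUDA libraries, the includes in it fail to compile. In that case add #define CPU_ONLY 1 at the top of that header to use CPU mode. If you are using a GPU anyway, there is nothing to do.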

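2. When you build and run the program you get all sorts of errors about missing DLLs. Look for the DLL in the NugetPackages folders and copy it into C:\Windows\System32 (copying it next to your .exe works too). You all know the drill: whatever DLL is missing, go and find it. In my case, though, the missing DLLs were not in NugetPackages at all. Usually the message names libgflags.dll or libglog.dll. Since the packages do not contain them, you have to find them online (a friendly warning: be careful with download links on CSDN) or build them yourself as described here: https://blog.csdn.net/yhcharles/article/details/39583351 That procedure works without problems. My slightly adapted version: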

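(1). Download the source archives of both libraries; I used glog-0.3.3 and gflags-2.0.

(2). Unpack both archives and copy them into your solution directory.

(3). Add the projects to the solution. Note: not "add new project" but "add existing project":

in the gflags directory, vsprojects\libgflags;

in the glog directory, vsprojects\libglog.

Both are built as DLLs, so the build also produces libglog.dll and libgflags.dll, which are needed at run time.

(4). Configure the glog project:

In Project -> Properties, go to Common Properties -> References, choose Add New Reference and select libgflags; add src\windows under the gflags directory to the include directories.

Edit config.h in that project to enable HAVE_LIB_GFLAGS (change "#undef HAVE_LIB_GFLAGS" to "#define HAVE_LIB_GFLAGS").

(5). Configure your main project:

As above, add libgflags and libglog as references;

add src\windows under both the gflags and the glog directory to the include directories.

Now build the solution. libgflags.dll and libglog.dll will then appear in the x64 output directory.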

3. Under C/C++ -> Preprocessor -> Preprocessor Definitions, add _CRT_SECURE_NO_WARNINGS. Do not forget this one.

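4. The last and most important point, which most posts online are vague about. My understanding is that the layers used by the model have to be instantiated and registered; otherwise the standalone classification .cpp cannot find the layers of your model. In other words, whatever layers the model uses have to be instantiated and registered in the .cpp. I did this by adding a header, Head.h, and including it from the .cpp. My header looks like this: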

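// Head.h : instantiates and registers the layers used by the model.
// Include it from one .cpp file only; REGISTER_LAYER_CLASS defines static
// objects, so including it twice would register each layer twice.

#include <caffe/common.hpp>
#include <caffe/layer.hpp>
#include <caffe/layer_factory.hpp>
#include <caffe/layers/input_layer.hpp>
#include <caffe/layers/inner_product_layer.hpp>
#include <caffe/layers/dropout_layer.hpp>
#include <caffe/layers/conv_layer.hpp>
#include <caffe/layers/relu_layer.hpp>
#include <caffe/layers/attention_crop_layer.hpp>
#include <caffe/layers/pooling_layer.hpp>
#include <caffe/layers/lrn_layer.hpp>
#include <caffe/layers/softmax_layer.hpp>
#include <caffe/layers/tanh_layer.hpp>
#include <caffe/layers/sigmoid_layer.hpp>
#include <caffe/layers/reshape_layer.hpp>
#include <caffe/layers/concat_layer.hpp>

namespace caffe
{
// Instantiation only: these layers are already registered in their own .cpp.
extern INSTANTIATE_CLASS(InputLayer);
extern INSTANTIATE_CLASS(InnerProductLayer);
extern INSTANTIATE_CLASS(DropoutLayer);

// Instantiation plus registration: these layers are not registered in
// their own .cpp (Caffe registers them through layer_factory.cpp).
extern INSTANTIATE_CLASS(ConvolutionLayer);
REGISTER_LAYER_CLASS(Convolution);
extern INSTANTIATE_CLASS(ReLULayer);
REGISTER_LAYER_CLASS(ReLU);
extern INSTANTIATE_CLASS(PoolingLayer);
REGISTER_LAYER_CLASS(Pooling);
extern INSTANTIATE_CLASS(LRNLayer);
REGISTER_LAYER_CLASS(LRN);
extern INSTANTIATE_CLASS(SoftmaxLayer);
REGISTER_LAYER_CLASS(Softmax);
extern INSTANTIATE_CLASS(TanHLayer);
REGISTER_LAYER_CLASS(TanH);
extern INSTANTIATE_CLASS(SigmoidLayer);
REGISTER_LAYER_CLASS(Sigmoid);

// AttentionCropLayer is my own custom layer. Its registration stays
// commented out here, as does the one for Reshape, which is already
// registered in its own .cpp.
extern INSTANTIATE_CLASS(AttentionCropLayer);
// REGISTER_LAYER_CLASS(AttentionCrop);
extern INSTANTIATE_CLASS(ReshapeLayer);
// REGISTER_LAYER_CLASS(Reshape);
extern INSTANTIATE_CLASS(ConcatLayer);
}

It took me a long time of trial and error to work out which layers need registering and which only need instantiating. Many posts tell you what to do without telling you why, which leads to a pile of bugs when you try to reproduce the setup elsewhere. AttentionCropLayer is the layer I added myself; all the other layers ship with Caffe.

The rule to keep in mind: if the layer's implementation .cpp in the Caffe source already registers it explicitly, for example with REGISTER_LAYER_CLASS(Reshape), then this header only needs the instantiation line. Adding the registration as well makes the program fail with an error saying the layer is already registered. If the implementation .cpp does not register the layer and it is registered through layer_factory.cpp instead, you also need the registration line here; otherwise the program fails with an error saying the layer type cannot be recognized.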
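Both error cases are easier to see in a stripped-down model of a layer registry. The sketch below is not Caffe's code: `Registry`, `Registrar` and the `REGISTER_DEMO_LAYER` macro are made-up names that only mimic the general idea behind `REGISTER_LAYER_CLASS`, namely a static object whose constructor adds a creator function to a global map. Registering a type twice throws, and so does asking for a type that nobody registered. In real Caffe these conditions are reported through glog checks rather than exceptions.

```cpp
#include <functional>
#include <map>
#include <memory>
#include <stdexcept>
#include <string>

struct Layer {
  virtual ~Layer() {}
  virtual std::string type() const = 0;
};

// Global map from layer type name to a function that creates the layer.
class Registry {
 public:
  typedef std::function<std::unique_ptr<Layer>()> Creator;

  static Registry& Get() {
    static Registry instance;  // created on first use
    return instance;
  }

  void Add(const std::string& type, Creator creator) {
    if (creators_.count(type) != 0)
      throw std::runtime_error("layer type already registered: " + type);
    creators_[type] = creator;
  }

  std::unique_ptr<Layer> Create(const std::string& type) const {
    std::map<std::string, Creator>::const_iterator it = creators_.find(type);
    if (it == creators_.end())
      throw std::runtime_error("unknown layer type: " + type);
    return it->second();
  }

 private:
  std::map<std::string, Creator> creators_;
};

// A static Registrar object registers its layer before main() starts.
struct Registrar {
  Registrar(const std::string& type, Registry::Creator creator) {
    Registry::Get().Add(type, creator);
  }
};

#define REGISTER_DEMO_LAYER(Name)                        \
  static std::unique_ptr<Layer> Create##Name() {         \
    return std::unique_ptr<Layer>(new Name##Layer());    \
  }                                                      \
  static Registrar g_registrar_##Name(#Name, Create##Name)

struct ReLULayer : Layer {
  std::string type() const { return "ReLU"; }
};
REGISTER_DEMO_LAYER(ReLU);  // writing this line a second time would throw
```

With this in place, `Registry::Get().Create("ReLU")` returns a layer, while `Create("AttentionCrop")` throws because no registrar for that name ever ran. A plausible explanation for why the standalone project needs Head.h at all is that the registrar objects live inside a static library, and the linker leaves out object files that nothing in the program refers to, so their registrars never run. I have not verified this against the Caffe build, so treat it as an assumption.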

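Finally, my result: this is the classification of a single image. The output is the confidence for each class, and in practice we take the class with the highest confidence as the classification result.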
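Taking the class with the highest confidence is a plain argmax over the output vector. A minimal standalone sketch follows; `BestClass` is a name chosen for this example and is not part of the listing above, which returns the top N predictions instead.

```cpp
#include <algorithm>
#include <cstddef>
#include <iterator>
#include <stdexcept>
#include <vector>

// Return the index of the highest confidence value.
// On ties, std::max_element returns the first maximum.
std::size_t BestClass(const std::vector<float>& scores) {
  if (scores.empty())
    throw std::invalid_argument("scores must not be empty");
  return static_cast<std::size_t>(
      std::distance(scores.begin(),
                    std::max_element(scores.begin(), scores.end())));
}
```

The returned index is then used to look up the class name in the label list, in the same order as the lines of label.txt.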

And finally, finally, I have to complain about 360: it even blocks the exe I built myself. My honest advice is to uninstall it if you can.
