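Goal: starting from the Baidu Baike page for Python, crawl the entries behind its related hyperlinks and write the results to an HTML file.

Background reading: a deeper look at self in Python

The main directory layout of the program is

Main function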

from test import url_manager
from test import html_downloader
from test import html_parser
from test import html_output


class SpiderMain(object):
    def __init__(self):
        self.urls = url_manager.UrlManager()  # URL manager (each component maps to one class)
        self.downloader = html_downloader.HtmlDownloader()  # HTML downloader
        self.parser = html_parser.HtmlParser()  # HTML parser
        self.output = html_output.HtmlOutPuter()  # HTML output

    def craw(self, root_url):  # the crawler's scheduling routine
        count = 1  # index of the URL currently being crawled
        # add the entry URL to the URL manager
        self.urls.add_new_url(root_url)
        while self.urls.has_new_url():  # while there are URLs waiting to be crawled
            try:
                new_url = self.urls.get_new_url()  # take the next pending URL
                print('craw %d :%s' % (count, new_url))
                # start the downloader and keep the page content
                html_cont = self.downloader.downlad(new_url)  # download the page
                new_urls, new_data = self.parser.paser(new_url, html_cont)  # extract new URLs and data
                self.urls.add_new_urls(new_urls)  # add them to the URL manager
                self.output.collect_data(new_data)  # collect the data
                if count == 100:
                    break
                count += 1
            except Exception as error:
                print(error)
        # write out the collected data
        self.output.output_html()


# Entry point. It must come after the SpiderMain definition, and __name__ and
# "__main__" each use two underscores on both sides; a typo here means nothing runs.
if __name__ == "__main__":
    root_url = "http://baike.baidu.com/view/21087.htm"  # entry URL
    obj_spider = SpiderMain()  # create a spider
    obj_spider.craw(root_url)  # call the spider's craw method to start crawling

The sections below follow the order in which the craw method calls each component.
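To see the scheduling loop in isolation, here is a minimal sketch that runs the same take-a-URL, fetch, enqueue-new-links cycle against an in-memory dictionary instead of the network. FAKE_SITE and the crawl function are hypothetical stand-ins for illustration only; they are not part of the original project.

```python
# Hypothetical in-memory "site": each URL maps to the URLs it links to.
FAKE_SITE = {
    "a": ["b", "c"],
    "b": ["a", "d"],
    "c": [],
    "d": ["a"],
}


def crawl(root_url, max_pages=100):
    """Visit pages reachable from root_url, at most max_pages of them."""
    pending = {root_url}  # URLs waiting to be crawled
    visited = set()       # URLs already crawled
    order = []            # crawl order, kept for inspection
    while pending and len(order) < max_pages:
        url = pending.pop()
        visited.add(url)
        order.append(url)
        # "Download and parse": look up the outgoing links of this page.
        for link in FAKE_SITE.get(url, []):
            if link not in visited and link not in pending:
                pending.add(link)
    return order


pages = crawl("a")
print(sorted(pages))
```

Each page is visited once even though the links form cycles, which is the job the URL manager does in the real crawler.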

URL manager

# The URL manager maintains the set of URLs waiting to be crawled
# and the set of URLs that have already been crawled
class UrlManager(object):
    def __init__(self):
        self.new_urls = set()
        self.old_urls = set()

    # add a single URL
    def add_new_url(self, root_url):
        if root_url is None:
            return
        if root_url not in self.new_urls and root_url not in self.old_urls:
            self.new_urls.add(root_url)

    # add a collection of URLs
    def add_new_urls(self, new_urls):
        if new_urls is None or len(new_urls) == 0:
            return
        for url in new_urls:
            self.add_new_url(url)

    # check whether any URLs are still waiting to be crawled
    def has_new_url(self):
        return len(self.new_urls) != 0

    # take one pending URL and mark it as crawled
    def get_new_url(self):
        new_url = self.new_urls.pop()
        self.old_urls.add(new_url)
        return new_url
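One consequence of keeping pending URLs in a set is that set.pop() returns an arbitrary element, so the crawl order is not guaranteed. If first-in, first-out order matters, one possible variant pairs a deque with a "seen" set. This is a sketch under that assumption, not the tutorial's code, and FifoUrlManager is a hypothetical name.

```python
from collections import deque


class FifoUrlManager(object):
    """Hypothetical variant of UrlManager that hands out URLs in insertion order."""

    def __init__(self):
        self.queue = deque()  # pending URLs, oldest first
        self.seen = set()     # every URL ever added, pending or crawled

    def add_new_url(self, url):
        if url is None or url in self.seen:
            return
        self.seen.add(url)
        self.queue.append(url)

    def add_new_urls(self, urls):
        for url in urls or []:
            self.add_new_url(url)

    def has_new_url(self):
        return len(self.queue) != 0

    def get_new_url(self):
        return self.queue.popleft()


manager = FifoUrlManager()
manager.add_new_urls(["/view/1.htm", "/view/2.htm", "/view/1.htm"])
first = manager.get_new_url()
manager.add_new_url(first)  # already seen, so it is not queued again
second = manager.get_new_url()
print(first, second, manager.has_new_url())
```

It exposes the same four methods, so it could be swapped in for UrlManager without changing the scheduler.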

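Downloader

import urllib.request  # a bare "import urllib" does not reliably expose urllib.request


# Downloader: fetches a web page and returns its content as text
class HtmlDownloader(object):
    def downlad(self, new_url):
        if new_url is None:
            return None
        response = urllib.request.urlopen(new_url)
        if response.getcode() != 200:
            return None
        return response.read().decode('utf-8')

Parser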

# -*- coding: utf-8 -*-
from bs4 import BeautifulSoup
import re
import urllib.parse


class HtmlParser(object):
    def _get_new_urls(self, page_url, soup):
        new_urls = set()
        # entry links look like /view/123.htm (note: .htm, not .html)
        links = soup.find_all('a', href=re.compile(r'/view/\d+\.htm'))
        for link in links:
            new_url = link['href']
            # resolve new_url against page_url to get an absolute URL
            new_full_url = urllib.parse.urljoin(page_url, new_url)
            print(new_url)  # /view/10812319.htm
            print(new_full_url)  # http://baike.baidu.com/view/10812319.htm
            new_urls.add(new_full_url)
        return new_urls

    def _get_new_data(self, page_url, soup):
        res_data = {}
        # url
        res_data['url'] = page_url
        # <dd class="lemmaWgt-lemmaTitle-title"> <h1>Python</h1>
        title_node = soup.find('dd', class_="lemmaWgt-lemmaTitle-title")
        # if the 'lemmaWgt-lemmaTitle-title' class is missing, return empty fields
        if title_node is None:
            res_data['title'] = ''
            res_data['summary'] = ''
            return res_data
        else:
            title_node = title_node.find("h1")
            res_data['title'] = title_node.get_text()
        # <div class="lemma-summary">
        summary_node = soup.find('div', class_="lemma-summary")
        if summary_node is None:
            res_data['summary'] = ''
        else:
            res_data['summary'] = summary_node.get_text()
        return res_data

    def paser(self, page_url, html_cont):
        if page_url is None or html_cont is None:
            # return an empty pair so the caller can still unpack two values
            return set(), None
        # html_cont is already a decoded str, so from_encoding is not needed
        soup = BeautifulSoup(html_cont, 'html.parser')
        new_urls = self._get_new_urls(page_url, soup)
        new_data = self._get_new_data(page_url, soup)
        return new_urls, new_data

# Python 3 restructured urllib and urllib2,
# splitting them into submodules such as urllib.request, urllib.response, urllib.parse and urllib.error;
# urljoin is now available as urllib.parse.urljoin
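The two pieces the parser relies on for link discovery, the /view/<digits>.htm pattern and urllib.parse.urljoin, can be tried on their own with only the standard library. The sample href values below are made up for illustration, and the pattern is applied here with a plain re.search rather than through BeautifulSoup.

```python
import re
from urllib.parse import urljoin

page_url = "http://baike.baidu.com/view/21087.htm"
link_pattern = re.compile(r'/view/\d+\.htm')

# Hypothetical href values, as they might appear in <a> tags on the page.
hrefs = ["/view/10812319.htm", "/item/Python", "/view/abc.htm"]

# Keep only hrefs that contain /view/<digits>.htm
matching = [href for href in hrefs if link_pattern.search(href)]

# Resolve each relative href against the page it was found on.
full_urls = [urljoin(page_url, href) for href in matching]
print(full_urls)
```

Because the pattern is searched rather than anchored, an href ending in .html would also match; add a trailing $ to the pattern if only .htm should pass.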

Output

class HtmlOutPuter(object):
    def __init__(self):
        self.datas = []

    def collect_data(self, data):
        if data is None:
            return
        self.datas.append(data)

    def output_html(self):
        # open() falls back to a platform-dependent default encoding,
        # so utf-8 is passed explicitly; the with block closes the file for us
        with open('outputer.html', 'w', encoding='utf-8') as fout:
            fout.write('<html>')
            fout.write('<head><meta http-equiv="content-type" content="text/html;charset=utf-8"></head>')
            fout.write('<body>')
            fout.write('<table>')
            for data in self.datas:
                fout.write('<tr>')
                fout.write('<td>%s</td>' % data['url'])
                fout.write('<td>%s</td>' % data['title'])
                fout.write('<td>%s</td>' % data['summary'])
                fout.write('</tr>')
            fout.write('</table>')
            fout.write('</body>')
            fout.write('</html>')
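The table cells above are written without escaping, so a title or summary containing characters such as < or & could break the generated markup. One possible hardening, sketched here as an assumption rather than as the tutorial's code, is to pass each field through html.escape from the standard library. render_rows is a hypothetical helper name.

```python
import html


def render_rows(datas):
    """Build the <tr> rows for a list of dicts with url, title and summary keys."""
    rows = []
    for data in datas:
        cells = ''.join(
            '<td>%s</td>' % html.escape(data[key])
            for key in ('url', 'title', 'summary')
        )
        rows.append('<tr>%s</tr>' % cells)
    return ''.join(rows)


sample = [{'url': 'http://baike.baidu.com/view/21087.htm',
           'title': 'Python',
           'summary': 'a < b & c'}]
print(render_rows(sample))
```

The returned string could be written between the <table> and </table> tags in output_html in place of the per-cell writes.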

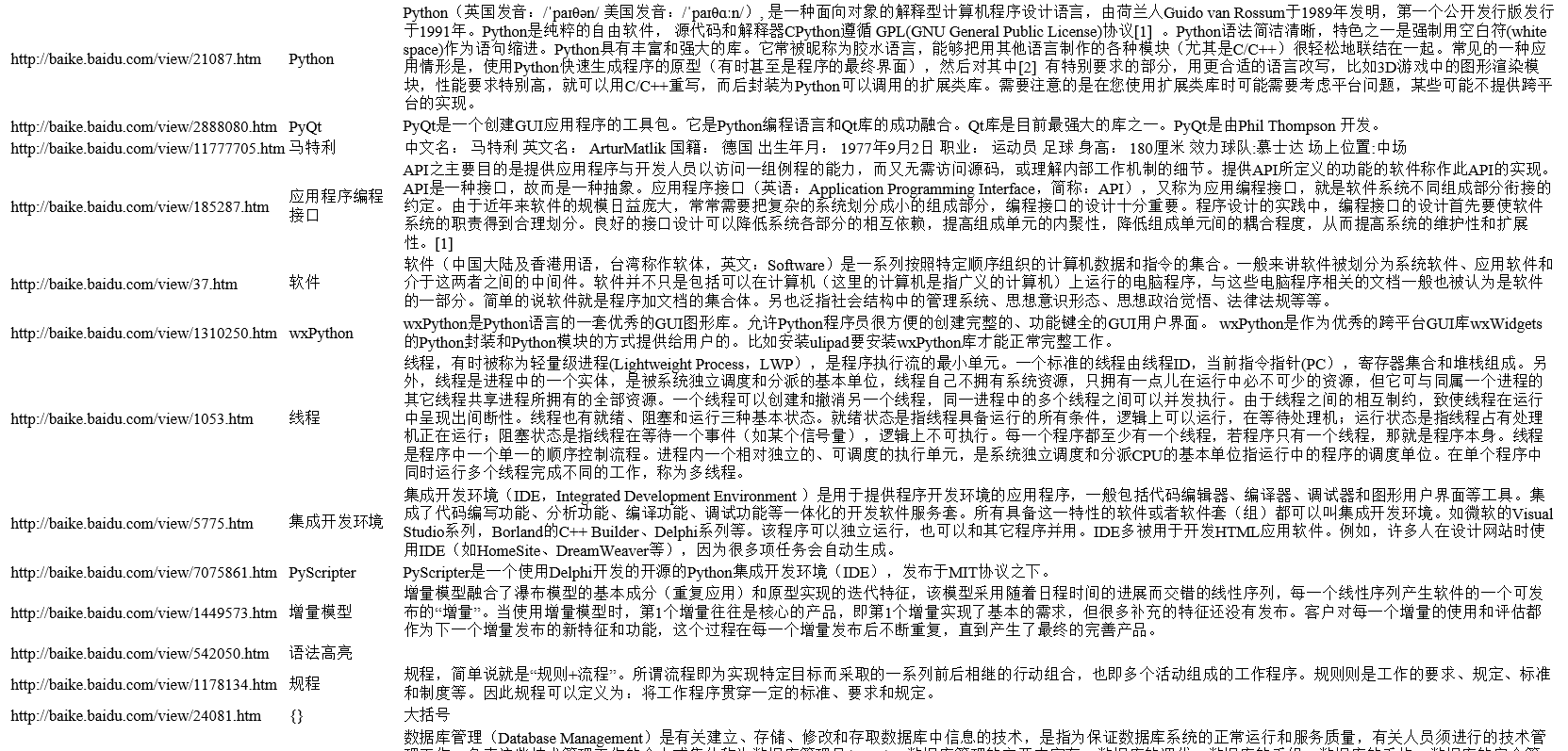

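Result of running spider_main

I typed this code out while following an instructor's course on imooc (慕课网). It is a simple, entry-level crawler, and there is still a long way to go.

Video link attached: the instructor explains it really well!

Code link: click to open the link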
