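HDFS: the Hadoop Distributed File System

I. What is HDFS

HDFS (Hadoop Distributed File System) is Hadoop's distributed file system, designed for write-once, read-many workloads. It is highly fault tolerant (every block is stored as multiple replicas) and suited to big data at GB, TB, or even PB scale, with file counts in the millions or more.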

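II. How HDFS works

1. HDFS components

1) NameNode (nn)

The nn is the HDFS master, the manager. It does four things:
- It manages the HDFS namespace.
- It configures the replica policy, that is, how many replicas each file has.
- It manages the block mapping, that is, which dn stores each block.
- It handles client read and write requests.

2) DataNode (dn)

The dn is the HDFS slave, the worker. It stores the actual data blocks and performs block reads and writes.

3) Client

(1) Splits files into blocks when uploading. The block size comes from the dfs.blocksize setting in the client's configuration; the nn does not choose it.

Block size: HDFS stores files in blocks. The block size is set by the dfs.blocksize parameter, defined in hdfs-default.xml, and defaults to 128 MB in 3.x. This value is an upper bound: each block holds at most 128 MB. A file smaller than that is stored at its actual size rather than padded to a full block. The block size is chosen mainly according to disk transfer rate:
- 128 MB for mechanical disks at about 100 MB/s.
- 256 MB for SSDs at 200 to 300 MB/s.

(2) Talks to the nn to get file location information, and to the dns to read and write data.

(3) Provides the hdfs commands for managing HDFS and for creating, reading, updating, and deleting files in it.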
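The block-size rules above reduce to simple arithmetic. The sketch below shows how many blocks a file occupies, how large each block is, and which block holds a given byte offset.

`BlockMath` and its methods are hypothetical helpers, not Hadoop APIs. `suggestedBlockSizeMb` is only an assumed rule of thumb (pick the power of two nearest the disk transfer rate). It happens to reproduce the 100 MB/s to 128 MB and 200 to 300 MB/s to 256 MB figures quoted above.

```java
import java.util.ArrayList;
import java.util.List;

// Hypothetical helper class: illustrates block arithmetic, not a Hadoop API.
public class BlockMath {

    static final long MB = 1024L * 1024L;

    // Number of blocks a file of fileLen bytes occupies (ceiling division).
    static long blockCount(long fileLen, long blockSize) {
        return (fileLen + blockSize - 1) / blockSize;
    }

    // Size of each block: every block is full except possibly the last one,
    // which only takes up the bytes it actually holds.
    static List<Long> blockSizes(long fileLen, long blockSize) {
        List<Long> sizes = new ArrayList<>();
        for (long offset = 0; offset < fileLen; offset += blockSize) {
            sizes.add(Math.min(blockSize, fileLen - offset));
        }
        return sizes;
    }

    // Index of the block that holds a given byte offset of the file.
    static long blockIndexOf(long offset, long blockSize) {
        return offset / blockSize;
    }

    // Rule of thumb (an assumption, not an HDFS formula): the power of two, in MB,
    // nearest to the disk transfer rate in MB/s.
    static long suggestedBlockSizeMb(long transferRateMbPerSec) {
        long lower = Long.highestOneBit(transferRateMbPerSec);
        long upper = lower << 1;
        return (transferRateMbPerSec - lower <= upper - transferRateMbPerSec) ? lower : upper;
    }

    public static void main(String[] args) {
        long blockSize = 128 * MB;
        System.out.println(blockCount(300 * MB, blockSize));   // 3
        System.out.println(blockSizes(300 * MB, blockSize));   // [134217728, 134217728, 46137344]
        System.out.println(blockSizes(11, blockSize));         // [11]
        System.out.println(blockIndexOf(200 * MB, blockSize)); // 1
        System.out.println(suggestedBlockSizeMb(100));         // 128
    }
}
```

The 11-byte case matches the 11-byte blk_1073741852 file in the dn listing later in this post: a small file does not take up a full 128 MB on disk.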

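4) Secondary NameNode (2nn)

The 2nn assists the nn: it periodically merges the Fsimage and Edits files and pushes the result back to the nn, and in an emergency it can help recover the nn. It is not a hot standby, so it cannot take over when the nn fails.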

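2. HDFS read and write flow

1) HDFS write flow

(1) The HDFS client creates a DistributedFileSystem (a Java object) locally.

(2) The client asks the nn to upload a file, passing the target HDFS path.

(3) The nn validates the request, e.g. the client's permissions and whether the target HDFS directory exists.

(4) The nn tells the client it may upload the file.

(5) The client asks the nn where to upload the first block.

(6) The nn returns metadata: a list of dns for that block, as many as the replication factor requires.

(7) The client creates an FSDataOutputStream and asks the nearest dn in the list to set up a transfer channel. That dn asks the next one, and so on, forming a pipeline.

(8) The dns acknowledge in turn, and the first dn finally acknowledges to the client.

(9) The client starts sending data to the first dn.
- Data is cut into 512-byte chunks, each followed by a 4-byte checksum.
- Chunks are grouped into packets (64 KB by default) that wait in a buffer queue.
- Each dn forwards every packet to the next dn in the pipeline.
- Once a packet has been sent and acknowledged, the client removes it from its buffer queue.
- When a block is complete, the client repeats steps (5) to (9) for the next block.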
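Step (9) can be modelled as a minimal sketch. Data is cut into 512-byte chunks, each with a 4-byte checksum, and chunks are packed into packets. A packet moves from a data queue to an ack queue when sent and is dropped once the pipeline acknowledges it.

This is a simplified teaching model, not the real `DFSOutputStream`:
- The class, queue and method names are hypothetical.
- `maxPacketBytes` stands in for the 64 KB default packet size.
- Packet headers and the wire format are ignored.
- CRC32C is used because it is the default `dfs.checksum.type`.

```java
import java.util.ArrayDeque;
import java.util.ArrayList;
import java.util.Arrays;
import java.util.Deque;
import java.util.List;
import java.util.zip.CRC32C;

// Hypothetical model of the client side of the HDFS write path (Java 9+ for CRC32C).
public class WritePathModel {

    static final int CHUNK_SIZE = 512;   // data bytes per chunk
    static final int CHECKSUM_SIZE = 4;  // one 4-byte checksum per chunk

    // A packet: sequence number plus the chunks and their checksums.
    static final class Packet {
        final long seqno;
        final List<byte[]> chunks = new ArrayList<>();
        final List<Long> checksums = new ArrayList<>();

        Packet(long seqno) { this.seqno = seqno; }

        // Payload size on the wire (chunk data + checksums), header ignored.
        int payloadBytes() {
            int n = 0;
            for (byte[] c : chunks) n += c.length + CHECKSUM_SIZE;
            return n;
        }
    }

    final Deque<Packet> dataQueue = new ArrayDeque<>(); // packets waiting to be sent
    final Deque<Packet> ackQueue = new ArrayDeque<>();  // packets sent, awaiting ack

    // Split data into chunks and pack them into packets of at most maxPacketBytes.
    void write(byte[] data, int maxPacketBytes) {
        long seq = 0;
        Packet current = new Packet(seq++);
        for (int off = 0; off < data.length; off += CHUNK_SIZE) {
            int len = Math.min(CHUNK_SIZE, data.length - off);
            byte[] chunk = Arrays.copyOfRange(data, off, off + len);
            CRC32C crc = new CRC32C();
            crc.update(chunk, 0, len);
            // Start a new packet if this chunk would overflow the current one.
            if (!current.chunks.isEmpty()
                    && current.payloadBytes() + len + CHECKSUM_SIZE > maxPacketBytes) {
                dataQueue.addLast(current);
                current = new Packet(seq++);
            }
            current.chunks.add(chunk);
            current.checksums.add(crc.getValue());
        }
        if (!current.chunks.isEmpty()) dataQueue.addLast(current);
    }

    // "Send" the head packet: it leaves the data queue and waits in the ack queue.
    Packet send() {
        Packet p = dataQueue.pollFirst();
        if (p != null) ackQueue.addLast(p);
        return p;
    }

    // The whole pipeline acknowledged seqno: the packet can be forgotten.
    void ack(long seqno) {
        Packet head = ackQueue.peekFirst();
        if (head == null || head.seqno != seqno) {
            throw new IllegalStateException("unexpected ack " + seqno);
        }
        ackQueue.pollFirst();
    }
}
```

Example: writing 2000 bytes with `maxPacketBytes = 1100` gives four chunks (512, 512, 512, 464 bytes) packed into two packets of two chunks each. After `send()` and `ack(0)`, only packet 1 remains, still in the data queue.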

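2) HDFS read flow

(1) The HDFS client creates a DistributedFileSystem (a Java object) locally.

(2) The client asks the nn to download a file, passing the HDFS path.

(3) The nn validates the request, e.g. the client's permissions and whether the file exists.

(4) The nn returns metadata: the file's blocks and, for each block, the dns holding a replica.

(5) The client creates an FSDataInputStream. For each block, it picks a dn based on [network distance] and [dn load] and sets up a transfer channel. Reading is serial: blocks are read one after another and then concatenated.

(6) The dn transfers the data to the client.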
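Step (5) can be sketched as a sort: order the replicas of a block by network distance to the client, then by load. The distance counts hops to the closest common ancestor in the topology tree. That gives Hadoop's usual values: 0 for the same node, 2 for the same rack, 4 for a different rack.

Assumptions:
- The rack paths such as `/rack1/hadoop102` and the `activeTransfers` load field are hypothetical.
- `ReplicaChooser` is not a Hadoop class.
- Real HDFS applies more detailed ordering rules.

```java
import java.util.ArrayList;
import java.util.Comparator;
import java.util.List;

// Hypothetical replica chooser: nearest first, then least loaded.
public class ReplicaChooser {

    static final class Replica {
        final String location;      // topology path, e.g. /rack1/hadoop103 (assumed layout)
        final int activeTransfers;  // stand-in for dn load

        Replica(String location, int activeTransfers) {
            this.location = location;
            this.activeTransfers = activeTransfers;
        }

        @Override
        public String toString() { return location; }
    }

    // Hops from a to b through their closest common ancestor in the topology tree.
    static int distance(String a, String b) {
        String[] pa = a.substring(1).split("/");
        String[] pb = b.substring(1).split("/");
        int common = 0;
        while (common < pa.length && common < pb.length && pa[common].equals(pb[common])) {
            common++;
        }
        return (pa.length - common) + (pb.length - common);
    }

    // Sort replicas for a reader: by distance, ties broken by load.
    static List<Replica> sortForReader(String client, List<Replica> replicas) {
        List<Replica> sorted = new ArrayList<>(replicas);
        sorted.sort(Comparator.comparingInt((Replica r) -> distance(client, r.location))
                .thenComparingInt(r -> r.activeTransfers));
        return sorted;
    }

    public static void main(String[] args) {
        List<Replica> replicas = List.of(
                new Replica("/rack2/hadoop104", 0),
                new Replica("/rack1/hadoop103", 5),
                new Replica("/rack1/hadoop102", 9));
        // Client runs on hadoop102: the local replica wins despite its load.
        System.out.println(sortForReader("/rack1/hadoop102", replicas));
        // [/rack1/hadoop102, /rack1/hadoop103, /rack2/hadoop104]
    }
}
```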

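3. How the NameNode (nn) and Secondary NameNode (2nn) work

1) How the nn stores metadata

The nn's $HADOOP_HOME/data/dfs/name/current directory holds several edits_* and fsimage_* files plus one edits_inprogress_* file. The storage process works as follows:

(1) The fsimage stores the metadata itself (think of the data in a database table), while the edits files record the changes made to it (think of the SQL statements). The fsimage is loaded into memory.

(2) When a modification request arrives, the nn first appends it to the edits_inprogress file and only then applies it to the metadata in memory. Because every change is logged before it takes effect, the nn can rebuild its in-memory state after a restart by loading the fsimage and replaying the edits on top of it.

(3) Because the metadata keeps changing, the edits files keep growing (more and more SQL statements). Once enough edits have accumulated, they are merged with the old fsimage into a new fsimage (like applying the statements to the table). This merge is done with the help of the 2nn.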
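The log-first, apply-second rule in (2) can be captured in a toy model. Every change goes into the edit log before it touches memory, so replaying the log on top of the fsimage rebuilds exactly the same state.

Simplifications:
- `MiniNameNode`, its string-encoded operations and the flat set of paths are all hypothetical.
- The real nn stores a tree of inodes and binary edit records (see the appendices).

```java
import java.util.ArrayList;
import java.util.List;
import java.util.Set;
import java.util.TreeSet;

// Toy model (hypothetical names): log first, then apply; recovery = fsimage + replayed edits.
public class MiniNameNode {

    final TreeSet<String> namespace;                // in-memory metadata: existing paths
    final List<String> editLog = new ArrayList<>(); // stand-in for edits_inprogress

    MiniNameNode(Set<String> fsimage) {
        namespace = new TreeSet<>(fsimage);         // "load the fsimage into memory"
    }

    void mkdir(String path)  { logThenApply("MKDIR " + path); }
    void delete(String path) { logThenApply("DELETE " + path); }

    private void logThenApply(String op) {
        editLog.add(op);       // 1. record the change in the edit log first
        apply(namespace, op);  // 2. then update the in-memory metadata
    }

    static void apply(Set<String> ns, String op) {
        String[] parts = op.split(" ", 2);
        switch (parts[0]) {
            case "MKDIR":  ns.add(parts[1]); break;
            case "DELETE": ns.remove(parts[1]); break;
            default: throw new IllegalArgumentException("unknown op: " + op);
        }
    }

    // What a restart (or a checkpoint merge) does: start from the fsimage, replay the edits.
    static TreeSet<String> replay(Set<String> fsimage, List<String> edits) {
        TreeSet<String> ns = new TreeSet<>(fsimage);
        for (String op : edits) apply(ns, op);
        return ns;
    }

    public static void main(String[] args) {
        Set<String> fsimage = Set.of("/singers");
        MiniNameNode nn = new MiniNameNode(fsimage);
        nn.mkdir("/API");
        nn.delete("/singers");
        System.out.println(nn.editLog);                                       // [MKDIR /API, DELETE /singers]
        System.out.println(nn.namespace);                                     // [/API]
        System.out.println(replay(fsimage, nn.editLog).equals(nn.namespace)); // true
    }
}
```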

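2) How the 2nn helps the nn maintain metadata

The 2nn's $HADOOP_HOME/data/dfs/namesecondary/current/ directory also holds several edits_* and fsimage_* files. The process works as follows:

(1) When a trigger condition is met, the 2nn asks the nn to perform a checkpoint. By default the trigger is one hour since the last checkpoint, or 1,000,000 uncheckpointed transactions.

(2) At each checkpoint the nn rolls its edit log:
- The current edits_inprogress_ file is closed and renamed to edits_<first txid>-<last txid>.
- A new edits_inprogress_ file is started at the next transaction ID (the old last txid + 1).
- New changes go to the new file.

(3) The 2nn copies the finalized edits_ files and the old fsimage from the nn, loads them into memory, merges them, and writes a new fsimage.chkpoint.

(4) The 2nn sends fsimage.chkpoint back to the nn, which renames it to fsimage_<txid> and uses it as the current fsimage. Older images are not overwritten right away. By default the nn keeps the two most recent ones (dfs.namenode.num.checkpoints.retained). That is why the nn listing below contains both fsimage_0000000000000000327 and fsimage_0000000000000000329.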
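The renaming in steps (2) and (4) follows a fixed pattern: transaction IDs are zero-padded to 19 digits, as the directory listings below show. This sketch reproduces the file names produced by a checkpoint that rolls the segment starting at txid 328 after txid 329. Those are the numbers in the nn listing.

The class and method names are hypothetical. Only the naming pattern is taken from the listings.

```java
// Hypothetical helper reproducing the file names seen in the listings in this post.
public class CheckpointNames {

    static String finalizedEdits(long firstTxid, long lastTxid) {
        return String.format("edits_%019d-%019d", firstTxid, lastTxid);
    }

    static String inProgressEdits(long firstTxid) {
        return String.format("edits_inprogress_%019d", firstTxid);
    }

    static String fsimage(long lastTxid) {
        return String.format("fsimage_%019d", lastTxid);
    }

    // Names involved when the segment [segmentStart, lastTxid] is rolled and checkpointed.
    static String[] checkpoint(long segmentStart, long lastTxid) {
        return new String[] {
            finalizedEdits(segmentStart, lastTxid), // old edits_inprogress_ renamed
            inProgressEdits(lastTxid + 1),          // new changes are written here
            fsimage(lastTxid)                       // merged by the 2nn, installed by the nn
        };
    }

    public static void main(String[] args) {
        for (String name : checkpoint(328, 329)) {
            System.out.println(name);
        }
        // edits_0000000000000000328-0000000000000000329
        // edits_inprogress_0000000000000000330
        // fsimage_0000000000000000329
    }
}
```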

3) Checkpoint time and transaction-count settings

Defaults in hdfs-default.xml (they can be overridden in hdfs-site.xml, located at $HADOOP_HOME/etc/hadoop/hdfs-site.xml):

<property>

<name>dfs.namenode.checkpoint.period</name>

<value>3600s</value>

</property>

<property>

<name>dfs.namenode.checkpoint.txns</name>

<value>1000000</value>

<description>number of transactions (operations)</description>

</property>

<property>

<name>dfs.namenode.checkpoint.check.period</name>

<value>60s</value>

<description>check the transaction count once per minute</description>

</property>
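Taken together, the three properties describe a simple trigger. Every check period (60 s), the 2nn tests whether an hour has passed since the last checkpoint or whether 1,000,000 transactions are waiting.

A minimal sketch of that condition follows. `CheckpointTrigger` is a hypothetical class, and the constants simply mirror the defaults above.

```java
import java.time.Duration;

// Hypothetical helper: the checkpoint trigger implied by the three properties above.
public class CheckpointTrigger {

    static final Duration PERIOD = Duration.ofSeconds(3600);     // dfs.namenode.checkpoint.period
    static final long TXNS = 1_000_000L;                         // dfs.namenode.checkpoint.txns
    static final Duration CHECK_PERIOD = Duration.ofSeconds(60); // dfs.namenode.checkpoint.check.period

    // Evaluated by the 2nn once per CHECK_PERIOD: time-based OR count-based.
    static boolean shouldCheckpoint(Duration sinceLastCheckpoint, long uncheckpointedTxns) {
        return sinceLastCheckpoint.compareTo(PERIOD) >= 0 || uncheckpointedTxns >= TXNS;
    }

    public static void main(String[] args) {
        System.out.println(shouldCheckpoint(Duration.ofMinutes(30), 5_000));    // false
        System.out.println(shouldCheckpoint(Duration.ofMinutes(60), 0));        // true (time)
        System.out.println(shouldCheckpoint(Duration.ofMinutes(5), 1_000_000)); // true (count)
        // The condition is only evaluated every CHECK_PERIOD, so a checkpoint can
        // start up to this many seconds after a threshold is crossed.
        System.out.println(CHECK_PERIOD.getSeconds());                          // 60
    }
}
```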

4) Viewing the fsimage and edits files

First, list the files in the nn's $HADOOP_HOME/data/dfs/name/current directory:

[hdfs@hadoop102 current]$ ll $HADOOP_HOME/data/dfs/name/current

total 4148

-rw-rw-r-- 1 hdfs hdfs 1048576 Mar 31 22:16 edits_0000000000000000001-0000000000000000236

-rw-rw-r-- 1 hdfs hdfs 42 Mar 31 22:27 edits_0000000000000000237-0000000000000000238

-rw-rw-r-- 1 hdfs hdfs 1048576 Mar 31 22:27 edits_0000000000000000239-0000000000000000239

-rw-rw-r-- 1 hdfs hdfs 1812 Apr 1 21:42 edits_0000000000000000240-0000000000000000262

-rw-rw-r-- 1 hdfs hdfs 42 Apr 1 22:42 edits_0000000000000000263-0000000000000000264

-rw-rw-r-- 1 hdfs hdfs 1048576 Apr 1 22:42 edits_0000000000000000265-0000000000000000265

-rw-rw-r-- 1 hdfs hdfs 1130 Apr 3 10:22 edits_0000000000000000266-0000000000000000278

-rw-rw-r-- 1 hdfs hdfs 5291 Apr 3 11:22 edits_0000000000000000279-0000000000000000327

-rw-rw-r-- 1 hdfs hdfs 42 Apr 3 12:22 edits_0000000000000000328-0000000000000000329

-rw-rw-r-- 1 hdfs hdfs 1048576 Apr 3 12:22 edits_inprogress_0000000000000000330

-rw-rw-r-- 1 hdfs hdfs 1080 Apr 3 11:22 fsimage_0000000000000000327

-rw-rw-r-- 1 hdfs hdfs 62 Apr 3 11:22 fsimage_0000000000000000327.md5

-rw-rw-r-- 1 hdfs hdfs 1080 Apr 3 12:22 fsimage_0000000000000000329

-rw-rw-r-- 1 hdfs hdfs 62 Apr 3 12:22 fsimage_0000000000000000329.md5

-rw-rw-r-- 1 hdfs hdfs 4 Apr 3 12:22 seen_txid

-rw-rw-r-- 1 hdfs hdfs 216 Apr 3 09:53 VERSION

Then list the fsimage and edits files on the 2nn:

[hdfs@hadoop104 current]$ ll $HADOOP_HOME/data/dfs/namesecondary/current/

total 1092

-rw-rw-r-- 1 hdfs hdfs 1048576 Mar 31 22:27 edits_0000000000000000001-0000000000000000236

-rw-rw-r-- 1 hdfs hdfs 42 Mar 31 22:27 edits_0000000000000000237-0000000000000000238

-rw-rw-r-- 1 hdfs hdfs 1812 Apr 1 21:42 edits_0000000000000000240-0000000000000000262

-rw-rw-r-- 1 hdfs hdfs 42 Apr 1 22:42 edits_0000000000000000263-0000000000000000264

-rw-rw-r-- 1 hdfs hdfs 1130 Apr 3 10:22 edits_0000000000000000266-0000000000000000278

-rw-rw-r-- 1 hdfs hdfs 5291 Apr 3 11:22 edits_0000000000000000279-0000000000000000327

-rw-rw-r-- 1 hdfs hdfs 42 Apr 3 12:22 edits_0000000000000000328-0000000000000000329

-rw-rw-r-- 1 hdfs hdfs 42 Apr 3 13:22 edits_0000000000000000330-0000000000000000331

-rw-rw-r-- 1 hdfs hdfs 42 Apr 3 14:22 edits_0000000000000000332-0000000000000000333

-rw-rw-r-- 1 hdfs hdfs 42 Apr 3 15:22 edits_0000000000000000334-0000000000000000335

-rw-rw-r-- 1 hdfs hdfs 42 Apr 3 16:22 edits_0000000000000000336-0000000000000000337

-rw-rw-r-- 1 hdfs hdfs 42 Apr 3 17:22 edits_0000000000000000338-0000000000000000339

-rw-rw-r-- 1 hdfs hdfs 1080 Apr 3 16:22 fsimage_0000000000000000337

-rw-rw-r-- 1 hdfs hdfs 62 Apr 3 16:22 fsimage_0000000000000000337.md5

-rw-rw-r-- 1 hdfs hdfs 1080 Apr 3 17:22 fsimage_0000000000000000339

-rw-rw-r-- 1 hdfs hdfs 62 Apr 3 17:22 fsimage_0000000000000000339.md5

-rw-rw-r-- 1 hdfs hdfs 216 Apr 3 17:22 VERSION

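Use hdfs commands to inspect their contents

Command format:

View an fsimage file: hdfs oiv -p <output format> -i <fsimage file> -o <output path for the converted file>

View an edits file: hdfs oev -p <output format> -i <edits file> -o <output path for the converted file>

#View the fsimage file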

[hdfs@hadoop102 current]$ pwd

/opt/module/hadoop-3.1.3/data/dfs/name/current

[hdfs@hadoop102 current]$ hdfs oiv -p xml -i fsimage_0000000000000000339 -o /opt/software/fsimage_339.xml

2022-04-03 18:00:43,370 INFO offlineImageViewer.FSImageHandler: Loading 3 strings

[hdfs@hadoop102 current]$ cat /opt/software/fsimage_339.xml

For the fsimage file converted to XML, see Appendix 1.

#Run a put first so that the edits file records some operations

[hdfs@hadoop102 current]$ hdfs dfs -put VERSION /

2022-04-03 18:13:07,163 INFO sasl.SaslDataTransferClient: SASL encryption trust check: localHostTrusted = false, remoteHostTrusted = false

[hdfs@hadoop102 current]$ hdfs oev -p xml -i edits_inprogress_0000000000000000340 -o /opt/software/edits_340

[hdfs@hadoop102 current]$ cat !$

For the edits file converted to XML, see Appendix 2.
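The XML produced by `hdfs oev -p xml` is easier to follow as one line per transaction. This sketch uses the JDK's DOM parser to print `TXID OPCODE` for every `RECORD`.

Assumptions:
- The element names are taken from Appendix 2.
- `EditsXmlSummary` is a hypothetical helper, not part of Hadoop.

```java
import java.io.FileInputStream;
import java.io.IOException;
import java.io.InputStream;
import java.util.ArrayList;
import java.util.List;
import javax.xml.parsers.DocumentBuilderFactory;
import javax.xml.parsers.ParserConfigurationException;
import org.w3c.dom.Document;
import org.w3c.dom.Element;
import org.w3c.dom.NodeList;
import org.xml.sax.SAXException;

// Hypothetical helper: one "TXID OPCODE" line per RECORD in oev XML output.
public class EditsXmlSummary {

    static List<String> summarize(InputStream xml) {
        Document doc;
        try {
            doc = DocumentBuilderFactory.newInstance().newDocumentBuilder().parse(xml);
        } catch (ParserConfigurationException | SAXException | IOException e) {
            throw new IllegalArgumentException("cannot parse edits XML", e);
        }
        List<String> out = new ArrayList<>();
        NodeList records = doc.getElementsByTagName("RECORD");
        for (int i = 0; i < records.getLength(); i++) {
            Element record = (Element) records.item(i);
            // Each record in Appendix 2 has one OPCODE and one TXID (inside DATA).
            String opcode = record.getElementsByTagName("OPCODE").item(0).getTextContent().trim();
            String txid = record.getElementsByTagName("TXID").item(0).getTextContent().trim();
            out.add(txid + " " + opcode);
        }
        return out;
    }

    public static void main(String[] args) throws IOException {
        // Usage: java EditsXmlSummary /opt/software/edits_340
        try (InputStream in = new FileInputStream(args[0])) {
            summarize(in).forEach(System.out::println);
        }
    }
}
```

Run on the file in Appendix 2, it should print seven lines, from `340 OP_START_LOG_SEGMENT` to `346 OP_RENAME_OLD`. The sequence shows how `hdfs dfs -put` works: it writes `/VERSION._COPYING_` (OP_ADD, OP_ALLOCATE_BLOCK_ID, OP_SET_GENSTAMP_V2, OP_ADD_BLOCK, OP_CLOSE), then renames it to `/VERSION`.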

4. How the DataNode (dn) works

List the directory where a dn stores its data:

[hdfs@hadoop104 finalized]$ ll $HADOOP_HOME/data/dfs/data/current/BP-38154239-192.168.10.102-1648734109555/current/finalized/subdir0/subdir0

total 24

-rw-rw-r-- 1 hdfs hdfs 11 Apr 1 21:29 blk_1073741852

-rw-rw-r-- 1 hdfs hdfs 11 Apr 1 21:29 blk_1073741852_1028.meta

-rw-rw-r-- 1 hdfs hdfs 20 Apr 1 21:30 blk_1073741853

-rw-rw-r-- 1 hdfs hdfs 11 Apr 1 21:30 blk_1073741853_1030.meta

-rw-rw-r-- 1 hdfs hdfs 216 Apr 3 18:13 blk_1073741854

-rw-rw-r-- 1 hdfs hdfs 11 Apr 3 18:13 blk_1073741854_1031.meta

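Here, the blk_ files store the actual block data, and the blk_*.meta files store the block's checksums after a short header. The number after the second underscore in a .meta file name is the block's generation stamp. For example, the 1028 in blk_1073741852_1028.meta matches the genstamp of block 1073741852 in the fsimage (Appendix 1).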
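The naming convention can be checked mechanically. This hypothetical helper classifies the names in a dn's `finalized` directory:
- `blk_<blockId>` is a data file.
- `blk_<blockId>_<generationStamp>.meta` is a checksum file.

The patterns are inferred from the listing above only, and the class is not part of Hadoop.

```java
import java.io.File;
import java.util.Arrays;
import java.util.regex.Matcher;
import java.util.regex.Pattern;

// Hypothetical helper: classify dn block file names as seen in the listing above.
public class BlockFileName {

    private static final Pattern DATA = Pattern.compile("blk_(\\d+)");
    private static final Pattern META = Pattern.compile("blk_(\\d+)_(\\d+)\\.meta");

    // Returns "data <id>", "meta <id> genstamp <gs>", or "other".
    static String describe(String fileName) {
        Matcher m = META.matcher(fileName);
        if (m.matches()) {
            return "meta " + Long.parseLong(m.group(1)) + " genstamp " + Long.parseLong(m.group(2));
        }
        m = DATA.matcher(fileName);
        if (m.matches()) {
            return "data " + Long.parseLong(m.group(1));
        }
        return "other";
    }

    public static void main(String[] args) {
        // Usage: java BlockFileName <path to .../finalized/subdir0/subdir0>
        String[] names = new File(args[0]).list();
        if (names == null) {
            System.err.println("not a directory: " + args[0]);
            return;
        }
        Arrays.sort(names);
        for (String n : names) {
            System.out.println(n + " -> " + describe(n));
        }
    }
}
```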

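(1) After starting up, a DataNode registers with the NameNode. Once registered, it reports all of its block information to the NameNode periodically (every 6 hours by default).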

<property>

<name>dfs.blockreport.intervalMsec</name>

<value>21600000</value>

<description>Determines block reporting interval in milliseconds.</description>

</property>

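(2) Heartbeats are sent every 3 seconds. The heartbeat reply carries commands from the NameNode to that DataNode, such as copying a block to another machine or deleting a block. If the NameNode receives no heartbeat from a DataNode for 10 minutes plus 10 heartbeat intervals, it considers the node unavailable.

timeout = 2 * dfs.namenode.heartbeat.recheck-interval + 10 * dfs.heartbeat.interval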

<property>

<name>dfs.heartbeat.interval</name>

<value>3s</value>

<description>heartbeat interval</description>

</property>

<property>

<name>dfs.namenode.heartbeat.recheck-interval</name>

<value>300000</value>

<description>recheck interval (milliseconds)</description>

</property>
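Plugging the defaults into the timeout formula gives 2 × 300000 ms + 10 × 3 s = 630000 ms, i.e. 10 minutes 30 seconds. The 21600000 ms block-report interval is 6 hours.

The hypothetical helper below just does this arithmetic with `java.time.Duration`.

```java
import java.time.Duration;

// Hypothetical helper: arithmetic behind the dn liveness timeout and block-report interval.
public class DataNodeTimers {

    // timeout = 2 * dfs.namenode.heartbeat.recheck-interval + 10 * dfs.heartbeat.interval
    static Duration deadNodeTimeout(Duration recheckInterval, Duration heartbeatInterval) {
        return recheckInterval.multipliedBy(2).plus(heartbeatInterval.multipliedBy(10));
    }

    public static void main(String[] args) {
        Duration timeout = deadNodeTimeout(Duration.ofMillis(300000), Duration.ofSeconds(3));
        System.out.println(timeout);                                // PT10M30S
        System.out.println(timeout.toMillis());                     // 630000
        System.out.println(Duration.ofMillis(21600000).toHours());  // 6 (dfs.blockreport.intervalMsec)
    }
}
```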

III. HDFS commands

Command format: hadoop fs <command> / hdfs dfs <command>

Help: hadoop fs -help <command> / hdfs dfs -help <command>

Create a directory and list the root:

[hdfs@hadoop102 ~]$ hadoop fs -mkdir /singers

[hdfs@hadoop102 ~]$ hadoop fs -ls /

Found 1 items

drwxr-xr-x - hdfs supergroup 0 2022-04-01 21:20 /singers

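Uploading files:

-moveFromLocal: cut (move) a file from the local file system to HDFS

-copyFromLocal / -put: copy a file from the local file system to HDFS

-appendToFile: append a local file to the end of an existing HDFS file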

[hdfs@hadoop102 hdfs_test]$ echo zhoujielun > zhoujielun.txt

[hdfs@hadoop102 hdfs_test]$ echo zhangxueyou > zhangxueyou.txt

[hdfs@hadoop102 hdfs_test]$ echo weitasi > weitasi.txt

[hdfs@hadoop102 hdfs_test]$ hadoop fs -moveFromLocal zhoujielun.txt /singers

[hdfs@hadoop102 hdfs_test]$ hadoop fs -put zhangxueyou.txt /singers

[hdfs@hadoop102 hdfs_test]$ hdfs dfs -ls /singers

Found 2 items

-rw-r--r-- 3 hdfs supergroup 20 2022-04-01 21:30 /singers/zhangxueyou.txt

-rw-r--r-- 3 hdfs supergroup 11 2022-04-01 21:29 /singers/zhoujielun.txt

Downloading files:

-copyToLocal / -get

[hdfs@hadoop102 hdfs_test]$ hdfs dfs -get /singers .

[hdfs@hadoop102 hdfs_test]$ ls -l

total 8

drwxr-xr-x 2 hdfs hdfs 51 Apr 1 21:34 singers

-rw-rw-r-- 1 hdfs hdfs 8 Apr 1 21:28 weitasi.txt

-rw-rw-r-- 1 hdfs hdfs 12 Apr 1 21:27 zhangxueyou.txt

Operating on HDFS directly:

hdfs dfs -cat / -tail filepath

hdfs dfs -chgrp/-chmod/-chown filepath

hdfs dfs -mkdir/-cp filepath

hdfs dfs -mv filepath (moves within HDFS; not the same as -moveFromLocal)

hdfs dfs -rm/-du filepath

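-setrep: sets the replication factor of a file in HDFS. The value is recorded in the nn's metadata, but each dn can hold at most one replica of a block. So with fewer than 10 datanodes, say 5, there can be only 5 replicas, and the replica count grows as datanodes are added, up to 10.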

hdfs dfs -setrep 10 filepath
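The effect of `-setrep 10` on a small cluster is the minimum of the requested factor and the number of live dns. The hypothetical helper below shows how the actual replica count grows as dns are added.

```java
// Hypothetical helper: actual replicas for a requested factor on a cluster of a given size.
public class Replication {

    // A dn never holds two replicas of the same block, so the cluster size caps the count.
    static int actualReplicas(int requested, int liveDataNodes) {
        return Math.min(requested, liveDataNodes);
    }

    public static void main(String[] args) {
        for (int dns = 3; dns <= 12; dns += 3) {
            System.out.println(dns + " dns -> " + actualReplicas(10, dns) + " replicas");
        }
        // 3 dns -> 3 replicas
        // 6 dns -> 6 replicas
        // 9 dns -> 9 replicas
        // 12 dns -> 10 replicas
    }
}
```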

四、HDFS的java Api使用

1.前置准备工作

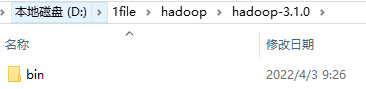

1)将windows依赖包hadoop-3.1.0放到D:\1file\hadoop(自定义无中文目录)下

2)新建系统变量HADOOP_HOME=D:\1file\hadoop\hadoop-3.1.0

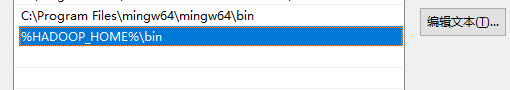

3)path变量中加入 %HADOOP_HOME%\bin

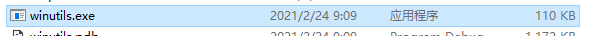

4)双击 %HADOOP_HOME%\bin目录下的winutils.exe

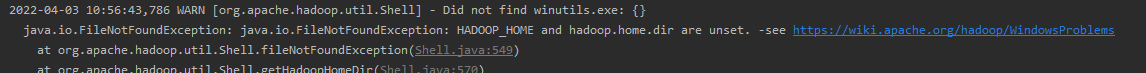

不进行以上4部的报错:

5)新建maven工程

6)配置maven

7)pom中配置依赖

<?xml version="1.0" encoding="UTF-8"?>

<project xmlns="http://maven.apache.org/POM/4.0.0"

xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance"

xsi:schemaLocation="http://maven.apache.org/POM/4.0.0 http://maven.apache.org/xsd/maven-4.0.0.xsd">

<modelVersion>4.0.0</modelVersion>

<groupId>com.hadoopTest</groupId>

<artifactId>HDFSClient</artifactId>

<version>1.0-SNAPSHOT</version>

<dependencies>

<dependency>

<groupId>org.apache.hadoop</groupId>

<artifactId>hadoop-client</artifactId>

<version>3.1.3</version>

</dependency>

<dependency>

<groupId>junit</groupId>

<artifactId>junit</artifactId>

<version>4.12</version>

</dependency>

<dependency>

<groupId>org.slf4j</groupId>

<artifactId>slf4j-log4j12</artifactId>

<version>1.7.30</version>

</dependency>

</dependencies>

</project>

8) log4j configuration file: log4j.properties

log4j.rootLogger=INFO, stdout

log4j.appender.stdout=org.apache.log4j.ConsoleAppender

log4j.appender.stdout.layout=org.apache.log4j.PatternLayout

log4j.appender.stdout.layout.ConversionPattern=%d %p [%c] - %m%n

log4j.appender.logfile=org.apache.log4j.FileAppender

log4j.appender.logfile.File=target/spring.log

log4j.appender.logfile.layout=org.apache.log4j.PatternLayout

log4j.appender.logfile.layout.ConversionPattern=%d %p [%c] - %m%n

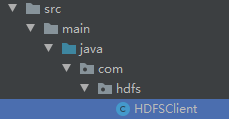

9) Create the class HDFSClient. Preparation is complete.

2. Using the Java API

The API is straightforward, so here is the code:

package com.hdfs;

import org.apache.hadoop.conf.Configuration;
import org.apache.hadoop.fs.*;
import org.junit.After;
import org.junit.Before;
import org.junit.Test;

import java.io.IOException;
import java.net.URI;
import java.net.URISyntaxException;
import java.util.Arrays;

public class HDFSClient {

    FileSystem hdfs;

    @Before
    public void init() throws URISyntaxException, IOException, InterruptedException {
        // Address of the nn
        URI uri1 = new URI("hdfs://hadoop102:8020");
        // Initialize the configuration.
        // Precedence: values set on Configuration > user config files on the classpath > server xxx-site.xml > server xxx-default.xml
        Configuration configuration = new Configuration();
        configuration.set("dfs.replication", "2");
        // User to access HDFS as
        String user = "hdfs";
        // Client object
        hdfs = FileSystem.get(uri1, configuration, user);
    }

    // Basic file operations
    @Test
    public void test1() throws IOException {
        Path toHdfsPath = new Path("/API/java");
        Path fromHdfsPath = new Path("/singers/zhoujielun.txt");
        Path localFile = new Path("D:\\1file\\hadoop\\学习备忘.txt");
        Path localPath = new Path("D:\\1file\\hadoop");
        // Create a directory
        hdfs.mkdirs(toHdfsPath);
        // Upload a file (delSrc = false, overwrite = true)
        hdfs.copyFromLocalFile(false, true, localFile, toHdfsPath);
        // Download a file (delSrc = false)
        hdfs.copyToLocalFile(false, fromHdfsPath, localPath);
        // Delete; true = recursive
        hdfs.delete(toHdfsPath, true);
        // Move or rename a file
        hdfs.rename(fromHdfsPath, new Path("/zhoujielun.txt.new"));
    }

    @Test
    public void test2() throws IOException {
        // List all files under / recursively
        RemoteIterator<LocatedFileStatus> iter = hdfs.listFiles(new Path("/"), true);
        while (iter.hasNext()) {
            LocatedFileStatus fileStatus = iter.next();
            // File information
            System.out.println("=====" + fileStatus.getPath().getName() + "=====");
            System.out.println(fileStatus.getPath());
            System.out.println(fileStatus.getPermission());
            System.out.println(fileStatus.getOwner());
            System.out.println(fileStatus.getGroup());
            System.out.println(fileStatus.getLen());
            System.out.println(fileStatus.getModificationTime());
            System.out.println(fileStatus.getReplication());
            System.out.println();
            // Block information
            System.out.println(fileStatus.getBlockSize());
            BlockLocation[] blockLocations = fileStatus.getBlockLocations();
            System.out.println(Arrays.toString(blockLocations));
            System.out.println();
        }
        // Check whether each entry directly under / is a file or a directory
        FileStatus[] fileStatuses = hdfs.listStatus(new Path("/"));
        for (FileStatus fileStatus : fileStatuses) {
            System.out.println((fileStatus.isFile() ? "file " : fileStatus.isDirectory() ? "directory " : "nothing ") + fileStatus.getPath());
        }
    }

    @After
    public void closeResource() throws IOException {
        // Release the client; skip if init() failed before creating it
        if (hdfs != null) {
            hdfs.close();
        }
    }
}
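The precedence rule in the comment in `init()` can be modelled as a layered lookup: the first layer that defines a key wins. This is only a stand-in for illustration, not `org.apache.hadoop.conf.Configuration`.

Assumptions:
- `LayeredConfig` is a hypothetical class.
- The server-side `hdfs-site.xml` value of 3 is an assumed example.
- The `hdfs-default.xml` entries use its defaults (replication 3, block size 134217728).

```java
import java.util.Arrays;
import java.util.List;
import java.util.Map;
import java.util.Optional;

// Hypothetical model of the precedence rule described in init(): first layer with the key wins.
public class LayeredConfig {

    private final List<Map<String, String>> layers; // highest precedence first

    @SafeVarargs
    LayeredConfig(Map<String, String>... highestFirst) {
        this.layers = Arrays.asList(highestFirst);
    }

    Optional<String> get(String key) {
        for (Map<String, String> layer : layers) {
            String v = layer.get(key);
            if (v != null) return Optional.of(v);
        }
        return Optional.empty();
    }

    public static void main(String[] args) {
        LayeredConfig conf = new LayeredConfig(
                Map.of("dfs.replication", "2"),                                 // configuration.set(...) in init()
                Map.of(),                                                       // classpath hdfs-site.xml (none here)
                Map.of("dfs.replication", "3"),                                 // server hdfs-site.xml (assumed)
                Map.of("dfs.replication", "3", "dfs.blocksize", "134217728")); // hdfs-default.xml
        System.out.println(conf.get("dfs.replication").orElse("unset"));       // 2
        System.out.println(conf.get("dfs.blocksize").orElse("unset"));         // 134217728
    }
}
```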

V. Appendix

1. fsimage file contents

<?xml version="1.0"?>

<fsimage>

<version>

<layoutVersion>-64</layoutVersion>

<onDiskVersion>1</onDiskVersion>

<oivRevision>ba631c436b806728f8ec2f54ab1e289526c90579</oivRevision>

</version>

<NameSection>

<namespaceId>162292633</namespaceId>

<genstampV1>1000</genstampV1>

<genstampV2>1030</genstampV2>

<genstampV1Limit>0</genstampV1Limit>

<lastAllocatedBlockId>1073741853</lastAllocatedBlockId>

<txid>339</txid>

</NameSection>

<ErasureCodingSection>

<erasureCodingPolicy>

<policyId>1</policyId>

<policyName>RS-6-3-1024k</policyName>

<cellSize>1048576</cellSize>

<policyState>DISABLED</policyState>

<ecSchema>

<codecName>rs</codecName>

<dataUnits>6</dataUnits>

<parityUnits>3</parityUnits>

</ecSchema>

</erasureCodingPolicy>

<erasureCodingPolicy>

<policyId>2</policyId>

<policyName>RS-3-2-1024k</policyName>

<cellSize>1048576</cellSize>

<policyState>DISABLED</policyState>

<ecSchema>

<codecName>rs</codecName>

<dataUnits>3</dataUnits>

<parityUnits>2</parityUnits>

</ecSchema>

</erasureCodingPolicy>

<erasureCodingPolicy>

<policyId>3</policyId>

<policyName>RS-LEGACY-6-3-1024k</policyName>

<cellSize>1048576</cellSize>

<policyState>DISABLED</policyState>

<ecSchema>

<codecName>rs-legacy</codecName>

<dataUnits>6</dataUnits>

<parityUnits>3</parityUnits>

</ecSchema>

</erasureCodingPolicy>

<erasureCodingPolicy>

<policyId>4</policyId>

<policyName>XOR-2-1-1024k</policyName>

<cellSize>1048576</cellSize>

<policyState>DISABLED</policyState>

<ecSchema>

<codecName>xor</codecName>

<dataUnits>2</dataUnits>

<parityUnits>1</parityUnits>

</ecSchema>

</erasureCodingPolicy>

<erasureCodingPolicy>

<policyId>5</policyId>

<policyName>RS-10-4-1024k</policyName>

<cellSize>1048576</cellSize>

<policyState>DISABLED</policyState>

<ecSchema>

<codecName>rs</codecName>

<dataUnits>10</dataUnits>

<parityUnits>4</parityUnits>

</ecSchema>

</erasureCodingPolicy>

</ErasureCodingSection>

<INodeSection>

<lastInodeId>16481</lastInodeId>

<numInodes>11</numInodes>

<inode>

<id>16385</id>

<type>DIRECTORY</type>

<name></name>

<mtime>1648954180012</mtime>

<permission>hdfs:supergroup:0755</permission>

<nsquota>9223372036854775807</nsquota>

<dsquota>-1</dsquota>

</inode>

<inode>

<id>16449</id>

<type>DIRECTORY</type>

<name>singers</name>

<mtime>1648954180012</mtime>

<permission>hdfs:supergroup:0755</permission>

<nsquota>-1</nsquota>

<dsquota>-1</dsquota>

</inode>

<inode>

<id>16450</id>

<type>FILE</type>

<name>zhoujielun.txt.new</name>

<replication>3</replication>

<mtime>1648819792233</mtime>

<atime>1648953183013</atime>

<preferredBlockSize>134217728</preferredBlockSize>

<permission>hdfs:supergroup:0644</permission>

<blocks>

<block>

<id>1073741852</id>

<genstamp>1028</genstamp>

<numBytes>11</numBytes>

</block>

</blocks>

<storagePolicyId>0</storagePolicyId>

</inode>

<inode>

<id>16451</id>

<type>FILE</type>

<name>zhangxueyou.txt</name>

<replication>3</replication>

<mtime>1648819849013</mtime>

<atime>1648819809317</atime>

<preferredBlockSize>134217728</preferredBlockSize>

<permission>hdfs:supergroup:0644</permission>

<blocks>

<block>

<id>1073741853</id>

<genstamp>1030</genstamp>

<numBytes>20</numBytes>

</block>

</blocks>

<storagePolicyId>0</storagePolicyId>

</inode>

<inode>

<id>16452</id>

<type>DIRECTORY</type>

<name>tmp</name>

<mtime>1648950866448</mtime>

<permission>hdfs:supergroup:0770</permission>

<nsquota>-1</nsquota>

<dsquota>-1</dsquota>

</inode>

<inode>

<id>16453</id>

<type>DIRECTORY</type>

<name>hadoop-yarn</name>

<mtime>1648950866448</mtime>

<permission>hdfs:supergroup:0770</permission>

<nsquota>-1</nsquota>

<dsquota>-1</dsquota>

</inode>

<inode>

<id>16454</id>

<type>DIRECTORY</type>

<name>staging</name>

<mtime>1648950866448</mtime>

<permission>hdfs:supergroup:0770</permission>

<nsquota>-1</nsquota>

<dsquota>-1</dsquota>

</inode>

<inode>

<id>16455</id>

<type>DIRECTORY</type>

<name>history</name>

<mtime>1648950866526</mtime>

<permission>hdfs:supergroup:0770</permission>

<nsquota>-1</nsquota>

<dsquota>-1</dsquota>

</inode>

<inode>

<id>16456</id>

<type>DIRECTORY</type>

<name>done</name>

<mtime>1648950866451</mtime>

<permission>hdfs:supergroup:0770</permission>

<nsquota>-1</nsquota>

<dsquota>-1</dsquota>

</inode>

<inode>

<id>16457</id>

<type>DIRECTORY</type>

<name>done_intermediate</name>

<mtime>1648950866526</mtime>

<permission>hdfs:supergroup:1777</permission>

<nsquota>-1</nsquota>

<dsquota>-1</dsquota>

</inode>

<inode>

<id>16471</id>

<type>DIRECTORY</type>

<name>API</name>

<mtime>1648954180006</mtime>

<permission>hdfs:supergroup:0755</permission>

<nsquota>-1</nsquota>

<dsquota>-1</dsquota>

</inode>

</INodeSection>

<INodeReferenceSection></INodeReferenceSection>

<SnapshotSection>

<snapshotCounter>0</snapshotCounter>

<numSnapshots>0</numSnapshots>

</SnapshotSection>

<INodeDirectorySection>

<directory>

<parent>16385</parent>

<child>16471</child>

<child>16449</child>

<child>16452</child>

<child>16450</child>

</directory>

<directory>

<parent>16449</parent>

<child>16451</child>

</directory>

<directory>

<parent>16452</parent>

<child>16453</child>

</directory>

<directory>

<parent>16453</parent>

<child>16454</child>

</directory>

<directory>

<parent>16454</parent>

<child>16455</child>

</directory>

<directory>

<parent>16455</parent>

<child>16456</child>

<child>16457</child>

</directory>

</INodeDirectorySection>

<FileUnderConstructionSection></FileUnderConstructionSection>

<SecretManagerSection>

<currentId>0</currentId>

<tokenSequenceNumber>0</tokenSequenceNumber>

<numDelegationKeys>0</numDelegationKeys>

<numTokens>0</numTokens>

</SecretManagerSection>

<CacheManagerSection>

<nextDirectiveId>1</nextDirectiveId>

<numDirectives>0</numDirectives>

<numPools>0</numPools>

</CacheManagerSection>

</fsimage>

2. edits file contents

cat /opt/software/edits_340

<?xml version="1.0" encoding="UTF-8" standalone="yes"?>

<EDITS>

<EDITS_VERSION>-64</EDITS_VERSION>

<RECORD>

<OPCODE>OP_START_LOG_SEGMENT</OPCODE>

<DATA>

<TXID>340</TXID>

</DATA>

</RECORD>

<RECORD>

<OPCODE>OP_ADD</OPCODE>

<DATA>

<TXID>341</TXID>

<LENGTH>0</LENGTH>

<INODEID>16482</INODEID>

<PATH>/VERSION._COPYING_</PATH>

<REPLICATION>3</REPLICATION>

<MTIME>1648980787070</MTIME>

<ATIME>1648980787070</ATIME>

<BLOCKSIZE>134217728</BLOCKSIZE>

<CLIENT_NAME>DFSClient_NONMAPREDUCE_-504223726_1</CLIENT_NAME>

<CLIENT_MACHINE>192.168.10.102</CLIENT_MACHINE>

<OVERWRITE>true</OVERWRITE>

<PERMISSION_STATUS>

<USERNAME>hdfs</USERNAME>

<GROUPNAME>supergroup</GROUPNAME>

<MODE>420</MODE>

</PERMISSION_STATUS>

<ERASURE_CODING_POLICY_ID>0</ERASURE_CODING_POLICY_ID>

<RPC_CLIENTID>8c235ce3-9413-4aa5-8dc3-26167b851d7f</RPC_CLIENTID>

<RPC_CALLID>3</RPC_CALLID>

</DATA>

</RECORD>

<RECORD>

<OPCODE>OP_ALLOCATE_BLOCK_ID</OPCODE>

<DATA>

<TXID>342</TXID>

<BLOCK_ID>1073741854</BLOCK_ID>

</DATA>

</RECORD>

<RECORD>

<OPCODE>OP_SET_GENSTAMP_V2</OPCODE>

<DATA>

<TXID>343</TXID>

<GENSTAMPV2>1031</GENSTAMPV2>

</DATA>

</RECORD>

<RECORD>

<OPCODE>OP_ADD_BLOCK</OPCODE>

<DATA>

<TXID>344</TXID>

<PATH>/VERSION._COPYING_</PATH>

<BLOCK>

<BLOCK_ID>1073741854</BLOCK_ID>

<NUM_BYTES>0</NUM_BYTES>

<GENSTAMP>1031</GENSTAMP>

</BLOCK>

<RPC_CLIENTID/>

<RPC_CALLID>-2</RPC_CALLID>

</DATA>

</RECORD>

<RECORD>

<OPCODE>OP_CLOSE</OPCODE>

<DATA>

<TXID>345</TXID>

<LENGTH>0</LENGTH>

<INODEID>0</INODEID>

<PATH>/VERSION._COPYING_</PATH>

<REPLICATION>3</REPLICATION>

<MTIME>1648980788748</MTIME>

<ATIME>1648980787070</ATIME>

<BLOCKSIZE>134217728</BLOCKSIZE>

<CLIENT_NAME/>

<CLIENT_MACHINE/>

<OVERWRITE>false</OVERWRITE>

<BLOCK>

<BLOCK_ID>1073741854</BLOCK_ID>

<NUM_BYTES>216</NUM_BYTES>

<GENSTAMP>1031</GENSTAMP>

</BLOCK>

<PERMISSION_STATUS>

<USERNAME>hdfs</USERNAME>

<GROUPNAME>supergroup</GROUPNAME>

<MODE>420</MODE>

</PERMISSION_STATUS>

</DATA>

</RECORD>

<RECORD>

<OPCODE>OP_RENAME_OLD</OPCODE>

<DATA>

<TXID>346</TXID>

<LENGTH>0</LENGTH>

<SRC>/VERSION._COPYING_</SRC>

<DST>/VERSION</DST>

<TIMESTAMP>1648980788754</TIMESTAMP>

<RPC_CLIENTID>8c235ce3-9413-4aa5-8dc3-26167b851d7f</RPC_CLIENTID>

<RPC_CALLID>9</RPC_CALLID>

</DATA>

</RECORD>

</EDITS>
