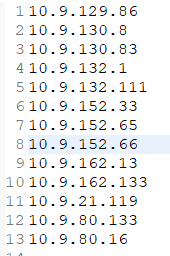

File contents:

10.9.80.16

10.9.132.111

10.9.152.65

10.9.21.119

10.9.132.111

10.9.130.83

10.9.80.16

10.9.152.65

10.9.162.13

10.9.162.133

10.9.21.119

10.9.132.111

10.9.130.83

10.9.80.133

10.9.129.86

10.9.132.111

10.9.152.65

10.9.21.119

10.9.132.111

10.9.130.83

10.9.80.16

10.9.152.66

10.9.80.16

10.9.152.65

10.9.21.119

10.9.132.111

10.9.130.83

10.9.80.16

10.9.152.65

10.9.162.13

10.9.132.111

10.9.130.83

10.9.21.119

10.9.132.111

10.9.130.8

10.9.80.16

10.9.152.65

10.9.21.119

10.9.132.111

10.9.130.83

10.9.80.16

10.9.152.66

10.9.80.16

10.9.152.65

10.9.21.119

10.9.80.16

10.9.152.65

10.9.162.13

10.9.162.133

10.9.21.119

10.9.132.1

10.9.162.133

10.9.21.119

10.9.132.111

10.9.130.8

10.9.80.16

10.9.152.33

10.9.152.66

10.9.80.16

10.9.152.65

10.9.21.119

10.9.80.16

10.9.152.65

10.9.162.13

10.9.162.133

10.9.152.65

10.9.162.13

10.9.162.133

10.9.21.119

10.9.132.1

10.9.162.133

10.9.21.119

10.9.132.111

10.9.130.8

10.9.132.111

10.9.130.8

10.9.80.16

10.9.152.33

10.9.152.66

10.9.80.16

10.9.152.65

Code implementation:

1. Mapper

package com.lj.ip3;

import java.io.IOException;

import org.apache.hadoop.io.LongWritable;

import org.apache.hadoop.io.NullWritable;

import org.apache.hadoop.io.Text;

import org.apache.hadoop.mapreduce.Mapper;

// Emits every input line (one IP address) as the key; the value carries no data.

public class ipMapper extends Mapper<LongWritable, Text, Text, NullWritable> {

@Override

public void map(LongWritable key, Text value, Context context) throws IOException, InterruptedException {

// Identical IPs end up under the same key during the shuffle.

context.write(value, NullWritable.get());

}

}

2. Reducer

package com.lj.ip3;

import java.io.IOException;

import org.apache.hadoop.io.NullWritable;

import org.apache.hadoop.io.Text;

import org.apache.hadoop.mapreduce.Reducer;

// Called once per distinct key, so writing the key once removes the duplicates.

public class IpReduce extends Reducer<Text, NullWritable, Text, NullWritable> {

@Override

public void reduce(Text key, Iterable<NullWritable> values, Context context) throws IOException, InterruptedException {

// The grouped values are ignored; only the distinct IP is written.

context.write(key, NullWritable.get());

}

}
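The deduplication comes from the shuffle rather than from the reducer body: the mapper emits each line as a key, the framework groups identical keys, and the reducer writes each key once. The following is a minimal local sketch of that idea in plain Java, without Hadoop. The class `DedupSketch` and the method `distinctKeys` are hypothetical names used only for illustration, and a `TreeMap` stands in for the sorted grouping that the shuffle performs.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;
import java.util.Map;
import java.util.TreeMap;

// Hypothetical local stand-in for the job; not part of the original code.
public class DedupSketch {

    // "map" emits each line as a key, the TreeMap plays the role of the
    // shuffle (identical keys are grouped and sorted), and "reduce" keeps
    // each key exactly once.
    static List<String> distinctKeys(List<String> lines) {
        // key -> number of (key, null) pairs that the map phase would emit
        Map<String, Integer> grouped = new TreeMap<>();
        for (String line : lines) {
            grouped.merge(line, 1, Integer::sum);
        }
        // One output record per distinct key, like IpReduce.
        return new ArrayList<>(grouped.keySet());
    }

    public static void main(String[] args) {
        // The first few lines of the input file shown above.
        List<String> sample = Arrays.asList(
                "10.9.80.16", "10.9.132.111", "10.9.152.65",
                "10.9.21.119", "10.9.132.111", "10.9.130.83", "10.9.80.16");
        for (String ip : distinctKeys(sample)) {
            System.out.println(ip);
        }
    }
}
```

The keys are compared as strings, not as numbers, so `10.9.21.119` sorts after `10.9.152.65`. The same applies to `Text` keys in the real job, which are ordered by their bytes.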

3. Driver

package com.lj.ip3;

import org.apache.hadoop.conf.Configuration;

import org.apache.hadoop.fs.Path;

import org.apache.hadoop.io.NullWritable;

import org.apache.hadoop.io.Text;

import org.apache.hadoop.mapreduce.Job;

import org.apache.hadoop.mapreduce.lib.input.FileInputFormat;

import org.apache.hadoop.mapreduce.lib.output.FileOutputFormat;

public class IpDriver {

public static void main(String[] args) throws Exception {

Configuration conf = new Configuration();

Job job = Job.getInstance(conf, "JobName");

job.setJarByClass(com.lj.ip3.IpDriver.class);

// Mapper and reducer classes

job.setMapperClass(ipMapper.class);

job.setReducerClass(IpReduce.class);

// Output types, shared by the map and reduce phases

job.setOutputKeyClass(Text.class);

job.setOutputValueClass(NullWritable.class);

// Input file and output directory; the output directory must not already exist

FileInputFormat.setInputPaths(job, new Path("hdfs://lj02:9000/txt/ip.txt"));

FileOutputFormat.setOutputPath(job, new Path("hdfs://lj02:9000/3ip"));

// Report success or failure through the process exit code

System.exit(job.waitForCompletion(true) ? 0 : 1);

}

}

Result:

7318

