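Chapter 1 MapReduce Overview

1.1 What MapReduce Is

"Split up the work, then aggregate the results."

1.2 Strengths and Weaknesses of MapReduce

Strengths

- Easy to program

- Scales well

- Highly fault tolerant

- Suited to offline processing of massive data sets, at the PB scale and above

Weaknesses

- Poor fit for real-time computation

- Poor fit for stream processing

- Poor fit for DAG (directed acyclic graph) computation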

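1.3 Core Idea of MapReduce

- Input -> Split: each file split is processed by a separate host; this is the Map step

- The results from all hosts are then aggregated; this is the Reduce step

Key points:

- A job = Map + Reduce

- The output of Map is the input of Reduce

- All input and output takes the form <Key, Value>, and the data types are Hadoop's own types

- MapReduce requires both the key and the value of every <Key, Value> pair to implement the Writable interface. Hadoop's built-in types already do; a custom type must implement Writable itself

- The data MapReduce processes is stored in HDFS (the local test runs later in these notes read from the local file system instead)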
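The flow described above can be sketched in plain Java, without Hadoop. A map step emits a (word, 1) pair for every word in a line, a grouping step stands in for the framework's shuffle, and a reduce step sums each group. The class `MiniWordCount` and its methods are hypothetical names used only for illustration; a real job uses Hadoop's Mapper and Reducer classes.

```java
import java.util.AbstractMap;
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;
import java.util.Map;
import java.util.TreeMap;

// Hypothetical, Hadoop-free sketch of the map -> group -> reduce flow.
public class MiniWordCount {

    // Map step: one input line in, a list of (word, 1) pairs out.
    public static List<Map.Entry<String, Integer>> map(String line) {
        List<Map.Entry<String, Integer>> out = new ArrayList<>();
        for (String word : line.split(" ")) {
            if (!word.isEmpty()) {
                out.add(new AbstractMap.SimpleEntry<>(word, 1));
            }
        }
        return out;
    }

    // Reduce step: all values that share one key in, a single sum out.
    public static int reduce(List<Integer> values) {
        int sum = 0;
        for (int v : values) {
            sum += v;
        }
        return sum;
    }

    // Runs map on every line, groups the pairs by key (sorted by key, as the
    // framework does between map and reduce), then reduces each group.
    public static Map<String, Integer> run(List<String> lines) {
        Map<String, List<Integer>> grouped = new TreeMap<>();
        for (String line : lines) {
            for (Map.Entry<String, Integer> kv : map(line)) {
                grouped.computeIfAbsent(kv.getKey(), k -> new ArrayList<>()).add(kv.getValue());
            }
        }
        Map<String, Integer> result = new TreeMap<>();
        for (Map.Entry<String, List<Integer>> e : grouped.entrySet()) {
            result.put(e.getKey(), reduce(e.getValue()));
        }
        return result;
    }

    public static void main(String[] args) {
        // Prints {hadoop=1, hello=2, mapreduce=1}
        System.out.println(run(Arrays.asList("hello hadoop", "hello mapreduce")));
    }
}
```

The sketch runs everything in one process; in a real job each map call can run on a different host, which is why keys and values have to be serializable.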

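1.4 MapReduce Programming Conventions (Fixed Pattern)

A user program consists of three parts: Mapper, Reducer, and Driver.

1.5 WordCount Hands-On Example

Requirement: count how many times each word occurs across a set of files (the WordCount example)

1.5.1 Environment Setup

- (1) Create a Maven project named MapReduceDemo

- (2) Add the following dependencies to pom.xml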

<dependencies>

<dependency>

<groupId>org.apache.hadoop</groupId>

<artifactId>hadoop-client</artifactId>

<version>3.1.3</version>

</dependency>

<dependency>

<groupId>junit</groupId>

<artifactId>junit</artifactId>

<version>4.12</version>

</dependency>

<dependency>

<groupId>org.slf4j</groupId>

<artifactId>slf4j-log4j12</artifactId>

<version>1.7.30</version>

</dependency>

</dependencies>

- (3) In the project's src/main/resources directory, create a file named "log4j.properties" with the following content.

log4j.rootLogger=INFO, stdout

log4j.appender.stdout=org.apache.log4j.ConsoleAppender

log4j.appender.stdout.layout=org.apache.log4j.PatternLayout

log4j.appender.stdout.layout.ConversionPattern=%d %p [%c] - %m%n

log4j.appender.logfile=org.apache.log4j.FileAppender

log4j.appender.logfile.File=target/spring.log

log4j.appender.logfile.layout=org.apache.log4j.PatternLayout

log4j.appender.logfile.layout.ConversionPattern=%d %p [%c] - %m%n

- (4) Create the package com.atguigu.mapreduce.wordcount

1.5.2 Writing the Program

- (1) Write the Mapper class (WordCountMapper): extend Mapper and override map()

package com.atguigu.mapreduce.wordcount;

import java.io.IOException;

import org.apache.hadoop.io.IntWritable;

import org.apache.hadoop.io.LongWritable;

import org.apache.hadoop.io.Text;

import org.apache.hadoop.mapreduce.Mapper;

/**

* KEYIN: key type of the map phase input, LongWritable

* VALUEIN: value type of the map phase input, Text

* KEYOUT: key type of the map phase output, Text

* VALUEOUT: value type of the map phase output, IntWritable

*/

public class WordCountMapper extends Mapper<LongWritable, Text, Text, IntWritable>{

Text k = new Text();

IntWritable v = new IntWritable(1);

@Override

protected void map(LongWritable key, Text value, Context context) throws IOException, InterruptedException {

// 1 Read one line

String line = value.toString();

// 2 Split the line on spaces

String[] words = line.split(" ");

// 3 Emit (word, 1) for every word

for (String word : words) {

k.set(word);

context.write(k, v);

}

}

}

- (2) Write the Reducer class: extend Reducer and override reduce()

package com.atguigu.mapreduce.wordcount;

import java.io.IOException;

import org.apache.hadoop.io.IntWritable;

import org.apache.hadoop.io.Text;

import org.apache.hadoop.mapreduce.Reducer;

/**

* KEYIN: key type of the reduce phase input, Text

* VALUEIN: value type of the reduce phase input, IntWritable

* KEYOUT: key type of the reduce phase output, Text

* VALUEOUT: value type of the reduce phase output, IntWritable

*/

public class WordCountReducer extends Reducer<Text, IntWritable, Text, IntWritable>{

int sum;

IntWritable v = new IntWritable();

@Override

// Iterable: behaves much like a collection

protected void reduce(Text key, Iterable<IntWritable> values,Context context) throws IOException, InterruptedException {

// 1 Sum the counts

sum = 0;

for (IntWritable count : values) {

// count is an IntWritable and sum is an int, so unwrap the value with get()

sum += count.get();

}

// 2 Emit the result

v.set(sum);

context.write(key,v);// alternative: context.write(key,new IntWritable(sum));

}

}

- (3) Write the Driver class (the program entry point)

package com.atguigu.mapreduce.wordcount;

import java.io.IOException;

import org.apache.hadoop.conf.Configuration;

import org.apache.hadoop.fs.Path;

import org.apache.hadoop.io.IntWritable;

import org.apache.hadoop.io.Text;

import org.apache.hadoop.mapreduce.Job;

import org.apache.hadoop.mapreduce.lib.input.FileInputFormat;

import org.apache.hadoop.mapreduce.lib.output.FileOutputFormat;

public class WordCountDriver {

public static void main(String[] args) throws IOException, ClassNotFoundException, InterruptedException {

// 1 Load the configuration and get the job object

Configuration conf = new Configuration();

Job job = Job.getInstance(conf);

// 2 Register the jar that contains this Driver

job.setJarByClass(WordCountDriver.class);

// 3 Register the Mapper and Reducer classes

job.setMapperClass(WordCountMapper.class);

job.setReducerClass(WordCountReducer.class);

// 4 Set the key/value types of the Mapper output

job.setMapOutputKeyClass(Text.class);

job.setMapOutputValueClass(IntWritable.class);

// 5 Set the key/value types of the final output

job.setOutputKeyClass(Text.class);

job.setOutputValueClass(IntWritable.class);

// 6 Set the input and output paths

FileInputFormat.setInputPaths(job, new Path("D:\\input\\inputword"));

FileOutputFormat.setOutputPath(job, new Path("D:\\hadoop\\output1"));// Note: the output path must not already exist

// 7 Submit the job

boolean result = job.waitForCompletion(true);

System.exit(result ? 0 : 1);

}

}

1.5.3 Local Test

1.5.4 Submitting to the Cluster for Testing

- (1) Package a jar with Maven; the following build plugins need to be added

<build>

<plugins>

<plugin>

<artifactId>maven-compiler-plugin</artifactId>

<version>3.6.1</version>

<configuration>

<source>11</source>

<target>11</target>

</configuration>

</plugin>

<plugin>

<artifactId>maven-assembly-plugin</artifactId>

<configuration>

<descriptorRefs>

<descriptorRef>jar-with-dependencies</descriptorRef>

</descriptorRefs>

</configuration>

<executions>

<execution>

<id>make-assembly</id>

<phase>package</phase>

<goals>

<goal>single</goal>

</goals>

</execution>

</executions>

</plugin>

</plugins>

</build>

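- (2) Build the jar and open the output directory

- (3) Check the generated jar files

Note: the jar without dependencies is the one normally used

- (4) Rename the jar without dependencies to wc.jar and copy it to the /opt/module/hadoop-3.1.3 directory on the Hadoop cluster

- (5) Start the Hadoop cluster

- (6) Run the WordCount program

Launch:

Success: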

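Chapter 2 Hadoop Serialization

2.1 Characteristics of Hadoop Serialization

- (1) Compact: uses storage space efficiently.

- (2) Fast: little extra overhead when reading and writing data.

- (3) Interoperable: supports interaction across multiple languages.

2.2 Implementing the Serialization Interface (Writable) in a Custom Bean

Making a bean serializable takes the following 7 steps

- (1) The class must implement the Writable interface

- (2) Deserialization calls the no-argument constructor through reflection, so the class must have one

- (3) Override the serialization method, write()

- (4) Override the deserialization method, readFields()

- (5) Fields must be deserialized in exactly the same order in which they were serialized

- (6) To show the result in the output file, override toString(); separating the fields with "\t" makes later processing easier.

- (7) If the custom bean is to be transmitted as a key, it must also implement the Comparable interface, because the Shuffle stage of the MapReduce framework requires keys to be sortable. See the sorting example later.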

@Override

public int compareTo(FlowBean o) {

// Descending order, largest first; Long.compare also returns 0 when the totals are equal

return Long.compare(o.getSumFlow(), this.sumFlow);

}
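Steps (2) to (6) can be tried out with nothing but java.io. The sketch below uses a hypothetical class `FlowRecord` that mirrors the write()/readFields() pair of a Writable bean but deliberately does not implement Hadoop's Writable, so it runs without Hadoop on the classpath. The `roundTrip` helper is also an assumption made for the demonstration.

```java
import java.io.ByteArrayInputStream;
import java.io.ByteArrayOutputStream;
import java.io.DataInput;
import java.io.DataInputStream;
import java.io.DataOutput;
import java.io.DataOutputStream;
import java.io.IOException;
import java.io.UncheckedIOException;

// Hypothetical stand-in for a Writable bean; it mirrors write()/readFields()
// but has no dependency on Hadoop.
public class FlowRecord {
    private long upFlow;
    private long downFlow;
    private long sumFlow;

    // No-argument constructor: an empty instance is created first and then
    // filled in by readFields().
    public FlowRecord() {
    }

    public FlowRecord(long upFlow, long downFlow) {
        this.upFlow = upFlow;
        this.downFlow = downFlow;
        this.sumFlow = upFlow + downFlow;
    }

    // Serialize the fields in a fixed order.
    public void write(DataOutput out) throws IOException {
        out.writeLong(upFlow);
        out.writeLong(downFlow);
        out.writeLong(sumFlow);
    }

    // Deserialize in exactly the same order as write().
    public void readFields(DataInput in) throws IOException {
        upFlow = in.readLong();
        downFlow = in.readLong();
        sumFlow = in.readLong();
    }

    // Tab-separated, so the output is easy to split again later.
    @Override
    public String toString() {
        return upFlow + "\t" + downFlow + "\t" + sumFlow;
    }

    // Serializes a record to bytes and reads it back into a fresh instance.
    public static FlowRecord roundTrip(FlowRecord source) {
        try {
            ByteArrayOutputStream buffer = new ByteArrayOutputStream();
            source.write(new DataOutputStream(buffer));
            FlowRecord copy = new FlowRecord();
            copy.readFields(new DataInputStream(new ByteArrayInputStream(buffer.toByteArray())));
            return copy;
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
    }

    public static void main(String[] args) {
        System.out.println(roundTrip(new FlowRecord(100, 200)));
    }
}
```

If readFields() read the fields in a different order from write(), the copy would still deserialize without an exception but with the values swapped, which is why step (5) matters.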

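2.3 Serialization Hands-On Example

Compute the total upstream traffic, total downstream traffic, and total traffic used by each phone number

Expected result

Writing the MapReduce program

(1) Write the Bean class for the traffic statistics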

package com.atguigu.mapreduce.writable;

import org.apache.hadoop.io.Writable;

import java.io.DataInput;

import java.io.DataOutput;

import java.io.IOException;

/**

* @author shkstart

* @create 2021-04-07 11:15

*/

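//1 Implement the Writable interface

public class FlowBean implements Writable {

private long upFlow;// upstream traffic

private long downFlow; // downstream traffic

private long sumFlow;// total traffic

//2 Provide a no-argument constructor

public FlowBean() {

}

//3 Provide getters and setters for the three fields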

public long getUpFlow() {

return upFlow;

}

public long getDownFlow() {

return downFlow;

}

public long getSumFlow(){

return sumFlow;

}

public void setDownFlow(long downFlow) {

this.downFlow = downFlow;

}

public void setSumFlow() {

this.sumFlow = this.upFlow + this.downFlow;

}

public void setUpFlow(long upFlow) {

this.upFlow = upFlow;

}

//4 Implement the serialization and deserialization methods; the field order must be identical in both

@Override

public void write(DataOutput dataOutput) throws IOException {

dataOutput.writeLong(upFlow);

dataOutput.writeLong(downFlow);

dataOutput.writeLong(sumFlow);

}

@Override

public void readFields(DataInput dataInput) throws IOException {

this.upFlow = dataInput.readLong();

this.downFlow = dataInput.readLong();

this.sumFlow = dataInput.readLong();

}

//5 Override toString

@Override

public String toString() {

return upFlow + "\t" + downFlow + "\t" + sumFlow;

}

}

(2) Write the Mapper class

package com.atguigu.mapreduce.writable;

import org.apache.hadoop.io.LongWritable;

import org.apache.hadoop.io.Text;

import org.apache.hadoop.mapreduce.Mapper;

import java.io.IOException;

/**

* @author shkstart

* @create 2021-04-07 11:25

*/

public class FlowMapper extends Mapper<LongWritable,Text,Text,FlowBean> {

private Text outk = new Text();

private FlowBean outv = new FlowBean();

@Override

protected void map(LongWritable key, Text value, Context context) throws IOException, InterruptedException {

//1 Read one line and convert it to a string

String line = value.toString();

//2 Split the line on tabs

String[] split = line.split("\t");

//3 Pick out the fields we need: phone number, upstream traffic, downstream traffic

String phone = split[1];

String up = split[split.length - 3];

String down = split[split.length - 2];

//4 Populate outk and outv

outk.set(phone);

outv.setUpFlow(Long.parseLong(up));

outv.setDownFlow(Long.parseLong(down));

outv.setSumFlow();

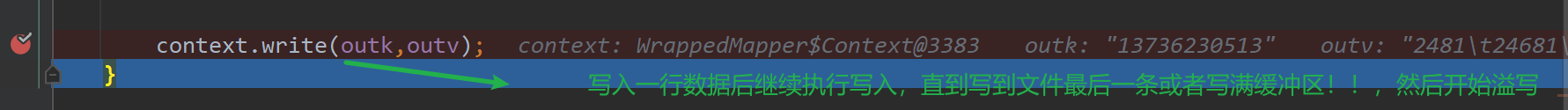

context.write(outk,outv);

}

}

(3) Write the Reducer class

package com.atguigu.mapreduce.writable;

import org.apache.hadoop.io.Text;

import org.apache.hadoop.mapreduce.Reducer;

import java.io.IOException;

/**

* @author shkstart

* @create 2021-04-07 14:53

*/

public class FlowReducer extends Reducer<Text, FlowBean,Text,FlowBean>{

private FlowBean outv = new FlowBean();

@Override

protected void reduce(Text key, Iterable<FlowBean> values, Context context) throws IOException, InterruptedException {

long totalUp = 0;

long totalDown = 0;

//1 Iterate over values and accumulate the upstream and downstream traffic separately

for(FlowBean flowBean : values){

totalUp += flowBean.getUpFlow();

totalDown += flowBean.getDownFlow();

}

//2 Populate the output value

outv.setUpFlow(totalUp);

outv.setDownFlow(totalDown);

outv.setSumFlow();

//3 Write out the key and outv

context.write(key,outv);

}

}

(4) Write the Driver class

package com.atguigu.mapreduce.writable;

import org.apache.hadoop.conf.Configuration;

import org.apache.hadoop.fs.Path;

import org.apache.hadoop.io.Text;

import org.apache.hadoop.mapreduce.Job;

import org.apache.hadoop.mapreduce.lib.input.FileInputFormat;

import org.apache.hadoop.mapreduce.lib.output.FileOutputFormat;

import java.io.IOException;

/**

* @author shkstart

* @create 2021-04-07 15:03

*/

public class FlowDriver {

public static void main(String[] args) throws IOException, ClassNotFoundException, InterruptedException {

//1 Get the job object

Configuration conf = new Configuration();

Job job = Job.getInstance(conf);

//2 Register the Driver class

job.setJarByClass(FlowDriver.class);

//3 Register the Mapper and Reducer

job.setMapperClass(FlowMapper.class);

job.setReducerClass(FlowReducer.class);

//4 Set the key/value types of the map output

job.setMapOutputKeyClass(Text.class);

job.setMapOutputValueClass(FlowBean.class);

//5 Set the key/value types of the final output

job.setOutputKeyClass(Text.class);

job.setOutputValueClass(FlowBean.class);

//6 Set the input and output paths

FileInputFormat.setInputPaths(job, new Path("C:\\Users\\Administrator\\Desktop\\phone_data.txt"));

FileOutputFormat.setOutputPath(job,new Path("C:\\Users\\Administrator\\Desktop\\jar1"));

//7 Submit the job

boolean b = job.waitForCompletion(true);

System.exit(b ? 0 : 1);

}

}

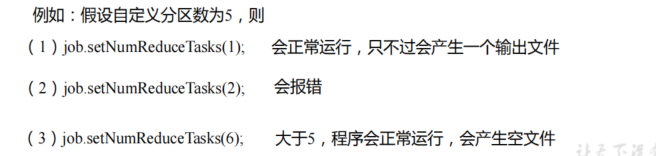

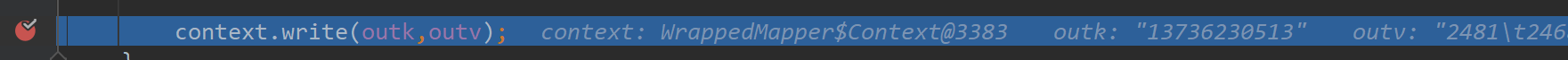

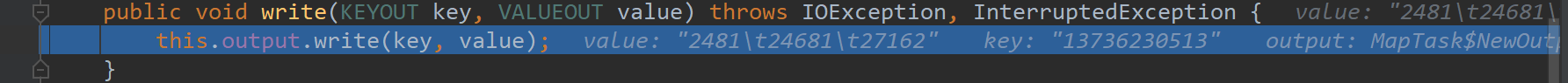

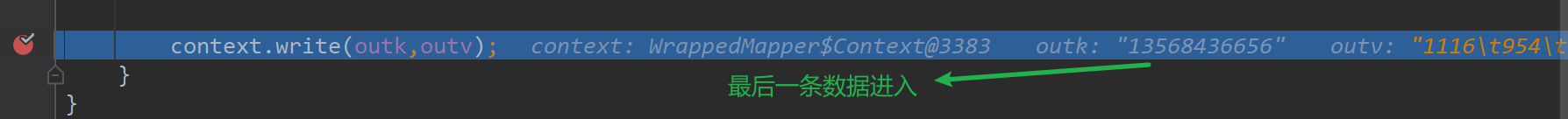

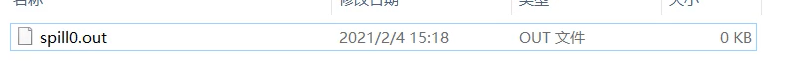

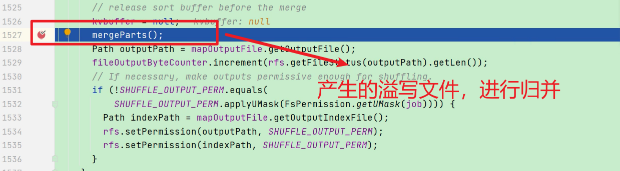

Note: make good use of the debugger

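Chapter 3 MapReduce Framework Internals

3.1 InputFormat: Data Input

3.1.1 Splits and How MapTask Parallelism Is Determined

- 1) The parallelism of a job's Map phase is determined by the number of splits the client computes when it submits the job

- 2) Each split is assigned to one MapTask instance, and the instances run in parallel

- 3) By default, split size = BlockSize

- 4) Splitting does not consider the data set as a whole; each file is split on its own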
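The split rules above can be illustrated with a small calculation. The sketch below is a simplified, Hadoop-free model of how one file is cut into splits. The clamp of the block size between a minimum and a maximum, and the 1.1 slack factor that keeps a slightly oversized tail in one split, are written from memory of Hadoop's FileInputFormat and should be treated as assumptions to check against your version. `SplitSketch` and its method names are hypothetical.

```java
import java.util.ArrayList;
import java.util.List;

// Hypothetical sketch of how the splits of a single file are sized.
public class SplitSketch {
    // A remainder of up to 1.1 split sizes is kept as one split (assumed value).
    static final double SPLIT_SLOP = 1.1;

    // Split size is the block size clamped to the range [minSize, maxSize].
    public static long computeSplitSize(long blockSize, long minSize, long maxSize) {
        return Math.max(minSize, Math.min(maxSize, blockSize));
    }

    // Returns the length in bytes of each split for one file.
    public static List<Long> splitLengths(long fileLength, long splitSize) {
        List<Long> lengths = new ArrayList<>();
        long remaining = fileLength;
        while ((double) remaining / splitSize > SPLIT_SLOP) {
            lengths.add(splitSize);
            remaining -= splitSize;
        }
        if (remaining != 0) {
            lengths.add(remaining);
        }
        return lengths;
    }

    public static void main(String[] args) {
        long mb = 1024L * 1024L;
        long splitSize = computeSplitSize(128 * mb, 1, Long.MAX_VALUE);
        // 300 MB file: three splits of 128 MB, 128 MB and 44 MB
        System.out.println(splitLengths(300 * mb, splitSize).size());
        // 129 MB file: one split, because 129 / 128 is below the 1.1 slack
        System.out.println(splitLengths(129 * mb, splitSize).size());
    }
}
```

Because each file is split on its own, the number of MapTasks for a job is the sum of the per-file split counts.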

3.1.2 FileInputFormat Split Source Walkthrough and Split Mechanism (input.getSplits(job))

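3.1.4 FileInputFormat Implementation: TextInputFormat

- TextInputFormat is the default FileInputFormat implementation

- It reads records line by line

- The key is the byte offset at which the line starts within the whole file, of type LongWritable

- The value is the content of the line, excluding any line terminators (line feed and carriage return), of type Text

As an example, suppose a split contains the following 4 text records.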

Rich learning form

Intelligent learning engine

Learning more convenient

From the real demand for more close to the enterprise

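Each record is represented as the following key/value pair (the offsets assume every line ends with a two-byte \r\n terminator):

(0,Rich learning form)

(20,Intelligent learning engine)

(49,Learning more convenient)

(75,From the real demand for more close to the enterprise)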
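The keys in this example are byte offsets, so they depend on how many bytes the line terminator takes. The following sketch recomputes them for the four records; `LineOffsets` is a hypothetical helper, not part of Hadoop, and it assumes the text is UTF-8 encoded.

```java
import java.nio.charset.StandardCharsets;
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;

// Hypothetical helper that recomputes the starting byte offset of each line.
public class LineOffsets {
    // terminatorBytes is 1 for "\n" line endings and 2 for "\r\n".
    public static List<Long> offsets(List<String> lines, int terminatorBytes) {
        List<Long> result = new ArrayList<>();
        long position = 0;
        for (String line : lines) {
            result.add(position);
            position += line.getBytes(StandardCharsets.UTF_8).length + terminatorBytes;
        }
        return result;
    }

    public static void main(String[] args) {
        List<String> lines = Arrays.asList(
                "Rich learning form",
                "Intelligent learning engine",
                "Learning more convenient",
                "From the real demand for more close to the enterprise");
        System.out.println(offsets(lines, 2)); // [0, 20, 49, 75] with \r\n
        System.out.println(offsets(lines, 1)); // [0, 19, 47, 72] with \n
    }
}
```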

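3.1.5 FileInputFormat Implementation: CombineTextInputFormat

- The framework's default TextInputFormat plans splits file by file: however small a file is, it becomes a separate split and is handed to its own MapTask. A large number of small files therefore produces a large number of MapTasks, which is very inefficient

- CombineTextInputFormat is meant for jobs with too many small files. It can logically plan several small files into one split, so that they are handled by a single MapTask

3.1.6 CombineTextInputFormat Hands-On Example

1: Requirement

2: Implementation

(1) Without any changes, run the WordCount program from section 1.5 and observe that the number of splits is 4.

(2) Add the following code to WordCountDriver, run the program, and observe the number of splits

(a) Add the following code to the driver class:

// If no InputFormat is set, TextInputFormat.class is used by default

job.setInputFormatClass(CombineTextInputFormat.class);

// Set the maximum virtual-storage split size to 4 MB

CombineTextInputFormat.setMaxInputSplitSize(job, 4194304);

(b) The run produces 1 split.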

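3.2 MapReduce Workflow

3.3 Shuffle Mechanism (Key Interview Topic)

3.3.1 Shuffle Mechanism

The data processing that takes place after the map method and before the reduce method is called Shuffle.

3.3.2 Partitioning

- 1. Default Partitioner

The default partition is obtained by taking the key's hashCode modulo the number of ReduceTasks. The user cannot control which key ends up in which partition.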
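The default rule can be sketched in a few lines of plain Java. The formula below, including the mask that clears the sign bit before the modulo, follows Hadoop's HashPartitioner as far as I recall it; the class `HashPartitionSketch` is hypothetical. It uses ordinary Java objects as keys, so the partition numbers it prints will generally differ from those of a real job, where Text.hashCode() is used.

```java
// Hypothetical, Hadoop-free sketch of hash partitioning.
public class HashPartitionSketch {
    // Masking with Integer.MAX_VALUE clears the sign bit, so the result of
    // the modulo is never negative even when hashCode() is.
    public static int getPartition(Object key, int numReduceTasks) {
        return (key.hashCode() & Integer.MAX_VALUE) % numReduceTasks;
    }

    public static void main(String[] args) {
        for (String word : new String[] {"a", "b", "hadoop"}) {
            System.out.println(word + " -> partition " + getPartition(word, 2));
        }
    }
}
```

The same key always maps to the same partition, which is what guarantees that all values for one key reach the same ReduceTask.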

Example 1: raw data

Example 1: configured to produce two files

Example 1: result:

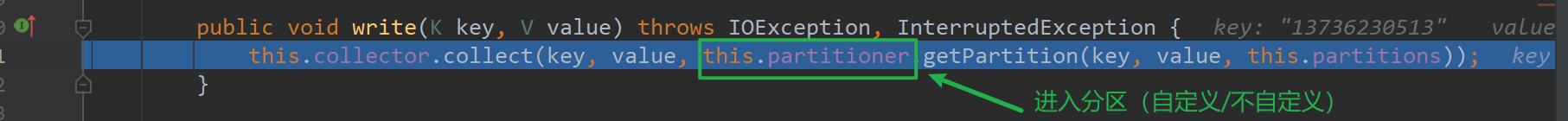

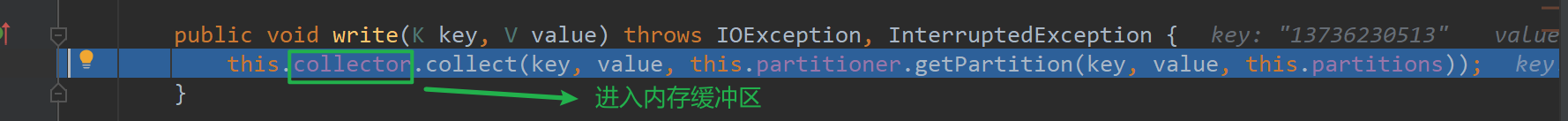

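Source walkthrough (debug): flow when the number of ReduceTasks is set to 2

Source walkthrough (debug): flow when it is not set to 2 and defaults to 1 partition

Only one file, number 0, is produced

- 3. Steps for a Custom Partitioner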

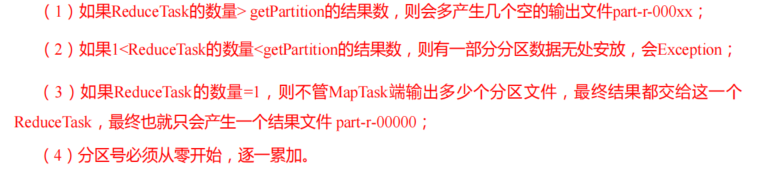

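- 4. Partitioning Summary

Conclusion: if the number of ReduceTasks is not set, it defaults to 1; the HashPartitioner method is never entered and only one output file is produced

If the number of ReduceTasks is greater than the number of custom partitions (the partition values), the program runs normally but produces empty output files

If the number of ReduceTasks is greater than 1 but less than the number of custom partitions, the program fails with an error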
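The summary can be restated as a small decision function. This is only a sketch of the rules as these notes describe them, with one addition from my own understanding: a job with exactly one ReduceTask writes a single file regardless of the partitioner. `ReduceTaskCheck` and its `Outcome` values are hypothetical names, and map-only jobs with zero ReduceTasks are left out.

```java
// Hypothetical helper that restates the partitioning summary as code.
public class ReduceTaskCheck {
    public enum Outcome { SINGLE_FILE, ONE_FILE_PER_PARTITION, EMPTY_FILES, ERROR }

    // numReduceTasks: the value passed to job.setNumReduceTasks (at least 1 here).
    // numPartitions: how many distinct partition numbers the partitioner returns.
    public static Outcome check(int numReduceTasks, int numPartitions) {
        if (numReduceTasks < 1 || numPartitions < 1) {
            throw new IllegalArgumentException("both counts must be at least 1 in this sketch");
        }
        if (numReduceTasks == 1) {
            return Outcome.SINGLE_FILE;            // partitioner is effectively bypassed
        }
        if (numReduceTasks < numPartitions) {
            return Outcome.ERROR;                  // some partition has no ReduceTask
        }
        if (numReduceTasks > numPartitions) {
            return Outcome.EMPTY_FILES;            // surplus ReduceTasks write empty files
        }
        return Outcome.ONE_FILE_PER_PARTITION;
    }

    public static void main(String[] args) {
        int partitions = 5;
        for (int tasks : new int[] {1, 3, 5, 6}) {
            System.out.println(tasks + " ReduceTasks -> " + check(tasks, partitions));
        }
    }
}
```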

- 5. Case Analysis

3.3.3 Partitioning Hands-On Example

Building on the earlier Writable example, add a partitioner class

package com.atguigu.mapreduce.partitioner2;

import org.apache.hadoop.io.Text;

import org.apache.hadoop.mapreduce.Partitioner;

/**

* @author shkstart

* @create 2021-04-08 17:05

*/

public class ProvincePartitioner extends Partitioner<Text,FlowBean> {

@Override

public int getPartition(Text text, FlowBean flowBean, int i) {// text is the phone number

// Take the first three digits of the phone number

String phone = text.toString();

String prePhone = phone.substring(0,3);

// Choose the partition number from the prefix

int partition;

if("136".equals(prePhone)){

// Phone numbers starting with 136 go to the file for partition 0

partition = 0;

}else if("137".equals(prePhone)){

// Phone numbers starting with 137 go to the file for partition 1

partition = 1;

}else if("138".equals(prePhone)){

partition = 2;

}else if("139".equals(prePhone)){

partition = 3;

}else {

partition = 4;

}

// Return the partition number

return partition;

}

}
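The prefix rule in the partitioner can also be written as a lookup table, which is easier to extend and to test outside Hadoop. The sketch below is a hypothetical, Hadoop-free variant: `PrefixPartitionSketch` and `partitionFor` are illustrative names, and the guard for inputs shorter than three characters is an addition that the class above does not have (there, substring(0,3) would throw on such input).

```java
import java.util.HashMap;
import java.util.Map;

// Hypothetical, Hadoop-free version of the prefix-to-partition rule.
public class PrefixPartitionSketch {
    private static final Map<String, Integer> PREFIX_TO_PARTITION = new HashMap<>();
    private static final int DEFAULT_PARTITION = 4;

    static {
        PREFIX_TO_PARTITION.put("136", 0);
        PREFIX_TO_PARTITION.put("137", 1);
        PREFIX_TO_PARTITION.put("138", 2);
        PREFIX_TO_PARTITION.put("139", 3);
    }

    // Returns the partition for a phone number; anything unknown, null, or
    // shorter than three characters falls into the default partition.
    public static int partitionFor(String phone) {
        if (phone == null || phone.length() < 3) {
            return DEFAULT_PARTITION;
        }
        return PREFIX_TO_PARTITION.getOrDefault(phone.substring(0, 3), DEFAULT_PARTITION);
    }

    public static void main(String[] args) {
        System.out.println(partitionFor("13612345678"));
        System.out.println(partitionFor("15012345678"));
    }
}
```

In a real Partitioner the same lookup would sit inside getPartition, and the number of ReduceTasks would have to cover partitions 0 through 4.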

Add the custom partitioner and the ReduceTask count to the driver

//8 Specify the custom partitioner

job.setPartitionerClass(ProvincePartitioner.class);

//9 Also set a matching number of ReduceTasks

job.setNumReduceTasks(5);

Result:

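3.3.4 WritableComparable Sorting

- Both MapTask and ReduceTask sort data by key

- The data of every application is sorted, whether or not the logic needs it

- The default order is lexicographic, and the sort is implemented with quicksort.

- Kinds of sorting: partial sort, total sort, auxiliary sort, secondary sort

How custom sorting with WritableComparable works

Implementing the WritableComparable interface and overriding its compareTo method is enough to get custom sorting.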

@Override

public int compareTo(FlowBean bean) {

return 0;

}
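The placeholder above always returns 0, which treats every key as equal. What a useful compareTo looks like can be tried in plain Java first. The sketch below sorts records by total traffic in descending order; `TrafficRecord` is a hypothetical class that implements java.lang.Comparable only, not Hadoop's WritableComparable, and the phone values in main are made up.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.Collections;
import java.util.List;

// Hypothetical record that sorts by total traffic, largest first.
public class TrafficRecord implements Comparable<TrafficRecord> {
    private final String phone;
    private final long sumFlow;

    public TrafficRecord(String phone, long sumFlow) {
        this.phone = phone;
        this.sumFlow = sumFlow;
    }

    @Override
    public int compareTo(TrafficRecord other) {
        // Arguments are reversed to get descending order; equal totals give 0.
        return Long.compare(other.sumFlow, this.sumFlow);
    }

    // Returns the phone values in sorted order, leaving the input untouched.
    public static List<String> sortedPhones(List<TrafficRecord> records) {
        List<TrafficRecord> copy = new ArrayList<>(records);
        Collections.sort(copy);
        List<String> phones = new ArrayList<>();
        for (TrafficRecord r : copy) {
            phones.add(r.phone);
        }
        return phones;
    }

    public static void main(String[] args) {
        List<TrafficRecord> records = Arrays.asList(
                new TrafficRecord("p1", 10),
                new TrafficRecord("p2", 30),
                new TrafficRecord("p3", 20));
        System.out.println(sortedPhones(records)); // [p2, p3, p1]
    }
}
```

Returning 0 for equal totals matters: a compareTo that never returns 0 breaks the Comparable contract and can make sort results unpredictable.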

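3.3.5 WritableComparable Sorting Hands-On Example (Total Sort)

Code

(1) The FlowBean class extends the version from requirement 1 with a comparison method

- Note 1: it implements the WritableComparable interface

- Note 2: it overrides the compareTo method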

package com.atguigu.mapreduce.writableComparable;

import org.apache.hadoop.io.WritableComparable;

import java.io.DataInput;

import java.io.DataOutput;

import java.io.IOException;

/**

* @author shkstart

* @create 2021-04-07 11:15

*/

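//1 Implement the WritableComparable interface

public class FlowBean implements WritableComparable<FlowBean> {

private long upFlow;// upstream traffic

private long downFlow; // downstream traffic

private long sumFlow;// total traffic

//2 Provide a no-argument constructor

public FlowBean() {

}

//3 Provide getters and setters for the three fields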

public long getUpFlow() {

return upFlow;

}

public long getDownFlow() {

return downFlow;

}

public long getSumFlow(){

return sumFlow;

}

public void setDownFlow(long downFlow) {

this.downFlow = downFlow;

}

public void setSumFlow() {

this.sumFlow = this.upFlow + this.downFlow;

}

public void setUpFlow(long upFlow) {

this.upFlow = upFlow;

}

//4 Implement the serialization and deserialization methods; the field order must be identical in both

@Override

public void write(DataOutput dataOutput) throws IOException {

dataOutput.writeLong(upFlow);

dataOutput.writeLong(downFlow);

dataOutput.writeLong(sumFlow);

}

@Override

public void readFields(DataInput dataInput) throws IOException {

this.upFlow = dataInput.readLong();

this.downFlow = dataInput.readLong();

this.sumFlow = dataInput.readLong();

}

//5 Override toString

@Override

public String toString() {

return upFlow + "\t" + downFlow + "\t" + sumFlow;

}

// Comparison used for sorting

@Override

public int compareTo(FlowBean o){

// Sort by total traffic, largest first

if(this.sumFlow > o.sumFlow){

return -1;

}else if(this.sumFlow < o.sumFlow){

return 1;

}else{

return 0;

}

}

}

(2) Write the Mapper class

- Note 1: input k,v types are LongWritable, Text

- Note 2: output k,v types are FlowBean, Text

package com.atguigu.mapreduce.writableComparable;

import org.apache.hadoop.io.LongWritable;

import org.apache.hadoop.io.Text;

import org.apache.hadoop.mapreduce.Mapper;

import java.io.IOException;

/**

* @author shkstart

* @create 2021-04-07 11:25

*/

public class FlowMapper extends Mapper<LongWritable,Text,FlowBean,Text>{

FlowBean outk = new FlowBean();

Text outv = new Text();

@Override

protected void map(LongWritable key, Text value, Context context) throws IOException, InterruptedException {

//1 Read one line

String line = value.toString();

//2 Split the line on "\t"

String[] split = line.split("\t");

//3 Populate outk and outv

outk.setUpFlow(Long.parseLong(split[1]));

outk.setDownFlow(Long.parseLong(split[2]));

outk.setSumFlow();

outv.set(split[0]);

//4 Write out outk and outv

context.write(outk,outv);

}

}

(3) Write the Reducer class

- Note 1: input k,v types are FlowBean, Text; output k,v types are Text, FlowBean

package com.atguigu.mapreduce.writableComparable;

import org.apache.hadoop.io.Text;

import org.apache.hadoop.mapreduce.Reducer;

import java.io.IOException;

/**

* @author shkstart

* @create 2021-04-07 14:53

*/

public class FlowReducer extends Reducer<FlowBean, Text,Text, FlowBean> {

@Override

protected void reduce(FlowBean key, Iterable<Text> values, Context context) throws IOException, InterruptedException {

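// Iterate over the values and write each one out, so that phone numbers with the same total traffic are all kept

for(Text value : values){

// Swap key and value when writing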

context.write(value,key);

}

}

}

(4) Write the Driver class

package com.atguigu.mapreduce.writableComparable;

import org.apache.hadoop.conf.Configuration;

import org.apache.hadoop.fs.Path;

import org.apache.hadoop.io.Text;

import org.apache.hadoop.mapreduce.Job;

import org.apache.hadoop.mapreduce.lib.input.FileInputFormat;

import org.apache.hadoop.mapreduce.lib.output.FileOutputFormat;

import java.io.IOException;

/**

* @author shkstart

* @create 2021-04-07 15:03

*/

public class FlowDriver {

public static void main(String[] args) throws IOException, ClassNotFoundException, InterruptedException {

//1 Get the job object

Configuration conf = new Configuration();

Job job = Job.getInstance(conf);

//2 Register the Driver class

job.setJarByClass(FlowDriver.class);

//3 Register the Mapper and Reducer

job.setMapperClass(FlowMapper.class);

job.setReducerClass(FlowReducer.class);

//4 Set the key/value types of the map output

job.setMapOutputKeyClass(FlowBean.class);

job.setMapOutputValueClass(Text.class);

//5 Set the key/value types of the final output

job.setOutputKeyClass(Text.class);

job.setOutputValueClass(FlowBean.class);

//6 Set the input and output paths

FileInputFormat.setInputPaths(job, new Path("C:\\Users\\Administrator\\Desktop\\jar1"));

FileOutputFormat.setOutputPath(job,new Path("C:\\Users\\Administrator\\Desktop\\jar2"));

//7 Submit the job

boolean b = job.waitForCompletion(true);

System.exit(b ? 0 : 1);

}

}

Result

3.3.6 WritableComparable Sorting Hands-On Example (Sorting Within Partitions)

3: Hands-on

(1) Add a custom partitioner class, ProvincePartitioner2

package com.atguigu.mapreduce.partitionerandwritableComparable;

import org.apache.hadoop.io.Text;

import org.apache.hadoop.mapreduce.Partitioner;

/**

* @author shkstart

* @create 2021-04-08 17:05

*/

public class ProvincePartitioner2 extends Partitioner<FlowBean,Text> {

@Override

public int getPartition(FlowBean flowBean,Text text ,int numPartitions) {// text is the phone number

// Take the first three digits of the phone number

String phone = text.toString();

String prePhone = phone.substring(0,3);

// Choose the partition number from the prefix

int partition;

if("136".equals(prePhone)){

// Phone numbers starting with 136 go to the file for partition 0

partition = 0;

}else if("137".equals(prePhone)){

// Phone numbers starting with 137 go to the file for partition 1

partition = 1;

}else if("138".equals(prePhone)){

partition = 2;

}else if("139".equals(prePhone)){

partition = 3;

}else {

partition = 4;

}

// Return the partition number

return partition;

}

}

(2) Register the partitioner in the driver class FlowDriver

// Specify the custom partitioner

job.setPartitionerClass(ProvincePartitioner2.class);

// Set the matching number of ReduceTasks

job.setNumReduceTasks(5);

package com.atguigu.mapreduce.partitionerandwritableComparable;

import org.apache.hadoop.conf.Configuration;

import org.apache.hadoop.fs.Path;

import org.apache.hadoop.io.Text;

import org.apache.hadoop.mapreduce.Job;

import org.apache.hadoop.mapreduce.lib.input.FileInputFormat;

import org.apache.hadoop.mapreduce.lib.output.FileOutputFormat;

import java.io.IOException;

/**

* @author shkstart

* @create 2021-04-07 15:03

*/

public class FlowDriver {

public static void main(String[] args) throws IOException, ClassNotFoundException, InterruptedException {

//1 Get the job object

Configuration conf = new Configuration();

Job job = Job.getInstance(conf);

//2 Register the Driver class

job.setJarByClass(FlowDriver.class);

//3 Register the Mapper and Reducer

job.setMapperClass(FlowMapper.class);

job.setReducerClass(FlowReducer.class);

//4 Set the key/value types of the map output

job.setMapOutputKeyClass(FlowBean.class);

job.setMapOutputValueClass(Text.class);

//5 Set the key/value types of the final output

job.setOutputKeyClass(Text.class);

job.setOutputValueClass(FlowBean.class);

// Specify the custom partitioner

job.setPartitionerClass(ProvincePartitioner2.class);

// Set the matching number of ReduceTasks

job.setNumReduceTasks(5);

//6 Set the input and output paths

FileInputFormat.setInputPaths(job, new Path("C:\\Users\\Administrator\\Desktop\\jar1"));

FileOutputFormat.setOutputPath(job,new Path("C:\\Users\\Administrator\\Desktop\\jar9"));

//7 Submit the job

boolean b = job.waitForCompletion(true);

System.exit(b ? 0 : 1);

}

}

FlowBean class

package com.atguigu.mapreduce.partitionerandwritableComparable;

import org.apache.hadoop.io.WritableComparable;

import java.io.DataInput;

import java.io.DataOutput;

import java.io.IOException;

/**

* @author shkstart

* @create 2021-04-07 11:15

*/

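//1 Implement the WritableComparable interface

public class FlowBean implements WritableComparable<FlowBean> {

private long upFlow;// upstream traffic

private long downFlow; // downstream traffic

private long sumFlow;// total traffic

//2 Provide a no-argument constructor

public FlowBean() {

}

//3 Provide getters and setters for the three fields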

public long getUpFlow() {

return upFlow;

}

public long getDownFlow() {

return downFlow;

}

public long getSumFlow(){

return sumFlow;

}

public void setDownFlow(long downFlow) {

this.downFlow = downFlow;

}

public void setSumFlow() {

this.sumFlow = this.upFlow + this.downFlow;

}

public void setUpFlow(long upFlow) {

this.upFlow = upFlow;

}

//4 Implement the serialization and deserialization methods; the field order must be identical in both

@Override

public void write(DataOutput dataOutput) throws IOException {

dataOutput.writeLong(upFlow);

dataOutput.writeLong(downFlow);

dataOutput.writeLong(sumFlow);

}

@Override

public void readFields(DataInput dataInput) throws IOException {

this.upFlow = dataInput.readLong();

this.downFlow = dataInput.readLong();

this.sumFlow = dataInput.readLong();

}

//5 Override toString

@Override

public String toString() {

return upFlow + "\t" + downFlow + "\t" + sumFlow;

}

// Comparison used for sorting

@Override

public int compareTo(FlowBean o){

// Sort by total traffic, largest first

if(this.sumFlow > o.sumFlow){

return -1;

}else if(this.sumFlow < o.sumFlow){

return 1;

}else{

return 0;

}

}

}

FlowMapper class

package com.atguigu.mapreduce.partitionerandwritableComparable;

import org.apache.hadoop.io.LongWritable;

import org.apache.hadoop.io.Text;

import org.apache.hadoop.mapreduce.Mapper;

import java.io.IOException;

/**

* @author shkstart

* @create 2021-04-07 11:25

*/

public class FlowMapper extends Mapper<LongWritable,Text, FlowBean,Text>{

FlowBean outk = new FlowBean();

Text outv = new Text();

@Override

protected void map(LongWritable key, Text value, Context context) throws IOException, InterruptedException {

//1 Read one line

String line = value.toString();

//2 Split the line on "\t"

String[] split = line.split("\t");

//3 Populate outk and outv

outk.setUpFlow(Long.parseLong(split[1]));

outk.setDownFlow(Long.parseLong(split[2]));

outk.setSumFlow();

outv.set(split[0]);

//4 Write out outk and outv

context.write(outk,outv);

}

}

FlowReducer class

package com.atguigu.mapreduce.partitionerandwritableComparable;

import org.apache.hadoop.io.Text;

import org.apache.hadoop.mapreduce.Reducer;

import java.io.IOException;

/**

* @author shkstart

* @create 2021-04-07 14:53

*/

public class FlowReducer extends Reducer<FlowBean, Text,Text, FlowBean> {

@Override

protected void reduce(FlowBean key, Iterable<Text> values, Context context) throws IOException, InterruptedException {

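// Iterate over the values and write each one out, so that phone numbers with the same total traffic are all kept

for(Text value : values){

// Swap key and value when writing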

context.write(value,key);

}

}

}

Result:

3.3.7 Combiner

Specify WordCountReducer as the Combiner in the WordCountDriver class

// Enable a Combiner and choose which class supplies its logic

job.setCombinerClass(WordCountReducer.class);
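A Combiner runs the reduce logic locally on the output of each MapTask, so fewer records have to travel to the ReduceTasks. The effect can be sketched in plain Java: the hypothetical class `CombinerSketch` below pre-aggregates the words emitted by one map task. Reusing the reducer as a combiner is safe here because summing is associative and commutative; an operation such as averaging would give wrong results if combined this way.

```java
import java.util.Arrays;
import java.util.List;
import java.util.Map;
import java.util.TreeMap;

// Hypothetical, Hadoop-free sketch of what a Combiner does for WordCount.
public class CombinerSketch {
    // Takes the words one map task emitted (each standing for a (word, 1)
    // pair) and sums the counts of identical words locally.
    public static Map<String, Integer> combine(List<String> mapOutputWords) {
        Map<String, Integer> local = new TreeMap<>();
        for (String word : mapOutputWords) {
            local.merge(word, 1, Integer::sum);
        }
        return local;
    }

    public static void main(String[] args) {
        List<String> mapOutput = Arrays.asList("a", "b", "a", "a", "b");
        Map<String, Integer> combined = combine(mapOutput);
        System.out.println("records before combine: " + mapOutput.size()); // 5
        System.out.println("records after combine: " + combined.size());   // 2
        System.out.println(combined);                                      // {a=3, b=2}
    }
}
```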

3.4 OutputFormat: Data Output

3.4.1 OutputFormat Implementations

3.4.2 Custom OutputFormat Hands-On Example

3.5 MapReduce Core Source Walkthrough

3.5.1 How MapTask Works

Five phases: Read, Map, Collect, Spill, and Merge.

3.5.2 How ReduceTask Works

Three phases: Copy, Sort, and Reduce.

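3.5.3 How ReduceTask Parallelism Is Determined

- MapTask parallelism is determined by the number of splits, which in turn depends on the input files and the split rules

- ReduceTask parallelism can be set manually; the default is 1

// The default is 1; set it to 4 manually

job.setNumReduceTasks(4);

In production, the right number of ReduceTasks is usually found by testing.

3.5.4 MapTask & ReduceTask Source Walkthrough

- MapTask source walkthrough

Example:

- ReduceTask source walkthrough

3.6 Join Applications

3.6.1 Reduce Join

3.6.2 Reduce Join Hands-On Example

3.6.3 Map Join

3.6.4 Map Join Hands-On Example

3.7 Data Cleaning (ETL)

3.8 MapReduce Development Summary

Chapter 4 Hadoop Data Compression

4.1 Comparison of Compression Algorithms

4.2 Compression Performance Comparison

4.3 Where to Apply Compression

4.4 Compression Hands-On Examples

4.4.1 Compressing Map Output

4.4.2 Compressing Reduce Output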

4.4.1 Map 输出端采用压缩

4.4.2 Reduce 输出端采用压缩
