PyTorch website > Docs (official documentation) > torch.nn (tools for building neural networks)

Containers: define the skeleton (overall structure) of a neural network.

The remaining sections of torch.nn include the core building blocks of convolutional neural networks:

Convolution Layers

Pooling layers

Padding Layers

Non-linear Activations (weighted sum, nonlinearity)

Non-linear Activations (other)

Normalization Layers

Recurrent Layers

Transformer Layers

Linear Layers

Dropout Layers

Sparse Layers

Distance Functions

Loss Functions

Vision Layers

Shuffle Layers

DataParallel Layers (multi-GPU, distributed)

Utilities

Quantized Functions

Lazy Modules Initialization

Containers has six modules; the most commonly used is Module, the base class that every neural network should subclass.
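As a rough illustration of how a container acts as a skeleton, the sketch below uses nn.Sequential (another of the six containers) to chain a few layers from the categories listed above. The layer sizes and the 28x28 input are assumptions chosen only for illustration.

```python
import torch
import torch.nn as nn

# Sequential runs its child modules in the order they are listed.
# All sizes below are illustrative assumptions.
net = nn.Sequential(
    nn.Conv2d(1, 20, 5),          # convolution layer: 1 -> 20 channels, 5x5 kernel
    nn.ReLU(),                    # non-linear activation
    nn.MaxPool2d(2),              # pooling layer: halves height and width
    nn.Flatten(),                 # (N, 20, 12, 12) -> (N, 2880)
    nn.Linear(20 * 12 * 12, 10),  # linear layer
)

x = torch.zeros(1, 1, 28, 28)  # dummy batch: one single-channel 28x28 image
y = net(x)
print(y.shape)  # torch.Size([1, 10])
```

Sequential suits a plain chain of layers; subclassing Module is the more flexible choice when forward needs custom logic.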

Module example code:

import torch.nn as nn

import torch.nn.functional as F

class Model(nn.Module):

def __init__(self):

super().__init__()

self.conv1 = nn.Conv2d(1, 20, 5)

self.conv2 = nn.Conv2d(20, 20, 5)

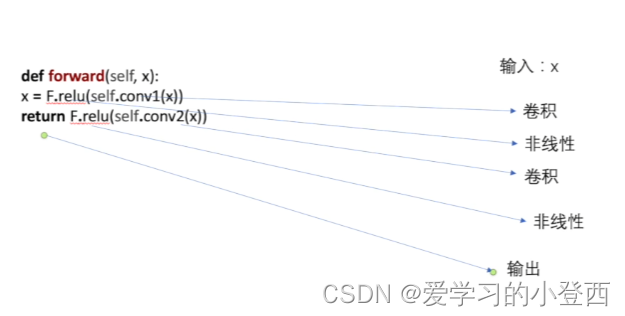

def forward(self, x):

x = F.relu(self.conv1(x))

return F.relu(self.conv2(x))
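To see what this Model does to a tensor, here is a minimal sketch that pushes a dummy input through it. The 28x28 single-channel input is an assumption for illustration; any input with 1 channel and at least 9x9 pixels would work.

```python
import torch
import torch.nn as nn
import torch.nn.functional as F


class Model(nn.Module):
    def __init__(self):
        super().__init__()
        self.conv1 = nn.Conv2d(1, 20, 5)
        self.conv2 = nn.Conv2d(20, 20, 5)

    def forward(self, x):
        x = F.relu(self.conv1(x))
        return F.relu(self.conv2(x))


model = Model()
x = torch.randn(1, 1, 28, 28)  # assumed input: batch of 1, 1 channel, 28x28
y = model(x)                   # calling the module runs forward()

# Each 5x5 convolution without padding shrinks height and width by 4.
print(y.shape)  # torch.Size([1, 20, 20, 20])

# Submodules assigned as attributes in __init__ are registered automatically.
print([name for name, _ in model.named_children()])  # ['conv1', 'conv2']
```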

Hands-on code:

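import torch
import torch.nn as nn


class Peipei(nn.Module):
    def __init__(self) -> None:
        super().__init__()

    def forward(self, input):
        # Add 1 to the input tensor
        output = input + 1
        return output


pp1 = Peipei()
x = torch.tensor(1.0)
output = pp1(x)  # calling the module invokes forward()
print(output)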

Output: tensor(2.)
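The call pp1(x) works because nn.Module implements __call__, which in turn runs forward. The sketch below tries to make that visible with a forward hook; the `calls` list is a hypothetical helper introduced only for this illustration.

```python
import torch
import torch.nn as nn


class Peipei(nn.Module):
    def forward(self, input):
        return input + 1


pp1 = Peipei()
calls = []  # hypothetical log, used only for this illustration

# A forward hook fires when the module is invoked as pp1(x).
handle = pp1.register_forward_hook(
    lambda module, inputs, output: calls.append(output)
)

x = torch.tensor(1.0)
out_call = pp1(x)            # goes through nn.Module.__call__, then forward()
out_direct = pp1.forward(x)  # bypasses __call__, so the hook does not fire

print(out_call, out_direct)  # tensor(2.) tensor(2.)
print(len(calls))            # 1
handle.remove()
```

Both calls return the same value, but only pp1(x) triggers hooks, which is one reason to call the module itself instead of calling forward directly.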
