Author: 加勒比海带 (QQ 2479200884)

Paper title: Searching for MobileNetV3

Paper: https://arxiv.org/abs/1905.02244

Source code: https://github.com/xiaolai-sqlai/mobilenetv3

I. Paper Overview

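MobileNetV3 is a lightweight network architecture that Google published in May 2019 (arXiv 1905.02244). Building on the two earlier versions, it adds neural architecture search (NAS) and the h-swish activation function and introduces the SE channel-attention mechanism. It does well on both accuracy and speed and has been widely taken up in academia and industry.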

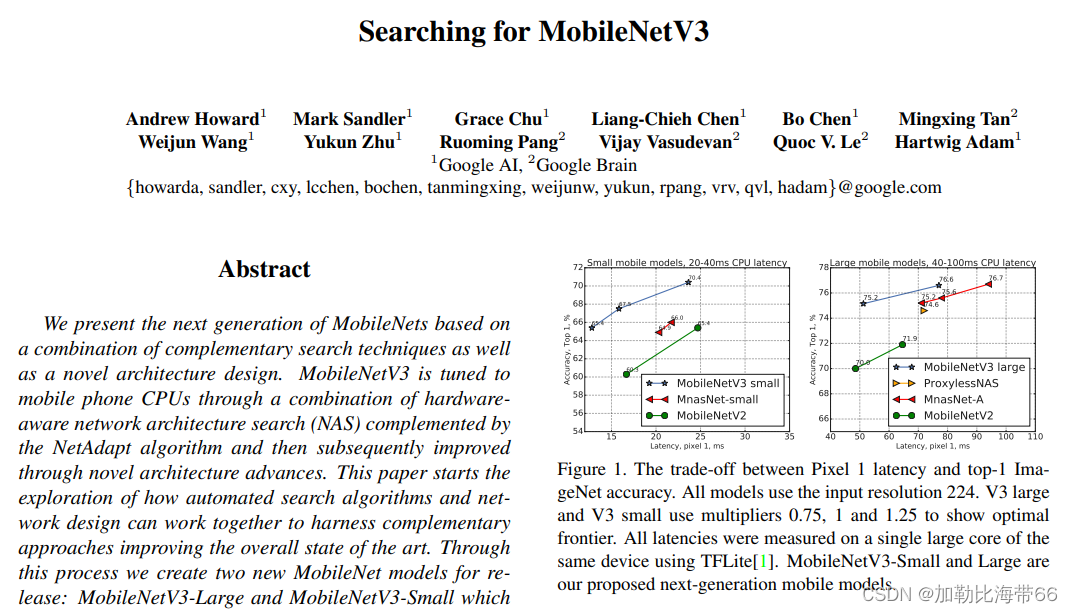

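What exactly does MobileNetV3 gain over MobileNetV2? In the abstract of the paper, the authors report that MobileNetV3-Large is 3.2% more accurate on ImageNet classification while reducing latency by 20%.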

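In my own experiment combining it with YOLOv5, the model summary read: 343 layers, 1376844 parameters, 1376844 gradients, 2.3 GFLOPS. Both the parameter count and the floating-point operation count drop substantially, and inference is considerably faster.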

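Its main features, in summary:

1. The paper releases two versions, Large and Small, targeting high- and low-resource use cases respectively.

2. The architecture builds on the NAS-derived MnasNet (which outperforms MobileNetV2), with its parameters obtained through NAS.

3. It adopts the depthwise separable convolution from MobileNetV1.

4. It adopts the inverted residual structure with linear bottleneck from MobileNetV2.

5. It adds a lightweight attention module based on the squeeze-and-excitation (SE) structure.

6. It uses a new activation function, h-swish(x).

7. The architecture search combines two techniques: resource-constrained, platform-aware NAS and NetAdapt.

8. It redesigns the final stage at the end of the MobileNetV2 network.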
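The h-swish activation in item 6 replaces the sigmoid inside swish with a piecewise-linear ReLU6 term: h-swish(x) = x * ReLU6(x + 3) / 6. The following is a minimal plain-Python sketch that compares the two at a few points. The function names are chosen here for illustration and are not taken from the paper's code.

```python
import math


def relu6(x: float) -> float:
    """Clamp x to the range [0, 6]."""
    return min(max(x, 0.0), 6.0)


def h_sigmoid(x: float) -> float:
    """Piecewise-linear stand-in for the sigmoid: ReLU6(x + 3) / 6."""
    return relu6(x + 3.0) / 6.0


def h_swish(x: float) -> float:
    """Hard swish: x * ReLU6(x + 3) / 6."""
    return x * h_sigmoid(x)


def swish(x: float) -> float:
    """Original swish: x * sigmoid(x)."""
    return x / (1.0 + math.exp(-x))


if __name__ == "__main__":
    for x in (-4.0, -1.0, 0.0, 1.0, 4.0):
        print(f"x={x:+.1f}  swish={swish(x):+.4f}  h_swish={h_swish(x):+.4f}")
```

Outside the interval [-3, 3] h-swish is exactly 0 or exactly x, so it needs no exponential, which is the point of using it on mobile hardware.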

II. Combining YOLOv5 with the Lightweight MobileNetV3 Network

1. Configure the .yaml file

    # nc, depth_multiple and width_multiple, which YOLOv5 model files also define,
    # are not shown in this listing.

    # anchors
    anchors:
      - [10,13, 16,30, 33,23]  # P3/8
      - [30,61, 62,45, 59,119]  # P4/16
      - [116,90, 156,198, 373,326]  # P5/32

    # YOLOv5 backbone
    backbone:
      # [from, number, module, args]
      # MobileNet_Block args: [out_channels, hidden_dim, kernel_size, stride, use_se, use_hs]
      [[-1, 1, conv_bn_hswish, [16, 2]],                 # 0-P1/2
       [-1, 1, MobileNet_Block, [16, 16, 3, 2, 1, 0]],   # 1-P2/4
       [-1, 1, MobileNet_Block, [24, 72, 3, 2, 0, 0]],   # 2-P3/8
       [-1, 1, MobileNet_Block, [24, 88, 3, 1, 0, 0]],   # 3-P3/8
       [-1, 1, MobileNet_Block, [40, 96, 5, 2, 1, 1]],   # 4-P4/16
       [-1, 1, MobileNet_Block, [40, 240, 5, 1, 1, 1]],  # 5-P4/16
       [-1, 1, MobileNet_Block, [40, 240, 5, 1, 1, 1]],  # 6-P4/16
       [-1, 1, MobileNet_Block, [48, 120, 5, 1, 1, 1]],  # 7-P4/16
       [-1, 1, MobileNet_Block, [48, 144, 5, 1, 1, 1]],  # 8-P4/16
       [-1, 1, MobileNet_Block, [96, 288, 5, 2, 1, 1]],  # 9-P5/32
       [-1, 1, MobileNet_Block, [96, 576, 5, 1, 1, 1]],  # 10-P5/32
       [-1, 1, MobileNet_Block, [96, 576, 5, 1, 1, 1]],  # 11-P5/32
      ]

    # YOLOv5 head
    head:
      [[-1, 1, Conv, [256, 1, 1]],                  # 12
       [-1, 1, nn.Upsample, [None, 2, 'nearest']],  # 13
       [[-1, 8], 1, Concat, [1]],                   # 14 cat backbone P4
       [-1, 1, C3, [256, False]],                   # 15

       [-1, 1, Conv, [128, 1, 1]],                  # 16
       [-1, 1, nn.Upsample, [None, 2, 'nearest']],  # 17
       [[-1, 3], 1, Concat, [1]],                   # 18 cat backbone P3
       [-1, 1, C3, [128, False]],                   # 19 (P3/8-small)

       [-1, 1, Conv, [128, 3, 2]],                  # 20
       [[-1, 16], 1, Concat, [1]],                  # 21 cat head P4
       [-1, 1, C3, [256, False]],                   # 22 (P4/16-medium)

       [-1, 1, Conv, [256, 3, 2]],                  # 23
       [[-1, 12], 1, Concat, [1]],                  # 24 cat head P5
       [-1, 1, C3, [512, False]],                   # 25 (P5/32-large)

       [[19, 22, 25], 1, Detect, [nc, anchors]],    # Detect(P3, P4, P5)
      ]
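The head concatenates backbone layers 3 and 8 as the P3 and P4 features, so those indices have to sit at strides 8 and 16. As a quick sanity check, the sketch below walks the backbone rows and accumulates the stride. It assumes `args[0]` is the output channel count before any width scaling, and that the stride is `args[1]` for `conv_bn_hswish` and `args[3]` for `MobileNet_Block`, as in the listing above. `BACKBONE` and `trace` are names made up for this example.

```python
# (module name, args) pairs copied from the backbone section of the .yaml
BACKBONE = [
    ("conv_bn_hswish", [16, 2]),
    ("MobileNet_Block", [16, 16, 3, 2, 1, 0]),
    ("MobileNet_Block", [24, 72, 3, 2, 0, 0]),
    ("MobileNet_Block", [24, 88, 3, 1, 0, 0]),
    ("MobileNet_Block", [40, 96, 5, 2, 1, 1]),
    ("MobileNet_Block", [40, 240, 5, 1, 1, 1]),
    ("MobileNet_Block", [40, 240, 5, 1, 1, 1]),
    ("MobileNet_Block", [48, 120, 5, 1, 1, 1]),
    ("MobileNet_Block", [48, 144, 5, 1, 1, 1]),
    ("MobileNet_Block", [96, 288, 5, 2, 1, 1]),
    ("MobileNet_Block", [96, 576, 5, 1, 1, 1]),
    ("MobileNet_Block", [96, 576, 5, 1, 1, 1]),
]


def trace(backbone):
    """Return one (layer_index, out_channels, cumulative_stride) tuple per layer."""
    rows, stride = [], 1
    for index, (name, args) in enumerate(backbone):
        out_channels = args[0]
        # The stride sits at a different position for the stem than for the blocks.
        step = args[1] if name == "conv_bn_hswish" else args[3]
        stride *= step
        rows.append((index, out_channels, stride))
    return rows


if __name__ == "__main__":
    for index, out_channels, stride in trace(BACKBONE):
        print(f"layer {index:2d}: {out_channels:3d} channels, stride {stride}")
```

If a block is added to or removed from the backbone, the `from` indices in the head (3, 8, 12, 16) and in `Detect` (19, 22, 25) all need to be shifted to match.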

2. Configure common.py

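    # ---------------- MobileNetV3 ----------------
    import torch.nn as nn  # already imported at the top of YOLOv5's common.py


    class h_sigmoid(nn.Module):
        """Hard sigmoid: ReLU6(x + 3) / 6."""

        def __init__(self, inplace=True):
            super().__init__()
            self.relu = nn.ReLU6(inplace=inplace)

        def forward(self, x):
            return self.relu(x + 3) / 6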

    class h_swish(nn.Module):
        """Hard swish: x * h_sigmoid(x)."""

        def __init__(self, inplace=True):
            super().__init__()
            self.sigmoid = h_sigmoid(inplace=inplace)

        def forward(self, x):
            return x * self.sigmoid(x)

    class SELayer(nn.Module):
        """Squeeze-and-excitation channel attention."""

        def __init__(self, channel, reduction=4):
            super().__init__()
            # Squeeze: global average pooling to one value per channel
            self.avg_pool = nn.AdaptiveAvgPool2d(1)
            # Excitation: FC + ReLU + FC + h-sigmoid
            self.fc = nn.Sequential(
                nn.Linear(channel, channel // reduction),
                nn.ReLU(inplace=True),
                nn.Linear(channel // reduction, channel),
                h_sigmoid(),
            )

        def forward(self, x):
            b, c, _, _ = x.size()
            y = self.avg_pool(x)
            y = y.view(b, c)
            y = self.fc(y).view(b, c, 1, 1)  # learned weight for each channel
            return x * y

    class conv_bn_hswish(nn.Module):
        """
        3x3 convolution + BatchNorm + h-swish. Equivalent to:

        def conv_3x3_bn(inp, oup, stride):
            return nn.Sequential(
                nn.Conv2d(inp, oup, 3, stride, 1, bias=False),
                nn.BatchNorm2d(oup),
                h_swish()
            )
        """

        def __init__(self, c1, c2, stride):
            super().__init__()
            self.conv = nn.Conv2d(c1, c2, 3, stride, 1, bias=False)
            self.bn = nn.BatchNorm2d(c2)
            self.act = h_swish()

        def forward(self, x):
            return self.act(self.bn(self.conv(x)))

        def fuseforward(self, x):
            # Forward pass once BatchNorm has been fused into the conv
            # (newer YOLOv5 releases name this method forward_fuse)
            return self.act(self.conv(x))

    class MobileNet_Block(nn.Module):
        """MobileNetV3 inverted-residual block with optional SE and h-swish."""

        def __init__(self, inp, oup, hidden_dim, kernel_size, stride, use_se, use_hs):
            super().__init__()
            assert stride in [1, 2]
            # Use the residual shortcut only when shape and channel count are unchanged
            self.identity = stride == 1 and inp == oup

            # Input channels equal the expanded width: skip the expansion conv
            if inp == hidden_dim:
                self.conv = nn.Sequential(
                    # dw
                    nn.Conv2d(hidden_dim, hidden_dim, kernel_size, stride, (kernel_size - 1) // 2,
                              groups=hidden_dim, bias=False),
                    nn.BatchNorm2d(hidden_dim),
                    h_swish() if use_hs else nn.ReLU(inplace=True),
                    # Squeeze-and-Excite
                    SELayer(hidden_dim) if use_se else nn.Identity(),
                    # pw-linear
                    nn.Conv2d(hidden_dim, oup, 1, 1, 0, bias=False),
                    nn.BatchNorm2d(oup),
                )
            else:
                # Otherwise expand the channels first
                self.conv = nn.Sequential(
                    # pw
                    nn.Conv2d(inp, hidden_dim, 1, 1, 0, bias=False),
                    nn.BatchNorm2d(hidden_dim),
                    h_swish() if use_hs else nn.ReLU(inplace=True),
                    # dw
                    nn.Conv2d(hidden_dim, hidden_dim, kernel_size, stride, (kernel_size - 1) // 2,
                              groups=hidden_dim, bias=False),
                    nn.BatchNorm2d(hidden_dim),
                    # Squeeze-and-Excite
                    SELayer(hidden_dim) if use_se else nn.Identity(),
                    h_swish() if use_hs else nn.ReLU(inplace=True),
                    # pw-linear
                    nn.Conv2d(hidden_dim, oup, 1, 1, 0, bias=False),
                    nn.BatchNorm2d(oup),
                )

        def forward(self, x):
            y = self.conv(x)
            return x + y if self.identity else y
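To see where the parameter savings come from, the learnable parameters of one `MobileNet_Block` can be counted by hand. The sketch below is plain arithmetic that follows the layer order of the class above (bias-free convolutions, two learnable values per BatchNorm channel, `nn.Linear` layers with bias in the SE module). The helper names `block_params` and `dense_conv_weights` are made up for this example.

```python
def block_params(inp, oup, hidden_dim, kernel_size, use_se, reduction=4):
    """Count learnable parameters of one MobileNet_Block as defined in common.py."""
    total = 0
    if inp != hidden_dim:
        total += inp * hidden_dim                    # 1x1 expansion conv, no bias
        total += 2 * hidden_dim                      # BatchNorm weight + bias
    total += kernel_size * kernel_size * hidden_dim  # depthwise conv: one k x k filter per channel
    total += 2 * hidden_dim                          # BatchNorm weight + bias
    if use_se:
        squeezed = hidden_dim // reduction
        total += hidden_dim * squeezed + squeezed      # first Linear, with bias
        total += squeezed * hidden_dim + hidden_dim    # second Linear, with bias
    total += hidden_dim * oup                        # 1x1 linear projection, no bias
    total += 2 * oup                                 # BatchNorm weight + bias
    return total


def dense_conv_weights(channels, kernel_size):
    """Weights of an ordinary (non-depthwise) k x k conv that keeps the channel count."""
    return kernel_size * kernel_size * channels * channels


if __name__ == "__main__":
    # Backbone layer 3 in the .yaml: 24 -> 24 channels, hidden width 88, 3x3, no SE
    print("layer 3 block:", block_params(24, 24, 88, 3, use_se=False))
    print("depthwise 3x3 at width 88:", 3 * 3 * 88)
    print("dense 3x3 at width 88:", dense_conv_weights(88, 3))
    # Backbone layer 5: 40 -> 40 channels, hidden width 240, 5x5, with SE
    print("layer 5 block:", block_params(40, 40, 240, 5, use_se=True))
```

At the same width, the depthwise convolution needs a factor of `hidden_dim` fewer weights than a dense one. When SE is enabled, its two fully connected layers account for a large share of the block's parameters.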

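3. Modify yolo.py

Find the parse_model function and add the five modules h_sigmoid, h_swish, SELayer, conv_bn_hswish and MobileNet_Block to its module list. Of these, only conv_bn_hswish and MobileNet_Block are referenced directly by the .yaml above.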
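The reason the modules have to be registered is that parse_model prepends the previous layer's output channel count to the args of every module in that list, which is why the .yaml rows omit `inp`. The standalone sketch below imitates that step in simplified form. `expand_args` is a hypothetical name for illustration, the real logic sits inline in `parse_model` and varies between YOLOv5 versions, and `make_divisible` here only mirrors the rounding behaviour of YOLOv5's helper of the same name.

```python
import math


def make_divisible(x, divisor=8):
    """Round x up to the nearest multiple of divisor."""
    return math.ceil(x / divisor) * divisor


def expand_args(c1, args, width_multiple=1.0):
    """Turn YAML args [c2, ...] into constructor args [c1, c2, ...].

    c1 is the output channel count of the layer this module reads from.
    Only the first YAML arg (the output channels) is scaled by width_multiple.
    """
    c2 = make_divisible(args[0] * width_multiple, 8)
    return [c1, c2, *args[1:]]


if __name__ == "__main__":
    # Stem: the input image has 3 channels -> conv_bn_hswish(c1, c2, stride)
    print(expand_args(3, [16, 2]))
    # Backbone layer 2 reads 16 channels from layer 1
    # -> MobileNet_Block(inp, oup, hidden_dim, kernel_size, stride, use_se, use_hs)
    print(expand_args(16, [24, 72, 3, 2, 0, 0]))
```

In this sketch a `width_multiple` other than 1.0 would rescale the output channels but leave `hidden_dim` untouched, so the block widths in the .yaml are best read as tuned for a width multiple of 1.0 unless the registration code handles `hidden_dim` separately.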
