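Classification problems

Perceptron: binary classification

Linear regression outputs a real number; the perceptron outputs a discrete class.

Softmax handles multiclass classification; the perceptron handles binary classification.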

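Convergence theorem (stopping condition): training stops once every example is classified correctly. The theorem guarantees this happens after finitely many updates when the data can be separated with some margin.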
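The update rule and stopping condition can be sketched without any library. This is a minimal illustration, not the course code: the function name `train_perceptron`, the `max_epochs` cap, and the AND dataset are assumptions chosen for the example.

```python
def train_perceptron(data, max_epochs=100):
    """Train a perceptron on (features, label) pairs with labels in {-1, +1}."""
    w = [0.0] * len(data[0][0])
    b = 0.0
    for _ in range(max_epochs):
        mistakes = 0
        for x, y in data:
            score = sum(wi * xi for wi, xi in zip(w, x)) + b
            if y * score <= 0:  # misclassified, or exactly on the boundary
                w = [wi + y * xi for wi, xi in zip(w, x)]
                b += y
                mistakes += 1
        if mistakes == 0:  # stopping condition: a full pass with no errors
            return w, b, True
    return w, b, False  # gave up: data may not be linearly separable


# Logical AND is linearly separable, so training converges.
and_data = [([0, 0], -1), ([0, 1], -1), ([1, 0], -1), ([1, 1], 1)]
w, b, converged = train_perceptron(and_data)
print(converged)
```

On XOR labels the same loop never reaches a mistake-free pass and returns `False` once `max_epochs` is exhausted.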

The perceptron cannot fit XOR; it can only produce a linear decision boundary.

Multilayer perceptron

First, solve the XOR problem ==> combine the results of several perceptron classifiers
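One way to see how combining perceptrons solves XOR is to set the weights by hand. The sketch below is an illustration under assumed weights (an OR unit and an AND unit feeding a third unit); it is not necessarily the construction used in the course.

```python
def step(z):
    """Threshold activation: 1 if z > 0, else 0."""
    return 1 if z > 0 else 0


def xor(x1, x2):
    h_or = step(x1 + x2 - 0.5)       # fires if at least one input is 1
    h_and = step(x1 + x2 - 1.5)      # fires only if both inputs are 1
    return step(h_or - h_and - 0.5)  # "OR but not AND"


print([xor(a, b) for a, b in [(0, 0), (0, 1), (1, 0), (1, 1)]])  # [0, 1, 1, 0]
```

Each unit is a linear classifier on its own; only the second layer makes the overall decision region non-linear.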

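The activation function must be nonlinear; otherwise the stacked linear layers collapse into a single linear layer.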
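The collapse is easy to check with scalars. In this sketch the weights `w1, b1, w2, b2` are arbitrary values picked for illustration.

```python
def linear(w, b, x):
    return w * x + b


w1, b1 = 2.0, 1.0   # "hidden layer" (illustrative values)
w2, b2 = -3.0, 0.5  # "output layer" (illustrative values)


def two_linear_layers(x):
    return linear(w2, b2, linear(w1, b1, x))


# The same function written as a single linear layer.
w_merged, b_merged = w2 * w1, w2 * b1 + b2


def with_relu(x):
    # Inserting a nonlinearity between the layers breaks the equivalence.
    return linear(w2, b2, max(0.0, linear(w1, b1, x)))


for x in (-2.0, 0.0, 1.0):
    print(x, two_linear_layers(x), linear(w_merged, b_merged, x), with_relu(x))
```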

- sigmoid maps x into the open interval (0, 1)

- sigmoid is effectively a smooth version of the step function

x = torch.arange(-8.0, 8.0, 0.1, requires_grad=True)

y = torch.sigmoid(x)

d2l.plot(x.detach(), y.detach(), 'x', 'sigmoid(x)', figsize=(5, 2.5))

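- tanh is much like sigmoid; the difference is that it maps the input into the interval (-1, 1)

- The -2 in the exponent of tanh(x) = (1 - exp(-2x)) / (1 + exp(-2x)) is there to make differentiation convenient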

y = torch.tanh(x)

d2l.plot(x.detach(), y.detach(), 'x', 'tanh(x)', figsize=(5, 2.5))
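The relationship between the two functions can be verified numerically. This is a small stdlib-only check; the helper names `sigmoid` and `tanh_from_exp` are chosen here for illustration.

```python
import math


def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))


def tanh_from_exp(x):
    # tanh written with the -2 exponent mentioned above
    return (1.0 - math.exp(-2.0 * x)) / (1.0 + math.exp(-2.0 * x))


for x in (-2.0, 0.0, 0.5, 3.0):
    # tanh is a rescaled, shifted sigmoid: tanh(x) = 2 * sigmoid(2x) - 1
    print(x, tanh_from_exp(x), math.tanh(x), 2.0 * sigmoid(2.0 * x) - 1.0)
```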

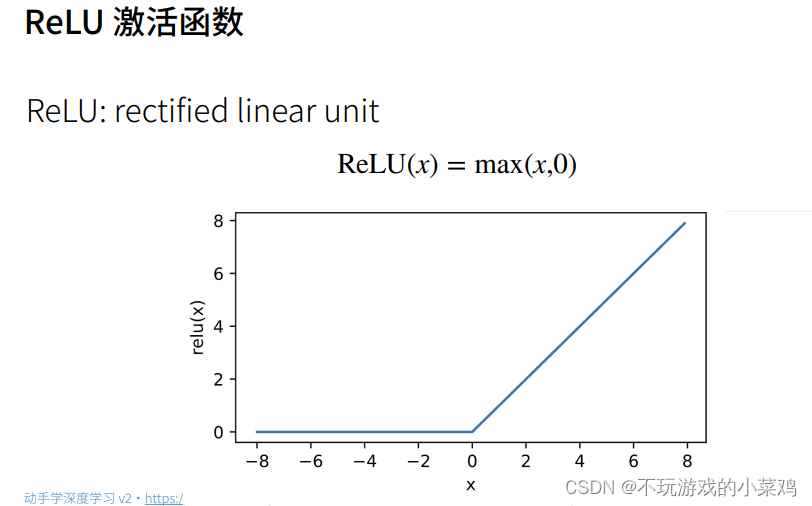

- ReLU is the most commonly used activation

- It needs no exponentiation, so it is cheap to compute

x = torch.arange(-8.0, 8.0, 0.1, requires_grad=True)

y = torch.relu(x)

d2l.plot(x.detach(), y.detach(), 'x', 'relu(x)', figsize=(5, 2.5))

- softmax maps every input into the interval (0, 1) and makes the outputs sum to 1, so they can be read as probabilities
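A dependency-free sketch of softmax follows. Subtracting the maximum score before exponentiating is a common numerical-stability trick added here; it does not change the result.

```python
import math


def softmax(scores):
    m = max(scores)                            # shift for numerical stability
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


probs = softmax([2.0, 1.0, 0.1])
print(probs, sum(probs))
```

The largest score always receives the largest probability, so taking the argmax of the scores or of the probabilities gives the same predicted class.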

1. Linear model

2. Single hidden layer

3. Multiple hidden layers

Concise implementation

1 Import packages

import torch

from torch import nn

from d2l import torch as d2l

2 Create the network

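Here 784 is the input size: each example is a 28×28 image flattened into 784 values. 256 is the number of hidden units, and 10 means the final output is a vector of length 10, one score per class.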
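These three sizes also determine how many parameters the network learns. A quick count, with the helper name `count_parameters` chosen for illustration:

```python
layer_sizes = [784, 256, 10]  # input, hidden, output


def count_parameters(sizes):
    """Weights (n_in * n_out) plus biases (n_out) for each fully connected layer."""
    return sum(n_in * n_out + n_out for n_in, n_out in zip(sizes, sizes[1:]))


print(count_parameters(layer_sizes))  # 203530
```

Almost all of them (784 * 256 + 256 = 200960) sit in the first layer.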

nn.Sequential()

net = nn.Sequential(nn.Flatten(),

                    nn.Linear(784, 256),

                    nn.ReLU(),

                    nn.Linear(256, 10))  # the network is made up of these 4 parts

def init_weights(m):

    if type(m) == nn.Linear:

        nn.init.normal_(m.weight, std=0.01)

net.apply(init_weights);

3 Training

batch_size, lr, num_epochs = 256, 0.1, 10

loss = nn.CrossEntropyLoss()

trainer = torch.optim.SGD(net.parameters(), lr=lr)

train_iter, test_iter = d2l.load_data_fashion_mnist(batch_size)

d2l.train_ch3(net, train_iter, test_iter, loss, num_epochs, trainer)

Implementation from scratch

1 Import packages

import torch

from torch import nn

from d2l import torch as d2l

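batch_size = 256  # batch size of 256

train_iter, test_iter = d2l.load_data_fashion_mnist(batch_size)  # load the training and test datasets

2 Initialize the parameters w and b

As in the softmax implementation, the numbers of inputs and outputs are fixed by the task (784 inputs, 10 outputs); only the hidden size is a free choice.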

Parameter torch.randn

num_inputs, num_outputs, num_hiddens = 784, 10, 256  # numbers of input, output, and hidden units

W1 = nn.Parameter(torch.randn(num_inputs, num_hiddens, requires_grad=True) * 0.01)  # W1: random 784x256 tensor, scaled by 0.01

b1 = nn.Parameter(torch.zeros(num_hiddens, requires_grad=True))  # b1: zero vector of length 256

W2 = nn.Parameter(torch.randn(num_hiddens, num_outputs, requires_grad=True) * 0.01)  # W2: random 256x10 tensor, scaled by 0.01

b2 = nn.Parameter(torch.zeros(num_outputs, requires_grad=True))  # b2: zero vector of length 10

params = [W1, b1, W2, b2]

3 ReLU function

def relu(X):

    a = torch.zeros_like(X)

    return torch.max(X, a)  # element-wise max(X, 0)

4 Define the model

That is, the forward-pass computation.

nn.CrossEntropyLoss()
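nn.CrossEntropyLoss expects raw scores (logits) and applies log-softmax internally, which is why the forward pass does not call softmax itself. The per-example computation can be sketched without PyTorch; the function name `cross_entropy` and the sample logits are assumptions for illustration.

```python
import math


def cross_entropy(logits, label):
    """-log(softmax(logits)[label]), computed via a stable log-sum-exp."""
    m = max(logits)
    log_sum_exp = m + math.log(sum(math.exp(z - m) for z in logits))
    return log_sum_exp - logits[label]


print(cross_entropy([0.0, 0.0, 0.0, 0.0], 1))  # log(4): uniform guess over 4 classes
print(cross_entropy([8.0, 0.0, 0.0, 0.0], 0))  # small loss: confident and correct
print(cross_entropy([8.0, 0.0, 0.0, 0.0], 2))  # large loss: confident and wrong
```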

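def net(X):

    X = X.reshape((-1, num_inputs))  # flatten each image into a row of 784 values

    H = relu(X@W1 + b1)  # "@" is matrix multiplication

    return (H@W2 + b2)  # raw scores (logits); the loss applies softmax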
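The same two steps, H = relu(X W1 + b1) followed by O = H W2 + b2, can be traced by hand on a tiny example. The sketch below uses plain Python lists instead of tensors, and all sizes and values are made up for illustration.

```python
def matmul(A, B):
    """Multiply an (n x k) matrix by a (k x m) matrix, both as lists of rows."""
    return [[sum(a * b for a, b in zip(row, col)) for col in zip(*B)] for row in A]


def add_bias(M, bias):
    return [[v + c for v, c in zip(row, bias)] for row in M]


def relu_rows(M):
    return [[max(0.0, v) for v in row] for row in M]


def mlp_forward(X, W1, b1, W2, b2):
    H = relu_rows(add_bias(matmul(X, W1), b1))  # hidden layer
    return add_bias(matmul(H, W2), b2)          # output layer (logits)


X = [[1.0, -1.0, 2.0]]                        # one example, 3 features
W1 = [[1.0, 0.0], [0.0, 1.0], [1.0, -1.0]]    # 3 inputs -> 2 hidden units
b1 = [0.0, 1.0]
W2 = [[1.0, 2.0], [3.0, 4.0]]                 # 2 hidden units -> 2 outputs
b2 = [-1.0, 0.0]

print(mlp_forward(X, W1, b1, W2, b2))  # [[2.0, 6.0]]
```

The second hidden unit has pre-activation -2, so ReLU zeroes it and only the first row of `W2` contributes to the output.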

loss = nn.CrossEntropyLoss()  # use cross-entropy as the loss function

5 Training

num_epochs, lr = 10, 0.1  # 10 epochs, learning rate 0.1

updater = torch.optim.SGD(params, lr=lr)  # stochastic gradient descent

d2l.train_ch3(net, train_iter, test_iter, loss, num_epochs, updater)  # train and plot the curves
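The rule the optimizer applies to each parameter is w ← w − lr · gradient. The toy sketch below minimizes f(w) = (w − 3)² with that rule; the objective and the step count are assumptions for illustration. In real training the gradient comes from the loss on one minibatch rather than from a fixed formula.

```python
def grad(w):
    """Gradient of the toy objective f(w) = (w - 3)^2."""
    return 2.0 * (w - 3.0)


lr = 0.1
w = 0.0
for _ in range(50):
    w -= lr * grad(w)  # same update form that SGD applies to every parameter

print(w)  # close to the minimizer 3.0
```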

6 Prediction

d2l.predict_ch3(net, test_iter)

Full code:

import torch

from torch import nn

from d2l import torch as d2l

batch_size = 256

train_iter, test_iter = d2l.load_data_fashion_mnist(batch_size)

'''

# Implement a multilayer perceptron with a single hidden layer of 256 hidden units

num_inputs, num_outputs, num_hiddens = 784, 10, 256

W1 = nn.Parameter(torch.randn(num_inputs, num_hiddens, requires_grad=True) * 0.01)

print(f'W1.shape: {W1.shape}')

b1 = nn.Parameter(torch.zeros(num_hiddens, requires_grad=True))

print(f'b1.shape: {b1.shape}')

W2 = nn.Parameter(torch.randn(num_hiddens, num_outputs, requires_grad=True) * 0.01)

b2 = nn.Parameter(torch.zeros(num_outputs, requires_grad=True))

params = [W1, b1, W2, b2]

# Implement the ReLU activation function

def relu(X):

    a = torch.zeros_like(X)

    return torch.max(X, a)

# Implement the model

def net(X):

    X = X.reshape((-1, num_inputs))

    print(f'X.shape: {X.shape}')  # debug output, printed on every forward pass

    H = relu(X @ W1 + b1)

    return (H @ W2 + b2)

loss = nn.CrossEntropyLoss()

num_epochs, lr = 10, 0.1

updater = torch.optim.SGD(params, lr=lr)

d2l.train_ch3(net, train_iter, test_iter, loss, num_epochs, updater)

'''

# Concise implementation

# Each input batch is 4D (batch, 1, 28, 28); Flatten turns it into 2D (batch, 784)

net = nn.Sequential(nn.Flatten(), nn.Linear(784, 256), nn.ReLU(), nn.Linear(256, 10))

def init_weights(m):

    if type(m) == nn.Linear:

        nn.init.normal_(m.weight, std=0.01)

net.apply(init_weights)

batch_size, lr, num_epochs = 256, 0.1, 10

loss = nn.CrossEntropyLoss()

trainer = torch.optim.SGD(net.parameters(), lr=lr)

d2l.train_ch3(net, train_iter, test_iter, loss, num_epochs, trainer)

PyCharm version:

import torch

from torch import nn

from d2l import torch as d2l

net = nn.Sequential(

    nn.Flatten(),  # Flatten layer: flattens every dimension except dim 0 (the batch dimension)

    nn.Linear(784, 256),

    nn.ReLU(),

    nn.Linear(256, 10)

)

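def init_weights(m):  # m: the current layer

    if type(m) == nn.Linear:

        nn.init.normal_(m.weight, std=0.01)

        # Re-initializes this layer's weights from a normal distribution with mean 0 and std 0.01, replacing PyTorch's default initialization

net.apply(init_weights)  # apply the initialization to every layer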

batch_size, lr, num_epochs = 256, 0.1, 10

loss = nn.CrossEntropyLoss(reduction='none')  # cross-entropy loss, one value per example (unreduced)

trainer = torch.optim.SGD(net.parameters(), lr=lr)  # stochastic gradient descent as the optimizer

train_iter, test_iter = d2l.load_data_fashion_mnist(batch_size)

d2l.train_ch3(net, train_iter, test_iter, loss, num_epochs, trainer)

d2l.plt.show()
