I. Prerequisites

1. Download the installation package

https://archive.apache.org/dist/spark/spark-2.1.1/spark-2.1.1-bin-hadoop2.7.tgz
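The download can also be scripted. The following is a minimal sketch, assuming `wget` is installed and that the archive should land in `/usr/local/src` (the parent of the `SPARK_HOME` used later). The variable names and the `download_spark` helper are illustrative and not part of Spark.

```shell
# Version pieces are split out so the URL can be rebuilt for another release.
SPARK_VERSION="2.1.1"
HADOOP_PROFILE="hadoop2.7"
TARBALL="spark-${SPARK_VERSION}-bin-${HADOOP_PROFILE}.tgz"
SPARK_URL="https://archive.apache.org/dist/spark/spark-${SPARK_VERSION}/${TARBALL}"

# Hypothetical helper: download into the given directory unless the file is already there.
download_spark() {
  dest_dir="$1"
  if [ -f "${dest_dir}/${TARBALL}" ]; then
    echo "already present: ${dest_dir}/${TARBALL}"
  else
    wget -P "$dest_dir" "$SPARK_URL"
  fi
}
```

Calling `download_spark /usr/local/src` fetches the archive once; a second call finds the file and skips the download.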

2. Extract the archive

[root@hadoop src]# tar zxvf spark-2.1.1-bin-hadoop2.7.tgz

3. Rename the extracted directory

[root@hadoop src]# mv spark-2.1.1-bin-hadoop2.7 spark

4. Configure environment variables

#SPARK_HOME

export SPARK_HOME=/usr/local/src/spark

export PATH=$PATH:$SPARK_HOME/bin:$SPARK_HOME/sbin

II. Environment Setup
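Before continuing, it can help to confirm that the Spark environment variables are visible in the current shell. This is a minimal sketch: `check_spark_env` is a hypothetical helper, and it assumes the two exports were added to a profile file that has already been sourced.

```shell
# Hypothetical helper: verify SPARK_HOME is set and its bin directory is on PATH.
check_spark_env() {
  if [ -z "${SPARK_HOME:-}" ]; then
    echo "SPARK_HOME is not set"
    return 1
  fi
  case ":$PATH:" in
    *":$SPARK_HOME/bin:"*) ;;  # found as a whole PATH entry
    *)
      echo "$SPARK_HOME/bin is not on PATH"
      return 1
      ;;
  esac
  echo "ok: SPARK_HOME=$SPARK_HOME"
}
```

Running `check_spark_env` in a new login shell shows whether the profile change took effect.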

Standalone mode

(1) Pseudo-distributed

1. Go to $SPARK_HOME/conf

[root@master ~]# cd $SPARK_HOME/conf

2. Copy spark-env.sh.template and open the copy for editing

[root@master conf]# cp spark-env.sh.template spark-env.sh

[root@master conf]# vi spark-env.sh

3. Add the following settings

SPARK_MASTER_HOST=master

SPARK_WORKER_CORES=2 # 2 cores for each worker

SPARK_WORKER_MEMORY=2g

SPARK_WORKER_INSTANCES=1 # one worker instance per node
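These settings interact: the cores and memory apply to each worker instance, so the capacity one machine offers grows with `SPARK_WORKER_INSTANCES`. The following is a small sketch of that arithmetic, assuming each instance receives the full `SPARK_WORKER_CORES` and `SPARK_WORKER_MEMORY`. The `worker_capacity` helper is hypothetical.

```shell
# Hypothetical helper: capacity one machine offers, given per-worker settings.
# Assumes the memory value uses a "g" suffix, as in the settings above.
worker_capacity() {
  cores="$1"
  memory="$2"
  instances="$3"
  mem_gb="${memory%g}"   # "2g" -> "2"
  echo "cores=$((cores * instances)) memory=$((mem_gb * instances))g"
}

worker_capacity 2 2g 1   # prints: cores=2 memory=2g
worker_capacity 2 2g 2   # prints: cores=4 memory=4g
```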

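---Note: the Spark standalone architecture is similar to Hadoop HDFS/YARN: one master coordinates the workers (for example, 1 master and 2 workers).

4. Start standalone mode

[root@master sbin]# start-master.sh

[root@master sbin]# start-slaves.sh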

---Note: the master web UI reports Alive Workers: 1 because SPARK_WORKER_INSTANCES=1.
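The worker count can also be checked from the command line. This is a minimal sketch, assuming the JDK tool `jps` is available and lists the standalone daemons by their main class names `Master` and `Worker`. The `count_workers` helper and the process IDs in the sample input are made up for illustration.

```shell
# Count Worker JVMs in jps-style output ("<pid> <main class>" per line).
count_workers() {
  awk '$2 == "Worker" { n++ } END { print n + 0 }'
}

# Sample input with made-up process IDs; on a real node use: jps | count_workers
printf '2101 Master\n2188 Worker\n2290 Jps\n' | count_workers   # prints: 1
```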

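5. Test

# Test: start multiple worker instances on a single machine

# 1. Edit spark-env.sh

SPARK_WORKER_INSTANCES=2

# 2. Start

[root@master sbin]# start-master.sh

[root@master sbin]# start-slaves.sh

(2) Fully distributed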

1. Edit spark-env.sh

SPARK_MASTER_HOST=master

SPARK_WORKER_CORES=2 # 2 cores for each worker

SPARK_WORKER_MEMORY=2g

SPARK_WORKER_INSTANCES=2

2. Copy slaves.template

[root@master conf]# cp slaves.template slaves

3. Edit slaves

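master

slave1

slave2

---Note: list every worker node in slaves, one hostname per line. Here slave1 and slave2 are the workers; master is the master node and is listed only because it should also run a worker.

4. Distribute Spark to the other two machines

[root@master conf]# scp -r /usr/local/src/spark slave1:/usr/local/src

[root@master conf]# scp -r /usr/local/src/spark slave2:/usr/local/src

5. Start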
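The start step can be scripted with the launch scripts shipped in `$SPARK_HOME/sbin`. This is a hedged sketch, assuming passwordless SSH from the master to every host in conf/slaves. The `run` and `start_cluster` helpers and the `DRY_RUN` variable are hypothetical; only `start-master.sh` and `start-slaves.sh` come from Spark.

```shell
# Print commands instead of running them when DRY_RUN=1.
run() {
  if [ "${DRY_RUN:-0}" = "1" ]; then
    echo "$*"
  else
    "$@"
  fi
}

# Start the master on this host, then the workers on every host in conf/slaves.
start_cluster() {
  spark_home="${SPARK_HOME:-/usr/local/src/spark}"
  run "$spark_home/sbin/start-master.sh"
  run "$spark_home/sbin/start-slaves.sh"
}

# Dry run: shows the two commands without touching the cluster.
DRY_RUN=1 start_cluster
```

Dropping the `DRY_RUN=1` prefix runs the two scripts for real on the master node.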

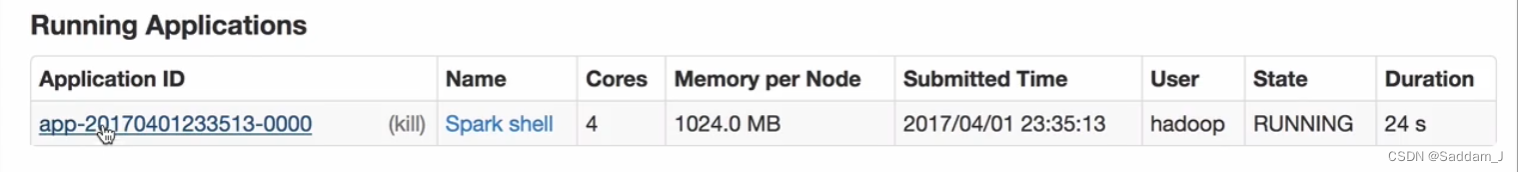

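[root@master conf]# spark-shell --master spark://master:7077

---Note: if spark-shell is started several times, the later sessions cannot obtain any cores and stay in the WAITING state, because by default the first application takes every available core.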
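One way around the waiting state is to cap the cores each shell may take. The sketch below only does the arithmetic, by core count alone and ignoring memory. It assumes the fully distributed layout above (3 nodes, 2 worker instances each, 2 cores per instance), and the per-application cap of 4 is a made-up value.

```shell
# Assumed cluster size: 3 nodes x 2 worker instances x 2 cores each.
NODES=3
INSTANCES=2
CORES=2
CLUSTER_CORES=$((NODES * INSTANCES * CORES))

# Hypothetical per-application cap.
APP_CORES=4

echo "cluster cores: $CLUSTER_CORES"
echo "shells that fit at $APP_CORES cores each: $((CLUSTER_CORES / APP_CORES))"

# The cap is passed with a flag listed in the spark-shell help text:
#   spark-shell --master spark://master:7077 --total-executor-cores "$APP_CORES"
```

With these numbers the script reports 12 cluster cores and room for 3 capped shells.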

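Local mode

# 1. Extract Spark

Omitted (same as in Part I)

# 2. Configure environment variables

Omitted (same as in Part I)

# 3. Start directly

[root@master ~]# spark-shell --master local[2]

spark-shell help reference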

[root@CQ-WEB-Centos1 conf]# spark-shell --help

Usage: ./bin/spark-shell [options]

Options:

--master MASTER_URL spark://host:port, mesos://host:port, yarn, or local.

--deploy-mode DEPLOY_MODE Whether to launch the driver program locally ("client") or on one of the worker machines inside the cluster ("cluster") (Default: client).

--class CLASS_NAME Your application's main class (for Java / Scala apps).

--name NAME A name of your application.

--jars JARS Comma-separated list of local jars to include on the driver and executor classpaths.

--packages Comma-separated list of maven coordinates of jars to include on the driver and executor classpaths. Will search the local maven repo, then maven central and any additional remote repositories given by --repositories. The format for the coordinates should be groupId:artifactId:version.

--exclude-packages Comma-separated list of groupId:artifactId, to exclude while resolving the dependencies provided in --packages to avoid dependency conflicts.

--repositories Comma-separated list of additional remote repositories to search for the maven coordinates given with --packages.

--py-files PY_FILES Comma-separated list of .zip, .egg, or .py files to place on the PYTHONPATH for Python apps.

--files FILES Comma-separated list of files to be placed in the working directory of each executor.

--conf PROP=VALUE Arbitrary Spark configuration property.

--properties-file FILE Path to a file from which to load extra properties. If not specified, this will look for conf/spark-defaults.conf.

--driver-memory MEM Memory for driver (e.g. 1000M, 2G) (Default: 1024M).

--driver-java-options Extra Java options to pass to the driver.

--driver-library-path Extra library path entries to pass to the driver.

--driver-class-path Extra class path entries to pass to the driver. Note that jars added with --jars are automatically included in the classpath.

--executor-memory MEM Memory per executor (e.g. 1000M, 2G) (Default: 1G).

--proxy-user NAME User to impersonate when submitting the application. This argument does not work with --principal / --keytab.

--help, -h Show this help message and exit.

--verbose, -v Print additional debug output.

--version, Print the version of current Spark.

Spark standalone with cluster deploy mode only:

--driver-cores NUM Cores for driver (Default: 1).

Spark standalone or Mesos with cluster deploy mode only:

--supervise If given, restarts the driver on failure.

--kill SUBMISSION_ID If given, kills the driver specified.

--status SUBMISSION_ID If given, requests the status of the driver specified.

Spark standalone and Mesos only:

--total-executor-cores NUM Total cores for all executors.

Spark standalone and YARN only:

--executor-cores NUM Number of cores per executor. (Default: 1 in YARN mode, or all available cores on the worker in standalone mode)

YARN-only:

--driver-cores NUM Number of cores used by the driver, only in cluster mode (Default: 1).

--queue QUEUE_NAME The YARN queue to submit to (Default: "default").

--num-executors NUM Number of executors to launch (Default: 2). If dynamic allocation is enabled, the initial number of executors will be at least NUM.

--archives ARCHIVES Comma separated list of archives to be extracted into the working directory of each executor.

--principal PRINCIPAL Principal to be used to login to KDC, while running on secure HDFS.

--keytab KEYTAB The full path to the file that contains the keytab for the principal specified above. This keytab will be copied to the node running the Application Master via the Secure Distributed Cache, for renewing the login tickets and the delegation tokens periodically.
