该专栏内容为对该视频的学习记录:【《PyTorch深度学习实践》完结合集】

该专栏的全部代码、数据和课件全放在我的GitHub了,欢迎自取。

一个简单示例:y=w*x

import numpy as np

import matplotlib.pyplot as plt

x_data = [1.0, 2.0, 3.0]

y_data = [2.0, 4.0, 6.0]

# 返回预测值

def forward(x):

return x * w

# 损失函数

def loss(x, y):

y_pred = forward(x)

return (y_pred - y) * (y_pred - y)

# 穷举法

w_list = [] # 存储不同的参数w

mse_list = [] # 存储不同参数w下的均方误差MSE

for w in np.arange(0.0, 4.1, 0.1):

print('w=', w)

l_sum = 0

for x_val, y_val in zip(x_data, y_data):

y_pred_val = forward(x_val) # 预测值

loss_val = loss(x_val, y_val) # 一个样本损失值

l_sum += loss_val #把每个样本的损失值求和

print('\t', x_val, y_val, y_pred_val, loss_val)

print('MSE=', l_sum / 3)

w_list.append(w)

mse_list.append(l_sum / 3)

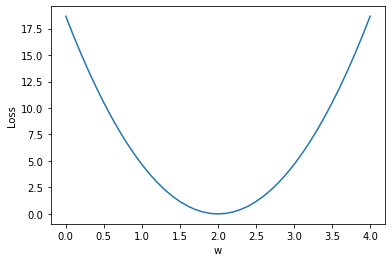

plt.plot(w_list, mse_list)

plt.xlabel('w')

plt.ylabel('Loss')

plt.show()

w= 0.0

1.0 2.0 0.0 4.0

2.0 4.0 0.0 16.0

3.0 6.0 0.0 36.0

MSE= 18.666666666666668

w= 0.1

1.0 2.0 0.1 3.61

2.0 4.0 0.2 14.44

3.0 6.0 0.30000000000000004 32.49

MSE= 16.846666666666668

w= 0.2

1.0 2.0 0.2 3.24

2.0 4.0 0.4 12.96

3.0 6.0 0.6000000000000001 29.160000000000004

MSE= 15.120000000000003

......

......

w= 1.9000000000000001

1.0 2.0 1.9000000000000001 0.009999999999999974

2.0 4.0 3.8000000000000003 0.0399999999999999

3.0 6.0 5.7 0.0899999999999999

MSE= 0.046666666666666586

w= 2.0

1.0 2.0 2.0 0.0

2.0 4.0 4.0 0.0

3.0 6.0 6.0 0.0

MSE= 0.0

w= 2.1

1.0 2.0 2.1 0.010000000000000018

2.0 4.0 4.2 0.04000000000000007

3.0 6.0 6.300000000000001 0.09000000000000043

MSE= 0.046666666666666835

......

......

以上可以发现w=2时均方误差MSE最小

zip函数用法:

list(zip(x_data, y_data)) # zip可以把两个数据压缩打包; list可以把zip解压成列表

[(1.0, 3.0), (2.0, 5.0), (3.0, 7.0)]

作业:y=w*x+b

import numpy as np

import matplotlib.pyplot as plt

# 这里假设真实方程为:y=2*x+1

x_data = [1.0, 2.0, 3.0]

y_data = [3.0, 5.0, 7.0]

# 返回预测值

def forward(x):

return x * w + b

# 损失函数

def loss(x, y):

y_pred = forward(x)

return (y_pred - y) * (y_pred - y)

mse_list = [] # 存储不同参数下的均方误差MSE

w_list = np.arange(0.5, 3.1, 0.1)

b_list = np.arange(.0, 3.1, 0.1)

i = 0

# 穷举

for w in w_list:

for b in b_list:

l_sum = 0

for x_val, y_val in zip(x_data, y_data):

y_pred_val = forward(x_val) # 预测值

loss_val = loss(x_val, y_val) # 一个样本损失值

l_sum += loss_val #把每个样本的损失值求和

mse_list.append(l_sum / 3)

# print('w:', w, 'b:', b, 'MSE:', l_sum / 3)

if i == 475:

print('w:', w, 'b:', b, 'MSE:', l_sum / 3)

i += 1

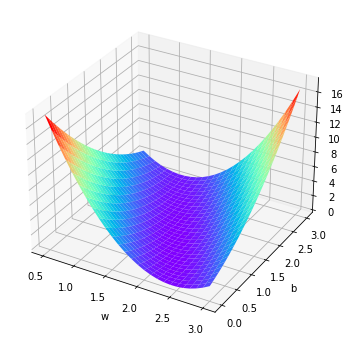

# 绘图

ll_sum = 0

[ww, bb] = np.meshgrid(w_list, b_list)

for x_val, y_val in zip(x_data, y_data):

loss = (ww * x_val + bb - y_val) ** 2

ll_sum += loss

fig = plt.figure(figsize=[10, 6])

ax = plt.axes(projection="3d")

ax.plot_surface(ww, bb, ll_sum / 3, cmap="rainbow")

ax.set_xlabel('w')

ax.set_ylabel('b')

plt.show()

w: 1.9999999999999996 b: 1.0 MSE: 1.3805065841367707e-30

在这么多输出中找出使损失函数最小的w和b:

min(mse_list), mse_list.index(min(mse_list))

(1.3805065841367707e-30, 475)

再回源代码中上面34行处写if语句,当索引为475时输出对应的w b,重新运行。

5万+

5万+

被折叠的 条评论

为什么被折叠?

被折叠的 条评论

为什么被折叠?