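Changes compared with a non-distributed crawler

In the spider file

1. Import the scrapy_redis package

2. Stop inheriting from the base class scrapy.Spider and inherit from RedisSpider instead

3. Set redis_key, the key that starts the crawler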

import json

import scrapy
from scrapy_redis.spiders import RedisSpider  # 1. Import the scrapy_redis package

from ..items import DbItem


# class Db250Spider(scrapy.Spider):  # 2. No longer inherit from the base class
class Db250Spider(RedisSpider):
    name = 'db250'
    # allowed_domains = ['movie.douban.com']
    # start_urls = ['http://movie.douban.com/top250']  # no longer required
    redis_key = "db:start_urls"  # 3. The key that starts the crawler
    page_num = 0

    def parse(self, response):
        print("************************")
        # print(response)
        node_list = response.xpath('//div[@class="info"]')
        # with open('film.text', 'w', encoding="utf-8") as f:
        for node in node_list:
            film_name = node.xpath("./div/a/span/text()").extract()[0]
            director_name = node.xpath("./div/p/text()").extract()[0].strip()
            score = node.xpath('./div/div/span[@property="v:average"]/text()').extract()[0]

            # Storing without a pipeline
            # item = {}
            # item["film_name"] = film_name
            # item["director_name"] = director_name
            # item["score"] = score
            # content = json.dumps(item, ensure_ascii=False)
            # f.write(content)

            # Storing through the pipeline
            item_pipe = DbItem()
            item_pipe["film_name"] = film_name
            item_pipe["director_name"] = director_name
            item_pipe["score"] = score

            # Follow the link to the detail page and carry the partial item in meta
            introduction_url = node.xpath('./div/a/@href').extract()[0]
            yield scrapy.Request(introduction_url, callback=self.des_detail, meta={"info": item_pipe})

        # Resume from the page number carried in meta, if there is one
        if response.meta.get("num"):
            self.page_num = response.meta["num"]
        self.page_num += 1
        if self.page_num == 3:
            return
        page_url = "https://movie.douban.com/top250?start={}&filter=".format(self.page_num * 25)
        yield scrapy.Request(page_url, meta={"num": self.page_num})

#获取电影简介数据 次级页面的网页源代码中

def des_detail(self,response):

#解析详情页的response

#1.meta 会跟随response一块返回 2.通过response.meta接收 3.通过update添加到新的item

info=response.meta["info"]

item=DbItem()

item.update(info)

#简介内容

introduction=response.xpath('//div[@id="link-report"]//span[@property="v:summary"]/text()').extract()[0].strip()

item["introduction"]=introduction

#通过管道保存

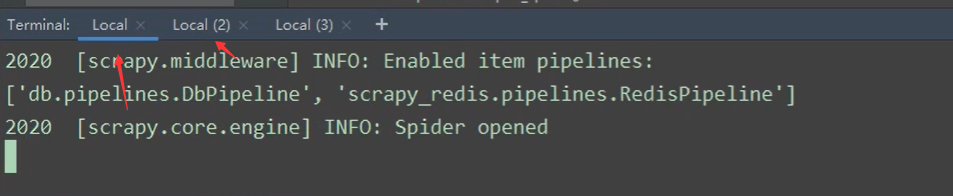

yield item4.redis中添加公共需求

5. Create a second, identical project. Rename the project and the output file it writes to, so the two can be told apart

6. Run both spiders at the same time
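Step 4 is shown only as a screenshot, so here is a minimal sketch of how the start URL could be pushed from Python. It assumes the redis-py package and a redis server on localhost:6379; neither the host nor the port is stated in the post. The helper name `push_start_url` is hypothetical, the key matches `redis_key` in the spider, and the URL is taken from the commented-out `start_urls`.

```python
def push_start_url(client, key, url):
    """Push one start URL onto the redis list that idle RedisSpider workers read from.

    `client` is anything with an lpush(key, value) method, e.g. a redis.Redis instance.
    Returns whatever lpush returns (for redis, the new length of the list).
    """
    return client.lpush(key, url)


def main():
    # Assumption: redis-py is installed and redis listens on localhost:6379.
    import redis

    client = redis.Redis(host="localhost", port=6379)
    push_start_url(client, "db:start_urls", "https://movie.douban.com/top250")
```

Calling `main()` once is enough: whichever of the two running spiders picks the URL up first starts the crawl. The same thing can be done in redis-cli with `lpush db:start_urls https://movie.douban.com/top250`.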

So that the data keeps growing consistently across both projects, pass the page number along in meta:

# Resume from the page number carried in meta, if there is one
if response.meta.get("num"):
    self.page_num = response.meta["num"]
self.page_num += 1
if self.page_num == 3:
    return
page_url = "https://movie.douban.com/top250?start={}&filter=".format(self.page_num * 25)
yield scrapy.Request(page_url, meta={"num": self.page_num})
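The reason for carrying the counter in meta is that each spider process has its own `self.page_num`, while the request travels through redis and may be handled by either process. The sketch below isolates that logic as a plain function so it can be tried without Scrapy. The name `next_page` and its parameters are hypothetical, and it stops with `>=` rather than `==`, which is a slightly more defensive variant of the check in the post.

```python
BASE_URL = "https://movie.douban.com/top250?start={}&filter="


def next_page(meta, local_page_num=0, max_pages=3, page_size=25):
    """Return (page_num, url) for the next listing page, or None when the limit is hit.

    `meta` plays the role of response.meta: if it carries "num", that value wins
    over the process-local counter, so both spiders continue from the same page.
    """
    page_num = meta.get("num") or local_page_num
    page_num += 1
    if page_num >= max_pages:
        return None
    return page_num, BASE_URL.format(page_num * page_size)


# The first response has no "num" in meta, so the local counter (0) is used.
first = next_page({})
# A later response carries the counter set by whichever spider issued the request.
second = next_page({"num": 1})
```

In the spider, the returned page number would go back into `meta={"num": page_num}` on the next `scrapy.Request`, exactly as in the snippet above.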
