- This article records how I hand-labeled my own dataset, trained a YOLOv5 model on it, and tested the result.

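Environment setup

Python >= 3.8.0

Installing CUDA is strongly recommended so that training can run on the GPU. Search online for an installation guide that matches your graphics card and driver.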
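As a quick sanity check of the two requirements above, the following sketch reports whether the interpreter is new enough and, if PyTorch happens to be installed already, whether it can see a CUDA device. The helper name `check_environment` is made up for illustration; `torch.cuda.is_available()` is the standard PyTorch call.

```python
import sys


def check_environment(min_python=(3, 8)):
    """Return a small report on the Python version and, if possible, CUDA support."""
    report = {"python_ok": sys.version_info >= min_python, "torch": None, "cuda": None}
    try:
        import torch  # only present once PyTorch has been installed
    except ImportError:
        return report  # PyTorch not installed yet, so CUDA cannot be checked
    report["torch"] = torch.__version__
    report["cuda"] = torch.cuda.is_available()
    return report


if __name__ == "__main__":
    print(check_environment())
```

If `cuda` comes back as `False` after PyTorch is installed, training would fall back to the CPU and be much slower.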

    git clone https://github.com/ultralytics/yolov5  # clone
    cd yolov5
    pip install -r requirements.txt  # install

Alternatively, you can download the repository as a zip archive from the official GitHub page.

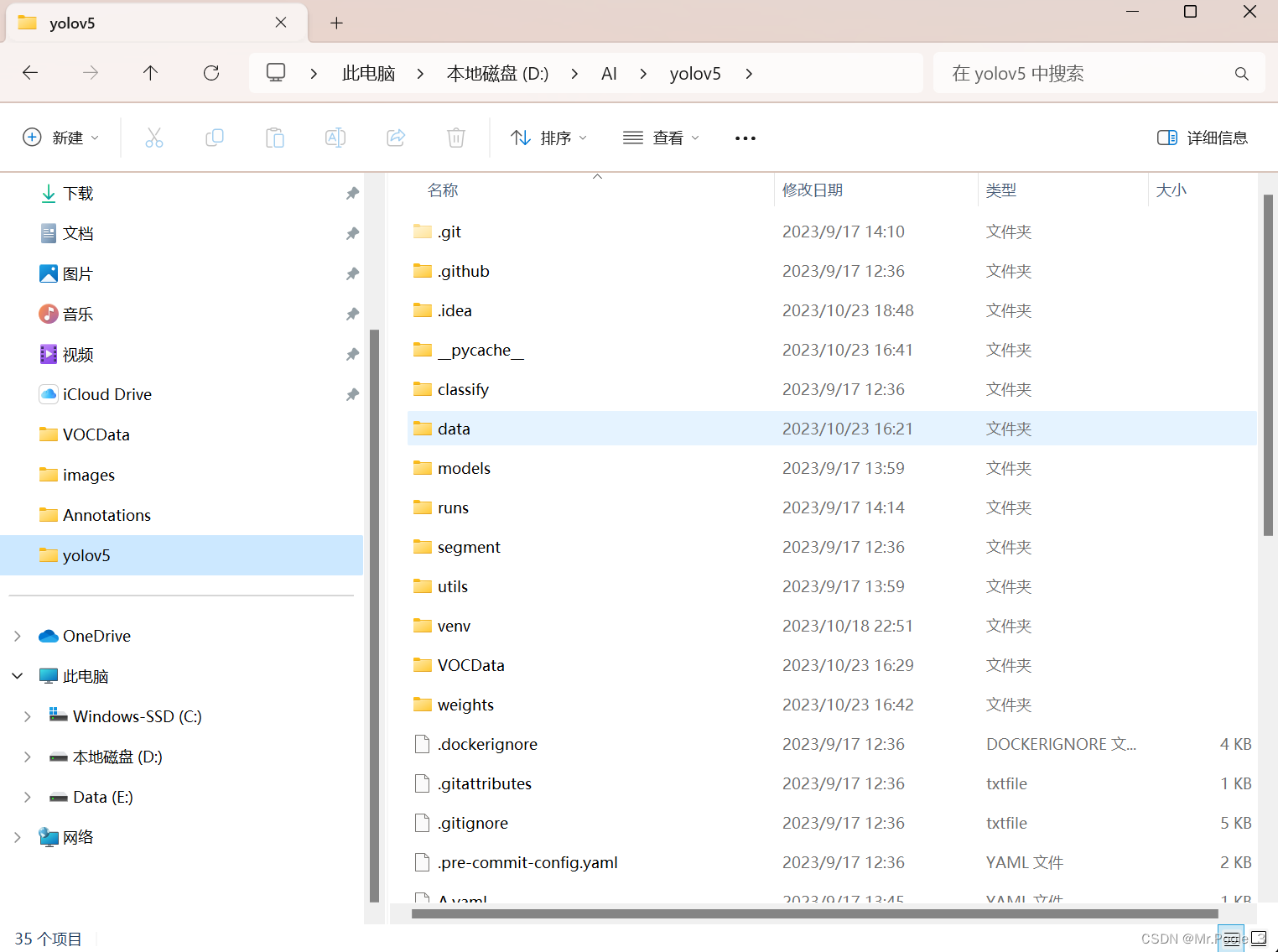

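After a successful clone (or after extracting the archive), you get a folder like the one shown above.

For a detailed walkthrough of installing yolov5, see my other article on how to install yolov5; I will not repeat it here.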

Preparation before training

Create the folders

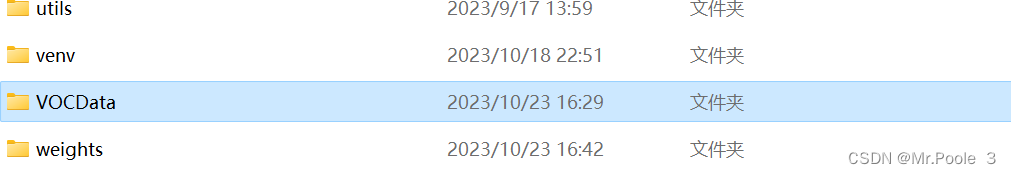

First, open the yolov5 folder and create a new folder named VOCData.

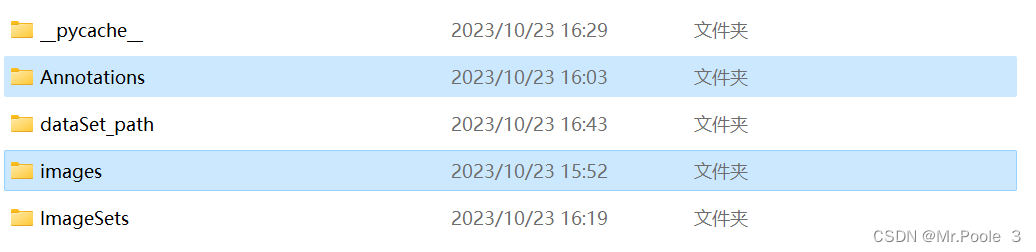

Inside the VOCData folder, create two folders: one named Annotations and one named images.

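Save your pictures in the images folder. File names must not contain Chinese characters or special symbols; the safest choice is plain sequential numbers such as 1, 2, 3, 4.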
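Renaming by hand gets tedious with more than a few pictures, so here is a minimal sketch that renames every image in a folder to 1.jpg, 2.jpg, and so on. The function name `rename_sequentially` and the list of extensions are my own assumptions, not part of yolov5 or labelImg. Run it before labeling, because label files are named after the images.

```python
from pathlib import Path

IMAGE_EXTS = {".jpg", ".jpeg", ".png", ".bmp"}  # assumed set of image types


def rename_sequentially(images_dir):
    """Rename every image in images_dir to 1.ext, 2.ext, ... and return the new names."""
    images_dir = Path(images_dir)
    files = sorted(p for p in images_dir.iterdir() if p.suffix.lower() in IMAGE_EXTS)
    # First pass: move to temporary names so an existing "2.jpg" is never overwritten.
    temps = []
    for i, p in enumerate(files, start=1):
        tmp = p.with_name(f"__tmp_{i}{p.suffix.lower()}")
        p.rename(tmp)
        temps.append(tmp)
    # Second pass: give each file its final numeric name.
    new_names = []
    for i, tmp in enumerate(temps, start=1):
        final = tmp.with_name(f"{i}{tmp.suffix}")
        tmp.rename(final)
        new_names.append(final.name)
    return new_names
```

For example, `rename_sequentially("VOCData/images")` would be called from inside the yolov5 directory. Note that the conversion script later in this article assumes the images end in .jpg.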

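Install the image-labeling tool: labelImg

- Install on Windows with conda

Open Anaconda Prompt, cd to the directory where you want to clone the repository, and run:

    git clone https://github.com/HumanSignal/labelImg.git
    cd labelImg
    conda install pyqt=5
    conda install -c anaconda lxml
    pyrcc5 -o libs/resources.py resources.qrc
    python labelImg.py

- Install from cmd with pip

In a cmd window, run:

    pip3 install labelImg
    labelImg

- Standalone .exe application

I uploaded it to Baidu Netdisk; download it if you need it.

Link: https://pan.baidu.com/s/1T2R68E3vYp_iKCPcMpNG1Q

Extraction code: lpnb

Double-click the .exe file to run it.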

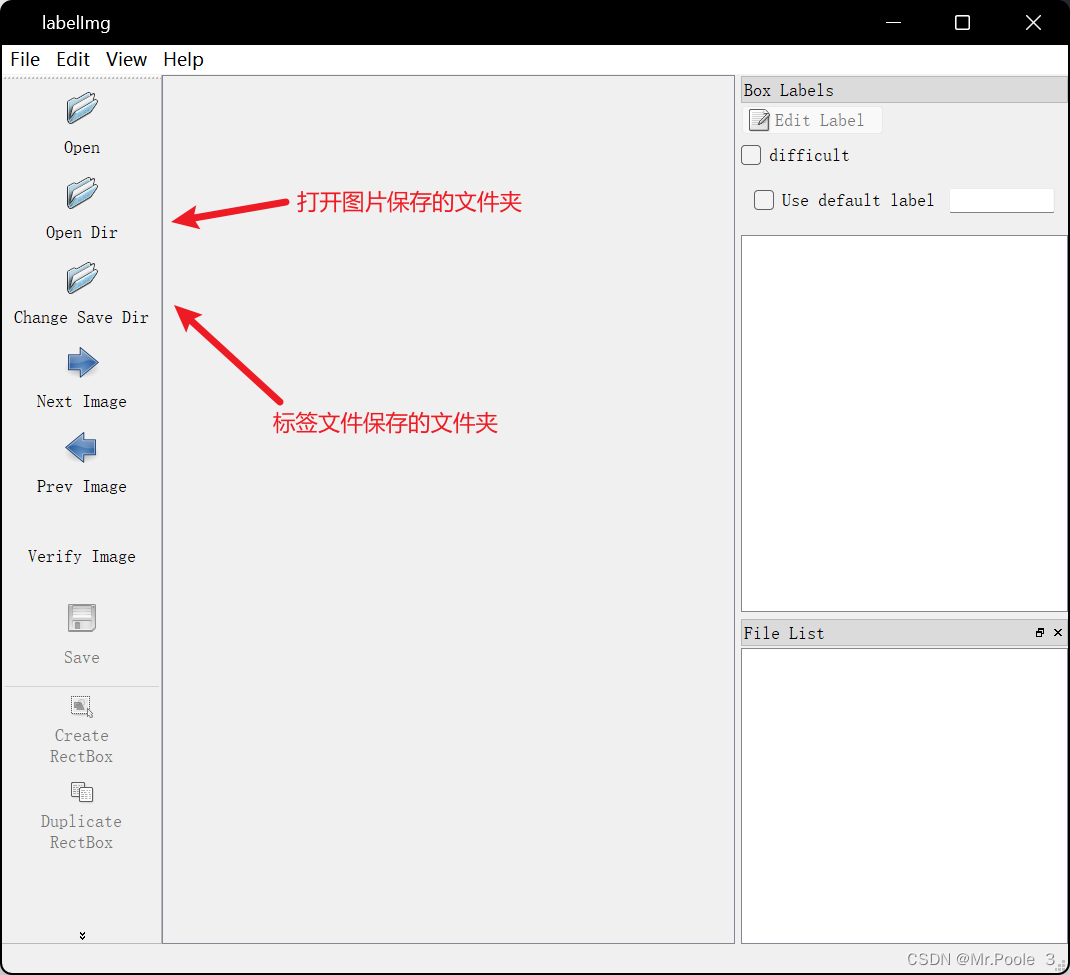

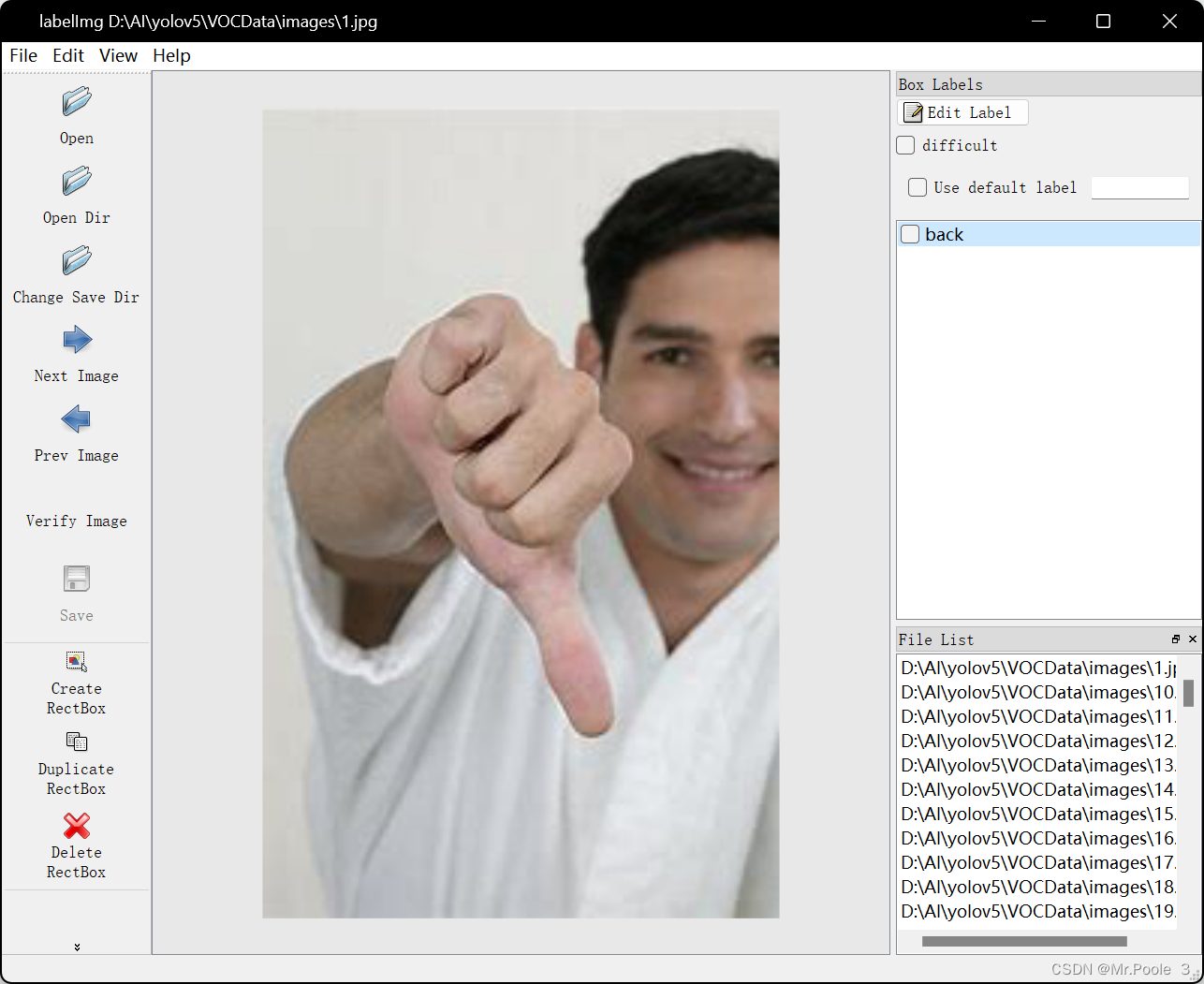

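Label the images with labelImg

This article saves the labels in the default VOC format; the conversion to the YOLO txt format is covered later.

First, start labelImg.

Open the View menu and enable Auto Save mode so that label files are saved automatically.

Click Change Save Dir and select the Annotations folder created earlier.

Click Open Dir and select the images folder to load the pictures.

Common shortcuts:

- d: next image

- a: previous image

- w: draw a bounding box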

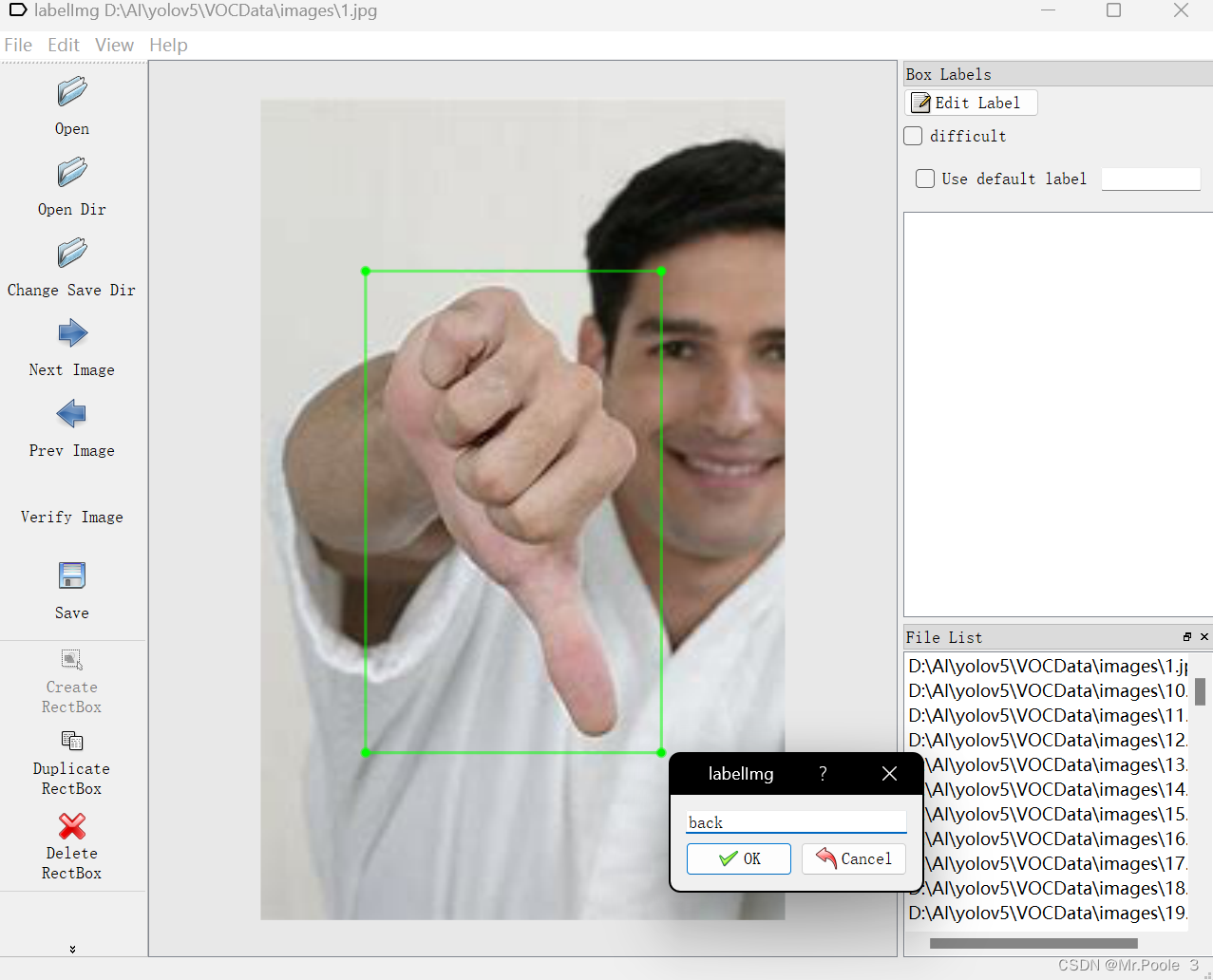

Click Create RectBox to draw a bounding box.

In the dialog that appears, type the label name you want; here I named it back.

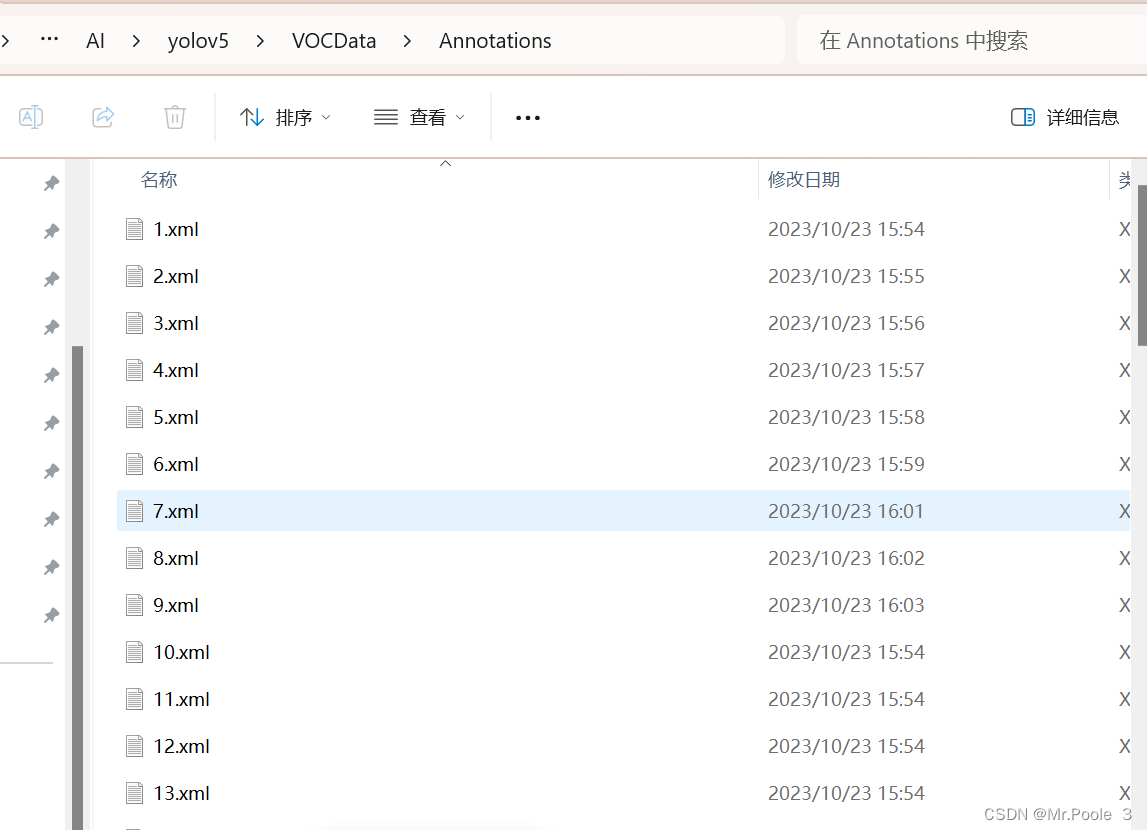

Once every image is labeled, the label files (.xml) appear in the Annotations folder.
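To see what such a label file contains, the sketch below parses a small VOC-style annotation with the standard library. The sample XML is invented for illustration (a real file written by labelImg has a few more fields), and `read_voc_boxes` is a hypothetical helper name.

```python
import xml.etree.ElementTree as ET

# Invented sample; real files come from the Annotations folder.
SAMPLE_XML = """<annotation>
  <filename>1.jpg</filename>
  <size><width>640</width><height>480</height><depth>3</depth></size>
  <object>
    <name>back</name>
    <difficult>0</difficult>
    <bndbox><xmin>100</xmin><ymin>120</ymin><xmax>300</xmax><ymax>360</ymax></bndbox>
  </object>
</annotation>"""


def read_voc_boxes(xml_text):
    """Return (width, height, [(name, xmin, ymin, xmax, ymax), ...]) from VOC XML text."""
    root = ET.fromstring(xml_text)
    width = int(root.findtext("size/width"))
    height = int(root.findtext("size/height"))
    boxes = []
    for obj in root.iter("object"):
        bb = obj.find("bndbox")
        coords = tuple(int(bb.findtext(k)) for k in ("xmin", "ymin", "xmax", "ymax"))
        boxes.append((obj.findtext("name"),) + coords)
    return width, height, boxes


print(read_voc_boxes(SAMPLE_XML))
```

The box is stored as pixel corner coordinates, which is why a conversion step is needed before YOLOv5 can use it.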

Split the dataset

Create a file named divide.py inside the VOCData folder, open it, and enter the following code:

    # coding: utf-8
    import os
    import random
    import argparse

    parser = argparse.ArgumentParser()
    # Path to the xml label files; change it for your data. They normally live under Annotations.
    parser.add_argument('--xml_path', default='Annotations', type=str, help='input xml label path')
    # Output location of the split lists; use ImageSets/Main under your dataset folder.
    parser.add_argument('--txt_path', default='ImageSets/Main', type=str, help='output txt label path')
    opt = parser.parse_args()

    trainval_percent = 1.0  # share of train + val in the whole dataset; no test set is split off here
    train_percent = 0.9  # share of train within train + val; adjust as needed
    xmlfilepath = opt.xml_path
    txtsavepath = opt.txt_path
    total_xml = os.listdir(xmlfilepath)
    if not os.path.exists(txtsavepath):
        os.makedirs(txtsavepath)

    num = len(total_xml)
    list_index = range(num)
    tv = int(num * trainval_percent)
    tr = int(tv * train_percent)
    trainval = random.sample(list_index, tv)
    train = random.sample(trainval, tr)

    file_trainval = open(txtsavepath + '/trainval.txt', 'w')
    file_test = open(txtsavepath + '/test.txt', 'w')
    file_train = open(txtsavepath + '/train.txt', 'w')
    file_val = open(txtsavepath + '/val.txt', 'w')

    for i in list_index:
        name = total_xml[i][:-4] + '\n'  # file name without the ".xml" extension
        if i in trainval:
            file_trainval.write(name)
            if i in train:
                file_train.write(name)
            else:
                file_val.write(name)
        else:
            file_test.write(name)

    file_trainval.close()
    file_train.close()
    file_val.close()
    file_test.close()

After the script finishes, an ImageSets\Main folder is created inside VOCData. It contains train.txt, val.txt, trainval.txt, and test.txt, each listing image names without the extension. Because trainval_percent is 1.0, test.txt stays empty.
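To make the two percentages concrete, here is a small worked example that mirrors the counting logic of divide.py. The function name `split_sizes` is mine; the figure of 60 files matches the roughly 60 images used in this article.

```python
def split_sizes(num_files, trainval_percent=1.0, train_percent=0.9):
    """Mirror the counting logic of divide.py and return the size of each subset."""
    tv = int(num_files * trainval_percent)  # train + val
    tr = int(tv * train_percent)            # train only
    return {"train": tr, "val": tv - tr, "test": num_files - tv}


print(split_sizes(60))  # {'train': 54, 'val': 6, 'test': 0}
```

Lowering trainval_percent to, say, 0.9 would move 6 of the 60 files into test.txt.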

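Modify the configuration files

The VOC label files have to be converted to the YOLO txt format.

Create voc_to_yolotxt.py in the VOCData directory, open it, and enter the following code: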

    import os
    import xml.etree.ElementTree as ET

    sets = ['train', 'val', 'test']
    classes = ["move", "back"]  # change to your own label names; mine are move and back
    ROOT = 'D:/AI/yolov5/VOCData'  # my dataset root; change it to yours
    print(os.getcwd())


    def convert(size, box):
        # size = (width, height); box = (xmin, xmax, ymin, ymax), all in pixels
        dw = 1. / (size[0])
        dh = 1. / (size[1])
        x = (box[0] + box[1]) / 2.0 - 1
        y = (box[2] + box[3]) / 2.0 - 1
        w = box[1] - box[0]
        h = box[3] - box[2]
        x = x * dw
        w = w * dw
        y = y * dh
        h = h * dh
        return x, y, w, h


    def convert_annotation(image_id):
        tree = ET.parse('%s/Annotations/%s.xml' % (ROOT, image_id))
        root = tree.getroot()
        size = root.find('size')
        w = int(size.find('width').text)
        h = int(size.find('height').text)
        with open('%s/labels/%s.txt' % (ROOT, image_id), 'w') as out_file:
            for obj in root.iter('object'):
                difficult = obj.find('difficult').text
                cls = obj.find('name').text
                if cls not in classes or int(difficult) == 1:
                    continue
                cls_id = classes.index(cls)
                xmlbox = obj.find('bndbox')
                b1, b2, b3, b4 = (float(xmlbox.find(k).text) for k in ('xmin', 'xmax', 'ymin', 'ymax'))
                # clamp boxes that extend past the image border
                b2 = min(b2, w)
                b4 = min(b4, h)
                bb = convert((w, h), (b1, b2, b3, b4))
                out_file.write(str(cls_id) + " " + " ".join([str(a) for a in bb]) + '\n')


    os.makedirs(ROOT + '/labels', exist_ok=True)
    os.makedirs(ROOT + '/dataSet_path', exist_ok=True)
    for image_set in sets:
        with open('%s/ImageSets/Main/%s.txt' % (ROOT, image_set)) as f:
            image_ids = f.read().strip().split()
        # write the list of image paths next to the labels, using the same root
        with open('%s/dataSet_path/%s.txt' % (ROOT, image_set), 'w') as list_file:
            for image_id in image_ids:
                list_file.write('%s/images/%s.jpg\n' % (ROOT, image_id))
                convert_annotation(image_id)

Note: the code contains my own file paths, so change them to match your setup.

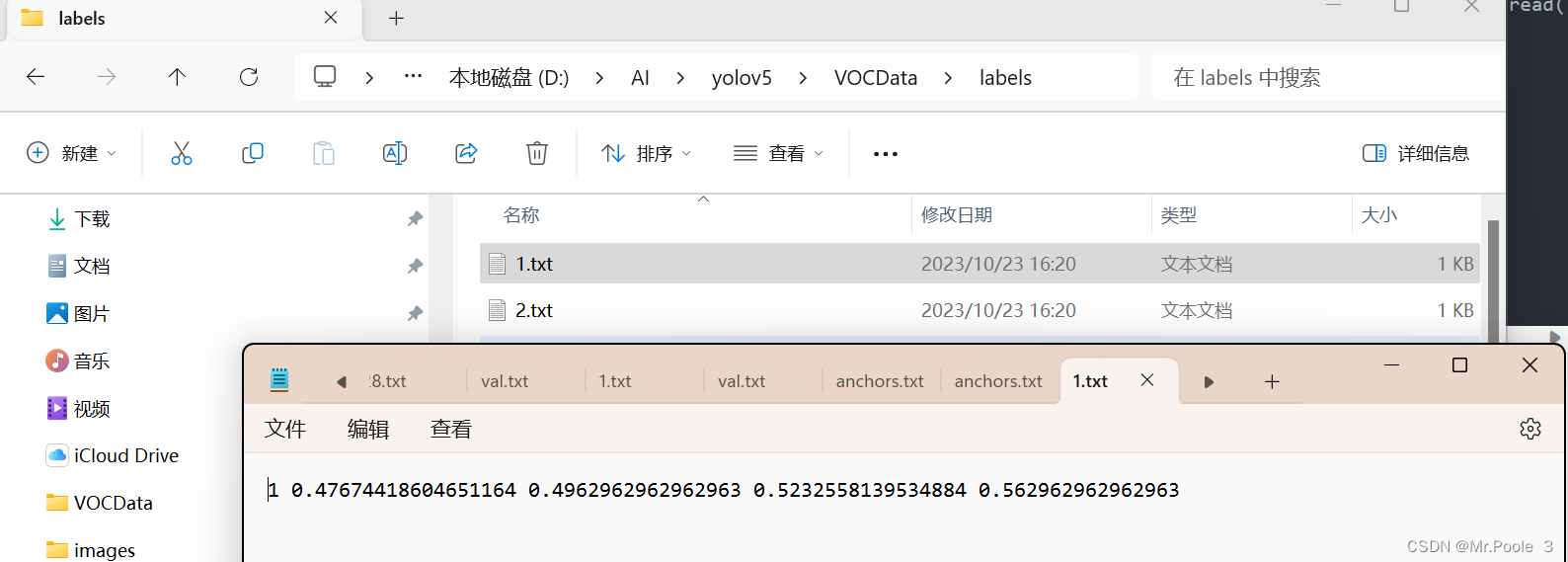

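Running the script generates a labels folder and a dataSet_path folder.

The labels folder holds the YOLO-format .txt files.

Each line in these files has the format: class x_center y_center width height, where the four box values are normalized by the image width and height.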
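A quick way to check a label line by hand is to map it back to pixel coordinates. The sketch below does that for one made-up line; `yolo_to_pixel_box` is a hypothetical helper, and it ignores the one-pixel shift that `convert` applies to the box center.

```python
def yolo_to_pixel_box(line, img_w, img_h):
    """Convert 'class xc yc w h' (normalized) to (class_id, xmin, ymin, xmax, ymax) in pixels."""
    cls_id, xc, yc, w, h = line.split()
    xc, w = float(xc) * img_w, float(w) * img_w  # scale horizontal values by image width
    yc, h = float(yc) * img_h, float(h) * img_h  # scale vertical values by image height
    return int(cls_id), xc - w / 2, yc - h / 2, xc + w / 2, yc + h / 2


# A box of class 1 centered in a 640x480 image, a quarter as wide and half as tall.
print(yolo_to_pixel_box("1 0.5 0.5 0.25 0.5", img_w=640, img_h=480))
# (1, 240.0, 120.0, 400.0, 360.0)
```

If the recovered corners do not roughly match what you drew in labelImg, the class list or the image size in the XML is a likely culprit.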

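In the data folder under the yolov5 directory, create a new file named myvoc.yaml, open it, and enter:

    train: D:\Testcode\yolov5-5.0\VOCData\dataSet_path\train.txt  # change to your own path
    val: D:\Testcode\yolov5-5.0\VOCData\dataSet_path\val.txt  # change to your own path

    # number of labels; I have move and back, so I write 2
    nc: 2

    # your label names
    names: ["move", "back"]

Cluster to obtain the anchor priors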

Create two scripts in the VOCData directory: kmeans.py and clauculate_anchors.py.

Open kmeans.py (do not run it) and enter the following code:

    import numpy as np


    def iou(box, clusters):
        """
        Calculates the Intersection over Union (IoU) between a box and k clusters.
        :param box: tuple or array, shifted to the origin (i. e. width and height)
        :param clusters: numpy array of shape (k, 2) where k is the number of clusters
        :return: numpy array of shape (k,) where k is the number of clusters
        """
        x = np.minimum(clusters[:, 0], box[0])
        y = np.minimum(clusters[:, 1], box[1])
        if np.count_nonzero(x == 0) > 0 or np.count_nonzero(y == 0) > 0:
            raise ValueError("Box has no area")  # if this error is raised, you can replace this line with pass

        intersection = x * y
        box_area = box[0] * box[1]
        cluster_area = clusters[:, 0] * clusters[:, 1]

        iou_ = intersection / (box_area + cluster_area - intersection)
        return iou_


    def avg_iou(boxes, clusters):
        """
        Calculates the average Intersection over Union (IoU) between a numpy array of boxes and k clusters.
        :param boxes: numpy array of shape (r, 2), where r is the number of rows
        :param clusters: numpy array of shape (k, 2) where k is the number of clusters
        :return: average IoU as a single float
        """
        return np.mean([np.max(iou(boxes[i], clusters)) for i in range(boxes.shape[0])])


    def translate_boxes(boxes):
        """
        Translates all the boxes to the origin.
        :param boxes: numpy array of shape (r, 4)
        :return: numpy array of shape (r, 2)
        """
        new_boxes = boxes.copy()
        for row in range(new_boxes.shape[0]):
            new_boxes[row][2] = np.abs(new_boxes[row][2] - new_boxes[row][0])
            new_boxes[row][3] = np.abs(new_boxes[row][3] - new_boxes[row][1])
        return np.delete(new_boxes, [0, 1], axis=1)


    def kmeans(boxes, k, dist=np.median):
        """
        Calculates k-means clustering with the Intersection over Union (IoU) metric.
        :param boxes: numpy array of shape (r, 2), where r is the number of rows
        :param k: number of clusters
        :param dist: distance function
        :return: numpy array of shape (k, 2)
        """
        rows = boxes.shape[0]

        distances = np.empty((rows, k))
        last_clusters = np.zeros((rows,))

        np.random.seed()

        # the Forgy method will fail if the whole array contains the same rows
        clusters = boxes[np.random.choice(rows, k, replace=False)]

        while True:
            for row in range(rows):
                distances[row] = 1 - iou(boxes[row], clusters)

            nearest_clusters = np.argmin(distances, axis=1)

            if (last_clusters == nearest_clusters).all():
                break

            for cluster in range(k):
                clusters[cluster] = dist(boxes[nearest_clusters == cluster], axis=0)

            last_clusters = nearest_clusters

        return clusters


    if __name__ == '__main__':
        a = np.array([[1, 2, 3, 4], [5, 7, 6, 8]])
        print(translate_boxes(a))

Open clauculate_anchors.py and enter the following code.

Remember to change the paths in it and the label names in CLASS_NAMES.

import os

import numpy as np

import xml.etree.cElementTree as et

from kmeans import kmeans, avg_iou

FILE_ROOT = "D:/AI/yolov5/VOCData/" # 根路径

ANNOTATION_ROOT = "Annotations" # 数据集标签文件夹路径

ANNOTATION_PATH = FILE_ROOT + ANNOTATION_ROOT

ANCHORS_TXT_PATH = "D:/AI/yolov5/VOCData/anchors.txt" # anchors文件保存位置

CLUSTERS = 9

CLASS_NAMES = ['move','back'] # 类别名称

def load_data(anno_dir, class_names):

xml_names = os.listdir(anno_dir)

boxes = []

for xml_name in xml_names:

xml_pth = os.path.join(anno_dir, xml_name)

tree = et.parse(xml_pth)

width = float(tree.findtext("./size/width"))

height = float(tree.findtext("./size/height"))

for obj in tree.findall("./object"):

cls_name = obj.findtext("name")

if cls_name in class_names:

xmin = float(obj.findtext("bndbox/xmin")) / width

ymin = float(obj.findtext("bndbox/ymin")) / height

xmax = float(obj.findtext("bndbox/xmax")) / width

ymax = float(obj.findtext("bndbox/ymax")) / height

box = [xmax - xmin, ymax - ymin]

boxes.append(box)

else:

continue

return np.array(boxes)

if __name__ == '__main__':

anchors_txt = open(ANCHORS_TXT_PATH, "w")

train_boxes = load_data(ANNOTATION_PATH, CLASS_NAMES)

count = 1

best_accuracy = 0

best_anchors = []

best_ratios = []

for i in range(10): ##### 可以修改,不要太大,否则时间很长

anchors_tmp = []

clusters = kmeans(train_boxes, k=CLUSTERS)

idx = clusters[:, 0].argsort()

clusters = clusters[idx]

# print(clusters)

for j in range(CLUSTERS):

anchor = [round(clusters[j][0] * 640, 2), round(clusters[j][1] * 640, 2)]

anchors_tmp.append(anchor)

print(f"Anchors:{anchor}")

temp_accuracy = avg_iou(train_boxes, clusters) * 100

print("Train_Accuracy:{:.2f}%".format(temp_accuracy))

ratios = np.around(clusters[:, 0] / clusters[:, 1], decimals=2).tolist()

ratios.sort()

print("Ratios:{}".format(ratios))

print(20 * "*" + " {} ".format(count) + 20 * "*")

count += 1

if temp_accuracy > best_accuracy:

best_accuracy = temp_accuracy

best_anchors = anchors_tmp

best_ratios = ratios

anchors_txt.write("Best Accuracy = " + str(round(best_accuracy, 2)) + '%' + "\r\n")

anchors_txt.write("Best Anchors = " + str(best_anchors) + "\r\n")

anchors_txt.write("Best Ratios = " + str(best_ratios))

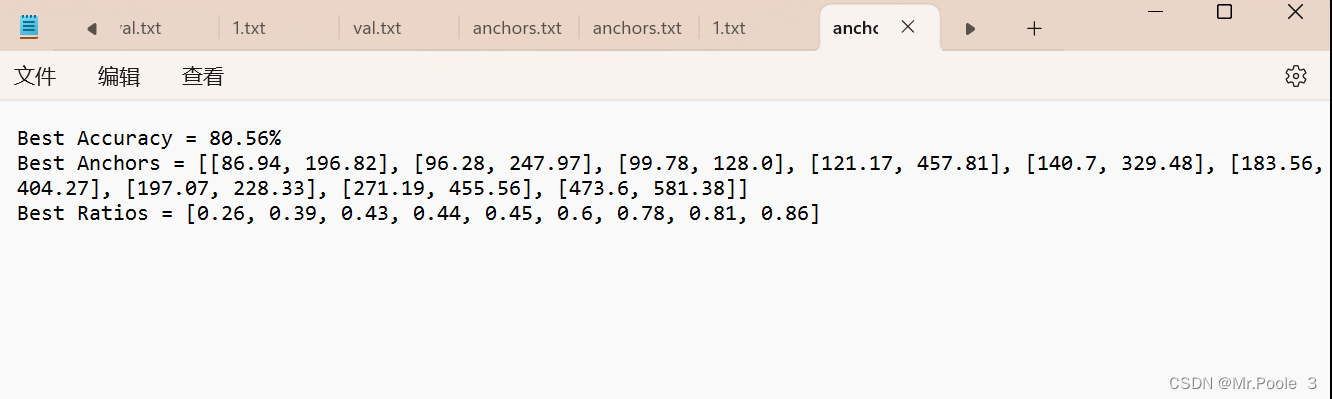

anchors_txt.close()运行clauculate_anchors.py后,会生成anchors.txt文件。
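The clustering above compares boxes by width and height only and uses 1 - IoU as the distance. The following pure-Python sketch shows that distance on a toy example; the anchor sizes are made up, and `wh_iou` and `nearest_anchor` are illustrative names rather than functions from kmeans.py.

```python
def wh_iou(box, anchor):
    """IoU of two boxes given as (width, height), both placed at the same corner."""
    inter = min(box[0], anchor[0]) * min(box[1], anchor[1])
    union = box[0] * box[1] + anchor[0] * anchor[1] - inter
    return inter / union


def nearest_anchor(box, anchors):
    """Index of the anchor with the smallest distance 1 - IoU to the box."""
    return min(range(len(anchors)), key=lambda i: 1 - wh_iou(box, anchors[i]))


anchors = [(0.1, 0.1), (0.3, 0.6), (0.8, 0.5)]  # made-up normalized sizes
print(wh_iou((0.2, 0.4), (0.3, 0.6)))           # overlap 0.08 over union 0.18
print(nearest_anchor((0.2, 0.4), anchors))      # the second anchor is the closest
```

Using IoU instead of Euclidean distance keeps large boxes from dominating the clustering, since the measure depends on relative overlap rather than absolute size.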

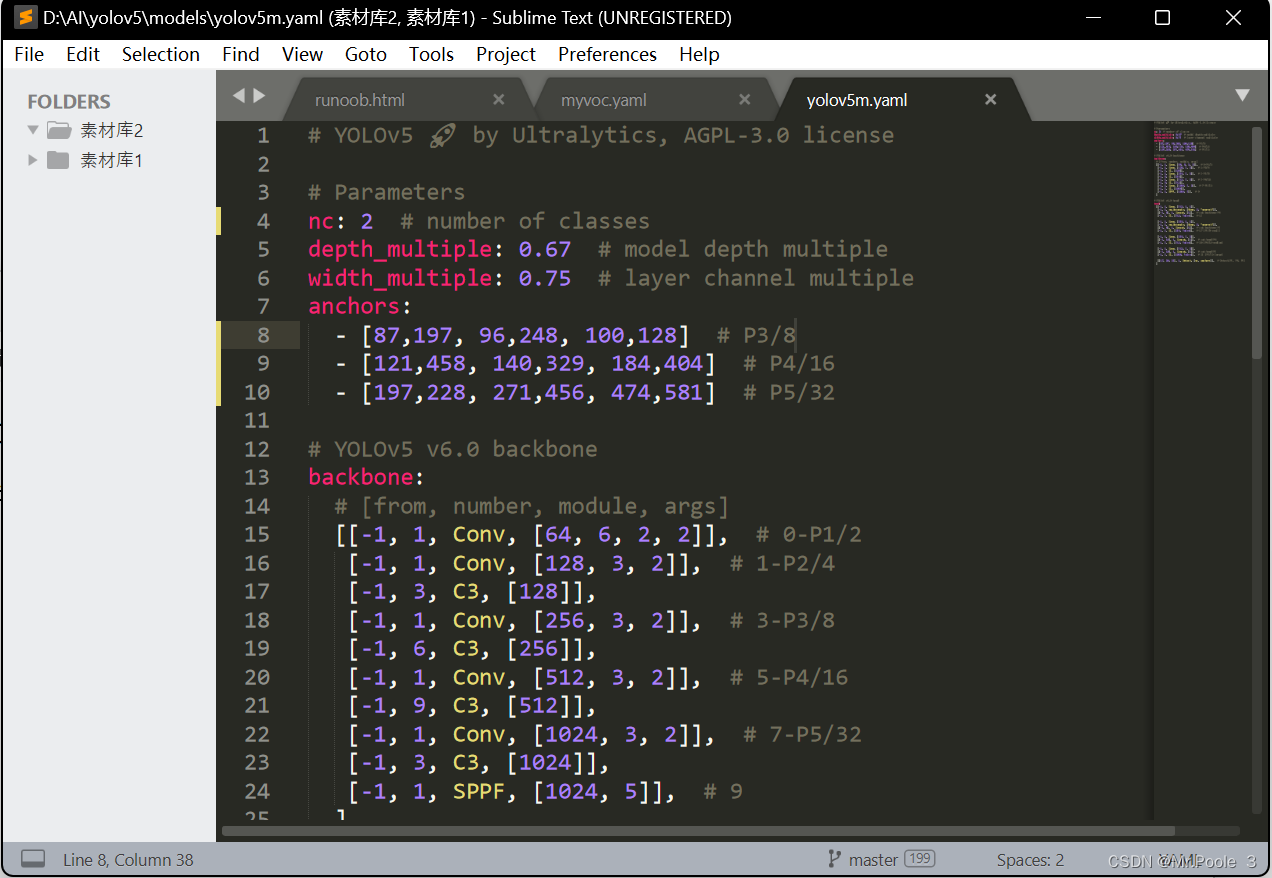

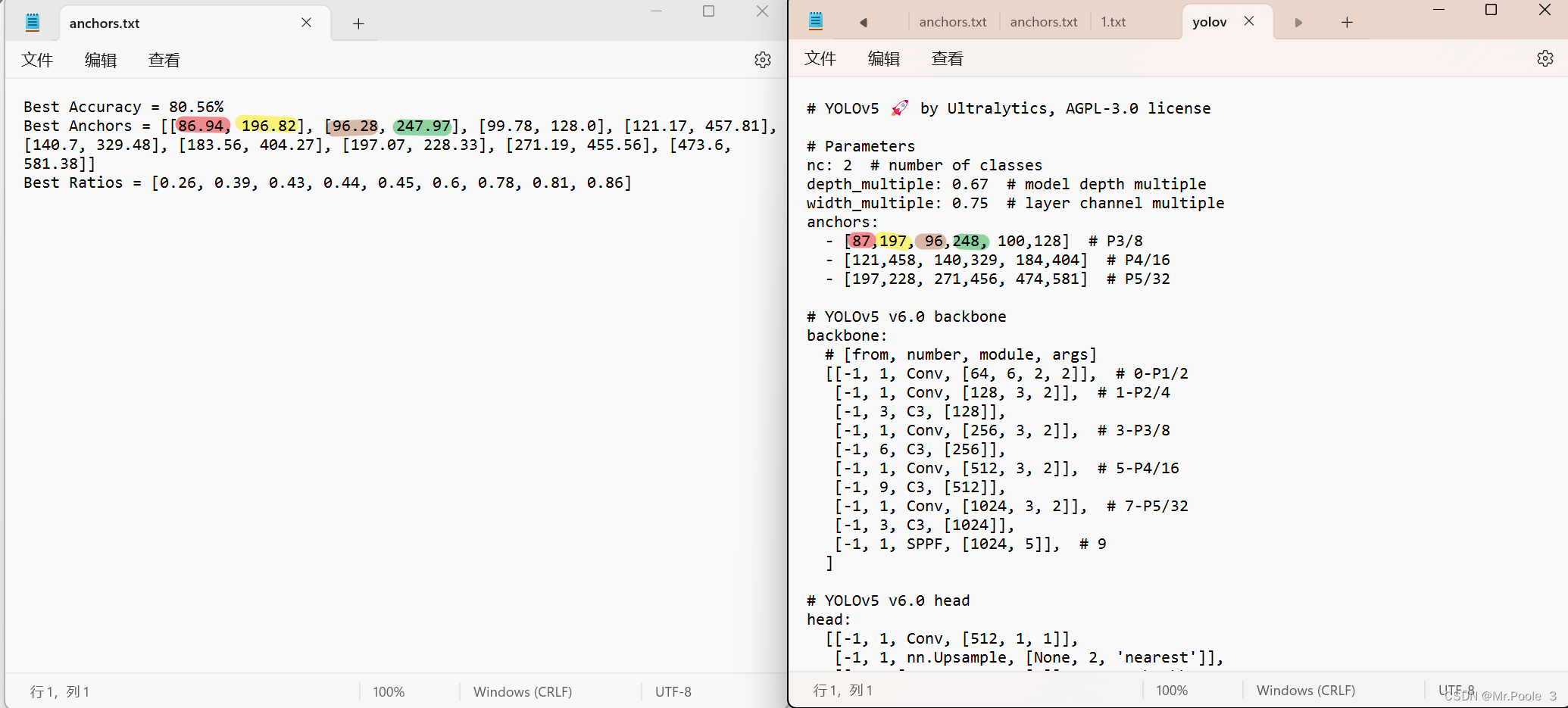

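Modify the model configuration file

Choose a model. The models folder under the yolov5 directory holds the model configuration files for the n, s, m, l, and x variants, in increasing size (training time grows with the size of the architecture). You can also look for other models on GitHub. This article uses yolov5m.

Open yolov5m.yaml in the yolov5\models folder.

Set nc: 2  # your number of labels

Then open the anchors.txt generated earlier and map its values, one to one, onto the anchors in yolov5m.yaml.

Use the Best Anchors values from anchors.txt, rounded to the nearest integer.

Match them one to one, as shown in the figure.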
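Rounding eighteen numbers by hand is error-prone, so here is a minimal sketch that turns the nine Best Anchors pairs into the three rows expected under anchors: in the model yaml. The anchor values below are invented, `anchors_to_yaml_rows` is a hypothetical helper, and the pairs are kept in the order they appear in anchors.txt.

```python
def anchors_to_yaml_rows(best_anchors):
    """Round 9 [w, h] pairs half-up and group them into 3 yaml rows of 3 anchors each."""
    if len(best_anchors) != 9:
        raise ValueError("expected 9 anchors (3 per detection layer)")
    rows = []
    for i in range(0, 9, 3):
        # int(v + 0.5) rounds positive values half-up, unlike Python's round()
        flat = [int(v + 0.5) for wh in best_anchors[i:i + 3] for v in wh]
        rows.append("  - [" + ",".join(str(v) for v in flat) + "]")
    return rows


# Invented example values in the same shape as the Best Anchors line.
best = [[28.4, 35.6], [40.5, 61.2], [55.0, 90.7],
        [72.3, 110.9], [95.6, 150.2], [120.1, 200.8],
        [160.7, 240.3], [210.2, 330.5], [300.9, 420.4]]
print("\n".join(anchors_to_yaml_rows(best)))
```

The three printed rows replace the three existing rows under anchors: in yolov5m.yaml, from the smallest detection scale to the largest.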

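Model training

Open cmd and activate your virtual environment.

Change into the yolov5 directory.

Training command

weights: path to the weights file

cfg: configuration file that stores the model structure

data: configuration file that lists the training and validation data

epochs: how many times the whole dataset is iterated over during training

batch-size: how many images are processed in one step, i.e. the mini-batch size for gradient descent

img-size: input image width and height

device: CUDA device; here I use device 0, which means training on the GPU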
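To get a feel for how epochs and batch-size interact, the following sketch counts mini-batches. It assumes 54 training images (60 labeled images with the 0.9 split used earlier); `training_batches` is an illustrative name, not a yolov5 function.

```python
import math


def training_batches(num_train_images, batch_size, epochs):
    """Return (mini-batches per epoch, mini-batches over the whole training run)."""
    per_epoch = math.ceil(num_train_images / batch_size)  # the last batch may be smaller
    return per_epoch, per_epoch * epochs


print(training_batches(num_train_images=54, batch_size=8, epochs=233))  # (7, 1631)
```

A larger batch size means fewer, heavier steps per epoch and needs more GPU memory, which is why 8 is a reasonable choice on a laptop GPU.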

    python train.py --weights weights/yolov5m.pt --cfg models/yolov5m.yaml --data data/myvoc.yaml --epochs 233 --batch-size 8 --img 640 --device 0

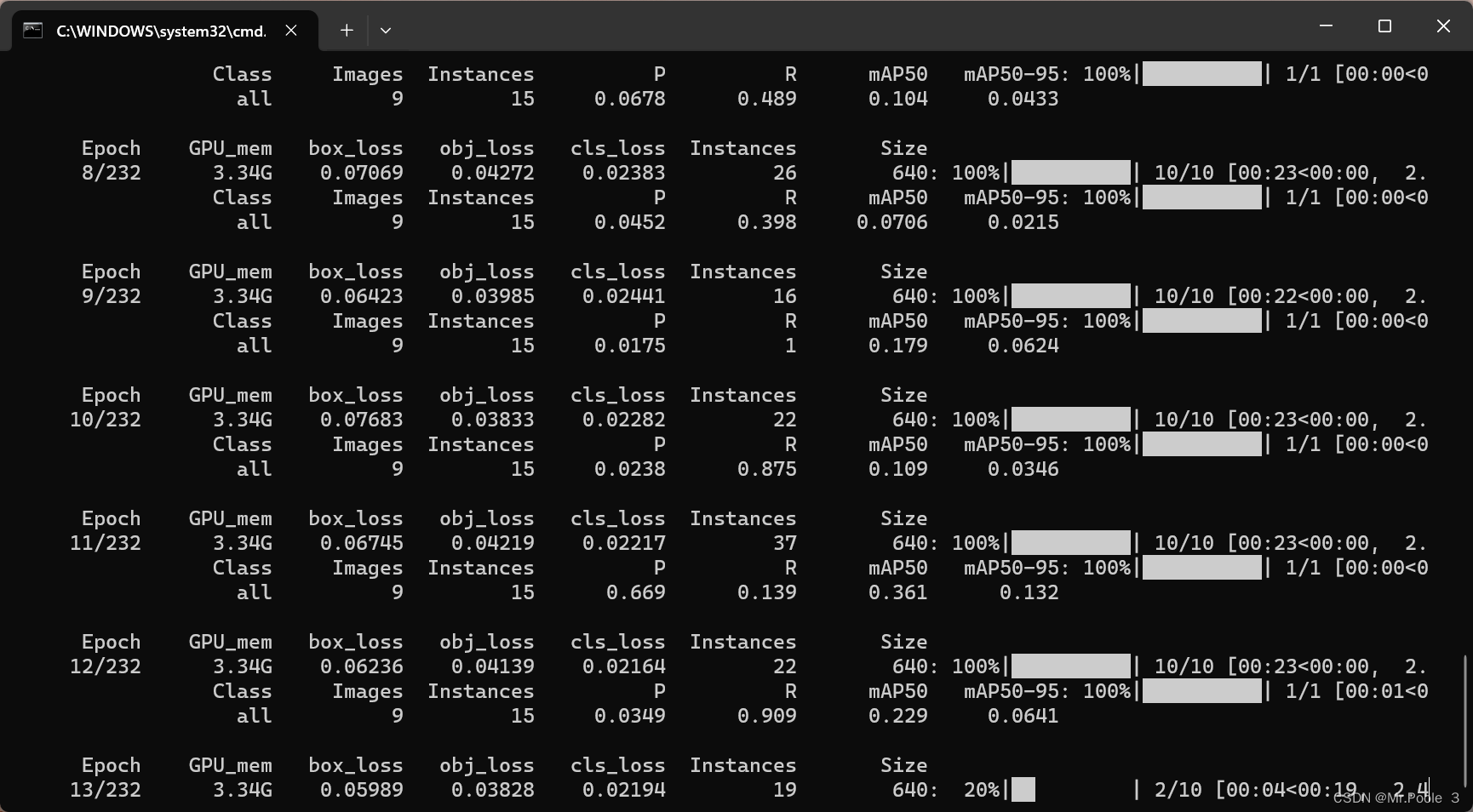

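Press Enter to start training!

The trained model best.pt is saved under runs/train/exp xx/weights in the yolov5 directory (the exact path is shown in the console output).

I trained on about 60 images for 232 epochs, which took 1 hour 32 minutes on an RTX 3060 Laptop GPU.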
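Since every run creates a new exp folder, it can be handy to locate the newest best.pt automatically. This is a small sketch under the assumption that runs are stored as runs/train/<run name>/weights/best.pt; the function name `latest_best_weights` is made up.

```python
from pathlib import Path


def latest_best_weights(yolov5_dir):
    """Return the most recently modified runs/train/*/weights/best.pt, or None if absent."""
    candidates = list(Path(yolov5_dir).glob("runs/train/*/weights/best.pt"))
    if not candidates:
        return None
    return max(candidates, key=lambda p: p.stat().st_mtime)


if __name__ == "__main__":
    print(latest_best_weights("."))  # run from inside the yolov5 directory
```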

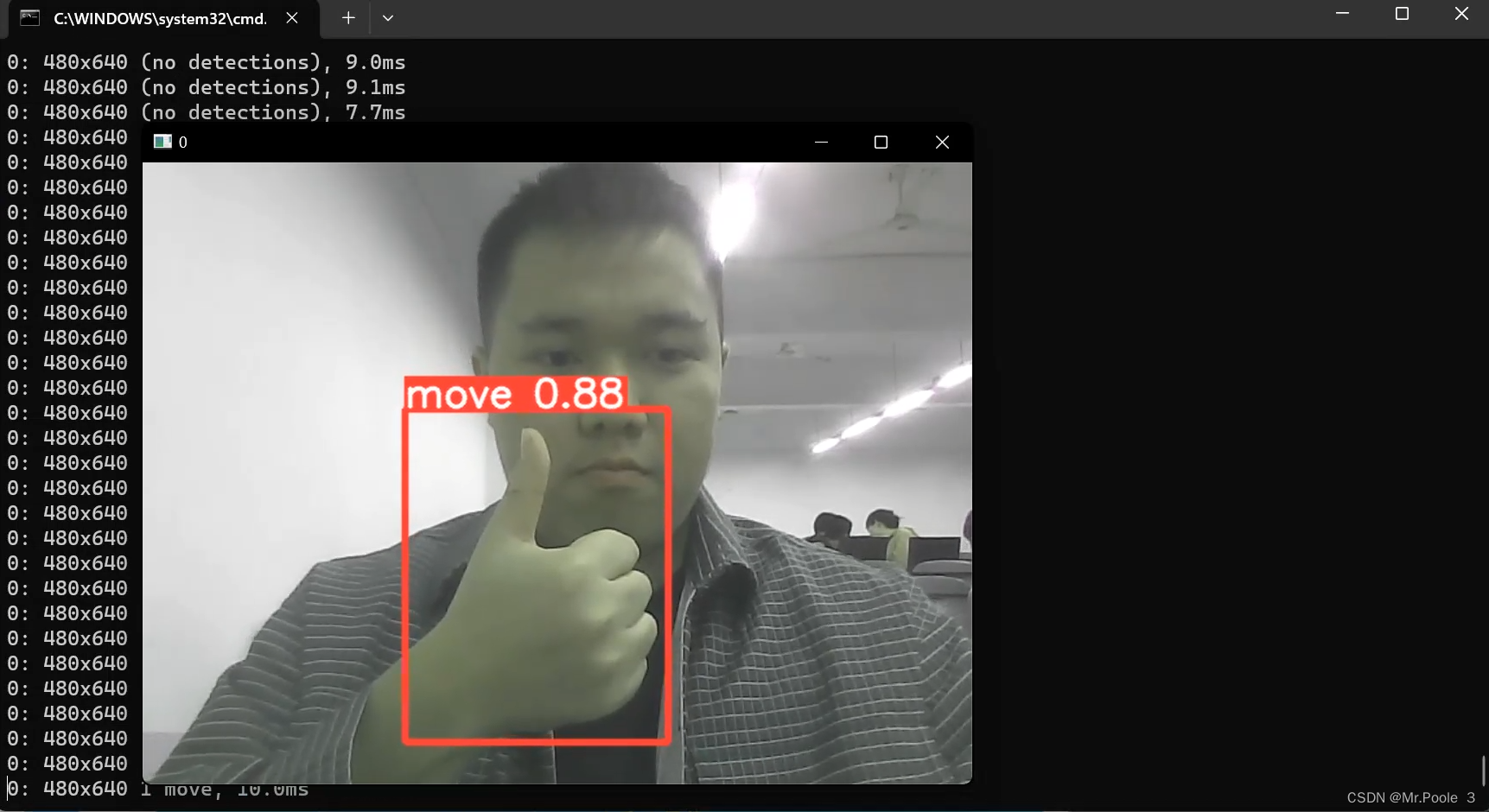

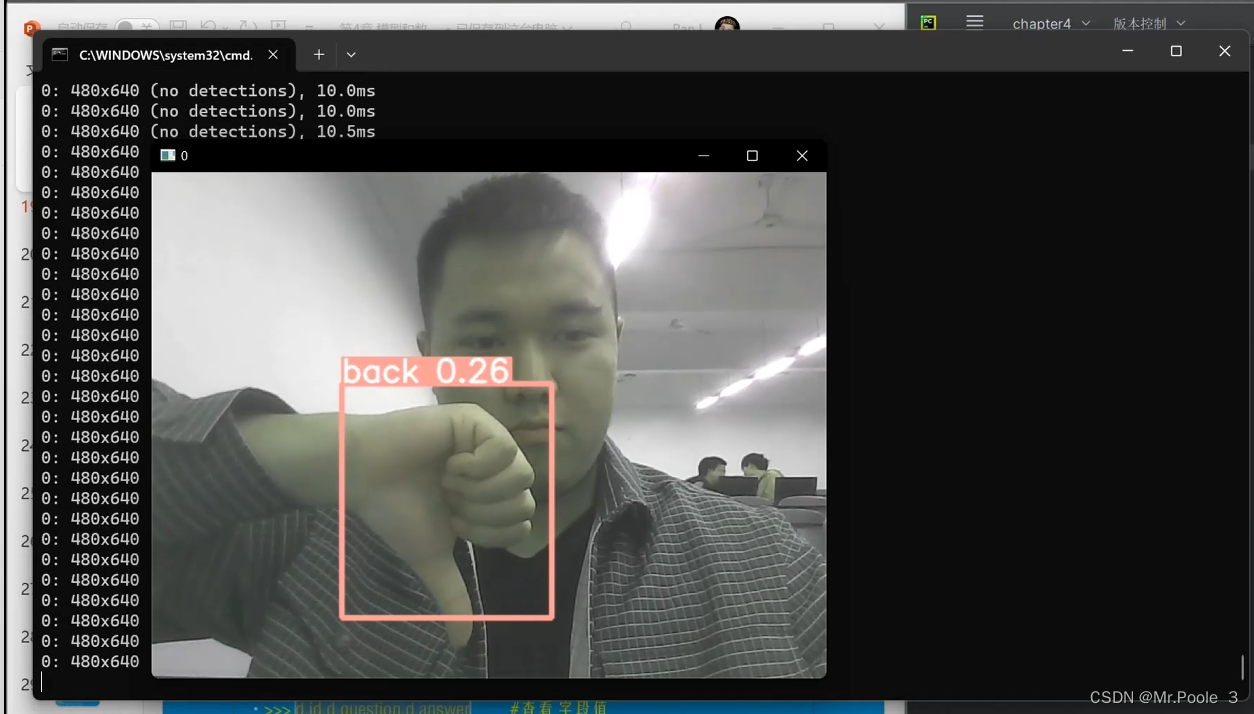

Use your own model

After training, copy best.pt and paste it into the yolov5 directory.

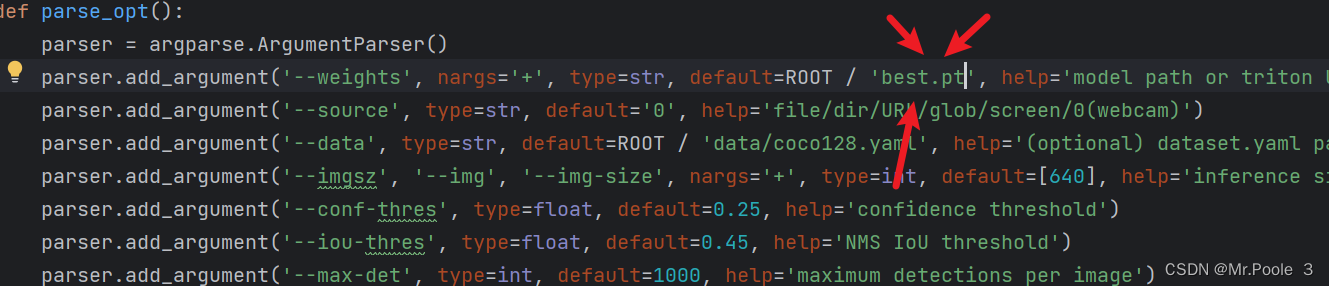

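Open detect.py, change the default in the weights line to your model, and set the default in the next line (the source argument) to '0' to use the laptop's webcam.

Run it directly after the change, and you are done!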
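If you would rather not edit detect.py, the same two settings can be passed on the command line through its --weights and --source options. The sketch below only builds and prints that command; `build_detect_command` is a hypothetical helper, and the file name best.pt assumes you copied the weights into the yolov5 directory as described above.

```python
import shlex
import sys


def build_detect_command(weights="best.pt", source="0"):
    """Build the argument list for detect.py; source "0" selects the first webcam."""
    return [sys.executable, "detect.py", "--weights", str(weights), "--source", str(source)]


cmd = build_detect_command()
# Print the command; run it from inside the yolov5 directory, e.g. with subprocess.run(cmd).
print(shlex.join(cmd))
```

Passing a path to an image or video file as the source would run detection on that file instead of the webcam.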
