
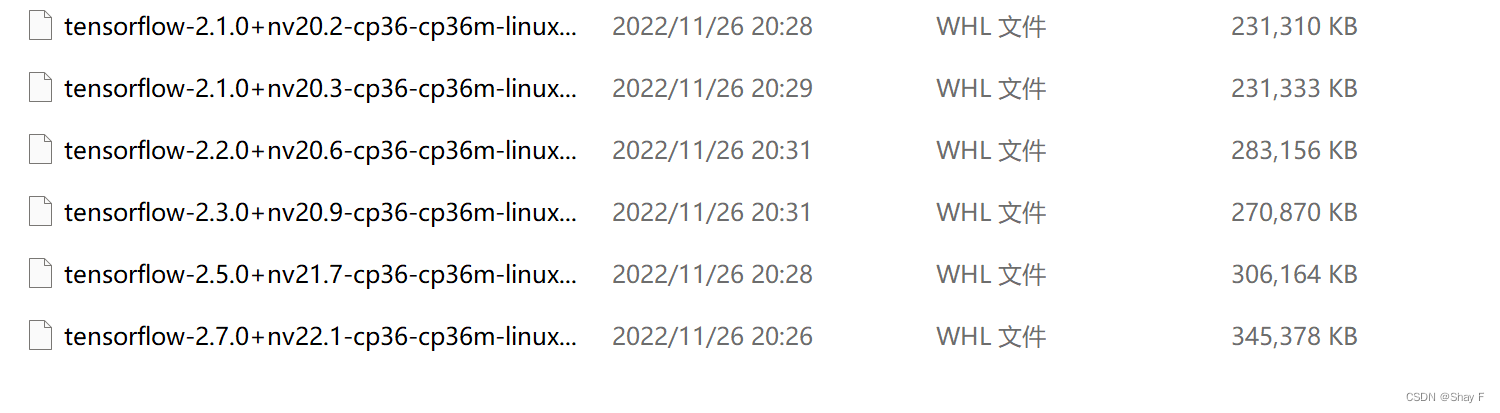


For a study project, I wanted a TensorFlow-based program that already ran on my PC to run on the board as well, so I started installing TensorFlow on this embedded board.

1. Problems I ran into

My environment: Python 3.6.9, CUDA 10.2.

(1) First, installing h5py caused some trouble

Solution:

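First install Cython. If the download is slow, append -i http://pypi.douban.com/simple/ --trusted-host pypi.douban.com to the command:

pip3 install Cython

Installing h5py with pip3 still failed for me, so following https://blog.csdn.net/weixin_42062018/article/details/119546939 I installed it through apt instead:

sudo apt install python3-h5py

After that, starting python3 on the command line and running import h5py works fine.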
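Before importing anything heavy, it can help to check which modules this Python interpreter can actually locate. The sketch below is my own helper (the name `check_modules` is hypothetical); it uses `importlib.util.find_spec`, which looks up a top-level module without executing it, so a module that crashes on import (as `tensorflow` later does in this post) cannot take the check down with it.

```python
import importlib.util


def check_modules(names):
    """Return {module_name: True/False} depending on whether Python can locate it.

    find_spec() searches for top-level modules without running their
    __init__ code, so it is safe even for modules that crash on import.
    """
    return {name: importlib.util.find_spec(name) is not None for name in names}


# Modules mentioned in this post; adjust the list for your own project.
REQUIRED = ["Cython", "h5py", "numpy", "scipy", "tensorflow"]

for name, found in check_modules(REQUIRED).items():
    print("{:<12} {}".format(name, "found" if found else "MISSING"))
```

Note that "found" only means the files are on the path; it does not prove the module imports cleanly.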

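(2) Checking CUDA, cuDNN, OpenCV, etc.

Reference: https://blog.csdn.net/qq_42877824/article/details/111303338

1. CUDA

nvcc -V

This prints the CUDA version directly; if it doesn't, CUDA is probably not configured properly (for example, its environment variables are not set).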
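If you want to record the CUDA version from a script (for example, to log it next to each test run), the `nvcc -V` output can be parsed. This is a sketch assuming nvcc prints its usual `release X.Y` line; both function names are my own.

```python
import re
import shutil
import subprocess


def parse_nvcc_version(text):
    """Extract (major, minor) from `nvcc -V` output, or None if not found.

    nvcc reports a line such as:
        Cuda compilation tools, release 10.2, V10.2.89
    """
    match = re.search(r"release (\d+)\.(\d+)", text)
    if match is None:
        return None
    return int(match.group(1)), int(match.group(2))


def get_cuda_version(nvcc="nvcc"):
    """Run nvcc and return its CUDA version, or None if nvcc cannot be found."""
    if shutil.which(nvcc) is None:
        # Often means the CUDA bin directory has not been added to PATH.
        return None
    # stdout=PIPE / universal_newlines keep this compatible with Python 3.6.
    result = subprocess.run([nvcc, "-V"], stdout=subprocess.PIPE,
                            stderr=subprocess.STDOUT, universal_newlines=True)
    return parse_nvcc_version(result.stdout)
```

If nvcc is not on PATH, you can pass its full path instead; on JetPack it is commonly /usr/local/cuda/bin/nvcc, but check your own install.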

2. OpenCV 4.1.1

Check the OpenCV installation:

pkg-config opencv4 --modversion

3. cuDNN

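To check cuDNN, build and run its bundled sample:

cd /usr/src/cudnn_samples_v8/mnistCUDNN/  # enter the sample directory

sudo make  # build the sample

./mnistCUDNN  # run it

# If the above won't run, add execute permission:

sudo chmod a+x mnistCUDNN  # make the binary executable

(3) Installing related packages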

References:

https://blog.csdn.net/dvd_sun/article/details/88975005

https://blog.csdn.net/l_jsaphsj/article/details/103385042

sudo pip3 install -U grpcio absl-py py-cpuinfo psutil portpicker six mock requests gast astor termcolor

sudo apt-get install python3-pip

sudo apt-get install libhdf5-serial-dev

sudo apt-get install hdf5-tools

My board already had some of these packages, so this list may not be complete.
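Since the board may already have some of these packages, one way to see which of the pip packages from the install command above are still missing is to query the installed-package metadata. A sketch (helper names are my own; it only checks presence, not whether the versions suit TensorFlow):

```python
try:
    from importlib import metadata as _metadata  # Python 3.8+

    def installed_version(dist_name):
        """Return the installed version of a distribution, or None."""
        try:
            return _metadata.version(dist_name)
        except _metadata.PackageNotFoundError:
            return None
except ImportError:
    # Python 3.6/3.7 (such as the board's 3.6.9): fall back to setuptools.
    import pkg_resources

    def installed_version(dist_name):
        """Return the installed version of a distribution, or None."""
        try:
            return pkg_resources.get_distribution(dist_name).version
        except pkg_resources.DistributionNotFound:
            return None


# The pip packages from the install command in this post.
PIP_PACKAGES = ["grpcio", "absl-py", "py-cpuinfo", "psutil", "portpicker", "six",
                "mock", "requests", "gast", "astor", "termcolor"]


def missing_packages(dist_names):
    """Return the distributions from dist_names that are not installed."""
    return [name for name in dist_names if installed_version(name) is None]


print("Still missing:", missing_packages(PIP_PACKAGES) or "nothing")
```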

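(4) Installing TensorFlow 2.0.0

I installed version 2.0.0 first, following https://blog.csdn.net/qq_36229876/article/details/104046824

sudo pip3 install tensorflow_gpu-2.0.0+nv19.11-cp36-cp36m-linux_aarch64.whl -i http://pypi.douban.com/simple/ --trusted-host pypi.douban.com

Run this command from the directory containing the whl file; with the Douban mirror added, pip also installs all the required dependencies.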
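If you script the installation, the same command can be assembled programmatically. This sketch (the helper `pip_install_cmd` is hypothetical) derives `--trusted-host` from the index URL so the two cannot drift apart, since pip only relaxes its HTTPS check for the host named there. It calls pip through `sys.executable -m pip`, so packages land in the same interpreter that later imports them; running the script with sudo python3 would mirror the sudo pip3 used above.

```python
import subprocess
import sys
from urllib.parse import urlparse

MIRROR = "http://pypi.douban.com/simple/"  # the mirror used in this post


def pip_install_cmd(target, index_url=MIRROR):
    """Build a pip command that installs `target` (a package name or .whl path)
    through `index_url`, marking that index's host as trusted."""
    host = urlparse(index_url).hostname
    return [sys.executable, "-m", "pip", "install", target,
            "-i", index_url, "--trusted-host", host]


def pip_install(target, index_url=MIRROR):
    """Run the command and return pip's exit code (0 on success)."""
    return subprocess.call(pip_install_cmd(target, index_url))
```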

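TensorFlow 2.0.0 installed successfully.

Next, I started Python and tried import tensorflow.

The first error was Illegal instruction (core dumped).

To fix it I followed a reference post; the fix involves editing a file with Vim, so if you don't know Vim, look up a quick guide.

Roughly: use the arrow keys to move to where you want to edit, then press i (or the Insert key) to start editing at the cursor.

After adding the line at the end of the file, save and quit by typing:

:wq

Once that was fixed, I ran into a segmentation fault instead: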

Segmentation fault (core dumped)

This one was much harder: I could hardly find a working fix online.

Some posts say that running import numpy and import scipy before import tensorflow fixes it, but it didn't for me.
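When an import crashes the interpreter, it is easier to experiment from a parent process that survives the crash. This sketch (function names are my own) runs the imports in a fresh child interpreter with CPython's standard `-X faulthandler` option, which makes the child print the Python traceback at the moment of a fatal signal. It does not fix anything; it only helps narrow down which import crashes and with which signal.

```python
import signal
import subprocess
import sys


def probe_imports(modules, timeout=300):
    """Import `modules` in order inside a fresh interpreter.

    A segfault or illegal instruction only kills the child process.
    Returns (returncode, stderr); stderr holds any faulthandler traceback.
    """
    code = "; ".join("import " + name for name in modules)
    result = subprocess.run([sys.executable, "-X", "faulthandler", "-c", code],
                            stdout=subprocess.PIPE, stderr=subprocess.PIPE,
                            universal_newlines=True, timeout=timeout)
    return result.returncode, result.stderr


def describe(returncode):
    """Turn a child's return code into a readable verdict (POSIX)."""
    if returncode == 0:
        return "imported OK"
    if returncode < 0:
        # A negative code means the child was killed by that signal,
        # e.g. SIGSEGV (segmentation fault) or SIGILL (illegal instruction).
        return "crashed with " + signal.Signals(-returncode).name
    return "failed with exit code {} (see stderr)".format(returncode)
```

For example, describe(probe_imports(["numpy", "scipy", "tensorflow"])[0]) tests the import-order workaround mentioned above without risking the current session.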

I concluded that the cause was most likely a mismatch between the CUDA version and this TensorFlow build.
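When comparing your setup against what a TensorFlow build expects, it helps to record the exact cuDNN version alongside the CUDA one. A sketch that reads the version macros from the cuDNN header; the header paths are assumptions about a typical install, so check where yours lives.

```python
import re

# Assumption: cuDNN 8 keeps its version macros in cudnn_version.h;
# cuDNN 7 installs kept them in cudnn.h instead.
CUDNN_HEADERS = ["/usr/include/cudnn_version.h", "/usr/include/cudnn.h"]


def parse_cudnn_version(header_text):
    """Return (major, minor, patch) from the CUDNN_* #defines, or None."""
    parts = []
    for macro in ("CUDNN_MAJOR", "CUDNN_MINOR", "CUDNN_PATCHLEVEL"):
        match = re.search(r"#define\s+" + macro + r"\s+(\d+)", header_text)
        if match is None:
            return None
        parts.append(int(match.group(1)))
    return tuple(parts)


def get_cudnn_version(headers=CUDNN_HEADERS):
    """Try each header in turn; return the first version found, or None."""
    for path in headers:
        try:
            with open(path) as f:
                version = parse_cudnn_version(f.read())
        except OSError:  # header missing or unreadable
            continue
        if version is not None:
            return version
    return None
```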

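2. Installing TensorFlow 2.1.0

I didn't want to change the CUDA version, so I changed the TensorFlow version instead.

Installing directly with pip kept failing, so I looked for suitable whl files instead: I downloaded several builds for Python 3.6 from the official site to try on the board.

Note: pick whl files whose names end in aarch64, since we are installing on the Jetson Nano (an ARM64 board).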
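Wheel filenames encode the target interpreter and platform (PEP 427: name-version(-build)-pythontag-abitag-platformtag.whl), so the aarch64 check can be automated before copying files to the board. A sketch with my own function names; it ignores pure-Python wheels (py3-none-any), which run anywhere.

```python
import platform
import sys


def wheel_tags(filename):
    """Split a wheel filename into (python_tags, platform_tags).

    Tags may be compressed sets joined with '.', e.g. 'cp36.cp37'.
    """
    if not filename.endswith(".whl"):
        raise ValueError("not a wheel: " + filename)
    parts = filename[:-len(".whl")].split("-")
    if len(parts) not in (5, 6):  # 6 when an optional build tag is present
        raise ValueError("unexpected wheel filename: " + filename)
    python_tag, _abi_tag, platform_tag = parts[-3:]
    return python_tag.split("."), platform_tag.split(".")


def wheel_fits(filename, python_tag=None, machine=None):
    """True if the wheel targets this interpreter (cpXY) and CPU architecture."""
    python_tag = python_tag or "cp{}{}".format(*sys.version_info[:2])
    machine = machine or platform.machine()  # 'aarch64' on a Jetson Nano
    py_tags, plat_tags = wheel_tags(filename)
    return python_tag in py_tags and any(t.endswith(machine) for t in plat_tags)
```

Passing python_tag="cp36" and machine="aarch64" explicitly lets you vet files on a PC before moving them to the Nano.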

First I tried:

sudo pip3 install tensorflow-2.1.0+nv20.2-cp36-cp36m-linux_aarch64.whl -i http://pypi.douban.com/simple/ --trusted-host pypi.douban.com

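Disappointingly, scipy==1.4.1 failed to install, and installing it on its own failed too.

Fix for the scipy==1.4.1 installation problem:

Likewise, download the scipy whl file, cd to its location, and run sudo pip3 install xxxxx.whl.

Here is the download link: https://blog.csdn.net/weixin_43220532/article/details/109156240

With scipy installed, I tried again: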

sudo pip3 install tensorflow-2.1.0+nv20.2-cp36-cp36m-linux_aarch64.whl

Woohoo, the installation finished.

Then:

python3

import tensorflow as tf

tf.__version__

This printed 2.1.0.
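Beyond the version string, it is worth confirming that this GPU build actually sees the Nano's GPU; if it finds none, TensorFlow simply runs on the CPU. A small sketch (the helper name is my own); it uses `tf.config.experimental.list_physical_devices`, which exists in TensorFlow 2.0 and 2.1.

```python
def tensorflow_summary():
    """Return TensorFlow's version and visible GPUs, or None if not importable."""
    try:
        import tensorflow as tf
    except ImportError:
        return None
    gpus = tf.config.experimental.list_physical_devices("GPU")
    return {"version": tf.__version__, "gpus": [gpu.name for gpu in gpus]}


summary = tensorflow_summary()
if summary is None:
    print("tensorflow is not importable in this interpreter")
else:
    print("TensorFlow", summary["version"], "GPUs:", summary["gpus"] or "none")
```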

Finally, my program ran successfully as well.

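3. TensorFlow (Python 3.6) whl files for Jetson Nano: download share

You can find them on the official site; here are the ones I collected this time (including scipy 1.4.1):

Link: https://pan.baidu.com/s/1r8npdT7u0fiK8-KCA7KXHQ

Extraction code: kxbd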
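Large wheels downloaded from a network drive are easy to truncate. Before running pip on them, a quick check like this sketch can catch a broken download. It is a heuristic of my own (the function name is hypothetical), not a substitute for comparing against a published checksum: a wheel is a zip archive, so it should open, pass the CRC check, and contain a .dist-info/WHEEL metadata file.

```python
import zipfile


def wheel_looks_intact(path):
    """Heuristic integrity check for a downloaded .whl file."""
    try:
        with zipfile.ZipFile(path) as archive:
            # testzip() returns the name of the first corrupt member, or None.
            if archive.testzip() is not None:
                return False
            return any(name.endswith(".dist-info/WHEEL")
                       for name in archive.namelist())
    except (zipfile.BadZipFile, OSError):  # not a zip, or file missing
        return False
```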

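I'm an undergraduate beginner and not very good at writing these; if you run into the same problem, feel free to use this as a reference.

If you spot any mistakes, please let me know. Thanks!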
