1. Additive attention

import math
import torch
from torch import nn
from d2l import torch as d2l

def masked_softmax(X, valid_lens):
    """Perform softmax by masking elements on the last axis."""
    # X: 3D tensor; valid_lens: 1D or 2D tensor
    if valid_lens is None:
        return nn.functional.softmax(X, dim=-1)
    else:
        shape = X.shape
        if valid_lens.dim() == 1:
            valid_lens = torch.repeat_interleave(valid_lens, shape[1])
        else:
            valid_lens = valid_lens.reshape(-1)
        # Masked elements on the last axis are replaced with a very large
        # negative value, so their softmax output is 0
        X = d2l.sequence_mask(X.reshape(-1, shape[-1]), valid_lens,
                              value=-1e6)
        return nn.functional.softmax(X.reshape(shape), dim=-1)
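To see what the masking does, here is a minimal sketch that runs without d2l. The helper `masked_softmax_sketch` is a hypothetical stand-in written only for this illustration. It assumes the same convention as the function above: positions at or beyond the valid length are filled with -1e6 before the softmax, and `valid_lens` may be 1D (one length per batch element) or 2D (one length per query).

```python
import torch
from torch import nn


def masked_softmax_sketch(X, valid_lens):
    """Hypothetical stand-in for masked_softmax without the d2l dependency."""
    if valid_lens is None:
        return nn.functional.softmax(X, dim=-1)
    shape = X.shape
    if valid_lens.dim() == 1:
        # One length per batch element: repeat it for every query row
        valid_lens = torch.repeat_interleave(valid_lens, shape[1])
    else:
        # One length per (batch element, query) pair
        valid_lens = valid_lens.reshape(-1)
    flat = X.reshape(-1, shape[-1])
    # True where the position lies inside the valid length
    keep = torch.arange(shape[-1], device=X.device)[None, :] < valid_lens[:, None]
    flat = flat.masked_fill(~keep, -1e6)
    return nn.functional.softmax(flat.reshape(shape), dim=-1)


X = torch.rand(2, 2, 4)
# 1D lengths: first batch element keeps 2 positions, second keeps 3
w_1d = masked_softmax_sketch(X, torch.tensor([2, 3]))
# 2D lengths: a separate length for each query
w_2d = masked_softmax_sketch(X, torch.tensor([[1, 3], [2, 4]]))
print(w_1d)
print(w_2d)
```

In both cases each row still sums to 1, and the masked positions receive weight 0.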

class AdditiveAttention(nn.Module):
    """Additive attention."""
    def __init__(self, key_size, query_size, num_hiddens, dropout, **kwargs):
        super(AdditiveAttention, self).__init__(**kwargs)
        self.w_k = nn.Linear(key_size, num_hiddens, bias=False)
        self.w_q = nn.Linear(query_size, num_hiddens, bias=False)
        self.w_v = nn.Linear(num_hiddens, 1, bias=False)
        self.dropout = nn.Dropout(dropout)

    def forward(self, queries, keys, values, valid_lens):
        queries, keys = self.w_q(queries), self.w_k(keys)
        # Add queries and keys via broadcasting:
        # queries.unsqueeze(2): (batch_size, num_queries, 1, num_hiddens)
        # keys.unsqueeze(1): (batch_size, 1, num_kv_pairs, num_hiddens)
        features = queries.unsqueeze(2) + keys.unsqueeze(1)
        features = torch.tanh(features)
        # w_v has a single output, so squeeze the last dimension:
        # scores: (batch_size, num_queries, num_kv_pairs)
        scores = self.w_v(features).squeeze(-1)
        self.attention_weights = masked_softmax(scores, valid_lens)
        # Batch matrix multiplication (bmm):
        # output: (batch_size, num_queries, value_dim)
        return torch.bmm(self.dropout(self.attention_weights), values)
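In formula form, the forward pass above computes the additive scoring function for one query and one key. The symbols here are chosen to mirror the layers `w_q`, `w_k`, and `w_v` in the class, with $h$ standing for `num_hiddens`; none of the layers has a bias term.

$$
a(\mathbf{q}, \mathbf{k}) = \mathbf{w}_v^\top \tanh\left(\mathbf{W}_q \mathbf{q} + \mathbf{W}_k \mathbf{k}\right) \in \mathbb{R},
\qquad
\mathbf{W}_q \in \mathbb{R}^{h \times \text{query\_size}},\;
\mathbf{W}_k \in \mathbb{R}^{h \times \text{key\_size}},\;
\mathbf{w}_v \in \mathbb{R}^{h}
$$

Because the query and the key are projected separately to the same hidden size, they may have different input dimensions, as in the test that follows (20 versus 2).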

Input test:

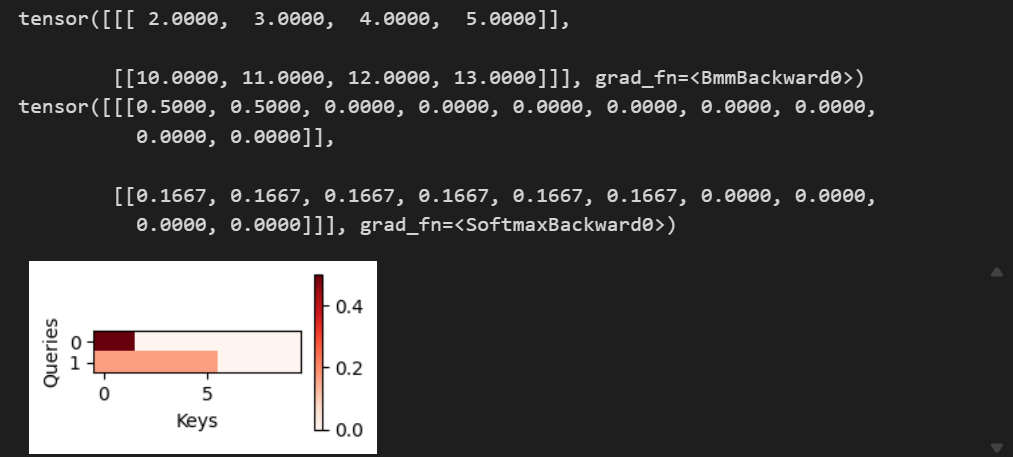

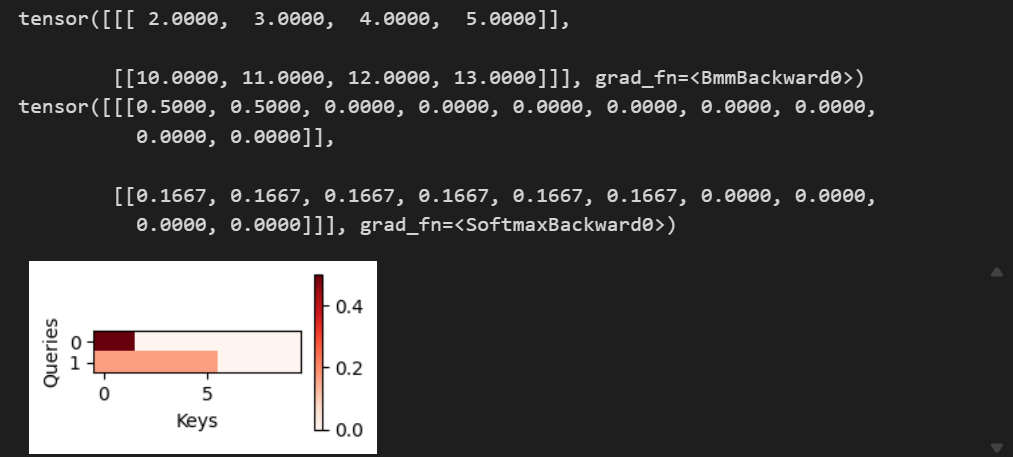

queries, keys = torch.normal(0, 1, (2, 1, 20)), torch.ones((2, 10, 2))
values = torch.arange(40, dtype=torch.float32).reshape(1, 10, 4).repeat(2, 1, 1)
valid_lens = torch.tensor([2, 6])
attention = AdditiveAttention(key_size=2, query_size=20, num_hiddens=8, dropout=0.1)
attention.eval()
print(attention(queries, keys, values, valid_lens))
d2l.show_heatmaps(attention.attention_weights.reshape((1, 1, 2, 10)),
                  xlabel='Keys', ylabel='Queries')
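The printed result can be checked by hand. Every key is the same all-ones vector, so every key receives the same score and the weights are uniform over the valid positions: 1/2 over the first 2 keys for the first batch element and 1/6 over the first 6 keys for the second. The output is therefore the mean of the valid rows of `values`. The sketch below rebuilds those uniform weights directly rather than calling `AdditiveAttention`; the names `uniform_weights` and `expected` are introduced here for illustration only.

```python
import torch

values = torch.arange(40, dtype=torch.float32).reshape(1, 10, 4).repeat(2, 1, 1)
valid_lens = torch.tensor([2, 6])

# 1.0 inside the valid length, 0.0 outside: shape (2, 10)
positions = torch.arange(10)
mask = (positions[None, :] < valid_lens[:, None]).float()

# Uniform weights over the valid keys, one query per batch element: (2, 1, 10)
uniform_weights = (mask / valid_lens[:, None]).unsqueeze(1)

# Weighted sum of the values: (2, 1, 4)
expected = torch.bmm(uniform_weights, values)
print(expected)
```

The rows of `values` are [0, 1, 2, 3], [4, 5, 6, 7], and so on, so the two means are [2, 3, 4, 5] and [10, 11, 12, 13]. In eval mode, where dropout is inactive, the attention output should agree with this up to floating-point rounding.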

2. Scaled dot-product attention

import math
import torch
from torch import nn
from d2l import torch as d2l

def sequence_mask(X, valid_lens, value=float("-inf")):
    """A simple masking implementation (writes into X in place)."""
    batch_size, seq_len = X.shape
    mask = torch.arange(seq_len, device=X.device).expand(batch_size, seq_len) < valid_lens.unsqueeze(1)
    X[~mask] = value
    return X
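Note that `X[~mask] = value` writes into `X` itself, so the tensor passed in by the caller is changed. If that side effect is unwanted, one possible variant uses `Tensor.masked_fill`, which returns a new tensor. The function name `sequence_mask_out_of_place` is hypothetical and used only for this sketch.

```python
import torch


def sequence_mask_out_of_place(X, valid_lens, value=float("-inf")):
    """Hypothetical variant of sequence_mask that leaves X untouched."""
    seq_len = X.shape[1]
    # True where the position lies inside the valid length
    keep = torch.arange(seq_len, device=X.device)[None, :] < valid_lens[:, None]
    # masked_fill returns a copy with `value` written where ~keep is True
    return X.masked_fill(~keep, value)


X = torch.ones(2, 4)
out = sequence_mask_out_of_place(X, torch.tensor([1, 3]), value=0.0)
print(out)  # masked copy
print(X)    # original input, unchanged
```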

def masked_softmax(X, valid_lens):
    """Perform softmax by masking elements on the last axis."""
    if valid_lens is None:
        return nn.functional.softmax(X, dim=-1)
    else:
        shape = X.shape
        if valid_lens.dim() == 1:
            # One length per batch element: repeat it for every query
            valid_lens = valid_lens.unsqueeze(1).repeat(1, shape[1])
        X = X.reshape(-1, shape[-1])
        X = sequence_mask(X, valid_lens.reshape(-1), value=-1e6)
        return nn.functional.softmax(X.reshape(shape), dim=-1)

class DotProductAttention(nn.Module):
    """Scaled dot-product attention."""
    def __init__(self, dropout, **kwargs):
        super(DotProductAttention, self).__init__(**kwargs)
        self.dropout = nn.Dropout(dropout)

    # Shape of queries: (batch_size, number of queries, d)
    # Shape of keys: (batch_size, number of key-value pairs, d)
    # Shape of values: (batch_size, number of key-value pairs, value dimension)
    # Shape of valid_lens: (batch_size,) or (batch_size, number of queries)
    def forward(self, queries, keys, values, valid_lens=None):
        d = queries.shape[-1]
        # keys.transpose(1, 2) swaps the last two dimensions of keys
        scores = torch.bmm(queries, keys.transpose(1, 2)) / math.sqrt(d)
        self.attention_weights = masked_softmax(scores, valid_lens)
        return torch.bmm(self.dropout(self.attention_weights), values)
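Written as a formula for a single batch element, the forward pass above is the usual scaled dot-product attention. The symbols $n$ (number of queries), $m$ (number of key-value pairs), and $v$ (value dimension) are notation introduced here to match the shape comments in the class.

$$
\mathrm{softmax}\left(\frac{\mathbf{Q}\mathbf{K}^\top}{\sqrt{d}}\right)\mathbf{V} \in \mathbb{R}^{n \times v},
\qquad
\mathbf{Q} \in \mathbb{R}^{n \times d},\;
\mathbf{K} \in \mathbb{R}^{m \times d},\;
\mathbf{V} \in \mathbb{R}^{m \times v}
$$

Unlike additive attention, this requires queries and keys to share the same feature size $d$, and it has no learnable parameters of its own.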

Input test:

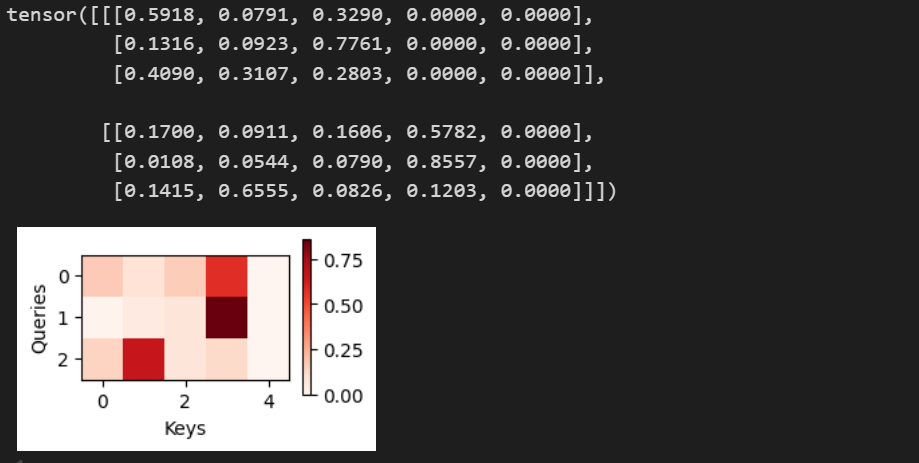

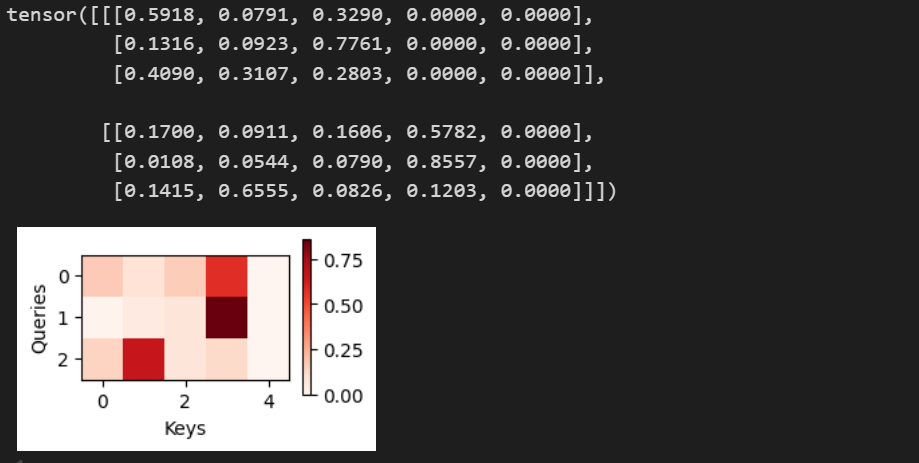

queries = torch.normal(0, 1, (2, 3, 4))
keys = torch.normal(0, 1, (2, 5, 4))
values = torch.normal(0, 1, (2, 5, 6))
valid_lens = torch.tensor([3, 4])
attention = DotProductAttention(dropout=0.5)
attention.eval()
attention(queries, keys, values, valid_lens)
print(attention.attention_weights.shape)
d2l.show_heatmaps(attention.attention_weights[1].reshape((1, 1, 3, 5)),
                  xlabel='Keys', ylabel='Queries')
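The division by `math.sqrt(d)` in `forward` keeps the scale of the scores independent of the feature size. If the components of a query and a key are independent with zero mean and unit variance, their dot product has variance `d`, and dividing by the square root of `d` brings it back to about 1. The following is a small empirical sketch under that assumption; the sample count, the seed, and the values of `d` are arbitrary choices made here.

```python
import math
import torch

torch.manual_seed(0)
n = 10000  # number of sampled (query, key) pairs

results = {}
for d in (4, 64, 256):
    q = torch.randn(n, d)
    k = torch.randn(n, d)
    raw = (q * k).sum(dim=-1)     # unscaled dot products
    scaled = raw / math.sqrt(d)   # scaled as in DotProductAttention
    results[d] = (raw.var().item(), scaled.var().item())
    print(d, results[d])
```

The first number in each pair should be close to `d` and the second close to 1. Without the scaling, large `d` would push the softmax toward very peaked weights.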
