Three machines:

| Name | IP |
| --- | --- |
| hadoop01 | 192.168.204.130 |
| hadoop02 | 192.168.204.131 |
| hadoop03 | 192.168.204.132 |

Hive only needs to be installed on hadoop01; installing it on hadoop02 and hadoop03 is not necessary.


1: Download Hive 3.1.2, the mysql-connector-java-5.1.32 package, and the hive-jdbc-3.1.2-standalone package

1: Download Hive 3.1.2

2: Download mysql-connector-java-5.1.32 and hive-jdbc-3.1.2-standalone.jar

2: Install and extract Hive 3.1.2

1: Create the Hive directory

mkdir -p /export/server/

2: Extract Hive to /export/server

cd /mwd

tar -zxvf apache-hive-3.1.2-bin.tar.gz -C /export/server

3: Resolve the guava version mismatch between Hive and Hadoop

cd /export/server/apache-hive-3.1.2-bin/

rm -rf lib/guava-19.0.jar

cp /data/hadoop/app/share/hadoop/common/lib/guava-27.0-jre.jar ./lib/
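Before deleting anything, it can help to confirm which guava jar each side actually ships. A minimal sketch, assuming the two lib directories used above; `list_guava` is a hypothetical helper name, not something provided by Hive or Hadoop.

```shell
#!/usr/bin/env bash
# Hypothetical helper: print the file names of the guava jars found
# directly inside the given directory, sorted.
list_guava() {
  local dir="$1"
  find "$dir" -maxdepth 1 -type f -name 'guava-*.jar' -exec basename {} \; | sort
}

# Usage sketch (paths taken from the steps above):
#   list_guava /export/server/apache-hive-3.1.2-bin/lib
#   list_guava /data/hadoop/app/share/hadoop/common/lib
```

After the swap, the Hive lib directory should list only the guava version that Hadoop ships.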

4: Edit the configuration files

1: Edit hive-env.sh

cd /export/server/apache-hive-3.1.2-bin/conf

mv hive-env.sh.template hive-env.sh

vim hive-env.sh

export HADOOP_HOME=/data/hadoop/app

export HIVE_CONF_DIR=/export/server/apache-hive-3.1.2-bin/conf

export HIVE_AUX_JARS_PATH=/export/server/apache-hive-3.1.2-bin/lib

2: Edit hive-site.xml

vim hive-site.xml

<configuration>

<!-- MySQL settings for metadata storage -->

<property>

<name>javax.jdo.option.ConnectionURL</name>

<value>jdbc:mysql://hadoop01:3306/hive3?createDatabaseIfNotExist=true&amp;useSSL=false&amp;useUnicode=true&amp;characterEncoding=UTF-8</value>

</property>

<property>

<name>javax.jdo.option.ConnectionDriverName</name>

<value>com.mysql.jdbc.Driver</value>

</property>

<!-- MySQL user name -->

<property>

<name>javax.jdo.option.ConnectionUserName</name>

<value>root</value>

</property>

<!-- MySQL password -->

<property>

<name>javax.jdo.option.ConnectionPassword</name>

<value>123456</value>

</property>

<!-- Host that HiveServer2 (HS2) binds to -->

<property>

<name>hive.server2.thrift.bind.host</name>

<value>hadoop01</value>

</property>

<!-- Metastore address for the remote-mode metastore deployment -->

<property>

<name>hive.metastore.uris</name>

<value>thrift://hadoop01:9083</value>

</property>

<!-- Disable metadata storage authorization -->

<property>

<name>hive.metastore.event.db.notification.api.auth</name>

<value>false</value>

</property>

</configuration>
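A literal `&` inside an XML value has to be written as `&amp;`, otherwise the file is not well-formed XML. This matters for the JDBC URL above, which has several parameters joined by `&`. A small sketch of the escaping step; `xml_escape_amp` is a hypothetical helper name.

```shell
#!/usr/bin/env bash
# Hypothetical helper: escape every '&' as '&amp;' so a JDBC URL can be
# pasted into an XML <value> element.
# Note: input that is already escaped would be escaped a second time.
xml_escape_amp() {
  printf '%s\n' "$1" | sed 's/&/\&amp;/g'
}

# Usage sketch:
#   xml_escape_amp 'jdbc:mysql://hadoop01:3306/hive3?createDatabaseIfNotExist=true&useSSL=false'
```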

3: Upload the MySQL JDBC driver (mysql-connector-java-5.1.32) into the lib directory of the Hive installation

cd /export/server/apache-hive-3.1.2-bin/lib/

5: Initialize the metadata

cd /export/server/apache-hive-3.1.2-bin/

bin/schematool -initSchema -dbType mysql -verbose

# A successful initialization creates 74 tables in MySQL

6: Start Hive

1: Start the metastore service

# Start in the foreground; stop with Ctrl+C

/export/server/apache-hive-3.1.2-bin/bin/hive --service metastore

# Start in the foreground with debug logging enabled

/export/server/apache-hive-3.1.2-bin/bin/hive --service metastore --hiveconf hive.root.logger=DEBUG,console

# Start in the background (the process keeps running); to stop it, find the PID with jps and use kill -9

nohup /export/server/apache-hive-3.1.2-bin/bin/hive --service metastore &
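When starting services in the background, keeping a log file and a PID file makes it easier to stop them later without searching through jps. A rough sketch under that assumption; `start_bg` and the /tmp paths are hypothetical and not part of Hive. Whether the recorded PID matches the RunJar PID shown by jps depends on how the launcher script starts Java, which is not verified here.

```shell
#!/usr/bin/env bash
# Hypothetical helper: run a command in the background with nohup,
# sending its output to a log file and saving its PID to a pid file.
start_bg() {
  local pidfile="$1" logfile="$2"
  shift 2
  nohup "$@" >"$logfile" 2>&1 &
  echo "$!" >"$pidfile"
}

# Usage sketch (file locations are assumptions):
#   start_bg /tmp/metastore.pid /tmp/metastore.log \
#     /export/server/apache-hive-3.1.2-bin/bin/hive --service metastore
#   kill "$(cat /tmp/metastore.pid)"
```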

2: Start the hiveserver2 service

nohup /export/server/apache-hive-3.1.2-bin/bin/hive --service hiveserver2 &

# Note: hiveserver2 takes some time to start. Do not connect with beeline immediately after launching it, or the connection may fail

[root@hadoop01 ~]# jps

108160 RunJar # Hive process

39505 Jps

30690 ResourceManager

30899 NodeManager

30103 DataNode

112362 RunJar # Hive process

29916 NameNode
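Because hiveserver2 needs a while before it accepts connections, one option is to poll its port rather than guess how long to wait. A minimal sketch, assuming bash (it relies on bash's `/dev/tcp` redirection) and port 10000 as used in the beeline URL in this guide; `wait_for_port` is a hypothetical helper name.

```shell
#!/usr/bin/env bash
# Hypothetical helper: try to open a TCP connection to host:port once per
# second, returning 0 on the first success and 1 when attempts run out.
wait_for_port() {
  local host="$1" port="$2" attempts="${3:-60}"
  local i=0
  while [ "$i" -lt "$attempts" ]; do
    # The subshell closes the descriptor again as soon as it exits.
    if (exec 3<>"/dev/tcp/$host/$port") 2>/dev/null; then
      return 0
    fi
    i=$((i + 1))
    sleep 1
  done
  return 1
}

# Usage sketch:
#   wait_for_port hadoop01 10000 120 && echo "hiveserver2 is accepting connections"
```

An open port only shows that the server is listening; a beeline login can still fail for other reasons.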

7: Connect with the beeline client

1: Copy the installation from hadoop01 to the beeline client machine (hadoop03)

scp -r /export/server/apache-hive-3.1.2-bin/ hadoop03:/export/server/

/export/server/apache-hive-3.1.2-bin/bin/hive

/export/server/apache-hive-3.1.2-bin/bin/beeline

!connect jdbc:hive2://hadoop01:10000

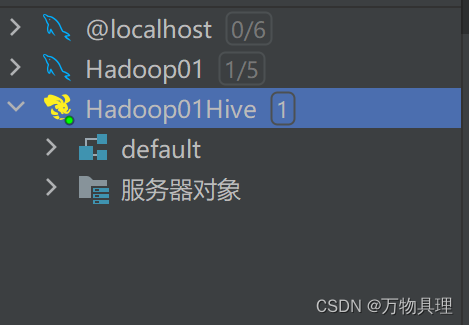
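The connection can also be made non-interactively by passing the URL on the beeline command line. A small sketch; `hive_jdbc_url` is a hypothetical helper, and the `default` database and `root` user in the usage line are assumptions rather than values confirmed above.

```shell
#!/usr/bin/env bash
# Hypothetical helper: build a HiveServer2 JDBC URL from host, port and database.
hive_jdbc_url() {
  local host="$1" port="${2:-10000}" db="${3:-default}"
  printf 'jdbc:hive2://%s:%s/%s\n' "$host" "$port" "$db"
}

# Usage sketch (beeline's -u, -n and -e take the URL, user name and query):
#   /export/server/apache-hive-3.1.2-bin/bin/beeline \
#     -u "$(hive_jdbc_url hadoop01)" -n root -e 'show databases;'
```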

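8: Connect from PyCharm

1: The hive-jdbc-3.1.2-standalone package must be loaded

9: Fix garbled Chinese characters in Hive comments

-- Note: the following SQL statements must be run in MySQL; they modify the metadata stored by Hive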

use hive3;

show tables;

alter table hive3.COLUMNS_V2 modify column COMMENT varchar(256) character set utf8;

alter table hive3.TABLE_PARAMS modify column PARAM_VALUE varchar(4000) character set utf8;

alter table hive3.PARTITION_PARAMS modify column PARAM_VALUE varchar(4000) character set utf8 ;

alter table hive3.PARTITION_KEYS modify column PKEY_COMMENT varchar(4000) character set utf8;

alter table hive3.INDEX_PARAMS modify column PARAM_VALUE varchar(4000) character set utf8;
