I. Principles

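The Deep Convolutional Generative Adversarial Network (DCGAN) combines GANs with convolutional neural networks (CNNs) and established the basic architecture that most later GANs build on. Compared with the original GAN, DCGAN greatly improves training stability and the quality of the generated samples.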

The DCGAN design adopts several CNN improvements that were popular at the time:

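1. Replace spatial pooling layers with convolutions. For downsampling, this only requires setting the convolution stride to a value greater than 1; in the generator, fractionally strided (transposed) convolutions play the same role for upsampling. The benefit is that downsampling no longer discards pixel values at fixed positions: the network learns its own way to downsample.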

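2. Remove fully connected hidden layers. (In the code below, dense layers remain only where the noise vector is projected at the generator's input and where the discriminator produces its single output.)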

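3. Use BN layers. BN stands for Batch Normalization, a normalization method commonly placed after convolutional layers that helps the network converge. The authors found that applying BN to every layer caused sample oscillation (I do not fully understand what "sample oscillation" means; my guess is that the generated images collapse to the same mode, or that their quality swings up and down) and model instability. They avoided this by not applying BN to the generator's output layer or to the discriminator's input layer.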

4. In the generator, every layer uses the ReLU activation except the output layer, which uses Tanh (Sigmoid is sometimes used instead).

5. In the discriminator, every layer uses the LeakyReLU activation to avoid sparse gradients.
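To make items 4 and 5 concrete, here is a minimal Python sketch of the three activations and their derivatives. The LeakyReLU slope of 0.2 is the value used in the DCGAN paper; the function names are illustrative and not part of any library. ReLU's gradient is exactly zero for every negative input, while LeakyReLU keeps a small nonzero gradient there, which helps gradient keep flowing through the discriminator back to the generator. Tanh squashes outputs into (-1, 1), the range the training images must be scaled to.

```python
import math

def relu(x):
    return max(0.0, x)

def relu_grad(x):
    # Zero gradient for every negative input
    return 1.0 if x > 0 else 0.0

def leaky_relu(x, slope=0.2):
    return x if x > 0 else slope * x

def leaky_relu_grad(x, slope=0.2):
    # Small but nonzero gradient for negative inputs
    return 1.0 if x > 0 else slope

def tanh_grad(x):
    return 1.0 - math.tanh(x) ** 2

for x in (-2.0, -0.5, 0.5, 2.0):
    print(f"x={x:+.1f}  relu'={relu_grad(x):.1f}  "
          f"leaky'={leaky_relu_grad(x):.1f}  tanh={math.tanh(x):+.3f}")
```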

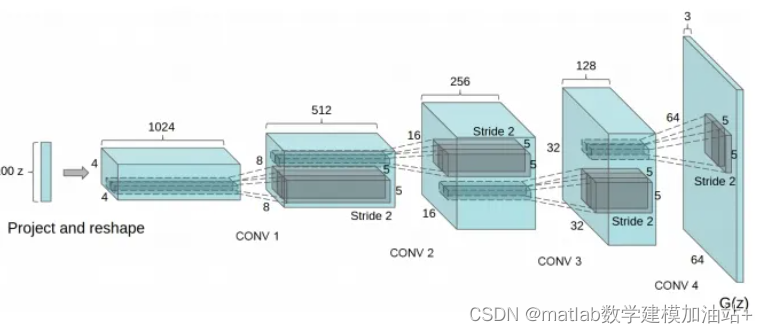

下面是DCGAN的生成器网络架构图。
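The generator in the MNIST code of Section II follows the same pattern at a smaller scale: project the 100-dimensional noise vector, reshape it to 7×7×128, and upsample to 28×28×1. This Python sketch traces those shapes. The channel counts come from the `paramsGen` weight shapes; the strides (2 for both transposed convolutions, 1 for the final convolution) and the 'same' padding/cropping are assumptions, because the `Generator` function is not included in this post. They are inferred from the 7×7 starting size and the 28×28 target.

```python
def conv_same(n, stride):
    # 'same' padding: output size is ceil(n / stride)
    return -(-n // stride)

def tconv_same(n, stride):
    # 'same' cropping: a transposed convolution multiplies the size by the stride
    return n * stride

latent_dim = 100
fc_units = 128 * 7 * 7            # rows of paramsGen.FCW1
shape = (7, 7, 128)               # FC output reshaped to 7 x 7 x 128
trace = [(f"z ({latent_dim}) -> FC -> reshape", shape)]

# (name, kind, out_channels, stride); the strides are assumptions
layers = [("TC1 + BN + ReLU", "tconv", 128, 2),
          ("TC2 + BN + ReLU", "tconv", 64, 2),
          ("CN1 + Tanh", "conv", 1, 1)]
for name, kind, out_ch, stride in layers:
    n = tconv_same(shape[0], stride) if kind == "tconv" else conv_same(shape[0], stride)
    shape = (n, n, out_ch)
    trace.append((name, shape))

for name, s in trace:
    print(f"{name:28s} {s}")
```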

II. Hands-On Code

clear all; close all; clc;

%% Deep Convolutional Generative Adversarial Network

%% Load Data

load('mnistAll.mat')

trainX = preprocess(mnist.train_images);

trainY = mnist.train_labels;

testX = preprocess(mnist.test_images);

testY = mnist.test_labels;
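`preprocess` is not defined in this excerpt. Because the generator's output layer uses Tanh, whose range is (-1, 1), a common choice is to map 8-bit pixel values from [0, 255] to [-1, 1] so that real and generated images share the same range. Below is a minimal Python sketch of that mapping, assuming this is what `preprocess` does (in MATLAB it would presumably also reshape the data to 28×28×1×N). Both helper names are hypothetical.

```python
def preprocess_pixels(pixels):
    """Map 8-bit grayscale values in [0, 255] to [-1, 1] (hypothetical helper)."""
    return [(p / 255.0 - 0.5) / 0.5 for p in pixels]

def deprocess_pixels(values):
    """Inverse mapping back to [0, 255], e.g. for displaying generator output."""
    return [round((v * 0.5 + 0.5) * 255.0) for v in values]

row = [0, 128, 255]
scaled = preprocess_pixels(row)
print(scaled)
print(deprocess_pixels(scaled))
```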

%% Settings

settings.latentDim = 100;

settings.batch_size = 32; settings.image_size = [28,28,1];

settings.lrD = 0.0002; settings.lrG = 0.0002; settings.beta1 = 0.5;

settings.beta2 = 0.999; settings.maxepochs = 50;

%% Generator

paramsGen.FCW1 = dlarray(initializeGaussian([128*7*7,settings.latentDim]));

paramsGen.FCb1 = dlarray(zeros(128*7*7,1,'single'));

paramsGen.TCW1 = dlarray(initializeGaussian([3,3,128,128]));

paramsGen.TCb1 = dlarray(zeros(128,1,'single'));

paramsGen.BNo1 = dlarray(zeros(128,1,'single'));

paramsGen.BNs1 = dlarray(ones(128,1,'single'));

paramsGen.TCW2 = dlarray(initializeGaussian([3,3,64,128]));

paramsGen.TCb2 = dlarray(zeros(64,1,'single'));

paramsGen.BNo2 = dlarray(zeros(64,1,'single'));

paramsGen.BNs2 = dlarray(ones(64,1,'single'));

paramsGen.CNW1 = dlarray(initializeGaussian([3,3,64,1]));

paramsGen.CNb1 = dlarray(zeros(1,1,'single'));

stGen.BN1 = []; stGen.BN2 = [];
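Each generator BN layer has a learnable offset (`BNo*`, initialized to 0) and scale (`BNs*`, initialized to 1), and `stGen` holds its running statistics. In training mode, BN normalizes each channel with the mini-batch mean and variance and then applies the scale and offset, so with the initial values 0 and 1 the output is simply the standardized input. A minimal single-channel Python sketch follows; the epsilon of 1e-5 is an assumption, and MATLAB's `batchnorm` uses its own default.

```python
import math

def batchnorm_train(xs, offset=0.0, scale=1.0, eps=1e-5):
    """Batch-normalize one channel's activations across the mini-batch."""
    mean = sum(xs) / len(xs)
    var = sum((x - mean) ** 2 for x in xs) / len(xs)  # biased (population) variance
    ys = [scale * (x - mean) / math.sqrt(var + eps) + offset for x in xs]
    # mean and var are the batch statistics a running-average state would be updated from
    return ys, mean, var

ys, mu, var = batchnorm_train([1.0, 2.0, 3.0, 4.0])
print(mu, var)
print([round(y, 3) for y in ys])
```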

%% Discriminator

paramsDis.CNW1 = dlarray(initializeGaussian([3,3,1,32]));

paramsDis.CNb1 = dlarray(zeros(32,1,'single'));

paramsDis.CNW2 = dlarray(initializeGaussian([3,3,32,64]));

paramsDis.CNb2 = dlarray(zeros(64,1,'single'));

paramsDis.BNo1 = dlarray(zeros(64,1,'single'));

paramsDis.BNs1 = dlarray(ones(64,1,'single'));

paramsDis.CNW3 = dlarray(initializeGaussian([3,3,64,128]));

paramsDis.CNb3 = dlarray(zeros(128,1,'single'));

paramsDis.BNo2 = dlarray(zeros(128,1,'single'));

paramsDis.BNs2 = dlarray(ones(128,1,'single'));

paramsDis.CNW4 = dlarray(initializeGaussian([3,3,128,256]));

paramsDis.CNb4 = dlarray(zeros(256,1,'single'));

paramsDis.BNo3 = dlarray(zeros(256,1,'single'));

paramsDis.BNs3 = dlarray(ones(256,1,'single'));

paramsDis.FCW1 = dlarray(initializeGaussian([1,256*4*4]));

paramsDis.FCb1 = dlarray(zeros(1,1,'single'));

stDis.BN1 = []; stDis.BN2 = []; stDis.BN3 = [];
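The discriminator replaces pooling with strided convolutions (item 1). `paramsDis.FCW1` expects 256×4×4 inputs, which pins down the spatial size reached by the four convolutions. With 'same' padding, a stride-s convolution maps size n to ceil(n/s). The `Discriminator` function is not shown in this post, so the strides below are an assumption; the arithmetic shows that exactly three of the four convolutions must use stride 2 (28 → 14 → 7 → 4), because a fourth stride-2 layer would end at 2×2.

```python
def conv_out_same(n, stride):
    # 'same' padding: output size is ceil(n / stride)
    return -(-n // stride)

def trace(n, strides):
    sizes = [n]
    for s in strides:
        sizes.append(conv_out_same(sizes[-1], s))
    return sizes

channels = [32, 64, 128, 256]     # output channels of CNW1..CNW4
assumed_strides = [2, 2, 2, 1]    # assumption: Discriminator is not shown
sizes = trace(28, assumed_strides)
flat = sizes[-1] * sizes[-1] * channels[-1]
print(sizes, flat)                # flat must equal 256*4*4 to match FCW1
print(trace(28, [2, 2, 2, 2]))    # stride 2 everywhere would end at 2x2 instead
```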

% average Gradient and average Gradient squared holders

avgG.Dis = []; avgGS.Dis = []; avgG.Gen = []; avgGS.Gen = [];
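`avgG` and `avgGS` hold Adam's running averages of the gradient and squared gradient for each network. They are presumably passed to the Deep Learning Toolbox function `adamupdate` together with `settings.lrD`/`lrG`, `beta1 = 0.5` and `beta2 = 0.999` (the training loop is not shown in this post). The DCGAN paper lowered beta1 from the usual 0.9 to 0.5 because 0.9 led to oscillation and instability. This scalar Python sketch shows one standard Adam step with bias correction; eps = 1e-8 is an assumed value.

```python
import math

def adam_step(param, grad, avg_g, avg_gs, t,
              lr=0.0002, beta1=0.5, beta2=0.999, eps=1e-8):
    """One Adam update for a scalar parameter; t is the 1-based iteration count."""
    avg_g = beta1 * avg_g + (1 - beta1) * grad             # first moment
    avg_gs = beta2 * avg_gs + (1 - beta2) * grad * grad    # second moment
    m_hat = avg_g / (1 - beta1 ** t)                       # bias correction
    v_hat = avg_gs / (1 - beta2 ** t)
    param = param - lr * m_hat / (math.sqrt(v_hat) + eps)
    return param, avg_g, avg_gs

# The empty holders ([] in the MATLAB code) correspond to zero-initialized averages here
p, g_avg, gs_avg = 1.0, 0.0, 0.0
for t in range(1, 4):
    p, g_avg, gs_avg = adam_step(p, 0.5, g_avg, gs_avg, t)
    print(t, p)
```

With a constant gradient, the bias-corrected ratio is about 1, so each step moves the parameter by roughly the learning rate.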

%% Train

numIterations = floor(size(trainX,4)/settings.batch_size);

out = false; epoch = 0; global_iter = 0;
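`numIterations` is the number of full mini-batches per epoch. For the 60,000 MNIST training images and `batch_size = 32` it is floor(60000/32) = 1875, so `maxepochs = 50` corresponds to 93,750 updates. The training loop itself is not included in this excerpt. This Python sketch shows one way iteration i could map to a slice of the data; the per-epoch shuffling and the helper name are assumptions.

```python
import random

def minibatch_indices(num_samples, batch_size, seed=0):
    """Yield the index list of each full mini-batch of one shuffled epoch (hypothetical helper)."""
    order = list(range(num_samples))
    random.Random(seed).shuffle(order)
    num_iterations = num_samples // batch_size   # floor, like floor() in the MATLAB code
    for i in range(num_iterations):
        yield order[i * batch_size:(i + 1) * batch_size]

batches = list(minibatch_indices(60000, 32))
print(len(batches), len(batches[0]))
print(len(batches) * 50)   # total updates over 50 epochs
```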

%% modelGradients

function [GradGen,GradDis,stGen,stDis]=modelGradients(x,z,paramsGen,paramsDis,stGen,stDis)

[fake_images,stGen] = Generator(z,paramsGen,stGen);

d_output_real = Discriminator(x,paramsDis,stDis);

[d_output_fake,stDis] = Discriminator(fake_images,paramsDis,stDis);

% Binary cross-entropy losses; the real-image term is weighted by 0.9 (simplified label smoothing)

d_loss = -mean(.9*log(d_output_real+eps)+log(1-d_output_fake+eps));

g_loss = -mean(log(d_output_fake+eps));

% For each network, calculate the gradients with respect to the loss.

GradGen = dlgradient(g_loss,paramsGen,'RetainData',true);

GradDis = dlgradient(d_loss,paramsDis);

end
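`modelGradients` computes binary cross-entropy GAN losses from the discriminator's sigmoid outputs. The discriminator is pushed toward D(x) → 1 and D(G(z)) → 0. The generator uses the non-saturating loss −log D(G(z)) instead of minimizing log(1 − D(G(z))), which gives stronger gradients early in training. `eps` guards against log(0). This scalar Python sketch mirrors the two formulas, with Python lists standing in for the `dlarray` batch.

```python
import math
import sys

EPS = sys.float_info.epsilon   # same value as MATLAB's eps (2^-52)

def mean(xs):
    return sum(xs) / len(xs)

def d_loss(d_real, d_fake):
    # Mirrors: -mean(.9*log(d_output_real+eps) + log(1-d_output_fake+eps))
    return -mean([0.9 * math.log(r + EPS) + math.log(1 - f + EPS)
                  for r, f in zip(d_real, d_fake)])

def g_loss(d_fake):
    # Mirrors: -mean(log(d_output_fake+eps)), the non-saturating generator loss
    return -mean([math.log(f + EPS) for f in d_fake])

# A discriminator that cannot tell real from fake outputs 0.5 everywhere
print(d_loss([0.5, 0.5], [0.5, 0.5]), g_loss([0.5, 0.5]))
# A confident, correct discriminator: low d_loss, high g_loss
print(d_loss([0.99], [0.01]), g_loss([0.01]))
```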

%% progressplot

function progressplot(paramsGen,stGen,settings)

r = 5; c = 5;

noise = gpdl(randn([settings.latentDim,r*c]),'CB');

gen_imgs = Generator(noise,paramsGen,stGen);

gen_imgs = reshape(gen_imgs,28,28,[]);

fig = gcf;

if ~isempty(fig.Children)

delete(fig.Children)

end

I = imtile(gatext(gen_imgs));

I = rescale(I);

imagesc(I)

title("Generated Images")

colormap gray

drawnow;

end
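`progressplot` samples 5 × 5 = 25 noise vectors, runs the generator, and uses `imtile` and `rescale` to show the samples as one grid scaled to [0, 1]. Here is the same tiling-and-rescaling step as a small pure-Python sketch. `tile_images` is a hypothetical helper that fills the grid row by row; it is not a reimplementation of every `imtile` option.

```python
def tile_images(images, rows, cols):
    """Arrange equally sized 2-D images (lists of rows) into a rows x cols grid, row by row."""
    h, w = len(images[0]), len(images[0][0])
    grid = [[0.0] * (cols * w) for _ in range(rows * h)]
    for k, img in enumerate(images[:rows * cols]):
        r0, c0 = (k // cols) * h, (k % cols) * w
        for i in range(h):
            for j in range(w):
                grid[r0 + i][c0 + j] = img[i][j]
    return grid

def rescale(grid):
    """Min-max scale all values to [0, 1]."""
    lo = min(min(row) for row in grid)
    hi = max(max(row) for row in grid)
    span = (hi - lo) or 1.0   # avoid division by zero for a constant image
    return [[(v - lo) / span for v in row] for row in grid]

# Four 2x2 "generator outputs" in the Tanh range [-1, 1]
imgs = [[[-1.0, 0.0], [0.0, 1.0]] for _ in range(4)]
tiled = rescale(tile_images(imgs, 2, 2))
print(len(tiled), len(tiled[0]))
```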

%% dropout

function dly = dropout(dlx,p)

if nargin < 2

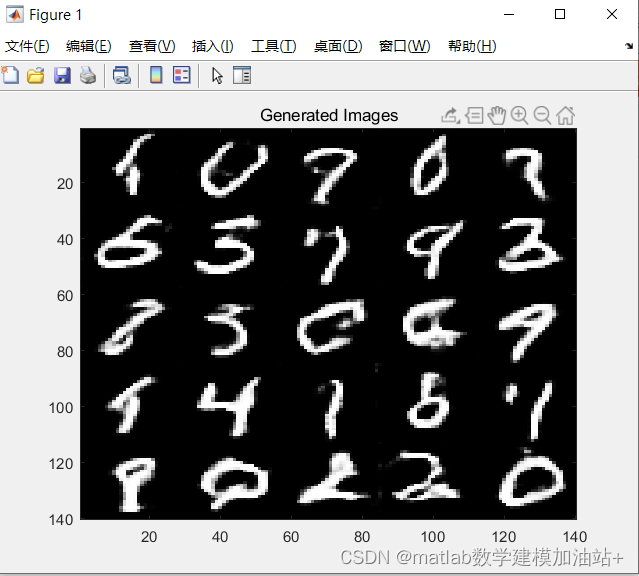

p = .3;实验结果

epoch = 5;

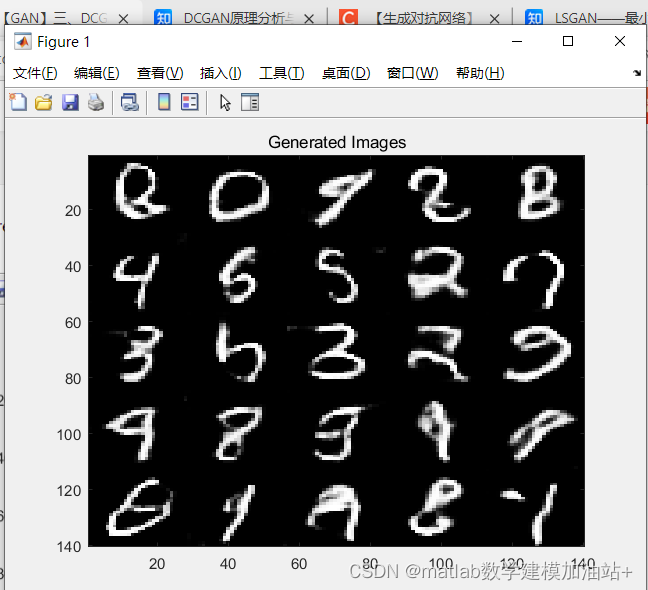

epoch = 6
