You will often need to update a large SQL Server table that holds millions of rows (say, more than 5 million). In this article I will demonstrate a fast way to update rows in such a table.

Consider a table called test that has more than 5 million rows. Suppose you want to set a column to 0 wherever it contains a negative value. Let us also assume that more than 2 million rows have a negative value in that column.

The usual way to write the update is shown below:

UPDATE test SET col=0 WHERE col<0

The issue with this query is that it takes a long time, because it changes 2 million rows in a single transaction, and it can lock the table for the duration of the update.

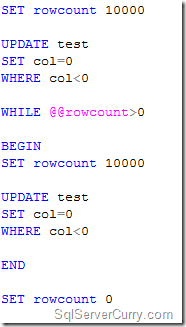

You can improve the performance of an update operation by updating the table in smaller groups. Consider the following code:
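A minimal sketch of such a batched update, assuming the table `test` and column `col` from the example above. The variable names and the use of `UPDATE TOP (n)` are choices made for this illustration; `SET ROWCOUNT` is an older way to get the same effect.

```sql
-- Sketch only: batch size and variable names are illustrative assumptions.
DECLARE @BatchSize INT = 10000;
DECLARE @RowsAffected INT = 1;

WHILE @RowsAffected > 0
BEGIN
    -- Update at most @BatchSize of the rows that still need fixing.
    UPDATE TOP (@BatchSize) test
    SET col = 0
    WHERE col < 0;

    -- Capture @@ROWCOUNT immediately; any later statement overwrites it.
    SET @RowsAffected = @@ROWCOUNT;
END;
```

Rows that have been updated no longer match `col < 0`, so each pass picks up a fresh set of rows and the loop ends when none are left.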

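The above code updates 10000 rows at a time, and the loop continues as long as @@ROWCOUNT is greater than zero. Because each batch is a short transaction of its own, locks are held only briefly and other sessions are not blocked for the whole duration of the update.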

Best practices while updating large tables in SQL Server

1. Always use a WHERE clause to limit the data that is to be updated

2. If the table has many indexes, consider disabling the nonclustered ones during the update and rebuilding them afterwards

3. Instead of updating the table in a single shot, break the update into batches as shown in the example above.
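The second practice could look roughly like the sketch below. The index name `IX_test_col` is hypothetical; substitute a nonclustered index that actually exists on your table. Only indexes that contain the updated column are maintained during the update, so those are the candidates. Do not disable the clustered index, because that makes the table inaccessible.

```sql
-- Hypothetical index name, used here for illustration only.
ALTER INDEX IX_test_col ON test DISABLE;

-- ... run the batched update here ...

-- A disabled index is re-enabled by rebuilding it.
ALTER INDEX IX_test_col ON test REBUILD;
```

Keep in mind that if the disabled index is the one the WHERE clause would use to find the rows, each batch may have to scan the table instead, so it is worth measuring both ways.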

You may also want to read my article Find the Most Time Consuming Code in your SQL Server Database
