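Exploiting context information can further improve a detector's performance. SSH was an early approach that brought context information into single-stage face detection; PyramidBox, SRN, and DSFD later designed their own context modules.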

The context module in SSH:

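The context module in SSH is also a form of feature fusion. Its purpose is to enlarge the receptive field. Two-stage object detectors usually incorporate more context by enlarging the window around each candidate proposal; SSH instead folds in context with simple convolutional layers.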

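The module places a stack of two 3×3 convolutional layers in parallel with a stack of three 3×3 layers, which emulate 5×5 and 7×7 filters. This enlarges the receptive field and, with it, the target scale of each detection module. Stacked 3×3 layers need fewer parameters than the equivalent larger kernels, and the context module is reported to improve AP on the WIDER dataset by about 0.5 percentage points.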

As we can see, SSH enlarges the receptive field with parallel stacks of 3×3 convolutions, but this is still fairly expensive computationally.
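The receptive-field and parameter claims above can be checked with a little arithmetic. The sketch below is only an illustration: the function names and the channel width of 64 are assumptions chosen for the example, not values from SSH.

```python
def stacked_receptive_field(num_layers, kernel_size=3):
    """Receptive field of `num_layers` stride-1 convs with the same kernel size."""
    return 1 + num_layers * (kernel_size - 1)


def conv_weights(kernel_size, in_channels, out_channels):
    """Number of weights in one square conv layer (bias ignored)."""
    return kernel_size * kernel_size * in_channels * out_channels


channels = 64  # hypothetical channel width, chosen only for illustration

for num_layers in (2, 3):
    rf = stacked_receptive_field(num_layers)
    stacked = num_layers * conv_weights(3, channels, channels)
    single = conv_weights(rf, channels, channels)
    print(f"{num_layers} x 3x3 -> receptive field {rf}x{rf}, "
          f"{stacked} weights vs {single} for one {rf}x{rf} conv")
```

Two stacked 3×3 layers cover a 5×5 window and three cover a 7×7 window, in both cases with fewer weights than a single large kernel, although the stacks still add noticeable cost on top of a plain 3×3 branch.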

Code implementation:

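    import torch
    import torch.nn as nn
    import torch.nn.functional as F

    # conv_bn and conv_bn_no_relu are helpers that are not defined in this
    # article; they are assumed to build 3x3 conv + BatchNorm blocks
    # (with and without an activation, respectively).

    class SSH(nn.Module):
        def __init__(self, in_channels, out_channels):
            super(SSH, self).__init__()
            assert out_channels % 4 == 0
            leaky = 0
            if out_channels <= 64:
                leaky = 0.1

            # 3x3 branch: a single 3x3 conv.
            self.conv3x3 = conv_bn_no_relu(in_channels, out_channels // 2, stride=1)

            # 5x5 branch: two stacked 3x3 convs.
            self.conv5x5_1 = conv_bn(in_channels, out_channels // 4, stride=1, leaky=leaky)
            self.conv5x5_2 = conv_bn_no_relu(out_channels // 4, out_channels // 4, stride=1)

            # 7x7 branch: three stacked 3x3 convs. It reuses conv5x5_1, so its
            # input has out_channels // 4 channels (not in_channels // 4).
            self.conv7x7_2 = conv_bn(out_channels // 4, out_channels // 4, stride=1, leaky=leaky)
            self.conv7x7_3 = conv_bn_no_relu(out_channels // 4, out_channels // 4, stride=1)

        def forward(self, x):
            conv3x3 = self.conv3x3(x)

            conv5x5_1 = self.conv5x5_1(x)
            conv5x5 = self.conv5x5_2(conv5x5_1)

            conv7x7_2 = self.conv7x7_2(conv5x5_1)
            conv7x7 = self.conv7x7_3(conv7x7_2)

            # Concatenate along channels: 1/2 + 1/4 + 1/4 = out_channels.
            out = torch.cat([conv3x3, conv5x5, conv7x7], dim=1)
            out = F.relu(out)
            return out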
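The SSH code calls `conv_bn` and `conv_bn_no_relu` without showing them. A minimal sketch of what they might look like, assuming each is a 3×3 convolution with padding 1 followed by batch normalization (the layer choices here are an assumption, not taken from the article):

```python
import torch
import torch.nn as nn


def conv_bn(inp, oup, stride=1, leaky=0.0):
    """Assumed helper: 3x3 conv -> BatchNorm -> LeakyReLU."""
    return nn.Sequential(
        nn.Conv2d(inp, oup, kernel_size=3, stride=stride, padding=1, bias=False),
        nn.BatchNorm2d(oup),
        nn.LeakyReLU(negative_slope=leaky, inplace=True),
    )


def conv_bn_no_relu(inp, oup, stride=1):
    """Assumed helper: 3x3 conv -> BatchNorm, with no activation."""
    return nn.Sequential(
        nn.Conv2d(inp, oup, kernel_size=3, stride=stride, padding=1, bias=False),
        nn.BatchNorm2d(oup),
    )


# Shape check on a dummy feature map: padding=1 keeps the spatial size.
x = torch.randn(1, 64, 40, 40)
y = conv_bn_no_relu(64, 32)(x)
z = conv_bn(64, 16, leaky=0.1)(x)
print(y.shape, z.shape)
```

With `leaky=0` the `LeakyReLU` behaves like a plain ReLU, which would match the way the SSH code only switches to a slope of 0.1 for narrow outputs.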

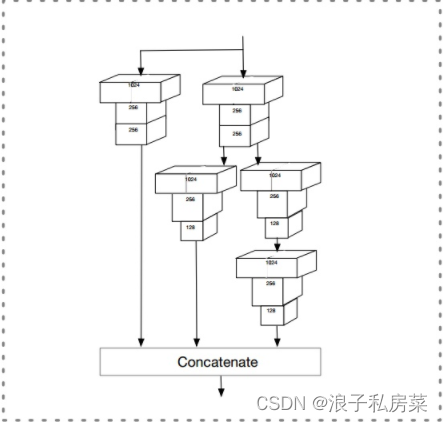

The CPM module in PyramidBox

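SSH goes wider: it places a wider convolutional prediction module on top of layers with different strides, which enlarges the receptive field and improves mAP.

DSSD goes deeper: it adds residual blocks, and the extra depth improves mAP.

CPM does both at once: it stacks the wider SSH-style module on top of a deeper residual block to improve detection results.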

Code implementation:

    import torch
    import torch.nn as nn
    import torch.nn.functional as F

    # conv_bn(in, out, kernel_size, stride, padding) is a helper that is not
    # defined in this article; note that its signature differs from the
    # conv_bn used in the SSH code above.

    class CPM(nn.Module):
        def __init__(self, in_plane):
            super(CPM, self).__init__()
            # Residual (deeper) part: 1x1 shortcut plus a 1x1 -> 3x3 -> 1x1 bottleneck.
            self.branch1 = conv_bn(in_plane, 1024, 1, 1, 0)
            self.branch2a = conv_bn(in_plane, 256, 1, 1, 0)
            self.branch2b = conv_bn(256, 256, 3, 1, 1)
            self.branch2c = conv_bn(256, 1024, 1, 1, 0)

            # SSH-style (wider) part: 3x3, 5x5, and 7x7 paths built from 3x3 convs.
            self.ssh_1 = nn.Conv2d(1024, 256, kernel_size=3, stride=1, padding=1)
            self.ssh_dimred = nn.Conv2d(1024, 128, kernel_size=3, stride=1, padding=1)
            self.ssh_2 = nn.Conv2d(128, 128, kernel_size=3, stride=1, padding=1)
            self.ssh_3a = nn.Conv2d(128, 128, kernel_size=3, stride=1, padding=1)
            self.ssh_3b = nn.Conv2d(128, 128, kernel_size=3, stride=1, padding=1)

        def forward(self, x):
            out_residual = self.branch1(x)
            x = F.relu(self.branch2a(x), inplace=True)
            x = F.relu(self.branch2b(x), inplace=True)
            x = self.branch2c(x)
            rescomb = F.relu(x + out_residual, inplace=True)

            ssh1 = self.ssh_1(rescomb)
            ssh_dimred = F.relu(self.ssh_dimred(rescomb), inplace=True)
            ssh_2 = self.ssh_2(ssh_dimred)
            ssh_3a = F.relu(self.ssh_3a(ssh_dimred), inplace=True)
            ssh_3b = self.ssh_3b(ssh_3a)

            # Concatenate along channels: 256 + 128 + 128 = 512.
            ssh_out = torch.cat([ssh1, ssh_2, ssh_3b], dim=1)
            ssh_out = F.relu(ssh_out, inplace=True)
            return ssh_out
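The CPM code relies on a five-argument `conv_bn` that the article does not show. The sketch below assumes it is a convolution followed by batch normalization with no activation (since the caller applies `F.relu` itself), and checks that the shortcut and bottleneck paths produce matching shapes. The 512 input channels and the 20×20 feature map are arbitrary choices for the example.

```python
import torch
import torch.nn as nn


def conv_bn(in_plane, out_plane, kernel_size, stride, padding):
    """Assumed helper: conv -> BatchNorm, no activation (ReLU is applied by the caller)."""
    return nn.Sequential(
        nn.Conv2d(in_plane, out_plane, kernel_size, stride, padding, bias=False),
        nn.BatchNorm2d(out_plane),
    )


x = torch.randn(1, 512, 20, 20)  # dummy input; 512 channels is an arbitrary choice

# Residual stage (activations omitted, this is a shape check only):
# the shortcut and the bottleneck must agree in shape to be summed.
shortcut = conv_bn(512, 1024, 1, 1, 0)(x)
bottleneck = conv_bn(256, 1024, 1, 1, 0)(
    conv_bn(256, 256, 3, 1, 1)(conv_bn(512, 256, 1, 1, 0)(x)))
assert shortcut.shape == bottleneck.shape

# SSH-style head: the three paths contribute 256 + 128 + 128 channels.
out_channels = 256 + 128 + 128
print(shortcut.shape, out_channels)
```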

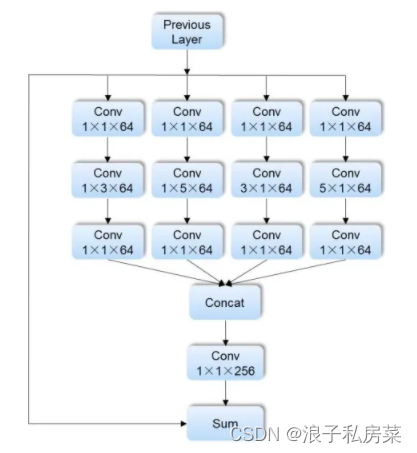

The RFE module in SRN

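The RFE module borrows ideas from the Inception family. It not only enlarges the receptive field but also reduces computation by factorizing convolutions into asymmetric kernels. It also gives the model more diverse receptive fields, which helps SRN detect faces at extreme scales and poses. The RFE structure is shown in Figure 3; it combines Inception and ResNet ideas, with 4 branches plus 1 shortcut. It is yet another module that combines width and depth.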

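Concretely, each branch first uses a 1×1 conv to reduce the feature map to 1/4 of the original channel count, then applies an asymmetric 1×k or k×1 conv (k = 3, 5) to provide a rectangular receptive field (rather than the usual square one), followed by another 1×1 conv. In the code below, the four branch outputs are concatenated, passed through a 1×1 conv, and added element-wise to the shortcut.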
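To see why the asymmetric kernels are cheap, the weight counts can be compared directly. This is a rough sketch: `conv_weights` is a hypothetical helper, and 256 input channels is the default used by the RFE code in this section.

```python
def conv_weights(kernel_h, kernel_w, in_channels, out_channels):
    """Number of weights in one conv layer (bias ignored)."""
    return kernel_h * kernel_w * in_channels * out_channels


in_planes = 256            # default from the RFE code in this section
inter = in_planes // 4     # 1x1 reduction to a quarter of the channels

for k in (3, 5):
    asymmetric = conv_weights(1, k, inter, inter)   # same count for k x 1
    square = conv_weights(k, k, inter, inter)
    print(f"k={k}: 1x{k} conv has {asymmetric} weights, {k}x{k} would have {square}")
```

An asymmetric kernel costs k times fewer weights than the square kernel of the same extent, on top of the savings from the 1×1 channel reduction.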

Code implementation:

    import torch
    import torch.nn as nn

    class RFE(nn.Module):
        # The shortcut in forward() requires out_planes == in_planes, and
        # in_planes should be divisible by 4.
        def __init__(self, in_planes=256, out_planes=256):
            super(RFE, self).__init__()
            self.out_channels = out_planes
            self.inter_channels = in_planes // 4

            # Branch 0: 1x1 reduce -> 1x5 conv -> 1x1 conv.
            self.branch0 = nn.Sequential(
                nn.Conv2d(in_planes, self.inter_channels, kernel_size=1, stride=1, padding=0),
                nn.ReLU(inplace=True),
                nn.Conv2d(self.inter_channels, self.inter_channels, kernel_size=(1, 5), stride=1, padding=(0, 2)),
                nn.ReLU(inplace=True),
                nn.Conv2d(self.inter_channels, self.inter_channels, kernel_size=1, stride=1, padding=0),
                nn.ReLU(inplace=True)
            )

            # Branch 1: 1x1 reduce -> 5x1 conv -> 1x1 conv.
            self.branch1 = nn.Sequential(
                nn.Conv2d(in_planes, self.inter_channels, kernel_size=1, stride=1, padding=0),
                nn.ReLU(inplace=True),
                nn.Conv2d(self.inter_channels, self.inter_channels, kernel_size=(5, 1), stride=1, padding=(2, 0)),
                nn.ReLU(inplace=True),
                nn.Conv2d(self.inter_channels, self.inter_channels, kernel_size=1, stride=1, padding=0),
                nn.ReLU(inplace=True)
            )

            # Branch 2: 1x1 reduce -> 1x3 conv -> 1x1 conv.
            self.branch2 = nn.Sequential(
                nn.Conv2d(in_planes, self.inter_channels, kernel_size=1, stride=1, padding=0),
                nn.ReLU(inplace=True),
                nn.Conv2d(self.inter_channels, self.inter_channels, kernel_size=(1, 3), stride=1, padding=(0, 1)),
                nn.ReLU(inplace=True),
                nn.Conv2d(self.inter_channels, self.inter_channels, kernel_size=1, stride=1, padding=0),
                nn.ReLU(inplace=True)
            )

            # Branch 3: 1x1 reduce -> 3x1 conv -> 1x1 conv.
            self.branch3 = nn.Sequential(
                nn.Conv2d(in_planes, self.inter_channels, kernel_size=1, stride=1, padding=0),
                nn.ReLU(inplace=True),
                nn.Conv2d(self.inter_channels, self.inter_channels, kernel_size=(3, 1), stride=1, padding=(1, 0)),
                nn.ReLU(inplace=True),
                nn.Conv2d(self.inter_channels, self.inter_channels, kernel_size=1, stride=1, padding=0),
                nn.ReLU(inplace=True)
            )

            # 1x1 conv applied to the concatenated branches (4 * inter_channels = in_planes).
            self.cated_conv = nn.Sequential(
                nn.Conv2d(in_planes, out_planes, kernel_size=1, stride=1, padding=0),
                nn.ReLU(inplace=True)
            )

        def forward(self, x):
            x0 = self.branch0(x)
            x1 = self.branch1(x)
            x2 = self.branch2(x)
            x3 = self.branch3(x)

            out = torch.cat((x0, x1, x2, x3), 1)
            out = self.cated_conv(out)
            # Shortcut: element-wise sum with the input.
            out = out + x
            return out

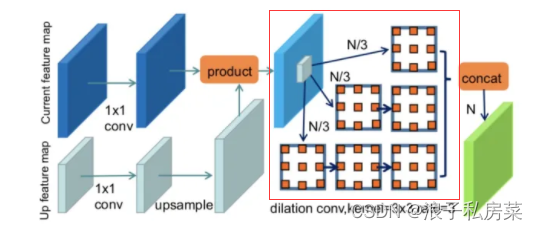

The FEM module in DSFD

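To enhance the original feature cell oc(i,j,l), FEM uses information from different dimensions: the original neuron cell of the upper layer, oc(i,j,l+1), and the non-local neuron cells of the current layer: nc(i-e,j-e,l), nc(i-e,j,l), …, nc(i,j+e,l), nc(i+e,j+e,l).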
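The neighbor pattern listed above, running from nc(i-e,j-e,l) to nc(i+e,j+e,l), is the same set of positions that a 3×3 convolution with dilation e reads. A minimal sketch of that reading follows; the function name and the value of e are illustrative, and this is not DSFD's implementation.

```python
def dilated_offsets(dilation, kernel_size=3):
    """Offsets (di, dj) read by a square conv kernel with the given dilation."""
    half = kernel_size // 2
    steps = [s * dilation for s in range(-half, half + 1)]
    return [(di, dj) for di in steps for dj in steps]


e = 3  # hypothetical offset, standing in for the e in the text
offsets = dilated_offsets(e)
print(offsets)
```

Each output cell at (i, j) therefore mixes its own value with eight neighbors that sit e cells away, which is how non-local cells of the current layer can be brought in.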

Summary:

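These modules are essentially all derived from the Inception family. In my own experiments, the RFE module worked well: it is simple to implement, has a low computational cost, and is well suited to on-device face detection.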

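This article covered how SSH, PyramidBox, SRN, and DSFD use context information to improve face detection. SSH enlarges the receptive field with parallel convolutional layers; PyramidBox's CPM module combines the SSH module with a deep residual block; SRN's RFE module borrows from Inception to provide diverse receptive fields; and DSFD's FEM module enhances features with information from different dimensions. All of them aim to improve detection accuracy while keeping the added computation manageable.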
