keras

In Keras, you can adjust the learning rate dynamically with a callback, for example:

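```python
import tensorflow as tf


def step_decay(epoch):
    # Use 1e-5 for the first three epochs, then drop to 1e-6.
    if epoch < 3:
        lr = 1e-5
    else:
        lr = 1e-6
    return lr


# Pass this callback to model.fit(..., callbacks=[lr_callback]).
lr_callback = tf.keras.callbacks.LearningRateScheduler(step_decay, verbose=2)
```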

lr_scheduler

PyTorch provides a set of schedulers in `torch.optim.lr_scheduler`.

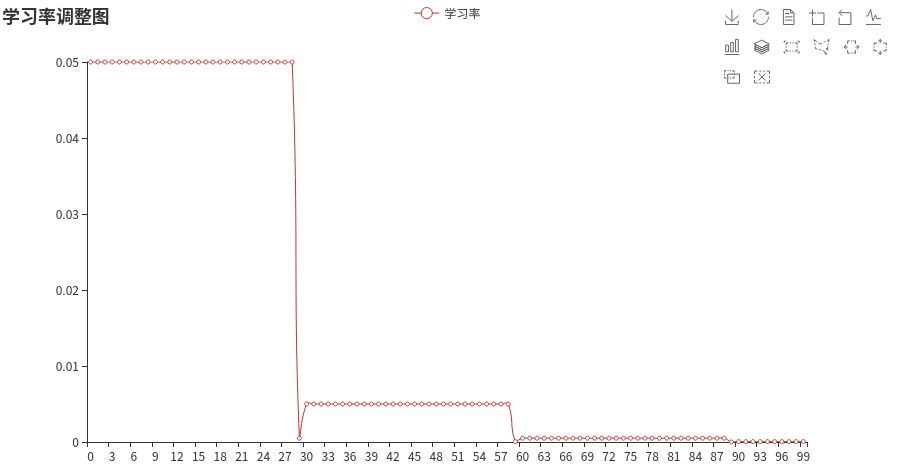

1. StepLR

```python
import torch
from pyecharts import options
from pyecharts.charts import Line
from torch import optim
from torch.nn import Linear
from torch.optim import lr_scheduler


class TestModel(torch.nn.Module):
    def __init__(self):
        super().__init__()
        self.linear = Linear(100, 2)

    def forward(self, x):
        return self.linear(x)


def line_graph(xs, ys):
    """Render the learning-rate curve as an HTML line chart."""
    line = Line()
    line.add_xaxis(xs)
    line.add_yaxis(series_name='Learning rate', y_axis=ys, is_smooth=True)
    line.set_global_opts(
        title_opts=options.TitleOpts(title='Learning rate schedule'),
        toolbox_opts=options.ToolboxOpts(),
    )
    line.set_series_opts(
        label_opts=options.LabelOpts(is_show=False),
    )
    line.render('折线图.html')


model = TestModel()
optimizer = optim.Adam(params=model.parameters(), lr=0.05)
# Multiply the learning rate by 0.1 every 30 epochs.
scheduler = lr_scheduler.StepLR(optimizer, step_size=30, gamma=0.1)

x = list(range(100))
y = []
for epoch in range(100):
    # No gradients are computed here; optimizer.step() is called only so
    # that it precedes scheduler.step(), as PyTorch expects.
    optimizer.step()
    scheduler.step()
    # get_last_lr() returns the rate the scheduler just set. Calling
    # get_lr() directly misreports the rate at step boundaries.
    y.append(scheduler.get_last_lr()[0])

line_graph(x, y)
```

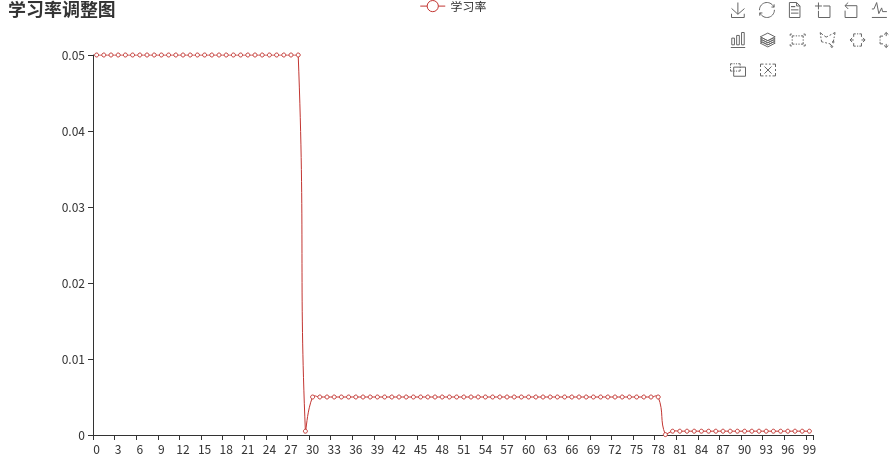
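The staircase shape of the StepLR curve can be reproduced without PyTorch. The following is a minimal sketch, assuming the closed form `base_lr * gamma ** (epoch // step_size)`; `step_lr` is a hypothetical helper written for illustration, not part of any library.

```python
def step_lr(base_lr, epoch, step_size=30, gamma=0.1):
    """Hypothetical helper: learning rate after `epoch` scheduler steps.

    Assumes the rate is multiplied by `gamma` once every `step_size` epochs.
    """
    return base_lr * gamma ** (epoch // step_size)


# Same settings as the example above: lr=0.05, step_size=30, gamma=0.1.
for epoch in (0, 29, 30, 59, 60, 90):
    print(epoch, step_lr(0.05, epoch))
```

The rate stays flat inside each 30-epoch window and drops by a factor of 10 at epochs 30, 60, and 90.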

2. MultiStepLR

```python
scheduler = lr_scheduler.MultiStepLR(optimizer, milestones=[30, 80], gamma=0.1)
```

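This scheduler takes a list of milestones instead of a fixed step size. The learning rate is multiplied by `gamma` at epochs 30 and 80, so it is constant within each interval: one rate before epoch 30, another from 30 to 79, and a third from 80 onward.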
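To make the milestone behaviour concrete, here is a small pure-Python sketch. It assumes the rate equals `base_lr * gamma ** k`, where `k` is the number of milestones already reached; `multi_step_lr` is a hypothetical helper, not a library function.

```python
from bisect import bisect_right


def multi_step_lr(base_lr, epoch, milestones=(30, 80), gamma=0.1):
    """Hypothetical helper: learning rate after `epoch` scheduler steps."""
    # bisect_right counts how many milestones are <= epoch.
    reached = bisect_right(sorted(milestones), epoch)
    return base_lr * gamma ** reached


for epoch in (0, 29, 30, 79, 80, 99):
    print(epoch, multi_step_lr(0.05, epoch))
```

With `milestones=(30, 80)` the rate changes exactly twice, at epoch 30 and at epoch 80.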

3. ExponentialLR

```python
scheduler = lr_scheduler.ExponentialLR(optimizer, gamma=0.9)
```

Exponential decay: the learning rate is multiplied by `gamma` after every epoch.
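As a rough illustration of how quickly this decays, the sketch below assumes the closed form `base_lr * gamma ** epoch`. The starting rate of 0.05 is borrowed from the StepLR example, and `exponential_lr` is a hypothetical helper.

```python
def exponential_lr(base_lr, epoch, gamma=0.9):
    """Hypothetical helper: learning rate after `epoch` scheduler steps."""
    return base_lr * gamma ** epoch


for epoch in (0, 1, 10, 50):
    print(epoch, exponential_lr(0.05, epoch))
```

Unlike StepLR, there are no plateaus: every epoch shrinks the rate by the same factor.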

The transformers library also provides several schedulers, for example:

1. get_linear_schedule_with_warmup

Learning rate warmup.

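```python
# Warm up over the first 5% of all training steps; the step count must be an integer.
num_warmup_steps = int(0.05 * len(train_dataloader) * epochs)
```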

```python
from transformers import get_linear_schedule_with_warmup

optimizer = optim.Adam(params=model.parameters(), lr=1e-3)
scheduler = get_linear_schedule_with_warmup(
    optimizer=optimizer,
    num_warmup_steps=10000,
    num_training_steps=100000,
)
```

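The learning rate first rises steadily, then falls steadily.

During warmup, the learning rate increases linearly from 0 to the initial lr set in the optimizer. After that it decreases linearly to 0.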
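The rise-then-fall shape described above can be sketched as a multiplier applied to the optimizer's initial lr. This is an illustrative pure-Python version under that assumption, not the library's code; `linear_warmup_factor` is a hypothetical name.

```python
def linear_warmup_factor(step, num_warmup_steps, num_training_steps):
    """Hypothetical helper: multiplier applied to the initial lr at `step`."""
    if step < num_warmup_steps:
        # Warmup phase: ramp linearly from 0 up to 1.
        return step / max(1, num_warmup_steps)
    # Decay phase: ramp linearly from 1 down to 0, never below 0.
    remaining = num_training_steps - step
    return max(0.0, remaining / max(1, num_training_steps - num_warmup_steps))


base_lr = 1e-3  # the initial lr from the optimizer in the example above
for step in (0, 5_000, 10_000, 55_000, 100_000):
    print(step, base_lr * linear_warmup_factor(step, 10_000, 100_000))
```

The multiplier peaks at 1 exactly when warmup ends (step 10,000 here), so the learning rate never exceeds the value passed to the optimizer.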

Theory
