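Introduction to KNN:

k-Nearest Neighbor (kNN) is the k-nearest-neighbor algorithm.

Classification: for a new sample, predict its class from the classes of its k nearest training samples, typically by majority vote.

Regression: for a new sample, use the mean of the label values of its k nearest training samples as the prediction.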
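The two prediction rules can be sketched in a few lines of NumPy. This is a minimal illustration, not how scikit-learn implements it: it assumes Euclidean distance and a brute-force search, and the helper names (`k_nearest_indices`, `knn_classify`, `knn_regress`) and the toy data are made up for this example.

```python
from collections import Counter

import numpy as np


def k_nearest_indices(x_train, x_new, k):
    """Return the indices of the k training samples closest to x_new (Euclidean distance)."""
    distances = np.linalg.norm(x_train - x_new, axis=1)
    return np.argsort(distances)[:k]


def knn_classify(x_train, y_train, x_new, k=3):
    """Predict a class label by majority vote among the k nearest neighbors."""
    idx = k_nearest_indices(x_train, x_new, k)
    votes = Counter(y_train[idx].tolist())
    # On a tie, most_common returns the label that was counted first.
    return votes.most_common(1)[0][0]


def knn_regress(x_train, y_train, x_new, k=3):
    """Predict a numeric value as the mean label of the k nearest neighbors."""
    idx = k_nearest_indices(x_train, x_new, k)
    return float(np.mean(y_train[idx]))


# Toy data (hypothetical): two well-separated clusters on a line.
x_toy = np.array([[0.0], [1.0], [2.0], [10.0], [11.0], [12.0]])
class_labels = np.array([0, 0, 0, 1, 1, 1])
value_labels = np.array([1.0, 2.0, 3.0, 10.0, 11.0, 12.0])

predicted_class = knn_classify(x_toy, class_labels, np.array([1.5]), k=3)
predicted_value = knn_regress(x_toy, value_labels, np.array([1.5]), k=3)
print(predicted_class, predicted_value)
```

For the query point 1.5, the three nearest samples all belong to the first cluster, so the vote and the mean are both taken over that cluster only.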

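Pros and cons:

- The k-nearest-neighbor model has very high capacity, which lets it reach high accuracy when the number of training samples is large.

- Prediction is computationally expensive: a brute-force search compares each new sample against every training sample.

- With a small training set it generalizes poorly and overfits very easily.

- It gives no indication of which features are important.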
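Because the risk of overfitting depends strongly on k, one common way to pick it is cross-validation. The sketch below is only an illustration on synthetic data generated with `make_classification`, not on the fishing-vessel dataset; the candidate values of k and the variable names are assumptions chosen for the example.

```python
from sklearn.datasets import make_classification
from sklearn.model_selection import cross_val_score
from sklearn.neighbors import KNeighborsClassifier
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

# Synthetic stand-in data (hypothetical), used only to make the example runnable.
x_demo, y_demo = make_classification(
    n_samples=300, n_features=5, n_informative=3, n_redundant=0, random_state=42
)

candidate_ks = [1, 3, 5, 11, 21]
mean_scores = {}
for k in candidate_ks:
    # Scaling sits inside the pipeline, so each fold is scaled
    # with statistics from its own training part only.
    model = make_pipeline(StandardScaler(), KNeighborsClassifier(n_neighbors=k))
    scores = cross_val_score(model, x_demo, y_demo, cv=5)
    mean_scores[k] = scores.mean()

best_k = max(mean_scores, key=mean_scores.get)
for k, score in mean_scores.items():
    print(f"k={k:>2}  mean CV accuracy={score:.3f}")
print("best k:", best_k)
```

A very small k tends to follow noise in the training data, while a very large k smooths over real class boundaries, so the best value usually lies somewhere in between and depends on the data.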

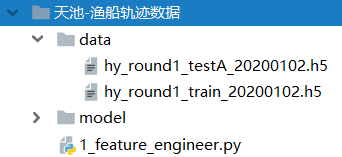

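The dataset is the "fishing vessel trajectory data" from Alibaba's Tianchi platform.

The dataset is stored in HDF5 (.h5) format. The code reads it from the data/ directory and writes the trained model to the model/ directory, both relative to the working directory.

The code is as follows: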

import pickle

import numpy as np
import pandas as pd
from sklearn.model_selection import train_test_split
from sklearn.neighbors import KNeighborsClassifier
from sklearn.preprocessing import StandardScaler

# Load the dataset from the HDF5 file
data = pd.read_hdf("data/hy_round1_train_20200102.h5")
print(data.shape)
print(data.columns)

# Check for missing values (NaN)
# print(np.all(pd.notnull(data)))

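# Convert the DataFrame to a NumPy array
data = np.array(data)

# Encode the string labels in the last column as integers
# print(np.where(data[:, -1] == "刺网"))
data[data[:, -1] == "刺网", -1] = 0  # gillnet
data[data[:, -1] == "围网", -1] = 1  # purse seine
data[data[:, -1] == "拖网", -1] = 2  # trawl

# Features: every column except the last two; target: the last column
x_train, x_test, y_train, y_test = train_test_split(
    data[:, :-2], data[:, -1], test_size=0.25, random_state=42
)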

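# Standardize the features (min-max normalization is sensitive to outliers)
transfer = StandardScaler()
x_train = transfer.fit_transform(x_train)
# Reuse the training-set mean and standard deviation; do not refit on the test set
x_test = transfer.transform(x_test)

y_train = y_train.astype("int")
y_test = y_test.astype("int")
print(y_train)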

# Train the model
estimator = KNeighborsClassifier(n_neighbors=5)
estimator.fit(x_train, y_train)

# Save the model (the model/ directory must already exist)
with open("model/hy_knn_model.pickle", "wb") as f:
    pickle.dump(estimator, f)

# Evaluate the model

# Predictions on the test set
y_predict = estimator.predict(x_test)
print("Predictions:\n", y_predict)
print("Matches ground truth:\n", y_test == y_predict)

# Accuracy
score = estimator.score(x_test, y_test)
print("Model accuracy:", score)
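The script above pickles only the classifier, so the fitted `StandardScaler` would have to be saved separately before the model can be reused on new data. One possible alternative, sketched here on synthetic data rather than the fishing-vessel dataset, is to wrap both steps in a scikit-learn `Pipeline` and pickle that single object. The data, the temporary file name, and the variable names are hypothetical.

```python
import os
import pickle
import tempfile

import numpy as np
from sklearn.neighbors import KNeighborsClassifier
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

# Synthetic stand-in data (hypothetical): 4 features, binary label.
rng = np.random.default_rng(42)
x_demo = rng.normal(size=(200, 4))
y_demo = (x_demo[:, 0] + x_demo[:, 1] > 0).astype(int)

# The pipeline fits the scaler and the classifier together.
pipeline = make_pipeline(StandardScaler(), KNeighborsClassifier(n_neighbors=5))
pipeline.fit(x_demo, y_demo)

# Save and reload the whole pipeline, scaler included.
with tempfile.TemporaryDirectory() as tmp_dir:
    model_path = os.path.join(tmp_dir, "knn_pipeline.pickle")
    with open(model_path, "wb") as f:
        pickle.dump(pipeline, f)
    with open(model_path, "rb") as f:
        restored = pickle.load(f)

# The restored pipeline scales raw inputs itself before predicting.
x_new = rng.normal(size=(5, 4))
same_predictions = np.array_equal(pipeline.predict(x_new), restored.predict(x_new))
print(same_predictions)
```

With this layout, whoever loads the pickle can pass unscaled feature rows directly to `predict`, and the training-set mean and standard deviation travel with the model.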
