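Google officially released ARCore in August; see the official site for an introduction. As a competitor to ARKit, ARCore has one serious weakness: it supports very few devices, currently only Google's Pixel and the Samsung S8. I happen to have a Pixel, so I wanted to build an ARCore app, and the idea I settled on was a demo that combines ARCore with face detection.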

1. Basic ARCore concepts

According to the official site, ARCore's core features are:

Motion tracking: allows the phone to understand and track its position relative to the world.

Environmental understanding: allows the phone to detect the size and location of flat horizontal surfaces like the ground or a coffee table.

Light estimation: allows the phone to estimate the environment's current lighting conditions.

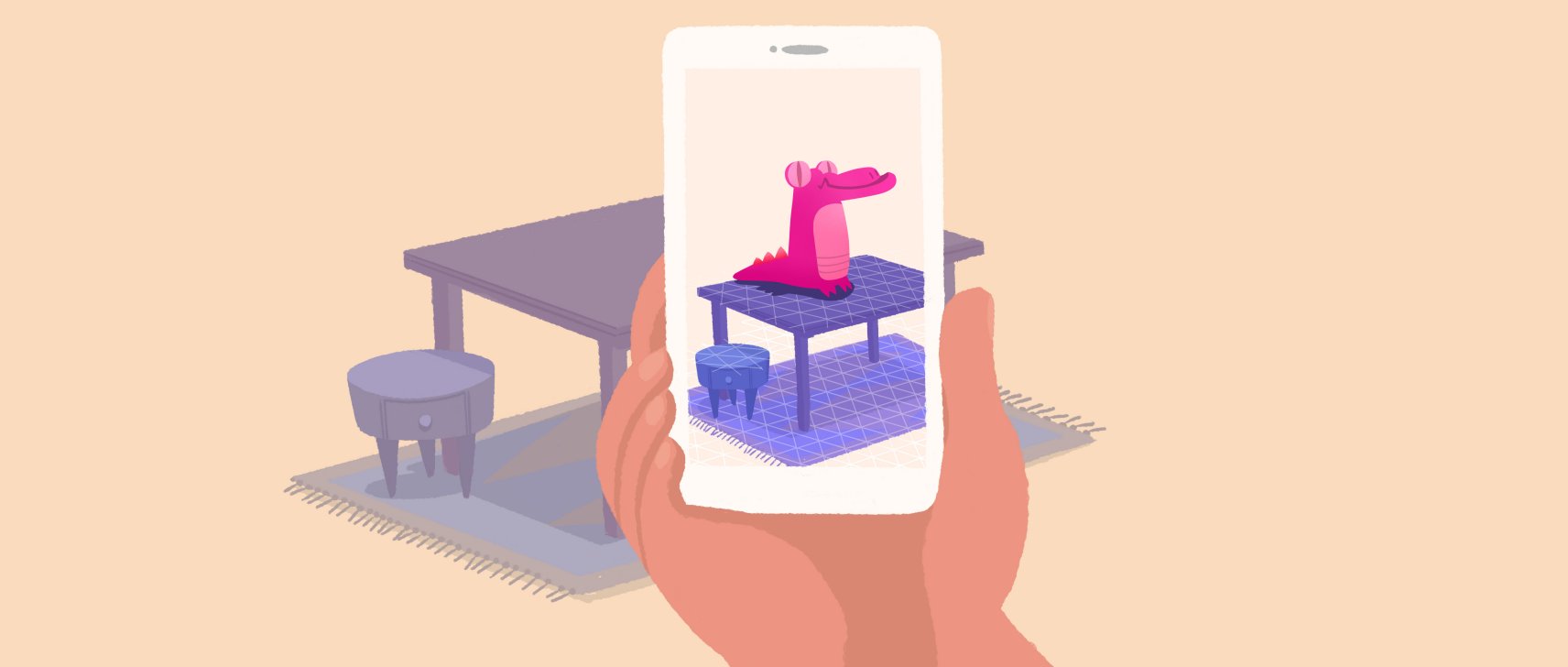
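To make motion tracking a little more concrete: a tracked position and orientation is usually expressed as a pose, that is, a translation plus a rotation. The sketch below is plain Java and does not use the ARCore SDK. The class `PoseSketch` and its method `transformPoint` are hypothetical names invented for this illustration. It only shows how a point in the device's local coordinates could be mapped into world coordinates once a pose is known.

```java
/** Standalone illustration of a pose (rotation + translation); not the ARCore Pose class. */
final class PoseSketch {
    private final float tx, ty, tz;     // translation of the device in world space
    private final float qx, qy, qz, qw; // rotation as a unit quaternion (x, y, z, w)

    PoseSketch(float[] translation, float[] quaternion) {
        tx = translation[0]; ty = translation[1]; tz = translation[2];
        qx = quaternion[0]; qy = quaternion[1]; qz = quaternion[2]; qw = quaternion[3];
    }

    /** Rotates the local point by the quaternion, then adds the translation. */
    float[] transformPoint(float[] p) {
        // c = u x p, where u = (qx, qy, qz) is the vector part of the quaternion
        float cx = qy * p[2] - qz * p[1];
        float cy = qz * p[0] - qx * p[2];
        float cz = qx * p[1] - qy * p[0];
        // cc = u x (u x p)
        float ccx = qy * cz - qz * cy;
        float ccy = qz * cx - qx * cz;
        float ccz = qx * cy - qy * cx;
        // rotated = p + 2 * qw * c + 2 * cc
        return new float[] {
            p[0] + 2f * (qw * cx + ccx) + tx,
            p[1] + 2f * (qw * cy + ccy) + ty,
            p[2] + 2f * (qw * cz + ccz) + tz
        };
    }

    public static void main(String[] args) {
        // Device turned 90 degrees about the Y axis and moved 2 units along -Z.
        float s = (float) Math.sqrt(0.5);
        PoseSketch pose = new PoseSketch(new float[] {0f, 0f, -2f}, new float[] {0f, s, 0f, s});
        float[] world = pose.transformPoint(new float[] {1f, 0f, 0f});
        System.out.println(world[0] + ", " + world[1] + ", " + world[2]);
    }
}
```

In a real app the pose would come from the SDK on every frame; here the values are hard-coded purely for demonstration.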

The environmental understanding feature looks like this:

The effect of light estimation looks like this:

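2. Integrating face detection

The face detection code was written for an earlier project, which would make it awkward to reuse from Unity, so I chose the Android SDK instead. SDK address: https://github.com/google-ar/arcore-android-sdk

First, let's look at how the demo uses OpenGL to render the camera data in real time. The whole flow lives in the BackgroundRenderer class.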

```java
import android.content.Context;
import android.opengl.GLES11Ext;
import android.opengl.GLES20;
import com.google.ar.core.Frame;

/**
 * This class renders the AR background from camera feed. It creates and hosts the texture
 * given to ARCore to be filled with the camera image.
 */
public class BackgroundRenderer {
    // A texture ID is defined here, so the camera data is handled through a SurfaceTexture.
    private int mTextureId = -1;

    public void setTextureId(int textureId) {
        mTextureId = textureId;
    }

    public void createOnGlThread(Context context) {
        // Generate the background texture.
        int[] textures = new int[1];
        GLES20.glGenTextures(1, textures, 0);
        mTextureId = textures[0];
        // A texture ID has been generated above; now bind it as the current texture object.
        GLES20.glBindTexture(mTextureTarget, mTextureId);
        ...
    }

    public void draw(Frame frame) {
        // If display rotation changed (also includes view size change), we need to re-query the uv
        // coordinates for the screen rect, as they may have changed as well.
        // These lines are important: they refresh the texture coordinates of the screen quad.
        if (frame.isDisplayRotationChanged()) {
            frame.transformDisplayUvCoords(mQuadTexCoord, mQuadTexCoordTransformed);
        }

        // No need to test or write depth, the screen quad has arbitrary depth, and is expected
        // to be drawn first.
        GLES20.glDisable(GLES20.GL_DEPTH_TEST);
        // Disable writes to the depth buffer.
        GLES20.glDepthMask(false);

        GLES20.glBindTexture(GLES11Ext.GL_TEXTURE_EXTERNAL_OES, mTextureId);

        // Restore the depth state for further drawing.
        // Later on we still need to draw 3...
```
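The draw() method above re-queries the UV coordinates of the screen quad whenever the display rotation changes. As a rough illustration of why that is needed, the following plain-Java sketch rotates a set of texture coordinates about the texture centre in 90-degree steps. The class `UvRotationSketch` and the method `rotateUv` are hypothetical names, and this is not how ARCore implements `transformDisplayUvCoords`. The rotation direction chosen here is also an assumption. It only shows the kind of remapping involved.

```java
/** Standalone illustration of remapping quad texture coordinates after a display rotation. */
final class UvRotationSketch {
    /**
     * Rotates interleaved (u, v) pairs about the centre (0.5, 0.5) of the texture.
     * Each quarter turn maps (u, v) to (v, 1 - u); the direction is an assumption.
     */
    static float[] rotateUv(float[] uv, int quarterTurns) {
        int turns = ((quarterTurns % 4) + 4) % 4; // normalise to 0..3, also for negatives
        float[] out = uv.clone();                 // leave the caller's array untouched
        for (int t = 0; t < turns; t++) {
            for (int i = 0; i + 1 < out.length; i += 2) {
                float u = out[i];
                float v = out[i + 1];
                out[i] = v;
                out[i + 1] = 1.0f - u;
            }
        }
        return out;
    }

    public static void main(String[] args) {
        // Four corners of a full-screen quad, in triangle-strip order.
        float[] quad = {0f, 1f, 0f, 0f, 1f, 1f, 1f, 0f};
        float[] rotated = rotateUv(quad, 1);
        for (int i = 0; i < rotated.length; i += 2) {
            System.out.println("(" + rotated[i] + ", " + rotated[i + 1] + ")");
        }
    }
}
```

Without such a remapping, the camera image would appear rotated relative to the screen after the device switches between portrait and landscape.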
