1. Spark ML Code Implementation

1.1 Key Concepts

- DataFrame

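The dataset used for learning.

It can hold columns of many types.

- Pipeline components

Transformers: transform()

An algorithm that converts one DataFrame into another DataFrame.

Estimators: fit()

An algorithm that is fit on a DataFrame to produce a Transformer.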
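The difference between the two component types can be sketched with two built-in stages. This is a minimal illustration, assuming Spark 2.x or later and a local SparkSession; the sample rows and the column names (`text`, `category`, `words`, `categoryIndex`) are made up for this sketch and do not come from the examples in this post.

```scala
import org.apache.spark.ml.feature.{StringIndexer, Tokenizer}
import org.apache.spark.sql.SparkSession

// Assumption: a local session; in spark-shell a session named `spark` already exists.
val spark = SparkSession.builder().master("local[*]").appName("concepts-sketch").getOrCreate()
import spark.implicits._

// Hypothetical sample data, only for illustration.
val df = Seq(
  (0L, "spark ml pipeline", "a"),
  (1L, "hadoop mapreduce", "b"),
  (2L, "spark sql", "a")
).toDF("id", "text", "category")

// Transformer: transform() returns a new DataFrame with an extra "words" column.
val tokenizer = new Tokenizer().setInputCol("text").setOutputCol("words")
val tokenized = tokenizer.transform(df)

// Estimator: fit() learns from the DataFrame and returns a model,
// and that model is itself a Transformer.
val indexer = new StringIndexer().setInputCol("category").setOutputCol("categoryIndex")
val indexerModel = indexer.fit(df)
val indexed = indexerModel.transform(tokenized)

indexed.show(false)
```

The Tokenizer needs no training, so it only exposes transform(). The StringIndexer has to see the data first, so it is fit once and the resulting model does the transforming.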

1.2 How It Works

Training:

Prediction:

1.3 Other

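- Parameters:

All Transformers and Estimators share a common API for specifying parameters.

A Param is a named parameter.

A ParamMap is a set of (parameter, value) pairs.

There are two ways to pass parameters: set them on the instance, or pass a ParamMap to fit() or transform().

- Saving and loading pipelines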
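The two ways of passing parameters can be sketched without any data. The snippet below is a minimal illustration that assumes only the Spark ML library on the classpath; the names `overrides` and `lrOverridden` and the chosen values are hypothetical. It uses copy() to show the effect of a ParamMap, since passing a ParamMap to fit() overrides the instance-level values in the same way.

```scala
import org.apache.spark.ml.classification.LogisticRegression
import org.apache.spark.ml.param.ParamMap

val lr = new LogisticRegression()

// Way 1: set parameters on the instance through setter methods.
lr.setMaxIter(10).setRegParam(0.01)

// Way 2: collect (parameter, value) pairs in a ParamMap,
// to be passed to fit() or transform() later.
val overrides = ParamMap(lr.maxIter -> 30, lr.regParam -> 0.1)

// copy() returns a new instance with the ParamMap applied on top of
// the instance-level values; lr itself is left unchanged.
val lrOverridden = lr.copy(overrides)

// explainParam prints the documentation, default, and current value of one Param.
println(lr.explainParam(lr.maxIter))
println(s"instance maxIter = ${lr.getMaxIter}, overridden maxIter = ${lrOverridden.getMaxIter}")
```

Values in a ParamMap take precedence over values set with setters, which makes it convenient to train several models from one configured Estimator.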

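1.4 Example 1

Steps:

- Prepare data with labels and features

- Create a logistic regression Estimator

- Set parameters with setter methods

- Train a model using the parameters stored in lr

- Use a ParamMap to override specific parameters

- Prepare the test data

- Make predictions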

import org.apache.spark.ml.classification.LogisticRegression

import org.apache.spark.ml.param.ParamMap

import org.apache.spark.ml.linalg.{Vector,Vectors}

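import org.apache.spark.sql.Row

import org.apache.spark.sql.SQLContext

// sc is the SparkContext provided by spark-shell

val sqlContext = new SQLContext(sc)

// Create the training dataset

val training = sqlContext.createDataFrame(Seq(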

(1.0, Vectors.dense(0.0,1.1,0.1)),

(0.0, Vectors.dense(2.0,1.1,-1.0)),

(0.0, Vectors.dense(2.0,1.3,1.0)),

(1.0, Vectors.dense(0.0,1.2,-0.5))

)).toDF("label","features")

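// Create the logistic regression Estimator

val lr = new LogisticRegression()

/* Tune parameters

* setMaxIter: maximum number of iterations, the stopping condition for training; defaults to 100

* setRegParam: regularization coefficient; defaults to 0, meaning no regularization

*/

lr.setMaxIter(10).setRegParam(0.01)

// Fit the Estimator to produce a Transformer (the model)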

val model = lr.fit(training)

// Train a second model, overriding parameters with a ParamMap

val paramMap = ParamMap(lr.maxIter -> 20, lr.regParam->0.1, lr.threshold ->0.55)

val paramMap2 = ParamMap(lr.probabilityCol -> "myProbability")

val paramMapCombined = paramMap ++ paramMap2

val model2 = lr.fit(training, paramMapCombined)

// Create the test dataset

val test = sqlContext.createDataFrame(Seq(

(1.0, Vectors.dense(-1.0,1.5,1.3)),

(0.0, Vectors.dense(3.0,2.1,-0.1)),

(1.0, Vectors.dense(0.0,2.2,-1.5))

)).toDF("label","features")

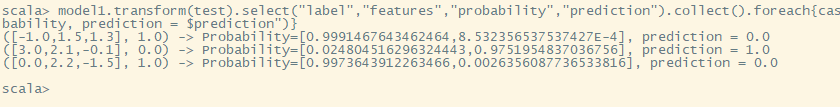

// Predict with the first model; model2 writes its probabilities to "myProbability" instead

model.transform(test).select("label","features","probability","prediction").collect().

foreach{case

Row(label: Double, features: Vector, probability: Vector, prediction: Double) =>

println(s"($features, $label) -> Probability=$probability, prediction = $prediction")

}

Prediction results figure
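To check which parameters each model was actually trained with, the parent Estimator of a model can be inspected. The following is a minimal sketch, assuming Spark 2.x or later with a local SparkSession instead of the sqlContext used above; it repeats the training data from the example so that it runs on its own.

```scala
import org.apache.spark.ml.classification.LogisticRegression
import org.apache.spark.ml.linalg.Vectors
import org.apache.spark.ml.param.ParamMap
import org.apache.spark.sql.SparkSession

// Assumption: a local session; in spark-shell a session named `spark` already exists.
val spark = SparkSession.builder().master("local[*]").appName("param-inspection-sketch").getOrCreate()

val training = spark.createDataFrame(Seq(
  (1.0, Vectors.dense(0.0, 1.1, 0.1)),
  (0.0, Vectors.dense(2.0, 1.1, -1.0)),
  (0.0, Vectors.dense(2.0, 1.3, 1.0)),
  (1.0, Vectors.dense(0.0, 1.2, -0.5))
)).toDF("label", "features")

val lr = new LogisticRegression().setMaxIter(10).setRegParam(0.01)

// Trained with the values set on the instance.
val model = lr.fit(training)

// Trained with the ParamMap values taking precedence over the instance values.
val model2 = lr.fit(training, ParamMap(lr.maxIter -> 20, lr.probabilityCol -> "myProbability"))

// parent is the Estimator that produced the model; extractParamMap lists its parameters.
println(s"model was fit with: ${model.parent.extractParamMap()}")
println(s"model2 was fit with: ${model2.parent.extractParamMap()}")

// The renamed probability column appears in the output of model2.
val outputColumns = model2.transform(training).columns
println(outputColumns.mkString(", "))
```

Because model2 renames its probability column, selecting "probability" from its output would fail; select "myProbability" instead.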

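1.5 Example 2

Steps:

- Prepare the training documents

- Configure an ML pipeline with three stages: Tokenizer, HashingTF, and lr

- Fit the pipeline to the training data

- Save the pipelines to disk, both the fitted and the unfitted one

- Load the pipeline

- Prepare the test documents

- Make predictions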

import org.apache.spark.ml.feature.{Tokenizer,HashingTF}

import org.apache.spark.ml.{Pipeline,PipelineModel}

import org.apache.spark.ml.classification.LogisticRegression

import org.apache.spark.ml.linalg.{Vector,Vectors}

import org.apache.spark.sql.Row

import org.apache.spark.sql.SQLContext

val sqlContext = new SQLContext(sc)

// Create the training dataset

val training = sqlContext.createDataFrame(Seq(

(0L, "a b c d e spark", 1.0),

(1L, "b d", 0.0),

(2L, "spark f g h", 1.0),

(3L, "hadoop mapreduce", 0.0)

)).toDF("id","text","label")

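// Tokenizer splits each sentence into words

val tokenizer = new Tokenizer().setInputCol("text").setOutputCol("words")

// HashingTF takes sets of terms and converts them into fixed-length feature vectors, counting term frequencies as it hashes.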

val hashingTF = new HashingTF().setNumFeatures(1000).setInputCol(tokenizer.getOutputCol).setOutputCol("features")

val lr = new LogisticRegression().setMaxIter(10).setRegParam(0.01)

val pipeline = new Pipeline().setStages(Array(tokenizer,hashingTF,lr))

val model = pipeline.fit(training)

pipeline.save("/tmp/sparkMl-LRpipeline")

model.save("/tmp/sparkMl-LRmodel")

// Load the fitted model

val model2 = PipelineModel.load("/tmp/sparkMl-LRmodel")

// Create the test dataset

val test = sqlContext.createDataFrame(Seq(

(4L, "spark h d e"),

(5L, "a f c"),

(6L, "spark mapreduce"),

(7L, "hadoop apache")

)).toDF("id","text")

model.transform(test).select("id","text","probability","prediction").collect().

foreach{case Row(id: Long, text: String, probability: Vector, prediction: Double) =>

println(s"($id, $text) -> Probability=$probability, prediction = $prediction")}
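The save and load steps can also be exercised for the unfitted pipeline, and save() fails when the target path already exists, so a rerun needs an overwrite. The sketch below is one way to handle both, assuming Spark 2.x or later with a local SparkSession and the local file system as the default file system; the temporary directory and the names `unfittedPipeline`, `fittedModel`, and `refitModel` are hypothetical.

```scala
import java.nio.file.Files
import org.apache.spark.ml.{Pipeline, PipelineModel}
import org.apache.spark.ml.classification.LogisticRegression
import org.apache.spark.ml.feature.{HashingTF, Tokenizer}
import org.apache.spark.sql.SparkSession

// Assumption: a local session; in spark-shell a session named `spark` already exists.
val spark = SparkSession.builder().master("local[*]").appName("pipeline-persistence-sketch").getOrCreate()

val training = spark.createDataFrame(Seq(
  (0L, "a b c d e spark", 1.0),
  (1L, "b d", 0.0),
  (2L, "spark f g h", 1.0),
  (3L, "hadoop mapreduce", 0.0)
)).toDF("id", "text", "label")

val tokenizer = new Tokenizer().setInputCol("text").setOutputCol("words")
val hashingTF = new HashingTF().setNumFeatures(1000).setInputCol(tokenizer.getOutputCol).setOutputCol("features")
val lr = new LogisticRegression().setMaxIter(10).setRegParam(0.01)
val pipeline = new Pipeline().setStages(Array(tokenizer, hashingTF, lr))
val model = pipeline.fit(training)

// Hypothetical scratch directory, used only so the sketch does not touch real paths.
val baseDir = Files.createTempDirectory("sparkMl-sketch").toString
val pipelinePath = s"$baseDir/pipeline"
val modelPath = s"$baseDir/model"

// write.overwrite() replaces an existing directory instead of failing.
pipeline.write.overwrite().save(pipelinePath)
model.write.overwrite().save(modelPath)

// The unfitted pipeline loads as a Pipeline, the fitted one as a PipelineModel.
val unfittedPipeline = Pipeline.load(pipelinePath)
val fittedModel = PipelineModel.load(modelPath)

// A loaded unfitted pipeline still has to be fit before it can predict.
val refitModel = unfittedPipeline.fit(training)
fittedModel.transform(training).select("id", "prediction").show()
```

Loading the fitted model skips training entirely, while the unfitted pipeline only restores the stage configuration.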

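1.6 Example 3: Model Tuning

Steps:

- Prepare the training data

- Configure an ML pipeline with three stages: Tokenizer, HashingTF, and lr

- Build a parameter grid with ParamGridBuilder

- Set up a CrossValidator, which requires an Estimator, a set of Estimator ParamMaps, and an Evaluator

- Run cross-validation and choose the best set of parameters

- Prepare the test data

- Make predictions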

import org.apache.spark.ml.feature.{Tokenizer,HashingTF}

import org.apache.spark.ml.{Pipeline,PipelineModel}

import org.apache.spark.ml.classification.LogisticRegression

import org.apache.spark.ml.linalg.{Vector,Vectors}

import org.apache.spark.sql.Row

import org.apache.spark.sql.SQLContext

import org.apache.spark.ml.evaluation.BinaryClassificationEvaluator

import org.apache.spark.ml.tuning.{CrossValidator,ParamGridBuilder}

val sqlContext = new SQLContext(sc)

// Create the training dataset

val training = sqlContext.createDataFrame(Seq(

(0L, "a b c d e spark", 1.0),

(1L, "b d", 0.0),

(2L, "spark f g h", 1.0),

(3L, "hadoop mapreduce", 0.0),

(4L, "b spark who", 1.0),

(5L, "g d a y", 0.0),

(6L, "spark fly", 1.0),

(7L, "was mapreduce", 0.0),

(8L, "e spark program", 1.0),

(9L, "a e c l", 0.0),

(10L, "spark compile", 1.0),

(11L, "hadoop software", 0.0)

)).toDF("id","text","label")

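// Tokenizer splits each sentence into words

val tokenizer = new Tokenizer().setInputCol("text").setOutputCol("words")

// HashingTF takes sets of terms and converts them into fixed-length feature vectors, counting term frequencies as it hashes.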

val hashingTF = new HashingTF().setNumFeatures(1000).setInputCol(tokenizer.getOutputCol).setOutputCol("features")

val lr = new LogisticRegression().setMaxIter(10)

val pipeline = new Pipeline().setStages(Array(tokenizer,hashingTF,lr))

// Build the parameter grid: 3 values of numFeatures x 2 values of regParam = 6 combinations

val paramGrid = new ParamGridBuilder().addGrid(hashingTF.numFeatures, Array(10,100,1000)).

addGrid(lr.regParam, Array(0.1, 0.01)).build()

// Configure cross-validation to select the best parameter combination

val cv = new CrossValidator().setEstimator(pipeline).setEstimatorParamMaps(paramGrid).

setEvaluator(new BinaryClassificationEvaluator()).setNumFolds(2)

val CVmodel = cv.fit(training)

// Create the test dataset

val test = sqlContext.createDataFrame(Seq(

(12L, "spark h d e"),

(13L, "a f c"),

(14L, "spark mapreduce"),

(15L, "hadoop apache")

)).toDF("id","text")

CVmodel.transform(test).select("id","text","probability","prediction").collect().

foreach{case Row(id: Long, text: String, probability: Vector, prediction: Double) =>

println(s"($id, $text) -> Probability=$probability, prediction = $prediction")}
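After cross-validation it is often useful to see how each parameter combination scored and which one won. The following is a minimal sketch, assuming Spark 2.x or later with a local SparkSession; it repeats the training data from the example but uses a smaller grid (4 combinations) to keep the run short, and the names `cvModel`, `bestPipeline`, and `bestLr` are hypothetical.

```scala
import org.apache.spark.ml.{Pipeline, PipelineModel}
import org.apache.spark.ml.classification.{LogisticRegression, LogisticRegressionModel}
import org.apache.spark.ml.evaluation.BinaryClassificationEvaluator
import org.apache.spark.ml.feature.{HashingTF, Tokenizer}
import org.apache.spark.ml.tuning.{CrossValidator, ParamGridBuilder}
import org.apache.spark.sql.SparkSession

// Assumption: a local session; in spark-shell a session named `spark` already exists.
val spark = SparkSession.builder().master("local[*]").appName("cv-inspection-sketch").getOrCreate()

val training = spark.createDataFrame(Seq(
  (0L, "a b c d e spark", 1.0), (1L, "b d", 0.0),
  (2L, "spark f g h", 1.0), (3L, "hadoop mapreduce", 0.0),
  (4L, "b spark who", 1.0), (5L, "g d a y", 0.0),
  (6L, "spark fly", 1.0), (7L, "was mapreduce", 0.0),
  (8L, "e spark program", 1.0), (9L, "a e c l", 0.0),
  (10L, "spark compile", 1.0), (11L, "hadoop software", 0.0)
)).toDF("id", "text", "label")

val tokenizer = new Tokenizer().setInputCol("text").setOutputCol("words")
val hashingTF = new HashingTF().setInputCol(tokenizer.getOutputCol).setOutputCol("features")
val lr = new LogisticRegression().setMaxIter(10)
val pipeline = new Pipeline().setStages(Array(tokenizer, hashingTF, lr))

// Smaller grid than in the example above: 2 x 2 = 4 combinations.
val paramGrid = new ParamGridBuilder()
  .addGrid(hashingTF.numFeatures, Array(10, 100))
  .addGrid(lr.regParam, Array(0.1, 0.01))
  .build()

val cv = new CrossValidator()
  .setEstimator(pipeline)
  .setEstimatorParamMaps(paramGrid)
  .setEvaluator(new BinaryClassificationEvaluator())
  .setNumFolds(2)

val cvModel = cv.fit(training)

// avgMetrics(i) is the evaluator metric averaged over the folds
// for the i-th ParamMap in the grid.
cvModel.getEstimatorParamMaps.zip(cvModel.avgMetrics).foreach { case (params, metric) =>
  println(s"metric = $metric for\n$params")
}

// bestModel is the pipeline refit on the full training set with the winning ParamMap.
val bestPipeline = cvModel.bestModel.asInstanceOf[PipelineModel]
val bestLr = bestPipeline.stages.last.asInstanceOf[LogisticRegressionModel]
println(s"best regParam = ${bestLr.getRegParam}")
```

With k folds and n parameter combinations, cross-validation trains k x n models before the final refit, so the grid size has a direct cost.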

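1.7 Example 4: Model Tuning with Train-Validation Split

Steps:

- Prepare the training and test data

- Build a parameter grid with ParamGridBuilder

- Use TrainValidationSplit to select the model and parameters; like CrossValidator, it requires an Estimator, a set of Estimator ParamMaps, and an Evaluator

- Run the train-validation split and choose the best set of parameters

- Make predictions on the test data; the model used is the one trained with the best-performing parameter combination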

import org.apache.spark.ml.regression.LinearRegression

import org.apache.spark.sql.Row

import org.apache.spark.sql.SQLContext

import org.apache.spark.ml.evaluation.RegressionEvaluator

import org.apache.spark.ml.tuning.{TrainValidationSplit,ParamGridBuilder}

val sqlContext = new SQLContext(sc)

// Read the data file

// Read from HDFS

//val data = sqlContext.read.format("libsvm").load("/data/sample_linear_regression_data.txt")

// Read from the local file system

val data = sqlContext.read.format("libsvm").load("file:/home/hadoop/spark/data/sample_linear_regression_data.txt")

// Split into training (90%) and test (10%) sets; the test set is used for the final prediction

val Array(training, test) = data.randomSplit(Array(0.9, 0.1),seed=12345)

val lr = new LinearRegression()

// Build the parameter grid

val paramGrid = new ParamGridBuilder().addGrid(lr.elasticNetParam, Array(0.0,0.5,1.0)).

addGrid(lr.fitIntercept).addGrid(lr.regParam,Array(0.1, 0.01)).build()

// Configure the train-validation split: 80% of the data for training, 20% for validation

val trainValidationSplit = new TrainValidationSplit().setEstimator(lr).setEstimatorParamMaps(paramGrid).

setEvaluator(new RegressionEvaluator).setTrainRatio(0.8)

val model = trainValidationSplit.fit(training)

model.transform(test).select("features","label","prediction").show()
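The quality of the selected model can be quantified on the held-out test set instead of only inspecting the prediction table. The sketch below is a minimal illustration, assuming Spark 2.x or later with a local SparkSession. Because the libsvm file from the example may not be available, it uses synthetic data generated from a made-up linear relation (label = 2x + 1 plus a small deterministic perturbation); that data, and the names `tvsModel` and `rmse`, are assumptions of this sketch.

```scala
import org.apache.spark.ml.evaluation.RegressionEvaluator
import org.apache.spark.ml.linalg.Vectors
import org.apache.spark.ml.regression.LinearRegression
import org.apache.spark.ml.tuning.{ParamGridBuilder, TrainValidationSplit}
import org.apache.spark.sql.SparkSession

// Assumption: a local session; in spark-shell a session named `spark` already exists.
val spark = SparkSession.builder().master("local[*]").appName("tvs-evaluation-sketch").getOrCreate()

// Synthetic data, for illustration only: label = 2x + 1 plus a small perturbation.
val data = spark.createDataFrame((1 to 100).map { i =>
  val x = i.toDouble / 10
  (2.0 * x + 1.0 + 0.1 * math.sin(i), Vectors.dense(x))
}).toDF("label", "features")

val Array(training, test) = data.randomSplit(Array(0.9, 0.1), seed = 12345)

val lr = new LinearRegression()

// 3 x 2 x 2 = 12 parameter combinations.
val paramGrid = new ParamGridBuilder()
  .addGrid(lr.elasticNetParam, Array(0.0, 0.5, 1.0))
  .addGrid(lr.fitIntercept)
  .addGrid(lr.regParam, Array(0.1, 0.01))
  .build()

val tvs = new TrainValidationSplit()
  .setEstimator(lr)
  .setEstimatorParamMaps(paramGrid)
  .setEvaluator(new RegressionEvaluator())
  .setTrainRatio(0.8)

val tvsModel = tvs.fit(training)

// validationMetrics(i) is the metric on the validation part for the i-th ParamMap.
tvsModel.getEstimatorParamMaps.zip(tvsModel.validationMetrics).foreach { case (params, metric) =>
  println(s"validation metric = $metric for\n$params")
}

// Score the selected model on the held-out test set with root mean squared error.
val rmse = new RegressionEvaluator().setMetricName("rmse").evaluate(tvsModel.transform(test))
println(s"test RMSE = $rmse")
```

Unlike cross-validation, the train-validation split evaluates each parameter combination only once, which is cheaper but gives a less reliable estimate on small datasets.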

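2. Applications of User Profiling Systems

2.1 User Credit Rating

2.2 Applications in Big Data Marketing

2.3 User Churn Early Warning

2.4 Potential User Analysis

2.5 Anomaly Detection and Outlier Analysis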
