Custom loss functions in Keras

Sometimes the loss functions that ship with Keras do not meet our needs, and we have to supply our own, for example a Dice loss.

The Dice loss is usually the negated Dice coefficient, so we first compute the Dice coefficient:
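For reference, the smoothed coefficient computed by the code in this post can be written as follows. The symbols are introduced here only for illustration: $y_i$ and $p_i$ are the flattened ground-truth and predicted values, and $s$ is the `smooth` term.

$$
\mathrm{Dice}(y, p) = \frac{2 \sum_i y_i p_i + s}{\sum_i y_i + \sum_i p_i + s}
$$

The loss is then taken as either $-\mathrm{Dice}$ or $1 - \mathrm{Dice}$. Both variants appear below; they differ only by a constant, so they give the same gradients.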

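import keras.backend as K

def dice_coef(y_true, y_pred, smooth, thresh):
    # Binarize the prediction and cast back to float so it can be multiplied with y_true.
    # Hard thresholding is not differentiable, so this version suits evaluation;
    # for training, skip this line and use the soft predictions.
    y_pred = K.cast(K.greater(y_pred, thresh), K.floatx())
    y_true_f = K.flatten(y_true)
    y_pred_f = K.flatten(y_pred)
    intersection = K.sum(y_true_f * y_pred_f)
    return (2. * intersection + smooth) / (K.sum(y_true_f) + K.sum(y_pred_f) + smooth)

However, a Keras loss function can only take y_true and y_pred as arguments, so we use a function closure, that is, a function that returns a function: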

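def dice_loss(smooth, thresh):
    def dice(y_true, y_pred):
        # smooth and thresh are captured from the enclosing call
        return -dice_coef(y_true, y_pred, smooth, thresh)
    return dice

# build model
model = my_model()
# get the loss function
model_dice = dice_loss(smooth=1e-5, thresh=0.5)
# compile model (any optimizer works here; 'adam' is only a placeholder)
model.compile(optimizer='adam', loss=model_dice)

Concrete examples follow.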
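To see the closure pattern in isolation, here is a minimal sketch that swaps the Keras backend for NumPy so it runs without a model. The names `dice_coef_np` and `make_dice_loss` are hypothetical and not part of Keras. The point is that the returned function has exactly the two-argument signature Keras expects, while `smooth` and `thresh` stay fixed from the outer call.

```python
import numpy as np

def dice_coef_np(y_true, y_pred, smooth, thresh):
    # NumPy stand-in for the Keras version: binarize, flatten, then overlap ratio.
    y_pred_f = (y_pred > thresh).astype(np.float64).ravel()
    y_true_f = y_true.astype(np.float64).ravel()
    intersection = np.sum(y_true_f * y_pred_f)
    return (2.0 * intersection + smooth) / (np.sum(y_true_f) + np.sum(y_pred_f) + smooth)

def make_dice_loss(smooth, thresh):
    # The inner function only takes (y_true, y_pred); smooth and thresh are captured.
    def dice(y_true, y_pred):
        return -dice_coef_np(y_true, y_pred, smooth, thresh)
    return dice

loss_fn = make_dice_loss(smooth=1e-5, thresh=0.5)

y_true = np.array([[1, 1], [0, 0]])
y_pred = np.array([[0.9, 0.2], [0.7, 0.1]])  # binarizes to [[1, 0], [1, 0]]

# One overlapping pixel, two positives on each side: Dice is about 2 / 4.
loss_value = loss_fn(y_true, y_pred)
print(loss_value)  # close to -0.5
```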

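Loss functions for multi-class semantic segmentation:

# multi-class Dice
def dice_coef_fun(smooth=0.001):
    def dice_coef(y_true, y_pred):
        # Dice of every class for every sample. Summing over axes (1, 2, 3) keeps
        # the class axis only for 5D tensors shaped (batch, d1, d2, d3, classes);
        # for 4D input (batch, h, w, classes), axis=(1, 2) would be needed instead.
        intersection = K.sum(y_true * y_pred, axis=(1, 2, 3))
        union = K.sum(y_true, axis=(1, 2, 3)) + K.sum(y_pred, axis=(1, 2, 3))
        sample_dices = (2. * intersection + smooth) / (union + smooth)  # Dice per sample and class
        # Dice of every class, averaged over the batch (1D array, one value per class)
        dices = K.mean(sample_dices, axis=0)
        return K.mean(dices)  # mean Dice over all classes
    return dice_coef

def dice_coef_loss_fun(smooth=0.001):
    def dice_coef_loss(y_true, y_pred):
        return 1 - dice_coef_fun(smooth=smooth)(y_true=y_true, y_pred=y_pred)
    return dice_coef_loss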
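The per-class averaging above can be checked numerically without Keras. The sketch below is a NumPy re-implementation under the assumption that the class axis is last and all axes between batch and class are spatial. The helper name `dice_per_class_np` is hypothetical. It sums over the spatial axes only, so it works for both 4D and 5D one-hot tensors.

```python
import numpy as np

def dice_per_class_np(y_true, y_pred, smooth=0.001):
    # Sum over the spatial axes only, which leaves shape (batch, classes).
    spatial_axes = tuple(range(1, y_true.ndim - 1))
    intersection = np.sum(y_true * y_pred, axis=spatial_axes)
    denom = np.sum(y_true, axis=spatial_axes) + np.sum(y_pred, axis=spatial_axes)
    sample_dices = (2.0 * intersection + smooth) / (denom + smooth)
    # Average over the batch: one Dice value per class.
    return sample_dices.mean(axis=0)

# Toy batch: 2 samples, a 2x2x2 volume, 3 one-hot classes.
labels = np.arange(16).reshape(2, 2, 2, 2) % 3
y_true = np.eye(3)[labels]             # shape (2, 2, 2, 2, 3)
y_wrong = np.roll(y_true, 1, axis=-1)  # every voxel assigned to a different class

perfect = dice_per_class_np(y_true, y_true)
wrong = dice_per_class_np(y_true, y_wrong)

print(perfect.shape)                # (3,)
print(1.0 - perfect.mean())         # loss for a perfect prediction, about 0
print(1.0 - wrong.mean())           # loss when no voxel overlaps, close to 1
```

A perfect prediction gives a Dice of 1 for every class, so the loss `1 - mean` is 0; with no overlap the intersection is 0 and only the `smooth` term keeps the ratio above zero.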

# binary segmentation
# dice loss 1
def dice_coef(y_true, y_pred, smooth):
    # Optional hard threshold, cast to float:
    # y_pred = K.cast(K.greater(y_pred, thresh), dtype='float32')
    y_true_f = y_true  # not flattened; alternative: K.flatten(y_true)
    y_pred_f = y_pred  # not flattened; alternative: K.flatten(y_pred)
    # print("y_true_f", y_true_f.shape)
    # print("y_pred_f", y_pred_f.shape)
    intersection = K.sum(y_true_f * y_pred_f, axis=(0, 1, 2))
    denom = K.sum(y_true_f, axis=(0, 1, 2)) + K.sum(y_pred_f, axis=(0, 1, 2))
    return K.mean((2. * intersection + smooth) / (denom + smooth))

def dice_loss(smooth):
    def dice(y_true, y_pred):
        # print("y_true_f", y_true.shape)
        # print("y_pred_f", y_pred.shape)
        return 1 - dice_coef(y_true, y_pred, smooth)
    return dice

Model training:

from keras.optimizers import Nadam

# multi-class Dice loss
# (newer Keras versions name the lr argument learning_rate)
model_dice = dice_coef_loss_fun(smooth=1e-5)
model.compile(optimizer=Nadam(lr=2e-4), loss=model_dice, metrics=['accuracy'])

# binary Dice loss
model_dice = dice_loss(smooth=1e-5)
# model_dice = generalized_dice_loss_fun(smooth=1e-5)
# model.compile(optimizer=Nadam(lr=2e-4), loss="binary_crossentropy", metrics=['accuracy'])
model.compile(optimizer=Nadam(lr=2e-4), loss=model_dice, metrics=['accuracy'])

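Loading the model:

from keras.models import load_model

# The custom_objects keys are the names the functions had when the model was saved.
model = load_model("unet_membrane_int16.hdf5", custom_objects={'dice_coef_loss': dice_coef_loss_fun(1e-5), 'dice_coef_fun': dice_coef_fun})

model = load_model("vnet_s_extend_epoch110.hdf5", custom_objects={'dice': dice_loss(1e-5), 'dice_coef': dice_coef})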
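The keys 'dice' and 'dice_coef_loss' in the calls above are the names of the inner functions, not of the factories that build them. As far as I understand it, Keras records a plain-function loss by its `__name__` in the saved training configuration, so that is the name `custom_objects` has to provide. The sketch below uses stand-in function bodies (only the names matter here) to show what those names are:

```python
def dice_loss(smooth):
    def dice(y_true, y_pred):
        # Placeholder body; only the function name matters for this illustration.
        return 1.0 - smooth
    return dice

def dice_coef_loss_fun(smooth=0.001):
    def dice_coef_loss(y_true, y_pred):
        # Placeholder body, as above.
        return 1.0 - smooth
    return dice_coef_loss

# The closure keeps the name of the inner def, whatever the factory is called.
loss_names = [dice_loss(1e-5).__name__, dice_coef_loss_fun(1e-5).__name__]
print(loss_names)  # ['dice', 'dice_coef_loss']
```

If a model fails to load with an "unknown loss function" style error, comparing the reported name against these `__name__` values is a reasonable first check.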

The loss functions above are cumbersome to write; a simpler version is:

# metric function and loss function
def dice_coef(y_true, y_pred):
    smooth = 0.0005  # parameter for loss function
    y_true_f = K.flatten(y_true)
    y_pred_f = K.flatten(y_pred)
    intersection = K.sum(y_true_f * y_pred_f)
    return (2. * intersection + smooth) / (K.sum(y_true_f) + K.sum(y_pred_f) + smooth)

def dice_coef_loss(y_true, y_pred):
    return -dice_coef(y_true, y_pred)

# load model
weight_path = './weights.h5'
model = load_model(weight_path, custom_objects={'dice_coef_loss': dice_coef_loss, 'dice_coef': dice_coef})

References:

https://www.jianshu.com/p/78c100d3c4f4

https://blog.csdn.net/u010420283/article/details/90232926

https://blog.csdn.net/xiaoma_xiaoma/article/details/91041620

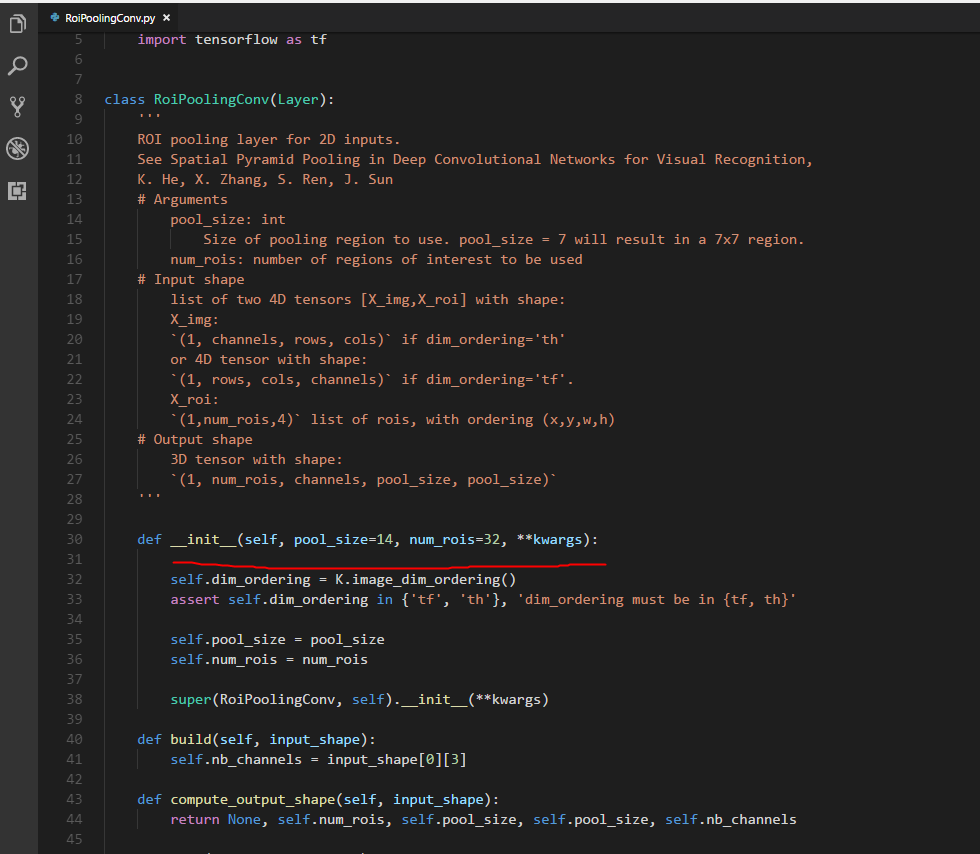

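Also, when converting the Keras version of Faster R-CNN, the code contains a custom intermediate layer, RoiPoolingConv. Make sure the parameters of its __init__ are initialized with concrete values (for example, by giving them defaults); otherwise the model will not load correctly.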

Reference: https://blog.csdn.net/qq_34418352/article/details/90213049

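This post shows how to define a custom Dice loss in Keras for both single-class (binary) and multi-class semantic segmentation, with examples of defining and using the loss and of configuring it correctly when training and loading a model.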
