Kernel: x86, openEuler 4.19

The machine has 3 GB of memory and uses the sparse vmemmap memory model. The relevant config options are:

CONFIG_ARCH_SPARSEMEM_ENABLE=y

CONFIG_ARCH_SPARSEMEM_DEFAULT=y

CONFIG_SPARSEMEM_MANUAL=y

CONFIG_SPARSEMEM=y

CONFIG_SPARSEMEM_EXTREME=y

CONFIG_SPARSEMEM_VMEMMAP_ENABLE=y

CONFIG_SPARSEMEM_VMEMMAP=y

memblock

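Before the buddy system is initialized, memory is allocated through memblock. Adding memblock=debug to the boot parameters makes the kernel log how memory is used by the memblock allocator during this stage.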

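In the VMware virtual machine environment, the physical memory size is passed to the kernel through boot_params and then added to memblock's memory type; memory set aside early through memblock is added to the reserved type.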

paging_init

sparse_memory_present_with_active_regions

Calls memory_present for every memory region that has been added to memblock.memory.

/* Record a memory area against a node. */

void __init memory_present(int nid, unsigned long start, unsigned long end)

{

unsigned long pfn;

#ifdef CONFIG_SPARSEMEM_EXTREME

if (unlikely(!mem_section)) {

unsigned long size, align;

size = sizeof(struct mem_section*) * NR_SECTION_ROOTS; // 16 KB

align = 1 << (INTERNODE_CACHE_SHIFT);

mem_section = memblock_virt_alloc(size, align); /*通过

* memblcok申请16kb内存,sparse内存模型下,每128M作为一个section,用

* mem_section结构体表示,所有的mem_section结构体每256(SECTION_PER_ROOT)

* 个分为一个组,首地址存入到全局数组mem_section。每个组可以表示的物理地址大小

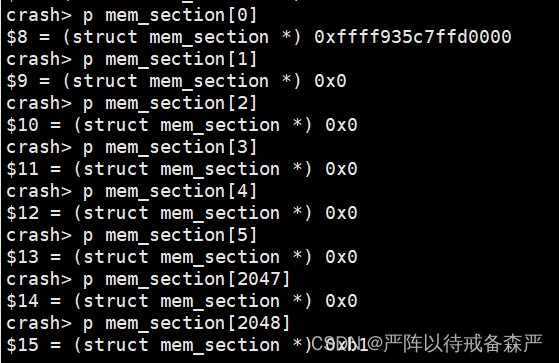

* 为256*128M=64G,在本次实验环境中只有3GB内存,所以一个组就够了,通过crash工具

* 查看mem_section变量的值也可以看到除了mem_section[0]之外全都指向0x0

* mem_section结构体的成员section_mem_map指向一个page

* 数组,这样通过全局变量mem_section就可以访问到所有的page*/

}

#endif

start &= PAGE_SECTION_MASK;

mminit_validate_memmodel_limits(&start, &end);

for (pfn = start; pfn < end; pfn += PAGES_PER_SECTION) { /* one mem_section per 2^15 pages */

unsigned long section = pfn_to_section_nr(pfn);

struct mem_section *ms;

sparse_index_init(section, nid); // allocate the mem_section group if it does not exist yet (256 mem_section structs form one group, 4 KB in size)

set_section_nid(section, nid);

ms = __nr_to_section(section); // use section as the index to pick one mem_section out of the mem_section[0] array; in this example the 3 GB physical address space is 0-0xbfffffff, so mem_section[0][0] through mem_section[0][23] (3 GB / 128 MB = 24) are all filled in

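if (!ms->section_mem_map) { // section_mem_map later holds the encoded address of this section's struct page array; that address is aligned, so its low bits are free to be used as flag bits. At this early stage it only stores the node id plus flags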

ms->section_mem_map = sparse_encode_early_nid(nid) |

SECTION_IS_ONLINE;

section_mark_present(ms);

}

}

}

#define NR_SECTION_ROOTS DIV_ROUND_UP(NR_MEM_SECTIONS, SECTIONS_PER_ROOT)

#define NR_MEM_SECTIONS (1UL << SECTIONS_SHIFT)

#define SECTIONS_SHIFT (MAX_PHYSMEM_BITS - SECTION_SIZE_BITS)

# define SECTION_SIZE_BITS 27 /* matt - 128 is convenient right now */

# define MAX_PHYSADDR_BITS (pgtable_l5_enabled() ? 52 : 44)

# define MAX_PHYSMEM_BITS (pgtable_l5_enabled() ? 52 : 46) //46

#define SECTIONS_PER_ROOT (PAGE_SIZE / sizeof (struct mem_section))

Inspecting the mem_section variable with crash: index 2048 is already out of bounds.

sparse_init

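Allocates the page arrays for all mem_section structs, node by node, and assigns their section_mem_map members. This example has only one memory node (every phone and personal PC the author has worked with had a single memory node).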

/*

* Allocate the accumulated non-linear sections, allocate a mem_map

* for each and record the physical to section mapping.

*/

void __init sparse_init(void)

{

unsigned long pnum_begin = first_present_section_nr();

int nid_begin = sparse_early_nid(__nr_to_section(pnum_begin));

unsigned long pnum_end, map_count = 1;

/* Setup pageblock_order for HUGETLB_PAGE_SIZE_VARIABLE */

set_pageblock_order();

for_each_present_section_nr(pnum_begin + 1, pnum_end) {

int nid = sparse_early_nid(__nr_to_section(pnum_end));

if (nid == nid_begin) {

map_count++;

continue;

}

/* Init node with sections in range [pnum_begin, pnum_end) */

sparse_init_nid(nid_begin, pnum_begin, pnum_end, map_count);

nid_begin = nid;

pnum_begin = pnum_end;

map_count = 1;

}

/* cover the last node */

sparse_init_nid(nid_begin, pnum_begin, pnum_end, map_count); // node 0; map_count is the number of present mem_sections, 24 in this example

vmemmap_populate_print_last();

}

sparse_init_nid

/*

* Initialize sparse on a specific node. The node spans [pnum_begin, pnum_end)

* And number of present sections in this node is map_count.

*/

static void __init sparse_init_nid(int nid, unsigned long pnum_begin,

unsigned long pnum_end,

unsigned long map_count)

{

unsigned long pnum, usemap_longs, *usemap;

struct page *map;

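// The pageblock_flags member of mem_section points to a bitmap that holds the per-pageblock flags (such as the migrate type) for the pages in this mem_section.

// This computes the size of that bitmap in longs; here it is 4.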

usemap_longs = BITS_TO_LONGS(SECTION_BLOCKFLAGS_BITS);

// allocate 24 * 4 * 8 = 768 bytes

usemap = sparse_early_usemaps_alloc_pgdat_section(NODE_DATA(nid),

usemap_size() *

map_count);

if (!usemap) {

pr_err("%s: node[%d] usemap allocation failed", __func__, nid);

goto failed;

}

// Allocate sizeof(struct page) * PAGES_PER_SECTION * map_count bytes, aligned to PAGE_SIZE (4 KB);

// here that is 64 * 32K * 24 = 50331648 bytes

sparse_buffer_init(map_count * section_map_size(), nid);

for_each_present_section_nr(pnum_begin, pnum) {

if (pnum >= pnum_end)

break;

map = sparse_mem_map_populate(pnum, nid, NULL);

if (!map) {

pr_err("%s: node[%d] memory map backing failed. Some memory will not be available.",

__func__, nid);

pnum_begin = pnum;

goto failed;

}

check_usemap_section_nr(nid, usemap);

sparse_init_one_section(__nr_to_section(pnum), pnum, map, usemap);

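/* Set the section_mem_map member of this mem_section. The section's starting offset is subtracted from the address of map here,

* so every mem_section ends up with the same section_mem_map value. The last hex digit of section_mem_map is 7, meaning the following flags are set for this mem_section: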

* #define SECTION_MARKED_PRESENT (1UL<<0)

* #define SECTION_HAS_MEM_MAP (1UL<<1)

* #define SECTION_IS_ONLINE (1UL<<2)

*/

usemap += usemap_longs;

}

sparse_buffer_fini(); // release the unused part of the buffer

return;

failed:

/* We failed to allocate, mark all the following pnums as not present */

for_each_present_section_nr(pnum_begin, pnum) {

struct mem_section *ms;

if (pnum >= pnum_end)

break;

ms = __nr_to_section(pnum);

ms->section_mem_map = 0;

}

}

sparse_mem_map_populate

struct page * __meminit sparse_mem_map_populate(unsigned long pnum, int nid,

struct vmem_altmap *altmap)

{

unsigned long start;

unsigned long end;

struct page *map;

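map = pfn_to_page(pnum * PAGES_PER_SECTION); // convert the page frame number to a page pointer. The sparse vmemmap model uses the virtual address vmemmap_base as

// the start of the page array, which saves memory accesses when converting between page and pfn (plain sparse has to locate the mem_section group first and then the page array). vmemmap_base

// starts out as ffffea0000000000 and is then changed to a random value in setup_arch-->kernel_randomize_memory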

start = (unsigned long)map;

end = (unsigned long)(map + PAGES_PER_SECTION); // each section describes 128 MB of memory, i.e. 32K pages

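if (vmemmap_populate(start, end, nid, altmap)) // back the struct pages between start and end with memory; page-table levels (p4d, pud) that are not yet allocated for these addresses are allocated as well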

return NULL;

return map;

}

vmemmap_populate-->vmemmap_populate_hugepages

static int __meminit vmemmap_populate_hugepages(unsigned long start,

unsigned long end, int node, struct vmem_altmap *altmap)

{

unsigned long addr;

unsigned long next;

pgd_t *pgd;

p4d_t *p4d;

pud_t *pud;

pmd_t *pmd;

for (addr = start; addr < end; addr = next) {

next = pmd_addr_end(addr, end);

pgd = vmemmap_pgd_populate(addr, node); // use init_mm's pgd as the page-table base

if (!pgd)

return -ENOMEM;

p4d = vmemmap_p4d_populate(pgd, addr, node); // allocate 4 KB for the p4d table if it is missing

if (!p4d)

return -ENOMEM;

pud = vmemmap_pud_populate(p4d, addr, node); // allocate 4 KB if the pud entry is empty; each pud entry covers 512 pmd entries

if (!pud)

return -ENOMEM;

pmd = pmd_offset(pud, addr);

if (pmd_none(*pmd)) {

void *p;

if (altmap)

p = altmap_alloc_block_buf(PMD_SIZE, altmap);

else

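p = vmemmap_alloc_block_buf(PMD_SIZE, node); // allocate 2 MB and map it directly from the pmd entry as a huge page (no pte table is used).

// The 2 MB holds 32K struct pages, exactly what 128 MB of physical memory needs. The allocation just advances the sparsemap_buf pointer,

// because sparse_buffer_init already reserved space for all the page arrays, and sparsemap_buf points to the start of that space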

if (p) {

pte_t entry;

entry = pfn_pte(__pa(p) >> PAGE_SHIFT,

PAGE_KERNEL_LARGE);

set_pmd(pmd, __pmd(pte_val(entry)));

/* check to see if we have contiguous blocks */

if (p_end != p || node_start != node) {

if (p_start)

pr_debug(" [%lx-%lx] PMD -> [%p-%p] on node %d\n",

addr_start, addr_end-1, p_start, p_end-1, node_start);

addr_start = addr;

node_start = node;

p_start = p;

}

addr_end = addr + PMD_SIZE;

p_end = p + PMD_SIZE;

continue;

} else if (altmap)

return -ENOMEM; /* no fallback */

} else if (pmd_large(*pmd)) {

vmemmap_verify((pte_t *)pmd, node, addr, next);

continue;

}

if (vmemmap_populate_basepages(addr, next, node))

return -ENOMEM;

}

return 0;

}

zone_sizes_init

Both max_pfn and max_low_pfn are 786432.

void __init zone_sizes_init(void)

{

unsigned long max_zone_pfns[MAX_NR_ZONES];

memset(max_zone_pfns, 0, sizeof(max_zone_pfns));

#ifdef CONFIG_ZONE_DMA

max_zone_pfns[ZONE_DMA] = min(MAX_DMA_PFN, max_low_pfn); //16M

#endif

#ifdef CONFIG_ZONE_DMA32

max_zone_pfns[ZONE_DMA32] = min(MAX_DMA32_PFN, max_low_pfn); //3G

#endif

max_zone_pfns[ZONE_NORMAL] = max_low_pfn;

#ifdef CONFIG_HIGHMEM

max_zone_pfns[ZONE_HIGHMEM] = max_pfn;

#endif

free_area_init_nodes(max_zone_pfns);

}

free_area_init_nodes computes the range of each zone and prints it. In this example the output is:

Zone ranges:

DMA [mem 0x0000000000001000-0x0000000000ffffff]

DMA32 [mem 0x0000000001000000-0x00000000bfffffff]

Normal empty

Device empty

free_area_init_node

void __init free_area_init_node(int nid, unsigned long *zones_size,

unsigned long node_start_pfn,

unsigned long *zholes_size)

{

pg_data_t *pgdat = NODE_DATA(nid);

unsigned long start_pfn = 0;

unsigned long end_pfn = 0;

/* pg_data_t should be reset to zero when it's allocated */

WARN_ON(pgdat->nr_zones || pgdat->kswapd_classzone_idx);

pgdat->node_id = nid;

pgdat->node_start_pfn = node_start_pfn;

pgdat->per_cpu_nodestats = NULL;

#ifdef CONFIG_HAVE_MEMBLOCK_NODE_MAP

get_pfn_range_for_nid(nid, &start_pfn, &end_pfn); // get the first and last page frame numbers of this node

pr_info("Initmem setup node %d [mem %#018Lx-%#018Lx]\n", nid,

(u64)start_pfn << PAGE_SHIFT,

end_pfn ? ((u64)end_pfn << PAGE_SHIFT) - 1 : 0);

/*Initmem setup node 0 [mem 0x0000000000001000-0x00000000bfffffff]*/

#else

start_pfn = node_start_pfn;

#endif

// compute the page counts of the node and of its zones

calculate_node_totalpages(pgdat, start_pfn, end_pfn,

zones_size, zholes_size);

alloc_node_mem_map(pgdat); // only allocates under the flat memory model

pgdat_set_deferred_range(pgdat);

free_area_init_core(pgdat);

}

free_area_init_core

/*

* Set up the zone data structures:

* - mark all pages reserved

* - mark all memory queues empty

* - clear the memory bitmaps

*

* NOTE: pgdat should get zeroed by caller.

* NOTE: this function is only called during early init.

*/

static void __init free_area_init_core(struct pglist_data *pgdat)

{

enum zone_type j;

int nid = pgdat->node_id;

pgdat_init_internals(pgdat); // initialize node members such as the lru lists

pgdat->per_cpu_nodestats = &boot_nodestats;

for (j = 0; j < MAX_NR_ZONES; j++) {

struct zone *zone = pgdat->node_zones + j;

unsigned long size, freesize, memmap_pages;

unsigned long zone_start_pfn = zone->zone_start_pfn;

size = zone->spanned_pages;

freesize = zone->present_pages;

/*

* Adjust freesize so that it accounts for how much memory

* is used by this zone for memmap. This affects the watermark

* and per-cpu initialisations

*/

memmap_pages = calc_memmap_size(size, freesize); // pages occupied by the struct page array

if (!is_highmem_idx(j)) {

if (freesize >= memmap_pages) {

freesize -= memmap_pages;

if (memmap_pages)

printk(KERN_DEBUG

" %s zone: %lu pages used for memmap\n",

zone_names[j], memmap_pages);

} else

pr_warn(" %s zone: %lu pages exceeds freesize %lu\n",

zone_names[j], memmap_pages, freesize);

}

/* Account for reserved pages */

if (j == 0 && freesize > dma_reserve) {

freesize -= dma_reserve;

printk(KERN_DEBUG " %s zone: %lu pages reserved\n",

zone_names[0], dma_reserve);

}

if (!is_highmem_idx(j))

nr_kernel_pages += freesize;

/* Charge for highmem memmap if there are enough kernel pages */

else if (nr_kernel_pages > memmap_pages * 2)

nr_kernel_pages -= memmap_pages;

nr_all_pages += freesize;

/*

* Set an approximate value for lowmem here, it will be adjusted

* when the bootmem allocator frees pages into the buddy system.

* And all highmem pages will be managed by the buddy system.

*/

zone_init_internals(zone, j, nid, freesize);

if (!size)

continue;

set_pageblock_order(); // does nothing in this configuration

setup_usemap(pgdat, zone, zone_start_pfn, size); // does nothing in this configuration

init_currently_empty_zone(zone, zone_start_pfn, size); // initialize the zone's free_area lists

memmap_init(size, nid, j, zone_start_pfn);

}

}

memmap_init-->memmap_init_zone-->__init_single_page(page, pfn, zone, nid)

static void __meminit __init_single_page(struct page *page, unsigned long pfn,

unsigned long zone, int nid)

{

mm_zero_struct_page(page);

set_page_links(page, zone, nid, pfn); // set page->flags to record the zone and node the page belongs to

init_page_count(page); // set page->_refcount to 1

page_mapcount_reset(page); // set page->_mapcount to -1

page_cpupid_reset_last(page);

INIT_LIST_HEAD(&page->lru);

#ifdef WANT_PAGE_VIRTUAL

/* The shift won't overflow because ZONE_NORMAL is below 4G. */

if (!is_highmem_idx(zone))

set_page_address(page, __va(pfn << PAGE_SHIFT));

#endif

}

build_all_zonelists

Adds the zones, from highest to lowest, to the zonelist of the memory node's pglist_data.

mm_init-->mem_init

void __init mem_init(void)

{

pci_iommu_alloc();

/* clear_bss() already clear the empty_zero_page */

/* this will put all memory onto the freelists */

free_all_bootmem();

after_bootmem = 1;

x86_init.hyper.init_after_bootmem();

/*

* Must be done after boot memory is put on freelist, because here we

* might set fields in deferred struct pages that have not yet been

* initialized, and free_all_bootmem() initializes all the reserved

* deferred pages for us.

*/

register_page_bootmem_info();

/* Register memory areas for /proc/kcore */

if (get_gate_vma(&init_mm))

kclist_add(&kcore_vsyscall, (void *)VSYSCALL_ADDR, PAGE_SIZE, KCORE_USER);

mem_init_print_info(NULL); // prints a log line like the following

/*Memory: 210408K/3145204K available (12300K kernel code, 2546K rwd....*/

}

free_all_bootmem

unsigned long __init free_all_bootmem(void)

{

unsigned long pages;

reset_all_zones_managed_pages(); // set zone->managed_pages to 0

pages = free_low_memory_core_early(); // set page->_refcount to 0, then call __free_pages to release the pages to the buddy system

totalram_pages += pages;

return pages;

}

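This article describes how the sparse vmemmap memory model is configured and initialized in the x86 openEuler 4.19 kernel, covering the initialization and memory allocation of key components such as memblock, mem_section, and sparse_init.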
