Dataset download:

https://ubicomp.eti.uni-siegen.de/home/datasets/icmi18/

Code for the dataset:

https://github.com/WJMatthew/WESAD

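Dataset description

1. Experimental setup

Participants: 15

Procedure:

Each of the 15 participants wore a chest sensor and a wrist sensor for a session of roughly 90 minutes. The session has five main phases: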

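1. Baseline condition: the first 20 minutes after the sensors are attached, spent sitting quietly and reading.

2. Amusement condition: the participant watches funny video clips.

3. Meditation condition 1: a calm-down phase.

4. Stress condition: the participant goes through the TSST (Trier Social Stress Test), a scenario that reliably induces stress.

5. Meditation condition 2: a calm-down phase.

PS: The procedure may differ slightly between participants. The actual order of the phases for each participant is recorded in SX_quest.csv in that participant's directory, where X is the participant ID.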
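Reading the phase order out of SX_quest.csv can be sketched as follows. The file layout is an assumption here, not something stated in this post: the sketch expects semicolon-separated rows beginning with `# ORDER`, `# START` and `# END`, and the condition names and times in the usage example are made-up placeholders. `read_protocol` is a hypothetical helper name. Check one of the real files before relying on it.

```python
import csv


def read_protocol(lines):
    """Return {condition: (start, end)} from the '# ORDER', '# START', '# END' rows.

    `lines` is any iterable of text lines, e.g. an open file object.
    Start and end values are kept as strings because this sketch makes
    no assumption about their unit or format.
    """
    rows = {}
    for fields in csv.reader(lines, delimiter=";"):
        if fields and fields[0].startswith("#"):
            # "# ORDER" -> "ORDER"; remaining fields are the row's values
            key = fields[0].lstrip("# ").upper()
            rows[key] = [value.strip() for value in fields[1:]]
    order = rows.get("ORDER", [])
    starts = rows.get("START", [])
    ends = rows.get("END", [])
    # Trailing empty fields (padding) are skipped via the `if name` filter
    return {name: (start, end)
            for name, start, end in zip(order, starts, ends) if name}


# Usage with a real file (path is hypothetical):
# with open("WESAD/S2/S2_quest.csv", newline="") as f:
#     for condition, (start, end) in read_protocol(f).items():
#         print(condition, start, end)
```

Keeping the result as an ordered dictionary preserves the per-participant phase order, which is the piece of information the file is consulted for.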

To verify that the protocol worked as intended, each participant filled in questionnaires after each phase:

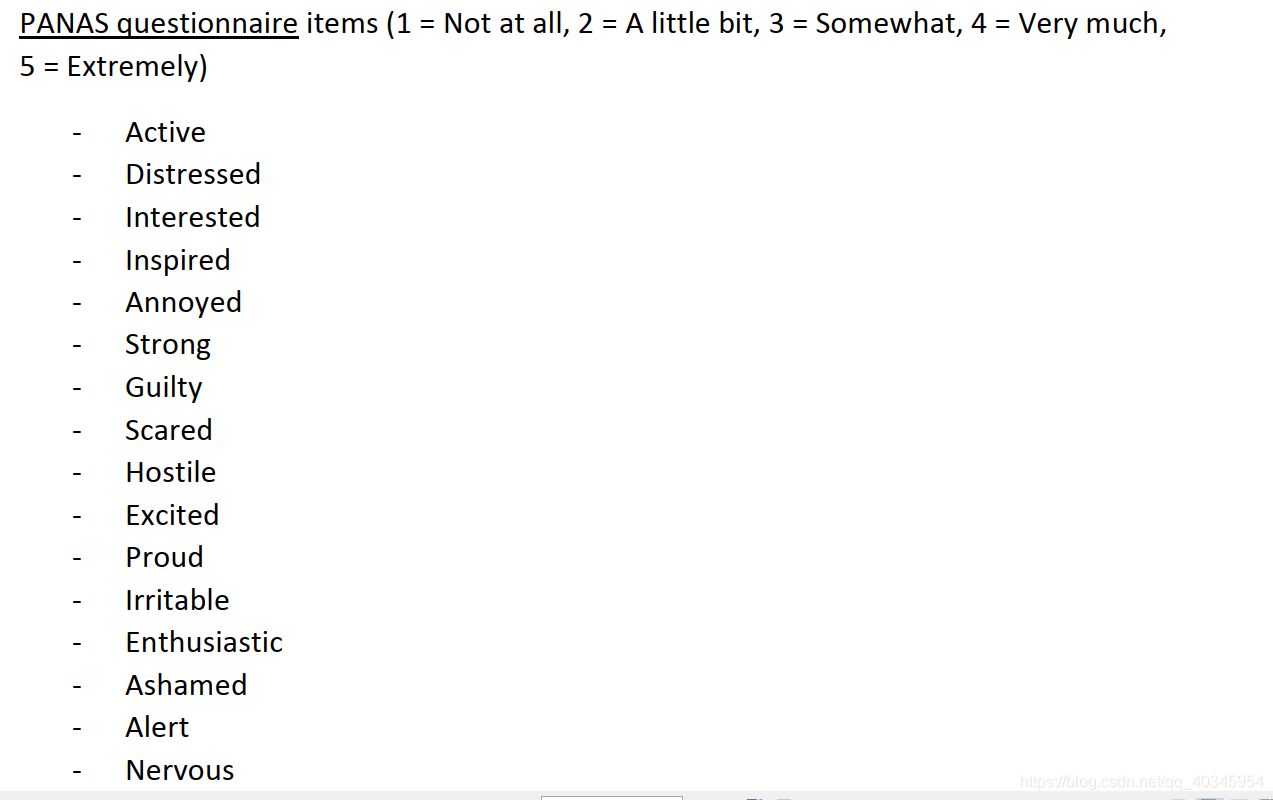

1. Positive and Negative Affect Schedule (PANAS)

PANAS reliably assesses positive affect (PA) and negative affect (NA); it provides the pleasure value of the two-dimensional emotion model.

2. State-Trait Anxiety Inventory (STAI)

Gives insight into the participants' anxiety level.

3. Self-Assessment Manikins (SAM)

Generates labels in the valence-arousal space; it provides the arousal value of the two-dimensional emotion model.

4. Short Stress State Questionnaire (SSSQ)

Filled in after the TSST phase; the SSSQ identifies the type of stress (worry, engagement, or distress).

For the exact wording of each questionnaire, see section III.2 of the dataset's readme.pdf.

2. Data files

# Using S2 as an example

- S2/

- - S2_E4_Data.zip raw data from each of the subject's sensors

- - - - ACC.csv

- - - - BVP.csv

- - - - EDA.csv

- - - - HR.csv

- - - - IBI.csv

- - - - info.txt

- - - - tags.csv

- - - - TEMP.csv

- - S2.pkl

- - S2_quest.csv experiment phases and the subject's questionnaire data

- - S2_readme.txt

- - S2_respiban.txt

S2.pkl holds the preprocessed data. Loading it with Python's pickle module yields a dictionary. If loading raises an error, see [fixing the pickle error](https://blog.csdn.net/u012813109/article/details/106966338)
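A minimal loading sketch is given here. It assumes the error comes from unpickling a Python 2 pickle under Python 3, for which passing `encoding="latin1"` to `pickle.load` is the usual workaround; whether that is the fix the linked post describes is not confirmed. `load_subject` and `summarize` are hypothetical helper names, and the path in the usage comment is a placeholder.

```python
import pickle


def load_subject(pkl_path):
    """Unpickle one subject file and return the resulting dictionary."""
    with open(pkl_path, "rb") as f:
        # encoding="latin1" lets Python 3 read byte strings and NumPy
        # arrays that were pickled under Python 2 (assumed cause of the error)
        return pickle.load(f, encoding="latin1")


def summarize(node, prefix=""):
    """Yield (key path, shape) for every leaf of a nested dictionary."""
    if isinstance(node, dict):
        for key, value in node.items():
            path = f"{prefix}/{key}" if prefix else str(key)
            yield from summarize(value, path)
    else:
        # NumPy arrays expose .shape; other sized leaves fall back to len()
        shape = getattr(node, "shape", None)
        if shape is None and hasattr(node, "__len__") and not isinstance(node, str):
            shape = (len(node),)
        yield prefix, shape


# Usage (adjust the path to where the dataset is unpacked):
# data = load_subject("WESAD/S2/S2.pkl")
# for path, shape in summarize(data):
#     print(path, shape)
```

Walking the dictionary this way is a quick check that the keys and array sizes in a given subject file match what you expect before writing any processing code.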

- dict_data

- - subject: "S2"

- - label: labels, shape=(4255300,), 700 Hz

- - signal: sensor data

- - - - chest

- - - - - - ACC: acceleration (3 axes), shape=(4255300, 3), 700 Hz

- - - - - - ECG: electrocardiogram, shape=(4255300, 1), 700 Hz

- - - - - - EMG: electromyogram, shape=(4255300, 1), 700 Hz

- - - - - - EDA: electrodermal activity, shape=(4255300, 1), 700 Hz

- - - - - - TEMP: body temperature, shape=(4255300, 1), 700 Hz

- - - - - - RESP: respiration, shape=(4255300, 1), 700 Hz

- - - - wrist

- - - - - - ACC: acceleration (3 axes), shape=(194528, 3), 32 Hz

- - - - - - BVP: blood volume pulse, shape=(389056, 1), 64 Hz

- - - - - - EDA: electrodermal activity, shape=(24316, 1), 4 Hz

- - - - - - TEMP: body temperature, shape=(24316, 1), 4 Hz
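Because the streams are sampled at different rates, a useful sanity check is that they all cover the same time span. The short sketch below does that arithmetic using only the sample counts and rates listed above for S2; `S2_STREAMS` and `duration_seconds` are illustrative names.

```python
# (number of samples, sampling rate in Hz), copied from the S2 listing
S2_STREAMS = {
    "label": (4255300, 700),
    "chest": (4255300, 700),
    "wrist/ACC": (194528, 32),
    "wrist/BVP": (389056, 64),
    "wrist/EDA": (24316, 4),
    "wrist/TEMP": (24316, 4),
}


def duration_seconds(n_samples, fs_hz):
    """Length of a recording in seconds, given sample count and rate."""
    return n_samples / fs_hz


durations = {
    name: duration_seconds(n, fs) for name, (n, fs) in S2_STREAMS.items()
}

for name, seconds in durations.items():
    print(f"{name:10s} {seconds:8.1f} s  ({seconds / 60:.1f} min)")
```

Every stream comes out at 6079.0 s (about 101.3 min), so the chest signals, wrist signals and labels of S2 span the same interval and can be aligned by time.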

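3. Labels

You can use the condition labels 1, 2, 3 and 4 stored in the label array (per the dataset readme: 1 = baseline, 2 = stress, 3 = amusement, 4 = meditation; 0 marks undefined or transient samples, and 5, 6 and 7 should be ignored).

Alternatively, you can use labels derived from the participants' questionnaire answers.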
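Using the condition labels with a wrist signal takes two steps, sketched here: bring the 700 Hz labels down to the signal's rate, then keep only samples labelled 1 to 4. This is one simple way to do it (picking the label at the matching time index), not necessarily what the dataset authors did; `labels_at_rate` and `keep_valid` are hypothetical helpers. The code uses plain indexing, so it works on Python lists as well as on the NumPy arrays in the pickle.

```python
LABEL_FS = 700               # Hz, sampling rate of the label array
VALID_LABELS = {1, 2, 3, 4}  # condition labels suggested above


def labels_at_rate(labels, target_fs, label_fs=LABEL_FS):
    """For each sample of a lower-rate signal, pick the label at the same time."""
    n_target = len(labels) * target_fs // label_fs
    # Sample i of the target signal occurs at time i / target_fs,
    # which corresponds to label index i * label_fs // target_fs
    return [labels[i * label_fs // target_fs] for i in range(n_target)]


def keep_valid(samples, labels, valid=VALID_LABELS):
    """Return (sample, label) pairs whose label is one of the valid conditions."""
    return [(s, l) for s, l in zip(samples, labels) if l in valid]


# Usage with the loaded dictionary (key names as in the listing above):
# eda = data["signal"]["wrist"]["EDA"]
# eda_labels = labels_at_rate(data["label"], target_fs=4)
# pairs = keep_valid(eda, eda_labels)
```

For the 4 Hz streams the index step is exactly 175 label samples; for 32 Hz and 64 Hz the ratio is not an integer, which is why the index is computed per sample instead of with a fixed stride.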

Reference

Philip Schmidt, Attila Reiss, Robert Duerichen, Claus Marberger and Kristof Van Laerhoven. 2018. Introducing WESAD, a multimodal dataset for Wearable Stress and Affect Detection. In 2018 International Conference on Multimodal Interaction (ICMI ’18), October 16–20, 2018, Boulder, CO, USA. ACM, New York, NY, USA, 9 pages. https://doi.org/10.1145/3242969.3242985

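The WESAD dataset contains physiological signals recorded from 15 participants in different psychological states, and is intended for research on stress and affect detection with wearable devices. Multiple physiological signals were collected with chest and wrist sensors, complemented by questionnaires assessing the participants' emotional state.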
