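What are serialization and deserialization:

Serialization is the process of converting a structured object into a byte stream.

Deserialization is the inverse of serialization: it converts a byte stream back into a structured object.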
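
The round trip described above can be sketched with the JDK's own data streams, without any Hadoop dependency. The class name RoundTripDemo and the sample values are hypothetical, chosen only for illustration; the field layout (a string followed by an int) mirrors the bean used later in this post.

```java
import java.io.ByteArrayInputStream;
import java.io.ByteArrayOutputStream;
import java.io.DataInputStream;
import java.io.DataOutputStream;
import java.io.IOException;
import java.io.UncheckedIOException;

// Hypothetical demo class: shows object fields -> bytes -> object fields.
final class RoundTripDemo {

    // Serialization: write the fields into an in-memory byte stream.
    static byte[] serialize(String word, int num) {
        ByteArrayOutputStream buffer = new ByteArrayOutputStream();
        try (DataOutputStream out = new DataOutputStream(buffer)) {
            out.writeUTF(word); // 2-byte length prefix + encoded characters
            out.writeInt(num);  // 4 bytes
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
        return buffer.toByteArray();
    }

    // Deserialization: read the fields back in the same order they were written.
    static String deserialize(byte[] bytes) {
        try (DataInputStream in = new DataInputStream(new ByteArrayInputStream(bytes))) {
            String word = in.readUTF();
            int num = in.readInt();
            return word + "\t" + num;
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
    }

    public static void main(String[] args) {
        byte[] bytes = serialize("hadoop", 3);
        System.out.println(bytes.length + " bytes, restored as: " + deserialize(bytes));
    }
}
```

The key point is that the reader must consume fields in exactly the order the writer produced them, because the byte stream carries no field names.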

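Serialization in MapReduce:

To make a type serializable in MapReduce, implement Hadoop's Writable interface, shown in the image below.

Its first method, write(DataOutput), performs serialization.

Its second method, readFields(DataInput), performs deserialization.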

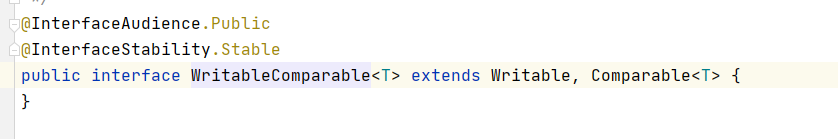

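Sorting in MapReduce:

The WritableComparable interface combines sorting and serialization. MapReduce sorts the map output keys (K2) during the shuffle, so if a type needs to be sorted as well as serialized, implement this interface.

Requirement:

Code implementation:

Directory structure:

Wrapper class: implements WritableComparable to support both sorting and serialization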

package sortserializedemo;

import org.apache.hadoop.io.WritableComparable;

import java.io.DataInput;

import java.io.DataOutput;

import java.io.IOException;

public class SortBean implements WritableComparable<SortBean> {

private String word;

private int num;

public SortBean() {

}

public SortBean(String word, int num) {

this.word = word;

this.num = num;

}

public String getWord() {

return word;

}

public void setWord(String word) {

this.word = word;

}

public int getNum() {

return num;

}

public void setNum(int num) {

this.num = num;

}

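// Sorting: defines the order of the keys

@Override

public int compareTo(SortBean o) {

// Compare the words in lexicographic order first

int res = this.word.compareTo(o.word);

// If the words are equal, compare num

if (res == 0) {

// Integer.compare avoids the overflow that this.num - o.num can cause

return Integer.compare(this.num, o.num);

}

return res;

}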

@Override

public String toString() {

return "SortBean{" +

"word='" + word + '\'' +

", num=" + num +

'}';

}

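// Serialization: write the fields to the output stream

@Override

public void write(DataOutput out) throws IOException {

out.writeUTF(word);

out.writeInt(num);

}

// Deserialization: read the fields back in the same order they were written

@Override

public void readFields(DataInput in) throws IOException {

this.word = in.readUTF();

this.num = in.readInt();

}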

}
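
The ordering rule used by the bean (word first, then num) can be checked locally before running a job. The following is a minimal sketch using a plain Comparable stand-in; WordCount and sorted are hypothetical names, and the class deliberately omits the Hadoop interfaces so that it runs with the JDK alone.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.Collections;
import java.util.List;

// Hypothetical stand-in for the bean's ordering logic, with no Hadoop types.
final class WordCount implements Comparable<WordCount> {
    final String word;
    final int num;

    WordCount(String word, int num) {
        this.word = word;
        this.num = num;
    }

    @Override
    public int compareTo(WordCount o) {
        // Primary key: lexicographic order of the word.
        int res = word.compareTo(o.word);
        // Secondary key: num, compared without subtraction so extreme values cannot overflow.
        return res != 0 ? res : Integer.compare(num, o.num);
    }

    @Override
    public String toString() {
        return word + "\t" + num;
    }

    // Returns a sorted copy and leaves the input list untouched.
    static List<WordCount> sorted(List<WordCount> input) {
        List<WordCount> copy = new ArrayList<>(input);
        Collections.sort(copy);
        return copy;
    }

    public static void main(String[] args) {
        List<WordCount> data = Arrays.asList(
                new WordCount("b", 1), new WordCount("a", 9), new WordCount("a", 2));
        for (WordCount wc : sorted(data)) {
            System.out.println(wc);
        }
    }
}
```

Comparing with Integer.compare rather than subtracting matters for values near the int limits: Integer.MIN_VALUE - 1 wraps around to a positive number, which would reverse the order.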

Mapper:

package sortserializedemo;

import org.apache.hadoop.io.LongWritable;

import org.apache.hadoop.io.NullWritable;

import org.apache.hadoop.io.Text;

import org.apache.hadoop.mapreduce.Mapper;

import java.io.IOException;

public class MyMapper extends Mapper<LongWritable, Text, SortBean, NullWritable> {

@Override

protected void map(LongWritable key, Text value, Context context) throws IOException, InterruptedException {

// Split the line on tabs to get the fields for K2

String[] split = value.toString().split("\t");

// Wrap the fields in a SortBean

SortBean sortBean = new SortBean(split[0], Integer.parseInt(split[1]));

// K2 is the SortBean and V2 is empty; later stages operate on K2

context.write(sortBean, NullWritable.get());

}

}
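
The mapper assumes every input line has exactly two tab-separated fields and that the second one is an integer. A more defensive parsing step might look like the sketch below. LineParser and parse are hypothetical names, and silently skipping malformed lines is an assumption made here for illustration, not something the original job does.

```java
import java.util.AbstractMap.SimpleEntry;
import java.util.Map;
import java.util.Optional;

// Hypothetical helper: parses "word<TAB>num" and reports malformed lines as empty.
final class LineParser {

    static Optional<Map.Entry<String, Integer>> parse(String line) {
        String[] fields = line.split("\t");
        // Expect exactly two fields: the word and its number.
        if (fields.length != 2) {
            return Optional.empty();
        }
        try {
            int num = Integer.parseInt(fields[1].trim());
            return Optional.of(new SimpleEntry<>(fields[0], num));
        } catch (NumberFormatException e) {
            // The second field is not an integer.
            return Optional.empty();
        }
    }

    public static void main(String[] args) {
        System.out.println(parse("hello\t3").isPresent());   // well-formed line
        System.out.println(parse("hello").isPresent());      // missing field
        System.out.println(parse("hello\tabc").isPresent()); // non-numeric field
    }
}
```

Inside a real mapper, an empty result could be counted or logged instead of letting an exception fail the whole task.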

Reducer:

package sortserializedemo;

import org.apache.hadoop.io.NullWritable;

import org.apache.hadoop.mapreduce.Reducer;

import java.io.IOException;

public class MyReducer extends Reducer<SortBean, NullWritable,SortBean,NullWritable> {

@Override

protected void reduce(SortBean key, Iterable<NullWritable> values, Context context) throws IOException, InterruptedException {

context.write(key, NullWritable.get());

}

}

Main:

package sortserializedemo;

import org.apache.hadoop.conf.Configuration;

import org.apache.hadoop.fs.Path;

import org.apache.hadoop.io.NullWritable;

import org.apache.hadoop.mapreduce.Job;

import org.apache.hadoop.mapreduce.lib.input.TextInputFormat;

import org.apache.hadoop.mapreduce.lib.output.TextOutputFormat;

public class ReduceMain {

public static void main(String[] args) throws Exception {

// Create the job

Configuration configuration = new Configuration();

Job job = Job.getInstance(configuration, "my-partition");

// Specify the jar that contains the job

job.setJarByClass(ReduceMain.class);

// Specify the input format and the input path

job.setInputFormatClass(TextInputFormat.class);

TextInputFormat.addInputPath(job, new Path("hdfs://192.168.40.150:9000/test.txt"));

// Specify the mapper and its output types (K2 = SortBean, V2 = NullWritable)

job.setMapperClass(MyMapper.class);

job.setMapOutputKeyClass(SortBean.class);

job.setMapOutputValueClass(NullWritable.class);

// Specify the reducer and its output types (K3 = SortBean, V3 = NullWritable)

job.setReducerClass(MyReducer.class);

job.setOutputKeyClass(SortBean.class);

job.setOutputValueClass(NullWritable.class);

// Specify the output format and the output path; the output directory must not exist yet

job.setOutputFormatClass(TextOutputFormat.class);

TextOutputFormat.setOutputPath(job, new Path("hdfs://192.168.40.150:9000/lgy_test/res"));

// Submit the job to the YARN cluster and wait for it to finish

boolean bl = job.waitForCompletion(true);

System.exit(bl?0:1);

}

}
