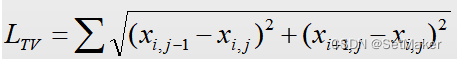

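TV Loss (Total Variation Loss) is used as a regularization term alongside the main loss function to steer what the network learns. Total variation is defined as:

$$\mathrm{TV}(x) = \sum_{i,j} \sqrt{(x_{i+1,j} - x_{i,j})^2 + (x_{i,j+1} - x_{i,j})^2}$$

In words: for every pixel, take its difference to the pixel below and its difference to the pixel on the right. Square both differences, add them, and take the square root. Then sum the result over all pixels.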
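The following worked example evaluates the square-root form on a tiny 3×3 image in plain Python. It is a minimal sketch under one assumption: only pixels that have both a neighbour below and a neighbour on the right contribute, so the last row and the last column are skipped. Other boundary conventions exist. The helper name `total_variation` is hypothetical.

```python
import math


def total_variation(img):
    """Square-root TV of a 2-D image given as a list of rows (hypothetical helper).

    Only pixels that have both a neighbour below and one to the right are used.
    """
    h, w = len(img), len(img[0])
    tv = 0.0
    for i in range(h - 1):
        for j in range(w - 1):
            dv = img[i + 1][j] - img[i][j]  # difference to the pixel below
            dh = img[i][j + 1] - img[i][j]  # difference to the pixel on the right
            tv += math.sqrt(dv * dv + dh * dh)
    return tv


img = [
    [0, 0, 0],
    [0, 3, 0],
    [0, 7, 0],
]
print(total_variation(img))  # 11.0
```

Three pixels contribute non-zero terms: 3, 3, and sqrt(4² + (−3)²) = 5. The total is therefore 11.0.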

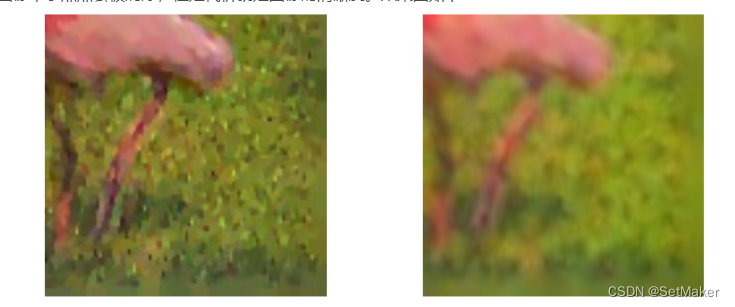

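The TV value grows with the amount of noise: the stronger the noise, the larger the TV. This makes TV useful as a guiding regularization term in tasks such as image restoration and super-resolution. A smaller TV loss means the image contains less noise and is smoother.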
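The sketch below makes the noise/TV relationship concrete. It adds increasingly strong Gaussian noise to a flat grey image and measures the TV with the square-root form. The image size, seed, and noise levels are arbitrary assumptions chosen for illustration.

The clean image is constant, so every difference comes purely from the noise. In this special case the TV grows exactly in proportion to sigma. For natural images, which already have non-zero TV from edges and texture, the relationship is not an exact proportionality.

```python
import math
import random


def total_variation(img):
    # square-root TV over pixels that have both a lower and a right neighbour
    h, w = len(img), len(img[0])
    tv = 0.0
    for i in range(h - 1):
        for j in range(w - 1):
            dv = img[i + 1][j] - img[i][j]
            dh = img[i][j + 1] - img[i][j]
            tv += math.sqrt(dv * dv + dh * dh)
    return tv


random.seed(0)
size = 16
clean = [[0.5] * size for _ in range(size)]  # flat grey image, so its TV is 0
noise = [[random.gauss(0.0, 1.0) for _ in range(size)] for _ in range(size)]

sigmas = [0.0, 0.05, 0.1, 0.2]  # assumed noise levels
tvs = []
for sigma in sigmas:
    noisy = [[clean[i][j] + sigma * noise[i][j] for j in range(size)]
             for i in range(size)]
    tvs.append(total_variation(noisy))
    print(f"sigma={sigma:.2f}  TV={tvs[-1]:.3f}")
```
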

Result comparison: the image on the right is the output obtained with TV loss guidance.
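The effect of TV guidance can be reproduced in miniature. The sketch below denoises a synthetic noisy image by gradient descent on a data-fidelity term plus λ·TV. It uses the same squared-difference TV as the code in this post. Here the TV is written with `.mean()`, which equals that code's `tv_loss(x) / batch_size`.

The toy image, λ = 1.0, the learning rate, and the step count are all assumptions for illustration, not tuned values. The sketch also optimizes the image directly rather than training a network. In a network, the TV term would be added to the training loss in the same way.

```python
import torch

torch.manual_seed(0)


def tv_loss(x):
    """Squared-difference TV of an (N, C, H, W) tensor, averaged per direction."""
    dh = x[:, :, 1:, :] - x[:, :, :-1, :]  # pixel below minus pixel
    dw = x[:, :, :, 1:] - x[:, :, :, :-1]  # pixel to the right minus pixel
    return 2 * (dh.pow(2).mean() + dw.pow(2).mean())


# Hypothetical toy task: a flat image corrupted by Gaussian noise
clean = torch.full((1, 1, 16, 16), 0.5)
noisy = clean + 0.2 * torch.randn_like(clean)

lam = 1.0  # assumed TV weight
x = noisy.clone().requires_grad_(True)  # start the estimate from the observation
opt = torch.optim.SGD([x], lr=1.0)

for step in range(300):
    opt.zero_grad()
    fidelity = (x - noisy).pow(2).mean()  # stay close to the observed image
    loss = fidelity + lam * tv_loss(x)    # TV term pulls towards smoothness
    loss.backward()
    opt.step()

restored = x.detach()
tv_noisy, tv_restored = tv_loss(noisy).item(), tv_loss(restored).item()
mse_noisy = (noisy - clean).pow(2).mean().item()
mse_restored = (restored - clean).pow(2).mean().item()
print(f"TV:  noisy={tv_noisy:.4f}  restored={tv_restored:.4f}")
print(f"MSE to clean:  noisy={mse_noisy:.5f}  restored={mse_restored:.5f}")
```

The fidelity term keeps the result anchored to the observation. Without it, minimizing TV alone would simply flatten the image to a constant.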

Code:

```python
import torch
import torch.nn as nn


def _tensor_size(t):
    # Number of elements per sample: C * H * W (the batch dimension is excluded)
    return t.size()[1] * t.size()[2] * t.size()[3]


def tv_loss(x):
    # x has shape (N, C, H, W)
    h_x = x.size()[2]
    w_x = x.size()[3]
    # number of vertical / horizontal neighbour pairs per sample
    count_h = _tensor_size(x[:, :, 1:, :])
    count_w = _tensor_size(x[:, :, :, 1:])
    # sum of squared differences between each pixel and the pixel below it
    h_tv = torch.pow((x[:, :, 1:, :] - x[:, :, :h_x - 1, :]), 2).sum()
    # sum of squared differences between each pixel and the pixel to its right
    w_tv = torch.pow((x[:, :, :, 1:] - x[:, :, :, :w_x - 1]), 2).sum()
    # note: squared differences, no square root is taken here
    return 2 * (h_tv / count_h + w_tv / count_w)


class TV_Loss(nn.Module):
    def __init__(self, TVLoss_weight=1):
        super().__init__()
        self.TVLoss_weight = TVLoss_weight

    def forward(self, x):
        batch_size = x.shape[0]
        # average over the batch and scale by the regularization weight
        return self.TVLoss_weight * tv_loss(x) / batch_size


device = torch.device("cuda" if torch.cuda.is_available() else "cpu")

# random 3x3 single-channel test image, cast to float like a real network input
x = torch.randint(10, size=(1, 1, 3, 3)).float()
x = x.to(device)
print(x)

criterion = TV_Loss().to(device)
loss = criterion(x)
print(loss)
```
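The `tv_loss` function above sums *squared* differences and never takes a square root. It is therefore the squared variant, not the square-root form described at the start of this post.

If the square-root (isotropic) form is wanted, a hypothetical `IsotropicTVLoss` module could look like the sketch below. It averages over the batch in the same way as `TV_Loss`. The small `eps` is an assumption: it keeps the gradient finite at pixels where both differences are zero, where the derivative of the square root would otherwise blow up.

```python
import torch
import torch.nn as nn


class IsotropicTVLoss(nn.Module):
    """Hypothetical square-root TV loss: sum over pixels of sqrt(dv^2 + dh^2)."""

    def __init__(self, weight=1.0, eps=1e-8):
        super().__init__()
        self.weight = weight
        self.eps = eps  # assumed smoothing constant for a finite gradient at 0

    def forward(self, x):
        # x: (N, C, H, W). Keep only the (H-1) x (W-1) pixels that have
        # both a neighbour below and a neighbour to the right.
        dv = x[:, :, 1:, :-1] - x[:, :, :-1, :-1]  # pixel below minus pixel
        dh = x[:, :, :-1, 1:] - x[:, :, :-1, :-1]  # pixel to the right minus pixel
        tv = torch.sqrt(dv.pow(2) + dh.pow(2) + self.eps).sum()
        # average over the batch, as TV_Loss does
        return self.weight * tv / x.shape[0]


img = torch.tensor([[0.0, 0.0, 0.0],
                    [0.0, 3.0, 0.0],
                    [0.0, 7.0, 0.0]], dtype=torch.float64).view(1, 1, 3, 3)
print(IsotropicTVLoss(eps=0.0)(img).item())  # 11.0
```

With the default `eps`, the value is very slightly above 11.0. The square-root form penalizes large jumps less harshly than the squared form, which is why it tends to preserve edges better. The squared form is smooth everywhere and needs no `eps`.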
