代码目的:

1.读取1.tsv文件,提取列索引ogt_source=“experimental”的所在行的uniprot_id列的值

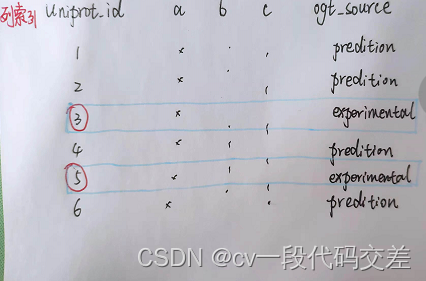

举个栗子:

假设1.tsv文件如下图所示,则列索引为:(uniprot_id、a、b、c、opt_source ),下面代码中提到的all_uniprotid = [3,5],类型为<class 'numpy.ndarray'>

2. 在2.fasta文件中查找步骤一提取到的uniprotid,并把当前行和下一行写入re.txt文件中

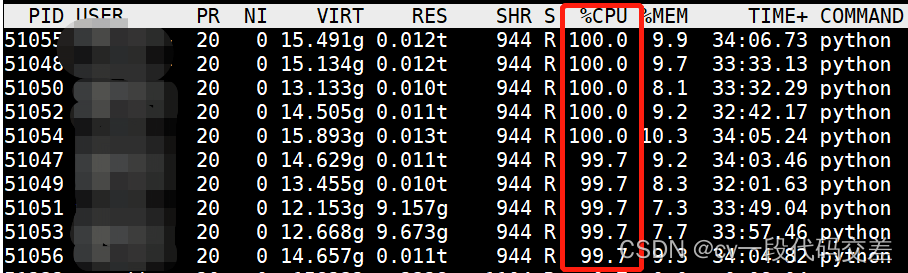

细节:1. 使用并行计算,Pool,10个线程,运行时cpu运行情况如下,100%为一个CPU核

2. 读取指定内容的下一行:把当前行的行号记录下来,使用linecache.getline(xxx.txt,num)函数,获取xxx.txt文件的第num行

3. 10个进程同时写入同一个文件会产生冲突,需要给文件加锁,在with open()内使用下面这行代码(最终代码中也有用到):给文件添加一个排他锁(同一时间只能被一个进程拥有),with结束后自动释放。

fcntl.flock(fasta_file.fileno(),fcntl.LOCK_EX)最终代码如下:

import multiprocessing

from multiprocessing import Pool

import pandas as pd

import numpy as np

import fcntl

import sys

import linecache

processes_count = 10

data = pd.read_csv(open('./1.tsv'),sep='\t')

nedata = data.query('ogt_source=="experimental"')

all_uniprotid = nedata['uniprot_id'].values

def pruductFa(uniprotid):

print(uniprotid)

st = ">"+uniprotid

with open(r"./2.fasta") as f:

count = 0

lines = f.readlines()

flag = 0

for line1 in lines:

count = count + 1

if(line1.find(st)!=-1):

flag = 1

with open('./re.txt',mode='a') as fasta_file:

fcntl.flock(fasta_file.fileno(),fcntl.LOCK_EX)

fasta_file.write(line1)

fasta_file.write(linecache.getline('./2.fasta', count+1))

break

if (flag == 1):

print(uniprotid+"found!\n")

def pallelFun(operation,input,pool):

pool.map(operation,input)

if __name__ == '__main__':

processes_pool = Pool(processes_count)

pallelFun(pruductFa,all_uniprotid,processes_pool)

1万+

1万+

被折叠的 条评论

为什么被折叠?

被折叠的 条评论

为什么被折叠?