最近为了测试clickhouse集群性能及其分布式表复制表行为特征搭建了一个2shard2replica的集群,系统为centos,clickhouse搭建在docker中,使用四台机器。·

zookeeper

搭建请参考link,搭一个四台服务器的集群。

clickhouse搭建

我们选择用docker搭建clickhouse,比较方便,因为公司系统是centos,clickhouse对centos支持有限,ubuntu系统的朋友可以直接在物理机搭建clickhouse

- docker-compose文件

version: '3'

services:

clickhouse-server:

image: yandex/clickhouse-server

container_name: clickhouse-server

hostname: clickhouse-server

restart: always

ports:

- 8123:8123

- 9000:9000

expose:

- 9000

- 9009

network_mode: host

volumes:

- ./config.xml:/etc/clickhouse-server/config.xml

- ./users.xml:/etc/clickhouse-server/users.xml

- ./metrika.xml:/etc/clickhouse-server/metrika.xml

- ./data:/var/lib/clickhouse

- ./log/clickhouse-server.log:/var/log/clickhouse-server/clickhouse-server.log

- ./log/clickhouse-server.err.log:/var/log/clickhouse-server/clickhouse-server.err.log

- 注意搭建时要把在volumes的文件建好,network_mode置为host表明使用宿主机的网络和端口,为咱们以后clickhouse服务之间通信提供了不少方便,写了这项之后ports选项就是多余的,但防止万一还是保留。

- 下面说说volumns前三个配置文件。

- config.xml:其提供了关于clickhouse服务的相关参数,参考示例如下:

<?xml version="1.0"?>

<yandex>

<logger>

<level>trace</level>

<log>/var/log/clickhouse-server/clickhouse-server.log</log>

<errorlog>/var/log/clickhouse-server/clickhouse-server.err.log</errorlog>

<size>1000M</size>

<count>10</count>

</logger>

<http_port>8123</http_port>

<tcp_port>9000</tcp_port>

<openSSL>

<server>

<certificateFile>/etc/clickhouse-server/server.crt</certificateFile>

<privateKeyFile>/etc/clickhouse-server/server.key</privateKeyFile>

<dhParamsFile>/etc/clickhouse-server/dhparam.pem</dhParamsFile>

<verificationMode>none</verificationMode>

<loadDefaultCAFile>true</loadDefaultCAFile>

<cacheSessions>true</cacheSessions>

<disableProtocols>sslv2,sslv3</disableProtocols>

<preferServerCiphers>true</preferServerCiphers>

</server>

<client>

<loadDefaultCAFile>true</loadDefaultCAFile>

<cacheSessions>true</cacheSessions>

<disableProtocols>sslv2,sslv3</disableProtocols>

<preferServerCiphers>true</preferServerCiphers>

<invalidCertificateHandler>

<name>RejectCertificateHandler</name>

</invalidCertificateHandler>

</client>

</openSSL>

<interserver_http_port>9009</interserver_http_port>

<listen_host>0.0.0.0</listen_host>

<max_connections>4096</max_connections>

<keep_alive_timeout>3</keep_alive_timeout>

<max_concurrent_queries>100</max_concurrent_queries> <uncompressed_cache_size>8589934592</uncompressed_cache_size>

<mark_cache_size>5368709120</mark_cache_size>

<path>/var/lib/clickhouse/</path>

<tmp_path>/var/lib/clickhouse/tmp/</tmp_path>

<user_files_path>/var/lib/clickhouse/user_files/</user_files_path>

<default_database>default</default_database>

<timezone>Asia/Shanghai</timezone>

<mlock_executable>false</mlock_executable>

<remote_servers incl="clickhouse_remote_servers" >

<cluster_2s_2r>

<shard>

<weight>1</weight>

<internal_replication>true</internal_replication>

<replica>

<host>CK01</host>

<port>9000</port>

<user>default</user>

<password>Biyjatqdw...</password>

</replica>

<replica>

<host>CK02</host>

<port>9000</port>

<user>default</user>

<password>Biyjatqdw...</password>

</replica>

</shard>

<shard>

<internal_replication>true</internal_replication>

<replica>

<host>CK03</host>

<port>9000</port>

<user>default</user>

<password>Biyjatqdw...</password>

</replica>

<replica>

<host>CK04</host>

<port>9000</port>

<user>default</user>

<password>Biyjatqdw...</password>

</replica>

</shard>

</cluster_2s_2r>

</remote_servers>

<zookeeper>

<node index="1">

<host>CK01</host>

<port>2181</port>

</node>

<node index="2">

<host>CK02</host>

<port>2181</port>

</node>

<node index="3">

<host>CK03</host>

<port>2181</port>

</node>

<node index="4">

<host>CK04</host>

<port>2181</port>

</node>

</zookeeper>

<macros>

<shard>01</shard>

<replica>01</replica>

</macros>

<builtin_dictionaries_reload_interval>3600</builtin_dictionaries_reload_interval>

<max_session_timeout>3600</max_session_timeout>

<default_session_timeout>60</default_session_timeout>

<log_queries>1</log_queries>

<query_log>

<database>system</database>

<table>query_log</table>

<partition_by>toYYYYMM(event_date)</partition_by>

<flush_interval_milliseconds>7500</flush_interval_milliseconds>

</query_log>

<log_query_threads>1</log_query_threads>

<query_thread_log>

<database>system</database>

<table>query_thread_log</table>

<partition_by>toYYYYMM(event_date)</partition_by>

<flush_interval_milliseconds>7500</flush_interval_milliseconds>

</query_thread_log>

<dictionaries_config>*_dictionary.xml</dictionaries_config>

<max_memory_usage_for_all_queries>2000000000</max_memory_usage_for_all_queries>

<compression incl="clickhouse_compression">

</compression>

<distributed_ddl>

<path>/clickhouse/task_queue/ddl</path>

</distributed_ddl>

<graphite_rollup_example>

<pattern>

<regexp>click_cost</regexp>

<function>any</function>

<retention>

<age>0</age>

<precision>3600</precision>

</retention>

<retention>

<age>86400</age>

<precision>60</precision>

</retention>

</pattern>

<default>

<function>max</function>

<retention>

<age>0</age>

<precision>60</precision>

</retention>

<retention>

<age>3600</age>

<precision>300</precision>

</retention>

<retention>

<age>86400</age>

<precision>3600</precision>

</retention>

</default>

</graphite_rollup_example>

<format_schema_path>/var/lib/clickhouse/format_schemas/</format_schema_path>

<http_server_default_response>

</http_server_default_response>

</yandex>

- 限于篇幅,我已把注释全部去掉,其中比较需要注意的标签是:

listen_host–>0.0.0.0

remote_servers–>此标签与分布式表有关,以本配置文件实例:

- cluster_2s_2r为集群名

- shard为分片名称

- replica为复制节点,其下填入节点的相关信息即可

zookeeper–>此标签与zookeeper有关,即与复制表有关,clickhouse的表数据同步是表的行为,不是服务的行为,必须配置此zookeeper

macros–>此标签表明了本机器是第几分片的第几个复制表,相当于一种环境变量,以后建表时会方便很多,我们下面建表时介绍。

query_log–>可以开启clickhouse的system表中记录query信息的表:log_queries;query_thread_log同理。

- users.xml,是关于用户的相关配置,示例如下:

<?xml version="1.0"?>

<yandex>

<profiles>

<default>

<!-- 设置最大使用内存为两个G -->

<max_memory_usage>2000000000</max_memory_usage>

<use_uncompressed_cache>0</use_uncompressed_cache>

<load_balancing>random</load_balancing>

</default>

<readonly>

<readonly>1</readonly>

</readonly>

</profiles>

<users>

<default>

<password>Biyjatqdw...</password>

<networks incl="networks" replace="replace">

<ip>::/0</ip>

</networks>

<profile>default</profile>

<quota>default</quota>

<databases>

<test>

<filtered_table1>

<filter>a = 1</filter>

</filtered_table1>

<filtered_table2>

<filter>a + b < 1 or c - d > 5</filter>

</filtered_table2>

<filtered_table3>

<filter>c = 1</filter>

</filtered_table3>

</test>

</databases>

</default>

</users>

<quotas>

<default>

<interval>

<duration>3600</duration>

<queries>0</queries>

<errors>0</errors>

<result_rows>0</result_rows>

<read_rows>0</read_rows>

<execution_time>0</execution_time>

</interval>

</default>

</quotas>

</yandex>

这个实例中重要的参数只有password,可以设置default的密码,当然也可以新建一个与default并列的用户,详情见clickhouse官方文档。

还有一个参数为max_memory_usage,设置最大内存使用量,我设置为了2G,毕竟机器才4G内存,草(一种植物)。

- metraic.xml,示例如下:

- 这个文件我并没有用到,其是为了可以把配置拆出到别的文件,但是我已经全部写到了config.xml里,所以就不需要了

<yandex>

<!-- 集群配置 -->

<clickhouse_remote_servers>

<!-- 3分片1备份 -->

<cluster_3shards_1replicas>

<!-- 数据分片1 -->

<shard>

<replica>

<host>CK01</host>

<port>9000</port>

</replica>

</shard>

<!-- 数据分片2 -->

<shard>

<replica>

<host>CK02</host>

<port>9000</port>

</replica>

</shard>

<!-- 数据分片3 -->

<shard>

<replica>

<host>CK03</host>

<port>9000</port>

</replica>

</shard>

</cluster_3shards_1replicas>

</clickhouse_remote_servers>

</yandex>

写完配置文件之后通过scp将文件传到其他三个节点,像link中的scp命令格式相同,传输过去之后不要忘记修改macros标签为该节点的配置。

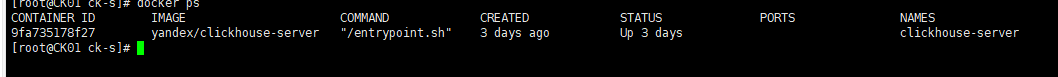

- 之后就可以在每台机器上执行,当然要cd到目录下

docker-compose -f docker-compose.yml up -d

如果配置文件没问题的话,应该是能启动起来clickhouse的,如果报错了欢迎评论留言,如果是我踩过的坑会帮你填平。

建表

replicated表创建

配置好macros之后创建集群表就比较简单了:

create table if not exists t_local ON CLUSTER cluster_2s_2r

(

ID String,

CreateTime DateTime

) ENGINE = ReplicatedMergeTree('/clickhouse/tables/{shard}/t_local', '{replica}')

PARTITION BY toYYYYMMDD(CreateTime) ORDER BY (CreateTime);

其中{shard}和{replica}就是我们在macros定义的节点

replicated表使用

待续

distributed表创建

CREATE TABLE `trade_drop_table_v1.0_all` AS `trade_drop_table`

ENGINE = Distributed(cluster_2s_2r,`default`,`trade_drop_table`,rand());

其中表参数为:集群名,数据库,目标表名,插入算法(一般为rand,当distributed表只有一个节点时,这个参数无用)。

distributed表使用

待续

2837

2837

被折叠的 条评论

为什么被折叠?

被折叠的 条评论

为什么被折叠?