Contents

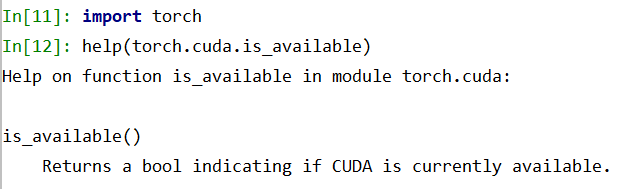

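dir() and help()

Example:

    import torch

    help(torch.cuda.is_available)

torch must be imported first.

Do not add parentheses after torch.cuda.is_available: help() needs the function object itself, not the value returned by calling it.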
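The difference between passing a function and calling it can be checked without any GPU setup. The following is a minimal sketch that assumes the standard-library os.path module as a stand-in for torch; pydoc.render_doc returns the text that help() would print.

```python
import os.path
import pydoc

# dir() lists the names that a module (or any object) exposes.
names = dir(os.path)
has_exists = "exists" in names

# Pass the function object itself: the rendered text documents os.path.exists.
doc_text = pydoc.render_doc(os.path.exists, renderer=pydoc.plaintext)

# Calling the function first would hand help() its return value instead,
# here a bool, so the help shown would describe bool, not the function.
returned = os.path.exists(".")

print(has_exists)
print(doc_text.splitlines()[0])
print(type(returned).__name__)
```

The same pattern applies to torch: dir(torch.cuda) lists the available names, and help(torch.cuda.is_available) documents one of them.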

PyCharm vs. Jupyter

Dataset and DataLoader

    from torch.utils.data import Dataset

View its documentation (IPython/Jupyter syntax):

    Dataset??

All datasets that represent a map from keys to data samples should subclass it. All subclasses should overwrite :meth:`__getitem__`, supporting fetching a data sample for a given key. Subclasses could also optionally overwrite :meth:`__len__`, which is expected to return the size of the dataset by many :class:`~torch.utils.data.Sampler` implementations and the default options of :class:`~torch.utils.data.DataLoader`.

Every custom dataset class must inherit from Dataset.

Every subclass must implement the __getitem__ method.

Implementing the __len__ method is optional.
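These requirements are small enough to show on in-memory data. The following is a minimal sketch: SquaresDataset is a hypothetical class invented for illustration, and the fallback to a plain object base class is an assumption added only so that the snippet also runs where PyTorch is not installed.

```python
try:
    from torch.utils.data import Dataset
except ImportError:
    # Assumption for illustration: without PyTorch, fall back to a plain base
    # class so the __getitem__/__len__ protocol can still be demonstrated.
    Dataset = object


class SquaresDataset(Dataset):
    """Hypothetical dataset mapping an integer key i to the sample (i, i*i)."""

    def __init__(self, size):
        self.size = size

    def __getitem__(self, idx):
        # Required: fetch one sample for a given key.
        if not 0 <= idx < self.size:
            raise IndexError(idx)
        return idx, idx * idx

    def __len__(self):
        # Optional, but samplers and DataLoader defaults rely on it.
        return self.size


ds = SquaresDataset(5)
print(len(ds))
print(ds[3])
```

Raising IndexError for out-of-range keys lets a plain for loop over the dataset terminate, since Python falls back to __getitem__ when no __iter__ is defined.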

Example code

Folder layout

    from torch.utils.data import Dataset
    from PIL import Image
    import os


    class MyData(Dataset):
        def __init__(self, root_dir, label_dir):
            self.root_dir = root_dir
            self.label_dir = label_dir
            # The sub-folder name doubles as the class label
            self.path = os.path.join(self.root_dir, self.label_dir)
            self.img_path = os.listdir(self.path)  # image file names

        def __getitem__(self, idx):
            img_name = self.img_path[idx]
            img_item_path = os.path.join(self.root_dir, self.label_dir, img_name)
            img = Image.open(img_item_path)
            label = self.label_dir
            return img, label

        def __len__(self):
            return len(self.img_path)


    # Create instances
    root_dir = 'C:\\Users\\10755\\Desktop\\imges'
    du_label_dir = '都敏俊'
    k_label_dir = '小K'
    du = MyData(root_dir, du_label_dir)
    xk = MyData(root_dir, k_label_dir)

    print('Number of Professor Du images:', len(du))
    img, label = du[0]
    img.show()

    # Concatenate datasets
    # train_dataset = du + xk
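The commented-out line relies on the + operator of Dataset, which combines two datasets into a single one. How a global index can be routed to the right underlying dataset is sketched below in plain Python. This is a simplified illustration, not PyTorch's source code; the class name ConcatSketch and the sample lists are hypothetical.

```python
import bisect
from itertools import accumulate


class ConcatSketch:
    """Hypothetical stand-in that chains several indexable datasets."""

    def __init__(self, datasets):
        self.datasets = list(datasets)
        # Running totals of the lengths, e.g. lengths [2, 3] -> [2, 5].
        self.cumulative_sizes = list(accumulate(len(d) for d in self.datasets))

    def __len__(self):
        return self.cumulative_sizes[-1] if self.cumulative_sizes else 0

    def __getitem__(self, idx):
        if not 0 <= idx < len(self):
            raise IndexError(idx)
        # Find the first dataset whose running total exceeds idx.
        ds_idx = bisect.bisect_right(self.cumulative_sizes, idx)
        offset = self.cumulative_sizes[ds_idx - 1] if ds_idx > 0 else 0
        return self.datasets[ds_idx][idx - offset]


first = [("a0.jpg", "A"), ("a1.jpg", "A")]
second = [("b0.jpg", "B"), ("b1.jpg", "B"), ("b2.jpg", "B")]
combined = ConcatSketch([first, second])
print(len(combined))
print(combined[1], combined[2])
```

The combined length is the sum of the parts, and indices past the end of the first dataset continue into the second.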

transform

In PyCharm, press Ctrl+P to show parameter hints.

    from torch.utils.data import Dataset, DataLoader
    from PIL import Image
    import os
    from torchvision import transforms


    class MyData(Dataset):
        def __init__(self, root_dir, image_dir, label_dir, transform=None):
            self.root_dir = root_dir
            self.image_dir = image_dir
            self.label_dir = label_dir
            self.label_path = os.path.join(self.root_dir, self.label_dir)
            self.image_path = os.path.join(self.root_dir, self.image_dir)
            self.image_list = os.listdir(self.image_path)
            self.label_list = os.listdir(self.label_path)
            self.transform = transform
            # Label and image files share the same base names, so sorting both
            # lists the same way keeps each image paired with its label
            self.image_list.sort()
            self.label_list.sort()

        def __getitem__(self, idx):
            img_name = self.image_list[idx]
            label_name = self.label_list[idx]
            img_item_path = os.path.join(self.root_dir, self.image_dir, img_name)
            label_item_path = os.path.join(self.root_dir, self.label_dir, label_name)
            img = Image.open(img_item_path)
            with open(label_item_path, 'r') as f:
                label = f.readline()
            if self.transform:
                # Use the transform stored on the instance, not the module-level global
                img = self.transform(img)
            return img, label

        def __len__(self):
            assert len(self.image_list) == len(self.label_list)
            return len(self.image_list)


    transform = transforms.Compose([transforms.Resize(400), transforms.ToTensor()])
    root_dir = "dataset/train"
    image_ants = "ants_image"
    label_ants = "ants_label"
    ants_dataset = MyData(root_dir, image_ants, label_ants, transform=transform)
    image_bees = "bees_image"
    label_bees = "bees_label"
    bees_dataset = MyData(root_dir, image_bees, label_bees, transform=transform)

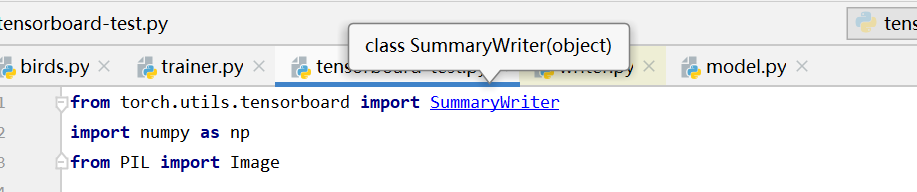
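Pairing images and labels by sorted position only works if every image has a label file with the same base name. The following is a hedged sketch of a check that could run before the dataset is built; the helper name check_pairs and the temporary files are hypothetical, and only the standard library is used.

```python
import tempfile
from pathlib import Path


def check_pairs(image_dir, label_dir):
    """Return sorted (image, label) name pairs, or raise if base names differ."""
    images = sorted(p.name for p in Path(image_dir).iterdir())
    labels = sorted(p.name for p in Path(label_dir).iterdir())
    if len(images) != len(labels):
        raise ValueError(f"{len(images)} images but {len(labels)} labels")
    for img, lab in zip(images, labels):
        if Path(img).stem != Path(lab).stem:
            raise ValueError(f"mismatched pair: {img} / {lab}")
    return list(zip(images, labels))


# Build a tiny throwaway layout to exercise the check.
with tempfile.TemporaryDirectory() as root:
    img_dir = Path(root, "ants_image")
    lab_dir = Path(root, "ants_label")
    img_dir.mkdir()
    lab_dir.mkdir()
    for stem in ("b2", "a1"):
        (img_dir / f"{stem}.jpg").write_bytes(b"")
        (lab_dir / f"{stem}.txt").write_text("ants")
    pairs = check_pairs(img_dir, lab_dir)

print(pairs)
```

A check like this fails early with a readable message, instead of silently training on images that carry the wrong label.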

TensorBoard

Using TensorBoard in PyTorch

Tutorial: the TensorBoard support built into PyTorch

Example code

    from torch.utils.tensorboard import SummaryWriter
    import numpy as np
    from PIL import Image

    # Creates a logs directory under the current directory
    writer = SummaryWriter("logs")

    image_path = "data/train/ants_image/6240329_72c01e663e.jpg"
    img_PIL = Image.open(image_path)
    img_array = np.array(img_PIL)  # PIL image -> NumPy array
    print(type(img_array))
    print(img_array.shape)

    writer.add_image("train", img_array, 1, dataformats='HWC')

    # y = 2x
    for i in range(100):
        writer.add_scalar("y=2x", 2 * i, i)

    writer.close()

Ctrl+click a function name to jump to its definition and read its details.

add_scalar

    def add_scalar(self, tag, scalar_value, global_step=None, walltime=None):
        """Add scalar data to summary.

        Args:
            tag (string): Data identifier  # chart title
            scalar_value (float or string/blobname): Value to save  # y-axis value
            global_step (int): Global step value to record  # x-axis value
            walltime (float): Optional override default walltime (time.time())  # timestamp
              with seconds after epoch of event

add_image

    def add_image(self, tag, img_tensor, global_step=None, walltime=None, dataformats='CHW'):
        """Add image data to summary.

        Note that this requires the ``pillow`` package.

        Args:
            tag (string): Data identifier
            img_tensor (torch.Tensor, numpy.array, or string/blobname): Image data
            global_step (int): Global step value to record
            walltime (float): Optional override default walltime (time.time())
              seconds after epoch of event
        Shape:
            img_tensor: Default is :math:`(3, H, W)`. You can use ``torchvision.utils.make_grid()`` to
            convert a batch of tensor into 3xHxW format or call ``add_images`` and let us do the job.
            Tensor with :math:`(1, H, W)`, :math:`(H, W)`, :math:`(H, W, 3)` is also suitable as long as
            corresponding ``dataformats`` argument is passed. e.g. CHW, HWC, HW.

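Data type

Note the type that add_image expects:

img_tensor (torch.Tensor, numpy.array, or string/blobname): Image data

An image opened with PIL's Image.open(image_path) is a PIL image object, which is not one of these types.

Use print(type(img)) to check the type of the object.

Convert it to a NumPy array:

    np.array(img)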

shape

The default layout is (3, H, W).

If the image uses a different layout, pass the matching dataformats argument.
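Choosing the dataformats string from an array's shape follows a simple rule. The following is a minimal sketch under the assumption that a channel axis has size 1 or 3; the helper name guess_dataformats is hypothetical and is not part of TensorBoard.

```python
def guess_dataformats(shape):
    """Guess a dataformats string ('HW', 'CHW', or 'HWC') from a shape tuple."""
    channel_sizes = (1, 3)  # assumption: grayscale or RGB images only
    if len(shape) == 2:
        return "HW"
    if len(shape) == 3:
        if shape[0] in channel_sizes:
            return "CHW"
        if shape[2] in channel_sizes:
            return "HWC"
    raise ValueError(f"cannot infer layout from shape {shape}")


print(guess_dataformats((512, 768, 3)))   # layout of np.array(PIL RGB image)
print(guess_dataformats((3, 512, 768)))   # layout produced by transforms.ToTensor()
print(guess_dataformats((512, 768)))      # single-channel image without a channel axis
```

The guess is ambiguous for very small images such as (3, 4, 3), so printing the shape and passing dataformats explicitly remains the safer habit.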

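Display

    writer.add_image("train", img_array, 1, dataformats='HWC')

"train" is the tag, which acts as the title of the image panel.

img_array is the input image.

1 is the step number.

Add another image to the same panel as step 2:

    writer.add_image("train", img_array, 2, dataformats='HWC')

Open a new panel by using a different tag:

    writer.add_image("小k", img_array, 1, dataformats='HWC')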

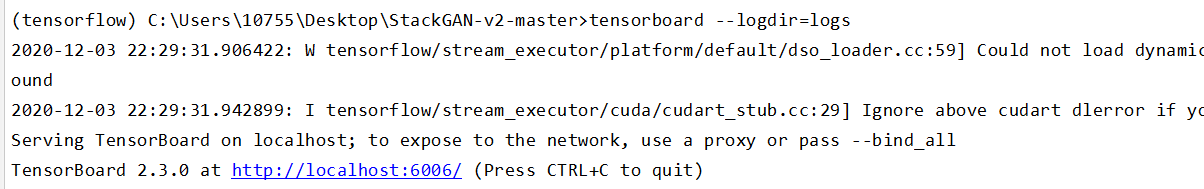

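Launching TensorBoard

In the PyCharm terminal, run:

    tensorboard --logdir=logs

Do not omit the space before --logdir.

logdir is the name of the folder that contains the event files.

To use a different port:

    tensorboard --logdir=logs --port=6007

The built-in browser may fail to open the page. If so, copy the link into another browser.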

No dashboards are active for the current data set

Solution

If it still does not work, rerun the code and then open TensorBoard again.

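Rerunning the code

When the code is run again, the new plots are drawn on top of those from the previous run.

Fix:

Delete all the files in logs.

Press Ctrl+C in the terminal to stop TensorBoard.

Rerun the code and launch TensorBoard again.

Hold Ctrl to select multiple files at once.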
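Clearing the log folder can also be done from the script itself, before the writer is created. The following is a minimal sketch using only the standard library; the helper name clear_log_dir is hypothetical, and it assumes the folder holds nothing but disposable event files.

```python
import shutil
import tempfile
from pathlib import Path


def clear_log_dir(log_dir):
    """Delete everything inside log_dir but keep the folder itself."""
    log_path = Path(log_dir)
    log_path.mkdir(parents=True, exist_ok=True)
    removed = 0
    for entry in log_path.iterdir():
        if entry.is_dir():
            shutil.rmtree(entry)
        else:
            entry.unlink()
        removed += 1
    return removed


# Demonstrate on a throwaway folder instead of a real logs directory.
with tempfile.TemporaryDirectory() as tmp:
    logs = Path(tmp, "logs")
    logs.mkdir()
    (logs / "events.out.tfevents.example").write_text("old run")
    removed_count = clear_log_dir(logs)
    remaining = list(logs.iterdir())

print(removed_count, remaining)
```

Calling such a helper right before SummaryWriter("logs") gives each run a clean folder. An alternative design is to write every run into its own sub-folder of logs, which keeps older runs available for comparison.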
