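Revision note: some parts of the earlier version were unclear, so I have expanded each step and added a screenshot of the files it produces. This should make the process easier to follow and to compare against your own results.

If anything here is wrong, corrections are welcome.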

1. Installing Pyskl

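I have tried both Windows and Linux, and the installation succeeds on both.

Pyskl repository: https://github.com/kennymckormick/pyskl

Anaconda must be installed first.

Installing on Linux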

git clone https://github.com/kennymckormick/pyskl.git

cd pyskl

# This command runs well with conda 22.9.0, if you are running an early conda version and got some errors, try to update your conda first

conda env create -f pyskl.yaml

conda activate pyskl

pip install -e .

For Windows, see my other blog post, "Installing pyskl on Windows".

After installation you can run a quick test:

# Running the demo with STGCN++ trained on NTURGB+D 120 (Joint Modality). The input file is demo/ntu_sample.avi, the output file is demo/demo.mp4

python demo/demo_skeleton.py demo/ntu_sample.avi demo/demo.mp4 --config configs/stgcn++/stgcn++_ntu120_xsub_hrnet/j.py --checkpoint http://download.openmmlab.com/mmaction/pyskl/ckpt/stgcnpp/stgcnpp_ntu120_xsub_hrnet/j.pth

The output video:

2. Preparing the dataset

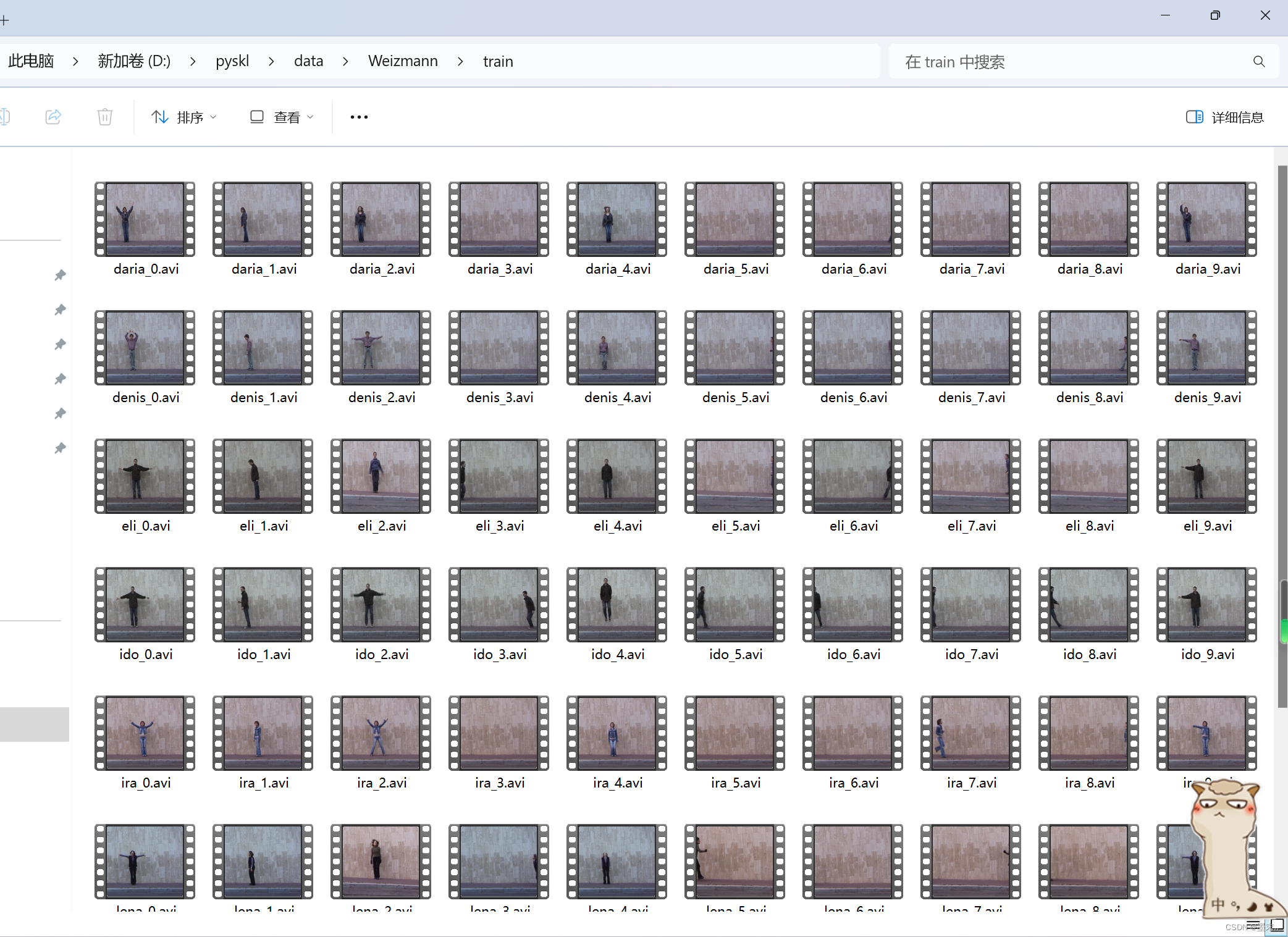

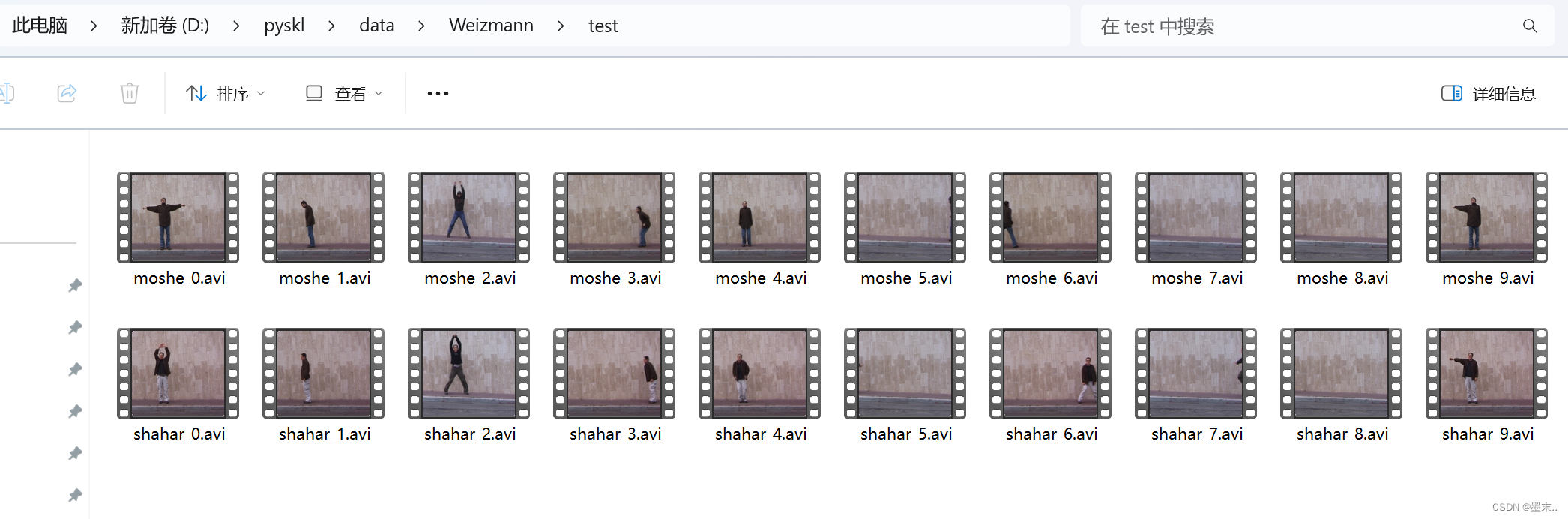

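I followed another blogger's post here (the reference blog); doing what it describes is enough. The key point is that every video filename must contain its label.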
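To make the naming rule concrete, here is a minimal sketch of reading the label out of a filename. It assumes names of the form `<name>_<label>.avi` (for example `moshe_0.avi`), which is the pattern the scripts in the following steps rely on. The helper `parse_label` is hypothetical and is stricter than those scripts: it rejects any name that does not have exactly one underscore followed by digits.

```python
import os


def parse_label(filename):
    """Return the integer label encoded in a name such as 'moshe_0.avi'."""
    stem, _ext = os.path.splitext(os.path.basename(filename))
    parts = stem.split('_')
    # Assumption: exactly one underscore, with the label after it.
    if len(parts) != 2 or not parts[1].isdigit():
        raise ValueError(f'expected <name>_<label>.<ext>, got {filename!r}')
    return int(parts[1])
```

Running this over the dataset folders before the later steps is a cheap way to catch misnamed videos early.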

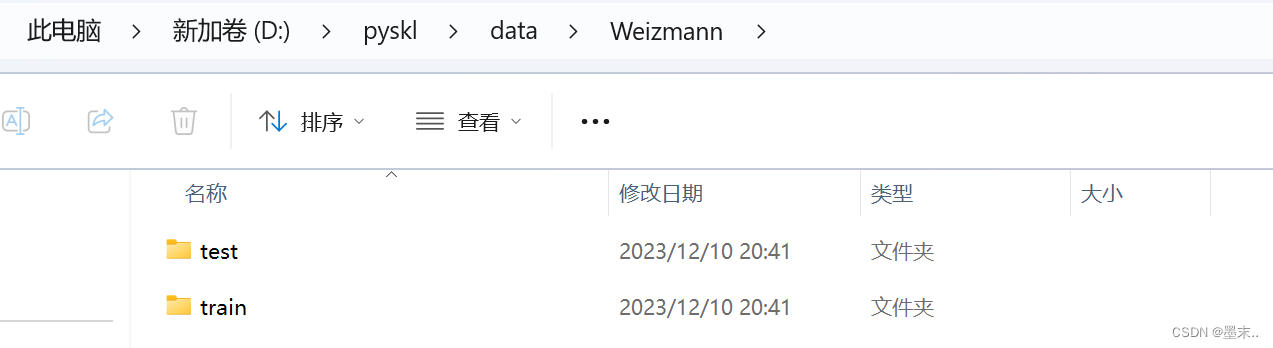

My dataset paths are as follows:

train:

test:

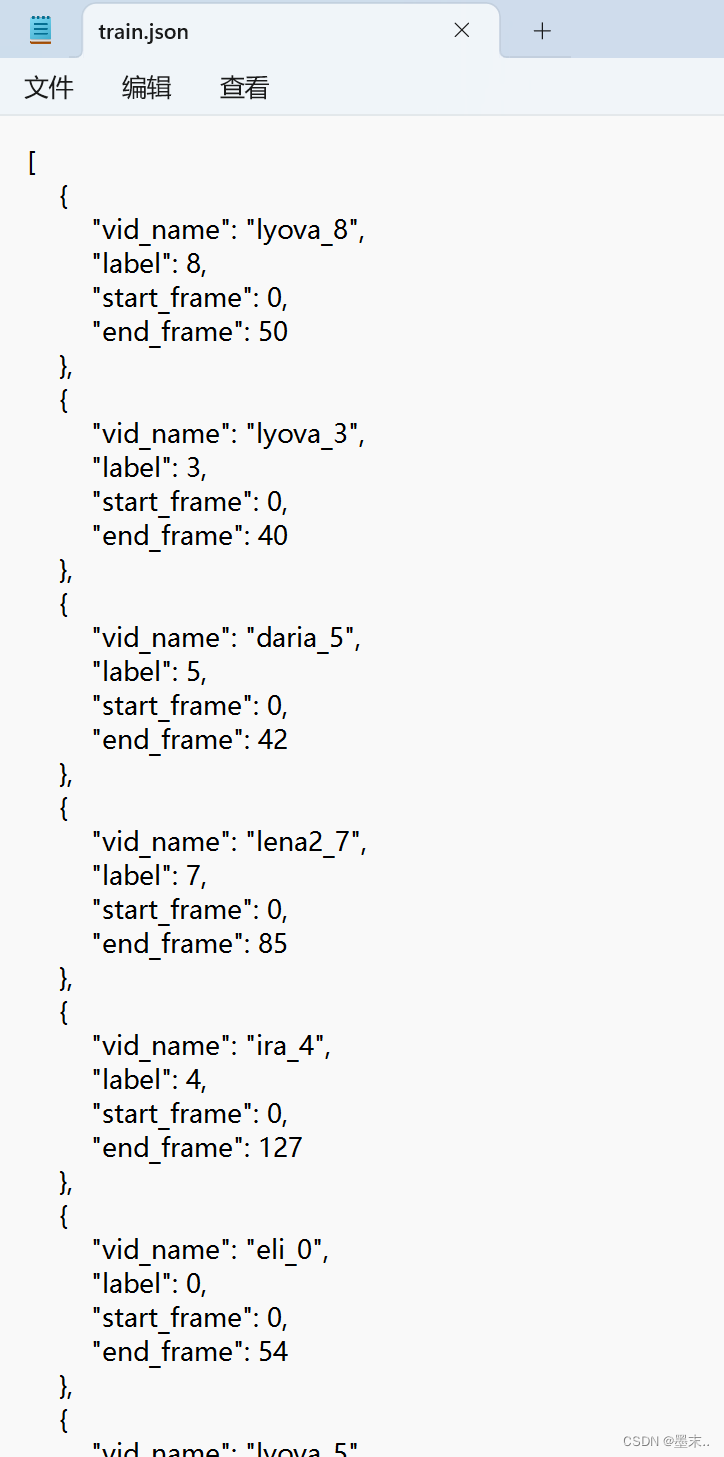

3. Generating train.json and test.json

import json
import os

import decord


def writeJson(path_train, jsonpath):
    output_list = []
    trainfile_list = os.listdir(path_train)
    for train_name in trainfile_list:
        traindit = {}
        # File names look like '<name>_<label>.avi'
        sp = train_name.split('_')
        traindit['vid_name'] = train_name.replace('.avi', '')
        traindit['label'] = int(sp[1].replace('.avi', ''))
        traindit['start_frame'] = 0
        # Open the video only to count its frames
        video_path = os.path.join(path_train, train_name)
        vid = decord.VideoReader(video_path)
        traindit['end_frame'] = len(vid)
        output_list.append(traindit.copy())
    with open(jsonpath, 'w') as outfile:
        json.dump(output_list, outfile)

Usage:

# The first argument is the path of the training or test set; the second is the name of the JSON file to write

writeJson('./data/Weizmann/train', 'train.json')

writeJson('./data/Weizmann/test', 'test.json')

The generated train.json has the following format:

[{"vid_name": "moshe_0", "label": 0, "start_frame": 0, "end_frame": 62}, {"vid_name": "moshe_1", "label": 1, "start_frame": 0, "end_frame": 61}, {"vid_name": "moshe_2", "label": 2, "start_frame": 0, "end_frame": 105}, {"vid_name": "moshe_3", "label": 3, "start_frame": 0, "end_frame": 39}, {"vid_name": "moshe_4", "label": 4, "start_frame": 0, "end_frame": 45}, {"vid_name": "moshe_5", "label": 5, "start_frame": 0, "end_frame": 64}, {"vid_name": "moshe_6", "label": 6, "start_frame": 0, "end_frame": 46}, {"vid_name": "moshe_7", "label": 7, "start_frame": 0, "end_frame": 77}, {"vid_name": "moshe_8", "label": 8, "start_frame": 0, "end_frame": 111}, {"vid_name": "moshe_9", "label": 9, "start_frame": 0, "end_frame": 60}, {"vid_name": "shahar_0", "label": 0, "start_frame": 0, "end_frame": 59}, {"vid_name": "shahar_1", "label": 1, "start_frame": 0, "end_frame": 61}, {"vid_name": "shahar_2", "label": 2, "start_frame": 0, "end_frame": 103}, {"vid_name": "shahar_3", "label": 3, "start_frame": 0, "end_frame": 38}, {"vid_name": "shahar_4", "label": 4, "start_frame": 0, "end_frame": 56}, {"vid_name": "shahar_5", "label": 5, "start_frame": 0, "end_frame": 67}, {"vid_name": "shahar_6", "label": 6, "start_frame": 0, "end_frame": 43}, {"vid_name": "shahar_7", "label": 7, "start_frame": 0, "end_frame": 68}, {"vid_name": "shahar_8", "label": 8, "start_frame": 0, "end_frame": 120}, {"vid_name": "shahar_9", "label": 9, "start_frame": 0, "end_frame": 61}]
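Before moving on, it can help to sanity-check the records. The sketch below is not part of the original workflow; `check_records` is a hypothetical helper that looks for duplicate names, labels outside the expected range, and empty frame ranges, assuming the field names shown in the JSON above.

```python
def check_records(records, num_classes):
    """Return a list of human-readable problems found in train/test records."""
    problems = []
    seen = set()
    for rec in records:
        name = rec.get('vid_name')
        if name in seen:
            problems.append(f'duplicate vid_name: {name}')
        seen.add(name)
        label = rec.get('label')
        if not isinstance(label, int) or not 0 <= label < num_classes:
            problems.append(f'{name}: label {label!r} outside 0..{num_classes - 1}')
        if rec.get('end_frame', 0) <= rec.get('start_frame', 0):
            problems.append(f'{name}: empty frame range')
    return problems
```

For example, after `records = json.load(open('train.json'))`, calling `check_records(records, 10)` should return an empty list for a clean 10-class dataset.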

4. Generating the list file required by tools/data/custom_2d_skeleton.py

The code is as follows:

import os

from pyskl.smp import mwlines


def writeList(dirpath, name):
    path_train = os.path.join(dirpath, 'train')
    path_test = os.path.join(dirpath, 'test')
    trainfile_list = os.listdir(path_train)
    testfile_list = os.listdir(path_test)

    train = []
    for train_name in trainfile_list:
        traindit = {}
        sp = train_name.split('_')
        traindit['vid_name'] = train_name
        traindit['label'] = sp[1].replace('.avi', '')
        train.append(traindit)

    test = []
    for test_name in testfile_list:
        testdit = {}
        sp = test_name.split('_')
        testdit['vid_name'] = test_name
        testdit['label'] = sp[1].replace('.avi', '')
        test.append(testdit)

    # Each line is '<video path> <label>'
    tmpl1 = os.path.join(path_train, '{}')
    lines1 = [(tmpl1 + ' {}').format(x['vid_name'], x['label']) for x in train]
    tmpl2 = os.path.join(path_test, '{}')
    lines2 = [(tmpl2 + ' {}').format(x['vid_name'], x['label']) for x in test]

    # Train and test videos go into the same list file
    lines = lines1 + lines2
    mwlines(lines, os.path.join(dirpath, name))

dirpath is the dataset path, here './data/Weizmann'.

name is the name of the list file to generate, here 'Weizmann.list'.

writeList('./data/Weizmann', 'Weizmann.list')

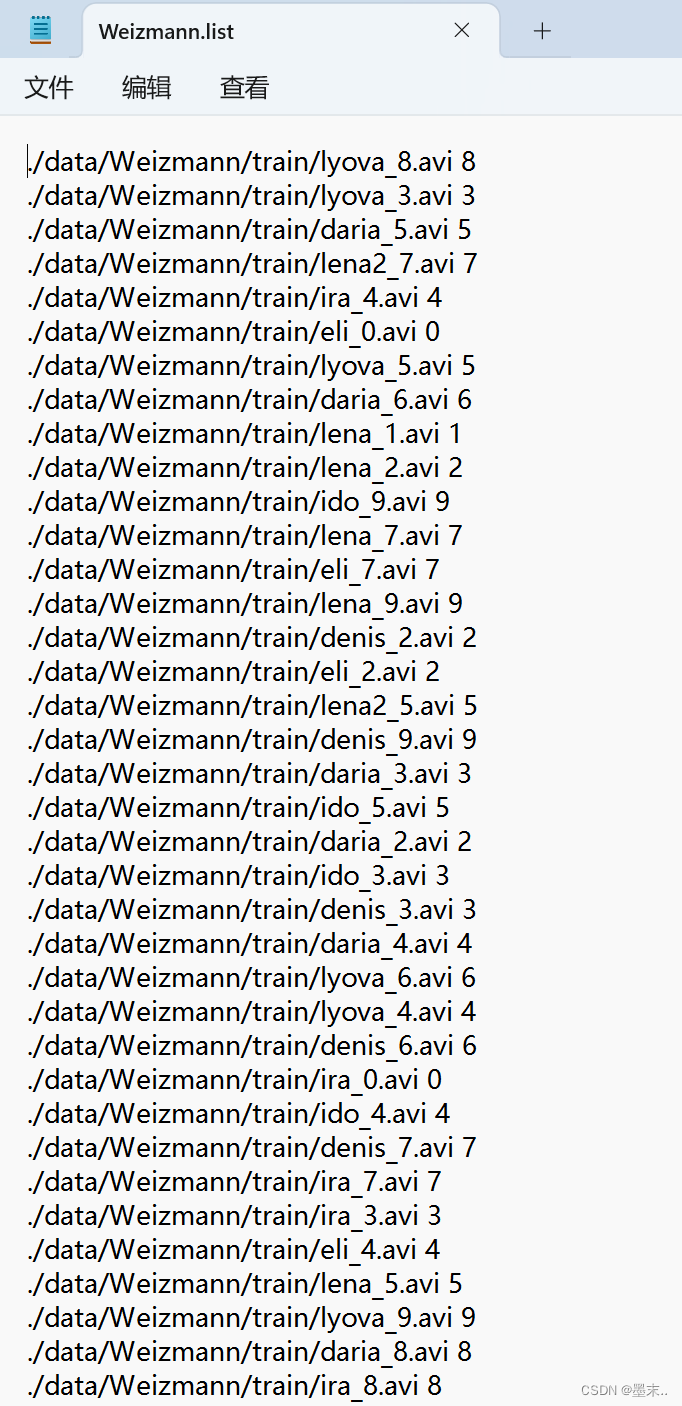

The contents of Weizmann.list are as follows:

5. Running custom_2d_skeleton.py to generate the pkl file used for training
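Judging from `writeList`, each line of the list file holds a video path and an integer label separated by a space, for example `./data/Weizmann/train/moshe_0.avi 0` on Linux. The sketch below mirrors how `custom_2d_skeleton.py` in the next step splits such a line; `parse_list_line` is a hypothetical helper name, and like the script it assumes that paths contain no spaces.

```python
import os.path as osp


def parse_list_line(line):
    """Split one list line into the fields custom_2d_skeleton.py derives from it."""
    fields = line.split()
    if len(fields) not in (1, 2):
        raise ValueError(f'unexpected line: {line!r}')
    # frame_dir is the file name up to the first dot, e.g. 'moshe_0'
    entry = dict(frame_dir=osp.basename(fields[0]).split('.')[0], filename=fields[0])
    if len(fields) == 2:
        entry['label'] = int(fields[1])
    return entry
```

The `frame_dir` value is what later has to match the `vid_name` entries in train.json and test.json.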

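I used the code already adapted by the blogger 大脸猫105 and changed parts of it myself. If my version gives you trouble, please go back to 大脸猫105's original.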

# Copyright (c) OpenMMLab. All rights reserved.
import argparse
import os
import os.path as osp

import decord
import mmcv
import numpy as np
import pyskl
from mmdet.apis import inference_detector, init_detector
from mmpose.apis import inference_top_down_pose_model, init_pose_model
from pyskl.smp import mrlines
from tqdm import tqdm

# import torch.distributed as dist


def extract_frame(video_path):
    vid = decord.VideoReader(video_path)
    return [x.asnumpy() for x in vid]


def detection_inference(model, frames):
    model = model.cuda()
    results = []
    for frame in frames:
        result = inference_detector(model, frame)
        results.append(result)
    return results


def pose_inference(model, frames, det_results):
    model = model.cuda()
    assert len(frames) == len(det_results)
    total_frames = len(frames)
    num_person = max([len(x) for x in det_results])
    kp = np.zeros((num_person, total_frames, 17, 3), dtype=np.float32)

    for i, (f, d) in enumerate(zip(frames, det_results)):
        # Align input format
        d = [dict(bbox=x) for x in list(d)]
        pose = inference_top_down_pose_model(model, f, d, format='xyxy')[0]
        for j, item in enumerate(pose):
            kp[j, i] = item['keypoints']
    return kp


pyskl_root = osp.dirname(pyskl.__path__[0])
default_det_config = f'{pyskl_root}/demo/faster_rcnn_r50_fpn_1x_coco-person.py'
default_det_ckpt = (
    'https://download.openmmlab.com/mmdetection/v2.0/faster_rcnn/faster_rcnn_r50_fpn_1x_coco-person/'
    'faster_rcnn_r50_fpn_1x_coco-person_20201216_175929-d022e227.pth')
default_pose_config = f'{pyskl_root}/demo/hrnet_w32_coco_256x192.py'
default_pose_ckpt = (
    'https://download.openmmlab.com/mmpose/top_down/hrnet/'
    'hrnet_w32_coco_256x192-c78dce93_20200708.pth')


def parse_args():
    parser = argparse.ArgumentParser(
        description='Generate 2D pose annotations for a custom video dataset')
    # * Both mmdet and mmpose should be installed from source
    parser.add_argument(
        '--det-config',
        default=default_det_config,
        help='human detection config file path (from mmdet)')
    parser.add_argument(
        '--det-ckpt',
        default=default_det_ckpt,
        help='human detection checkpoint file/url')
    parser.add_argument('--pose-config', type=str, default=default_pose_config)
    parser.add_argument('--pose-ckpt', type=str, default=default_pose_ckpt)
    # * Only det boxes with score larger than det_score_thr will be kept
    parser.add_argument('--det-score-thr', type=float, default=0.7)
    # * Only det boxes with large enough sizes will be kept
    parser.add_argument('--det-area-thr', type=float, default=1300)
    # * Accepted formats for each line in video_list are:
    # * 1. "xxx.mp4" ('label' is missing, the dataset can be used for inference, but not training)
    # * 2. "xxx.mp4 label" ('label' is an integer (category index),
    # *    the result can be used for both training & testing)
    # * All lines should take the same format.
    parser.add_argument('--video-list', type=str, help='the list of source videos')
    # * out should end with '.pkl'
    parser.add_argument('--out', type=str, help='output pickle name')
    parser.add_argument('--tmpdir', type=str, default='tmp')
    parser.add_argument('--local_rank', type=int, default=1)
    args = parser.parse_args()
    return args


def main():
    args = parse_args()
    assert args.out.endswith('.pkl')

    lines = mrlines(args.video_list)
    lines = [x.split() for x in lines]

    assert len(lines[0]) in [1, 2]
    if len(lines[0]) == 1:
        annos = [dict(frame_dir=osp.basename(x[0]).split('.')[0], filename=x[0]) for x in lines]
    else:
        annos = [dict(frame_dir=osp.basename(x[0]).split('.')[0], filename=x[0], label=int(x[1])) for x in lines]

    # Added: run as a single process instead of initialising torch.distributed
    rank = 0
    world_size = 1
    # init_dist('pytorch', backend='nccl')
    # rank, world_size = get_dist_info()
    #
    # if rank == 0:
    #     os.makedirs(args.tmpdir, exist_ok=True)
    # dist.barrier()
    # Make sure the temporary directory exists before the partial result is dumped
    os.makedirs(args.tmpdir, exist_ok=True)
    my_part = annos
    # my_part = annos[rank::world_size]

    print('loading det_model')
    det_model = init_detector(args.det_config, args.det_ckpt, 'cuda')
    assert det_model.CLASSES[0] == 'person', 'A detector trained on COCO is required'
    print('loading pose_model')
    pose_model = init_pose_model(args.pose_config, args.pose_ckpt, 'cuda')

    for anno in tqdm(my_part):
        frames = extract_frame(anno['filename'])
        print('processing', anno['filename'])
        det_results = detection_inference(det_model, frames)
        # * Get detection results for human
        det_results = [x[0] for x in det_results]
        for i, res in enumerate(det_results):
            # * filter boxes with small scores
            res = res[res[:, 4] >= args.det_score_thr]
            # * filter boxes with small areas
            box_areas = (res[:, 3] - res[:, 1]) * (res[:, 2] - res[:, 0])
            assert np.all(box_areas >= 0)
            res = res[box_areas >= args.det_area_thr]
            det_results[i] = res

        pose_results = pose_inference(pose_model, frames, det_results)
        shape = frames[0].shape[:2]
        anno['img_shape'] = anno['original_shape'] = shape
        anno['total_frames'] = len(frames)
        anno['num_person_raw'] = pose_results.shape[0]
        anno['keypoint'] = pose_results[..., :2].astype(np.float16)
        anno['keypoint_score'] = pose_results[..., 2].astype(np.float16)
        anno.pop('filename')

    mmcv.dump(my_part, osp.join(args.tmpdir, f'part_{rank}.pkl'))
    # dist.barrier()

    if rank == 0:
        parts = [mmcv.load(osp.join(args.tmpdir, f'part_{i}.pkl')) for i in range(world_size)]
        rem = len(annos) % world_size
        if rem:
            for i in range(rem, world_size):
                parts[i].append(None)

        ordered_results = []
        for res in zip(*parts):
            ordered_results.extend(list(res))
        ordered_results = ordered_results[:len(annos)]
        mmcv.dump(ordered_results, args.out)


if __name__ == '__main__':
    main()

Running the command below extracts the skeleton data for every video in the dataset and merges it all into train.pkl:

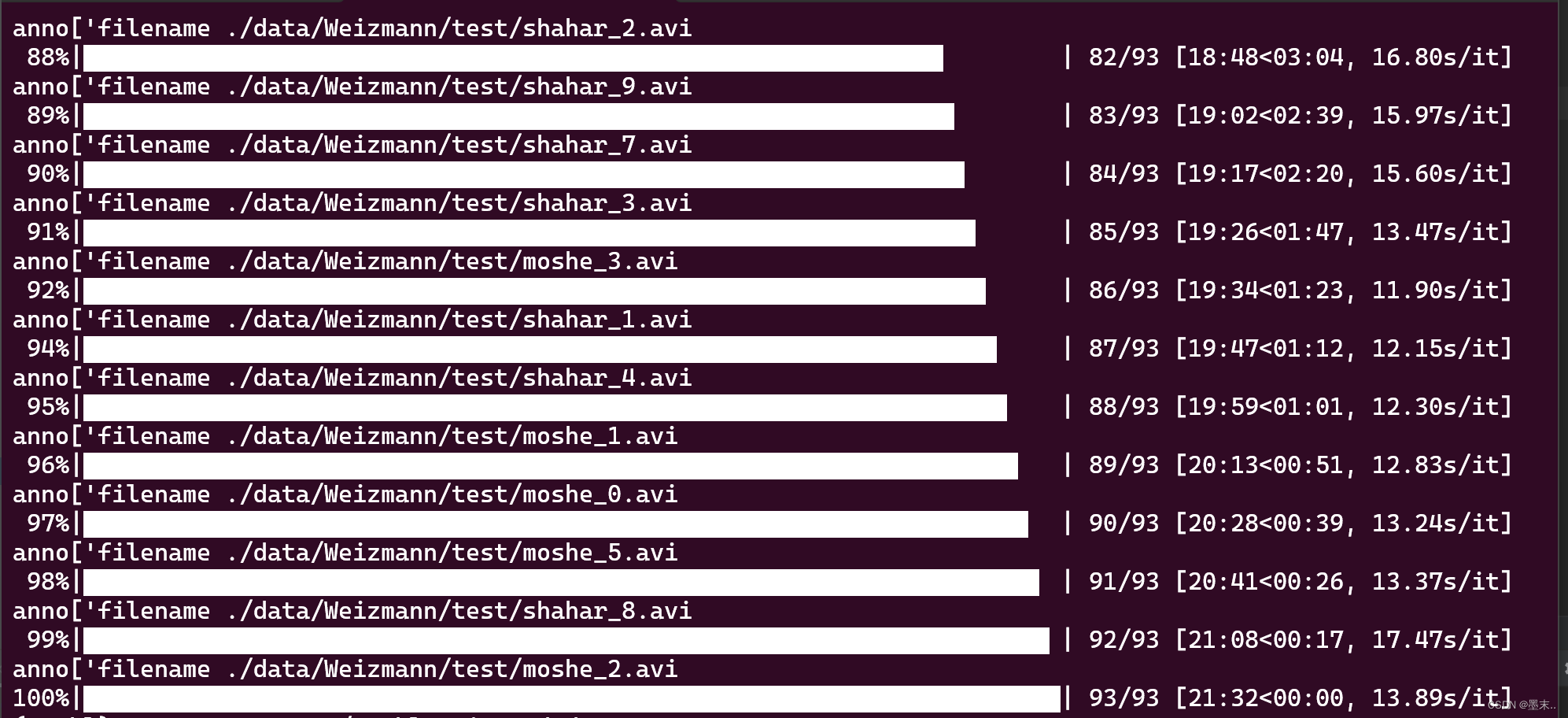

python tools/data/custom_2d_skeleton.py --video-list ./data/Weizmann/Weizmann.list --out ./data/Weizmann/train.pkl

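The generation process looks like this:

6. Training the model

Using the train.pkl, train.json and test.json files generated above, build the final pkl file used for training.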
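Once the command has finished, a quick look inside train.pkl can confirm that the extraction worked. The sketch below is not part of the original steps; `summarize_annos` is a hypothetical helper that assumes the fields written by the script above: `keypoint` with shape (num_person, total_frames, 17, 2) and `keypoint_score` with shape (num_person, total_frames, 17).

```python
import numpy as np


def summarize_annos(annos):
    """Return (frame_dir, label, keypoint shape) for each annotation dict."""
    rows = []
    for anno in annos:
        kp = anno['keypoint']
        # The frame axis must agree with the recorded frame count.
        assert kp.shape[1] == anno['total_frames'], anno['frame_dir']
        # Scores carry one value per keypoint, so they drop the last axis.
        assert kp.shape[:3] == anno['keypoint_score'].shape, anno['frame_dir']
        rows.append((anno['frame_dir'], anno.get('label'), kp.shape))
    return rows
```

A possible use is `annos = pickle.load(open('./data/Weizmann/train.pkl', 'rb'))` followed by printing the rows. A video whose first dimension is 0 had no person detected in any frame and is worth checking by hand.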

import os

from mmcv import load, dump


def traintest(dirpath, pklname, newpklname):
    # All file names below are relative to the dataset directory
    os.chdir(dirpath)
    train = load('train.json')
    test = load('test.json')
    annotations = load(pklname)
    split = dict()
    split['xsub_train'] = [x['vid_name'] for x in train]  # sample names in the training set
    split['xsub_val'] = [x['vid_name'] for x in test]  # sample names in the test set
    dump(dict(split=split, annotations=annotations), newpklname)  # pkl file finally used for training


dirpath = './data/Weizmann'
pklname = 'train.pkl'
newpklname = 'wei_xsub_stgn++_ch.pkl'
traintest(dirpath, pklname, newpklname)
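Every name listed in a split has to match the `frame_dir` of some annotation; otherwise that sample cannot be found when the dataset is loaded. As a minimal sketch (`missing_from_annotations` is a hypothetical helper, not part of pyskl), the merged dictionary can be checked like this:

```python
def missing_from_annotations(data):
    """Map each split name to the sample names that have no annotation."""
    known = {anno['frame_dir'] for anno in data['annotations']}
    return {
        split: [name for name in names if name not in known]
        for split, names in data['split'].items()
    }
```

Loading the final pkl and passing it to this function should give an empty list for both `xsub_train` and `xsub_val`.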

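7. Choosing the model to train

I chose stgcn++ and used the config file configs/stgcn++/stgcn++_ntu120_xsub_hrnet/j.py.

A few places in the config file must be changed before training will run.

These changes only get the model training; the batch size, number of epochs, learning rate and other parameters can still be tuned further.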

# num_classes=10: change this to the number of classes in your own dataset
model = dict(
    type='RecognizerGCN',
    backbone=dict(
        type='STGCN',
        gcn_adaptive='init',
        gcn_with_res=True,
        tcn_type='mstcn',
        graph_cfg=dict(layout='coco', mode='spatial')),
    cls_head=dict(type='GCNHead', num_classes=10, in_channels=256))

dataset_type = 'PoseDataset'
# ann_file: change this to the path of the final pkl file generated for training
ann_file = './data/Weizmann/wei_xsub_stgn++_ch.pkl'
train_pipeline = [
    dict(type='PreNormalize2D'),
    dict(type='GenSkeFeat', dataset='coco', feats=['j']),
    dict(type='UniformSample', clip_len=100),
    dict(type='PoseDecode'),
    dict(type='FormatGCNInput', num_person=1),
    dict(type='Collect', keys=['keypoint', 'label'], meta_keys=[]),
    dict(type='ToTensor', keys=['keypoint'])
]
val_pipeline = [
    dict(type='PreNormalize2D'),
    dict(type='GenSkeFeat', dataset='coco', feats=['j']),
    dict(type='UniformSample', clip_len=100, num_clips=1, test_mode=True),
    dict(type='PoseDecode'),
    dict(type='FormatGCNInput', num_person=1),
    dict(type='Collect', keys=['keypoint', 'label'], meta_keys=[]),
    dict(type='ToTensor', keys=['keypoint'])
]
test_pipeline = [
    dict(type='PreNormalize2D'),
    dict(type='GenSkeFeat', dataset='coco', feats=['j']),
    dict(type='UniformSample', clip_len=100, num_clips=10, test_mode=True),
    dict(type='PoseDecode'),
    dict(type='FormatGCNInput', num_person=1),
    dict(type='Collect', keys=['keypoint', 'label'], meta_keys=[]),
    dict(type='ToTensor', keys=['keypoint'])
]
# The split names 'xsub_train' and 'xsub_val' can be changed to whatever keys you used
# when writing the pkl, as long as they match the keys stored in wei_xsub_stgn++_ch.pkl
data = dict(
    videos_per_gpu=16,  # batch size
    workers_per_gpu=2,
    test_dataloader=dict(videos_per_gpu=1),
    train=dict(
        type='RepeatDataset',
        times=5,
        dataset=dict(type=dataset_type, ann_file=ann_file, pipeline=train_pipeline, split='xsub_train')),
    val=dict(type=dataset_type, ann_file=ann_file, pipeline=val_pipeline, split='xsub_val'),
    test=dict(type=dataset_type, ann_file=ann_file, pipeline=test_pipeline, split='xsub_val'))

# optimizer
optimizer = dict(type='SGD', lr=0.1, momentum=0.9, weight_decay=0.0005, nesterov=True)
optimizer_config = dict(grad_clip=None)
# learning policy
lr_config = dict(policy='CosineAnnealing', min_lr=0, by_epoch=False)
# total_epochs sets the number of training epochs and can be changed
total_epochs = 20
checkpoint_config = dict(interval=1)
evaluation = dict(interval=1, metrics=['top_k_accuracy'])
log_config = dict(interval=100, hooks=[dict(type='TextLoggerHook')])

# runtime settings
log_level = 'INFO'
# work_dir is where the training outputs are saved; change it as you like
work_dir = './work_dirs/stgcn++/stgcn++_ntu120_xsub_hrnet/j_Wei5'
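With a small custom dataset it is useful to know how many iterations one epoch contains under these settings. The arithmetic below is a rough sketch, assuming the last partial batch is kept and every GPU uses the same `videos_per_gpu`; the count of 20 training videos in the usage note is only an illustrative number.

```python
import math


def iters_per_epoch(num_train_videos, times=5, videos_per_gpu=16, num_gpus=1):
    """Estimate training iterations per epoch for a RepeatDataset config."""
    # RepeatDataset makes each video appear `times` times per epoch.
    samples = num_train_videos * times
    return math.ceil(samples / (videos_per_gpu * num_gpus))
```

For instance, `iters_per_epoch(20)` evaluates to 7. If the result is far below the `interval=100` in `log_config`, lowering that interval may be needed to see training losses in the log.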

Run the training command:

bash tools/dist_train.sh configs/stgcn++/stgcn++_ntu120_xsub_hrnet/j.py 1 --validate --test-last --test-best

For other training and testing operations, see the project's README.md.

Finally

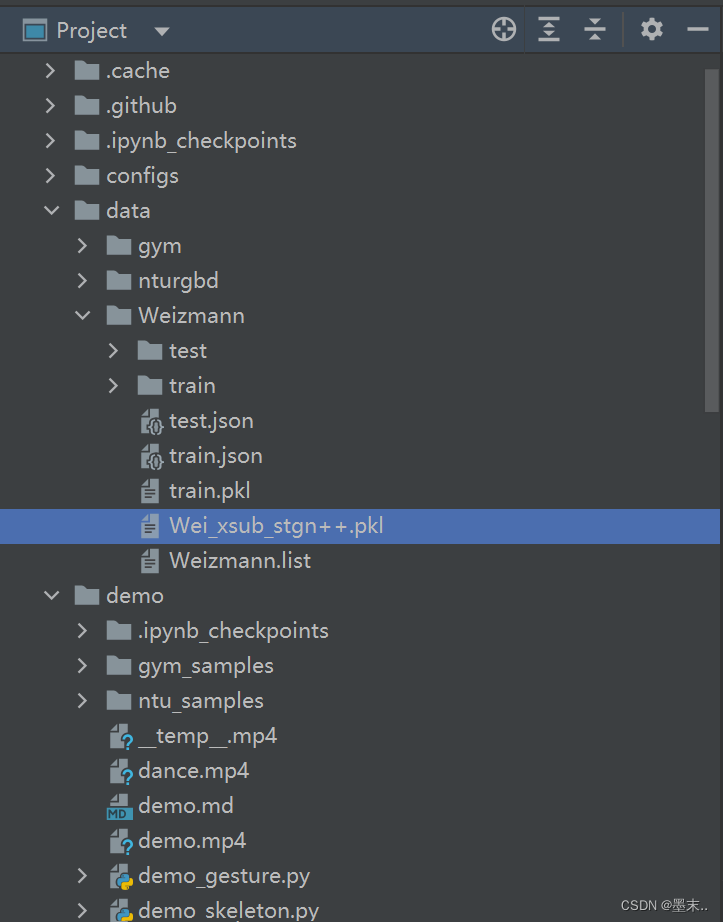

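All the files produced in the end are shown below (trained weights not included):

I put the functions used above into a single util.py file, so they can be called directly.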

import json
import os

import decord
from mmcv import load, dump
from pyskl.smp import mwlines


def writeJson(path_train, jsonpath):
    output_list = []
    trainfile_list = os.listdir(path_train)
    for train_name in trainfile_list:
        traindit = {}
        # File names look like '<name>_<label>.avi'
        sp = train_name.split('_')
        traindit['vid_name'] = train_name.replace('.avi', '')
        traindit['label'] = int(sp[1].replace('.avi', ''))
        traindit['start_frame'] = 0
        video_path = os.path.join(path_train, train_name)
        vid = decord.VideoReader(video_path)
        traindit['end_frame'] = len(vid)
        output_list.append(traindit.copy())
    with open(jsonpath, 'w') as outfile:
        json.dump(output_list, outfile)


def writeList(dirpath, name):
    path_train = os.path.join(dirpath, 'train')
    path_test = os.path.join(dirpath, 'test')
    trainfile_list = os.listdir(path_train)
    testfile_list = os.listdir(path_test)

    train = []
    for train_name in trainfile_list:
        traindit = {}
        sp = train_name.split('_')
        traindit['vid_name'] = train_name
        traindit['label'] = sp[1].replace('.avi', '')
        train.append(traindit)

    test = []
    for test_name in testfile_list:
        testdit = {}
        sp = test_name.split('_')
        testdit['vid_name'] = test_name
        testdit['label'] = sp[1].replace('.avi', '')
        test.append(testdit)

    # Each line is '<video path> <label>'
    tmpl1 = os.path.join(path_train, '{}')
    lines1 = [(tmpl1 + ' {}').format(x['vid_name'], x['label']) for x in train]
    tmpl2 = os.path.join(path_test, '{}')
    lines2 = [(tmpl2 + ' {}').format(x['vid_name'], x['label']) for x in test]
    lines = lines1 + lines2
    mwlines(lines, os.path.join(dirpath, name))


def traintest(dirpath, pklname, newpklname):
    # All file names below are relative to the dataset directory
    os.chdir(dirpath)
    train = load('train.json')
    test = load('test.json')
    annotations = load(pklname)
    split = dict()
    split['xsub_train'] = [x['vid_name'] for x in train]
    split['xsub_val'] = [x['vid_name'] for x in test]
    dump(dict(split=split, annotations=annotations), newpklname)


if __name__ == '__main__':
    dirpath = './data/Weizmann'
    pklname = 'train.pkl'
    # Must match the file name used for ann_file in the training config
    newpklname = 'wei_xsub_stgn++_ch.pkl'
    # writeJson('./data/Weizmann/test', 'test.json')
    traintest(dirpath, pklname, newpklname)
    # writeList('./data/Weizmann', 'Weizmann.list')

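Generating a demo with your own trained model

You need to create a file with your dataset's label names under the ./tools/data/label_map folder, with the names ordered by label index from smallest to largest. Only then will the labels drawn on the output video be correct.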
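A minimal sketch of writing such a file is shown below. It assumes the demo script maps line i of the file to label i, which is what the ordering requirement above implies. `write_label_map` is a hypothetical helper, and the class names in the usage note are placeholders rather than the real Weizmann names.

```python
def write_label_map(names_by_index, path):
    """Write one class name per line, ordered by integer label."""
    indices = sorted(names_by_index)
    # Labels must be 0, 1, 2, ... with no gaps so that line i means label i.
    if indices != list(range(len(indices))):
        raise ValueError('labels must be contiguous and start at 0')
    with open(path, 'w', encoding='utf-8') as f:
        for idx in indices:
            f.write(names_by_index[idx] + '\n')
```

For example, `write_label_map({0: 'class_0', 1: 'class_1'}, './tools/data/label_map/Weizmann.txt')` produces a two-line file; replace the placeholder names with the action names that correspond to your own label indices.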

python demo/demo_skeleton.py video/shahar_1.avi video/shahar_1_demo.mp4 \
    --config ./configs/stgcn++/stgcn++_ntu120_xsub_hrnet/j.py \
    --checkpoint ./work_dirs/stgcn++/stgcn++_ntu120_xsub_hrnet/j_Wei5/best_top1_acc_epoch_11.pth \
    --label-map ./tools/data/label_map/Weizmann.txt

The output is as follows:

shahar_1_demo
