
1 urllib experiments

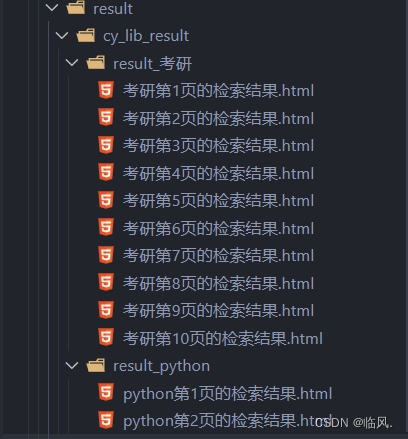

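2.1 Write a function cy_lib(keyword, n) that crawls the holdings catalog of the xx College library

Requirement: given a search keyword `keyword`, download the first n pages of search results.

Download the first 2 pages of results for 'python' and the first 10 pages for '考研' (postgraduate entrance exam).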

2.1.1 Code

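```python
import os
import urllib.parse
import urllib.request


def cy_lib(keyword: str, n: int):
    """Crawl the holdings catalog of the Chengyi College (诚毅学院) library.

    Given a search keyword, download the first n pages of search results.

    Args:
        keyword (str): search keyword
        n (int): number of result pages to download
    """
    # The URL ends with "?", so the query string is appended by plain string
    # concatenation (instead of passing params, as one would with requests).
    url = "http://210.34.144.251:8080/opac/openlink.php?"
    headers = {
        "user-agent": "Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/117.0.0.0 Safari/537.36 Edg/117.0.2045.31",
        # Session cookie copied from the browser; it is session-specific,
        # so refresh it from your own browser if requests start failing.
        "cookie": "PHPSESSID=c8bv3i9vptbjbushu3dmilda73",
    }

    result_dir_path = f"./result/cy_lib_result/result_{keyword}"
    # exist_ok=True replaces the broken try/except: no error if the folder exists
    os.makedirs(result_dir_path, exist_ok=True)

    for page in range(1, n + 1):
        params = {"title": keyword, "page": page}
        # urlencode percent-encodes non-ASCII keywords such as "考研"
        request_url = url + urllib.parse.urlencode(params)
        req = urllib.request.Request(request_url, headers=headers, method="GET")

        file_name = f"{keyword}第{page}页的检索结果.html"
        file_path = os.path.join(result_dir_path, file_name)
        # The with-block closes the connection. read() consumes the stream,
        # so it returns the body only the first time it is called.
        with urllib.request.urlopen(req) as response, open(file_path, "wb") as f:
            f.write(response.read())


if __name__ == "__main__":
    cy_lib("python", 2)
    cy_lib("考研", 10)
```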

2.1.2 Results

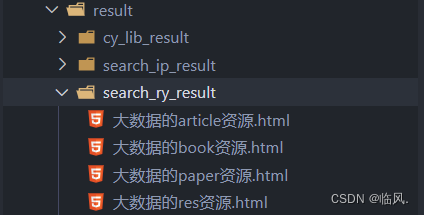

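2.2 Given a keyword (e.g. 大数据, "big data"), search the 人邮教育 (ryjiaoyu.com) website for books, resources, and articles, and save the results by category

2.2.1 Code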

```python
import os
import urllib.parse
import urllib.request


def search_ry(keyword: str):
    """Given a keyword (e.g. 大数据, "big data"), search ryjiaoyu.com for books,
    resources, and articles, and save each category to its own file.

    Args:
        keyword (str): search keyword
    """
    url = "https://www.ryjiaoyu.com/search"
    headers = {
        "user-agent": "Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/117.0.0.0 Safari/537.36 Edg/117.0.2045.31",
        "cookie": "AnonymousUserId=6f7ca777-1f35-4488-9882-c06af6183e73; neplayer_device_id=16861429833056259629505; BookMode=List; acw_tc=2760825a16959062506874460e215ed90e93ab11f67a319a522448796f4814",
    }
    # One search request per result category
    type_list = ["book", "article", "res", "paper"]

    result_path = "./result/search_ry_result"
    os.makedirs(result_path, exist_ok=True)

    for type_name in type_list:
        params = {"q": keyword, "type": type_name}
        # For POST, the form fields go in the request body as bytes, not in the URL
        data = bytes(urllib.parse.urlencode(params), encoding="utf-8")
        req = urllib.request.Request(url, data=data, headers=headers, method="POST")

        file_name = f"{keyword}的{type_name}资源.html"
        file_path = os.path.join(result_path, file_name)
        with urllib.request.urlopen(req) as response, open(file_path, "wb") as f:
            # Read only once: after a debugging print(response.read()...),
            # this read() would return b"" and write an empty file
            f.write(response.read())


if __name__ == "__main__":
    search_ry("大数据")
```
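Printing a page with `response.read().decode("utf-8")` assumes the site serves UTF-8. A minimal sketch, assuming you want text rather than raw bytes: read the body once, then decode it with the charset the server declares in its `Content-Type` header (`response.headers` is an `email.message.Message` subclass, so `get_content_charset()` is available), falling back to UTF-8. The helper name `decode_body` is hypothetical.

```python
from email.message import Message


def decode_body(body: bytes, headers: Message, fallback: str = "utf-8") -> str:
    """Hypothetical helper: decode an HTTP body using the declared charset.

    Falls back to `fallback` when no charset is declared, and decodes
    leniently when the declared charset is unknown or does not match.
    """
    charset = headers.get_content_charset() or fallback
    try:
        return body.decode(charset)
    except (LookupError, UnicodeDecodeError):
        # Unknown codec name, or bytes that do not fit the declared charset
        return body.decode(fallback, errors="replace")


# Usage with a live response (needs network access):
# with urllib.request.urlopen(req) as response:
#     body = response.read()                      # read exactly once
#     print(decode_body(body, response.headers))
```

Saving raw bytes with `"wb"`, as the functions above do, sidesteps the question entirely; decoding only matters when you print or parse the page.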

2.2.2 Results

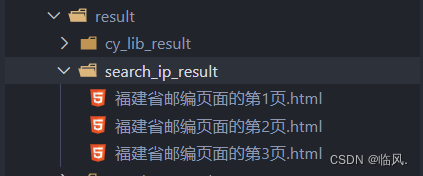

2.3 Crawl pages n through m of the postal-code listings for Fujian Province

•http://alexa.ip138.com/post/search.asp

2.3.1 Code

```python
import os
import time
import urllib.parse
import urllib.request


def search_ip(n: int, m: int):
    """Crawl pages n through m (inclusive) of the Fujian Province postal-code listing.

    http://alexa.ip138.com/post/search.asp

    Args:
        n (int): first page
        m (int): last page (inclusive)
    """
    url = "http://alexa.ip138.com/post/search.asp?"
    headers = {
        "user-agent": "Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/117.0.0.0 Safari/537.36 Edg/117.0.2045.31",
    }

    result_path = "./result/search_ip_result"
    os.makedirs(result_path, exist_ok=True)

    for num in range(n, m + 1):  # m + 1 so that page m is included
        params = {
            "page": num,
            "regionid": 3,  # presumably the site's region ID for Fujian
            "cityid": "",
            "countyid": "",
            "address": "",
            "zip": "",
            "t": "56621",
        }
        request_url = url + urllib.parse.urlencode(params)
        req = urllib.request.Request(request_url, headers=headers, method="GET")

        file_name = f"福建省邮编页面的第{num}页.html"
        file_path = os.path.join(result_path, file_name)
        with urllib.request.urlopen(req) as response, open(file_path, "wb") as f:
            f.write(response.read())

        time.sleep(1)  # pause 1 s between requests to avoid hammering the server


if __name__ == "__main__":
    search_ip(1, 3)
```
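The crawl above pauses 1 s between pages but aborts on the first network error. A minimal sketch, assuming you want a few retries before giving up: the hypothetical helper `fetch_with_retry` wraps `urlopen`, catches `urllib.error.URLError` (which also covers `HTTPError`, its subclass), waits, and tries again. The `opener` parameter is an assumption added only so the helper can be tested without network access.

```python
import time
import urllib.error
import urllib.request


def fetch_with_retry(url, headers=None, retries=3, delay=1.0,
                     opener=urllib.request.urlopen):
    """Hypothetical helper: GET `url`, retrying up to `retries` times.

    Returns the response body as bytes; re-raises the last error if every
    attempt fails.
    """
    if retries < 1:
        raise ValueError("retries must be at least 1")
    req = urllib.request.Request(url, headers=headers or {}, method="GET")
    last_error = None
    for attempt in range(1, retries + 1):
        try:
            # timeout stops a stalled server from hanging the crawl forever
            with opener(req, timeout=10) as response:
                return response.read()
        except urllib.error.URLError as exc:  # HTTPError is a subclass
            last_error = exc
            if attempt < retries:
                time.sleep(delay)  # wait before the next attempt
    raise last_error
```

Inside the loop of `search_ip`, `body = fetch_with_retry(request_url, headers=headers)` followed by writing `body` to the file would replace the bare `urlopen` call. Retrying a 404 is pointless, so a stricter version might re-raise `HTTPError` immediately when `exc.code < 500`.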

2.3.2 Results

3. Summary

Websites fall into two groups by request method, "GET" and "POST". For a GET page, we build the request URL by URL-encoding the parameters and appending them to the base URL:

```python
params_encode = urllib.parse.urlencode(params)
request_url = url + params_encode
req = urllib.request.Request(request_url, headers=headers, method="GET")
response = urllib.request.urlopen(req)
```
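Concatenating `url + params_encode` works only because the base URL already ends in `?`. A minimal sketch with a hypothetical helper name, `build_get_url`, that picks the separator itself and shows what `urlencode` produces for a Chinese keyword:

```python
import urllib.parse


def build_get_url(base: str, params: dict) -> str:
    """Hypothetical helper: append URL-encoded params to `base` as a query string.

    Uses "?" if `base` has no query yet, "&" if it already has one, and no
    separator if `base` already ends with "?" or "&".
    """
    query = urllib.parse.urlencode(params)  # non-ASCII is UTF-8 percent-encoded
    if base.endswith(("?", "&")):
        return base + query
    separator = "&" if "?" in base else "?"
    return base + separator + query


url = build_get_url("http://210.34.144.251:8080/opac/openlink.php",
                    {"title": "考研", "page": 1})
print(url)
# http://210.34.144.251:8080/opac/openlink.php?title=%E8%80%83%E7%A0%94&page=1
```

`urllib.parse.parse_qs(urllib.parse.urlsplit(url).query)` decodes the query back to `{'title': ['考研'], 'page': ['1']}`, which is a quick way to check a built URL.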

For a POST page, we instead pass the encoded parameters as the request body through the `data` argument:

```python
data = bytes(urllib.parse.urlencode(params), encoding="utf-8")
req = urllib.request.Request(url, data=data, headers=headers, method="POST")
response = urllib.request.urlopen(req)
```
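To see what each version actually sends without touching the network, you can inspect the `Request` object before opening it. A minimal sketch, reusing the ryjiaoyu.com search parameters only as sample data: when `data` is given and no method is passed, `Request.get_method()` reports POST and the form fields live in `req.data`; for GET they are part of `full_url`. When a request with a body is opened, urllib also adds a `Content-Type: application/x-www-form-urlencoded` header if none was set.

```python
import urllib.parse
import urllib.request

params = {"q": "大数据", "type": "book"}
data = bytes(urllib.parse.urlencode(params), encoding="utf-8")

# POST: fields travel in the body; no method given, so data implies POST
post_req = urllib.request.Request("https://www.ryjiaoyu.com/search", data=data)
# GET: the same fields appended to the URL as a query string
get_req = urllib.request.Request(
    "https://www.ryjiaoyu.com/search?" + urllib.parse.urlencode(params))

print(post_req.get_method(), post_req.full_url)
print(post_req.data)
print(get_req.get_method(), get_req.full_url)
```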

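When debugging by hand, watch the position of the stream pointer when calling read(): if the response has already been read once, a second read() returns empty content (b""), because the first read() moved the pointer to the end of the stream.

So I recommend inspecting responses with a debugger rather than by adding extra read() calls.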
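The empty second read() is easy to reproduce offline. A minimal sketch, using `io.BytesIO` as a stand-in for the object returned by `urlopen` (an assumption: both are file-like streams, and an `http.client.HTTPResponse` likewise returns `b""` once its body has been consumed):

```python
import io

# Stand-in for the object returned by urllib.request.urlopen()
response = io.BytesIO("<html>大数据</html>".encode("utf-8"))

first = response.read()   # consumes the whole stream
second = response.read()  # pointer is already at the end

print(len(first) > 0, second)  # True b''

# Safe pattern: read once, keep the bytes, and reuse the variable
body = first
text = body.decode("utf-8")  # e.g. for printing while debugging
# ...then write `body` (not a second response.read()) to the file
```

A `BytesIO` could be rewound with `seek(0)`, but a network response cannot, which is why keeping the bytes from the first read() is the reliable habit.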
