Prerequisites: HDFS, ZooKeeper, and Spark are installed and working.

Configuration:

vim spark/conf/spark-env.sh

Comment out or delete the SPARK_MASTER_HOST setting (for example, change it to: # SPARK_MASTER_HOST=node1), then add the following setting:

SPARK_DAEMON_JAVA_OPTS="-Dspark.deploy.recoveryMode=ZOOKEEPER -Dspark.deploy.zookeeper.url=node1:2181,node2:2181,node3:2181 -Dspark.deploy.zookeeper.dir=/spark-ha"
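Since spark-env.sh is an ordinary shell script, the ZooKeeper connection string can also be assembled from a host list rather than typed by hand. The following is only a sketch: ZK_HOSTS, ZK_PORT, and ZK_URL are hypothetical helper variables introduced here for illustration, and the only variable Spark itself reads is SPARK_DAEMON_JAVA_OPTS.

```shell
# Hypothetical helper variables (not read by Spark); adjust to your cluster.
ZK_HOSTS="node1 node2 node3"
ZK_PORT=2181

# Join the hosts into host:port,host:port,... form.
ZK_URL=""
for h in $ZK_HOSTS; do
  ZK_URL="${ZK_URL:+$ZK_URL,}$h:$ZK_PORT"
done

# Same three properties as in the setting above, with the URL filled in.
SPARK_DAEMON_JAVA_OPTS="-Dspark.deploy.recoveryMode=ZOOKEEPER -Dspark.deploy.zookeeper.url=$ZK_URL -Dspark.deploy.zookeeper.dir=/spark-ha"
```

With the three hosts listed, ZK_URL expands to node1:2181,node2:2181,node3:2181, which matches the hand-written value.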

Startup:

First, start HDFS (on node1): start-dfs.sh

Next, start ZooKeeper (on every node): zookeeper-3.4.6/bin/zkServer.sh start

Then start Spark (on node1): spark/sbin/start-all.sh

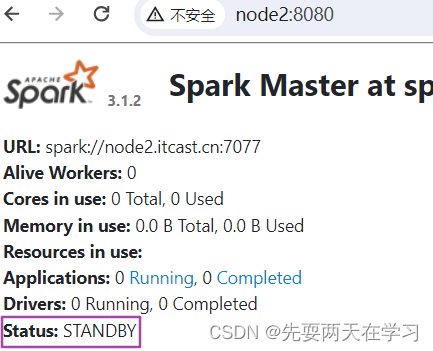

Finally, start the standby master (on node2): spark/sbin/start-master.sh
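Once both masters are running, applications can be given every master address in a single comma-separated URL so that they register with whichever master is currently active. The sketch below builds such a URL. It assumes both masters listen on Spark's default standalone port 7077, which the steps above do not state, and MASTER_HOSTS, MASTER_PORT, and MASTER_URL are hypothetical names used only for illustration.

```shell
# Assumption: masters on node1 and node2, default standalone port 7077.
MASTER_HOSTS="node1 node2"
MASTER_PORT=7077

# Build spark://host1:port,host2:port
MASTER_URL="spark://"
sep=""
for h in $MASTER_HOSTS; do
  MASTER_URL="${MASTER_URL}${sep}${h}:${MASTER_PORT}"
  sep=","
done

echo "$MASTER_URL"
# Example use (not run here):
#   spark/bin/spark-shell --master "$MASTER_URL"
```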

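Result: the master on node1 should report status ALIVE and the master on node2 STANDBY (visible in each master's web UI).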
