References

https://blog.csdn.net/weixin_43508499/article/details/107714080

https://blog.csdn.net/Lucinda6/article/details/116162198

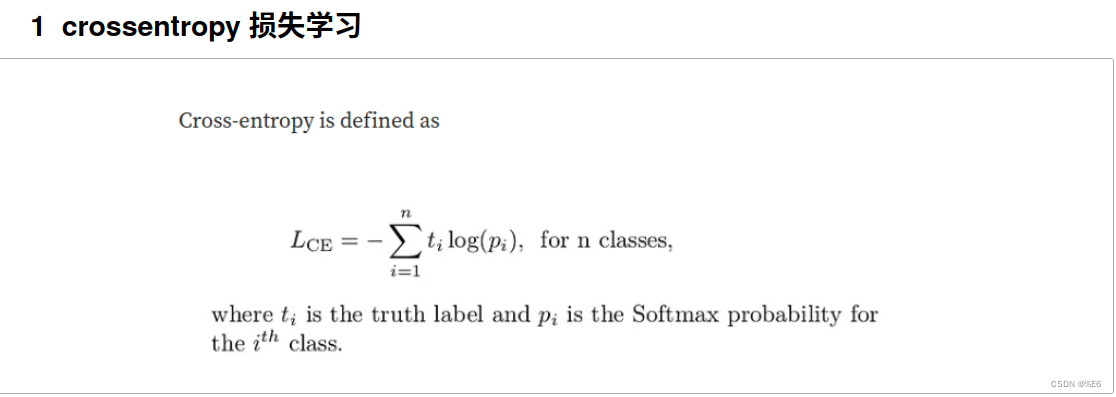

Focus on the definition, and on what the function takes as input and returns as output.

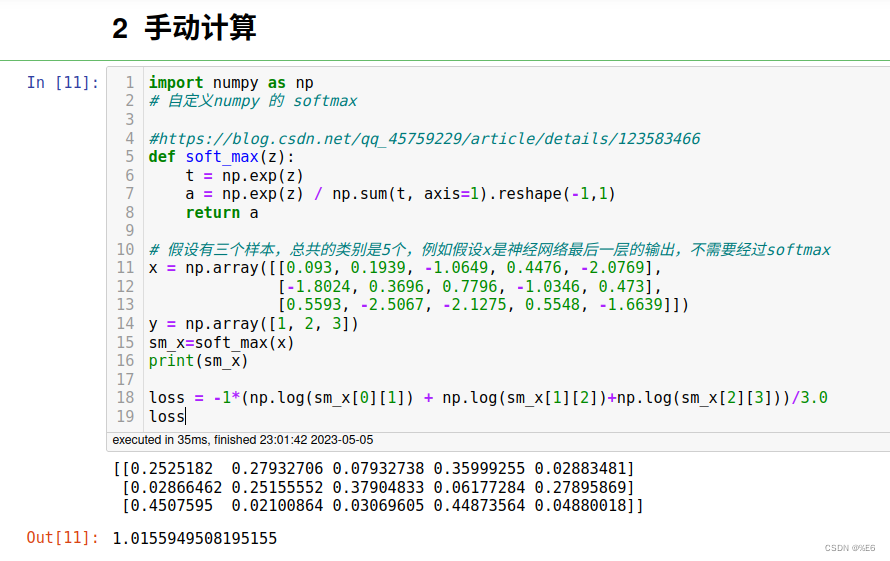
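For reference, the definition can be written out as a formula. This is a sketch using assumed symbols that do not appear in the original post: $x \in \mathbb{R}^{N \times C}$ are the raw scores (logits) for $N$ samples and $C$ classes, and $y_i \in \{0, \dots, C-1\}$ is the class index of sample $i$. With mean reduction over the batch, the loss is:

$$
\mathrm{loss}(x, y) = -\frac{1}{N} \sum_{i=1}^{N} \log \frac{\exp(x_{i, y_i})}{\sum_{j=0}^{C-1} \exp(x_{i, j})}
$$

So the input is a matrix of unnormalized scores plus one integer label per row, and the output is a single scalar.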

Manual calculation

import numpy as np

# Custom NumPy softmax, applied to each row
# https://blog.csdn.net/qq_45759229/article/details/123583466
def soft_max(z):
    t = np.exp(z)
    a = t / np.sum(t, axis=1).reshape(-1, 1)
    return a

# Suppose there are three samples and five classes. For example, x is the output
# of the last layer of a neural network, before any softmax has been applied.
x = np.array([[0.093, 0.1939, -1.0649, 0.4476, -2.0769],
              [-1.8024, 0.3696, 0.7796, -1.0346, 0.473],
              [0.5593, -2.5067, -2.1275, 0.5548, -1.6639]])
y = np.array([1, 2, 3])

sm_x = soft_max(x)
print(sm_x)

# Average the negative log-probability at each sample's label
loss = -1 * (np.log(sm_x[0][1]) + np.log(sm_x[1][2]) + np.log(sm_x[2][3])) / 3.0
print(loss)

The result is as follows:

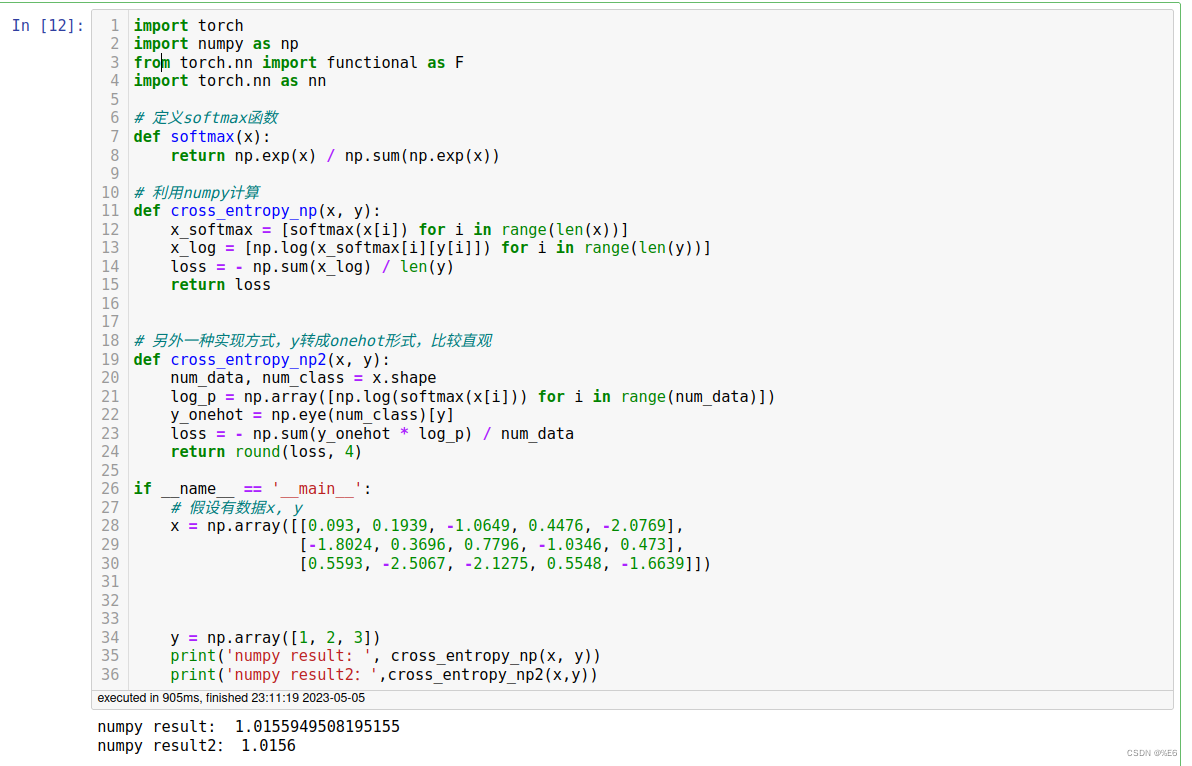
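The hand-written softmax above calls `np.exp` directly on the scores, which can overflow when the scores are large. A common workaround is to subtract each row's maximum before exponentiating, which leaves the softmax unchanged. The sketch below illustrates that idea; the name `stable_log_softmax` and the `big` test input are made up for this example and are not part of the original post.

```python
import numpy as np

def stable_log_softmax(z):
    """Row-wise log-softmax that subtracts the row maximum first (hypothetical helper)."""
    z = np.asarray(z, dtype=float)
    # Shifting by the row maximum does not change softmax, but keeps exp() bounded by 1
    shifted = z - np.max(z, axis=1, keepdims=True)
    return shifted - np.log(np.sum(np.exp(shifted), axis=1, keepdims=True))

# Scores this large would overflow in a plain np.exp(z)
big = np.array([[1000.0, 1001.0, 1002.0]])
log_p = stable_log_softmax(big)
print(np.exp(log_p))  # finite probabilities that sum to 1 in each row
```

Working in log space also avoids taking `np.log` of a probability that has underflowed to zero.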

NumPy implementation

import numpy as np

# Softmax of a single sample (1-D array)
def softmax(x):
    return np.exp(x) / np.sum(np.exp(x))

# Cross entropy computed with NumPy
def cross_entropy_np(x, y):
    x_softmax = [softmax(x[i]) for i in range(len(x))]
    # Log-probability at each sample's label
    x_log = [np.log(x_softmax[i][y[i]]) for i in range(len(y))]
    loss = - np.sum(x_log) / len(y)
    return loss

# Another implementation: convert y to one-hot form, which is more intuitive
def cross_entropy_np2(x, y):
    num_data, num_class = x.shape
    log_p = np.array([np.log(softmax(x[i])) for i in range(num_data)])
    y_onehot = np.eye(num_class)[y]
    loss = - np.sum(y_onehot * log_p) / num_data
    return round(loss, 4)

if __name__ == '__main__':
    # Suppose we have data x, y
    x = np.array([[0.093, 0.1939, -1.0649, 0.4476, -2.0769],
                  [-1.8024, 0.3696, 0.7796, -1.0346, 0.473],
                  [0.5593, -2.5067, -2.1275, 0.5548, -1.6639]])
    y = np.array([1, 2, 3])

    print('numpy result: ', cross_entropy_np(x, y))
    print('numpy result2:', cross_entropy_np2(x, y))

The result is as follows:

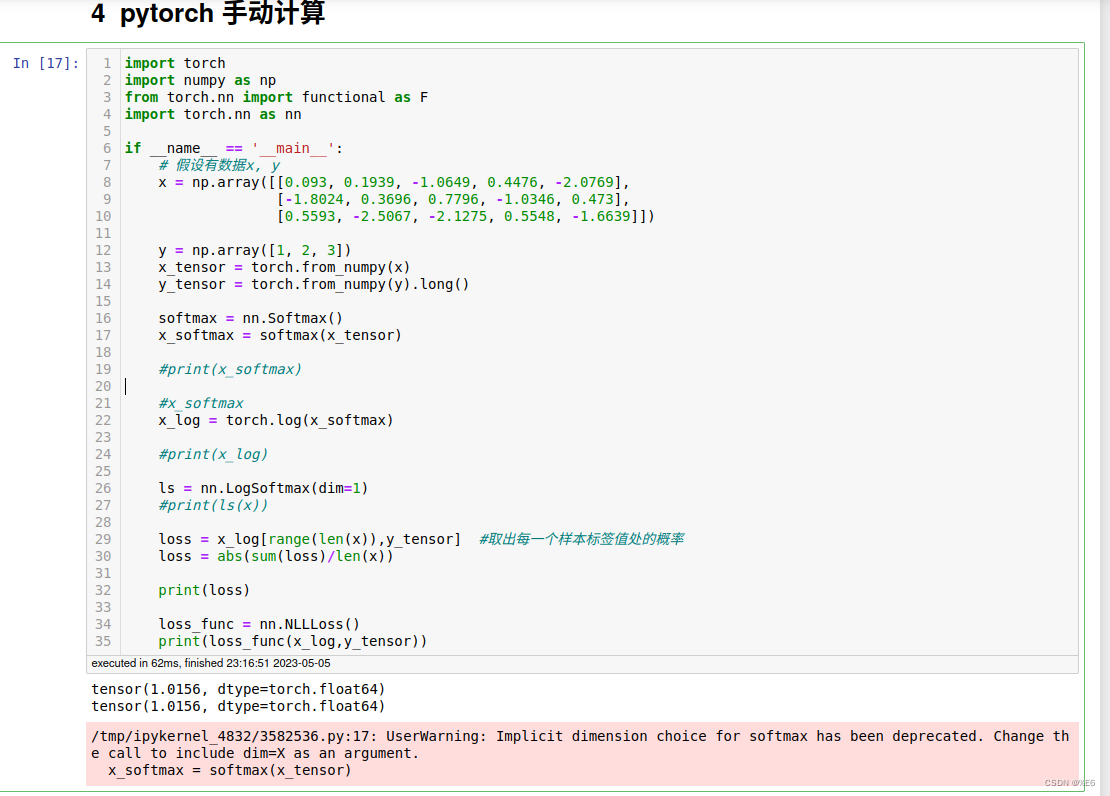
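Both NumPy versions above loop over the samples in Python. The same computation can be written without a loop by using integer-array indexing to pick out each row's label entry. This is a minimal sketch; the function name `cross_entropy_vectorized` is introduced here for illustration, and the row-maximum shift is an added numerical safeguard rather than something the original code does.

```python
import numpy as np

def cross_entropy_vectorized(x, y):
    """Mean cross entropy for logits x of shape (N, C) and integer labels y of shape (N,)."""
    # Log-softmax per row, shifted by the row maximum for numerical safety
    shifted = x - x.max(axis=1, keepdims=True)
    log_p = shifted - np.log(np.exp(shifted).sum(axis=1, keepdims=True))
    # log_p[i, y[i]] for every row i, selected in one indexing operation
    picked = log_p[np.arange(len(y)), y]
    return -picked.mean()

x = np.array([[0.093, 0.1939, -1.0649, 0.4476, -2.0769],
              [-1.8024, 0.3696, 0.7796, -1.0346, 0.473],
              [0.5593, -2.5067, -2.1275, 0.5548, -1.6639]])
y = np.array([1, 2, 3])

loss = cross_entropy_vectorized(x, y)
print('vectorized result:', loss)
```

On the sample data this should agree with `cross_entropy_np` up to floating-point rounding.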

Manual calculation in PyTorch

import torch
import numpy as np
import torch.nn as nn

if __name__ == '__main__':
    # Suppose we have data x, y
    x = np.array([[0.093, 0.1939, -1.0649, 0.4476, -2.0769],
                  [-1.8024, 0.3696, 0.7796, -1.0346, 0.473],
                  [0.5593, -2.5067, -2.1275, 0.5548, -1.6639]])
    y = np.array([1, 2, 3])

    x_tensor = torch.from_numpy(x)
    y_tensor = torch.from_numpy(y).long()

    # dim=1 normalizes over the class dimension; omitting dim triggers a deprecation warning
    softmax = nn.Softmax(dim=1)
    x_softmax = softmax(x_tensor)
    # print(x_softmax)

    x_log = torch.log(x_softmax)
    # print(x_log)

    # LogSoftmax gives the same values as log(softmax(x)) in a single step
    ls = nn.LogSoftmax(dim=1)
    # print(ls(x_tensor))

    # Take the log-probability at each sample's label, then average and negate
    loss = x_log[range(len(x)), y_tensor]
    loss = -loss.mean()
    print(loss)

    # NLLLoss expects log-probabilities and performs the same pick-and-average step
    loss_func = nn.NLLLoss()
    print(loss_func(x_log, y_tensor))

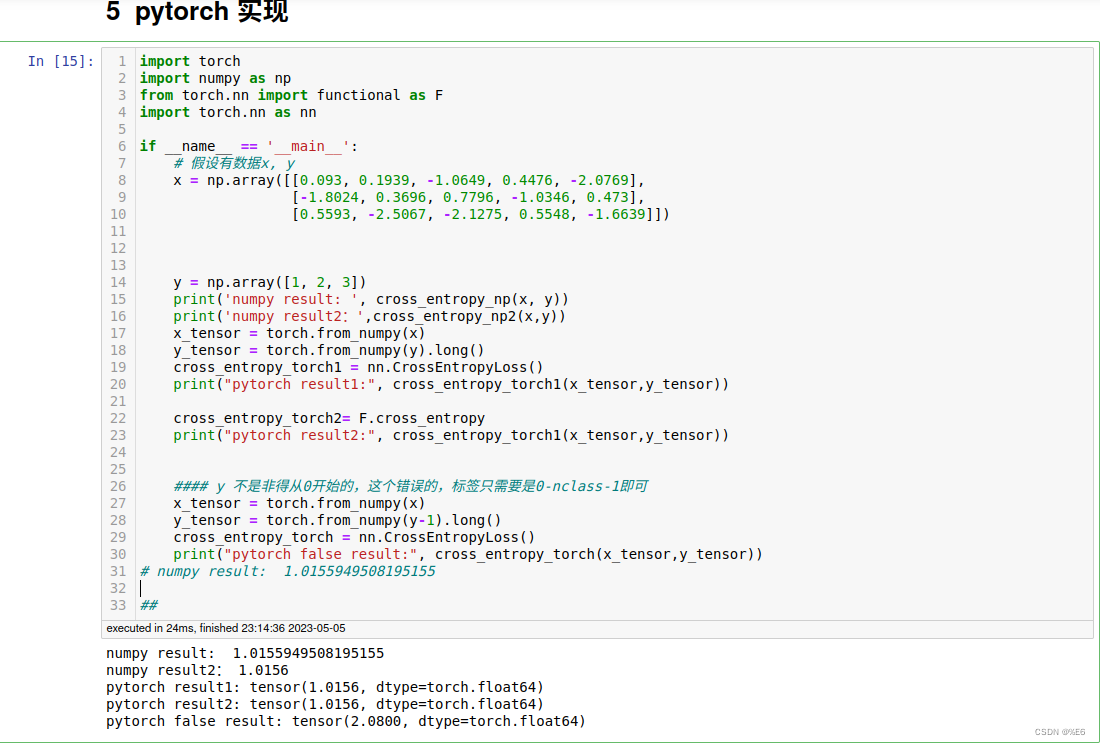
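The manual steps above (log-softmax, then picking the label entry and averaging) correspond to `F.log_softmax` followed by `F.nll_loss`, and `F.cross_entropy` combines the two. The short check below is a sketch on the same sample data; the variable names `two_step` and `one_step` are chosen here for illustration.

```python
import torch
import torch.nn.functional as F

x = torch.tensor([[0.093, 0.1939, -1.0649, 0.4476, -2.0769],
                  [-1.8024, 0.3696, 0.7796, -1.0346, 0.473],
                  [0.5593, -2.5067, -2.1275, 0.5548, -1.6639]], dtype=torch.float64)
y = torch.tensor([1, 2, 3])

# Two explicit steps: log-probabilities first, then negative log-likelihood
log_p = F.log_softmax(x, dim=1)
two_step = F.nll_loss(log_p, y)

# One step: cross_entropy takes the raw scores directly
one_step = F.cross_entropy(x, y)

print(two_step, one_step)
```

Because `F.cross_entropy` applies log-softmax internally, it should be given the raw scores, not the output of a softmax layer.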

PyTorch implementation (using nn.CrossEntropyLoss or F.cross_entropy)

import torch
import numpy as np
from torch.nn import functional as F
import torch.nn as nn

# cross_entropy_np and cross_entropy_np2 are the functions defined in the NumPy implementation

if __name__ == '__main__':
    # Suppose we have data x, y
    x = np.array([[0.093, 0.1939, -1.0649, 0.4476, -2.0769],
                  [-1.8024, 0.3696, 0.7796, -1.0346, 0.473],
                  [0.5593, -2.5067, -2.1275, 0.5548, -1.6639]])
    y = np.array([1, 2, 3])

    print('numpy result: ', cross_entropy_np(x, y))
    print('numpy result2:', cross_entropy_np2(x, y))

    x_tensor = torch.from_numpy(x)
    y_tensor = torch.from_numpy(y).long()

    # Module form
    cross_entropy_torch1 = nn.CrossEntropyLoss()
    print("pytorch result1:", cross_entropy_torch1(x_tensor, y_tensor))

    # Functional form
    cross_entropy_torch2 = F.cross_entropy
    print("pytorch result2:", cross_entropy_torch2(x_tensor, y_tensor))

    # The labels in y do not have to start from 0; they only need to lie in 0..nclass-1.
    # Subtracting 1 therefore points at different classes, so the result below is wrong.
    x_tensor = torch.from_numpy(x)
    y_tensor = torch.from_numpy(y - 1).long()
    cross_entropy_torch = nn.CrossEntropyLoss()
    print("pytorch false result:", cross_entropy_torch(x_tensor, y_tensor))

The result is as follows:

numpy result: 1.0155949508195155
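To make the point about labels concrete, the sketch below checks that the labels fall in the valid range, prints the per-sample losses with `reduction="none"`, and compares the loss for the original labels with the loss for the shifted labels `y - 1`. The variable names are chosen for this example only.

```python
import torch
import torch.nn.functional as F

x = torch.tensor([[0.093, 0.1939, -1.0649, 0.4476, -2.0769],
                  [-1.8024, 0.3696, 0.7796, -1.0346, 0.473],
                  [0.5593, -2.5067, -2.1275, 0.5548, -1.6639]], dtype=torch.float64)
y = torch.tensor([1, 2, 3])

# Class-index labels must lie in 0..num_classes-1; they need not include 0
num_classes = x.shape[1]
assert int(y.min()) >= 0 and int(y.max()) < num_classes

# reduction="none" keeps one loss value per sample instead of averaging
per_sample = F.cross_entropy(x, y, reduction="none")
mean_loss = F.cross_entropy(x, y)

# Shifting the labels selects different classes, so the loss changes
shifted_loss = F.cross_entropy(x, y - 1)

print("per-sample:", per_sample)
print("mean:", mean_loss)
print("shifted labels:", shifted_loss)
```

The shifted labels are still valid class indices, so PyTorch raises no error here; the loss is simply computed against the wrong classes.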
