I. What Is Bayes' Theorem

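The basic form of Bayes' theorem: P(A|B) = P(B|A) * P(A) / P(B)

Suppose event B = {an email, i.e. its observed content} and event A = {this email is spam}. Then P(A|B) is the probability that this email is spam. We cannot measure that probability directly, but Bayes' theorem lets us work backwards to it from quantities we can estimate.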

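- P(A|B) is the posterior: the quantity we want, which can only be computed by combining the prior with the evidence.
- P(B|A) is the likelihood: how probable event B (the evidence) is, given that event A has occurred.
- P(A) is the prior: how probable event A is before any evidence is observed.
- P(B) is the evidence: how probable event B is regardless of whether A occurred.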
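To make the four terms concrete, here is a minimal numeric sketch. The numbers are invented for illustration, and B is narrowed to "the email contains the word free" so that the likelihoods are easy to state; neither assumption comes from the dataset used later.

```python
def posterior(prior, likelihood, likelihood_given_not):
    """Return P(A|B) from P(A), P(B|A) and P(B|not A)."""
    # Law of total probability: P(B) = P(B|A)P(A) + P(B|not A)P(not A)
    evidence = likelihood * prior + likelihood_given_not * (1 - prior)
    return likelihood * prior / evidence


# Hypothetical numbers: 20% of all mail is spam (prior); the word "free"
# appears in 60% of spam and in 5% of normal mail (likelihoods).
p_spam_given_free = posterior(prior=0.2, likelihood=0.6, likelihood_given_not=0.05)
print(round(p_spam_given_free, 3))  # 0.75
```

Under these made-up numbers, seeing the word raises the spam probability from the 20% prior to a 75% posterior.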

II. Hands-On Project: Spam Classification

1. Imports

import random
import re

import numpy as np

2. Code Example

def textParse(input_text):
    '''
    Split the raw text on non-word characters and lowercase the tokens.
    :param input_text: email text
    :return: list of lowercase tokens longer than two characters
    '''
    list_word = re.split(r'\W+', input_text)
    return [word.lower() for word in list_word if len(word) > 2]
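As a quick illustration of what this tokenization step produces, the sketch below applies the same split-and-filter logic to a made-up sentence. The helper name `tokenize` and the sample text are assumptions for the example only.

```python
import re


def tokenize(text):
    """Split on runs of non-word characters, lowercase, drop tokens of length <= 2."""
    return [tok.lower() for tok in re.split(r'\W+', text) if len(tok) > 2]


# Hypothetical sample text, not taken from the dataset.
tokens = tokenize("Hello Bob, WIN a FREE prize at www.example.com!")
print(tokens)
# ['hello', 'bob', 'win', 'free', 'prize', 'www', 'example', 'com']
```

Short tokens such as "a" and "at" are dropped, and the URL is broken into its parts because the dots count as non-word characters.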

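def createVocabList(doclist):
    '''
    Build the vocabulary.
    :param doclist: list of token lists, one per email
    :return: list of unique words across all emails
    '''
    vocablist = set()
    for doc in doclist:
        vocablist = vocablist | set(doc)  # union with the words of this email
    return list(vocablist)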

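def setOfWordVec(vocablist, inputSet):
    '''
    Vectorize one email.
    Despite the name, this counts occurrences (bag of words) rather than
    recording only presence or absence (set of words).
    :param vocablist: vocabulary
    :param inputSet: token list of a single email
    :return: vector of per-word occurrence counts, aligned with vocablist
    '''
    returnVec = [0] * len(vocablist)
    for word in inputSet:
        if word in vocablist:
            returnVec[vocablist.index(word)] += 1
    return returnVec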
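The vectorization step can be sketched on a toy vocabulary as follows. The function name `bag_of_words`, the vocabulary, and the tokens are all hypothetical; the dictionary lookup is a design choice of this sketch that avoids scanning the vocabulary list for every token.

```python
def bag_of_words(vocab, tokens):
    """Count how often each vocabulary word occurs in tokens."""
    index = {word: i for i, word in enumerate(vocab)}  # word -> column position
    vec = [0] * len(vocab)
    for tok in tokens:
        pos = index.get(tok)
        if pos is not None:  # words outside the vocabulary are ignored
            vec[pos] += 1
    return vec


# Hypothetical vocabulary and email tokens.
vocab = ["free", "meeting", "prize", "report"]
print(bag_of_words(vocab, ["free", "prize", "free", "hello"]))  # [2, 0, 1, 0]
```

Each position in the result lines up with one vocabulary word, so every email becomes a vector of the same length.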

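def trainNB(trainMat, trainClass):
    '''
    Train the model.
    :param trainMat: word-count matrix of the training set
    :param trainClass: class labels of the training set (1 = spam, 0 = normal mail)
    :return: log-likelihood vector for spam, log-likelihood vector for normal mail, prior probability of spam
    '''
    numTrainDocs = len(trainMat)
    numWords = len(trainMat[0])
    p1 = sum(trainClass) / numTrainDocs  # prior: fraction of training emails that are spam
    p0Num = np.ones(numWords)  # start counts at 1 (Laplace smoothing) so no word gets probability 0
    p1Num = np.ones(numWords)
    # Smoothing term in the denominator. This code uses the number of classes;
    # strict Laplace smoothing would add the vocabulary size instead.
    p0Denom = 2
    p1Denom = 2
    for i in range(numTrainDocs):
        if trainClass[i] == 1:
            p1Num += trainMat[i]
            p1Denom += sum(trainMat[i])
        else:
            p0Num += trainMat[i]
            p0Denom += sum(trainMat[i])
    # Take logs so that classification can add scores instead of multiplying tiny probabilities
    p1Vec = np.log(p1Num / p1Denom)
    p0Vec = np.log(p0Num / p0Denom)
    return p1Vec, p0Vec, p1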
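The training function returns logarithms of the likelihoods rather than the likelihoods themselves. A small sketch of why, using an invented vector of 500 word probabilities: multiplying them directly underflows to zero in float64, while summing their logs stays finite.

```python
import numpy as np

# Hypothetical input: 500 word probabilities of 0.001 each.
word_probs = np.full(500, 1e-3)

direct_product = np.prod(word_probs)    # true value is 1e-1500, below the float64 range
log_sum = np.sum(np.log(word_probs))    # the same quantity expressed in log space

print(direct_product)  # 0.0
print(log_sum)
```

Because the logarithm is monotonic, comparing summed log scores picks the same class as comparing the products would, without the underflow.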

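def classifyNB(wordVec, p1Vec, p0Vec, p1):
    '''
    Compute the Bayes scores and classify.
    :param wordVec: word-count vector of the email to classify
    :param p1Vec: log-likelihood vector for spam
    :param p0Vec: log-likelihood vector for normal mail
    :param p1: prior probability of spam
    :return: predicted class (1 = spam, 0 = normal mail)
    '''
    # Use separate names for the scores: overwriting p1 here would corrupt
    # the prior that the normal-mail score still needs.
    score1 = np.log(p1) + sum(wordVec * p1Vec)
    score0 = np.log(1 - p1) + sum(wordVec * p0Vec)
    if score1 > score0:
        return 1
    else:
        return 0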
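The decision rule can be checked by hand on a two-word toy model. Everything in this sketch is hypothetical: the vocabulary ["free", "meeting"], the per-class word probabilities, and the 0.5 prior are made up, and `classify` is a standalone stand-in written for the example.

```python
import numpy as np


def classify(word_vec, log_p_spam, log_p_ham, prior_spam):
    """Return 1 (spam) if the spam log score is higher, else 0 (normal mail)."""
    score_spam = np.log(prior_spam) + np.dot(word_vec, log_p_spam)
    score_ham = np.log(1 - prior_spam) + np.dot(word_vec, log_p_ham)
    return 1 if score_spam > score_ham else 0


# Hypothetical word probabilities for the vocabulary ["free", "meeting"].
log_p_spam = np.log([0.8, 0.2])
log_p_ham = np.log([0.1, 0.9])

print(classify(np.array([2, 0]), log_p_spam, log_p_ham, 0.5))  # 1: "free" twice
print(classify(np.array([0, 3]), log_p_spam, log_p_ham, 0.5))  # 0: "meeting" three times
```

With equal priors the prior terms cancel, so the outcome is decided entirely by which class makes the observed words more likely.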

def spam():
    '''
    Main routine: load the emails, hold out 10 at random, train, and test.
    :return: number of misclassified test emails
    '''
    doclist = []
    classlist = []
    for i in range(1, 26):
        with open("data/spam/{0}.txt".format(i), 'r') as f:
            doclist.append(textParse(f.read()))
        classlist.append(1)  # spam label
        with open("data/ham/{0}.txt".format(i), 'r') as f:
            doclist.append(textParse(f.read()))
        classlist.append(0)  # normal-mail label
    vocablist = createVocabList(doclist)  # build the vocabulary
    # Randomly move 10 of the 50 emails into the test set
    trainset = list(range(50))
    testset = []
    for i in range(10):
        randIndex = random.randrange(len(trainset))
        testset.append(trainset[randIndex])
        del trainset[randIndex]
    trainMat = []
    trainClass = []
    for docIndex in trainset:
        trainMat.append(setOfWordVec(vocablist, doclist[docIndex]))
        trainClass.append(classlist[docIndex])
    p1Vec, p0Vec, p1 = trainNB(np.array(trainMat), np.array(trainClass))
    errorCount = 0
    for docIndex in testset:
        wordVec = setOfWordVec(vocablist, doclist[docIndex])
        p = classifyNB(np.array(wordVec), p1Vec, p0Vec, p1)
        if p != classlist[docIndex]:
            errorCount += 1
    return errorCount
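The random hold-out step in the main routine can also be written with `random.sample`. This is an alternative sketch rather than the approach used above; the helper name `holdout_split` and the optional `seed` parameter are assumptions added so that a split can be reproduced.

```python
import random


def holdout_split(n_docs, n_test, seed=None):
    """Return (train_indices, test_indices) with n_test indices drawn at random."""
    rng = random.Random(seed)          # private generator; seed is optional
    test = rng.sample(range(n_docs), n_test)
    held_out = set(test)
    train = [i for i in range(n_docs) if i not in held_out]
    return train, test


train_idx, test_idx = holdout_split(n_docs=50, n_test=10, seed=0)
print(len(train_idx), len(test_idx))  # 40 10
```

Sampling without replacement guarantees that the two index lists are disjoint, so no test email leaks into training.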

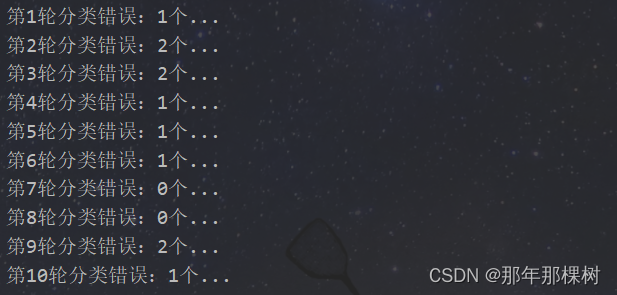

3. Test Code

if __name__ == "__main__":
    # Repeat the random split 10 times and report the errors of each round
    for i in range(10):
        print(f"Round {i+1}: {spam()} emails misclassified")
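Because each round uses a different random split, the per-round counts vary, and an average error rate is easier to compare. A minimal sketch follows; `mean_error_rate` is a hypothetical helper, and the error counts fed to it are invented placeholders standing in for calls to `spam()`, not measured results.

```python
def mean_error_rate(run_once, rounds, test_size):
    """Average the error counts returned by run_once() into a single rate."""
    total_errors = sum(run_once() for _ in range(rounds))
    return total_errors / (rounds * test_size)


# Stand-in for spam(): replays made-up error counts so the sketch runs without the dataset.
fake_counts = iter([0, 1, 0, 2, 0, 0, 1, 0, 0, 1])
rate = mean_error_rate(lambda: next(fake_counts), rounds=10, test_size=10)
print(f"mean error rate: {rate:.2%}")  # mean error rate: 5.00%
```

With the real pipeline, `spam` itself would be passed as `run_once`.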

4. Test Results

Note: if you need the dataset, message the author.
