Homework

2.

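# Compute the loss: mean squared error of the line y = m * x + b
def compute_error(b, m, points):
    error = 0
    for point in points:
        x = point[0]
        y = point[1]
        # Squared residual between the observed y and the predicted value
        error += (y - (m * x + b)) ** 2
    return error / float(len(points))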
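As a cross-check on the loop above, the same loss can be written without an explicit loop. This is only a sketch: the name `compute_error_vectorized` and the sample points are made up for illustration, and it assumes `points` can be converted to an (N, 2) NumPy array.

```python
import numpy as np

def compute_error_vectorized(b, m, points):
    """Mean squared error of y = m * x + b over an (N, 2) array of points."""
    points = np.asarray(points, dtype=float)
    x, y = points[:, 0], points[:, 1]
    residuals = y - (m * x + b)
    return float(np.mean(residuals ** 2))

# Hypothetical sample: three points lying exactly on y = 2x + 1,
# so the error is zero for b=1, m=2.
sample = [[0, 1], [1, 3], [2, 5]]
print(compute_error_vectorized(1, 2, sample))  # 0.0
```

For any other `b` and `m` the result should match what `compute_error` returns on the same points.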

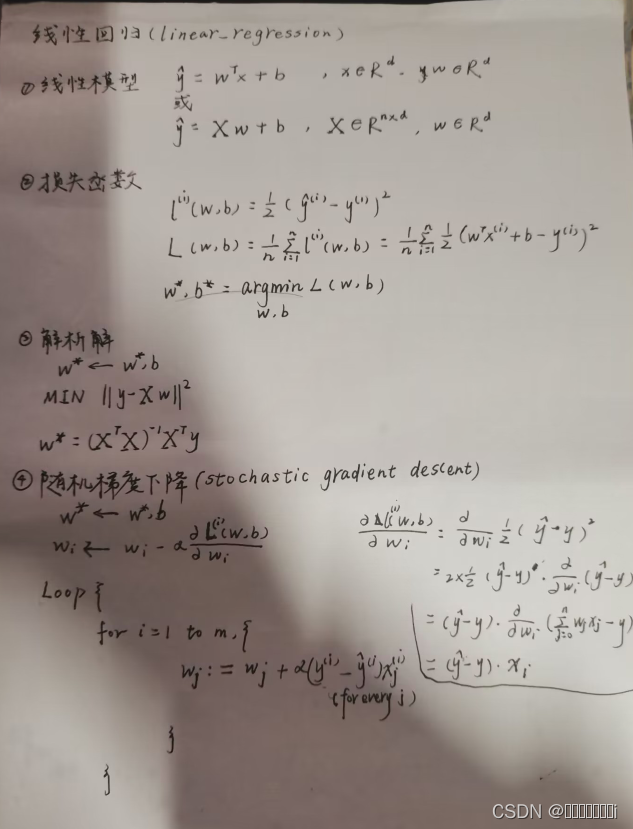

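# Gradient update for a single step
# Note: learning_rate is read from module scope and must be defined before calling.
def step_gradient(b, m, points):
    b_gradient = m_gradient = 0
    N = float(len(points))
    for point in points:
        x = point[0]
        y = point[1]
        # Accumulate the partial derivatives of the mean squared error
        b_gradient += -(2 / N) * (y - ((m * x) + b))
        m_gradient += -(2 / N) * (x * (y - ((m * x) + b)))
    # Move b and m one step against the gradient
    return [b - (learning_rate * b_gradient), m - (learning_rate * m_gradient)]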
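The two accumulated quantities in `step_gradient` are the partial derivatives of the mean squared error. Written out, with $N$ the number of points and $\alpha$ standing in for `learning_rate` (the symbol $\alpha$ is introduced here only for notation):

$$
E(b, m) = \frac{1}{N} \sum_{i=1}^{N} \bigl(y_i - (m x_i + b)\bigr)^2
$$

$$
\frac{\partial E}{\partial b} = -\frac{2}{N} \sum_{i=1}^{N} \bigl(y_i - (m x_i + b)\bigr),
\qquad
\frac{\partial E}{\partial m} = -\frac{2}{N} \sum_{i=1}^{N} x_i \bigl(y_i - (m x_i + b)\bigr)
$$

$$
b \leftarrow b - \alpha \frac{\partial E}{\partial b},
\qquad
m \leftarrow m - \alpha \frac{\partial E}{\partial m}
$$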

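# Gradient descent algorithm
# Relies on module-level num_iterations and lines; for append(..., axis=0)
# to work, lines must start out as a NumPy array of shape (0, 2).
from numpy import array, append

def gradient_descent_runner(points):
    global lines
    b = m = 0
    for i in range(num_iterations):
        b, m = step_gradient(b, m, array(points))
        # Record the (b, m) pair after each step
        lines = append(lines, [[b, m]], axis=0)
    return [b, m]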
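To try the whole procedure end to end, here is a self-contained sketch that does not depend on module-level globals. It is not the original code: the name `run_gradient_descent`, the explicit `learning_rate` parameter, the default values, and the synthetic data are all assumptions chosen for illustration.

```python
import numpy as np

def step_gradient(b, m, points, learning_rate):
    """One gradient descent step for y = m * x + b under mean squared error."""
    n = float(len(points))
    x, y = points[:, 0], points[:, 1]
    residuals = y - (m * x + b)
    b_gradient = -(2 / n) * residuals.sum()
    m_gradient = -(2 / n) * (x * residuals).sum()
    return b - learning_rate * b_gradient, m - learning_rate * m_gradient

def run_gradient_descent(points, learning_rate=0.1, num_iterations=2000):
    """Run gradient descent from b = m = 0 and return the final (b, m) plus
    the (b, m) pair recorded after every step."""
    points = np.asarray(points, dtype=float)
    b = m = 0.0
    history = np.empty((num_iterations, 2))
    for i in range(num_iterations):
        b, m = step_gradient(b, m, points, learning_rate)
        history[i] = (b, m)
    return b, m, history

# Synthetic, noise-free data on the line y = 3x + 2 (made up for this example).
xs = np.linspace(0.0, 1.0, 20)
data = np.column_stack([xs, 3.0 * xs + 2.0])

b, m, history = run_gradient_descent(data)
print(round(b, 3), round(m, 3))
```

Preallocating `history` avoids copying the array on every iteration, which is what repeated `append` calls do. On this data the fitted values should land close to `b = 2` and `m = 3`; with a much larger learning rate the updates can diverge instead.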
