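1. Installation requirements

Before you start, every machine used for the Kubernetes cluster must meet the following requirements:

- At least 3 machines running CentOS 7 or later

- Hardware: 2 GB or more of RAM, 2 or more CPUs, 20 GB or more of disk

- Full network connectivity between all machines in the cluster

- Internet access, for pulling images

- Swap disabled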
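The requirements above can be checked with a small helper before going any further. This is a minimal sketch and not part of the original procedure. The function name `meets_requirements` is hypothetical. The 1700 MiB memory floor is also an assumption, because a 2 GB VM usually reports slightly less than 2048 MiB in /proc/meminfo.

```shell
#!/usr/bin/env bash
# Hypothetical helper: check the minimum requirements listed above.
# Arguments (passed in so the logic can be tested without touching the host):
#   $1 = number of CPUs
#   $2 = total memory in MiB
#   $3 = number of active swap devices
# Prints one FAIL line per unmet requirement, or "OK"; returns 1 on any failure.
meets_requirements() {
  local cpus=$1 mem_mib=$2 swaps=$3 rc=0
  if (( cpus < 2 )); then
    echo "FAIL: need at least 2 CPUs, found $cpus"
    rc=1
  fi
  # Assumed floor: a 2 GB machine typically reports a little under 2048 MiB
  if (( mem_mib < 1700 )); then
    echo "FAIL: need about 2 GB of RAM, found ${mem_mib} MiB"
    rc=1
  fi
  if (( swaps > 0 )); then
    echo "FAIL: swap is still active on $swaps device(s)"
    rc=1
  fi
  if (( rc == 0 )); then
    echo "OK"
  fi
  return "$rc"
}
```

On each machine, run `meets_requirements "$(nproc)" "$(awk '/^MemTotal:/ {print int($2/1024)}' /proc/meminfo)" "$(tail -n +2 /proc/swaps | wc -l)"`. The first line of /proc/swaps is a header, which is why `tail -n +2` skips it.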

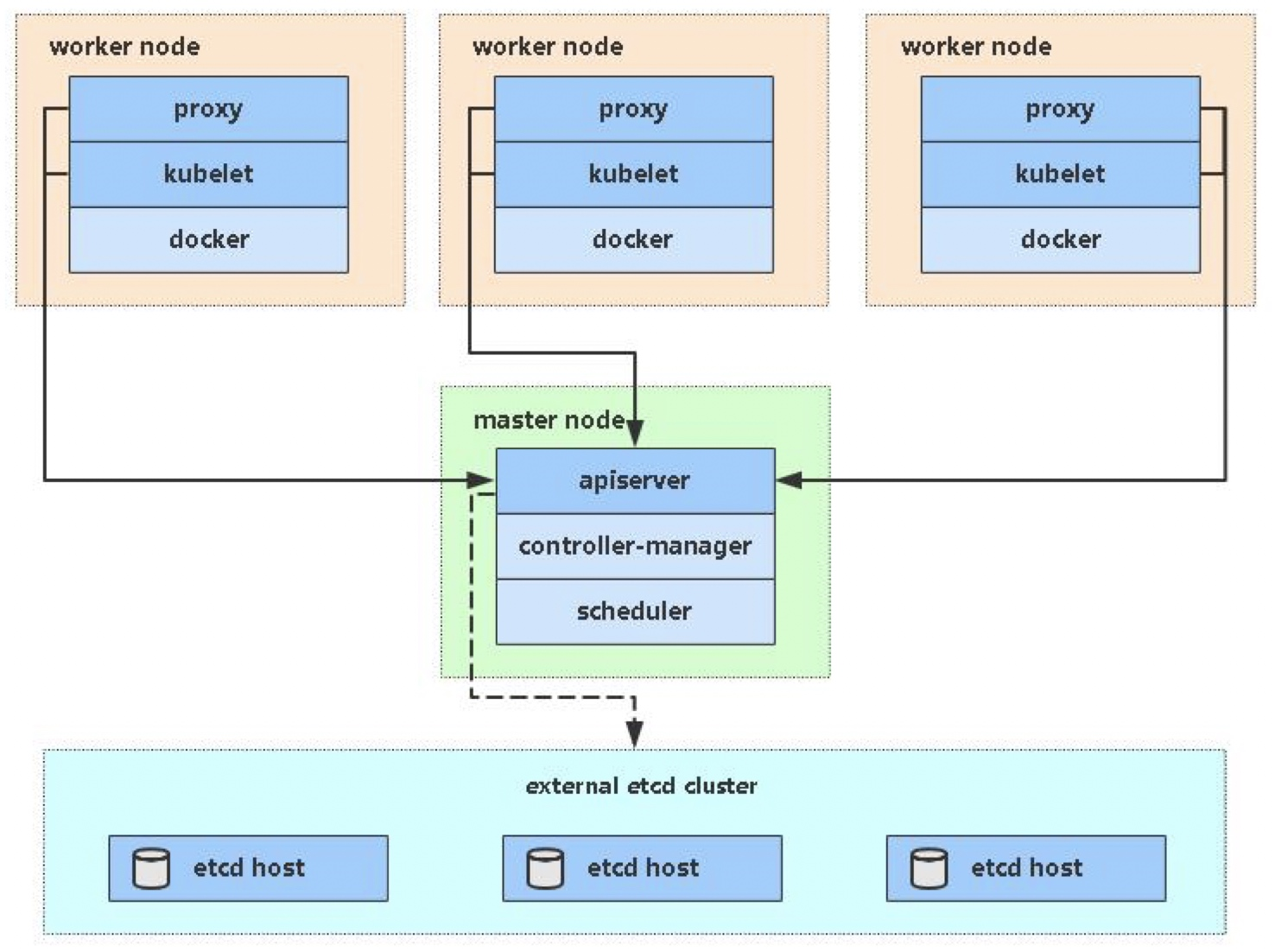

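2. Learning objectives

- Install Docker and kubeadm on all nodes

- Deploy the Kubernetes Master

- Deploy a container network plugin

- Deploy the Kubernetes Nodes and join them to the cluster

- Deploy the Dashboard web UI to view Kubernetes resources visually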

3. Prepare the environment

| Role | IP |
|---|---|
| master | 192.168.57.139 |
| node1 | 192.168.57.140 |
| node2 | 192.168.57.143 |

Disable the firewall:

[root@localhost ~]# systemctl disable --now firewalld

Removed /etc/systemd/system/multi-user.target.wants/firewalld.service.

Removed /etc/systemd/system/dbus-org.fedoraproject.FirewallD1.service.

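Disable SELinux. The config-file change takes effect after a reboot, while setenforce 0 switches the running system to permissive mode right away:

[root@localhost ~]# sed -i 's/enforcing/disabled/' /etc/selinux/config

[root@localhost ~]# setenforce 0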

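Disable swap. With the default settings, kubelet will not start while swap is on:

[root@localhost ~]# swapoff -a   # turn swap off now

[root@localhost ~]# vi /etc/fstab   # comment out or delete the swap line so swap stays off after a reboot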
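Editing /etc/fstab by hand works, but the swap entry is not always the last line. Below is a minimal sketch of a non-interactive alternative. It assumes the usual fstab layout, in which the third field is the filesystem type. The function name `comment_out_swap` is hypothetical, and the function keeps a `.bak` copy of the file.

```shell
#!/usr/bin/env bash
# Hypothetical helper: comment out every active swap entry in an fstab file.
# $1 = path to the fstab file (defaults to /etc/fstab); a .bak copy is kept.
comment_out_swap() {
  local fstab=${1:-/etc/fstab}
  cp -p "$fstab" "$fstab.bak"
  # Field 3 of an fstab line is the filesystem type. Lines that are already
  # comments are printed unchanged; active "swap" lines get a leading '#'.
  awk '$1 !~ /^#/ && $3 == "swap" { $0 = "#" $0 } { print }' "$fstab.bak" > "$fstab"
}
```

Run `comment_out_swap /etc/fstab` followed by `swapoff -a`. Afterwards, the Swap row of `free -m` should show 0.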

Set the hostname:

[root@master ~]# hostnamectl set-hostname master.example.com   # use node1 and node2 on the two workers

[root@master ~]# bash

[root@master ~]# hostname

master.example.com

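Add the hosts entries on the master. Add the same three lines on node1 and node2 as well, because the hostname warnings that kubeadm join prints later come from the workers being unable to resolve their own names.

[root@master ~]# cat /etc/hosts

127.0.0.1 localhost localhost.localdomain localhost4 localhost4.localdomain4

::1 localhost localhost.localdomain localhost6 localhost6.localdomain6

192.168.57.139 master master.example.com

192.168.57.140 node1 node1.example.com

192.168.57.143 node2 node2.example.com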
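Because the same three entries are needed on every node, the edit can be scripted so that it is safe to repeat. This is a minimal sketch with hypothetical function names. The IP-to-name mapping is taken from the table in section 3. An existing entry for the same IP is left untouched, so a wrong mapping has to be fixed by hand.

```shell
#!/usr/bin/env bash
# Hypothetical helper: append a hosts entry unless the IP is already mapped.
# $1 = hosts file, $2 = IP address, remaining args = host names
add_host_entry() {
  local file=$1 ip=$2
  shift 2
  # awk exits 0 only if some line already starts with this IP
  if awk -v ip="$ip" '$1 == ip { found = 1 } END { exit !found }' "$file"; then
    return 0
  fi
  echo "$ip $*" >> "$file"
}

# Add the three cluster nodes from the table above (safe to run repeatedly)
add_cluster_hosts() {
  local file=${1:-/etc/hosts}
  add_host_entry "$file" 192.168.57.139 master master.example.com
  add_host_entry "$file" 192.168.57.140 node1 node1.example.com
  add_host_entry "$file" 192.168.57.143 node2 node2.example.com
}
```

Run `add_cluster_hosts` as root on each of the three machines. Running it a second time adds nothing.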

Pass bridged IPv4 (and IPv6) traffic to the iptables chains:

# cat > /etc/sysctl.d/k8s.conf << EOF

net.bridge.bridge-nf-call-ip6tables = 1

net.bridge.bridge-nf-call-iptables = 1

EOF

# sysctl --system   # apply the settings

[root@master ~]# cat /etc/sysctl.d/k8s.conf

net.bridge.bridge-nf-call-ip6tables = 1

net.bridge.bridge-nf-call-iptables = 1

[root@master ~]# sysctl --system
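The net.bridge.* keys only exist after the br_netfilter kernel module is loaded. If `sysctl --system` reports "No such file or directory" for them, the module is missing.

Below is a sketch that persists both settings. It assumes the standard systemd directories /etc/modules-load.d and /etc/sysctl.d. The line `net.ipv4.ip_forward = 1` is an addition that is not in the original steps, although kubeadm's preflight checks also expect it. The function name is hypothetical, and the directories are parameters so the function can be tried against a scratch directory first.

```shell
#!/usr/bin/env bash
# Hypothetical helper: persist the kernel settings Kubernetes networking needs.
# $1 = modules-load.d directory, $2 = sysctl.d directory (defaults are the real ones)
write_k8s_net_config() {
  local modules_dir=${1:-/etc/modules-load.d}
  local sysctl_dir=${2:-/etc/sysctl.d}
  # Load br_netfilter at every boot; the net.bridge.* keys depend on it
  echo br_netfilter > "$modules_dir/k8s.conf"
  # Same two bridge settings as above, plus IPv4 forwarding (assumed addition)
  cat > "$sysctl_dir/k8s.conf" <<'EOF'
net.bridge.bridge-nf-call-ip6tables = 1
net.bridge.bridge-nf-call-iptables = 1
net.ipv4.ip_forward = 1
EOF
}
```

On each node, run `write_k8s_net_config && modprobe br_netfilter && sysctl --system`.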

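Time synchronization:

[root@master ~]# yum -y install chrony   # the package is chrony; chronyd is the service it provides

[root@master ~]# vim /etc/chrony.conf   # comment out the default pool and add the Aliyun NTP server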

#pool 2.centos.pool.ntp.org iburst

server time1.aliyun.com iburst

[root@master ~]# systemctl enable --now chronyd
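The same edit to /etc/chrony.conf can be scripted so that it is identical on all three machines. This is a minimal sketch. `use_ntp_server` is a hypothetical name. The sed pattern assumes that the distribution's default file starts its active sources with `pool` (as shown above) or with `server`.

```shell
#!/usr/bin/env bash
# Hypothetical helper: point chrony at a single NTP server.
# $1 = chrony.conf path, $2 = NTP server host name
use_ntp_server() {
  local conf=$1 server=$2
  # Comment out the active "pool" and "server" lines, then append the chosen server
  sed -i -E 's/^(pool|server)[[:space:]]/#&/' "$conf"
  echo "server $server iburst" >> "$conf"
}
```

Run `use_ntp_server /etc/chrony.conf time1.aliyun.com && systemctl restart chronyd`. Afterwards, `chronyc sources` should list time1.aliyun.com.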

Passwordless SSH authentication:

[root@master ~]# ssh-keygen -t rsa

[root@master ~]# ssh-copy-id master

[root@master ~]# ssh-copy-id node1

[root@master ~]# ssh-copy-id node2

[root@master ~]# for i in master node1 node2;do ssh $i 'date';done

2021年 12月 18日 星期六 04:42:42 CST

2021年 12月 18日 星期六 04:42:42 CST

2021年 12月 18日 星期六 04:42:42 CST

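4. Install Docker/kubeadm/kubelet on all nodes

For the Kubernetes v1.20 release used here, Docker is the default container runtime (CRI) through the built-in dockershim, so Docker is installed first. Dockershim was deprecated in v1.20 and removed in v1.24, so newer releases need a CRI runtime such as containerd or CRI-O instead.

// Repeat the same steps on all three machines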

// Download the Docker CE repo file

[root@master ~]# cd /etc/yum.repos.d/

[root@master yum.repos.d]# wget https://mirrors.aliyun.com/docker-ce/linux/centos/docker-ce.repo -O /etc/yum.repos.d/docker-ce.repo

// Install Docker

[root@master ~]# yum -y install docker-ce

// Start Docker now and enable it at boot

[root@master ~]# systemctl enable --now docker

Created symlink /etc/systemd/system/multi-user.target.wants/docker.service → /usr/lib/systemd/system/docker.service.

// Check the Docker version

[root@master ~]# docker --version

Docker version 20.10.12, build e91ed57

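// Configure the Docker registry mirror (accelerator) and daemon options.
// daemon.json must be plain JSON. Comments such as "##" make dockerd fail to start, so the options are explained here instead:
// - registry-mirrors: the registry mirror; this is a per-account Aliyun accelerator address, so use the one from your own Aliyun console
// - exec-opts native.cgroupdriver=systemd: let systemd manage cgroups
// - log-driver json-file with log-opts max-size 100m: JSON logs, rotated when they reach 100 MB
// - storage-driver overlay2: use the overlay2 storage driver

[root@master ~]# cat > /etc/docker/daemon.json << EOF

{

"registry-mirrors": ["https://2bkybiwf.mirror.aliyuncs.com"],

"exec-opts": ["native.cgroupdriver=systemd"],

"log-driver": "json-file",

"log-opts": {

"max-size": "100m"

},

"storage-driver": "overlay2"

}

EOF

// Docker is already running, so restart it to apply the new settings

[root@master ~]# systemctl daemon-reload

[root@master ~]# systemctl restart docker

Add the Kubernetes Aliyun YUM repository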

// Do this on all three machines

cat > /etc/yum.repos.d/kubernetes.repo << EOF

[kubernetes]

name=Kubernetes

baseurl=https://mirrors.aliyun.com/kubernetes/yum/repos/kubernetes-el7-x86_64

enabled=1

gpgcheck=0

repo_gpgcheck=0

gpgkey=https://mirrors.aliyun.com/kubernetes/yum/doc/yum-key.gpg https://mirrors.aliyun.com/kubernetes/yum/doc/rpm-package-key.gpg

EOF

Install kubeadm, kubelet and kubectl

Because new versions are released frequently, a specific version is pinned here:

// On all three machines

[root@master ~]# yum install -y kubelet-1.20.0 kubeadm-1.20.0 kubectl-1.20.0

[root@master ~]# systemctl enable kubelet   # enable only; do not start it now, kubeadm init/join starts it

Created symlink /etc/systemd/system/multi-user.target.wants/kubelet.service → /usr/lib/systemd/system/kubelet.service.

5. Deploy the Kubernetes Master
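Before running kubeadm init, confirm on every node that Docker actually picked up the systemd cgroup driver from daemon.json. If it did not, kubeadm's preflight prints a warning recommending "systemd". Below is a minimal sketch that reads `docker info` output. The function name is hypothetical.

```shell
#!/usr/bin/env bash
# Hypothetical helper: print the cgroup driver reported by `docker info`.
# Reads the `docker info` text on stdin so it can be tested offline.
docker_cgroup_driver() {
  # docker info prints lines such as " Cgroup Driver: systemd"
  awk -F': ' '/^[[:space:]]*Cgroup Driver:/ { print $2; exit }'
}
```

`docker info 2>/dev/null | docker_cgroup_driver` should print `systemd`. If it prints `cgroupfs`, check daemon.json and restart Docker.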

Run the following on 192.168.57.139 (the master).


[root@master ~]# kubeadm init \

> --apiserver-advertise-address=192.168.57.139 \

> --image-repository registry.aliyuncs.com/google_containers \

> --kubernetes-version v1.20.0 \

> --service-cidr=10.96.0.0/12 \

> --pod-network-cidr=10.244.0.0/16

[init] Using Kubernetes version: v1.20.0

[preflight] Running pre-flight checks

[WARNING SystemVerification]: this Docker version is not on the list of validated versions: 20.10.12. Latest validated version: 19.03

[preflight] Pulling images required for setting up a Kubernetes cluster

[preflight] This might take a minute or two, depending on the speed of your internet connection

[preflight] You can also perform this action in beforehand using 'kubeadm config images pull'

[certs] Using certificateDir folder "/etc/kubernetes/pki"

[certs] Generating "ca" certificate and key

[certs] Generating "apiserver" certificate and key

[certs] apiserver serving cert is signed for DNS names [kubernetes kubernetes.default kubernetes.default.svc kubernetes.default.svc.cluster.local master.example.com] and IPs [10.96.0.1 192.168.57.139]

[certs] Generating "apiserver-kubelet-client" certificate and key

[certs] Generating "front-proxy-ca" certificate and key

[certs] Generating "front-proxy-client" certificate and key

[certs] Generating "etcd/ca" certificate and key

[certs] Generating "etcd/server" certificate and key

[certs] etcd/server serving cert is signed for DNS names [localhost master.example.com] and IPs [192.168.57.139 127.0.0.1 ::1]

[certs] Generating "etcd/peer" certificate and key

[certs] etcd/peer serving cert is signed for DNS names [localhost master.example.com] and IPs [192.168.57.139 127.0.0.1 ::1]

[certs] Generating "etcd/healthcheck-client" certificate and key

[certs] Generating "apiserver-etcd-client" certificate and key

[certs] Generating "sa" key and public key

[kubeconfig] Using kubeconfig folder "/etc/kubernetes"

[kubeconfig] Writing "admin.conf" kubeconfig file

[kubeconfig] Writing "kubelet.conf" kubeconfig file

[kubeconfig] Writing "controller-manager.conf" kubeconfig file

[kubeconfig] Writing "scheduler.conf" kubeconfig file

[kubelet-start] Writing kubelet environment file with flags to file "/var/lib/kubelet/kubeadm-flags.env"

[kubelet-start] Writing kubelet configuration to file "/var/lib/kubelet/config.yaml"

[kubelet-start] Starting the kubelet

[control-plane] Using manifest folder "/etc/kubernetes/manifests"

[control-plane] Creating static Pod manifest for "kube-apiserver"

[control-plane] Creating static Pod manifest for "kube-controller-manager"

[control-plane] Creating static Pod manifest for "kube-scheduler"

[etcd] Creating static Pod manifest for local etcd in "/etc/kubernetes/manifests"

[wait-control-plane] Waiting for the kubelet to boot up the control plane as static Pods from directory "/etc/kubernetes/manifests". This can take up to 4m0s

[apiclient] All control plane components are healthy after 9.001945 seconds

[upload-config] Storing the configuration used in ConfigMap "kubeadm-config" in the "kube-system" Namespace

[kubelet] Creating a ConfigMap "kubelet-config-1.20" in namespace kube-system with the configuration for the kubelets in the cluster

[upload-certs] Skipping phase. Please see --upload-certs

[mark-control-plane] Marking the node master.example.com as control-plane by adding the labels "node-role.kubernetes.io/master=''" and "node-role.kubernetes.io/control-plane='' (deprecated)"

[mark-control-plane] Marking the node master.example.com as control-plane by adding the taints [node-role.kubernetes.io/master:NoSchedule]

[bootstrap-token] Using token: 03zew4.v93no08kijmerlzg

[bootstrap-token] Configuring bootstrap tokens, cluster-info ConfigMap, RBAC Roles

[bootstrap-token] configured RBAC rules to allow Node Bootstrap tokens to get nodes

[bootstrap-token] configured RBAC rules to allow Node Bootstrap tokens to post CSRs in order for nodes to get long term certificate credentials

[bootstrap-token] configured RBAC rules to allow the csrapprover controller automatically approve CSRs from a Node Bootstrap Token

[bootstrap-token] configured RBAC rules to allow certificate rotation for all node client certificates in the cluster

[bootstrap-token] Creating the "cluster-info" ConfigMap in the "kube-public" namespace

[kubelet-finalize] Updating "/etc/kubernetes/kubelet.conf" to point to a rotatable kubelet client certificate and key

[addons] Applied essential addon: CoreDNS

[addons] Applied essential addon: kube-proxy

Your Kubernetes control-plane has initialized successfully!

To start using your cluster, you need to run the following as a regular user:

mkdir -p $HOME/.kube

sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

sudo chown $(id -u):$(id -g) $HOME/.kube/config

Alternatively, if you are the root user, you can run:

export KUBECONFIG=/etc/kubernetes/admin.conf

You should now deploy a pod network to the cluster.

Run "kubectl apply -f [podnetwork].yaml" with one of the options listed at:

https://kubernetes.io/docs/concepts/cluster-administration/addons/

Then you can join any number of worker nodes by running the following on each as root:

kubeadm join 192.168.57.139:6443 --token 03zew4.v93no08kijmerlzg \

--discovery-token-ca-cert-hash sha256:3aedd51b61036327c87aaeeca36733bf9301af4cee2a77eff9c07461fbbd6120

// Save the join command in a file (named yibie here) so it can be run on the nodes later

[root@master ~]# touch yibie

[root@master ~]# vim yibie

[root@master ~]# cat yibie

kubeadm join 192.168.57.139:6443 --token 03zew4.v93no08kijmerlzg \

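--discovery-token-ca-cert-hash sha256:3aedd51b61036327c87aaeeca36733bf9301af4cee2a77eff9c07461fbbd6120

Because the default image registry, k8s.gcr.io, is not reachable from mainland China, the Aliyun mirror registry was specified with --image-repository.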
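The same kubeadm init flags can also be kept in a configuration file. This makes it easier to pre-pull the images and to re-run the init later. The sketch below assumes the `kubeadm.k8s.io/v1beta2` config API used by kubeadm v1.20. The function name and the file name kubeadm-config.yaml are hypothetical.

```shell
#!/usr/bin/env bash
# Hypothetical helper: write a kubeadm config equivalent to the init flags above.
# $1 = output path (defaults to kubeadm-config.yaml)
write_kubeadm_config() {
  local out=${1:-kubeadm-config.yaml}
  cat > "$out" <<'EOF'
apiVersion: kubeadm.k8s.io/v1beta2
kind: InitConfiguration
localAPIEndpoint:
  advertiseAddress: 192.168.57.139
---
apiVersion: kubeadm.k8s.io/v1beta2
kind: ClusterConfiguration
kubernetesVersion: v1.20.0
imageRepository: registry.aliyuncs.com/google_containers
networking:
  serviceSubnet: 10.96.0.0/12
  podSubnet: 10.244.0.0/16
EOF
}
```

Usage: `write_kubeadm_config kubeadm-config.yaml`, then `kubeadm config images pull --config kubeadm-config.yaml`, and finally `kubeadm init --config kubeadm-config.yaml`.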

Use the kubectl tool:

// Make the environment variable persistent

[root@master ~]# echo 'export KUBECONFIG=/etc/kubernetes/admin.conf' > /etc/profile.d/k8s.sh

[root@master ~]# source /etc/profile.d/k8s.sh

// Check that the control-plane node has registered

[root@master ~]# kubectl get nodes

NAME STATUS ROLES AGE VERSION

master.example.com NotReady control-plane,master 4m2s v1.20.0

// NotReady is expected at this point: no Pod network (CNI) plugin has been installed yet

6. Install a Pod network plugin (CNI)

// Download the manifest

[root@master ~]# wget https://raw.githubusercontent.com/coreos/flannel/master/Documentation/kube-flannel.yml

(The download did not work for me, so I copied the file onto the machine manually.)

[root@master ~]# kubectl apply -f kube-flannel.yml

podsecuritypolicy.policy/psp.flannel.unprivileged created

clusterrole.rbac.authorization.k8s.io/flannel created

clusterrolebinding.rbac.authorization.k8s.io/flannel created

serviceaccount/flannel created

configmap/kube-flannel-cfg created

daemonset.apps/kube-flannel-ds created

Make sure the nodes can reach the quay.io registry.
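Flannel's Network setting, in the net-conf.json section of kube-flannel.yml, must match the --pod-network-cidr passed to kubeadm init (10.244.0.0/16 here). Below is a minimal sketch that compares the two before the manifest is applied. The function name is hypothetical, and the sed pattern assumes the `"Network": "..."` line format used in the manifest.

```shell
#!/usr/bin/env bash
# Hypothetical helper: compare flannel's "Network" with the pod CIDR.
# $1 = path to kube-flannel.yml, $2 = pod CIDR given to kubeadm init
check_flannel_cidr() {
  local manifest=$1 pod_cidr=$2 network
  # Extract the value of the first  "Network": "x.x.x.x/n"  line
  network=$(sed -n -E 's/^[[:space:]]*"Network":[[:space:]]*"([^"]+)".*/\1/p' "$manifest" | head -n 1)
  if [[ "$network" == "$pod_cidr" ]]; then
    echo "match: $network"
  else
    echo "mismatch: manifest has '${network:-<none>}', kubeadm used '$pod_cidr'"
    return 1
  fi
}
```

Example: `check_flannel_cidr kube-flannel.yml 10.244.0.0/16`. On a mismatch, edit net-conf.json before running `kubectl apply`.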

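7. Join the Kubernetes Nodes

Run the following on 192.168.57.140 and 192.168.57.143 (the nodes).

To add a new node to the cluster, run the kubeadm join command printed by kubeadm init:

// Run the join command saved earlier on node1 and node2; the nodes then pull their images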

[root@master ~]# cat yibie

kubeadm join 192.168.57.139:6443 --token 03zew4.v93no08kijmerlzg \

--discovery-token-ca-cert-hash sha256:3aedd51b61036327c87aaeeca36733bf9301af4cee2a77eff9c07461fbbd6120
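The join command can also be extracted from a saved init log instead of being copied by hand. This sketch assumes the init output was saved with `kubeadm init ... | tee kubeadm-init.log`. The log file name and the function name are both hypothetical.

Bootstrap tokens expire after 24 hours by default. After that, running `kubeadm token create --print-join-command` on the master prints a fresh join command.

```shell
#!/usr/bin/env bash
# Hypothetical helper: print the "kubeadm join ..." command, including its
# backslash-continued lines, from a saved `kubeadm init` log.
# $1 = path to the log file
extract_join_command() {
  awk '
    /^[[:space:]]*kubeadm join / { grab = 1 }
    grab {
      print
      # Stop at the first line that does not end with a backslash
      if ($0 !~ /\\[[:space:]]*$/) exit
    }
  ' "$1"
}
```

Example: `extract_join_command kubeadm-init.log > yibie`.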

//node1

[root@node1 ~]# kubeadm join 192.168.57.139:6443 --token 03zew4.v93no08kijmerlzg \

> --discovery-token-ca-cert-hash sha256:3aedd51b61036327c87aaeeca36733bf9301af4cee2a77eff9c07461fbbd6120

[preflight] Running pre-flight checks

[WARNING SystemVerification]: this Docker version is not on the list of validated versions: 20.10.12. Latest validated version: 19.03

[WARNING Hostname]: hostname "node1" could not be reached

[WARNING Hostname]: hostname "node1": lookup node1 on 192.168.57.2:53: no such host

[preflight] Reading configuration from the cluster...

[preflight] FYI: You can look at this config file with 'kubectl -n kube-system get cm kubeadm-config -o yaml'

[kubelet-start] Writing kubelet configuration to file "/var/lib/kubelet/config.yaml"

[kubelet-start] Writing kubelet environment file with flags to file "/var/lib/kubelet/kubeadm-flags.env"

[kubelet-start] Starting the kubelet

[kubelet-start] Waiting for the kubelet to perform the TLS Bootstrap...

This node has joined the cluster:

* Certificate signing request was sent to apiserver and a response was received.

* The Kubelet was informed of the new secure connection details.

Run 'kubectl get nodes' on the control-plane to see this node join the cluster.

//node2

[root@node2 ~]# kubeadm join 192.168.57.139:6443 --token 03zew4.v93no08kijmerlzg \

> --discovery-token-ca-cert-hash sha256:3aedd51b61036327c87aaeeca36733bf9301af4cee2a77eff9c07461fbbd6120

[preflight] Running pre-flight checks

[WARNING SystemVerification]: this Docker version is not on the list of validated versions: 20.10.12. Latest validated version: 19.03

[WARNING Hostname]: hostname "node2" could not be reached

[WARNING Hostname]: hostname "node2": lookup node2 on 192.168.57.2:53: no such host

[preflight] Reading configuration from the cluster...

[preflight] FYI: You can look at this config file with 'kubectl -n kube-system get cm kubeadm-config -o yaml'

[kubelet-start] Writing kubelet configuration to file "/var/lib/kubelet/config.yaml"

[kubelet-start] Writing kubelet environment file with flags to file "/var/lib/kubelet/kubeadm-flags.env"

[kubelet-start] Starting the kubelet

[kubelet-start] Waiting for the kubelet to perform the TLS Bootstrap...

This node has joined the cluster:

* Certificate signing request was sent to apiserver and a response was received.

* The Kubelet was informed of the new secure connection details.

Run 'kubectl get nodes' on the control-plane to see this node join the cluster.

// Once all three nodes are Ready, the cluster is deployed

[root@master ~]# kubectl get nodes

NAME STATUS ROLES AGE VERSION

master.example.com Ready control-plane,master 18m v1.20.0

node1 Ready <none> 63s v1.20.0

node2 Ready <none> 57s v1.20.0
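The Ready check can be scripted instead of rerunning `kubectl get nodes` and reading the output by eye. `kubectl wait --for=condition=Ready nodes --all --timeout=300s` blocks until every node is Ready. The sketch below uses a hypothetical function name. It reads `kubectl get nodes --no-headers` output on stdin and lists the nodes whose STATUS column is anything other than Ready.

```shell
#!/usr/bin/env bash
# Hypothetical helper: list nodes that are not Ready.
# Reads `kubectl get nodes --no-headers` output on stdin; column 1 is NAME,
# column 2 is STATUS. Anything other than exactly "Ready" is reported.
not_ready_nodes() {
  awk '$2 != "Ready" { print $1 }'
}
```

`kubectl get nodes --no-headers | not_ready_nodes` prints nothing once all three nodes are Ready.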

// List the namespaces

[root@master ~]# kubectl get ns

NAME STATUS AGE

default Active 21m

kube-node-lease Active 21m

kube-public Active 21m

kube-system Active 21m

// Use -n to choose the namespace to inspect

[root@master ~]# kubectl get pods -n kube-system

NAME READY STATUS RESTARTS AGE

coredns-7f89b7bc75-9ltl7 1/1 Running 0 21m

coredns-7f89b7bc75-rjnbg 1/1 Running 0 21m

etcd-master.example.com 1/1 Running 0 21m

kube-apiserver-master.example.com 1/1 Running 0 21m

kube-controller-manager-master.example.com 1/1 Running 0 21m

kube-flannel-ds-4vbdn 1/1 Running 0 4m49s

kube-flannel-ds-cvk6b 1/1 Running 0 11m

kube-flannel-ds-zhhv4 1/1 Running 0 4m43s

kube-proxy-hx6dw 1/1 Running 0 4m43s

kube-proxy-m8cjz 1/1 Running 0 4m49s

kube-proxy-pgtjp 1/1 Running 0 21m

kube-scheduler-master.example.com 1/1 Running 0 21m

// -o wide shows more detail, including each pod's IP and node

[root@master ~]# kubectl get pods -n kube-system -o wide

NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE READINESS GATES

coredns-7f89b7bc75-9ltl7 1/1 Running 0 22m 10.244.0.3 master.example.com <none> <none>

coredns-7f89b7bc75-rjnbg 1/1 Running 0 22m 10.244.0.2 master.example.com <none> <none>

etcd-master.example.com 1/1 Running 0 22m 192.168.57.139 master.example.com <none> <none>

kube-apiserver-master.example.com 1/1 Running 0 22m 192.168.57.139 master.example.com <none> <none>

kube-controller-manager-master.example.com 1/1 Running 0 22m 192.168.57.139 master.example.com <none> <none>

kube-flannel-ds-4vbdn 1/1 Running 0 5m21s 192.168.57.140 node1 <none> <none>

kube-flannel-ds-cvk6b 1/1 Running 0 11m 192.168.57.139 master.example.com <none> <none>

kube-flannel-ds-zhhv4 1/1 Running 0 5m15s 192.168.57.143 node2 <none> <none>

kube-proxy-hx6dw 1/1 Running 0 5m15s 192.168.57.143 node2 <none> <none>

kube-proxy-m8cjz 1/1 Running 0 5m21s 192.168.57.140 node1 <none> <none>

kube-proxy-pgtjp 1/1 Running 0 22m 192.168.57.139 master.example.com <none> <none>

kube-scheduler-master.example.com 1/1 Running 0 22m 192.168.57.139 master.example.com <none> <none>

8. Test the Kubernetes cluster

Create a pod in the cluster and check that it runs correctly:

[root@master ~]# kubectl create deployment nginx --image nginx

deployment.apps/nginx created

[root@master ~]# kubectl expose deployment nginx --port=80 --type=NodePort

service/nginx exposed

[root@master ~]# kubectl get svc

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

kubernetes ClusterIP 10.96.0.1 <none> 443/TCP 23m

nginx NodePort 10.98.128.7 <none> 80:31335/TCP 8s

[root@master ~]# kubectl get pods,svc

NAME READY STATUS RESTARTS AGE

pod/nginx-6799fc88d8-dmq4s 0/1 ContainerCreating 0 23s

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

service/kubernetes ClusterIP 10.96.0.1 <none> 443/TCP 24m

service/nginx NodePort 10.98.128.7 <none> 80:31335/TCP 15s

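[root@master ~]# ping 10.98.128.7   # no reply: in kube-proxy's default iptables mode a ClusterIP is virtual and only forwards the Service's ports, so ICMP is not answered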

PING 10.98.128.7 (10.98.128.7) 56(84) bytes of data.

^C

--- 10.98.128.7 ping statistics ---

11 packets transmitted, 0 received, 100% packet loss, time 10263ms

// Access the Service IP over HTTP (this works even though ping does not)

[root@master ~]# curl http://10.98.128.7

<!DOCTYPE html>

<html>

<head>

<title>Welcome to nginx!</title>

<style>

html { color-scheme: light dark; }

body { width: 35em; margin: 0 auto;

font-family: Tahoma, Verdana, Arial, sans-serif; }

</style>

</head>

<body>

<h1>Welcome to nginx!</h1>

<p>If you see this page, the nginx web server is successfully installed and

working. Further configuration is required.</p>

<p>For online documentation and support please refer to

<a href="http://nginx.org/">nginx.org</a>.<br/>

Commercial support is available at

<a href="http://nginx.com/">nginx.com</a>.</p>

<p><em>Thank you for using nginx.</em></p>

</body>

</html>

// Check the pod

[root@master ~]# kubectl get pods

NAME READY STATUS RESTARTS AGE

nginx-6799fc88d8-dmq4s 1/1 Running 0 88s

// Check the pod IP

[root@master ~]# kubectl get pods -o wide

NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE READINESS GATES

nginx-6799fc88d8-dmq4s 1/1 Running 0 109s 10.244.2.2 node2 <none> <none>

[root@master ~]# ping 10.244.2.2

PING 10.244.2.2 (10.244.2.2) 56(84) bytes of data.

64 bytes from 10.244.2.2: icmp_seq=1 ttl=63 time=0.929 ms

64 bytes from 10.244.2.2: icmp_seq=2 ttl=63 time=1.35 ms

^C

--- 10.244.2.2 ping statistics ---

2 packets transmitted, 2 received, 0% packet loss, time 1001ms

rtt min/avg/max/mdev = 0.929/1.137/1.346/0.211 ms

// Test access to the pod IP directly

[root@master ~]# curl http://10.244.2.2

<!DOCTYPE html>

<html>

<head>

<title>Welcome to nginx!</title>

<style>

html { color-scheme: light dark; }

body { width: 35em; margin: 0 auto;

font-family: Tahoma, Verdana, Arial, sans-serif; }

</style>

</head>

<body>

<h1>Welcome to nginx!</h1>

<p>If you see this page, the nginx web server is successfully installed and

working. Further configuration is required.</p>

<p>For online documentation and support please refer to

<a href="http://nginx.org/">nginx.org</a>.<br/>

Commercial support is available at

<a href="http://nginx.com/">nginx.com</a>.</p>

<p><em>Thank you for using nginx.</em></p>

</body>

</html>

// Check the Service IP and NodePort

[root@master ~]# kubectl get svc

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

kubernetes ClusterIP 10.96.0.1 <none> 443/TCP 26m

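nginx NodePort 10.98.128.7 <none> 80:31335/TCP 2m41s

Open the page in a browser at <node IP>:<NodePort>, for example http://192.168.57.139:31335 (the master IP plus the NodePort 31335 from the output above). A NodePort is opened on every node, so the worker IPs work too.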
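The NodePort can be checked on all three nodes at once. This is a minimal sketch with hypothetical function names. The NodePort can also be read directly with `kubectl get svc nginx -o jsonpath='{.spec.ports[0].nodePort}'`. The helper below extracts it from the PORT(S) column instead.

```shell
#!/usr/bin/env bash
# Hypothetical helpers for reaching a NodePort Service.

# $1 = a PORT(S) value from `kubectl get svc`, e.g. "80:31335/TCP";
# prints the NodePort of the first port mapping
nodeport_from_ports() {
  local ports=${1%%/*}   # "80:31335/TCP" -> "80:31335"
  echo "${ports#*:}"     # "80:31335"     -> "31335"
}

# $1 = NodePort, remaining args = node IPs; prints one URL per node
nodeport_urls() {
  local port=$1 ip
  shift
  for ip in "$@"; do
    echo "http://$ip:$port"
  done
}
```

Usage: `for url in $(nodeport_urls "$(nodeport_from_ports 80:31335/TCP)" 192.168.57.139 192.168.57.140 192.168.57.143); do curl -s -o /dev/null -w "%{http_code} $url\n" "$url"; done`. Each line should start with 200 if the Service is reachable through that node.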
