Deep Learning Training Camp: Text Classification with Word2Vec


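- 🍨 This post is a study log from the 🔗365天深度学习训练营 (365-Day Deep Learning Training Camp)

- 🍦 Reference article: 365天深度学习训练营, Week N4: Text Classification with Word2Vec

- 🍖 Original author: K同学啊 (tutoring and custom projects available)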

Environment

- Language: Python 3.9.12

- Editor: Jupyter Notebook

- Deep learning framework: PyTorch

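Preface

I originally planned to run this exercise in a miniconda environment, but it failed with an error. As far as I can tell, one of its files was corrupted, probably because I had accidentally deleted Anaconda earlier. If you know the cause or have run into the same problem, feel free to discuss it with me.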

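Preliminary setup

Setting up the GPU

If a GPU is available, the code uses it; otherwise it falls back to the CPU.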

    # Import the required libraries first
    import torch
    import torch.nn as nn
    import torchvision
    import os, PIL, pathlib, warnings
    import time
    from torchvision import transforms, datasets
    from torch.utils.data.dataset import random_split

    warnings.filterwarnings("ignore")  # suppress warning messages

    # Use the GPU when one is available, otherwise the CPU
    device = torch.device("cuda" if torch.cuda.is_available() else "cpu")
    device

Output:

    device(type='cpu')

数据查看

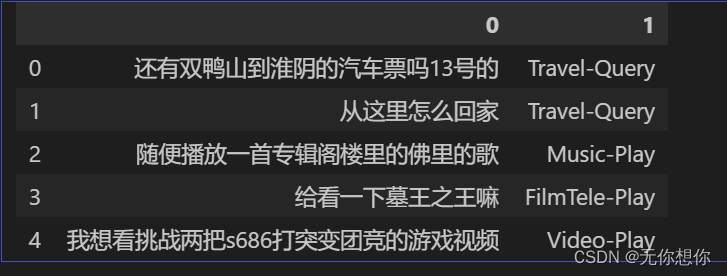

本次使用的数据集和之前中文文本识别分类的是一样的

    import pandas as pd

    # Tab-separated file without a header row: column 0 is the text, column 1 the label
    train_data = pd.read_csv('train.csv', sep='\t', header=None)
    train_data.head()

Building the data iterator

    # Build the dataset iterator
    def coustom_data_iter(texts, labels):
        for x, y in zip(texts, labels):
            yield x, y

    x = train_data[0].values[:]  # texts
    y = train_data[1].values[:]  # labels

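Wrapping the data in an iterator yields the (text, label) pairs lazily, which keeps memory usage low and gives a single source that can later be converted into a dataset, split, and shuffled.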
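
One detail worth keeping in mind is that a generator of this kind can be consumed only once. The sketch below uses a hypothetical `pair_iter` function and made-up sample data (neither comes from the original post) to show the effect, and how materializing the pairs with `list()` gives something that can be indexed and reused.

```python
def pair_iter(texts, labels):
    """Yield (text, label) pairs lazily, one at a time."""
    for text, label in zip(texts, labels):
        yield text, label

# Made-up sample data, for illustration only
texts = ["播放音乐", "明天天气"]
labels = ["Music-Play", "Weather-Query"]

it = pair_iter(texts, labels)
first_pass = list(it)    # consumes the generator
second_pass = list(it)   # already exhausted, so this is empty

# Materializing once gives a reusable, indexable list of pairs
pairs = list(pair_iter(texts, labels))
print(len(first_pass), len(second_pass), pairs[1])
```

This is presumably why the training code further down converts the iterator into a map-style dataset before splitting it.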

Calling Word2Vec

Word2Vec is called directly through gensim.

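    from gensim.models.word2vec import Word2Vec
    import numpy as np

    # Train the shallow neural network model
    w2v = Word2Vec(vector_size=100,  # dimensionality of each word vector
                   min_count=3)      # ignore tokens that occur fewer than 3 times
    w2v.build_vocab(x)
    w2v.train(x,
              total_examples=w2v.corpus_count,
              epochs=30)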

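build_vocab counts how often each token occurs in the input, and tokens that occur fewer than min_count times are left out of the vocabulary. Because each element of x is a plain string, iterating over it yields single characters, so the tokens here are characters.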
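
As a rough illustration of that counting step, the sketch below reproduces the idea with `collections.Counter` on made-up sentences. It is not gensim's implementation; the sample data and the `min_count` value of 2 are assumptions chosen to keep the example small.

```python
from collections import Counter

# Made-up corpus; each string is iterated character by character
sentences = ["播放音乐", "播放电影", "音乐电台"]
min_count = 2  # assumed threshold for this toy example

# Count every character across all sentences
counts = Counter(ch for sentence in sentences for ch in sentence)

# Keep only the characters that occur at least min_count times
vocab = {ch for ch, n in counts.items() if n >= min_count}

print(counts["播"], counts["台"])
print(sorted(vocab))
```

Characters that fall below the threshold never get a vector, which is why the lookup code has to cope with missing tokens.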

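    def average_vec(text):
        vec = np.zeros(100).reshape((1, 100))  # accumulator with shape (1, 100)
        # stacking these for all n texts in x later gives an (n, 100) array
        for word in text:
            try:
                vec += w2v.wv[word].reshape((1, 100))
            except KeyError:
                continue  # token not in the vocabulary: move on to the next one
        return vec  # note: the sum of the vectors, not divided by the token count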

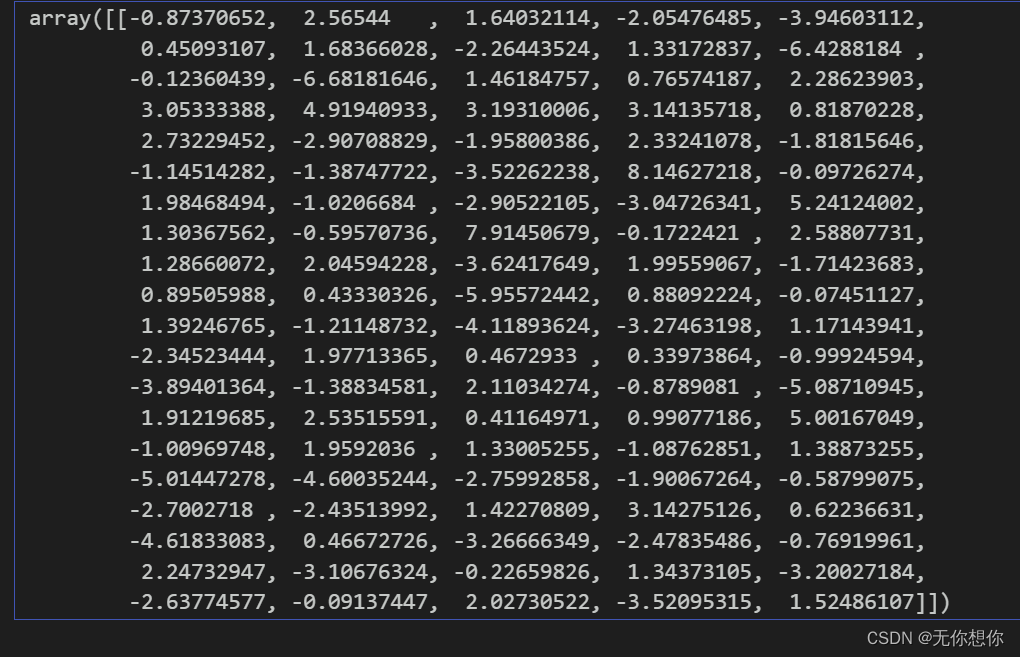

    # Stack the (1, 100) vector of every text into one (n, 100) array
    x_vec = np.concatenate([average_vec(z) for z in x])
    w2v.save('w2v_model.pkl')

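This step turns each input text into a single 100-dimensional vector.

Every token in text is looked up in the trained model. If the token is in the vocabulary, its vector is added to the running total; otherwise it is skipped and the loop moves on to the next token. Despite its name, average_vec returns the sum of the vectors and never divides by the token count.

Finally, np.concatenate joins the per-text (1, 100) vectors into a single (n, 100) array.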
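
To make the difference between a summed and a true averaged vector concrete, here is a minimal sketch. The `toy_vectors` table, the 4-dimensional vectors, and the `sum_vec` / `mean_vec` helpers are all hypothetical stand-ins for illustration; the post itself uses `w2v.wv` with 100 dimensions and only the summing variant.

```python
import numpy as np

DIM = 4  # small dimension for readability (the post uses 100)

# Hypothetical lookup table standing in for w2v.wv
toy_vectors = {
    "a": np.array([1.0, 0.0, 0.0, 0.0]),
    "b": np.array([0.0, 2.0, 0.0, 0.0]),
}

def sum_vec(text):
    """Sum the vectors of known tokens, skipping unknown ones."""
    vec = np.zeros((1, DIM))
    for token in text:
        if token in toy_vectors:
            vec += toy_vectors[token].reshape((1, DIM))
    return vec

def mean_vec(text):
    """Same as sum_vec, but divided by the number of known tokens."""
    known = sum(1 for token in text if token in toy_vectors)
    total = sum_vec(text)
    return total / known if known else total

texts = ["ab", "abz", "zz"]
summed = np.concatenate([sum_vec(t) for t in texts])
averaged = np.concatenate([mean_vec(t) for t in texts])
print(summed.shape)  # (3, 4)
print(averaged[0])
```

A text made up entirely of unknown tokens ends up as an all-zero row in both variants.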

    train_iter = coustom_data_iter(x_vec, y)  # training iterator
    print(len(x), len(y))

Output:

    12100 12100

This sets up the training iterator.

    # Collect the distinct labels (the order of a set is not fixed between sessions)
    label_name = list(set(train_data[1].values[:]))
    print(label_name)

Output:

    ['FilmTele-Play', 'Weather-Query', 'Audio-Play', 'Radio-Listen', 'HomeAppliance-Control', 'Alarm-Update', 'Travel-Query', 'Video-Play', 'Calendar-Query', 'TVProgram-Play', 'Music-Play', 'Other']

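Generating data batches and the iterator

    text_pipeline = lambda x: average_vec(x)
    label_pipeline = lambda x: label_name.index(x)
    # lambda syntax: lambda arguments: expression

    text_pipeline("我想你了")
    label_pipeline("Travel-Query")

Output:

    6

The index printed here can differ from run to run: label_name is built from a set, and the iteration order of a set of strings is not fixed across Python sessions.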
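
If a reproducible label index is wanted, one option is to sort the distinct labels before indexing them. The sketch below is only a suggestion and not part of the original post; `raw_labels`, `label_names`, and `label_to_index` are hypothetical names, and the labels are a made-up subset.

```python
# Made-up subset of labels, including a duplicate
raw_labels = ["Travel-Query", "Music-Play", "Other", "Music-Play", "Alarm-Update"]

# Sorting the distinct labels fixes their order across runs
label_names = sorted(set(raw_labels))

# A dictionary lookup also avoids the linear scan done by list.index
label_to_index = {name: i for i, name in enumerate(label_names)}

print(label_names)
print(label_to_index["Travel-Query"])
```

A fixed order matters as soon as a trained model is saved and reloaded in a new session, since the class indices must still mean the same labels.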

    from torch.utils.data import DataLoader

    def collate_batch(batch):
        label_list, text_list = [], []
        for (_text, _label) in batch:
            # label list
            label_list.append(label_pipeline(_label))
            # text list: each text becomes a (1, 100) float tensor
            processed_text = torch.tensor(text_pipeline(_text), dtype=torch.float32)
            text_list.append(processed_text)
        label_list = torch.tensor(label_list, dtype=torch.int64)
        text_list = torch.cat(text_list)  # shape (batch_size, 100)
        return text_list.to(device), label_list.to(device)

    # Data loader, usage example
    dataloader = DataLoader(train_iter,
                            batch_size=8,
                            shuffle=False,
                            collate_fn=collate_batch)

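Unlike the previous exercise, there is no offset here: every text has already been reduced to a fixed-length vector, so the batch can be stacked directly.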

Model training

    from torch import nn

    class TextClassificationModel(nn.Module):
        def __init__(self, num_class):
            super(TextClassificationModel, self).__init__()
            # A single linear layer maps the 100-dimensional text vector to class scores
            self.fc = nn.Linear(100, num_class)

        def forward(self, text):
            return self.fc(text)
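
The model is a single linear layer, so its forward pass amounts to one matrix multiplication plus a bias. The NumPy sketch below spells that out with randomly initialized parameters; the variable names and the random inputs are assumptions for illustration and do not reproduce the trained weights.

```python
import numpy as np

rng = np.random.default_rng(0)
num_class, dim, batch = 12, 100, 8

# Randomly initialized parameters, shaped like nn.Linear(100, num_class)
W = rng.normal(size=(num_class, dim))
b = np.zeros(num_class)

x = rng.normal(size=(batch, dim))   # a batch of text vectors
logits = x @ W.T + b                # one score per class, shape (batch, num_class)
predicted = logits.argmax(axis=1)   # index of the highest score in each row

print(logits.shape, predicted.shape)
```

The `argmax` over the class dimension is the same operation the training and prediction code apply to the model output.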

Initialization

    num_class = len(label_name)
    vocab_size = 100000  # not used by this model
    em_size = 12         # not used by this model
    model = TextClassificationModel(num_class).to(device)

    import time

    def train(dataloader):
        model.train()  # switch to training mode
        total_acc, train_loss, total_count = 0, 0, 0
        log_interval = 50
        start_time = time.time()

        for idx, (text, label) in enumerate(dataloader):
            predicted_label = model(text)

            optimizer.zero_grad()  # reset the gradients
            loss = criterion(predicted_label, label)  # loss between the network output and the true labels
            loss.backward()  # backpropagation
            torch.nn.utils.clip_grad_norm_(model.parameters(), 0.1)  # gradient clipping
            optimizer.step()  # update the parameters

            # Record accuracy and loss
            total_acc += (predicted_label.argmax(1) == label).sum().item()
            train_loss += loss.item()
            total_count += label.size(0)

            if idx % log_interval == 0 and idx > 0:
                elapsed = time.time() - start_time
                print('| epoch {:1d} | {:4d}/{:4d} batches '
                      '| train_acc {:4.3f} train_loss {:4.5f}'.format(epoch, idx, len(dataloader),
                                                  total_acc/total_count, train_loss/total_count))
                total_acc, train_loss, total_count = 0, 0, 0
                start_time = time.time()

    def evaluate(dataloader):
        model.eval()  # switch to evaluation mode
        total_acc, train_loss, total_count = 0, 0, 0

        with torch.no_grad():
            for idx, (text, label) in enumerate(dataloader):
                predicted_label = model(text)
                loss = criterion(predicted_label, label)  # compute the loss
                # Record the evaluation statistics
                total_acc += (predicted_label.argmax(1) == label).sum().item()
                train_loss += loss.item()
                total_count += label.size(0)

        return total_acc/total_count, train_loss/total_count
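
The training loop calls clip_grad_norm_ with a maximum norm of 0.1. As a minimal sketch of what norm-based clipping does, the pure-Python function below rescales a set of gradients so that their joint L2 norm does not exceed the limit. The function name `clip_by_global_norm`, the sample gradients, and the small `eps` constant are assumptions for illustration, not PyTorch code.

```python
import math

def clip_by_global_norm(grads, max_norm, eps=1e-6):
    """Scale all gradients by one factor so their joint L2 norm is at most max_norm."""
    total_norm = math.sqrt(sum(g * g for grad in grads for g in grad))
    scale = min(1.0, max_norm / (total_norm + eps))
    return [[g * scale for g in grad] for grad in grads], total_norm

# Made-up gradients for two parameter tensors, flattened to lists
grads = [[3.0, 4.0], [12.0]]  # joint norm = sqrt(9 + 16 + 144) = 13
clipped, norm_before = clip_by_global_norm(grads, max_norm=0.1)
norm_after = math.sqrt(sum(g * g for grad in clipped for g in grad))
print(norm_before, round(norm_after, 4))
```

Because every gradient is multiplied by the same factor, the update direction is preserved and only its length shrinks, which helps keep steps bounded when the learning rate is large.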

Splitting the dataset and training

    from torch.utils.data.dataset import random_split
    from torchtext.data.functional import to_map_style_dataset

    # Hyperparameters
    EPOCHS = 30      # number of epochs
    LR = 5           # learning rate
    BATCH_SIZE = 64  # batch size for training

    criterion = torch.nn.CrossEntropyLoss()
    optimizer = torch.optim.SGD(model.parameters(), lr=LR)
    scheduler = torch.optim.lr_scheduler.StepLR(optimizer, 1.0, gamma=0.1)
    total_accu = None

    # Build the dataset
    train_iter = coustom_data_iter(train_data[0].values[:], train_data[1].values[:])
    train_dataset = to_map_style_dataset(train_iter)

    # 80/20 train/validation split; deriving the second length keeps the sum equal to len(train_dataset)
    n_train = int(len(train_dataset) * 0.8)
    split_train_, split_valid_ = random_split(train_dataset,
                                              [n_train, len(train_dataset) - n_train])

    train_dataloader = DataLoader(split_train_, batch_size=BATCH_SIZE,
                                  shuffle=True, collate_fn=collate_batch)
    valid_dataloader = DataLoader(split_valid_, batch_size=BATCH_SIZE,
                                  shuffle=True, collate_fn=collate_batch)

    for epoch in range(1, EPOCHS + 1):
        epoch_start_time = time.time()
        train(train_dataloader)
        val_acc, val_loss = evaluate(valid_dataloader)

        # Read the current learning rate
        lr = optimizer.state_dict()['param_groups'][0]['lr']

        # Decay the learning rate when validation accuracy falls below the best so far
        if total_accu is not None and total_accu > val_acc:
            scheduler.step()
        else:
            total_accu = val_acc
        print('-' * 69)
        print('| epoch {:1d} | time: {:4.2f}s | '
              'valid_acc {:4.3f} valid_loss {:4.3f} | lr {:4.6f}'.format(epoch,
                                               time.time() - epoch_start_time,
                                               val_acc, val_loss, lr))
        print('-' * 69)

| epoch 1 | 50/ 152 batches | train_acc 0.742 train_loss 0.02635

| epoch 1 | 100/ 152 batches | train_acc 0.820 train_loss 0.02033

| epoch 1 | 150/ 152 batches | train_acc 0.838 train_loss 0.01927

---------------------------------------------------------------------

| epoch 1 | time: 0.95s | valid_acc 0.819 valid_loss 0.023 | lr 5.000000

---------------------------------------------------------------------

| epoch 2 | 50/ 152 batches | train_acc 0.850 train_loss 0.01876

| epoch 2 | 100/ 152 batches | train_acc 0.849 train_loss 0.02012

| epoch 2 | 150/ 152 batches | train_acc 0.847 train_loss 0.01736

---------------------------------------------------------------------

| epoch 2 | time: 0.92s | valid_acc 0.869 valid_loss 0.016 | lr 5.000000

---------------------------------------------------------------------

| epoch 3 | 50/ 152 batches | train_acc 0.858 train_loss 0.01588

| epoch 3 | 100/ 152 batches | train_acc 0.833 train_loss 0.02008

| epoch 3 | 150/ 152 batches | train_acc 0.864 train_loss 0.01813

---------------------------------------------------------------------

| epoch 3 | time: 0.86s | valid_acc 0.835 valid_loss 0.023 | lr 5.000000

---------------------------------------------------------------------

| epoch 4 | 50/ 152 batches | train_acc 0.883 train_loss 0.01309

| epoch 4 | 100/ 152 batches | train_acc 0.899 train_loss 0.00996

| epoch 4 | 150/ 152 batches | train_acc 0.895 train_loss 0.00927

---------------------------------------------------------------------

| epoch 4 | time: 0.87s | valid_acc 0.888 valid_loss 0.011 | lr 0.500000

---------------------------------------------------------------------

| epoch 5 | 50/ 152 batches | train_acc 0.906 train_loss 0.00834

...

| epoch 30 | 150/ 152 batches | train_acc 0.900 train_loss 0.00717

---------------------------------------------------------------------

| epoch 30 | time: 0.92s | valid_acc 0.886 valid_loss 0.010 | lr 0.000000

---------------------------------------------------------------------
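
The learning rate in this log stays at 5 for three epochs and only drops to 0.5 at epoch 4, even though the accuracy dip happens at epoch 3. The sketch below replays the loop's decision rule in plain Python to show why: the rate is read before the scheduler is stepped, so a decay only shows up in the next epoch's line. `simulate_lr` is a hypothetical helper written for this illustration, not part of the training code.

```python
def simulate_lr(val_accs, lr=5.0, gamma=0.1):
    """Return the learning rate reported at each epoch for a list of validation accuracies."""
    best = None
    reported = []
    for acc in val_accs:
        reported.append(lr)      # the loop reads lr before any decay
        if best is not None and best > acc:
            lr *= gamma          # accuracy dropped: decay the learning rate
        else:
            best = acc           # otherwise remember the new best accuracy
    return reported

# Validation accuracies of epochs 1-4, taken from the log
reported = simulate_lr([0.819, 0.869, 0.835, 0.888])
print(reported)
```

With gamma set to 0.1, each further drop in validation accuracy divides the rate by ten, which is consistent with the rate printing as 0.000000 by epoch 30.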

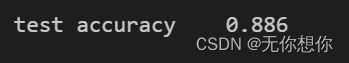

    # No separate test set is used here: this re-evaluates the validation split
    test_acc, test_loss = evaluate(valid_dataloader)
    print('test accuracy {:8.3f}'.format(test_acc))

Prediction

    def predict(text, text_pipeline):
        with torch.no_grad():
            # Vectorize the text and move it to the same device as the model
            text = torch.tensor(text_pipeline(text), dtype=torch.float32).to(device)
            print(text.shape)
            output = model(text)
            return output.argmax(1).item()

    ex_text_str = "随便播放一首专辑阁楼里的佛里的歌"
    #ex_text_str = "还有双鸭山到淮阴的汽车票吗13号的"

    model = model.to(device)

    print("Predicted category: %s" % label_name[predict(ex_text_str, text_pipeline)])

Output:

    torch.Size([1, 100])
    Predicted category: Music-Play
