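ResNet is a deep neural network architecture that uses residual blocks to alleviate the vanishing-gradient problem that appears as networks grow deeper, which makes much deeper networks trainable. The core idea is a shortcut connection that skips over several layers: the input to those layers is added directly to their output, so both the signal and its gradient have a direct path to the later layers. ResNet also applies batch normalization after each convolution, which, together with the residual connections, makes deep networks easier to train and optimize and leads to strong image-recognition performance.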
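To make the gradient argument concrete, here is a toy sketch in plain Python. It is not part of the original post: it assumes scalar "layers" of the form f(x) = w * x with a small weight w (the function names and the values of w and depth are made up for illustration) and compares the end-to-end gradient of a plain stack with that of a residual stack.

```python
# Toy scalar model (illustrative assumption): each layer computes f(x) = w * x.
# Plain stack:    x_next = f(x)       -> d(x_next)/dx = w
# Residual stack: x_next = x + f(x)   -> d(x_next)/dx = 1 + w


def plain_gradient(w: float, depth: int) -> float:
    """Gradient of the output w.r.t. the input through `depth` plain layers."""
    grad = 1.0
    for _ in range(depth):
        grad *= w  # chain rule: multiply the per-layer derivatives
    return grad


def residual_gradient(w: float, depth: int) -> float:
    """Same quantity when every layer has an identity shortcut."""
    grad = 1.0
    for _ in range(depth):
        grad *= 1.0 + w  # the shortcut contributes the constant 1
    return grad


w, depth = 0.01, 20
print("plain:   ", plain_gradient(w, depth))     # shrinks towards zero
print("residual:", residual_gradient(w, depth))  # stays close to 1
```

With small weights the plain product collapses towards zero, while the shortcut keeps every per-layer factor near 1. Real networks use matrices and nonlinearities, so this only illustrates the trend.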

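For cat-vs-dog prediction with PyTorch, the torchvision.models module ships a range of classic convolutional architectures, ResNet among them, and these models can be used for training and prediction on images. The code below instead builds a small ResNet by hand from residual blocks. Hyperparameters and training settings such as the learning rate and the number of epochs can be tuned to improve the results. Training uses the cross-entropy loss with the Adam optimizer and runs on a GPU when one is available. Finally, the trained model is used to predict whether a new image shows a cat or a dog.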

Code:

# %matplotlib inline  (Jupyter magic; only needed when plotting in a notebook)
import torch
import torchvision
from torch import nn
from torch.utils import data
from torchvision import transforms

# Preprocessing: random crop resized to 150x150, then normalize with the ImageNet mean/std
transform = transforms.Compose(
    [
        transforms.RandomResizedCrop(150),
        transforms.ToTensor(),
        transforms.Normalize(mean=[0.485, 0.456, 0.406],
                             std=[0.229, 0.224, 0.225])
    ]
)

train_data = torchvision.datasets.ImageFolder('D:\\Jupyter Notebook\\Pytorch入门\\catsdogs\\train', transform=transform)
valid_data = torchvision.datasets.ImageFolder('D:\\Jupyter Notebook\\Pytorch入门\\catsdogs\\test', transform=transform)

# Set up the data iterators
batch_size = 32
train_iter = data.DataLoader(train_data, batch_size, shuffle=True, num_workers=0)

valid_iter = data.DataLoader(valid_data, batch_size, shuffle=False, num_workers=0)

class Residual(nn.Module):  #@save
    """Residual block: two 3x3 convolutions plus a shortcut connection."""
    def __init__(self, input_channels, num_channels,
                 use_1x1conv=False, strides=1):
        super().__init__()
        self.conv1 = nn.Conv2d(input_channels, num_channels,
                               kernel_size=3, padding=1, stride=strides)
        self.conv2 = nn.Conv2d(num_channels, num_channels,
                               kernel_size=3, padding=1)
        if use_1x1conv:
            # 1x1 convolution so the shortcut matches the main path's channels and stride
            self.conv3 = nn.Conv2d(input_channels, num_channels,
                                   kernel_size=1, stride=strides)
        else:
            self.conv3 = None
        self.bn1 = nn.BatchNorm2d(num_channels)
        self.bn2 = nn.BatchNorm2d(num_channels)
        self.relu1 = nn.ReLU()
        self.relu2 = nn.ReLU()

    def forward(self, X):
        Y = self.relu1(self.bn1(self.conv1(X)))
        Y = self.bn2(self.conv2(Y))
        if self.conv3 is not None:
            X = self.conv3(X)
        Y += X  # add the shortcut before the final activation
        return self.relu2(Y)

# Stem: 7x7 convolution, batch norm, ReLU, then 3x3 max pooling
b1 = nn.Sequential(nn.Conv2d(3, 64, kernel_size=7, stride=2, padding=3),
                   nn.BatchNorm2d(64), nn.ReLU(),
                   nn.MaxPool2d(kernel_size=3, stride=2, padding=1))

def resnet_block(input_channels, num_channels, num_residuals,
                 first_block=False):
    blk = []
    for i in range(num_residuals):
        if i == 0 and not first_block:
            # The first block of a stage halves the spatial size and changes the channel count
            blk.append(Residual(input_channels, num_channels,
                                use_1x1conv=True, strides=2))
        else:
            blk.append(Residual(num_channels, num_channels))
    return blk

b2 = nn.Sequential(*resnet_block(64, 64, 2, first_block=True))

b3 = nn.Sequential(*resnet_block(64, 512, 2))

Resnet_net = nn.Sequential(b1, b2, b3,
                           nn.AdaptiveAvgPool2d((1, 1)),
                           nn.Flatten(), nn.Linear(512, 2))

X = torch.rand(size=(32, 3, 150, 150))
for layer in Resnet_net:
    X = layer(X)
    print(layer.__class__.__name__, 'output shape:\t', X.shape)

lr = 1e-4

device = torch.device("cuda" if torch.cuda.is_available() else "cpu")
model = Resnet_net.to(device)
optimizer = torch.optim.Adam(model.parameters(), lr=lr)
loss_fn = nn.CrossEntropyLoss().to(device)
print(device)

def train(model, device, train_iter, optimizer, loss_fn, epochs):
    total_train_step = 0
    for epoch in range(epochs):
        print("Epoch {} training started".format(epoch + 1))
        model.train()
        for idx, (images, target) in enumerate(train_iter):
            images, target = images.to(device), target.to(device)
            pred = model(images)
            loss = loss_fn(pred, target)
            optimizer.zero_grad()
            loss.backward()
            optimizer.step()
            total_train_step = total_train_step + 1
            # Log the loss every 10 training steps
            if total_train_step % 10 == 0:
                print("Training step: {}, Loss: {}".format(total_train_step, loss.item()))

def test(model,device,test_iter,loss_fn):

total_test_step = 0

total_test_loss = 0

total_accuracy = 0

model.eval()

correct = 0

with torch.no_grad():

for idx,(data,target) in enumerate(test_iter):

data,target = data.to(device),target.to(device)

output = model(data)

loss = loss_fn(output,target)

total_test_loss = total_test_loss + loss.item()#计算测试Loss

#计算精确度

accuracy = (output.argmax(1) == target).sum()

total_accuracy = total_accuracy + accuracy

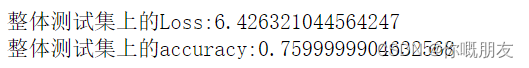

print("整体测试集上的Loss:{}".format(total_test_loss))

print("整体测试集上的accuracy:{}".format(total_accuracy/len(valid_data)))

# acc=correct/len(valid_data)num_epochs=30

import time

# Time the training run, then evaluate on the validation set
begin_time = time.time()
print(time.ctime(begin_time))
train(model, device, train_iter, optimizer, loss_fn, num_epochs)
end_time = time.time()
print(time.ctime(end_time))

test(model, device, valid_iter, loss_fn)
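The last step described at the start of this post, predicting whether a new image is a cat or a dog, is not shown in the code above. A minimal sketch might look like the following. The `predict` helper, the `class_names` list and the stand-in model are hypothetical and exist only so the example runs on its own. In practice the trained network would be passed in, the image would go through a deterministic version of the preprocessing used for training, and the class names would probably come from `train_data.classes`, which ImageFolder fills from the sorted folder names.

```python
import torch
from torch import nn


def predict(model, image, class_names, device="cpu"):
    """Return (label, probability) for one preprocessed image tensor of shape (C, H, W).

    Hypothetical helper, not part of the original post.
    """
    model.eval()
    with torch.no_grad():
        logits = model(image.unsqueeze(0).to(device))  # add the batch dimension
        probs = torch.softmax(logits, dim=1)[0]        # class probabilities
    idx = int(probs.argmax())
    return class_names[idx], float(probs[idx])


# Stand-in model so the sketch is self-contained; replace it with the trained network.
stand_in = nn.Sequential(nn.AdaptiveAvgPool2d((1, 1)), nn.Flatten(), nn.Linear(3, 2))

# Random tensor standing in for one preprocessed 150x150 RGB image.
label, prob = predict(stand_in, torch.rand(3, 150, 150), ["cat", "dog"])
print(label, round(prob, 3))
```

The order of `class_names` has to match the label indices used during training, otherwise the predicted labels are swapped.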
