文章目录

- 27 spark 核心模块RDD

-

- 1.RDD基本概念

- 2.RDD的创建以及操作方式

-

- 2.1 RDD的创建三种方式

- 2.2 RDD的编程常用API

-

- 2.2.1 RDD的算子分类 重点

- 2.2.2 transfermation算子(27个)

-

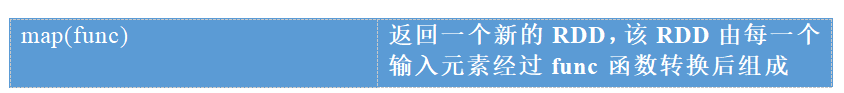

- ==1.map(func)==

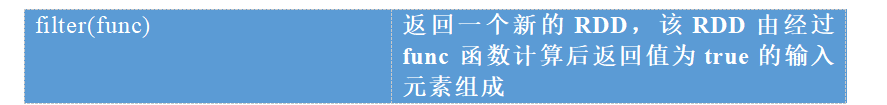

- ==2.filter(func)==

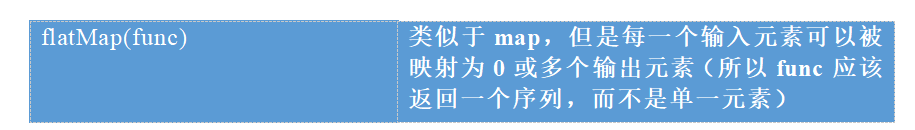

- ==3.flatMap(func)==

- ==4.mapPartitions(func)==

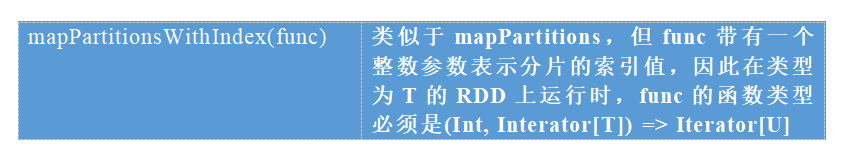

- 5.mapPartitionsWithIndex(func)

- 6.sample(withReplacement, fraction, seed)

- 7.takeSample

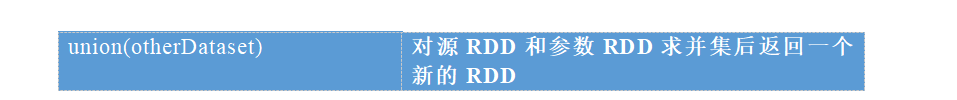

- ==8.union(otherDataset)==

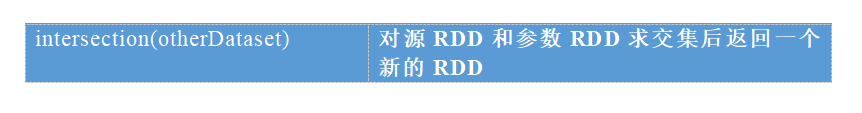

- ==9.intersection(otherDataset)==

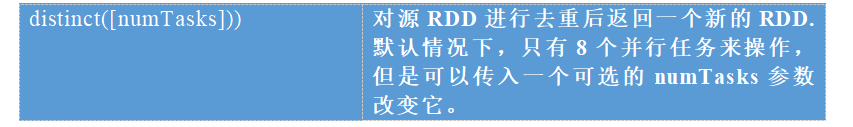

- ==10.distinct([numTasks]))==

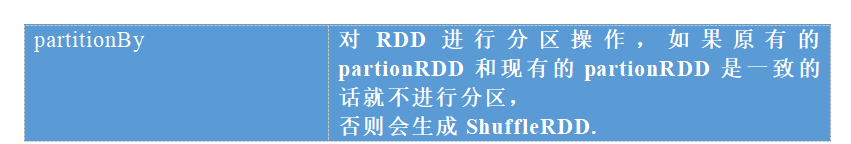

- ==11.partitionBy==

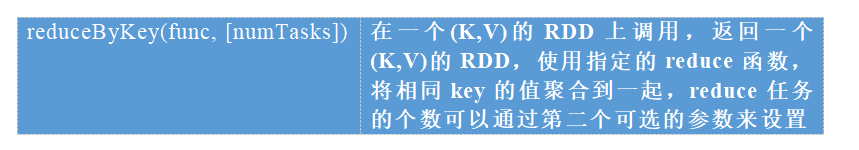

- ==12.reduceByKey(func, [numTasks])==

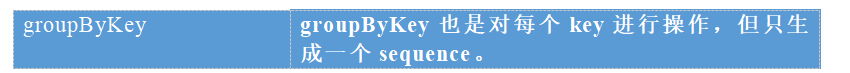

- ==13.groupByKey==

- 14.combineByKey[C]

- 15.aggregateByKey(zeroValue:U,[partitioner: Partitioner]) (seqOp: (U, V) => U,combOp: (U, U) => U)

- 16.foldByKey(zeroValue: V)(func: (V, V) => V): RDD[(K, V)]

- ==17.sortByKey([ascending], [numTasks])==

- ==18.sortBy(func,[ascending], [numTasks])==

- ==19.join(otherDataset, [numTasks])==

- 20.cogroup(otherDataset, [numTasks])

- 21.cartesian(otherDataset)

- 22.pipe(command, [envVars])

- ==23.coalesce(numPartitions)==

- 24.repartitionAndSortWithinPartitions(partitioner)

- 25.repartition(numPartitions)

- 26.repartitionAndSortWithinPartitions(partitioner)

- 27.glom

- ==28.mapValues==

- 29.subtract

- 重新分区总结

- 2.2.3 常用的action操作示例(13个)

-

- 1.reduce(func)

- 2.collect()

- 3.count()

- 4.first()

- 5.take(n)

- 6.takeSample(withReplacement,num, [seed])

- 6.takeOrdered(n)

- 7.aggregate (zeroValue: U)(seqOp: (U, T) ⇒ U, combOp: (U, U) ⇒ U)

- 8.fold(num)(func)

- 9.saveAsTextFile(path)

- 10.saveAsSequenceFile(path)

- 11.saveAsObjectFile(path)

- 12.countByKey()

- 13.foreach(func)

- 14.数值RDD统计操作

- 3.RDD常用算子操作练习

- 4.通过spark实现点击流日志分析案例 搞定

- 5.通过Spark实现ip地址查询 搞定

- 6.RDD的依赖关系

- 7.RDD的缓存

- 8.DAG的生成以及shuffle的过程

- 9.Spark任务调度

- 10.RDD容错机制之checkpoint

- 11.Spark运行架构

- 12.数据读取与保存主要方式 了解

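27 Spark Core Module: RDD

1. RDD Basic Concepts

RDD: Resilient Distributed Dataset

Resilient: data is kept in memory for processing whenever possible; when memory runs short, it is spilled to disk and processed from there.

Distributed: the data of one logical file is spread across multiple servers.

Dataset: can be thought of simply as a collection.

Characteristics: an RDD is immutable and partitioned, and its elements can be computed in parallel.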

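1.1 Why RDDs Exist

First-generation compute engine: MapReduce (MR). Data analysis and processing are implemented in code. Drawback: it takes a lot of code, and the complexity is high.

Second-generation compute engine: Hive. Analysis is written in HQL, which gets rid of the verbose MR code. Drawback: execution is slow.

Third-generation compute engines: in-memory computing frameworks led by Spark, Impala, and others, which keep as much data as possible in memory during computation.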

1.2 The Five Properties of an RDD

- A list of partitions

- A function for computing each split: the function applied to every split (partition) of the data

- A list of dependencies on other RDDs

- Optionally, a Partitioner for key-value RDDs (e.g. to say that the RDD is hash-partitioned)

- Optionally, a list of preferred locations to compute each split on (e.g. block locations for an HDFS file). Data locality is taken into account because moving computation is cheaper than moving data.

Spark tries to launch each task on the server that already holds the file block, avoiding data copies where possible.

Revisit the Spark word count to look at the RDD properties and partitioning rules:

sc.textFile("file:///export/servers/sparkdatas/wordcount.txt").flatMap(_.split(" ")).map((_,1)).reduceByKey(_ + _).collect
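The five properties can be inspected directly on an RDD. The following is a minimal sketch rather than part of the original walkthrough: it assumes a local master and replaces `wordcount.txt` with a small in-memory collection, and the value names (`lines`, `counts`, and so on) are illustrative only.

```scala
import org.apache.spark.{HashPartitioner, SparkConf, SparkContext}

// Assumption: local mode with 2 threads; inside spark-shell this reuses the existing context.
val sc = SparkContext.getOrCreate(
  new SparkConf().setMaster("local[2]").setAppName("rdd-properties"))

// Assumption: a small in-memory data set stands in for wordcount.txt.
val lines = sc.parallelize(Seq("hello spark", "hello rdd"), numSlices = 2)
val counts = lines.flatMap(_.split(" ")).map((_, 1)).reduceByKey(_ + _)

// Property 1: the list of partitions.
val numPartitions = counts.partitions.length
// Property 3: dependencies on parent RDDs (reduceByKey adds a shuffle dependency).
val deps = counts.dependencies
// Property 4: the optional partitioner of a key-value RDD.
val partitioner = counts.partitioner
// Property 5: the optional preferred locations of a partition.
val locations = lines.preferredLocations(lines.partitions(0))

// The lineage (property 2 applied along the chain of parents) in readable form.
println(counts.toDebugString)
val wordCounts = counts.collect().toMap
```

For an in-memory collection there is no data locality to report, so `locations` is expected to be empty; with an HDFS-backed file it would list the hosts that hold the block.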

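1.3 RDD resilience:

1. Automatic switching between memory and disk storage

2. Efficient fault tolerance based on lineage; the lineage records the dependencies between RDDs

3. Failed tasks are retried automatically

4. Failed stages are retried automatically

5. Checkpointing persists the data durably

1.4 RDD characteristics:

Partitioned: an RDD consists of multiple partitions

Read-only: the data in an RDD is read-only and cannot be modified

Dependencies: RDDs depend on one another

Cache: frequently used RDDs can be cached

Checkpoint: persistence; frequently used RDDs can also be persisted with a checkpoint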
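Caching and checkpointing can be sketched as follows. This is an illustration under assumptions, not the author's code: the checkpoint directory is a temporary local folder (on a cluster it would normally be a reliable store such as HDFS), and the `squares` RDD is made up for the example.

```scala
import java.nio.file.Files
import org.apache.spark.{SparkConf, SparkContext}
import org.apache.spark.storage.StorageLevel

// Assumption: local mode with 2 threads; inside spark-shell this reuses the existing context.
val sc = SparkContext.getOrCreate(
  new SparkConf().setMaster("local[2]").setAppName("cache-checkpoint"))

// Assumption: a temporary local directory is good enough for a local demo.
sc.setCheckpointDir(Files.createTempDirectory("rdd-checkpoint").toString)

val squares = sc.parallelize(1 to 100).map(n => n * n)

// Keep the data in memory and fall back to disk when memory is short.
squares.persist(StorageLevel.MEMORY_AND_DISK)
// Mark the RDD for checkpointing; this must happen before the first action.
squares.checkpoint()

// The first action computes the RDD, caches it, and then writes the checkpoint.
val total = squares.sum()
val checkpointed = squares.isCheckpointed
```

Persisting before checkpointing is a common choice because the checkpoint job can then read the cached data instead of recomputing the RDD.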

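2. Creating and Operating on RDDs

2.1 Three Ways to Create an RDD

First way: create an RDD from an existing collection

val rdd1 = sc.parallelize(Array(1,2,3,4,5,6,7,8))

Second way: create an RDD from a file in external storage

This covers the local file system as well as every data source supported by Hadoop, such as HDFS, Cassandra, and HBase.

val rdd2 = sc.textFile("/words.txt")

Third way: derive a new RDD from an existing RDD by applying a transformation operator

val rdd3 = rdd2.flatMap(_.split(" "))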

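2.2 Common RDD Programming APIs

2.2.1 Operator Categories (key point)

Operators fall into two broad categories:

1. transformation: operators that convert the data into a new RDD

2. action: operators that trigger execution of the whole job

All transformation operators are lazy. Calling a transformation in code does not run anything immediately; it only records the lineage. The whole job is triggered only when an action is actually called.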
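Lazy evaluation can be observed with an accumulator that counts how often the mapping function runs. This is a minimal sketch assuming a local master; the accumulator name and the value names are illustrative.

```scala
import org.apache.spark.{SparkConf, SparkContext}

// Assumption: local mode with 2 threads; inside spark-shell this reuses the existing context.
val sc = SparkContext.getOrCreate(
  new SparkConf().setMaster("local[2]").setAppName("lazy-evaluation"))

// Counts how many times the function passed to map is invoked.
val calls = sc.longAccumulator("map calls")

// Transformation: only the lineage is recorded here, nothing is computed yet.
val doubled = sc.parallelize(1 to 4).map { n => calls.add(1); n * 2 }
val callsBeforeAction = calls.sum

// Action: triggers the job, so the map function now runs once per element.
val result = doubled.collect()
val callsAfterAction = calls.sum
```

In this run `callsBeforeAction` stays at 0 and `callsAfterAction` reaches 4, which matches the number of elements.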

2.2.2 Transformation Operators (27)

1.map(func)
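A short sketch of `map`, assuming a local master and a made-up list of words: the function is applied to every element and produces exactly one output element per input element.

```scala
import org.apache.spark.{SparkConf, SparkContext}

// Assumption: local mode with 2 threads; inside spark-shell this reuses the existing context.
val sc = SparkContext.getOrCreate(
  new SparkConf().setMaster("local[2]").setAppName("map-demo"))

val words = sc.parallelize(Seq("spark", "rdd", "scala"))
// One output element for each input element: the length of the word.
val lengths = words.map(_.length).collect()
```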

2.filter(func)
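A short sketch of `filter`, assuming a local master: only the elements for which the predicate returns true are kept.

```scala
import org.apache.spark.{SparkConf, SparkContext}

// Assumption: local mode with 2 threads; inside spark-shell this reuses the existing context.
val sc = SparkContext.getOrCreate(
  new SparkConf().setMaster("local[2]").setAppName("filter-demo"))

val nums = sc.parallelize(1 to 10)
// Keep only the even numbers.
val evens = nums.filter(_ % 2 == 0).collect()
```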

3.flatMap(func)
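A short sketch of `flatMap`, assuming a local master and two made-up input lines: each input element may yield zero or more output elements, and the results are flattened into one RDD.

```scala
import org.apache.spark.{SparkConf, SparkContext}

// Assumption: local mode with 2 threads; inside spark-shell this reuses the existing context.
val sc = SparkContext.getOrCreate(
  new SparkConf().setMaster("local[2]").setAppName("flatmap-demo"))

val lines = sc.parallelize(Seq("hello spark", "hello rdd"))
// Each line is split into words; the per-line arrays are flattened.
val words = lines.flatMap(_.split(" ")).collect()
```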

4.mapPartitions(func)

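map: takes the records of a partition one at a time; the function is called once per record.

mapPartitions: receives all records of a partition at once as an iterator; the function is called once per partition. This is typically more efficient when each call carries setup cost, for example opening a connection.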

scala> val rdd = sc.parallelize(List(("kpop","female"),("zorro","male"),("mobin","male"),("lucy","female")))

rdd: org.apache.spark.rdd.RDD[(String, String)] = ParallelCollectionRDD[16] at parallelize at <console>:24

scala> :paste

// Entering paste mode (ctrl-D to finish)

def partitionsFun(iter : Iterator[(String,String)]) : Iterator[String] = {

var woman = List[String]()

while (iter.hasNext){

val next = iter.next()

next match {

case (_,"female") => woman = next._1 :: woman

case _ =>

}

}

woman.iterator

}

// Exiting paste mode, now interpreting.

partitionsFun: (iter: Iterator[(String, String)])Iterator[String]

scala> val result = rdd.mapPartitions(partitionsFun)

result: org.apache.spark.rdd.RDD[String] = MapPartitionsRDD[17] at mapPartitions at <console>:28

scala> result.collect()

res13: Array[String] = Array(kpop, lucy)
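The same filtering can be written more compactly with `Iterator.collect`, which also avoids building an intermediate list per partition. This is a sketch, not the original author's version; it assumes a local master and fixes the number of partitions at 2 so that the per-partition call count is predictable.

```scala
import org.apache.spark.{SparkConf, SparkContext}

// Assumption: local mode with 2 threads; inside spark-shell this reuses the existing context.
val sc = SparkContext.getOrCreate(
  new SparkConf().setMaster("local[2]").setAppName("mappartitions-demo"))

val people = sc.parallelize(
  List(("kpop", "female"), ("zorro", "male"), ("mobin", "male"), ("lucy", "female")), 2)

// The function receives one iterator per partition; collect filters and maps lazily.
val women = people.mapPartitions(iter => iter.collect { case (name, "female") => name }).collect()

// Count how often the partition function itself is invoked: once per partition.
val partitionCalls = sc.longAccumulator("partition calls")
people.mapPartitions { iter => partitionCalls.add(1); iter }.count()
val callsPerJob = partitionCalls.sum
```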

5.mapPartitionsWithIndex(func)

scala> val rdd = sc.parallelize(List(("kpop","female"),("zorro","male"),("mobin","male"),("lucy","female")))

rdd: org.apache.spark.rdd.RDD[(String, String)] = ParallelCollectionRDD[18] at parallelize at <console>:24

scala> :paste

// Entering paste mode (ctrl-D to finish)

def partitionsFun(index : Int, iter : Iterator[(String,String)]) : Iterator[String] = {

var woman = List[String]()

while (iter.hasNext){

val next = iter.next()

next match {

case (_,"female") => woman = "["+index+"]"+next._1 :: woman

case _ =>

}

}

woman.iterator

}

// Exiting paste mode, now interpreting.

partitionsFun: (index: Int, iter: Iterator[(String, String)])Iterator[String]

scala> val result = rdd.mapPartitionsWithIndex(partitionsFun)

result: org.apache.spark.rdd.RDD[String] = MapPartitionsRDD[19] at mapPartitionsWithIndex at <console>:28

scala> result.collect()

res14: Array[String] = Array([0]kpop, [3]lucy)

6.sample(withReplacement, fraction, seed)

scala> val rdd = sc.parallelize(1 to 10)

rdd: org.apache.spark.rdd.RDD[Int] = ParallelCollectionRDD[20] at parallelize at <console>:24

scala> rdd.collect()

res15: Array[Int] = Array(1, 2, 3, 4, 5, 6, 7, 8, 9, 10)

scala> var sample1 = rdd.sample(true,0.4,2)

sample1: org.apache.spark.rdd.RDD[Int] = PartitionwiseSampledRDD[21] at sample at <console>:26

scala> sample1.collect()

res16: Array[Int] = Array(1, 2, 2)

scala> var sample2 = rdd.sample(false,0.2,3)

sample2: org.apache.spark.rdd.RDD[Int] = PartitionwiseSampledRDD[22] at sample at <console>:26

scala> sample2.collect()

res17: Array[Int] = Array(1, 9)
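The three parameters of `sample` can be illustrated as follows: `withReplacement` decides whether an element may be picked more than once, `fraction` is the expected share of elements (not an exact count), and `seed` makes the result repeatable. This is a minimal sketch assuming a local master; the data and seed values are arbitrary.

```scala
import org.apache.spark.{SparkConf, SparkContext}

// Assumption: local mode with 2 threads; inside spark-shell this reuses the existing context.
val sc = SparkContext.getOrCreate(
  new SparkConf().setMaster("local[2]").setAppName("sample-demo"))

val nums = sc.parallelize(1 to 1000, 4)

// Same seed and same partitioning: the two samples contain the same elements.
val first = nums.sample(withReplacement = false, fraction = 0.1, seed = 42L).collect()
val second = nums.sample(withReplacement = false, fraction = 0.1, seed = 42L).collect()

// With replacement, an element may appear several times and fraction may exceed 1.
val repeated = nums.sample(withReplacement = true, fraction = 2.0, seed = 42L).collect()
```

The size of `first` is only close to 100, because `fraction` is a per-element probability rather than a fixed quota.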

7.takeSample
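A short sketch of `takeSample`, assuming a local master. Unlike `sample`, it takes an exact element count instead of a fraction and returns a local `Array` rather than an RDD, so in the Spark API it behaves as an action even though it is listed here next to `sample`.

```scala
import org.apache.spark.{SparkConf, SparkContext}

// Assumption: local mode with 2 threads; inside spark-shell this reuses the existing context.
val sc = SparkContext.getOrCreate(
  new SparkConf().setMaster("local[2]").setAppName("takesample-demo"))

val nums = sc.parallelize(1 to 10)
// Exactly 3 elements, without replacement; the seed value is arbitrary.
val picked = nums.takeSample(withReplacement = false, num = 3, seed = 7L)
```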

8.union(otherDataset)

scala> val rdd1 = sc.parallelize(1 to 5)

rdd1: org.apache.spark.rdd.RDD[Int] = ParallelCollectionRDD[23] at parallelize at <console>:24

scala> val rdd2 = sc.parallelize(5 to 10)

rdd2: org.apache.spark.rdd.RDD[Int] = ParallelCollectionRDD[24] at parallelize at <console>:24

scala> val rdd3 = rdd1.union(rdd2)

rdd3: org.apache.spark.rdd.RDD[Int] = UnionRDD[25] at union at <console>:28

scala> rdd3.collect()

res18: Array[Int] = Array(1, 2, 3, 4, 5, 5, 6, 7, 8, 9, 10)

9.intersection(otherDataset)

scala> val rdd1 = sc.parallelize(1 to 7)

rdd1: org.apache.spark.rdd.RDD[Int] = ParallelCollectionRDD[26] at parallelize at <console>:24

scala> val rdd2 = sc.parallelize(5 to 10)

rdd2: org.apache.spark.rdd.RDD[Int] = ParallelCollectionRDD[27] at parallelize at <console>:24

scala> val rdd3 = rdd1.intersection(rdd2)

rdd3: org.apache.spark.rdd.RDD[Int] = MapPartitionsRDD[33] at intersection at <console>:28

scala> rdd3.collect()

res19: Array[Int] = Array(5, 6, 7)

10.distinct([numTasks])

scala> val distinctRdd = sc.parallelize(List(1,2,1,5,2,9,6,1))

distinctRdd: org.apache.spark.rdd.RDD[Int] = ParallelCollectionRDD[34] at parallelize at <console>:24

scala> val unionRDD = distinctRdd.distinct()

unionRDD: org.apache.spark.rdd.RDD[Int] = MapPartitionsRDD[37] at distinct at <console>:26

scala> unionRDD.collect()

res20: Array[Int] = Array(1, 9, 5, 6, 2)

scala> val unionRDD = distinctRdd.distinct(2)

unionRDD: org.apache.spark.rdd.RDD[Int] = MapPartitionsRDD[40] at distinct at <console>:26

scala> unionRDD.collect()

res21: Array[Int] = Array(6, 2, 1, 9, 5)

11.partitionBy

scala> val rdd = sc.parallelize(Array((1,"aaa"),(2,"bbb"),(3,"ccc"),(4,"ddd")),4) // use 4 partitions

rdd: org.apache.spark.rdd.RDD[(Int, String)] = ParallelCollectionRDD[44] at parallelize at <console>:24

scala> rdd.partitions.size

res24: Int = 4

scala> var rdd2 = rdd.partitionBy(new org.apache.spark.HashPartitioner(2))

rdd2: org.apache.spark.rdd.RDD[(Int, String)] = ShuffledRDD[45] at partitionBy at <console>:26

scala> rdd2.partitions.size

res25: Int = 2

The partitionBy process
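Where each key ends up after `partitionBy` can be checked with `mapPartitionsWithIndex`. This is a minimal sketch assuming a local master; it relies on `HashPartitioner` assigning a key to the partition given by its hash code modulo the number of partitions, and for an `Int` key the hash code is the value itself.

```scala
import org.apache.spark.{HashPartitioner, SparkConf, SparkContext}

// Assumption: local mode with 2 threads; inside spark-shell this reuses the existing context.
val sc = SparkContext.getOrCreate(
  new SparkConf().setMaster("local[2]").setAppName("partitionby-demo"))

val pairs = sc.parallelize(Array((1, "aaa"), (2, "bbb"), (3, "ccc"), (4, "ddd")), 4)
val byHash = pairs.partitionBy(new HashPartitioner(2))

// Tag every key with the index of the partition it landed in.
val layout = byHash
  .mapPartitionsWithIndex((idx, iter) => iter.map { case (key, _) => (idx, key) })
  .collect()
  .toSet
```

Even keys land in partition 0 and odd keys in partition 1, so the four original partitions are shuffled into two.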

12.reduceByKey(func, [numTasks])

scala> val rdd = sc.parallelize(List(("female",1),("male",5),("female",5),("male",2)))

rdd: org.apache.spark.rdd.RDD[(String, Int)] = ParallelCollectionRDD[46] at parallelize at <console>:24

scala> val reduce = rdd.reduceByKey((x,y) => x+y)

reduce: org.apache.spark.rdd.RDD[(String, Int)] = ShuffledRDD[47] at reduceByKey at <console>:26

scala> reduce.collect()

res29: Array[(String, Int)] = Array((female,6), (male,7))

This is comparable to the combiner in MapReduce: the data is partially aggregated on the map task side before the shuffle.
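The map-side aggregation can be made visible by reading the `mapSideCombine` flag of the shuffle dependency and comparing `reduceByKey` with `groupByKey`. This is a sketch assuming a local master; `combinesOnMapSide` is a hypothetical helper written for this example, not a Spark API.

```scala
import org.apache.spark.{Dependency, ShuffleDependency, SparkConf, SparkContext}

// Assumption: local mode with 2 threads; inside spark-shell this reuses the existing context.
val sc = SparkContext.getOrCreate(
  new SparkConf().setMaster("local[2]").setAppName("reducebykey-demo"))

val pairs = sc.parallelize(List(("female", 1), ("male", 5), ("female", 5), ("male", 2)), 2)

val reducedRdd = pairs.reduceByKey(_ + _)
val groupedRdd = pairs.groupByKey()

// Hypothetical helper: reports whether a shuffle pre-aggregates on the map side.
def combinesOnMapSide(dep: Dependency[_]): Boolean = dep match {
  case shuffle: ShuffleDependency[_, _, _] => shuffle.mapSideCombine
  case _ => false
}

val reduceCombines = combinesOnMapSide(reducedRdd.dependencies.head)
val groupCombines = combinesOnMapSide(groupedRdd.dependencies.head)

// Both approaches give the same totals; only the amount of shuffled data differs.
val reduced = reducedRdd.collect().toMap
val grouped = groupedRdd.mapValues(_.sum).collect().toMap
```

Because `reduceByKey` merges values inside each map task first, it usually sends less data through the shuffle than `groupByKey` followed by a sum.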

13.groupByKey

scala> val words = Array("one", "two", "two", "three", "three", "three")

words: Array[String] = Array(one, two, two, three, three, three)

scala> val wordPairsRDD = sc.parallelize(words).map(word => (word, 1))

wordPairsRDD: org.apache.spark.rdd.RDD[(String, Int)] = MapPartitionsRDD[4] at map at <console>:26

scala> val group = wordPairsRDD.groupByKey()

group: org.apache.spark.rdd.RDD[(String, Iterable[Int])] = ShuffledRDD[5] at groupByKey at <console>:28

scala> group.collect()

res1: Array[(String, Iterable[Int])] = Array((two,CompactBuffer(1, 1)), (one,CompactBuffer(1)), (three,CompactBuffer(1, 1, 1)))

scala> group.map(t => (t._1, t._2.sum))

res2: org.apache.spark.rdd.RDD[(String, Int)] = MapPartitionsRDD[6] at map at <console>:31

scala> res2.collect()

res3: Array[(String, Int)] = Array((two,2), (one,1), (three,3))

scala> val map = group.map(t => (t._1, t._2.sum))

map: org.apache.spark.rdd.RDD[(String, Int)]
