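I have been working on video format conversion for a while, and I recently got the conversion of a single image from YUV to RGB working. Here is how it is done.

My project is built in VS2008 and depends on a number of headers and libraries, so only the core parts of the code are listed below, not the whole project.

1. Download a file containing YUV data, or create one yourself

Download link:

YUV data file

2. Read the YUV data from the file

The key code is as follows:

2.1 Reading the file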

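#include <stdio.h>
#include <stdlib.h>
#include <string.h>

// Reads raw YUV data from `path` into a newly allocated buffer.
// The caller owns the buffer and must release it with delete[].
unsigned char * readYUV(const char *path)
{
    FILE *fp;
    unsigned char * buffer;
    // Large enough for one 1280x720 YUV 4:2:0 frame (1.5 bytes per pixel).
    long size = 1280 * 720 * 3 / 2;

    if((fp=fopen(path,"rb"))==NULL)
    {
        printf("can't open the file\n");
        exit(1);
    }
    buffer = new unsigned char[size];
    memset(buffer,'\0',size);
    // Read up to `size` bytes; a smaller file leaves the rest zero-filled.
    fread(buffer,1,size,fp);
    fclose(fp);
    return buffer;
}

2.2 Read the data and create three textures from the Y, U and V planes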

GLuint texYId;
GLuint texUId;
GLuint texVId;

void loadYUV(){
    int width ;
    int height ;
    width = 640;
    height = 480;
    unsigned char *buffer = NULL;
    buffer = readYUV("1.yuv");

    // The planes are tightly packed single-byte data, so use 1-byte row alignment.
    glPixelStorei ( GL_UNPACK_ALIGNMENT, 1 );

    // Y plane: width x height bytes at the start of the buffer.
    glGenTextures ( 1, &texYId );
    glBindTexture ( GL_TEXTURE_2D, texYId );
    glTexImage2D ( GL_TEXTURE_2D, 0, GL_LUMINANCE, width, height, 0, GL_LUMINANCE, GL_UNSIGNED_BYTE, buffer );
    glTexParameteri ( GL_TEXTURE_2D, GL_TEXTURE_MIN_FILTER, GL_LINEAR );
    glTexParameteri ( GL_TEXTURE_2D, GL_TEXTURE_MAG_FILTER, GL_LINEAR );
    glTexParameteri ( GL_TEXTURE_2D, GL_TEXTURE_WRAP_S, GL_CLAMP_TO_EDGE );
    glTexParameteri ( GL_TEXTURE_2D, GL_TEXTURE_WRAP_T, GL_CLAMP_TO_EDGE );

    // U plane: (width / 2) x (height / 2) bytes, right after the Y plane.
    glGenTextures ( 1, &texUId );
    glBindTexture ( GL_TEXTURE_2D, texUId );
    glTexImage2D ( GL_TEXTURE_2D, 0, GL_LUMINANCE, width / 2, height / 2, 0, GL_LUMINANCE, GL_UNSIGNED_BYTE, buffer + width * height);
    glTexParameteri ( GL_TEXTURE_2D, GL_TEXTURE_MIN_FILTER, GL_LINEAR );
    glTexParameteri ( GL_TEXTURE_2D, GL_TEXTURE_MAG_FILTER, GL_LINEAR );
    glTexParameteri ( GL_TEXTURE_2D, GL_TEXTURE_WRAP_S, GL_CLAMP_TO_EDGE );
    glTexParameteri ( GL_TEXTURE_2D, GL_TEXTURE_WRAP_T, GL_CLAMP_TO_EDGE );

    // V plane: same size as the U plane, right after it.
    glGenTextures ( 1, &texVId );
    glBindTexture ( GL_TEXTURE_2D, texVId );
    glTexImage2D ( GL_TEXTURE_2D, 0, GL_LUMINANCE, width / 2, height / 2, 0, GL_LUMINANCE, GL_UNSIGNED_BYTE, buffer + width * height * 5 / 4 );
    glTexParameteri ( GL_TEXTURE_2D, GL_TEXTURE_MIN_FILTER, GL_LINEAR );
    glTexParameteri ( GL_TEXTURE_2D, GL_TEXTURE_MAG_FILTER, GL_LINEAR );
    glTexParameteri ( GL_TEXTURE_2D, GL_TEXTURE_WRAP_S, GL_CLAMP_TO_EDGE );
    glTexParameteri ( GL_TEXTURE_2D, GL_TEXTURE_WRAP_T, GL_CLAMP_TO_EDGE );

    // glTexImage2D has copied the pixel data, so the buffer can be released.
    delete [] buffer;
}

In the code above, 1.yuv is the YUV data file.
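The pointer arithmetic used for the U and V planes can be checked in isolation. The following is a minimal sketch, assuming a tightly packed I420 frame with even width and height; the `I420Layout` struct and the `i420Layout` function are hypothetical helpers for illustration and are not part of the original project.

```cpp
#include <cassert>
#include <cstddef>

// Hypothetical helper (not from the original project): byte offsets of the
// three planes inside one tightly packed I420 frame.
struct I420Layout {
    std::size_t ySize;    // bytes in the Y plane
    std::size_t uvSize;   // bytes in each of the U and V planes
    std::size_t uOffset;  // start of the U plane
    std::size_t vOffset;  // start of the V plane
    std::size_t total;    // bytes in the whole frame
};

// Assumes even width and height, because 4:2:0 subsampling halves both.
I420Layout i420Layout(int width, int height)
{
    assert(width > 0 && height > 0 && width % 2 == 0 && height % 2 == 0);
    I420Layout l;
    l.ySize   = static_cast<std::size_t>(width) * height;
    l.uvSize  = l.ySize / 4;
    l.uOffset = l.ySize;
    l.vOffset = l.ySize + l.uvSize;
    l.total   = l.ySize + 2 * l.uvSize;
    return l;
}
```

For a 640x480 frame this gives a U offset of 307200 and a V offset of 384000, which are the same values as `width * height` and `width * height * 5 / 4`.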

3. Pass the textures to the shader

3.1 Vertex shader and fragment shader code

// Vertex shader: passes the position through and forwards the texture coordinate.
GLbyte vShaderStr[] =
    "attribute vec4 vPosition; \n"
    "attribute vec2 a_texCoord; \n"
    "varying vec2 tc; \n"
    "void main() \n"
    "{ \n"
    " gl_Position = vPosition; \n"
    " tc = a_texCoord; \n"
    "} \n";

// Fragment shader: samples the Y, U and V textures and converts to RGB.
GLbyte fShaderStr[] =
    "precision mediump float; \n"
    "uniform sampler2D tex_y; \n"
    "uniform sampler2D tex_u; \n"
    "uniform sampler2D tex_v; \n"
    "varying vec2 tc; \n"
    "void main() \n"
    "{ \n"
    // Remove the luma offset of 16 and scale it; center U and V around zero.
    " vec4 c = vec4((texture2D(tex_y, tc).r - 16./255.) * 1.164);\n"
    " vec4 U = vec4(texture2D(tex_u, tc).r - 128./255.);\n"
    " vec4 V = vec4(texture2D(tex_v, tc).r - 128./255.);\n"
    // Add the chroma contributions to the R, G and B channels.
    " c += V * vec4(1.596, -0.813, 0, 0);\n"
    " c += U * vec4(0, -0.392, 2.017, 0);\n"
    " c.a = 1.0;\n"
    " gl_FragColor = c;\n"
    "} \n";

The fragment shader above is derived from the YUV-to-RGB conversion formula; in other words, the conversion is performed in the shader.
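To verify the shader output for a few known pixels, the same arithmetic can be reproduced on the CPU. The sketch below assumes the coefficients are the usual BT.601 video-range ones (Y in 16..235, U and V centered at 128), which is what the constants 1.164, 1.596, 0.813, 0.392 and 2.017 suggest; `Rgb8`, `clampToByte` and `yuvToRgbBt601` are hypothetical names introduced only for this example.

```cpp
#include <cmath>

struct Rgb8 { unsigned char r, g, b; };

// Clamp to [0, 255] and round to the nearest integer.
static unsigned char clampToByte(double v)
{
    if (v < 0.0) return 0;
    if (v > 255.0) return 255;
    return static_cast<unsigned char>(std::lround(v));
}

// Same formula as the fragment shader, but on 0..255 values instead of the
// normalized 0..1 values that texture2D returns.
Rgb8 yuvToRgbBt601(unsigned char y, unsigned char u, unsigned char v)
{
    const double c = 1.164 * (y - 16);   // luma with the offset removed
    const double d = u - 128;            // centered U
    const double e = v - 128;            // centered V
    Rgb8 out;
    out.r = clampToByte(c + 1.596 * e);
    out.g = clampToByte(c - 0.392 * d - 0.813 * e);
    out.b = clampToByte(c + 2.017 * d);
    return out;
}
```

With these coefficients, (Y, U, V) = (16, 128, 128) maps to black and (235, 128, 128) maps to white, which is a quick sanity check for the texture setup.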

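4. Display the result

The result is as follows:

Note: this shader is written for OpenGL ES (see the precision qualifier in the fragment shader); shaders for desktop OpenGL differ slightly.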

--------------------------------------------------------------------------------------------------------------------------------

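The YV12 format differs from the YUV (I420) format only in where the U and V planes are stored, so keep this in mind.

Differences between YV12, I420 and YUV420P

Differences between YV12 and I420

Generally speaking, video data captured directly from a device is in RGB24 format. One RGB24 frame takes size = width × height × 3 bytes, one RGB32 frame takes size = width × height × 4 bytes, and one I420 frame (the standard YUV 4:2:0 format) takes size = width × height × 1.5 bytes.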
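The frame sizes above are simple to compute and compare. This is a small sketch; the three function names are hypothetical and the I420 size assumes even width and height.

```cpp
#include <cassert>
#include <cstddef>

// Bytes needed for one frame in each of the formats mentioned above.
std::size_t rgb24FrameBytes(int width, int height)
{
    assert(width > 0 && height > 0);
    return static_cast<std::size_t>(width) * height * 3;
}

std::size_t rgb32FrameBytes(int width, int height)
{
    assert(width > 0 && height > 0);
    return static_cast<std::size_t>(width) * height * 4;
}

// Full-resolution Y plane plus two quarter-resolution chroma planes.
std::size_t i420FrameBytes(int width, int height)
{
    assert(width > 0 && height > 0);
    return static_cast<std::size_t>(width) * height * 3 / 2;
}
```

For a 1280x720 frame these return 2764800, 3686400 and 1382400 bytes respectively, so the I420 frame is exactly half the size of the RGB24 frame.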

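Once RGB24 data has been captured, it goes through a first round of compression: the color space is converted from RGB to YUV, because x264 requires standard YUV 4:2:0 input when encoding. Note, however, that although YV12 is also 4:2:0, YV12 and I420 are not the same; they differ in how the planes are laid out in memory:

YV12 : luma (rows × columns) + V (rows × columns / 4) + U (rows × columns / 4)

I420 : luma (rows × columns) + U (rows × columns / 4) + V (rows × columns / 4)

As you can see, YV12 and I420 are essentially the same; only the order of the U and V planes differs.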
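Because the two layouts differ only in plane order, converting between them is a matter of swapping the two chroma planes. The following is a minimal sketch, assuming tightly packed frames with even width and height; `swapChromaPlanes` is a hypothetical helper name, not something from x264 or the original project.

```cpp
#include <cassert>
#include <cstddef>
#include <cstring>
#include <vector>

// Returns a copy of the frame with its two chroma planes exchanged.
// Applied to YV12 it yields I420, and applied to I420 it yields YV12.
std::vector<unsigned char> swapChromaPlanes(const unsigned char* src, int width, int height)
{
    assert(src != NULL && width > 0 && height > 0);
    const std::size_t ySize = static_cast<std::size_t>(width) * height;
    const std::size_t cSize = ySize / 4;  // size of one chroma plane
    std::vector<unsigned char> dst(ySize + 2 * cSize);
    std::memcpy(dst.data(), src, ySize);                          // Y stays in place
    std::memcpy(dst.data() + ySize, src + ySize + cSize, cSize);  // second chroma plane moves first
    std::memcpy(dst.data() + ySize + cSize, src + ySize, cSize);  // first chroma plane moves second
    return dst;
}
```

Running the function twice on the same data returns the original layout, which makes it easy to test.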

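Back to the topic. After the first compression step, RGB24 -> YUV (I420), the data volume is halved, because each pixel goes from 3 bytes to an average of 1.5 bytes. The same holds for RGB24 -> YUV (YV12). However, although both halve the data, the result is noticeably worse with YV12, presumably because an encoder that expects I420 then reads the U and V planes swapped. After x264 encoding, the data volume shrinks much further. The encoded data is packetized and sent in real time over RTP. At the destination the data is extracted and decoded. The decoded data is still in YUV format, so one more conversion, YUV to RGB24, is needed before the Windows driver can handle it.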

Supplement =============

For the detailed differences between the formats, see:

Source code of a YUV player:

http://download.csdn.net/detail/leixiaohua1020/6374065

To inspect YUV data you can also download a mature YUV player, YUV Player Deluxe:

http://www.yuvplayer.com/

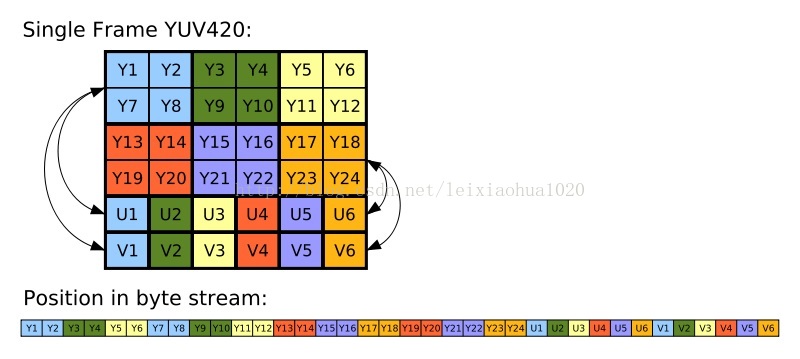

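yuv420p is the I420 format and is extremely widely used. A diagram of its layout:

[Image and Video Processing] Conversion formulas between YUV420, YV12 and RGB24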

// Converts one YV12 frame (Y plane, then V plane, then U plane) to packed BGR24.
bool YV12ToBGR24_Native(unsigned char* pYUV,unsigned char* pBGR24,int width,int height)
{
    if (width < 1 || height < 1 || pYUV == NULL || pBGR24 == NULL)
        return false;
    const long len = width * height;
    unsigned char* yData = pYUV;
    unsigned char* vData = &yData[len];
    unsigned char* uData = &vData[len >> 2];

    int bgr[3];
    int yIdx,uIdx,vIdx,idx;
    for (int i = 0;i < height;i++){
        for (int j = 0;j < width;j++){
            yIdx = i * width + j;
            // Each chroma sample is shared by a 2x2 block of pixels.
            vIdx = (i/2) * (width/2) + (j/2);
            uIdx = vIdx;

            bgr[0] = (int)(yData[yIdx] + 1.732446 * (uData[uIdx] - 128)); // B component
            bgr[1] = (int)(yData[yIdx] - 0.337633 * (uData[uIdx] - 128) - 0.698001 * (vData[vIdx] - 128)); // G component
            bgr[2] = (int)(yData[yIdx] + 1.370705 * (vData[vIdx] - 128)); // R component

            // Clamp each component to [0, 255] before storing it.
            for (int k = 0;k < 3;k++){
                idx = (i * width + j) * 3 + k;
                if(bgr[k] >= 0 && bgr[k] <= 255)
                    pBGR24[idx] = bgr[k];
                else
                    pBGR24[idx] = (bgr[k] < 0)?0:255;
            }
        }
    }
    return true;
}

The code above converts YV12 to RGB24 (stored in BGR byte order). To convert YUV420 (I420) to RGB24 instead, you only need to swap u and v.

That is:

unsigned char* uData = &yData[len];
unsigned char* vData = &uData[len >> 2];

Note: Hikvision network cameras generally output the yu12 format!

2016-9-22 19:53

张朋艺 pyZhangBIT2010@126.com

English reference material I found:

yv12 to rgb using glsl in iOS ,result image attached

https://stackoverflow.com/questions/11093061/yv12-to-rgb-using-glsl-in-ios-result-image-attached

following is my code for uploading the three planar data to textures:

- (GLuint) textureY: (Byte*)imageData
        widthType: (int) width
       heightType: (int) height
{
    GLuint texName;
    glGenTextures( 1, &texName );
    glBindTexture(GL_TEXTURE_2D, texName);
    glTexParameteri( GL_TEXTURE_2D, GL_TEXTURE_MIN_FILTER, GL_LINEAR );
    glTexParameteri( GL_TEXTURE_2D, GL_TEXTURE_MAG_FILTER, GL_LINEAR );
    glTexParameteri( GL_TEXTURE_2D, GL_TEXTURE_WRAP_S, GL_CLAMP_TO_EDGE);
    glTexParameteri( GL_TEXTURE_2D, GL_TEXTURE_WRAP_T, GL_CLAMP_TO_EDGE);
    glTexImage2D( GL_TEXTURE_2D, 0, GL_LUMINANCE, width, height, 0, GL_LUMINANCE, GL_UNSIGNED_BYTE, imageData );
    //free(imageData);
    return texName;
}

- (GLuint) textureU: (Byte*)imageData
        widthType: (int) width
       heightType: (int) height
{
    GLuint texName;
    glGenTextures( 1, &texName );
    glBindTexture(GL_TEXTURE_2D, texName);
    glTexParameteri( GL_TEXTURE_2D, GL_TEXTURE_MIN_FILTER, GL_LINEAR );
    glTexParameteri( GL_TEXTURE_2D, GL_TEXTURE_MAG_FILTER, GL_LINEAR );
    glTexParameteri( GL_TEXTURE_2D, GL_TEXTURE_WRAP_S, GL_CLAMP_TO_EDGE);
    glTexParameteri( GL_TEXTURE_2D, GL_TEXTURE_WRAP_T, GL_CLAMP_TO_EDGE);
    glTexImage2D( GL_TEXTURE_2D, 0, GL_LUMINANCE, width, height, 0, GL_LUMINANCE, GL_UNSIGNED_BYTE, imageData );
    //free(imageData);
    return texName;
}

- (GLuint) textureV: (Byte*)imageData
        widthType: (int) width
       heightType: (int) height
{
    GLuint texName;
    glGenTextures( 1, &texName );
    glBindTexture(GL_TEXTURE_2D, texName);
    glTexParameteri( GL_TEXTURE_2D, GL_TEXTURE_MIN_FILTER, GL_LINEAR );
    glTexParameteri( GL_TEXTURE_2D, GL_TEXTURE_MAG_FILTER, GL_LINEAR );
    glTexParameteri( GL_TEXTURE_2D, GL_TEXTURE_WRAP_S, GL_CLAMP_TO_EDGE);
    glTexParameteri( GL_TEXTURE_2D, GL_TEXTURE_WRAP_T, GL_CLAMP_TO_EDGE);
    glTexImage2D( GL_TEXTURE_2D, 0, GL_LUMINANCE, width, height, 0, GL_LUMINANCE, GL_UNSIGNED_BYTE, imageData );
    //free(imageData);
    return texName;
}

- (void) readYUVFile
{
    NSString *file = [[NSBundle mainBundle] pathForResource:@"video" ofType:@"yv12"];
    NSLog(@"%@",file);
    NSData* fileData = [NSData dataWithContentsOfFile:file];
    //NSLog(@"%@",[fileData description]);
    NSInteger width = 352;
    NSInteger height = 288;
    NSInteger uv_width = width / 2;
    NSInteger uv_height = height / 2;
    NSInteger dataSize = [fileData length];
    NSLog(@"%ld\n",(long)dataSize);

    GLint nYsize = width * height;
    GLint nUVsize = uv_width * uv_height;
    GLint nCbOffSet = nYsize;
    GLint nCrOffSet = nCbOffSet + nUVsize;

    Byte *spriteData = (Byte *)malloc(dataSize);
    [fileData getBytes:spriteData length:dataSize];
    Byte* uData = spriteData + nCbOffSet;
    //NSLog(@"%@\n",[[NSData dataWithBytes:uData length:nUVsize] description]);
    Byte* vData = spriteData + nCrOffSet;
    //NSLog(@"%@\n",[[NSData dataWithBytes:vData length:nUVsize] description]);

    /**
    Byte *YPlanarData = (Byte *)malloc(nYsize);
    for (int i=0; i<nYsize; i++) {
        YPlanarData[i]= spriteData[i];
    }
    Byte *UPlanarData = (Byte *)malloc(nYsize);
    for (int i=0; i<height; i++) {
        for (int j=0; j<width; j++) {
            int numInUVsize = (i/2)*uv_width+j/2;
            UPlanarData[i*width+j]=uData[numInUVsize];
        }
    }
    Byte *VPlanarData = (Byte *)malloc(nYsize);
    for (int i=0; i<height; i++) {
        for (int j=0; j<width; j++) {
            int numInUVsize = (i/2)*uv_width+j/2;
            VPlanarData[i*width+j]=vData[numInUVsize];
        }
    }
    **/

    _textureUniformY = glGetUniformLocation(programHandle, "SamplerY");
    _textureUniformU = glGetUniformLocation(programHandle, "SamplerU");
    _textureUniformV = glGetUniformLocation(programHandle, "SamplerV");
    free(spriteData);
}

and my fragment shaders code:

precision highp float;
uniform sampler2D SamplerY;
uniform sampler2D SamplerU;
uniform sampler2D SamplerV;
varying highp vec2 coordinate;

void main()
{
    highp vec3 yuv,yuv1;
    highp vec3 rgb;
    yuv.x = texture2D(SamplerY, coordinate).r;
    yuv.y = texture2D(SamplerU, coordinate).r-0.5;
    yuv.z = texture2D(SamplerV, coordinate).r-0.5 ;
    rgb = mat3( 1, 1, 1,
                0, -.34414, 1.772,
                1.402, -.71414, 0) * yuv;
    gl_FragColor = vec4(rgb, 1);
}
