Course video:

https://www.bilibili.com/video/BV1sT4y1p71V/

Course documentation:

Tutorial/langchain/readme.md at main · InternLM/Tutorial · GitHub

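1. Environment setup

Run the script to set up the InternLM environment:

All the packages the environment needs are stored in /root/share/pkgs.tar.gz. The script extracts this archive into /root/.conda/pkgs without overwriting the existing pkgs folder.

It then copies all packages and configuration from /share/conda_envs/internlm-base into a new environment named InternLM, which is activated afterwards.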

/root/share/install_conda_env_internlm_base.sh InternLM

conda activate InternLM

Then continue with the following commands:

# Upgrade pip

python -m pip install --upgrade pip

# Install the other dependencies

pip install modelscope==1.9.5

pip install transformers==4.35.2

pip install streamlit==1.24.0

pip install sentencepiece==0.1.99

pip install accelerate==0.24.1
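Because every package above is pinned to an exact version, it can be worth checking that the environment really ended up with those versions. The following is a minimal sketch using only the standard library; the helper name `find_mismatches` is made up for this note, and the pins are copied from the pip commands above.

```python
from importlib.metadata import PackageNotFoundError, version

# Pins copied from the pip commands above.
PINNED = {
    "modelscope": "1.9.5",
    "transformers": "4.35.2",
    "streamlit": "1.24.0",
    "sentencepiece": "0.1.99",
    "accelerate": "0.24.1",
}


def find_mismatches(pins):
    """Return {package: installed version or None} for every pin that is not met."""
    mismatches = {}
    for name, wanted in pins.items():
        try:
            installed = version(name)
        except PackageNotFoundError:
            installed = None  # the package is not installed at all
        if installed != wanted:
            mismatches[name] = installed
    return mismatches


if __name__ == "__main__":
    for name, installed in find_mismatches(PINNED).items():
        print(f"{name}: expected {PINNED[name]}, found {installed}")
```

If the script prints nothing, all five pins are satisfied.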

# Download the model (here it is copied from the shared directory)

mkdir -p /root/data/model/Shanghai_AI_Laboratory

cp -r /root/share/temp/model_repos/internlm-chat-7b /root/data/model/Shanghai_AI_Laboratory/internlm-chat-7b

# Install LangChain and the related packages

pip install langchain==0.0.292

pip install gradio==4.4.0

pip install chromadb==0.4.15

pip install sentence-transformers==2.2.2

pip install unstructured==0.10.30

pip install markdown==3.3.7

pip install -U huggingface_hub

Download the open-source embedding model

$ touch /root/data/download_hf.py

$ tee -a /root/data/download_hf.py << EOF

import os

# Download the model

os.system('huggingface-cli download --resume-download sentence-transformers/paraphrase-multilingual-MiniLM-L12-v2 --local-dir /root/data/model/sentence-transformer')

EOF

$ python /root/data/download_hf.py

Download the NLTK resources

cd /root

git clone https://gitee.com/yzy0612/nltk_data.git --branch gh-pages

cd nltk_data

mv packages/* ./

cd tokenizers

unzip punkt.zip

cd ../taggers

unzip averaged_perceptron_tagger.zip

Download the project code

cd /root/data

git clone https://github.com/InternLM/tutorial

2. Building the knowledge base

Download the corpus sources

# Go to the data directory

cd /root/data

# Clone the open-source repositories

git clone https://gitee.com/open-compass/opencompass.git

git clone https://gitee.com/InternLM/lmdeploy.git

git clone https://gitee.com/InternLM/xtuner.git

git clone https://gitee.com/InternLM/InternLM-XComposer.git

git clone https://gitee.com/InternLM/lagent.git

git clone https://gitee.com/InternLM/InternLM.git

Next, write a function that selects the files with a .txt or .md suffix:

import os

def get_files(dir_path):
    # args: dir_path, path of the target folder
    file_list = []
    # os.walk recursively traverses the given folder
    for filepath, dirnames, filenames in os.walk(dir_path):
        for filename in filenames:
            # Use the suffix to decide whether the file type qualifies
            if filename.endswith(".md"):
                # If it qualifies, add its full path to the result list
                file_list.append(os.path.join(filepath, filename))
            elif filename.endswith(".txt"):
                file_list.append(os.path.join(filepath, filename))
    return file_list

Call LangChain's DocumentLoader classes to load the files:

from tqdm import tqdm
from langchain.document_loaders import UnstructuredFileLoader
from langchain.document_loaders import UnstructuredMarkdownLoader

# tqdm displays a progress bar

def get_text(dir_path):
    # args: dir_path, path of the target folder
    # First call the function defined above to get the list of target file paths
    file_lst = get_files(dir_path)
    # docs holds the loaded plain-text objects
    docs = []
    # Iterate over all target files
    for one_file in tqdm(file_lst):
        file_type = one_file.split('.')[-1]
        if file_type == 'md':
            loader = UnstructuredMarkdownLoader(one_file)
        elif file_type == 'txt':
            loader = UnstructuredFileLoader(one_file)
        else:
            # Skip files that do not meet the condition
            continue
        docs.extend(loader.load())
    return docs

This gives us a list of plain-text documents.
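The two functions above amount to a suffix filter followed by a suffix-to-loader dispatch. As a rough standard-library sketch of that same logic (not the tutorial's code): `collect_files`, `loader_name` and the `LOADER_FOR_SUFFIX` table are names invented here, and the table stores loader names as strings so that the snippet runs without LangChain installed.

```python
from pathlib import Path

# Hypothetical lookup table: file suffix -> name of the loader used for it above.
LOADER_FOR_SUFFIX = {
    ".md": "UnstructuredMarkdownLoader",
    ".txt": "UnstructuredFileLoader",
}


def collect_files(dir_path, suffixes=(".md", ".txt")):
    """Recursively collect files under dir_path whose suffix is in suffixes."""
    return sorted(
        str(p) for p in Path(dir_path).rglob("*")
        if p.is_file() and p.suffix in suffixes
    )


def loader_name(path):
    """Return the loader name for a path, or None if the file should be skipped."""
    return LOADER_FOR_SUFFIX.get(Path(path).suffix)
```

A lookup table like this keeps the filter and the dispatch in one place, so supporting another file type would mean adding a single entry rather than touching two functions.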

Next, build the vector store, which mainly involves splitting the text into chunks and embedding them.
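To make "splitting with overlap" concrete, here is a deliberately simplified sketch: a fixed-size sliding window over characters. This is not the algorithm RecursiveCharacterTextSplitter uses (that splitter prefers to cut at separators such as paragraph and line breaks); the function name `split_with_overlap` is an assumption made for illustration only.

```python
def split_with_overlap(text, chunk_size, chunk_overlap):
    """Cut text into windows of chunk_size characters that overlap by chunk_overlap."""
    if not 0 <= chunk_overlap < chunk_size:
        raise ValueError("chunk_overlap must be non-negative and smaller than chunk_size")
    step = chunk_size - chunk_overlap  # how far each window advances
    chunks = []
    for start in range(0, len(text), step):
        chunks.append(text[start:start + chunk_size])
        if start + chunk_size >= len(text):
            break  # this window already reaches the end of the text
    return chunks


if __name__ == "__main__":
    for chunk in split_with_overlap("abcdefghij", chunk_size=4, chunk_overlap=2):
        print(chunk)
```

In this simplified model, a chunk size of 500 with an overlap of 150 would advance the window by 350 characters each time, so text near a chunk boundary appears in two neighbouring chunks.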

Text splitting:

from langchain.text_splitter import RecursiveCharacterTextSplitter

text_splitter = RecursiveCharacterTextSplitter(
    chunk_size=500, chunk_overlap=150)
split_docs = text_splitter.split_documents(docs)

Embedding:

from langchain.embeddings.huggingface import HuggingFaceEmbeddings

embeddings = HuggingFaceEmbeddings(model_name="/root/data/model/sentence-transformer")

Alternatively, we can use OpenAI's embedding service (remember to configure the API key):

# langchain_openai is a separate package: pip install langchain-openai
from langchain_openai import OpenAIEmbeddings
from langchain.embeddings import CacheBackedEmbeddings
from langchain.storage import LocalFileStore

embeddings = OpenAIEmbeddings()

# CacheBackedEmbeddings stores each text and its embedding as a key-value pair; the next time the same text needs embedding, the result is returned straight from the cache, which reduces the number of API calls
store = LocalFileStore("your_path")
cached_embedder = CacheBackedEmbeddings.from_bytes_store(embeddings, store)

text = "What's your name?"
embedded1 = cached_embedder.embed_documents([text])

Next, use Chroma to store the embedded corpus:

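from langchain.vectorstores import Chroma

# Define the persistence path
persist_directory = 'data_base/vector_db/chroma'

# Build the vector database from the split documents
vectordb = Chroma.from_documents(
    documents=split_docs,
    embedding=embeddings,  # OpenAIEmbeddings can be used here instead
    persist_directory=persist_directory  # lets us save the database to this directory on disk
)

# Persist the loaded vector database to disk
vectordb.persist()

The complete script (reading the local corpus, splitting, embedding, and storing in Chroma) is omitted here.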
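The key-value caching idea described for CacheBackedEmbeddings in this section can be illustrated without any API key. The sketch below is only a model of the idea: it uses an in-memory dict where the real class persists to the byte store it is given, and `fake_embed` and `CachedEmbedder` are hypothetical stand-ins, not LangChain classes.

```python
import hashlib


def fake_embed(text):
    """Stand-in for a real embedding call: returns a tiny deterministic vector."""
    digest = hashlib.sha256(text.encode("utf-8")).digest()
    return [b / 255 for b in digest[:4]]


class CachedEmbedder:
    """Wraps an embedding function with an in-memory key-value cache."""

    def __init__(self, embed_fn):
        self.embed_fn = embed_fn
        self.cache = {}  # text -> vector
        self.calls = 0   # how many times the underlying function was invoked

    def embed_documents(self, texts):
        vectors = []
        for text in texts:
            if text not in self.cache:
                # Cache miss: call the (possibly expensive) embedding function once.
                self.calls += 1
                self.cache[text] = self.embed_fn(text)
            vectors.append(self.cache[text])
        return vectors


if __name__ == "__main__":
    embedder = CachedEmbedder(fake_embed)
    embedder.embed_documents(["What's your name?"])
    embedder.embed_documents(["What's your name?"])  # served from the cache
    print("underlying calls:", embedder.calls)
```

Embedding the same text twice triggers only one underlying call, which is the saving the cache is meant to provide when the embedding backend is a paid API.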

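3. Connecting InternLM to LangChain

The information retrieved from the vector store has to be handed to the LLM, and the LLM's response is then returned to the user (this whole process is driven by a retriever).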
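As a rough picture of what the retrieval step does, the sketch below ranks stored chunks by similarity to a query vector. The vectors are made-up 3-dimensional toys, the names `cosine_similarity` and `top_k` are invented for this note, and cosine similarity is chosen only for illustration; the metric a real Chroma collection uses depends on its configuration.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def top_k(query_vec, store, k=2):
    """Return the k chunk texts whose vectors are most similar to query_vec.

    store is a list of (chunk_text, vector) pairs.
    """
    ranked = sorted(
        store,
        key=lambda item: cosine_similarity(query_vec, item[1]),
        reverse=True,
    )
    return [text for text, _ in ranked[:k]]


if __name__ == "__main__":
    # Made-up vectors, purely for illustration.
    store = [
        ("chunk about installation", [1.0, 0.0, 0.0]),
        ("chunk about fine-tuning", [0.0, 1.0, 0.0]),
        ("chunk about deployment", [0.0, 0.0, 1.0]),
    ]
    print(top_k([0.9, 0.1, 0.0], store, k=2))
```

The chunks returned this way are what gets passed on to the LLM together with the user's question.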

基于InternLLM 自定义 LLM 类:

from langchain.llms.base import LLM

from typing import Any, List, Optional

from langchain.callbacks.manager import CallbackManagerForLLMRun

from transformers import AutoTokenizer, AutoModelForCausalLM

import torch

class InternLM_LLM(LLM):

# 基于本地 InternLM 自定义 LLM 类

tokenizer : AutoTokenizer = None

model: AutoModelForCausalLM = None

def __init__(self, model_path :str):

# model_path: InternLM 模型路径

# 从本地初始化模型

super().__init__()

print("正在从本地加载模型...")

self.tokenizer = AutoTokenizer.from_pretrained(model_path, trust_remote_code=True)

self.model = AutoModelForCausalLM.from_pretrained(model_path, trust_remote_code=True).to(torch.bfloat16).cuda()

self.model = self.model.eval()

print("完成本地模型的加载")

def _call(self, prompt : str, stop: Optional[List[str]] = None,

run_manager: Optional[CallbackManagerForLLMRun] = None,

**kwargs: Any):

# 重写调用函数

system_prompt = """You are an AI assistant whose name is InternLM (书生·浦语).

- InternLM (书生·浦语) is a conversational language model that is developed by Shanghai AI Laboratory (上海人工智能实验室). It is designed to be helpful, honest, and harmless.

- InternLM (书生·浦语) can understand and communicate fluently in the language chosen by the user such as English and 中文.

"""

messages = [(system_prompt, '')]

response, history = self.model.chat(self.tokenizer, prompt , history=messages)

return response

@property

def _llm_type(self) -> str:

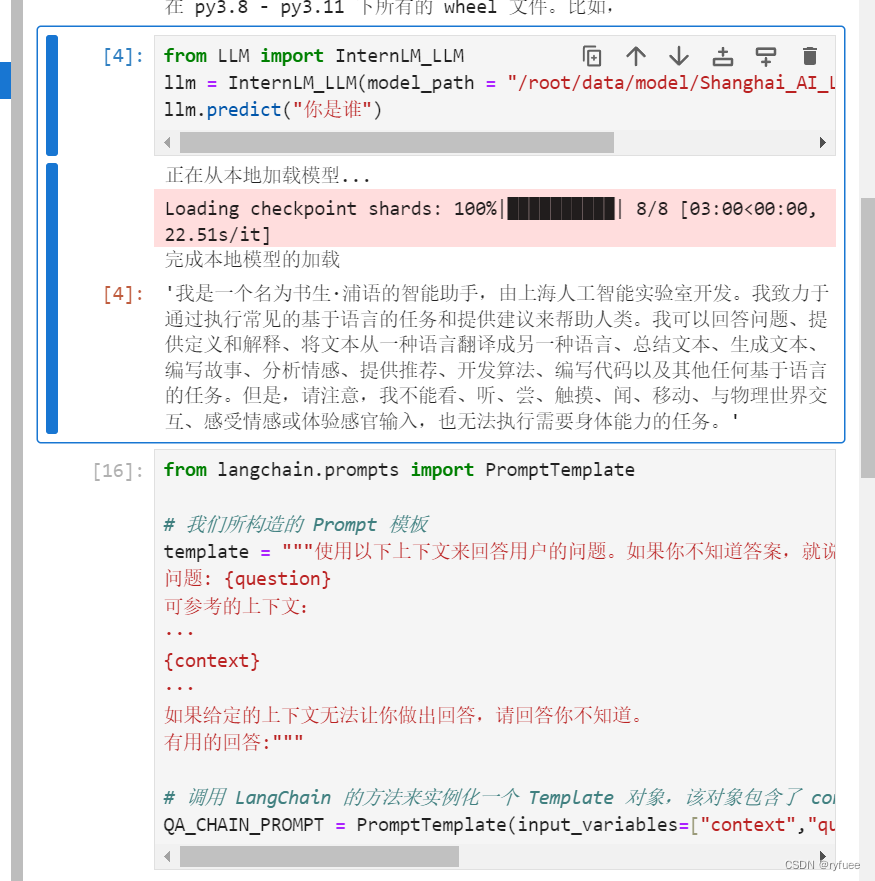

return "InternLM"4. 构建检索问答链

To make the screenshots easier to present, this part is run directly in a Jupyter notebook.

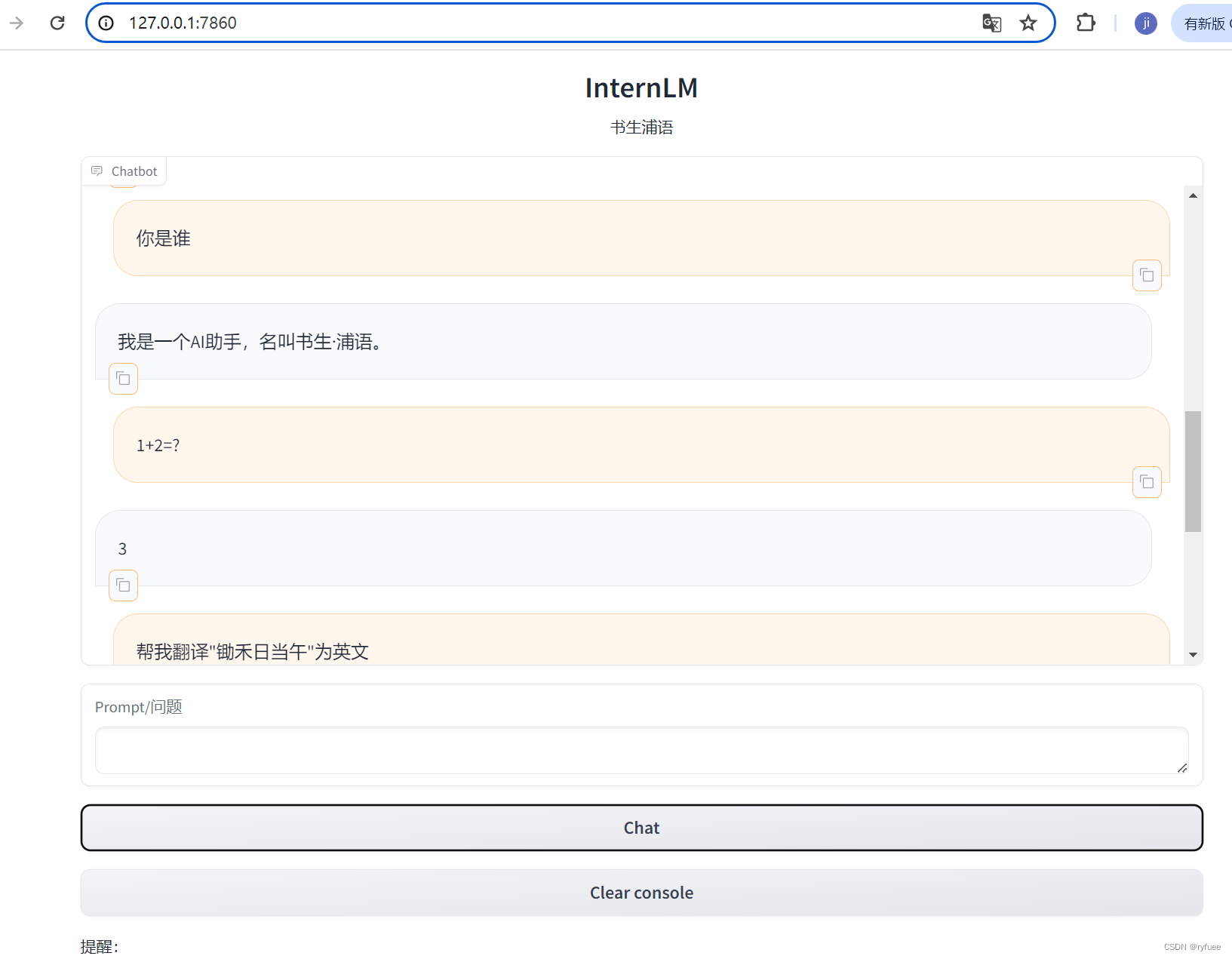
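The chain itself appears only in the screenshot. As a library-free sketch of what a retrieval QA chain does in general: it retrieves chunks, fills them into a prompt template, and passes the prompt to the LLM. Everything named here (`PROMPT_TEMPLATE`, `build_prompt`, `answer`, the prompt wording, and the keys of the returned dict) is an assumption for illustration, not the code from the notebook.

```python
PROMPT_TEMPLATE = (
    "Answer the question using only the context below. "
    "If the answer is not in the context, say you do not know.\n\n"
    "Context:\n{context}\n\n"
    "Question: {question}\n"
    "Answer:"
)


def build_prompt(question, chunks):
    """Join the retrieved chunks and fill them into the prompt template."""
    context = "\n---\n".join(chunks)
    return PROMPT_TEMPLATE.format(context=context, question=question)


def answer(question, retrieve, llm):
    """Run one retrieval QA round.

    retrieve: callable mapping a question to a list of text chunks.
    llm: callable mapping a prompt string to a response string.
    """
    chunks = retrieve(question)
    prompt = build_prompt(question, chunks)
    return {"query": question, "result": llm(prompt), "source_documents": chunks}


if __name__ == "__main__":
    # Stand-ins for the vector store retriever and the InternLM model.
    demo_retrieve = lambda q: ["InternLM is developed by Shanghai AI Laboratory."]
    demo_llm = lambda prompt: f"(model reply to a {len(prompt)}-character prompt)"
    print(answer("Who develops InternLM?", demo_retrieve, demo_llm)["result"])
```

Passing the retriever and the model in as plain callables keeps the sketch independent of any particular vector store or LLM wrapper.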

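5. Deploying the web demo

Homework

Basic assignment

Reproduce the process of building the course's knowledge base assistant (with screenshots)

The screenshots of the process are shown above.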
