Tokenization

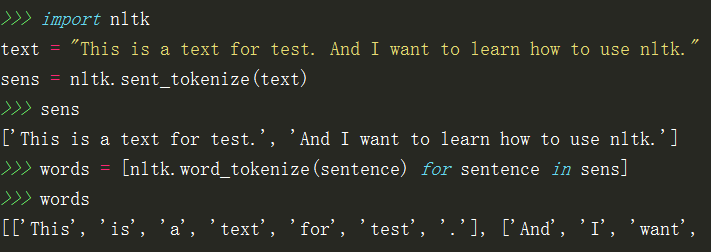

nltk.sent_tokenize(text)  # split the text into sentences

nltk.word_tokenize(sentence)  # split one sentence into word tokens

NLTK's word tokenizer works at the sentence level, so a document is first split into sentences, and each sentence is then tokenized into words.
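The two-step flow can be sketched roughly as follows. To keep the sketch runnable without downloading model data, it swaps in `PunktSentenceTokenizer` (with its default, untrained parameters) and `TreebankWordTokenizer` in place of `nltk.sent_tokenize` and `nltk.word_tokenize`. That substitution is an assumption made for illustration, and the sample text is reconstructed from the tagged output shown later in this post. On real documents the pretrained tokenizers may split differently.

```python
from nltk.tokenize import TreebankWordTokenizer
from nltk.tokenize.punkt import PunktSentenceTokenizer

# Sample text reconstructed from the tagged output shown in this post.
text = "This is a text for test. And I want to learn how to use nltk."

# Step 1: split the document into sentences.
# Assumption: an untrained Punkt tokenizer stands in for nltk.sent_tokenize.
sentences = PunktSentenceTokenizer().tokenize(text)

# Step 2: tokenize each sentence into words.
# Assumption: TreebankWordTokenizer stands in for nltk.word_tokenize.
word_tokenizer = TreebankWordTokenizer()
words = [word_tokenizer.tokenize(sentence) for sentence in sentences]

for tokens in words:
    print(tokens)
```

The resulting `words` is a list with one token list per sentence, which is the shape a per-sentence tagging step expects.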

Part-of-speech tagging

nltk.pos_tag(tokens)  # tag the tokens of one tokenized sentence with parts of speech

tags = [nltk.pos_tag(tokens) for tokens in words]  # words: one token list per sentence

>>> tags

[[('This', 'DT'), ('is', 'VBZ'), ('a', 'DT'), ('text', 'NN'), ('for', 'IN'), ('test', 'NN'), ('.', '.')], [('And', 'CC'), ('I', 'PRP'), ('want', 'VBP'), ('to', 'TO'), ('learn', 'VB'), ('how', 'WRB'), ('to', 'TO'), ('use', 'VB'), ('nltk', 'NN'), ('.', '.')]]
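Since `tags` is a list of sentences, each a list of `(token, tag)` pairs, it can be filtered with plain Python. The minimal sketch below copies the output above as a literal so that it runs without NLTK, and keeps the tokens whose Penn Treebank tag starts with `NN` (the noun tags). The variable name `nouns` is only illustrative.

```python
# Tagged output copied from the example above:
# one list of (token, tag) pairs per sentence.
tags = [
    [('This', 'DT'), ('is', 'VBZ'), ('a', 'DT'), ('text', 'NN'),
     ('for', 'IN'), ('test', 'NN'), ('.', '.')],
    [('And', 'CC'), ('I', 'PRP'), ('want', 'VBP'), ('to', 'TO'),
     ('learn', 'VB'), ('how', 'WRB'), ('to', 'TO'), ('use', 'VB'),
     ('nltk', 'NN'), ('.', '.')],
]

# Flatten the sentences and keep tokens tagged as nouns (NN, NNS, NNP, NNPS).
nouns = [token for sentence in tags for token, tag in sentence
         if tag.startswith("NN")]

print(nouns)  # ['text', 'test', 'nltk']
```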
